Interviews are more than just a Q&A session—they’re a chance to prove your worth. This blog dives into essential Normalizing interview questions and expert tips to help you align your answers with what hiring managers are looking for. Start preparing to shine!

Questions Asked in Normalizing Interview

Q 1. Explain the different normal forms (1NF, 2NF, 3NF, BCNF, 4NF, 5NF).

Database normalization is a process of organizing data to reduce redundancy and improve data integrity. It’s achieved by splitting databases into two or more tables and defining relationships between the tables. The different normal forms represent progressively stricter rules for organizing data, each addressing different types of redundancy. Let’s explore them:

- 1NF (First Normal Form): Eliminates repeating groups of data within a table. Each column should contain only atomic values (indivisible values). Think of it as removing arrays or lists within a single cell. Example: Instead of having a ‘PhoneNumbers’ column with multiple numbers in a single cell, you’d have separate rows for each phone number.

- 2NF (Second Normal Form): Builds upon 1NF. It requires the database to be in 1NF and eliminates redundant data that depends on only part of the primary key (partial dependency). If you have a composite key (multiple columns forming the primary key), attributes dependent only on part of the key are moved into a separate table.

- 3NF (Third Normal Form): Builds on 2NF. It addresses transitive dependencies. This means that no non-key attribute should be functionally dependent on another non-key attribute. In simpler words, attributes should depend directly on the primary key, not indirectly through another attribute.

- BCNF (Boyce-Codd Normal Form): A stricter version of 3NF. It addresses some anomalies that 3NF doesn’t entirely solve. For every non-trivial functional dependency, the determinant (the attribute set on the left-hand side that determines other attributes) must be a superkey, that is, it must contain a candidate key (a minimal set of attributes that uniquely identifies a row).

- 4NF (Fourth Normal Form): Addresses multi-valued dependencies. This occurs when a single record can have multiple values for an attribute independent of other attributes. For example, a student can have multiple courses and multiple skills, and these are unrelated.

- 5NF (Fifth Normal Form): The highest of the commonly taught normal forms. It addresses join dependencies: a table is in 5NF when every non-trivial way of splitting it into smaller tables and rejoining them without losing information is already implied by its candidate keys. It’s rarely needed in practical applications.

Think of it like building with LEGOs. 1NF is like having all your bricks in a single pile; 2NF, 3NF, and beyond are like organizing them into increasingly logical and efficient structures.
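As a minimal sketch of the 1NF bullet above, the following uses Python’s built-in sqlite3 module with an in-memory database. The table names (CustomersFlat, CustomerPhones), the comma-separated storage format, and the sample data are assumptions made for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Violates 1NF: several phone numbers packed into one cell.
    CREATE TABLE CustomersFlat (
        CustomerID   INTEGER PRIMARY KEY,
        Name         TEXT,
        PhoneNumbers TEXT
    );
    INSERT INTO CustomersFlat VALUES (1, 'Alice', '555-0100,555-0101');

    -- 1NF: one atomic phone number per row.
    CREATE TABLE CustomerPhones (
        CustomerID  INTEGER,
        PhoneNumber TEXT,
        PRIMARY KEY (CustomerID, PhoneNumber)
    );
""")

# Split each packed cell into separate rows.
packed_rows = conn.execute(
    "SELECT CustomerID, PhoneNumbers FROM CustomersFlat"
).fetchall()
for customer_id, packed in packed_rows:
    for number in packed.split(","):
        conn.execute(
            "INSERT INTO CustomerPhones VALUES (?, ?)",
            (customer_id, number.strip()),
        )

phones = conn.execute(
    "SELECT PhoneNumber FROM CustomerPhones "
    "WHERE CustomerID = 1 ORDER BY PhoneNumber"
).fetchall()
print(phones)
```

Once each number sits in its own row, it can be searched, indexed, and constrained individually.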

Q 2. What is functional dependency and how does it relate to normalization?

A functional dependency describes a relationship between attributes where one or more attributes uniquely determine another attribute. It’s written as X → Y, meaning ‘X functionally determines Y’. In a table, if you know the value of X, you automatically know the value of Y. This is crucial in normalization because it helps identify redundancies and improve data integrity.

For example, in a table with attributes CustomerID and CustomerName, we have the functional dependency CustomerID → CustomerName, because a CustomerID uniquely identifies a CustomerName. If there’s more than one name associated with a single CustomerID, it violates the functional dependency and indicates a problem in data organization that normalization aims to fix.

Normalization leverages functional dependencies to identify and eliminate data redundancy. By understanding which attributes determine others, we can create separate tables to hold related data and connect them using relationships, avoiding data duplication and anomalies.
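To make the definition concrete, here is a small sketch that tests whether a dependency X → Y holds in a sample of rows. The helper name fd_holds and the sample data are hypothetical. Sample data can only refute a dependency; whether it holds in general is a statement about the business rules.

```python
def fd_holds(rows, lhs, rhs):
    """Return True if every value of `lhs` maps to exactly one value of `rhs`."""
    seen = {}
    for row in rows:
        x = tuple(row[a] for a in lhs)
        y = tuple(row[a] for a in rhs)
        # setdefault stores y the first time x is seen, then returns the stored value.
        if seen.setdefault(x, y) != y:
            return False
    return True


customers = [
    {"CustomerID": 1, "CustomerName": "Alice"},
    {"CustomerID": 2, "CustomerName": "Bob"},
    {"CustomerID": 1, "CustomerName": "Alice"},
]
# Adding a second name for CustomerID 2 breaks CustomerID -> CustomerName.
conflicting = customers + [{"CustomerID": 2, "CustomerName": "Robert"}]

print(fd_holds(customers, ["CustomerID"], ["CustomerName"]))    # True
print(fd_holds(conflicting, ["CustomerID"], ["CustomerName"]))  # False
```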

Q 3. Describe the process of normalizing a relational database.

Normalizing a relational database is an iterative process. It involves analyzing the tables, identifying functional dependencies, and decomposing tables into smaller, more structured tables. Here’s a general process:

- Analyze the existing database: Identify all attributes and their relationships.

- Identify candidate keys: Determine the minimal set of attributes that uniquely identify each row.

- Identify functional dependencies: Determine which attributes functionally determine other attributes.

- Apply normalization rules: Start with 1NF, then 2NF, and so on until you reach the desired normal form. This involves creating new tables and moving attributes to eliminate redundancy and anomalies.

- Establish relationships between tables: Define foreign keys in the related tables to maintain referential integrity (ensuring data consistency across related tables).

- Test and refine: Test the normalized database for data integrity and consistency. You may need to adjust the tables based on the testing results.

This is an iterative process; you might need to revisit earlier steps as you refine your understanding of the relationships between data during normalization.
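The candidate-key and dependency steps above are often worked through with an attribute closure: starting from a set of attributes, keep adding everything the known dependencies let you derive. The sketch below assumes a hypothetical order-line table and dependency list. If the closure of a set covers every attribute, that set is a superkey.

```python
def closure(attrs, fds):
    """Return the set of attributes functionally determined by `attrs`."""
    result = set(attrs)
    changed = True
    while changed:
        changed = False
        for lhs, rhs in fds:
            # Apply a dependency once its whole left side is already derived.
            if set(lhs) <= result and not set(rhs) <= result:
                result |= set(rhs)
                changed = True
    return result


# Hypothetical dependencies for an order-line table.
fds = [
    (("OrderID",), ("CustomerID",)),
    (("CustomerID",), ("CustomerName",)),
    (("OrderID", "ProductID"), ("Quantity",)),
]
all_attrs = {"OrderID", "ProductID", "CustomerID", "CustomerName", "Quantity"}

order_closure = closure({"OrderID"}, fds)
pair_closure = closure({"OrderID", "ProductID"}, fds)

print(order_closure == all_attrs)  # False: OrderID alone is not a key
print(pair_closure == all_attrs)   # True: (OrderID, ProductID) is a superkey
```

Here OrderID alone already determines CustomerID and CustomerName, which flags a partial dependency on the composite key and suggests moving those attributes to a separate table.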

Q 4. What are transitive dependencies and how do they violate normalization?

A transitive dependency occurs when a non-key attribute is functionally dependent on another non-key attribute, which in turn is functionally dependent on the primary key. This creates redundancy and violates 3NF. For example:

Let’s say we have a table Employees with attributes: EmployeeID (primary key), DepartmentID, and DepartmentLocation. Here, DepartmentID → DepartmentLocation, and EmployeeID → DepartmentID. This means EmployeeID indirectly determines DepartmentLocation through DepartmentID. This is a transitive dependency.

To resolve this, we create a separate table Departments with attributes DepartmentID (primary key) and DepartmentLocation. The Employees table would then only need to store EmployeeID and DepartmentID. This eliminates redundancy—the department location is only stored once.
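The decomposition described above can be sketched with Python’s sqlite3 module. The table and column names follow the example in the text; the sample rows and city names are made up for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE Departments (
        DepartmentID       INTEGER PRIMARY KEY,
        DepartmentLocation TEXT NOT NULL
    );
    CREATE TABLE Employees (
        EmployeeID   INTEGER PRIMARY KEY,
        DepartmentID INTEGER NOT NULL REFERENCES Departments(DepartmentID)
    );
    INSERT INTO Departments VALUES (10, 'Berlin');
    INSERT INTO Employees VALUES (1, 10), (2, 10);
""")

# The location is stored once, so moving the department is a single-row update.
conn.execute(
    "UPDATE Departments SET DepartmentLocation = 'Munich' WHERE DepartmentID = 10"
)

rows = conn.execute("""
    SELECT e.EmployeeID, d.DepartmentLocation
    FROM Employees AS e
    JOIN Departments AS d ON d.DepartmentID = e.DepartmentID
    ORDER BY e.EmployeeID
""").fetchall()
print(rows)
```

Both employees pick up the new location through the join, with no per-employee copies to keep in sync.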

Q 5. How do you identify redundant data in a database?

Identifying redundant data involves looking for repeated information in a database. This can be done by carefully examining the database structure and data itself. Several approaches help:

- Analyzing table structures: Look for attributes that seem to repeat information. For instance, if the same information appears in multiple columns of a single table, it indicates redundancy.

- Identifying functional dependencies: If non-key attributes are functionally dependent on non-key attributes (transitive dependencies), it points to redundancy.

- Checking for multi-valued dependencies: If an attribute can have multiple unrelated values for a single record, that’s an indication of redundancy.

- Data inspection: A visual inspection of the data can often reveal obvious redundancies where the same information is repeated across multiple records.

- Using database tools: Specialized database design tools and query analyzers can help to identify redundancies automatically by analyzing the data structure and relationships.

Redundancy often manifests as similar data points appearing across multiple rows or columns, often violating the principles of normalization.
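For the data-inspection approach, a pair of grouping queries is often enough to surface repeated values and copies that already disagree. This is a sketch against a hypothetical Orders table with made-up rows, run through Python’s sqlite3 module.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE Orders (
        OrderID         INTEGER PRIMARY KEY,
        CustomerID      INTEGER,
        CustomerAddress TEXT
    );
    INSERT INTO Orders VALUES
        (1, 101, '123 Main St'),
        (2, 101, '123 Main St'),
        (3, 101, '12 Main Street'),
        (4, 102, '456 Oak Ave');
""")

# Customers whose address is stored on more than one row (redundancy).
repeated = conn.execute("""
    SELECT CustomerID, COUNT(*) FROM Orders
    GROUP BY CustomerID HAVING COUNT(*) > 1
""").fetchall()

# Customers whose repeated copies already disagree (inconsistency).
conflicting = conn.execute("""
    SELECT CustomerID, COUNT(DISTINCT CustomerAddress) FROM Orders
    GROUP BY CustomerID HAVING COUNT(DISTINCT CustomerAddress) > 1
""").fetchall()

print(repeated, conflicting)
```

The second query is the more urgent one in practice: it lists values that need to be reconciled before the table can be split.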

Q 6. What are the advantages and disadvantages of normalization?

Normalization offers several advantages but also comes with some drawbacks:

- Advantages:

- Reduced data redundancy: This saves storage space and prevents inconsistencies.

- Improved data integrity: Easier to update and maintain data consistency.

- Simplified data modification: Changes can be made in one place rather than many.

- Better database design: Leads to a more organized, well-structured database.

- Disadvantages:

- Increased complexity: More tables and joins increase the complexity of database queries.

- Performance overhead: Joining multiple tables to retrieve data can impact query performance if not optimized properly.

- Increased storage space (sometimes): Though normalization generally reduces redundancy, the extra key columns and the indexes needed to support joins can sometimes increase overall space usage, especially when the duplicated values being factored out are small.

Q 7. Explain the trade-offs between normalization and performance.

There’s a trade-off between normalization and performance. Highly normalized databases reduce redundancy but may increase query complexity due to the need for joins between multiple tables. This can lead to slower query performance if the database isn’t carefully indexed and optimized.

The ideal level of normalization depends on the application’s specific needs. For applications prioritizing data integrity and consistency over raw speed (like financial systems), a higher degree of normalization is acceptable. For applications prioritizing speed (like online gaming), denormalization (a process that intentionally introduces redundancy to improve performance) might be a preferable approach. A well-designed database often finds a balance between these needs; it might not be completely normalized, employing techniques like indexing and query optimization to mitigate performance issues.

The key is finding the right balance. Over-normalization can lead to unnecessarily complex queries and reduced performance. Under-normalization, however, leads to data redundancy and the associated problems.

Q 8. How does normalization impact database query performance?

Normalization significantly impacts database query performance. A well-normalized database, by minimizing redundancy, often makes writes and narrowly targeted reads faster and more efficient. This is because:

- Reduced Data I/O: Less redundant data means less data needs to be read from and written to disk during query execution. This can translate into faster query response times.

- Smaller Table Sizes: Normalized tables are typically smaller, leading to quicker scans and joins. Queries involving large, unnormalized tables can take significantly longer to complete.

- Simplified Query Logic: Complex queries needed to extract information from a poorly structured database can be simplified with a normalized design. Simpler queries are generally faster to execute.

However, it’s crucial to note that over-normalization can sometimes lead to performance degradation. Excessive joins across multiple tables to retrieve related data can offset the benefits of reduced redundancy. The key is to find the right balance – a normalized structure optimized for performance within the context of your specific application.

Q 9. What are the common anomalies found in unnormalized databases?

Unnormalized databases are prone to several anomalies that compromise data integrity and consistency. The most common are:

- Update Anomalies: Occur when updating a single piece of data requires changing multiple records. For example, if customer address is stored redundantly with each order, changing the customer’s address requires updating every order record.

- Insertion Anomalies: Arise when you can’t add new data without adding other unnecessary data. Imagine trying to add a new product to a database without an associated supplier, even if the supplier information is irrelevant at this stage.

- Deletion Anomalies: Occur when deleting one piece of data unintentionally deletes other data. If customer details are stored only inside order rows, deleting a customer’s last order also erases the customer’s contact details.

These anomalies complicate data management, leading to potential inconsistencies and errors in your data.
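The update and deletion anomalies can be reproduced in a few lines. This sketch assumes a hypothetical flat table, OrdersFlat, that stores customer details on every order row; the names and data are illustrative only.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE OrdersFlat (
        OrderID         INTEGER PRIMARY KEY,
        CustomerName    TEXT,
        CustomerAddress TEXT
    );
    INSERT INTO OrdersFlat VALUES
        (1, 'Alice', '123 Main St'),
        (2, 'Alice', '123 Main St'),
        (3, 'Bob',   '456 Oak Ave');
""")

# Update anomaly: only one of Alice's rows is changed, so her copies disagree.
conn.execute("UPDATE OrdersFlat SET CustomerAddress = '9 Elm Rd' WHERE OrderID = 1")
alice_addresses = conn.execute(
    "SELECT COUNT(DISTINCT CustomerAddress) FROM OrdersFlat "
    "WHERE CustomerName = 'Alice'"
).fetchone()[0]

# Deletion anomaly: removing Bob's only order also removes his address.
conn.execute("DELETE FROM OrdersFlat WHERE OrderID = 3")
bob_rows = conn.execute(
    "SELECT COUNT(*) FROM OrdersFlat WHERE CustomerName = 'Bob'"
).fetchone()[0]

print(alice_addresses, bob_rows)
```

After these two statements Alice has two different addresses on record and Bob has disappeared entirely, even though nobody intended either outcome.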

Q 10. How do you choose the appropriate normal form for a given database?

Choosing the right normal form involves a careful balance between data integrity and query performance. You shouldn’t aim for the highest normal form (like BCNF or 5NF) without considering the practical implications. Here’s a guideline:

- Start with 1NF, 2NF, and 3NF: These forms address the most common anomalies and should be sufficient for many applications.

- Assess Data Redundancy: Analyze the data model to identify potential redundancy and the anomalies it might cause.

- Consider Query Performance: Excessive normalization can lead to many joins in queries, impacting performance. For write-heavy transactional systems, 3NF is usually a sound default because it keeps updates small and consistent; for read-heavy reporting workloads, you may want to denormalize selected tables after measuring where joins actually hurt.

- Evaluate Business Rules: Some business rules might prevent achieving a higher normal form. For example, business rules dictating data denormalization for performance reasons might contradict the requirement for a high normal form.

In practice, most databases reach 3NF, and going beyond that often provides diminishing returns and added complexity. It’s more critical to correctly apply the lower normal forms than to strive for perfection in higher-order forms.

Q 11. Explain denormalization and when it might be necessary.

Denormalization is the process of intentionally introducing redundancy into a database to improve performance. It’s the opposite of normalization. It’s often necessary when:

- Query performance is critical: Many joins can slow down queries. Denormalization creates redundant copies of data to reduce the need for joins.

- The system has high read-to-write ratios: If reads significantly outnumber writes, the cost of occasional update anomalies is offset by the performance gains.

- Data volume is enormous: Extremely large datasets might benefit from denormalization to reduce the overhead of complex joins.

Example: Consider an e-commerce website. For faster product page loading, the product’s category, sub-category, and other related details can be stored redundantly on the product table itself, avoiding joins during product page retrieval. The cost of occasional redundancy in updates is far outweighed by the dramatic performance improvement. However, careful consideration of data integrity needs to accompany denormalization decisions.

Q 12. How do you handle data redundancy in a non-normalized database?

Handling data redundancy in a non-normalized database is a challenging task, as redundancy is the root cause of many problems. The best approach is to normalize the database. However, if normalization isn’t immediately feasible, several strategies can mitigate the risks:

- Data Validation: Implement strict validation rules to ensure data consistency across redundant fields. This minimizes inconsistencies but doesn’t eliminate the redundancy.

- Triggers and Stored Procedures: Use database triggers to automatically update redundant fields whenever the primary data changes. This reduces the risk of inconsistencies but adds complexity.

- Views: Create database views that present a consistent and simplified view of the data, hiding the redundancy from users. However, this doesn’t directly address the underlying redundancy problem.

- Careful Updates: Establish strict update procedures to ensure that all redundant instances of data are modified consistently whenever a change is needed. This is prone to human error.

Ultimately, normalization is the most robust solution to data redundancy, but these strategies can provide temporary relief while longer-term normalization planning is implemented.
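As an illustration of the trigger strategy, the sketch below keeps a redundant address column in step with its source. It uses SQLite through Python’s sqlite3 module; the trigger name sync_order_address and the table layout are assumptions, and trigger syntax differs between database systems.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE Customers (
        CustomerID INTEGER PRIMARY KEY,
        Address    TEXT
    );
    -- Redundant copy of the address kept on each order.
    CREATE TABLE Orders (
        OrderID         INTEGER PRIMARY KEY,
        CustomerID      INTEGER,
        CustomerAddress TEXT
    );
    -- Propagate address changes to every redundant copy.
    CREATE TRIGGER sync_order_address
    AFTER UPDATE OF Address ON Customers
    BEGIN
        UPDATE Orders
        SET CustomerAddress = NEW.Address
        WHERE CustomerID = NEW.CustomerID;
    END;

    INSERT INTO Customers VALUES (101, '123 Main St');
    INSERT INTO Orders VALUES (1, 101, '123 Main St'), (2, 101, '123 Main St');
""")

conn.execute("UPDATE Customers SET Address = '9 Elm Rd' WHERE CustomerID = 101")
addresses = conn.execute(
    "SELECT DISTINCT CustomerAddress FROM Orders WHERE CustomerID = 101"
).fetchall()
print(addresses)
```

The redundancy is still there; the trigger only moves the burden of keeping copies aligned from people to the database.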

Q 13. Describe a situation where you had to normalize a database. What were the challenges?

In a previous project, I normalized a customer relationship management (CRM) database that had significant data redundancy, leading to update and deletion anomalies. The database initially stored customer information (name, address, phone number) directly within the order tables. This caused issues whenever customer information needed updating; every order had to be updated individually.

Challenges:

- Data Migration: Moving data from the unnormalized structure to the normalized structure was a complex task. It required writing scripts to extract data from the existing tables and load them into the newly created, normalized tables.

- Application Changes: The applications interacting with the database had to be modified to work with the new schema. This required close collaboration with developers and thorough testing.

- Data Consistency Issues: During the migration, resolving data inconsistencies caused by redundancy was crucial. This involved identifying and correcting discrepancies in duplicated data.

- Downtime Management: Minimizing downtime during the migration was a significant challenge. The migration process needed to be carefully planned to ensure minimal disruption to the organization’s operations.

After normalization, update and deletion anomalies were eliminated, data integrity improved, and query performance increased. The database became more maintainable and scalable. This demonstrated the significant benefits of database normalization, despite the initial challenges involved in its implementation.

Q 14. What are the differences between 1NF and 2NF?

The differences between 1NF and 2NF are subtle but crucial for database design. Both aim to reduce data redundancy, but they approach it differently.

- 1NF (First Normal Form): Eliminates repeating groups of data within a table. Each column should contain atomic values (single, indivisible pieces of information). This is typically achieved by moving repeated values into separate rows or into a separate table linked by a foreign key.

- 2NF (Second Normal Form): Builds upon 1NF. It requires that the database is in 1NF and that all non-key attributes are fully functionally dependent on the entire primary key. This means that no non-key attribute should be dependent on only part of the primary key (if the primary key has more than one attribute).

Example: Consider a table with Order ID, Product ID, Product Name, and Product Price. The primary key is Order ID and Product ID. In 1NF this would be a valid table, but in 2NF it would be problematic because Product Name and Product Price are dependent only on Product ID (not the whole primary key). To achieve 2NF, you would separate Product ID, Product Name, and Product Price into a separate table.
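The split described in this example might look as follows. This is a sketch using Python’s sqlite3 module; the table names OrderItemsFlat, Products, and OrderItems and the sample rows are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- 1NF only: ProductName and ProductPrice depend on ProductID alone.
    CREATE TABLE OrderItemsFlat (
        OrderID      INTEGER,
        ProductID    INTEGER,
        ProductName  TEXT,
        ProductPrice REAL,
        PRIMARY KEY (OrderID, ProductID)
    );
    INSERT INTO OrderItemsFlat VALUES
        (1, 7, 'Keyboard', 49.0),
        (2, 7, 'Keyboard', 49.0),
        (2, 8, 'Mouse',    19.0);

    -- 2NF: product facts move to their own table, keyed by ProductID.
    CREATE TABLE Products (
        ProductID    INTEGER PRIMARY KEY,
        ProductName  TEXT,
        ProductPrice REAL
    );
    CREATE TABLE OrderItems (
        OrderID   INTEGER,
        ProductID INTEGER REFERENCES Products(ProductID),
        PRIMARY KEY (OrderID, ProductID)
    );
    INSERT INTO Products
        SELECT DISTINCT ProductID, ProductName, ProductPrice FROM OrderItemsFlat;
    INSERT INTO OrderItems
        SELECT OrderID, ProductID FROM OrderItemsFlat;
""")

product_count = conn.execute("SELECT COUNT(*) FROM Products").fetchone()[0]
item_count = conn.execute("SELECT COUNT(*) FROM OrderItems").fetchone()[0]
print(product_count, item_count)
```

The keyboard’s name and price are now stored once instead of once per order line.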

Q 15. What are the differences between 2NF and 3NF?

Both Second Normal Form (2NF) and Third Normal Form (3NF) are stages in database normalization, a process designed to minimize data redundancy and improve data integrity. The key difference lies in how they address transitive dependency.

2NF eliminates redundant data by addressing partial dependencies. A partial dependency occurs when a non-key attribute depends on only part of a composite key (a key made up of multiple attributes), not the whole key. Think of a table with Order ID, Product ID, and Product Name. Product Name depends only on Product ID, not the entire Order ID and Product ID combination. In 2NF, you’d split this into two tables: one linking orders to products (Order ID, Product ID) and another holding product information (Product ID, Product Name).

3NF builds upon 2NF by eliminating transitive dependencies. A transitive dependency exists when a non-key attribute depends on another non-key attribute rather than directly on the primary key. Imagine adding Supplier ID and Supplier Name to the product information table from our example. Product ID determines Supplier ID, and Supplier ID determines Supplier Name, so Supplier Name depends on Product ID only indirectly. In 3NF, we move Supplier Name into a separate supplier table keyed by Supplier ID and keep only Supplier ID in the product table. This eliminates redundancy and ensures data integrity, making updates easier and preventing data inconsistencies.

In short: 2NF tackles partial dependencies, while 3NF tackles transitive dependencies, leading to a more refined and efficient database structure.

Q 16. What is Boyce-Codd Normal Form (BCNF) and when is it used?

Boyce-Codd Normal Form (BCNF) is a higher level of normalization than 3NF. It’s a stricter form that aims to eliminate redundancy even further by addressing a case that 3NF still permits: a functional dependency whose left-hand side is not a candidate key but whose right-hand side is part of a candidate key. This typically arises when a table has several overlapping composite candidate keys.

BCNF states that for every non-trivial functional dependency, the left-hand side must be a superkey, meaning it contains a candidate key (a minimal set of attributes that uniquely identifies a record). If any attribute set that is not a superkey determines part of a candidate key, or another non-key attribute, the table is not in BCNF. This is subtle, but important. 3NF handles many common situations, but BCNF eliminates more subtle redundancies that can arise in more complex scenarios.

BCNF is used when the highest level of data integrity is crucial and potential data anomalies from even subtle redundancies must be eliminated. It’s often applied in systems handling highly sensitive or critical data, where consistency is paramount. However, enforcing BCNF can sometimes lead to more tables and increased complexity in database design.
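A BCNF check can be sketched directly from the rule: for each non-trivial dependency, test whether its left-hand side is a superkey. The example below uses a commonly cited teaching scenario, assumed here for illustration, in which each instructor teaches exactly one course. The function names are hypothetical.

```python
def closure(attrs, fds):
    """Return the set of attributes functionally determined by `attrs`."""
    result = set(attrs)
    changed = True
    while changed:
        changed = False
        for lhs, rhs in fds:
            if set(lhs) <= result and not set(rhs) <= result:
                result |= set(rhs)
                changed = True
    return result


def bcnf_violations(all_attrs, fds):
    """Return the non-trivial dependencies whose left side is not a superkey."""
    violations = []
    for lhs, rhs in fds:
        trivial = set(rhs) <= set(lhs)
        is_superkey = closure(lhs, fds) == set(all_attrs)
        if not trivial and not is_superkey:
            violations.append((lhs, rhs))
    return violations


attrs = {"Student", "Course", "Instructor"}
fds = [
    (("Student", "Course"), ("Instructor",)),
    (("Instructor",), ("Course",)),  # assumed rule: one course per instructor
]
violations = bcnf_violations(attrs, fds)
print(violations)
```

This table satisfies 3NF because Course belongs to a candidate key, yet Instructor → Course still breaks BCNF, which is the gap between the two forms.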

Q 17. Explain the concept of a candidate key.

A candidate key is a minimal set of attributes that uniquely identifies each record in a table. ‘Minimal’ means that no proper subset of the attributes can uniquely identify records on its own. Think of it as a ‘fingerprint’ for each row. A table can have multiple candidate keys. For example, in an ‘Employees’ table, both EmployeeID and NationalID could potentially uniquely identify each employee, so both are candidate keys.

Consider a table about books: ISBN, Title, Author. ISBN is a candidate key because it uniquely identifies a specific book. If no two books share the same title and author then Title and Author together could also be a candidate key – a composite candidate key.
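One way to explore candidate keys is to search sample data for the smallest column sets whose values never repeat. The sketch below does that for the books example, with placeholder ISBN values and made-up rows. Sample data can only suggest candidate keys; whether uniqueness always holds is a business rule that the data cannot prove.

```python
from itertools import combinations


def candidate_keys(rows, columns):
    """Return minimal column sets whose values are unique across `rows`."""
    keys = []
    for size in range(1, len(columns) + 1):
        for combo in combinations(columns, size):
            # Skip supersets of keys already found: they are not minimal.
            if any(set(k) <= set(combo) for k in keys):
                continue
            values = [tuple(row[c] for c in combo) for row in rows]
            if len(set(values)) == len(values):
                keys.append(combo)
    return keys


books = [
    {"ISBN": "isbn-1", "Title": "Dune", "Author": "Herbert"},
    {"ISBN": "isbn-2", "Title": "Dune", "Author": "Smith"},
    {"ISBN": "isbn-3", "Title": "Emma", "Author": "Austen"},
    {"ISBN": "isbn-4", "Title": "Persuasion", "Author": "Austen"},
]
keys = candidate_keys(books, ["ISBN", "Title", "Author"])
print(keys)
```

In this sample, ISBN is unique on its own, while Title and Author are unique only in combination.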

Q 18. Explain the concept of a primary key.

The primary key is simply one of the candidate keys chosen to uniquely identify each record in a table. It’s the key the database system uses as the primary means for locating and referencing data. Only one primary key can be assigned per table, even if the table has multiple candidate keys.

Going back to the ‘Employees’ table, if we choose EmployeeID as the primary key, we’re saying that EmployeeID will be the unique identifier used in all queries and relationships involving this table.

Q 19. Explain the concept of a foreign key.

A foreign key is a column or a set of columns in one table that refers to the primary key of another table. It establishes a link between tables, representing a relationship between them. This is the core of relational database design, letting us create powerful links between datasets.

For instance, imagine a table Orders with a column CustomerID. This CustomerID is a foreign key referencing the CustomerID (primary key) in a separate Customers table. This foreign key allows us to easily retrieve customer information related to each order without data duplication.

Q 20. What is a composite key?

A composite key is a primary key (or a candidate key) consisting of multiple attributes combined to uniquely identify a record. It’s needed when a single attribute isn’t sufficient for unique identification.

Consider a table representing courses taken by students. A student can take many courses and a course can have many students, so neither StudentID nor CourseID is unique on its own. Instead, the composite key would be (StudentID, CourseID). Assuming a student takes a given course at most once, this combination uniquely identifies each enrollment; if retakes are allowed, an additional attribute such as the term would need to join the key.
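A composite primary key can be declared and exercised like this. The sketch uses Python’s sqlite3 module with a hypothetical Enrollments table, and assumes each student enrolls in a given course at most once.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE Enrollments (
        StudentID INTEGER,
        CourseID  INTEGER,
        Grade     TEXT,
        PRIMARY KEY (StudentID, CourseID)
    )
""")
conn.execute("INSERT INTO Enrollments VALUES (1, 100, 'A')")
conn.execute("INSERT INTO Enrollments VALUES (1, 200, 'B')")  # same student, other course
conn.execute("INSERT INTO Enrollments VALUES (2, 100, 'C')")  # same course, other student

try:
    conn.execute("INSERT INTO Enrollments VALUES (1, 100, 'B')")  # duplicate pair
    duplicate_rejected = False
except sqlite3.IntegrityError:
    duplicate_rejected = True

print(duplicate_rejected)  # True
```

Each column may repeat freely; only a repeated pair is refused.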

Q 21. How do you use SQL to enforce referential integrity?

Referential integrity ensures that relationships between tables remain consistent. In SQL, we enforce this using foreign key constraints. This prevents actions that would destroy links between tables or leave dangling references. The basic approach involves defining a foreign key constraint in the child table (the one with the foreign key) referencing the primary key of the parent table.

For example, to enforce referential integrity between our Orders and Customers tables, we’d use a statement like this:

    ALTER TABLE Orders
    ADD CONSTRAINT FK_Orders_Customers
    FOREIGN KEY (CustomerID) REFERENCES Customers(CustomerID);

This SQL code adds a foreign key constraint named FK_Orders_Customers to the Orders table. It specifies that the CustomerID column in Orders must reference a valid CustomerID in the Customers table. Attempting to insert an order with a non-existent CustomerID would result in an error, maintaining data integrity.
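The effect of such a constraint can be tried out with Python’s sqlite3 module. Two SQLite-specific assumptions apply to this sketch: SQLite does not support adding a foreign key through ALTER TABLE, so the constraint is declared in CREATE TABLE instead, and enforcement has to be switched on per connection with a PRAGMA.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves enforcement off by default
conn.executescript("""
    CREATE TABLE Customers (CustomerID INTEGER PRIMARY KEY);
    CREATE TABLE Orders (
        OrderID    INTEGER PRIMARY KEY,
        CustomerID INTEGER NOT NULL,
        CONSTRAINT FK_Orders_Customers
            FOREIGN KEY (CustomerID) REFERENCES Customers(CustomerID)
    );
    INSERT INTO Customers VALUES (101);
    INSERT INTO Orders VALUES (1, 101);
""")

try:
    conn.execute("INSERT INTO Orders VALUES (2, 999)")  # no such customer
    orphan_rejected = False
except sqlite3.IntegrityError:
    orphan_rejected = True

print(orphan_rejected)  # True
```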

Q 22. How do you design a database schema using normalization principles?

Designing a database schema using normalization principles involves systematically organizing data to reduce redundancy and improve data integrity. It’s like tidying up a messy room – instead of having multiple copies of the same information scattered everywhere, you organize everything into clearly defined spaces.

The process typically involves applying a series of normal forms, starting with the first normal form (1NF) and progressing to higher normal forms (2NF, 3NF, BCNF, etc.), depending on the complexity of the data and the desired level of optimization. Each normal form addresses specific types of redundancy and anomalies.

- 1NF: Eliminate repeating groups of data within a table. Each column should contain atomic values (single values).

- 2NF: Eliminate redundant data that depends on only part of the primary key (in tables with composite keys).

- 3NF: Eliminate redundant data that depends on non-key attributes (transitive dependencies).

For example, imagine a database for a library. An un-normalized table might look like this:

| BookID | Title | Author | ISBN | MemberID | MemberName | MemberAddress |
| --- | --- | --- | --- | --- | --- | --- |
| 1 | The Lord of the Rings | J.R.R. Tolkien | 978-0618002255 | 101 | Alice Smith | 123 Main St |
| 2 | Pride and Prejudice | Jane Austen | 978-0141439518 | 102 | Bob Johnson | 456 Oak Ave |

This is highly redundant. Normalization would break this down into separate tables for Books, Members, and potentially even Authors, linking them through foreign keys to establish relationships. This eliminates redundancy and improves data consistency.
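One possible normalized layout for the library example is sketched below with Python’s sqlite3 module. The Loans linking table is an assumption, since the text does not name it, and authors are left as a plain column for brevity.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE Books (
        BookID INTEGER PRIMARY KEY,
        Title  TEXT,
        Author TEXT,
        ISBN   TEXT UNIQUE
    );
    CREATE TABLE Members (
        MemberID      INTEGER PRIMARY KEY,
        MemberName    TEXT,
        MemberAddress TEXT
    );
    -- Hypothetical linking table: which member has borrowed which book.
    CREATE TABLE Loans (
        BookID   INTEGER REFERENCES Books(BookID),
        MemberID INTEGER REFERENCES Members(MemberID),
        PRIMARY KEY (BookID, MemberID)
    );
    INSERT INTO Books VALUES
        (1, 'The Lord of the Rings', 'J.R.R. Tolkien', '978-0618002255'),
        (2, 'Pride and Prejudice',   'Jane Austen',    '978-0141439518');
    INSERT INTO Members VALUES
        (101, 'Alice Smith', '123 Main St'),
        (102, 'Bob Johnson', '456 Oak Ave');
    INSERT INTO Loans VALUES (1, 101), (2, 102);
""")

# Rebuild the original wide rows with joins.
rows = conn.execute("""
    SELECT b.BookID, b.Title, m.MemberID, m.MemberName
    FROM Loans AS l
    JOIN Books   AS b ON b.BookID   = l.BookID
    JOIN Members AS m ON m.MemberID = l.MemberID
    ORDER BY b.BookID
""").fetchall()
print(rows)
```

A member’s address now lives in exactly one row, no matter how many books that member borrows.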

Q 23. Can you explain the role of normalization in maintaining data integrity?

Normalization plays a crucial role in maintaining data integrity by minimizing data redundancy and inconsistencies. Redundancy leads to anomalies – update, insertion, and deletion anomalies – that can corrupt the database.

- Update Anomaly: If a piece of data is duplicated in multiple places, updating it in one location but not in others leads to inconsistencies.

- Insertion Anomaly: You can’t add information about a new member without also providing a book they’ve borrowed.

- Deletion Anomaly: Deleting a book record might accidentally delete information about members who borrowed it.

By eliminating redundancy through normalization, we eliminate these anomalies, ensuring data accuracy and reliability. It’s like having a well-organized filing system: you know exactly where to find information, and updates are consistent and easy.

Q 24. How does normalization impact data updates and modifications?

Normalization significantly impacts data updates and modifications. In a normalized database, updates are much simpler and less error-prone. Because data is not duplicated, a single update is sufficient to change a value across the entire database. This reduces the risk of inconsistencies.

For example, if a member changes their address, in a normalized database you only need to update the address in the ‘Members’ table. In an unnormalized database, you’d need to find and update the address everywhere it appears, increasing the chance of human error.

Similarly, inserting new data is easier and more efficient. There’s no need to worry about replicating information across multiple tables, simplifying data entry and reducing the possibility of missing values or inconsistencies.

Q 25. What tools or techniques can assist in the normalization process?

Several tools and techniques can assist in the normalization process. Database design tools (like ERwin Data Modeler or similar software) offer visual representations of database schemas and help in identifying dependencies and potential anomalies. Some of these tools also offer features that assist with normalization.

Beyond software, a thorough understanding of functional dependencies is crucial. This involves carefully analyzing the relationships between different attributes in the data. For example, understanding that ‘BookID’ determines ‘Title’ helps in deciding which attributes should be in which table.

Furthermore, techniques like dependency diagrams and normalization algorithms can be used to systematically break down tables and identify suitable keys for relationships. Careful planning and a clear understanding of the data model are essential for successful normalization.

Q 26. Describe the impact of normalization on data consistency.

Normalization significantly improves data consistency by eliminating redundancy. Because each fact is stored only once, there are no duplicate copies that can drift out of sync or hold outdated values. This leads to a more reliable and accurate database.

Imagine a customer database with multiple entries for the same customer. This could lead to confusion regarding their contact details, order history, and other crucial information. Normalization ensures that each customer has a single, consistent record, making data analysis and reporting more reliable.

Data consistency is vital for making informed business decisions. Without it, you risk inaccurate reporting, faulty analytics, and flawed conclusions, ultimately impacting strategic choices and operational efficiency.

Q 27. How do you identify and resolve anomalies in a database?

Identifying and resolving anomalies in a database involves carefully analyzing the data for redundancy and inconsistencies. This often requires examining the relationships between different attributes and identifying functional dependencies.

Anomalies often manifest as update, insertion, or deletion problems. For example, if updating a customer’s address requires modifying multiple records, it indicates a redundancy issue. Similarly, the inability to add a new product without also specifying its supplier points to an insertion anomaly.

Resolving these anomalies involves applying normalization principles. This might require splitting tables, adding new tables to establish relationships, and carefully defining primary and foreign keys. The process often involves iterative refinement, where anomalies are identified and addressed in stages until a suitably normalized schema is achieved.

Q 28. Discuss the importance of normalization in big data environments.

Normalization remains highly important in big data environments, although the specific approach might need adjustments. While the sheer volume of data can make complete normalization to the highest normal forms computationally expensive, the fundamental principles remain crucial for managing data quality and ensuring consistency.

In big data scenarios, a pragmatic approach often involves applying normalization selectively. This might involve normalizing core transactional data to a high degree while accepting a degree of denormalization in data warehouses or data lakes designed for analytical processing. The goal is to balance data consistency with performance and scalability considerations.

Techniques like schema-on-read, where data is stored in a raw or loosely structured format and a schema is applied only when the data is read, are becoming increasingly popular in big data to keep ingestion fast and flexible. But the core principles of data redundancy reduction and consistency remain central to managing large datasets effectively.

Key Topics to Learn for Normalizing Interview

- Relational Database Design: Understand the fundamentals of relational databases, including entities, attributes, and relationships. Practice designing efficient and normalized database schemas.

- Normalization Forms (1NF, 2NF, 3NF, BCNF): Master the definitions and practical application of each normal form. Be prepared to identify anomalies (insertion, update, deletion) and explain how normalization eliminates them.

- Functional Dependencies: Understand how to identify and utilize functional dependencies to determine the appropriate normal form for a given database schema. Practice creating and analyzing dependency diagrams.

- Data Integrity and Consistency: Explain the importance of normalization in maintaining data integrity and consistency within a database system. Discuss the benefits in terms of data accuracy and reduced redundancy.

- Practical Application in SQL: Demonstrate your ability to translate normalization theory into practical SQL implementation. Be ready to discuss the impact of normalization on query performance and data management.

- Denormalization Techniques: While focusing on normalization, be prepared to discuss scenarios where denormalization might be beneficial and explain the trade-offs involved.

- Advanced Normalization Forms (4NF, 5NF): While less frequently tested, familiarity with these higher normal forms demonstrates a deeper understanding of database design principles.

Next Steps

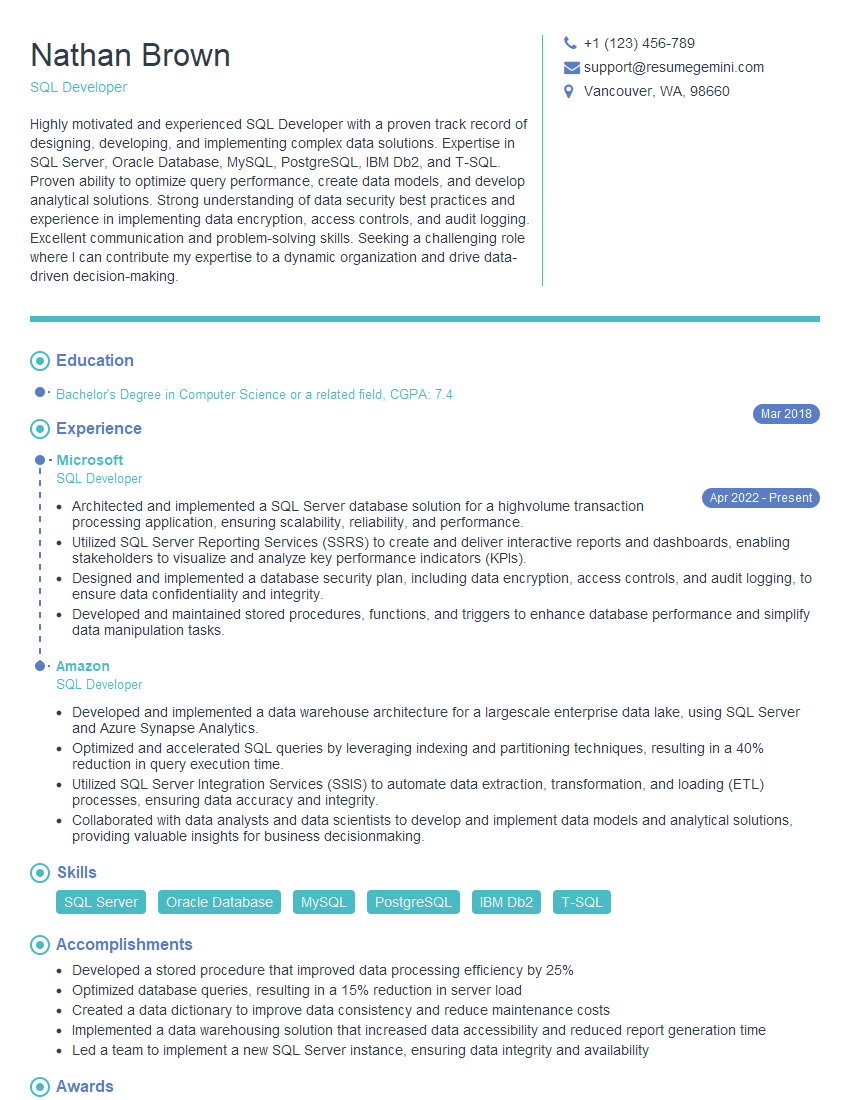

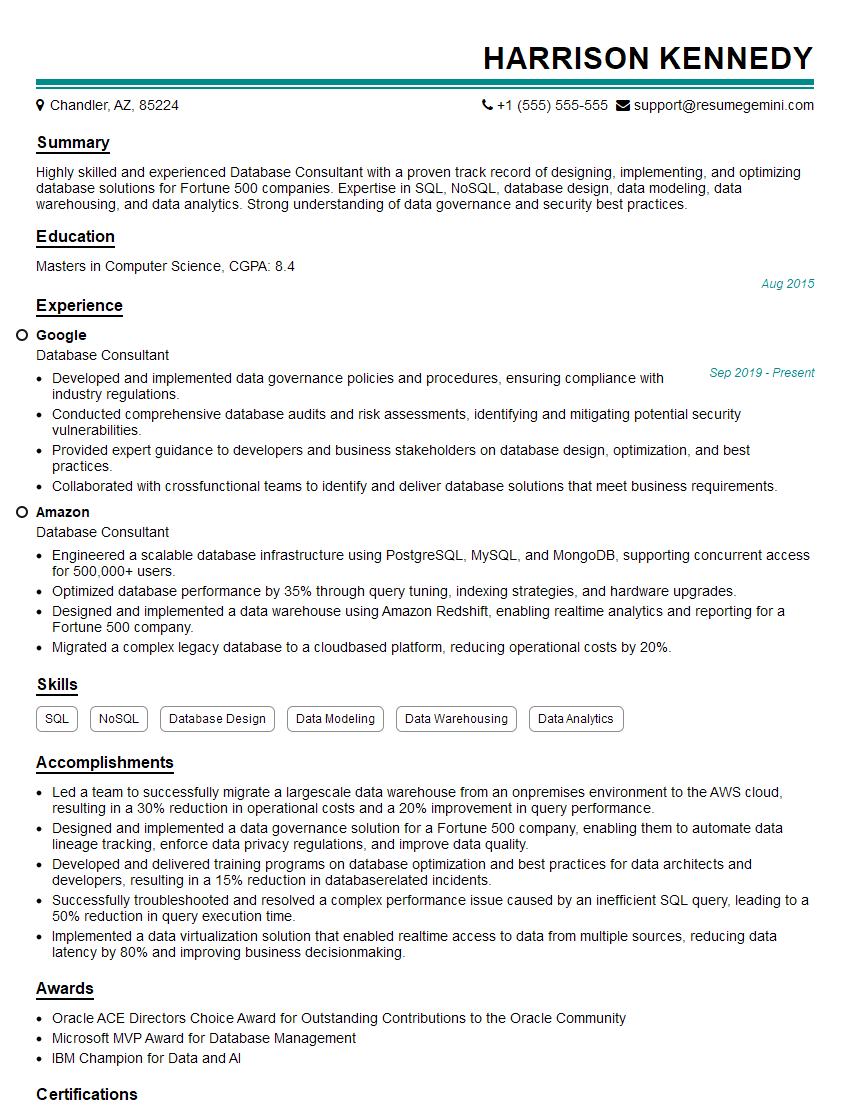

Mastering database normalization is crucial for career advancement in database administration, data engineering, and software development roles. A strong understanding of normalization showcases your ability to design efficient and maintainable database systems, a highly sought-after skill in today’s data-driven world. To maximize your job prospects, create an ATS-friendly resume that highlights your normalization expertise. We highly recommend using ResumeGemini to build a professional and impactful resume. ResumeGemini provides examples of resumes tailored to Normalizing, helping you showcase your skills effectively and land your dream job.
