Preparation is the key to success in any interview. In this post, we’ll explore crucial Spatial Reference Frame Realization interview questions and equip you with strategies to craft impactful answers. Whether you’re a beginner or a pro, these tips will elevate your preparation.

Questions Asked in Spatial Reference Frame Realization Interview

Q 1. Explain the concept of a geodetic datum and its importance in Spatial Reference Frame Realization.

A geodetic datum is a reference system that defines the size and shape of a model of the Earth (usually an ellipsoid) and how that model is positioned and oriented relative to the Earth itself, so that points on the Earth’s surface can be given coordinates. Think of it as the framework for all location data. It’s crucial in Spatial Reference Frame Realization because it provides the foundation for accurately representing geographic locations: without a datum, coordinates are ambiguous, because the same latitude and longitude can correspond to noticeably different places depending on which datum they refer to. Different datums exist because the Earth isn’t a perfect sphere; its shape is complex and varies regionally, so each datum adopts a specific ellipsoid, origin, and orientation chosen to fit either the whole Earth or a particular region. In practice, a datum is realized through a network of control points (survey monuments or GNSS reference stations) whose coordinates have been determined in that datum. The choice of datum is vital for ensuring the accuracy and consistency of spatial data in any application.

Q 2. Describe different types of datums (e.g., horizontal, vertical).

Datums are categorized based on the dimensions they represent. We primarily encounter horizontal and vertical datums.

- Horizontal Datums: These define the shape and size of the Earth’s surface (or a specific region of it) and are used to determine the latitude and longitude of points. Examples include NAD83 (North American Datum of 1983) and WGS84 (World Geodetic System 1984). They are based on ellipsoids, mathematical models approximating the Earth’s shape.

- Vertical Datums: These define the reference surface from which heights or elevations are measured, traditionally one tied to mean sea level. Examples include NAVD88 (North American Vertical Datum of 1988) and various local datums based on tide gauge measurements. Traditional vertical datums are realized through leveling networks tied to one or more tide gauges, so their quality depends on the tide gauge data and the leveling. Modern vertical datums increasingly rely on a geoid model (the geoid being an equipotential surface of the Earth’s gravity field that is close to, but not exactly, mean sea level), so their accuracy also depends on how well that model is determined.

It’s important to note that horizontal and vertical datums are often used together to completely define a location in 3D space.

Q 3. What are the key differences between geographic and projected coordinate systems?

Geographic and projected coordinate systems are fundamentally different ways of representing locations on the Earth’s surface.

- Geographic Coordinate Systems (GCS): These use latitude and longitude to specify location. Latitude measures north-south position, and longitude measures east-west position, both relative to the datum. GCS coordinates are angular, usually expressed in degrees, and are based on a spherical or ellipsoidal model of the Earth. Think of it like using lines of latitude and longitude on a globe.

- Projected Coordinate Systems (PCS): These transform the curved surface of the Earth onto a flat plane using mathematical projections. This always introduces some distortion, because a curved surface cannot be represented on a flat one without error. PCS coordinates are usually expressed in linear units like meters or feet. The choice of projection depends on the area being mapped and the type of distortion that can be tolerated. For example, an equatorial region might use a cylindrical projection, a mid-latitude region that extends mainly east-west might use a conic projection, and a polar or roughly circular region might use an azimuthal projection.

In essence, a GCS describes positions on a curved, global model of the Earth, while a PCS is a planar representation, usually designed for a specific region. A PCS always refers to a GCS (and its underlying datum) since it’s derived from it.

Q 4. Explain the process of coordinate transformation between different datums.

Coordinate transformation between datums converts coordinates expressed in one datum into another, which is essential for combining spatial data from different sources. It relies on transformation parameters that define the mathematical relationship between the two datums. A common parametric method is the 7-parameter (Helmert) transformation, which applies three translations (shifts in X, Y, and Z), three rotations, and a scale difference to Earth-centered (geocentric) Cartesian coordinates. Where the differences between two datums are too irregular to capture with a few parameters, grid-based methods (such as NTv2) interpolate shifts from a grid of precomputed values. Specialized algorithms and software tools implement both approaches.

For example, converting coordinates from NAD27 to NAD83 involves more than a change of ellipsoid (from Clarke 1866 to GRS80): NAD27 also contains distortions from its original survey network adjustment, so a simple parameter set gives only limited accuracy. Grid-based transformations, such as NADCON in the United States and NTv2 in Canada, model these distortions and usually provide more accurate results. The specific method used depends on the datums involved and the accuracy required.
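To make the 7-parameter idea concrete, here is a minimal Python sketch of a Helmert transformation applied to geocentric (Earth-centered X, Y, Z) coordinates. It assumes the position-vector rotation convention and the small-angle approximation; the coordinate-frame convention used by some agencies flips the signs of the three rotations, so published parameters must be matched to their convention. The function name and the parameter values in the usage line are illustrative only (they are not official NAD27/NAD83 parameters), and converting latitude, longitude, and height to and from X, Y, Z is a separate step not shown here.

```python
import math

# Arc-seconds to radians; published Helmert rotations are usually in arc-seconds.
ARCSEC_TO_RAD = math.pi / (180.0 * 3600.0)


def helmert_7param(xyz, tx, ty, tz, rx_arcsec, ry_arcsec, rz_arcsec, scale_ppm):
    """Apply a 7-parameter Helmert transformation to geocentric XYZ (metres).

    Assumes the position-vector rotation convention and small rotation angles.
    Translations are in metres, rotations in arc-seconds, scale in parts per million.
    """
    x, y, z = xyz
    rx = rx_arcsec * ARCSEC_TO_RAD
    ry = ry_arcsec * ARCSEC_TO_RAD
    rz = rz_arcsec * ARCSEC_TO_RAD
    m = 1.0 + scale_ppm * 1e-6  # scale factor applied to the rotated vector

    # Small-angle rotation matrix (position-vector convention) applied to (x, y, z),
    # then scaled and translated.
    x2 = tx + m * (x - rz * y + ry * z)
    y2 = ty + m * (rz * x + y - rx * z)
    z2 = tz + m * (-ry * x + rx * y + z)
    return x2, y2, z2


# Hypothetical parameters applied to an arbitrary geocentric point.
print(helmert_7param((1_100_000.0, -4_800_000.0, 4_000_000.0),
                     1.0, -2.0, 0.5, 0.01, -0.02, 0.03, 0.5))
```

Rotations in real parameter sets are tiny (fractions of an arc-second), which is why the linearized rotation matrix is normally accurate enough.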

Q 5. Discuss common coordinate reference systems (e.g., WGS84, NAD83).

Several common coordinate reference systems are widely used:

- WGS84 (World Geodetic System 1984): This is a global, Earth-centered (geocentric) reference system, with its own reference ellipsoid, used by GPS and many GIS applications. It has been refined through a series of realizations and is the most widely used coordinate reference system globally.

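- NAD83 (North American Datum of 1983): This is a datum primarily used in North America, based on the GRS80 ellipsoid. It replaced NAD27 and represents the Earth’s shape in North America more accurately. Because NAD83 is fixed to the North American tectonic plate while WGS84 is global, the two were nearly identical when first defined but now differ by roughly one to two meters in much of North America.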

The choice between WGS84, NAD83, or other datums depends on the geographic area and the accuracy requirements of the project. For high-accuracy work within a region, a regional datum fixed to the local tectonic plate (such as NAD83 in North America) is often preferred, because the coordinates of ground features stay nearly constant over time. WGS84 is well suited to global applications and to work where meter-level differences between datums do not matter.

Q 6. How do you handle datum transformations in GIS software?

Most GIS software packages have built-in tools for handling datum transformations. The specific steps might vary slightly depending on the software, but the general process usually involves:

- Identifying the source and target datums: Determine the datum of the input data and the desired datum for the output.

- Selecting the appropriate transformation method: Choose a method that is accurate for the datums involved and the geographic extent of the data. This could involve selecting parameters or specifying a transformation grid.

- Applying the transformation: Use the software’s tools to transform the coordinates from the source datum to the target datum. This might involve a simple projection change, or a more complex datum transformation and projection change simultaneously.

- Verifying the results: After transformation, check its accuracy by comparing the transformed coordinates of known control points with their published values before fully relying on the transformed data.

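For example, in ArcGIS you would use the ‘Project’ tool to transform data into a new coordinate system; the ‘Define Projection’ tool only records the coordinate system of data whose spatial reference is missing or wrong and does not change the coordinates. QGIS provides similar functionality (for example, the ‘Reproject layer’ processing algorithm). These tools often suggest or automatically select transformation parameters or grids, but the choice should still be checked.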
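When a transformation grid is specified, the software looks up latitude and longitude shifts stored at the nodes of a regular grid and interpolates between them, typically bilinearly. The Python sketch below illustrates only that interpolation step. The `ShiftGrid` class, its fields, and the sample shift values are hypothetical: the sketch does not read real NTv2 or NADCON files, whose layout, units, and sign conventions differ.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ShiftGrid:
    """Hypothetical in-memory shift grid (not the NTv2 or NADCON file format)."""
    lat0: float  # latitude of the south-west node, degrees
    lon0: float  # longitude of the south-west node, degrees
    step: float  # node spacing in degrees, same in both directions
    # shifts[row][col] = (dlat, dlon) in arc-seconds; rows run south to north,
    # columns west to east; longitude shifts are taken as positive east here.
    shifts: List[List[Tuple[float, float]]]

    def interpolate(self, lat: float, lon: float) -> Tuple[float, float]:
        """Bilinearly interpolate the (dlat, dlon) shift at a point."""
        rows, cols = len(self.shifts), len(self.shifts[0])
        if rows < 2 or cols < 2:
            raise ValueError("grid needs at least 2 x 2 nodes")
        fy = (lat - self.lat0) / self.step  # fractional row position
        fx = (lon - self.lon0) / self.step  # fractional column position
        if not (0.0 <= fy <= rows - 1 and 0.0 <= fx <= cols - 1):
            raise ValueError("point lies outside the grid")
        # Lower-left node of the enclosing cell, clamped so that points on the
        # north or east edge still fall in the last cell.
        r = min(math.floor(fy), rows - 2)
        c = min(math.floor(fx), cols - 2)
        ty, tx = fy - r, fx - c  # position inside the cell, each in [0, 1]
        s00, s01 = self.shifts[r][c], self.shifts[r][c + 1]
        s10, s11 = self.shifts[r + 1][c], self.shifts[r + 1][c + 1]
        dlat, dlon = (
            s00[k] * (1 - tx) * (1 - ty) + s01[k] * tx * (1 - ty)
            + s10[k] * (1 - tx) * ty + s11[k] * tx * ty
            for k in range(2)
        )
        return dlat, dlon

    def apply(self, lat: float, lon: float) -> Tuple[float, float]:
        """Shift a point from the source datum to the target datum."""
        dlat, dlon = self.interpolate(lat, lon)
        return lat + dlat / 3600.0, lon + dlon / 3600.0


# A made-up 2 x 2 grid covering 40-41°N, 80-79°W.
grid = ShiftGrid(40.0, -80.0, 1.0, [[(0.0, 0.0), (2.0, 2.0)],
                                    [(4.0, 4.0), (6.0, 6.0)]])
print(grid.interpolate(40.5, -79.5))  # (3.0, 3.0)
```

Points outside the grid raise an error rather than being extrapolated, which mirrors the general rule that a grid transformation is only valid within its published coverage area.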

Q 7. What are the implications of using an incorrect spatial reference system?

Using an incorrect spatial reference system can lead to significant errors and problems in spatial analysis and data visualization. The consequences can range from minor inaccuracies to major misinterpretations and flawed results.

- Inaccurate measurements and distances: Incorrect datums and projections lead to errors in calculating distances, areas, and other spatial metrics.

- Spatial misalignment: Layers and features might not overlay correctly, leading to inaccurate analysis and visualization. For example, if datasets from different sources use different datums and are merged without a datum transformation, corresponding features will be offset from one another.

- Incorrect spatial relationships: Analyses relying on proximity, intersection, or other spatial relationships may yield incorrect outcomes if the data isn’t in a consistent spatial reference system.

- Errors in decision-making: In applications like urban planning, environmental management, or resource allocation, inaccurate spatial data can lead to wrong decisions with significant real-world implications.

Therefore, verifying and managing spatial reference systems accurately is fundamental to reliable spatial analysis.
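A common way to end up with wrong distances is to treat latitude and longitude as if they were planar units. The minimal Python sketch below contrasts that mistake with a great-circle (haversine) distance on a spherical Earth. The sphere is itself an approximation (ellipsoidal methods such as Vincenty’s or Karney’s are more accurate), and the function names are illustrative.

```python
import math

EARTH_RADIUS_M = 6_371_008.8  # mean Earth radius; a spherical approximation


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two lat/lon points (degrees)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def naive_degree_distance(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """The mistake: Pythagoras on raw degrees, as if they were planar units."""
    return math.hypot(lat2 - lat1, lon2 - lon1)


# One degree of longitude at the equator and at 60°N.
for lat in (0.0, 60.0):
    print(lat, naive_degree_distance(lat, 0.0, lat, 1.0), round(haversine_m(lat, 0.0, lat, 1.0)))
```

The naive method reports the same "1 degree" in both cases, while the real ground distance at 60°N is only about half of the equatorial value, so any buffer, nearest-neighbor search, or density calculation built on raw degrees is distorted in a latitude-dependent way.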

Q 8. Explain the concept of map projections and their purpose.

Map projections are mathematical transformations that translate the three-dimensional surface of the Earth onto a two-dimensional map. Because it’s impossible to perfectly represent a sphere on a flat surface without distortion, projections introduce compromises. The purpose is to create a usable representation of the Earth’s surface for various applications, like navigation, land surveying, and geographic information systems (GIS).

Think of it like trying to flatten an orange peel – you’ll inevitably get some stretching or tearing. Map projections aim to minimize these distortions, but the type of distortion depends on the chosen projection.
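The stretching can be quantified. For the Mercator projection on a sphere of radius R, x = Rλ and y = R ln tan(π/4 + φ/2), and the linear scale factor at latitude φ is sec φ, so areas are exaggerated by sec² φ. The Python sketch below assumes a spherical Earth with R = 6,378,137 m (the radius Web Mercator uses), not the ellipsoidal Mercator used for precise work; the function names are illustrative.

```python
import math

R = 6_378_137.0  # sphere radius in metres (WGS84 semi-major axis, as in Web Mercator)


def mercator_forward(lat_deg: float, lon_deg: float, radius: float = R):
    """Spherical Mercator: geographic degrees -> projected metres."""
    if abs(lat_deg) >= 90.0:
        raise ValueError("Mercator cannot represent the poles")
    phi, lam = math.radians(lat_deg), math.radians(lon_deg)
    return radius * lam, radius * math.log(math.tan(math.pi / 4 + phi / 2))


def mercator_scale(lat_deg: float):
    """Linear scale factor k = sec(lat) and areal scale k**2 on the sphere."""
    k = 1.0 / math.cos(math.radians(lat_deg))
    return k, k * k


for lat in (0, 30, 60, 80):
    k, area = mercator_scale(lat)
    print(f"lat {lat:>2}°: distances x{k:.2f}, areas x{area:.2f}")
```

Because the scale factor is the same in every direction at a given point, shapes of small areas are preserved (conformality) even as sizes grow rapidly toward the poles.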

Q 9. Describe different types of map projections (e.g., Mercator, UTM).

There are many different map projections, each with its strengths and weaknesses. Some common examples include:

- Mercator Projection: This cylindrical projection is conformal, meaning it preserves shapes of small areas but significantly distorts area, especially near the poles. It’s famous for its use in nautical navigation because rhumb lines (lines of constant bearing) appear as straight lines.

- Universal Transverse Mercator (UTM): This is a transverse cylindrical projection that divides the Earth into 60 zones, each spanning 6 degrees of longitude, and is used between 80°S and 84°N (the polar regions use the Universal Polar Stereographic system instead). It keeps distortion low within each zone and is widely used for large-scale mapping and surveying projects because it provides relatively accurate distances and directions.

- Lambert Conformal Conic Projection: This conic projection is often used for mapping mid-latitude regions. It minimizes distortion along standard parallels (lines of latitude) and is particularly useful for representing areas that are long in the east-west direction.

- Albers Equal-Area Conic Projection: This projection is designed to preserve area, making it suitable for thematic mapping where area comparisons are important (e.g., population density maps).

These are just a few examples; many other projections exist, each designed to optimize different properties.
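Because UTM zones form a fixed 6-degree grid, the zone for a point can be computed directly from its longitude: zone 1 starts at 180°W, and the central meridian of zone z lies at −183° + 6z. The Python sketch below also maps the zone to the EPSG code of the corresponding WGS 84 / UTM coordinate system (326zz in the northern hemisphere, 327zz in the southern). It deliberately ignores the irregular zones around Norway and Svalbard, and the function names are illustrative.

```python
import math


def utm_zone(lon_deg: float) -> int:
    """Standard 6-degree UTM zone (1-60) for a longitude in degrees.

    Ignores the irregular zones around Norway and Svalbard.
    """
    if not -180.0 <= lon_deg <= 180.0:
        raise ValueError("longitude must be between -180 and 180 degrees")
    zone = math.floor((lon_deg + 180.0) / 6.0) + 1
    return min(zone, 60)  # longitude 180 belongs to zone 60, not 61


def central_meridian(zone: int) -> float:
    """Longitude of a zone's central meridian: zone 1 is centered on 177°W."""
    return -183.0 + 6.0 * zone


def wgs84_utm_epsg(lat_deg: float, lon_deg: float) -> int:
    """EPSG code of the WGS 84 / UTM system for a point: 326zz north, 327zz south."""
    if not -80.0 <= lat_deg <= 84.0:
        raise ValueError("UTM covers 80°S to 84°N; use UPS near the poles")
    return (32600 if lat_deg >= 0.0 else 32700) + utm_zone(lon_deg)


print(wgs84_utm_epsg(43.7, -79.4))  # 32617 (WGS 84 / UTM zone 17N)
```

Distortion is smallest near the central meridian, so data that straddles a zone boundary is often kept in a single zone rather than split, accepting somewhat larger distortion at the edge.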

Q 10. What are the advantages and disadvantages of different map projections?

The choice of map projection involves trade-offs. Here’s a comparison of some advantages and disadvantages:

- Mercator:

- Advantages: Preserves shape (conformality), straight rhumb lines are useful for navigation.

- Disadvantages: Severe area distortion at higher latitudes, Greenland appears much larger than it actually is relative to South America.

- UTM:

- Advantages: Minimizes distortion within zones, good for large-scale mapping and surveying.

- Disadvantages: Requires the use of multiple zones for large areas, distortion increases away from the central meridian of each zone.

- Lambert Conformal Conic:

- Advantages: Good shape preservation in mid-latitude regions.

- Disadvantages: Distortion increases away from standard parallels.

- Albers Equal-Area Conic:

- Advantages: Preserves area, suitable for thematic mapping.

- Disadvantages: Distorts shape, increasingly so away from the standard parallels.

Q 11. How do you select the appropriate projection for a specific application?

Selecting the appropriate map projection depends heavily on the specific application and the area being mapped. Consider these factors:

- Extent of the area: A small area might be adequately represented by a simple projection, while a large area might require a more complex projection or multiple zones.

- Purpose of the map: Navigation requires a projection that accurately represents direction, thematic mapping requires one that preserves area, and topographic mapping might require one that minimizes distortion in both shape and area.

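- Scale of the map: Large-scale maps cover small areas, where distortion stays small under almost any suitable projection, so the choice is less critical; small-scale maps cover large areas, where the projection’s distortion properties matter much more.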

- Desired properties: Choose a projection that prioritizes the most important properties (e.g., shape preservation, area preservation, distance preservation).

In practice, a GIS professional matches the project’s requirements and area of interest against a projection selection guide or expert advice, and often starts from an established regional or national system (such as the local UTM zone or State Plane zone) when one exists.

Q 12. Explain the concept of a geoid and its role in vertical datum definition.

The geoid is the equipotential surface of the Earth’s gravity field that best fits, in a least-squares sense, global mean sea level. In simpler terms, imagine the surface of the oceans, shaped only by gravity and the Earth’s rotation (no tides or currents), extended under the continents. Because mass is distributed unevenly within and on the Earth, this surface is irregular. Geoid models, computed from gravity measurements, approximate it, and they play a crucial role in defining vertical datums, which are reference surfaces used to measure elevation.

Elevations are typically referenced to a vertical datum, often tied to a specific geoid model. This ensures consistent height measurements across different locations and is important for tasks like flood modeling, surveying, and construction.

Q 13. What is the difference between ellipsoids and geoids?

Ellipsoids and geoids are both mathematical models of the Earth’s shape, but they represent different aspects:

- Ellipsoid: A mathematical approximation of the Earth’s shape using a slightly flattened sphere (oblate spheroid). It’s a smooth, regular surface defined by parameters like semi-major and semi-minor axes. Ellipsoids are primarily used for horizontal positioning (latitude and longitude).

- Geoid: The equipotential surface of the Earth’s gravity field that approximates global mean sea level. It’s an irregular surface that departs from the ellipsoid by up to about 100 meters because of variations in the Earth’s gravity. Geoids are primarily used for vertical positioning (elevation).

The key difference is that the ellipsoid is a smooth, mathematical surface, while the geoid is an irregular surface that reflects the uneven distribution of mass in and on the Earth. They are linked by the geoid height (or geoid undulation) N, the separation between the two surfaces: to a very good approximation, the ellipsoidal height h measured by GNSS equals the orthometric height H (height above the geoid) plus N, so h ≈ H + N. This is why both are often used together to provide a complete spatial reference.
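Ellipsoidal height h (for example, from GNSS), orthometric height H, and geoid height N (the separation between geoid and ellipsoid) are related, to a very good approximation, by h ≈ H + N. A minimal Python sketch, assuming N has already been looked up from a geoid model at the point; the values below are hypothetical, not taken from any real model.

```python
def orthometric_height(h_ellipsoidal: float, n_geoid: float) -> float:
    """Height above the geoid: H ≈ h - N (all values in metres)."""
    return h_ellipsoidal - n_geoid


def ellipsoidal_height(h_orthometric: float, n_geoid: float) -> float:
    """Inverse relation: h ≈ H + N (all values in metres)."""
    return h_orthometric + n_geoid


# Hypothetical GNSS height and geoid height at one point.
h = 120.0  # ellipsoidal height from GNSS
N = -34.0  # geoid height: here the geoid lies 34 m below the ellipsoid
print(orthometric_height(h, N))  # 154.0
```

Getting the sign of N wrong shifts every elevation by twice the geoid height, a classic error when mixing GNSS heights with elevations from leveling or a DEM.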

Q 14. How do you determine the accuracy of spatial data?

Determining the accuracy of spatial data is crucial for ensuring reliable results in any spatial application. Several factors contribute to the overall accuracy and must be carefully examined. These include:

- Positional Accuracy: This refers to the accuracy of the coordinates of spatial features. It is often expressed as a root mean square error (RMSE), computed from the differences between the dataset’s coordinates and independent, higher-accuracy check points, or as a circular error probable (CEP), the radius of a circle expected to contain 50% of the positions around their true locations. Data sources like GPS, LiDAR, and aerial photography have different inherent positional accuracies.

- Attribute Accuracy: This refers to the accuracy of non-spatial information associated with spatial features, such as land use classifications or population counts. Data quality is often evaluated by comparing against known ground truth data.

- Completeness: This measures whether all features of interest have been captured in the dataset. Gaps or missing features will impact the reliability of analysis.

- Logical Consistency: The dataset must be logically consistent; for example, the topology of a road network should be correct, and there should be no overlapping polygons.

- Temporal Accuracy: For data collected over time, the accuracy of recorded dates and times, and whether the data are current enough for the intended use, also matter.

Determining accuracy involves comparing the spatial data to a known standard or performing statistical analysis on the data itself. Metadata associated with spatial data often provides information about its expected accuracy, but independent validation is often necessary.
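As a sketch of the statistical side, the Python below computes horizontal RMSE and an empirical CEP (the median radial error) from check points whose true coordinates come from an independent, higher-accuracy source. It also computes the 95% horizontal accuracy figure that the US National Standard for Spatial Data Accuracy (NSSDA) derives as 1.7308 × RMSE_r when the x and y errors are of similar size. The coordinates are hypothetical and assumed to be in a projected CRS with metre units; real assessments use many more points (NSSDA calls for at least 20).

```python
import math
from statistics import median
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def radial_errors(measured: Sequence[Point], reference: Sequence[Point]) -> List[float]:
    """Horizontal distance between each measured point and its reference point."""
    if len(measured) != len(reference) or not measured:
        raise ValueError("need matching, non-empty point lists")
    return [math.hypot(mx - rx, my - ry) for (mx, my), (rx, ry) in zip(measured, reference)]


def rmse_r(measured: Sequence[Point], reference: Sequence[Point]) -> float:
    """Horizontal (radial) root mean square error."""
    errs = radial_errors(measured, reference)
    return math.sqrt(sum(e * e for e in errs) / len(errs))


def empirical_cep(measured: Sequence[Point], reference: Sequence[Point]) -> float:
    """Radius containing half of the check points: the median radial error."""
    return median(radial_errors(measured, reference))


def nssda_horizontal_95(measured: Sequence[Point], reference: Sequence[Point]) -> float:
    """NSSDA 95% horizontal accuracy, valid when RMSE_x and RMSE_y are similar."""
    return 1.7308 * rmse_r(measured, reference)


# Hypothetical check points (metres): surveyed "truth" vs. the dataset's coordinates.
truth = [(1000.0, 2000.0), (1500.0, 2100.0), (1200.0, 2600.0), (1800.0, 2400.0)]
data = [(1003.0, 2004.0), (1500.0, 2100.0), (1206.0, 2608.0), (1800.0, 2401.0)]
print(rmse_r(data, truth), empirical_cep(data, truth), nssda_horizontal_95(data, truth))
```

Note how the single 10 m error dominates the RMSE but barely moves the median, which is why reporting more than one statistic is useful.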

Q 15. Describe different methods for georeferencing imagery or data.

Georeferencing is the process of assigning real-world coordinates (geographic, such as latitude and longitude, or projected) to points on an image or dataset, thus linking it to a known spatial reference system. Think of it like adding a map grid to a photo to show its location on Earth. Several methods exist, each with its own strengths and weaknesses:

Ground Control Points (GCPs): This is the most common method. We locate easily recognizable features (like building corners or road intersections) in both the imagery and a reference source (e.g., an already georeferenced high-resolution aerial photo, a topographic map, or surveyed coordinates). Software then uses these matched points to calculate a transformation that aligns the imagery to the reference coordinate system. This is analogous to aligning two puzzle pieces by matching corresponding features.

Direct Georeferencing: If the sensor’s position and orientation at the moment of capture were recorded (for example, by a GNSS receiver and an inertial measurement unit on a drone or survey aircraft), ground coordinates can be computed directly from that information without ground control. It’s faster and simpler than GCP-based methods but relies on the accuracy and calibration of the onboard position and orientation data.

Indirect Georeferencing: This determines the camera’s position and orientation indirectly, from ground control points or other existing spatial information (for example, through space resection or aerial triangulation), and then uses that information to geometrically correct the image.

Image Matching: This automated method uses algorithms to identify similar features in the imagery and a reference dataset to automatically find matching points for georeferencing. It’s efficient but requires high-quality imagery and sometimes manual intervention for refinement.

The choice of method depends on factors such as data availability, accuracy requirements, and available resources. For example, high-accuracy mapping projects might rely on GCPs, while quickly georeferencing a casual photograph might use direct georeferencing.
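The GCP workflow can be sketched as a least-squares fit of a first-order polynomial (affine) transformation, X = a0 + a1·x + a2·y and Y = b0 + b1·x + b2·y, which maps image coordinates (x, y) to map coordinates (X, Y). Six parameters need at least three well-spread GCPs; extra points add redundancy and residuals that reveal badly placed GCPs. This is a minimal pure-Python sketch under those assumptions, with illustrative function names and hypothetical coordinates; many georeferencing tools offer this alongside higher-order polynomials and rubber-sheeting methods.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def _solve3(m: List[List[float]], v: List[float]) -> List[float]:
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    a = [list(m[i]) + [v[i]] for i in range(3)]  # augmented matrix (copy)
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(a[r][col]))
        if abs(a[pivot][col]) < 1e-12:  # simple absolute threshold for a sketch
            raise ValueError("degenerate GCP layout (e.g. all points collinear)")
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(col + 1, 3):
            f = a[r][col] / a[col][col]
            for c in range(col, 4):
                a[r][c] -= f * a[col][c]
    x = [0.0, 0.0, 0.0]
    for r in (2, 1, 0):  # back substitution
        x[r] = (a[r][3] - sum(a[r][c] * x[c] for c in range(r + 1, 3))) / a[r][r]
    return x


def fit_affine(src: Sequence[Point], dst: Sequence[Point]):
    """Least-squares affine fit from image coords (src) to map coords (dst)."""
    if len(src) != len(dst) or len(src) < 3:
        raise ValueError("need at least three matching GCPs")
    ata = [[0.0] * 3 for _ in range(3)]  # normal matrix A^T A, rows of A are [1, x, y]
    atx, aty = [0.0] * 3, [0.0] * 3      # right-hand sides A^T X and A^T Y
    for (x, y), (X, Y) in zip(src, dst):
        row = (1.0, x, y)
        for i in range(3):
            atx[i] += row[i] * X
            aty[i] += row[i] * Y
            for j in range(3):
                ata[i][j] += row[i] * row[j]
    return _solve3(ata, atx), _solve3(ata, aty)


def apply_affine(params, x: float, y: float) -> Point:
    a, b = params
    return a[0] + a[1] * x + a[2] * y, b[0] + b[1] * x + b[2] * y


def residuals(params, src: Sequence[Point], dst: Sequence[Point]) -> List[float]:
    """Distance between each GCP's map coordinates and its transformed image coordinates."""
    out = []
    for (x, y), (X, Y) in zip(src, dst):
        px, py = apply_affine(params, x, y)
        out.append(((px - X) ** 2 + (py - Y) ** 2) ** 0.5)
    return out


# Hypothetical GCPs: pixel (column, row) -> projected metres (2 m pixels, rows increase southward).
src = [(0.0, 0.0), (100.0, 0.0), (0.0, 100.0), (100.0, 100.0)]
dst = [(500000.0, 4000000.0), (500200.0, 4000000.0), (500000.0, 3999800.0), (500200.0, 3999800.0)]
params = fit_affine(src, dst)
print(apply_affine(params, 50.0, 50.0), residuals(params, src, dst))
```

In practice, the residuals (and their RMSE) are the main quality check: a GCP with a residual far larger than the others usually indicates a misidentified feature.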

Q 16. Explain the concept of spatial accuracy and precision.

Spatial accuracy and precision are often confused but represent distinct aspects of spatial data quality. Think of it like shooting a target:

Accuracy refers to how close the measured values are to the true values. High accuracy means the measurements are very close to the actual locations. In our target analogy, this is how close the shots are to the bullseye.

Precision refers to how close the measured values are to each other. High precision indicates the measurements are clustered together, even if they’re not close to the true value. In our analogy, this is how tightly clustered the shots are, regardless of whether they hit the bullseye.

For example, a GPS device might consistently report locations within a 1-meter radius (high precision), but those locations may be consistently offset by 5 meters from their true positions (low accuracy). Conversely, measurements could be scattered widely (low precision) but the average location could be quite close to the true position (high accuracy).
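The GPS example can be put into numbers. A minimal Python sketch, assuming repeated fixes of one point in a projected CRS with metre units and a known true position (all values hypothetical): the distance from the average fix to the truth measures accuracy (bias), and the RMS scatter of the fixes around their own average measures precision.

```python
import math
from statistics import mean
from typing import Sequence, Tuple

Point = Tuple[float, float]


def accuracy_and_precision(fixes: Sequence[Point], truth: Point) -> Tuple[float, float]:
    """Return (bias, spread) for repeated position fixes in a projected CRS (metres).

    bias:   distance from the average fix to the true position (accuracy of the mean)
    spread: RMS distance of the fixes from their own average (precision)
    """
    ce = mean(e for e, _ in fixes)
    cn = mean(n for _, n in fixes)
    bias = math.hypot(ce - truth[0], cn - truth[1])
    spread = math.sqrt(mean((e - ce) ** 2 + (n - cn) ** 2 for e, n in fixes))
    return bias, spread


# Hypothetical fixes: tightly clustered (precise) but about 5 m east of the truth (inaccurate).
fixes = [(5.5, 0.0), (4.5, 0.0), (5.0, 0.5), (5.0, -0.5)]
print(accuracy_and_precision(fixes, (0.0, 0.0)))  # (5.0, 0.5)
```

A large bias with a small spread points to a systematic error (for example, a datum mismatch), which averaging more fixes will not remove.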

Q 17. What are common sources of error in spatial data?

Errors in spatial data are common and can arise from various sources:

Measurement Errors: Inaccuracies in the original data collection methods (e.g., GPS errors, inaccurate surveying techniques). For example, using an older GPS device with low accuracy.

Data Processing Errors: Errors introduced during data processing, such as misalignment, incorrect transformations, or faulty algorithms. An example would be applying a wrong projection during conversion.

Data Representation Errors: Limitations in how spatial data is represented (e.g., generalization, simplification). A map showing roads as lines instead of their actual widths is an example of simplification.

Conceptual Errors: Errors arising from misinterpretations or incorrect assumptions about the data. An example is using the wrong coordinate system for a project.

Attribute Errors: Errors in the descriptive information associated with spatial features (e.g., incorrect addresses, mislabeled features). For example, a database incorrectly listing a building’s height.

Understanding these sources of error is crucial for assessing data quality and applying appropriate error correction techniques.

Q 18. How do you address inconsistencies in spatial data?

Addressing inconsistencies in spatial data often requires a multi-step approach:

Data Cleaning: This involves identifying and correcting obvious errors, such as duplicate entries, missing values, and inconsistencies in attribute data. This often requires scripting or using data cleaning tools.

Data Transformation: Converting data into a consistent format, coordinate system, and projection. This might involve reprojection or resampling.

Data Integration: Combining data from multiple sources, ensuring consistency in attributes and geometry. This can involve spatial joins and overlays.

Data Validation: Using automated checks and visual inspections to verify the consistency and accuracy of the data. This ensures any changes made don’t introduce new errors.

Spatial Adjustment Techniques: Applying techniques like rubber sheeting or affine transformations to reduce positional discrepancies.

The specific approach depends on the nature and extent of the inconsistencies. For example, minor discrepancies can be addressed through simple data cleaning, whereas major inconsistencies might require more complex data transformation and integration techniques.

Q 19. Describe your experience with specific GIS software (e.g., ArcGIS, QGIS).

I have extensive experience with both ArcGIS and QGIS, using them for various geospatial projects. In ArcGIS, I’m proficient in utilizing geoprocessing tools for tasks like spatial analysis, raster processing, and data management. For example, I used ArcGIS Pro’s ModelBuilder to automate complex workflows for processing large LiDAR datasets, improving efficiency significantly.

With QGIS, I appreciate its open-source nature and flexibility, particularly for tasks involving data visualization and custom scripting. I’ve used QGIS’s Processing Toolbox extensively to perform batch geoprocessing operations and created custom plugins using Python to enhance its functionality. A recent example involved developing a plugin to automate the conversion of large raster datasets into vector data for efficient analysis.

Q 20. How do you manage large geospatial datasets?

Managing large geospatial datasets effectively involves several key strategies:

Data Partitioning: Dividing large datasets into smaller, manageable chunks to improve processing speed and reduce memory usage. This is analogous to splitting a large document into smaller chapters.

Database Management Systems (DBMS): Utilizing spatial DBMS such as PostGIS (with PostgreSQL) or Oracle Spatial for efficient data storage, retrieval, and querying. These systems are optimized for handling the complexities of spatial data.

Cloud Computing: Leveraging cloud platforms like Amazon Web Services (AWS) or Google Cloud Platform (GCP) to store and process large datasets, taking advantage of their scalable infrastructure.

Data Compression: Applying appropriate compression techniques (e.g., lossless compression for preserving data integrity) to reduce storage space and improve transfer speeds.

Data Optimization: Techniques like creating spatial indexes to speed up spatial queries and optimizing data structures for faster processing.

The specific techniques employed will depend on the size and nature of the data, available resources, and project requirements. For instance, a large-scale environmental monitoring project might necessitate the use of cloud computing and optimized spatial databases, whereas a smaller-scale project could manage data effectively using local storage and data partitioning.
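To illustrate what a spatial index buys you, the Python sketch below implements a uniform-grid index over feature bounding boxes: a query first collects candidates from the grid cells it touches (filter) and then runs an exact bounding-box test (refine). Spatial databases such as PostGIS use GiST-based R-tree indexes rather than a fixed grid; this simpler structure, its class name, and the cell size are assumptions made for illustration.

```python
import math
from collections import defaultdict
from typing import DefaultDict, Dict, Iterator, List, Tuple

BBox = Tuple[float, float, float, float]  # (min_x, min_y, max_x, max_y) in projected units


class GridIndex:
    """Uniform-grid spatial index over feature bounding boxes (illustrative only)."""

    def __init__(self, cell_size: float):
        self.cell_size = cell_size
        self.cells: DefaultDict[Tuple[int, int], List[int]] = defaultdict(list)
        self.boxes: Dict[int, BBox] = {}

    def _cells(self, box: BBox) -> Iterator[Tuple[int, int]]:
        """Grid cells (column, row) that a bounding box touches."""
        min_x, min_y, max_x, max_y = box
        for i in range(math.floor(min_x / self.cell_size), math.floor(max_x / self.cell_size) + 1):
            for j in range(math.floor(min_y / self.cell_size), math.floor(max_y / self.cell_size) + 1):
                yield i, j

    def insert(self, fid: int, box: BBox) -> None:
        self.boxes[fid] = box
        for key in self._cells(box):
            self.cells[key].append(fid)

    def query(self, box: BBox) -> List[int]:
        """IDs of features whose bounding boxes intersect `box`."""
        min_x, min_y, max_x, max_y = box
        hits = set()
        for key in self._cells(box):
            # Filter step: only features registered in the touched cells.
            for fid in self.cells.get(key, []):
                bx0, by0, bx1, by1 = self.boxes[fid]
                # Refine step: exact bounding-box overlap test.
                if bx0 <= max_x and bx1 >= min_x and by0 <= max_y and by1 >= min_y:
                    hits.add(fid)
        return sorted(hits)


index = GridIndex(cell_size=10.0)
index.insert(1, (0.0, 0.0, 5.0, 5.0))
index.insert(2, (20.0, 20.0, 25.0, 25.0))
index.insert(3, (4.0, 4.0, 12.0, 12.0))
print(index.query((3.0, 3.0, 6.0, 6.0)))  # [1, 3]
```

A query only inspects features in nearby cells instead of scanning the whole dataset; the cell size is a tuning choice, and a poor choice for unevenly distributed data is one reason tree-based indexes are preferred in production databases.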

Q 21. Explain your experience with spatial data formats (e.g., shapefiles, GeoTIFF, GeoJSON).

I have worked extensively with various spatial data formats, each with its own strengths and weaknesses:

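Shapefiles: A widely used vector format, actually a set of files (.shp, .shx, .dbf, and usually a .prj that stores the coordinate system), representing geographic features as points, lines, or polygons. Simple and widely supported, but limited: field names are at most 10 characters, each component file is limited to 2 GB, and metadata handling and attribute types are restricted. A missing .prj file is a common cause of data with an undefined spatial reference. I commonly use shapefiles for storing relatively small vector datasets for tasks such as mapping road networks or building footprints.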

GeoTIFF: A raster format that incorporates georeferencing information directly into the file, making it easy to handle and integrate into GIS software. Its support for various compression methods makes it suitable for handling large raster datasets. I often use GeoTIFF for satellite imagery and elevation data.

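GeoJSON: A text-based, open standard vector format (RFC 7946) ideal for web mapping and data exchange. It’s lightweight, human-readable, and easily parsed by various applications. RFC 7946 specifies that coordinates are WGS 84 longitude and latitude, in that order, so projected data normally needs to be transformed before export. Its use has become prevalent in web-based GIS applications where data needs to be efficiently exchanged between servers and clients.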

The choice of format depends heavily on the application. For example, shapefiles might be suitable for simple vector data while GeoTIFF is preferred for raster data, and GeoJSON is favored for web-based applications that need to handle data in a lightweight format.
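GeoJSON also ties the format question back to spatial references: RFC 7946 specifies WGS 84 longitude/latitude coordinates (longitude first) and dropped the older `crs` member. The minimal Python sketch below (standard library only; the function names are illustrative) flags coordinates that cannot be valid longitude/latitude values, which catches projected coordinates and some swapped pairs. It cannot detect a swap when both values happen to be in range, such as [43.7, -79.4].

```python
import json
from typing import Any, Iterator, List


def _positions(coords: Any) -> Iterator[List[float]]:
    """Yield every position ([x, y] or [x, y, z]) in a nested coordinates array."""
    if coords and isinstance(coords[0], (int, float)):
        yield coords
    else:
        for part in coords:
            yield from _positions(part)


def _geometry_positions(geom: Any) -> Iterator[List[float]]:
    if geom is None:  # features may have a null geometry
        return
    if geom["type"] == "GeometryCollection":
        for g in geom["geometries"]:
            yield from _geometry_positions(g)
    else:
        yield from _positions(geom["coordinates"])


def check_rfc7946_coords(text: str) -> List[str]:
    """Flag content in a FeatureCollection that cannot be RFC 7946 lon/lat."""
    fc = json.loads(text)
    problems = []
    if "crs" in fc:
        problems.append("'crs' member present: RFC 7946 removed it, so the data may not be WGS 84 lon/lat")
    for i, feature in enumerate(fc.get("features", [])):
        for pos in _geometry_positions(feature.get("geometry")):
            lon, lat = pos[0], pos[1]
            if not (-180.0 <= lon <= 180.0 and -90.0 <= lat <= 90.0):
                problems.append(f"feature {i}: {pos[:2]} is not a valid lon/lat pair")
    return problems


utm_line = ('{"type": "FeatureCollection", "features": [{"type": "Feature", "properties": {}, '
            '"geometry": {"type": "LineString", "coordinates": [[630000.0, 4835000.0], [630100.0, 4835100.0]]}}]}')
for problem in check_rfc7946_coords(utm_line):
    print(problem)
```

A check like this is cheap to run before publishing data to a web map, where projected coordinates would otherwise silently fail to display or land far off the map.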

Q 22. Describe your experience with spatial analysis techniques.

Spatial analysis techniques are the heart of extracting meaningful information from geographic data. My experience encompasses a wide range of these techniques, from basic operations like buffering and overlay analysis to more advanced methods such as spatial interpolation, network analysis, and geostatistics. I’m proficient in using various Geographic Information Systems (GIS) software packages like ArcGIS and QGIS to perform these analyses.

For instance, I’ve used spatial interpolation (e.g., kriging) to estimate rainfall patterns across a region with sparse weather station data. This involved understanding the underlying statistical assumptions and selecting appropriate interpolation methods based on the data characteristics. Another example is my work on network analysis where I optimized delivery routes for a logistics company by considering road networks, traffic patterns, and delivery time windows. This required proficiency in shortest-path algorithms and network constraint modeling.

Furthermore, I have extensive experience in using spatial statistics to analyze point patterns, identify spatial autocorrelation, and perform spatial regression analysis to understand relationships between different geographic variables. This includes understanding concepts like Moran’s I and Geary’s C for spatial autocorrelation analysis.
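For reference, global Moran’s I is I = (n / S0) · Σi Σj wij (xi − x̄)(xj − x̄) / Σi (xi − x̄)², where wij are spatial weights and S0 is their sum. Values above the no-autocorrelation expectation of −1/(n − 1) indicate clustering of similar values; values below it indicate a checkerboard-like pattern. The minimal Python sketch below computes the statistic only, with no significance test (libraries such as PySAL provide permutation-based inference), and uses a hypothetical four-cell example with binary contiguity weights.

```python
from typing import Sequence


def morans_i(values: Sequence[float], weights: Sequence[Sequence[float]]) -> float:
    """Global Moran's I for values x_i with spatial weights w_ij (w_ii = 0)."""
    n = len(values)
    if n < 2 or len(weights) != n or any(len(row) != n for row in weights):
        raise ValueError("need n >= 2 values and an n x n weight matrix")
    mean = sum(values) / n
    dev = [v - mean for v in values]
    s0 = sum(sum(row) for row in weights)  # sum of all weights
    num = sum(weights[i][j] * dev[i] * dev[j] for i in range(n) for j in range(n))
    den = sum(d * d for d in dev)
    if s0 == 0 or den == 0:
        raise ValueError("weights or values give an undefined statistic")
    return (n / s0) * (num / den)


# Four cells in a row; neighbours share an edge (binary contiguity weights).
w = [[0, 1, 0, 0],
     [1, 0, 1, 0],
     [0, 1, 0, 1],
     [0, 0, 1, 0]]
print(morans_i([1, 2, 3, 4], w))  # smooth trend: positive I
print(morans_i([1, 4, 1, 4], w))  # alternating pattern: negative I
```

The result depends heavily on how the weights are defined (contiguity, distance bands, k-nearest neighbours), so the weighting scheme should be reported alongside the statistic.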

Q 23. How do you ensure data quality and integrity in spatial data management?

Data quality and integrity are paramount in spatial data management. My approach involves a multi-faceted strategy focused on prevention and detection. This begins with careful data acquisition and rigorous quality control checks at every stage of the process.

- Data Source Assessment: I meticulously evaluate the reliability and accuracy of source data, considering factors such as data resolution, accuracy standards, and potential biases.

- Data Cleaning: This involves identifying and correcting errors like spatial inconsistencies, attribute errors, and topological errors. I use both manual and automated methods to accomplish this, depending on the complexity and size of the dataset. Techniques include identifying and removing duplicate records, correcting attribute values, and validating geometry using GIS tools.

- Metadata Management: Comprehensive metadata documentation is vital. This includes detailed information about data sources, projections, accuracy assessments, and processing history. This ensures traceability and facilitates data reuse and understanding.

- Data Validation: Regular validation checks are performed using both visual inspection and automated techniques. This involves verifying spatial relationships, checking for topology errors, and comparing data against known standards.

- Data Backup and Archiving: Robust backup and archiving strategies prevent data loss and facilitate data recovery in case of unforeseen circumstances.

For example, in a project involving land use data, I detected inconsistencies in polygon geometries using topological validation tools. This revealed overlaps and gaps that were corrected through editing and data reconciliation.

Q 24. Explain your understanding of metadata and its importance in spatial data.

Metadata is the descriptive information about spatial data. Think of it as a comprehensive label that provides all the essential details needed to understand, interpret, and use the data effectively. It’s crucial because it ensures data discoverability, interoperability, and reusability.

High-quality metadata should include information on the data’s origin, creation date, spatial extent, coordinate system, accuracy, attribute definitions, and any processing steps undertaken. Standard metadata schemas, like those defined by the ISO 19115 standard, are used to structure this information consistently. The importance lies in:

- Data Discoverability: Clear metadata allows users to easily find the relevant datasets for their needs.

- Data Interoperability: Standardized metadata facilitates data exchange between different systems and organizations.

- Data Quality Assessment: Metadata provides crucial information on data accuracy and limitations, which allows users to assess the suitability of the data for their intended use.

- Data Reusability: Well-documented metadata reduces the time and effort required to understand and use existing datasets, promoting efficiency.

Without comprehensive metadata, finding and using spatial data can become a significant challenge, leading to potential errors and misinterpretations.

Q 25. Describe a project where you had to deal with complex spatial reference systems.

In a recent project involving the integration of elevation data from multiple sources for a hydrological model, I encountered a complex scenario involving different spatial reference systems (SRS). The project involved integrating LiDAR data (projected in UTM Zone 17N), elevation contours from a topographic map (using a State Plane Coordinate System), and satellite-derived elevation data (using a geodetic datum different from the others).

The challenge was to ensure seamless integration despite these inconsistencies. My approach involved:

- SRS Identification and Definition: I meticulously identified and documented the SRS of each dataset using the EPSG codes and other metadata.

- Coordinate System Transformation: I utilized GIS software to perform accurate coordinate transformations, converting all datasets to a common SRS (WGS 84 UTM Zone 17N in this instance). I ensured the use of appropriate transformation parameters to minimize errors during projection.

- Accuracy Assessment: Following the transformation, I performed a thorough accuracy assessment to quantify any potential distortions or errors introduced during the transformation process.

- Data Validation: I performed spatial validation on the transformed data to verify consistency and identify any residual discrepancies.

This systematic approach produced a reliable, accurate digital elevation model that formed the basis for the hydrological modeling. Careful handling of the datum transformations and the choice of appropriate projections were critical to achieving this.
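The reprojection in this project was done in GIS software. To show what happens underneath, here is a minimal pure-Python sketch that projects WGS 84 latitude/longitude to UTM using the Transverse Mercator series published by Snyder (USGS Professional Paper 1395). It assumes the input is already referenced to WGS 84 (any datum shift is a separate step) and ignores the Norway/Svalbard zone exceptions. The function names are hypothetical, and production work should rely on a tested library such as PROJ.

```python
import math

# WGS 84 ellipsoid constants and the UTM scale factor on the central meridian.
A_WGS84 = 6378137.0
F_WGS84 = 1 / 298.257223563
K0 = 0.9996


def utm_zone(lon_deg):
    """Standard 6-degree UTM zone number (ignores the Norway/Svalbard exceptions)."""
    return min(int((lon_deg + 180.0) // 6.0) + 1, 60)


def wgs84_to_utm(lat_deg, lon_deg, zone=None):
    """Project WGS 84 latitude/longitude (degrees) to UTM easting/northing (metres).

    Uses Snyder's Transverse Mercator series. No datum shift is applied here.
    """
    if zone is None:
        zone = utm_zone(lon_deg)
    e2 = F_WGS84 * (2 - F_WGS84)          # first eccentricity squared
    ep2 = e2 / (1 - e2)                   # second eccentricity squared
    phi = math.radians(lat_deg)
    lam = math.radians(lon_deg)
    lam0 = math.radians(6 * zone - 183)   # central meridian of the zone

    n = A_WGS84 / math.sqrt(1 - e2 * math.sin(phi) ** 2)
    t = math.tan(phi) ** 2
    c = ep2 * math.cos(phi) ** 2
    a = (lam - lam0) * math.cos(phi)

    # Meridian arc length from the equator to latitude phi.
    m = A_WGS84 * (
        (1 - e2 / 4 - 3 * e2**2 / 64 - 5 * e2**3 / 256) * phi
        - (3 * e2 / 8 + 3 * e2**2 / 32 + 45 * e2**3 / 1024) * math.sin(2 * phi)
        + (15 * e2**2 / 256 + 45 * e2**3 / 1024) * math.sin(4 * phi)
        - (35 * e2**3 / 3072) * math.sin(6 * phi)
    )

    x = K0 * n * (a + (1 - t + c) * a**3 / 6
                  + (5 - 18 * t + t**2 + 72 * c - 58 * ep2) * a**5 / 120)
    y = K0 * (m + n * math.tan(phi) * (
        a**2 / 2
        + (5 - t + 9 * c + 4 * c**2) * a**4 / 24
        + (61 - 58 * t + t**2 + 600 * c - 330 * ep2) * a**6 / 720))

    easting = x + 500_000.0                                  # false easting
    northing = y + (10_000_000.0 if lat_deg < 0 else 0.0)    # false northing (south)
    return zone, easting, northing


zone, easting, northing = wgs84_to_utm(45.0, -81.0)
print(zone, round(easting, 1), round(northing, 1))
```

For a point on the zone 17N central meridian (81° W), the easting is exactly 500,000 m, which makes a convenient sanity check when comparing against GIS output.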

Q 26. How do you handle inconsistencies between different spatial datasets?

Handling inconsistencies between different spatial datasets is a common challenge in spatial data integration. My approach involves a structured process to identify, analyze, and resolve these inconsistencies.

- Data Reconciliation: This involves systematically comparing the datasets, identifying areas of overlap or conflict, and evaluating the reasons for the inconsistencies. The causes could be errors in data acquisition, different data resolutions, or varying update frequencies.

- Data Transformation: Sometimes, inconsistencies can be resolved by transforming one or more datasets to a common coordinate system, projection, or attribute structure. As mentioned earlier, careful attention must be paid to transformation methods and error analysis.

- Spatial Adjustment: If minor geometric discrepancies exist, spatial adjustment techniques might be employed to align features between datasets. This can include rubber-sheeting or other georeferencing methods.

- Attribute Reconciliation: Discrepancies in attribute values may require standardizing values (for example, consistent codes, units, and spellings) or using fuzzy matching techniques to find corresponding records across datasets. Cleansing the data beforehand makes both approaches more reliable.

- Data Integration Strategy: The choice of integration method depends on the nature of the inconsistencies and the project goals. Options include overlay analysis, spatial joins, or creating composite datasets.
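Rubber-sheeting applies local, piecewise adjustments, but the underlying idea of aligning one dataset to another through matched control points can be sketched with a simpler global affine transformation fitted by least squares. The sketch below is that simpler substitute, not rubber-sheeting itself. The function names are hypothetical, it assumes at least three non-collinear control-point pairs, and for large projected coordinates subtracting the centroid first would improve numerical conditioning.

```python
def _solve3(m, v):
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    a = [list(row) + [rhs] for row, rhs in zip(m, v)]
    scale = max(abs(val) for row in m for val in row) or 1.0
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        if abs(a[col][col]) < 1e-12 * scale:
            raise ValueError("control points are collinear or degenerate")
        for r in range(col + 1, 3):
            factor = a[r][col] / a[col][col]
            for k in range(col, 4):
                a[r][k] -= factor * a[col][k]
    sol = [0.0, 0.0, 0.0]
    for r in (2, 1, 0):  # back substitution
        sol[r] = (a[r][3] - sum(a[r][k] * sol[k] for k in range(r + 1, 3))) / a[r][r]
    return sol


def fit_affine(src, dst):
    """Least-squares affine parameters mapping src (x, y) control points onto dst.

    Model: x' = a0 + a1*x + a2*y,  y' = b0 + b1*x + b2*y.
    """
    if len(src) != len(dst) or len(src) < 3:
        raise ValueError("need at least three matched control-point pairs")
    rows = [(1.0, x, y) for x, y in src]
    # Normal equations (A^T A) p = A^T b, solved separately for x' and y'.
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    atx = [sum(r[i] * d[0] for r, d in zip(rows, dst)) for i in range(3)]
    aty = [sum(r[i] * d[1] for r, d in zip(rows, dst)) for i in range(3)]
    return _solve3(ata, atx), _solve3(ata, aty)


def apply_affine(params, x, y):
    """Apply parameters returned by fit_affine to one point."""
    (a0, a1, a2), (b0, b1, b2) = params
    return a0 + a1 * x + a2 * y, b0 + b1 * x + b2 * y


# Hypothetical control points: the source is slightly scaled and shifted.
src = [(0.0, 0.0), (100.0, 0.0), (0.0, 100.0), (100.0, 100.0)]
dst = [(10.0, 20.0), (110.2, 20.1), (9.9, 120.3), (110.1, 120.2)]
params = fit_affine(src, dst)
print(apply_affine(params, 50.0, 50.0))
```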

For example, if two datasets have slightly different boundaries for the same administrative unit, I would evaluate the source accuracy of each and decide how to resolve the differences: possibly by averaging corresponding vertex positions weighted by each source's accuracy, or by manually correcting the boundary against higher-resolution data.
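One way to implement the weighted average in the boundary example is inverse-variance weighting: each source's vertex position is weighted by 1/σ², where σ is that source's stated horizontal accuracy. This sketch assumes the vertices have already been matched one-to-one between datasets and that their errors are independent and unbiased; `weighted_vertex` is a hypothetical helper.

```python
import math


def weighted_vertex(estimates):
    """Combine (x, y, sigma) estimates of one vertex by inverse-variance weighting.

    Returns the weighted (x, y) and the standard deviation of the combined estimate.
    """
    if not estimates or any(s <= 0 for _, _, s in estimates):
        raise ValueError("need at least one estimate, each with sigma > 0")
    weights = [1.0 / s**2 for _, _, s in estimates]
    total = sum(weights)
    x = sum(w * e[0] for w, e in zip(weights, estimates)) / total
    y = sum(w * e[1] for w, e in zip(weights, estimates)) / total
    return x, y, math.sqrt(1.0 / total)


# Same boundary vertex from two hypothetical sources: 1 m and 2 m horizontal accuracy.
print(weighted_vertex([(100.0, 200.0, 1.0), (104.0, 204.0, 2.0)]))
```

With σ values of 1 m and 2 m, the more accurate source receives four times the weight, and the combined σ (about 0.89 m) is smaller than either input.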

Q 27. Describe your experience with spatial data validation and error correction.

Spatial data validation and error correction are crucial steps in ensuring data quality. My experience encompasses a range of validation techniques and error correction strategies. I routinely use both automated and manual methods, tailored to the specific data type and the nature of potential errors.

- Topological Validation: I use GIS software to check for topological errors such as overlaps and gaps in polygon datasets and dangling nodes in line datasets. These errors can significantly impact the accuracy of spatial analysis.

- Geometric Accuracy Assessment: I evaluate the geometric accuracy of spatial data by comparing it against known ground truth data or using accuracy assessments derived from the data acquisition methods. This helps quantify the level of uncertainty associated with the data.

- Attribute Consistency Checks: I verify attribute data using range checks (values within valid limits), cross-field consistency checks, and completeness checks (no missing required values). This helps identify and correct erroneous or missing attribute information.

- Data Editing and Correction: Once errors are identified, I employ appropriate editing tools to correct the data. This can range from simple attribute corrections to complex geometric editing using GIS software.

- Error Reporting and Documentation: Throughout the validation process, I meticulously document the identified errors, the methods used to correct them, and any residual uncertainties.
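Geometric accuracy against ground truth is commonly summarized as a root-mean-square error (RMSE) over independent check points. The sketch computes per-axis and radial RMSE and, as an optional extra, the NSSDA 95% horizontal accuracy statistic (1.7308 × RMSE_r), which that standard defines for the case where RMSE_x and RMSE_y are approximately equal. The function names and check-point values are hypothetical, and the check points are assumed to be substantially more accurate than the data being tested.

```python
import math


def horizontal_rmse(measured, reference):
    """Return (rmse_x, rmse_y, rmse_r) for matched check points, in input units."""
    if len(measured) != len(reference) or not measured:
        raise ValueError("need matching, non-empty lists of (x, y) points")
    n = len(measured)
    sum_dx2 = sum((m[0] - r[0]) ** 2 for m, r in zip(measured, reference))
    sum_dy2 = sum((m[1] - r[1]) ** 2 for m, r in zip(measured, reference))
    rmse_x = math.sqrt(sum_dx2 / n)
    rmse_y = math.sqrt(sum_dy2 / n)
    rmse_r = math.sqrt(rmse_x**2 + rmse_y**2)  # radial (horizontal) RMSE
    return rmse_x, rmse_y, rmse_r


def nssda_horizontal_95(rmse_r):
    """NSSDA 95% horizontal accuracy; intended for rmse_x approximately equal to rmse_y."""
    return 1.7308 * rmse_r


# Hypothetical check points: dataset coordinates vs. surveyed ground truth (metres).
data = [(1000.4, 2000.1), (1500.0, 2499.7), (1999.8, 3000.3)]
truth = [(1000.0, 2000.0), (1500.2, 2500.0), (2000.0, 3000.0)]
rx, ry, rr = horizontal_rmse(data, truth)
print(f"RMSE_x={rx:.3f} m  RMSE_y={ry:.3f} m  RMSE_r={rr:.3f} m  "
      f"NSSDA 95%={nssda_horizontal_95(rr):.3f} m")
```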

In a recent project, during the validation of a road network dataset, I identified several dangling nodes using topological validation. These were resolved by editing the road linework to correctly connect the nodes, improving the overall data quality.
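The dangling-node check from the road network example can be approximated by counting how many line ends meet at each endpoint: an endpoint used by only one line, and not lying on another line's interior vertex, is a candidate dangle. This is a simplified sketch with a hypothetical function name. It compares coordinates after rounding rather than using a true snapping tolerance, and it misses endpoints that touch the middle of a segment, so GIS topology rules remain the authoritative check. Legitimate dead ends such as cul-de-sacs are also flagged and need manual review.

```python
from collections import Counter


def dangling_nodes(lines, decimals=3):
    """Return candidate dangling endpoints in a line network.

    Each line is a sequence of (x, y) vertices. Coordinates are rounded to
    `decimals` places before comparison, a crude stand-in for a snap tolerance.
    """
    def key(pt):
        return (round(pt[0], decimals), round(pt[1], decimals))

    end_count = Counter()  # how many line ends meet at each location
    interior = set()       # interior vertices, where another line may connect
    for line in lines:
        end_count[key(line[0])] += 1
        end_count[key(line[-1])] += 1
        interior.update(key(p) for p in line[1:-1])
    return sorted(pt for pt, count in end_count.items()
                  if count == 1 and pt not in interior)


roads = [
    [(0.0, 0.0), (10.0, 0.0)],
    [(10.0, 0.0), (10.0, 10.0)],
    [(10.0, 10.0), (15.0, 10.0)],  # ends without connecting: flagged
    [(10.0, 10.0), (0.0, 0.0)],
]
print(dangling_nodes(roads))  # [(15.0, 10.0)]
```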

Q 28. Explain your knowledge of current trends and advancements in Spatial Reference Frame Realization.

The field of Spatial Reference Frame Realization is constantly evolving, driven by advancements in technology and the increasing demand for highly accurate and consistent geospatial data. Several key trends are shaping the future:

- Increased Use of Multi-Constellation GNSS (Global Navigation Satellite Systems): Combining multiple constellations (GPS, GLONASS, Galileo, BeiDou) increases the number of visible satellites, improving the accuracy and reliability of positioning. Techniques such as Real-Time Kinematic (RTK) positioning are increasingly used for high-precision surveying.

- Advancements in Inertial Navigation Systems (INS): INS technology is being combined with GNSS to improve positioning accuracy, particularly in areas with limited GNSS signal reception.

- Development of Advanced Geodetic Models: Ongoing efforts focus on more accurate reference frames and geoid models, such as successive realizations of the International Terrestrial Reference Frame (for example, ITRF2020) and higher-resolution geoid models that better represent the Earth’s gravity field. These improvements contribute to more accurate and consistent positioning.

- Improved Data Integration and Processing Techniques: Greater computing power and better algorithms make it faster and more accurate to integrate and process large geospatial datasets. Point cloud processing, for example, has become vital for creating high-resolution 3D models.

- Expansion of Crowdsourced Geospatial Data: The increasing use of crowdsourced data, such as data from smartphones and other mobile devices, is providing opportunities for more comprehensive and up-to-date spatial information. However, rigorous quality control procedures are essential to manage the variability in accuracy and reliability.

These advancements contribute to a better understanding of our planet and support infrastructure development, natural resource management, and many other applications. I stay abreast of these developments through professional journals, conferences, and online resources to ensure I’m using the best practices and technologies.

Key Topics to Learn for Spatial Reference Frame Realization Interview

- Datum Transformations: Understand different datum types (geocentric, local), transformation methods (e.g., Helmert, Molodensky-Badekas), and the implications of choosing the appropriate transformation for specific applications. Consider how the accuracy of the transformation parameters limits the accuracy of the transformed coordinates.

- Coordinate Systems: Master the differences between geographic (latitude/longitude) and projected coordinate systems (UTM, State Plane, etc.). Be prepared to discuss map projections, their properties (e.g., conformal, equal-area), and their suitability for various applications.

- Geodetic Networks and Control Points: Discuss the role of geodetic networks in defining and maintaining a spatial reference frame. Understand the concept of control points and their importance in geospatial data accuracy. Be ready to discuss different types of control points and their accuracies.

- Practical Applications: Be ready to discuss real-world examples where accurate spatial reference frame realization is critical. This could include applications in surveying, GIS, remote sensing, navigation, or precision agriculture. Prepare examples that demonstrate your understanding of practical challenges and solutions.

- Error Analysis and Propagation: Discuss how errors in the spatial reference frame can propagate through geospatial analyses and impact the accuracy of results. Be prepared to discuss methods for error detection, mitigation, and uncertainty quantification.

- Software and Tools: Familiarize yourself with common software and tools used in spatial reference frame realization (e.g., GIS software such as ArcGIS Pro or QGIS, coordinate transformation libraries such as PROJ, and geodetic processing packages). While specific software varies between employers, discussing your familiarity with relevant toolsets is valuable.

- Emerging Technologies: Stay updated on the latest advancements in technologies relevant to spatial reference frame realization, such as GNSS (Global Navigation Satellite Systems) and Inertial Navigation Systems. Show your passion for the field by staying current.
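To make the Helmert method listed under Datum Transformations concrete, here is a small-angle 7-parameter Helmert transformation applied to Earth-centred Cartesian (XYZ) coordinates, with a helper that converts geodetic coordinates to XYZ. It assumes the position-vector rotation convention (the coordinate-frame convention flips the rotation signs, a frequent source of errors) and WGS 84 ellipsoid constants, which should be swapped for the source datum's ellipsoid when it differs. The function names are hypothetical, the parameter values in the usage lines are invented for illustration, and real parameters should come from an authoritative source such as the EPSG dataset.

```python
import math

A = 6378137.0                       # WGS 84 semi-major axis (m)
F = 1 / 298.257223563               # WGS 84 flattening
E2 = F * (2 - F)                    # first eccentricity squared
ARCSEC = math.pi / (180.0 * 3600.0)  # radians per arc-second


def geodetic_to_ecef(lat_deg, lon_deg, h):
    """Geodetic latitude/longitude (degrees) and ellipsoidal height (m) to XYZ (m)."""
    phi, lam = math.radians(lat_deg), math.radians(lon_deg)
    n = A / math.sqrt(1 - E2 * math.sin(phi) ** 2)  # prime vertical radius
    return ((n + h) * math.cos(phi) * math.cos(lam),
            (n + h) * math.cos(phi) * math.sin(lam),
            (n * (1 - E2) + h) * math.sin(phi))


def helmert7(xyz, tx, ty, tz, rx_as, ry_as, rz_as, s_ppm):
    """Small-angle 7-parameter Helmert transformation, position-vector convention.

    Translations in metres, rotations in arc-seconds, scale in parts per million.
    For the coordinate-frame convention, negate the three rotations.
    """
    x, y, z = xyz
    rx, ry, rz = rx_as * ARCSEC, ry_as * ARCSEC, rz_as * ARCSEC
    m = 1.0 + s_ppm * 1e-6
    return (tx + m * (x - rz * y + ry * z),
            ty + m * (rz * x + y - rx * z),
            tz + m * (-ry * x + rx * y + z))


# Illustrative (invented) parameters, not an official transformation.
xyz = geodetic_to_ecef(45.0, -81.0, 200.0)
print(helmert7(xyz, 1.0, -0.5, 0.3, 0.01, -0.02, 0.015, 0.5))
```

Converting the result back to latitude, longitude, and height requires the inverse (XYZ to geodetic) conversion, which is omitted here for brevity.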

Next Steps

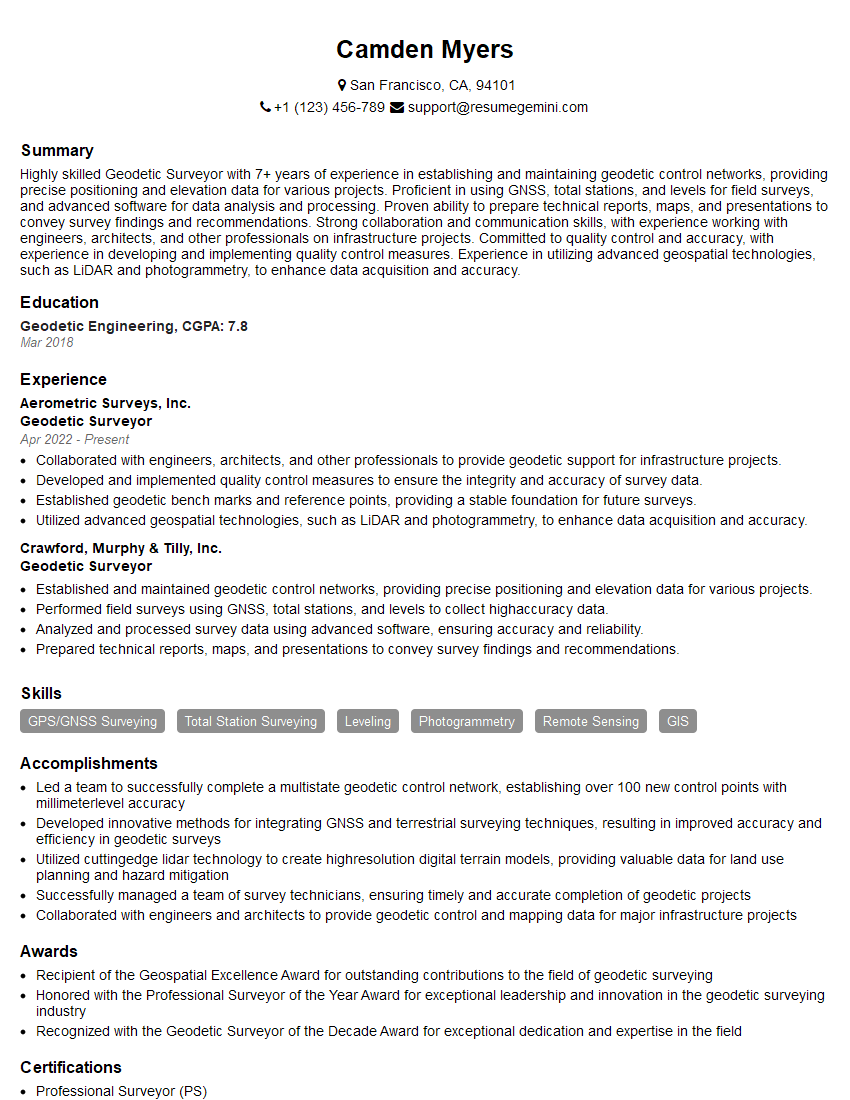

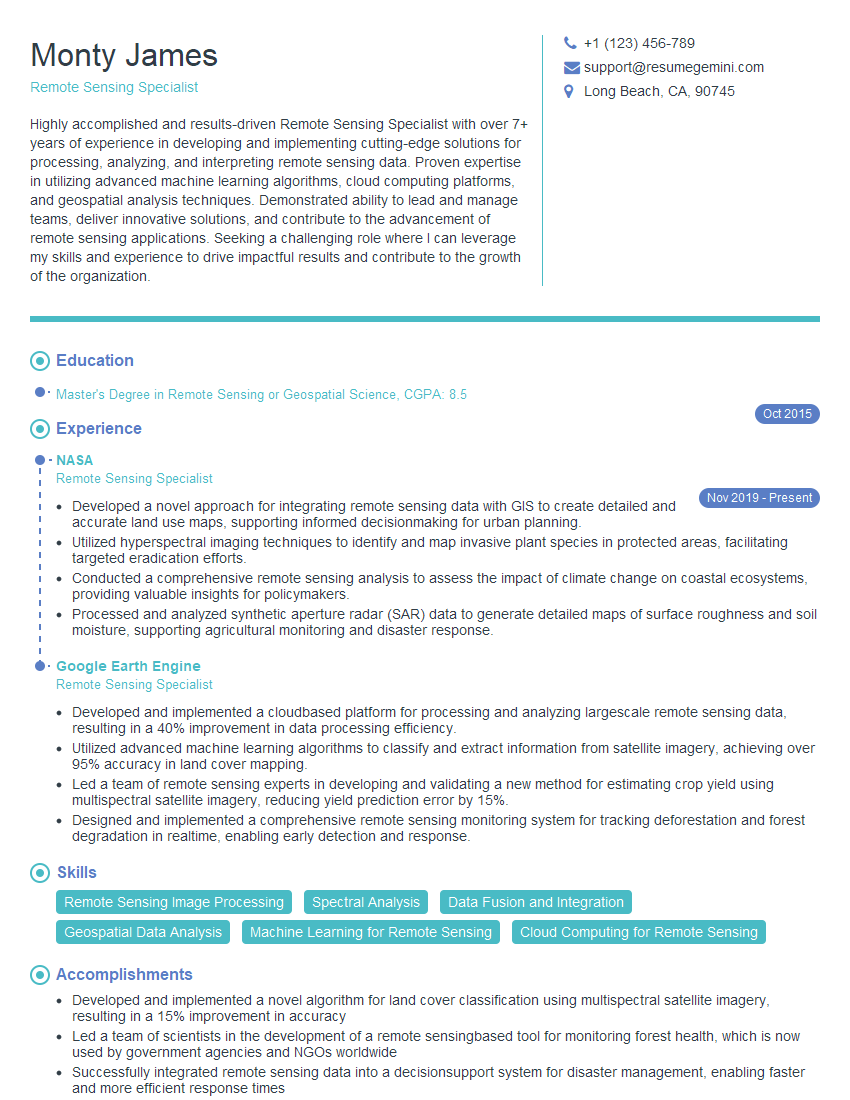

Mastering Spatial Reference Frame Realization significantly enhances your career prospects in geospatial science and related fields. It demonstrates a deep understanding of fundamental concepts and opens doors to exciting opportunities in research, development, and application. To maximize your job search success, crafting a compelling and ATS-friendly resume is crucial. ResumeGemini is a trusted resource that can help you build a professional resume that highlights your skills and experience effectively. Examples of resumes tailored to Spatial Reference Frame Realization are available, providing valuable templates and guidance to help you present yourself in the best possible light.
