Interviews are opportunities to demonstrate your expertise, and this guide is here to help you shine. Explore the essential Geodetic Data Standards and Best Practices interview questions that employers frequently ask, paired with strategies for crafting responses that set you apart from the competition.

Questions Asked in Geodetic Data Standards and Best Practices Interview

Q 1. Explain the importance of horizontal and vertical datums in geodetic surveying.

Horizontal and vertical datums are fundamental reference systems in geodetic surveying. Think of them as the underlying frameworks upon which all spatial measurements are based. A horizontal datum defines the position of points on the Earth’s surface as latitude and longitude on a reference ellipsoid, while a vertical datum defines the elevation or height of points relative to a reference surface, usually an approximation of mean sea level. Without these datums, measurements would be purely relative and could not be compared or integrated with other data sets. For example, a building located using the NAD83 datum will have slightly different coordinates than the same building located using the WGS84 datum. Similarly, elevations measured relative to a local tide gauge (a local vertical datum) aren’t directly comparable to elevations based on the North American Vertical Datum of 1988 (NAVD88).

The choice of datum is crucial. It impacts the accuracy and consistency of spatial analysis, particularly in large-scale projects or those requiring high precision. Inconsistent datum usage leads to errors in distance calculations, area computations, and the overlaying of different datasets. Choosing the appropriate datum depends on the geographical area, the project’s scale, and the required accuracy.

Q 2. Describe the different types of coordinate systems and their applications.

Coordinate systems are mathematical frameworks for expressing locations on the Earth’s surface. Several types exist, each with specific applications:

- Geographic Coordinate System (GCS): Uses latitude and longitude to define locations on a spherical or ellipsoidal model of the Earth. It’s suited for global positioning and small-scale (wide-area) mapping, for instance locating a city on a world map.

- Projected Coordinate System (PCS): Transforms the curved surface of the Earth into a flat plane, enabling accurate distance and area calculations within a defined region. Examples include UTM (Universal Transverse Mercator) – commonly used for large-scale mapping projects – and State Plane Coordinate Systems (SPCS) – designed for use within specific states in the US to minimize distortion.

- Grid Coordinate System: Uses a rectangular grid overlaid on a map or region, such as a local cadastral survey. These are frequently used in local engineering and land surveying projects, simplifying measurements.

The choice of coordinate system is project-specific. A global navigation satellite system (GNSS) such as GPS reports positions in a global geodetic reference frame (WGS 84 for GPS), typically presented as latitude, longitude, and ellipsoidal height, while local surveying often employs a PCS to minimize distortion within the project area. Mismatched coordinate systems can introduce significant errors unless the data are carefully transformed during integration.
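To make the relationship between geographic coordinates and the underlying ellipsoid concrete, here is a minimal sketch that converts latitude, longitude, and ellipsoidal height to Earth-centred, Earth-fixed (ECEF) Cartesian coordinates. The constants are the published WGS 84 defining parameters; the function name is illustrative, and a production workflow would normally rely on an established library rather than hand-written formulas.

```python
import math

# WGS 84 defining parameters
WGS84_A = 6378137.0            # semi-major axis in metres
WGS84_F = 1 / 298.257223563    # flattening


def geodetic_to_ecef(lat_deg, lon_deg, h=0.0):
    """Convert geodetic latitude/longitude (degrees) and ellipsoidal
    height (metres) to ECEF X, Y, Z in metres."""
    lat = math.radians(lat_deg)
    lon = math.radians(lon_deg)
    e2 = WGS84_F * (2 - WGS84_F)  # first eccentricity squared
    # Radius of curvature in the prime vertical
    n = WGS84_A / math.sqrt(1 - e2 * math.sin(lat) ** 2)
    x = (n + h) * math.cos(lat) * math.cos(lon)
    y = (n + h) * math.cos(lat) * math.sin(lon)
    z = (n * (1 - e2) + h) * math.sin(lat)
    return x, y, z


# A point on the equator at the prime meridian lies one semi-major axis
# from the Earth's centre along the X axis.
print(geodetic_to_ecef(0.0, 0.0))
```

Note that the height here is ellipsoidal, not an elevation above mean sea level; mixing the two is a common source of vertical error.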

Q 3. What are the key elements of a geodetic data standard, such as the ISO 19100 series?

Geodetic data standards, such as those defined in the ISO 19100 series, are crucial for ensuring interoperability and data quality. Key elements include:

- Metadata: Comprehensive information describing the data’s origin, acquisition methods, accuracy, coordinate system, and any other relevant details. This is akin to the label on a food item, providing crucial information for proper usage.

- Data Models: Standardized structures for representing geospatial data. This ensures consistency in how the data is organized and interpreted, preventing ambiguity.

- Encoding Standards: Methods for representing geospatial data in digital formats. Within the ISO 19100 series the main encoding is GML (ISO 19136); in practice, formats such as shapefiles, GeoJSON, and GeoTIFF are also widely exchanged. Encoding standards specify how the data should be structured to guarantee compatibility across different software platforms.

- Accuracy Standards: Specifications defining acceptable levels of precision and uncertainty in measurements. This is essential for ensuring the reliability of data used in critical applications like infrastructure management.

- Quality Control Measures: Processes and procedures for validating and verifying the quality of data throughout its lifecycle. This includes checks for consistency, completeness, and correctness.

Adherence to these standards facilitates data exchange, reduces errors, and improves the overall reliability and usability of geospatial information.

Q 4. How do you ensure the accuracy and precision of geodetic data?

Ensuring the accuracy and precision of geodetic data requires a multi-faceted approach:

- Precise Instruments: Utilizing high-precision GNSS receivers, total stations, and leveling instruments.

- Rigorous Field Procedures: Implementing strict protocols for data acquisition, including proper instrument calibration, atmospheric correction, and meticulous observation recording.

- Data Validation and Quality Control: Applying various techniques for error detection and correction, such as statistical analysis, blunder detection, and data consistency checks.

- Appropriate Data Processing Techniques: Employing suitable algorithms and software for data adjustment, transformation, and integration.

- Regular Calibration and Maintenance: Maintaining and calibrating surveying equipment to ensure their accuracy and reliability. Just like regular car maintenance, this is crucial.

For example, applying least-squares adjustment techniques to a network of GNSS observations allows us to determine the most probable coordinates of the points, thereby minimizing the impact of random errors. Furthermore, careful planning of survey designs minimizes the impact of systematic errors.
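The least-squares idea can be illustrated with a tiny levelling network rather than a full GNSS adjustment. In this sketch, benchmark A has a fixed height, the heights of B and C are unknown, and three height differences are observed with equal weight. All numbers and names are hypothetical, and the solver handles only two unknowns to keep the example dependency-free.

```python
def solve_two_unknowns(design, observed):
    """Unweighted least squares for two unknowns.

    Builds and solves the normal equations (A^T A) x = A^T l,
    where each row of `design` holds the coefficients of one observation.
    """
    n11 = sum(a[0] * a[0] for a in design)
    n12 = sum(a[0] * a[1] for a in design)
    n22 = sum(a[1] * a[1] for a in design)
    u1 = sum(a[0] * l for a, l in zip(design, observed))
    u2 = sum(a[1] * l for a, l in zip(design, observed))
    det = n11 * n22 - n12 * n12
    x1 = (n22 * u1 - n12 * u2) / det
    x2 = (n11 * u2 - n12 * u1) / det
    return x1, x2


# Hypothetical data: fixed benchmark and three observed height differences.
BENCHMARK_A = 100.000
# Rows are coefficients of (H_B, H_C) for: A->B, B->C, A->C
design = [(1, 0), (-1, 1), (0, 1)]
observed = [BENCHMARK_A + 1.502, 0.748, BENCHMARK_A + 2.255]

h_b, h_c = solve_two_unknowns(design, observed)
residuals = [a[0] * h_b + a[1] * h_c - l for a, l in zip(design, observed)]
print(f"H_B = {h_b:.4f} m, H_C = {h_c:.4f} m")
print("residuals:", [round(v, 4) for v in residuals])
```

The loop misclosure of 5 mm is spread evenly across the three observations because they carry equal weight; with a real network, weights would reflect each observation's estimated precision.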

Q 5. Explain the concept of data quality and its importance in geodetic projects.

Data quality in geodetic projects refers to the fitness of the data for its intended use. It encompasses aspects like accuracy, completeness, consistency, and timeliness. High-quality data is essential because it directly impacts the reliability and validity of analyses and decisions based on it. Poor data quality can lead to incorrect conclusions, wasted resources, and even safety hazards, especially in critical applications such as infrastructure design or disaster response.

Consider a bridge design. Inaccurate elevation data could result in an improperly designed bridge, leading to structural instability or even collapse. Data quality management involves establishing clear quality standards, implementing appropriate quality control procedures during data acquisition and processing, and regularly monitoring and assessing data quality throughout the project lifecycle.

Q 6. What are the common sources of error in geodetic measurements?

Several sources contribute to errors in geodetic measurements:

- Instrumental Errors: Imperfections or miscalibration of surveying equipment. For example, a misaligned total station or a faulty GNSS receiver.

- Atmospheric Effects: Variations in atmospheric pressure, temperature, and humidity can affect signal propagation in GNSS and refraction in optical instruments.

- Human Errors: Mistakes in data recording, observation taking, or data processing. For example, incorrectly reading a measurement or entering data incorrectly.

- Natural Phenomena: Movement of the Earth’s crust, changes in sea level, or other natural processes can introduce inaccuracies.

- Multipath Errors: In GNSS, signals reflecting off buildings or other surfaces can lead to errors in positioning.

Understanding these error sources is vital for designing accurate survey methodologies and implementing appropriate error mitigation strategies.

Q 7. How do you handle discrepancies between different geodetic datasets?

Handling discrepancies between geodetic datasets requires a systematic approach:

- Identify the Discrepancies: Compare the datasets using appropriate visualization and analysis tools to pinpoint areas of disagreement.

- Investigate the Sources of Discrepancies: Examine metadata, data acquisition methods, coordinate systems, and other relevant information to determine the likely causes of the differences. This might involve checking for different datums or projection systems.

- Assess the Impact of the Discrepancies: Evaluate the magnitude and potential consequences of the discrepancies on the intended use of the data.

- Develop a Reconciliation Strategy: Choose an appropriate method for resolving the discrepancies, which might include data transformation, adjustment, or rejection of inconsistent data. A rigorous transformation needs to be applied if different datums or projections are used.

- Document the Reconciliation Process: Maintain clear records of the methods used and the outcomes obtained, including any assumptions or limitations.

It’s crucial to remember that simply choosing one dataset over another isn’t always the best solution. A thorough understanding of the data and error sources is key to choosing the appropriate reconciliation strategy. A weighted average, based on the assessed quality of the various datasets, might be a suitable solution in some cases.
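One common way to form the weighted average mentioned above is to weight each value by the inverse of its variance. The sketch below assumes the datasets are independent, free of systematic offsets, and that each comes with a credible standard deviation; the function name and sample values are hypothetical.

```python
import math


def weighted_mean(values, sigmas):
    """Inverse-variance weighted mean and its standard deviation.

    Assumes independent estimates of the same quantity.
    """
    weights = [1.0 / s ** 2 for s in sigmas]
    total = sum(weights)
    mean = sum(w * v for w, v in zip(weights, values)) / total
    sigma = math.sqrt(1.0 / total)
    return mean, sigma


# Hypothetical elevations (m) of one point from two datasets,
# with standard deviations of 2 cm and 4 cm.
mean, sigma = weighted_mean([102.31, 102.35], [0.02, 0.04])
print(f"combined elevation: {mean:.3f} m +/- {sigma:.3f} m")
```

The more precise dataset dominates the result, which is the intended behaviour. If the two values disagree by far more than their stated uncertainties allow, averaging hides a problem that should be investigated instead.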

Q 8. Describe your experience with geospatial data processing software.

My experience with geospatial data processing software spans over a decade, encompassing a wide range of tools. I’m proficient in ArcGIS Pro, QGIS, and GDAL/OGR, utilizing them for tasks like data cleaning, spatial analysis, and visualization. For instance, I used ArcGIS Pro to perform a complex terrain analysis for a highway construction project, leveraging its 3D capabilities and spatial modeling tools. In another project, I employed QGIS’s open-source functionalities for processing a massive LiDAR dataset, taking advantage of its plugin ecosystem for efficient data management. My experience extends to scripting and automation using Python within these environments, streamlining workflows and improving efficiency. This automation includes things like batch processing of geospatial files and the creation of custom tools for repetitive tasks.

Q 9. What are the different methods for geodetic data transformation and projection?

Geodetic data transformation and projection involve converting coordinates from one coordinate system to another. This is crucial because different datasets often use different systems. Common methods include:

- Datum Transformations: These shift coordinates between different datums (reference surfaces for Earth). Examples include converting from NAD83 to WGS84 using methods like Molodensky-Badekas or a grid-based transformation (like NTv2). Choosing the right method depends on accuracy requirements and the geographic area.

- Map Projections: These transform coordinates from a 3D ellipsoid to a 2D plane, inevitably causing distortion. Common projections include Mercator (conformal, preserving angles and compass bearings), UTM (also conformal, preserving local shape within each zone), and Albers Equal-Area (preserving area). The choice of projection is critical; a Mercator projection, for example, severely distorts areas at high latitudes.

- Coordinate System Transformations: This encompasses both datum and projection changes, often involving a series of steps. Software packages automatically handle these complex sequences.

Imagine trying to overlay a map of a city (using a projected coordinate system) on top of satellite imagery (often using a geographic coordinate system). Without proper transformation, the layers would be misaligned. This is where the importance of accurate geodetic data transformations and projections comes into play. A slight misalignment can lead to significant errors in analysis.
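As an illustration of a datum transformation, the following sketch applies a seven-parameter Helmert (similarity) transformation to geocentric coordinates. This is a closely related alternative to the Molodensky-Badekas method named above, not the same formulation. It assumes the position vector rotation convention and small rotation angles; some agencies publish parameters in the opposite (coordinate frame) convention, which flips the rotation signs. The function name is illustrative and the parameter values in the example are made up.

```python
def helmert_7param(xyz, tx, ty, tz, rx, ry, rz, scale_ppm):
    """Small-angle 7-parameter Helmert transformation.

    xyz          geocentric (X, Y, Z) in metres
    tx, ty, tz   translations in metres
    rx, ry, rz   rotations in radians (position vector convention)
    scale_ppm    scale change in parts per million
    """
    x, y, z = xyz
    s = 1.0 + scale_ppm * 1e-6
    # Linearised rotation, valid only for very small angles
    xr = x - rz * y + ry * z
    yr = rz * x + y - rx * z
    zr = -ry * x + rx * y + z
    return (tx + s * xr, ty + s * yr, tz + s * zr)


# Hypothetical parameters, for illustration only (not a published set).
source = (3_980_000.0, 1_000_000.0, 4_870_000.0)
target = helmert_7param(source, tx=1.0, ty=-2.0, tz=0.5,
                        rx=0.0, ry=0.0, rz=1e-7, scale_ppm=0.01)
print(target)
```

For real work, use the officially published parameters or grid files for the datum pair and check which rotation convention they follow before applying them.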

Q 10. Explain the concept of spatial referencing and its importance.

Spatial referencing defines the location of geographic features by assigning them coordinates within a specific coordinate reference system (CRS). A CRS comprises a datum (a reference ellipsoid together with its position and orientation relative to the Earth) and a coordinate system; a projected CRS additionally specifies a map projection (the method used to represent the curved Earth on a 2D surface). It’s the backbone of geospatial data management. Think of it as the address system for every point on a map: without it, coordinates are just numbers with no defined location.

Its importance is paramount:

- Data Integration: Allows combining data from different sources, ensuring features align correctly.

- Analysis: Accurate spatial analysis depends on correctly referenced data. Distance calculations, area measurements, and proximity analysis are all invalid without proper referencing.

- Visualization: Maps and other visualizations depend on a well-defined spatial reference system for accurate display.

For example, mismatched CRSs during overlay analysis can lead to incorrect results, such as inaccurate measurements of overlap between land parcels. This can have serious consequences in applications like land management and environmental planning.

Q 11. How do you manage metadata associated with geodetic data?

Metadata is crucial for understanding and using geodetic data. I manage it by following established standards such as ISO 19115 (the ISO metadata standard for geographic information) and the FGDC Content Standard for Digital Geospatial Metadata (CSDGM). For routine work I use the metadata editors integrated into GIS software, such as ArcGIS Pro’s metadata editor or QGIS’s built-in tools; for large datasets or diverse formats, I use dedicated metadata management systems. The metadata records data acquisition, processing steps, accuracy, coordinate reference system, and any limitations of the dataset, so that others can understand the data’s origin and quality before using it.

Imagine receiving a dataset without metadata. You wouldn’t know the projection, the datum, the accuracy, or the acquisition date. This could lead to errors and misinterpretations. Proper metadata management provides a complete and trustworthy record of the geospatial data.
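A simple automated gate can catch datasets that arrive without the essentials. The sketch below checks a metadata record, represented as a plain dictionary, for a handful of required fields. The field names are hypothetical shorthand chosen for this example; they are not the element names defined by ISO 19115 or the FGDC standard.

```python
# Hypothetical minimum set of fields a dataset must carry before it is accepted.
REQUIRED_FIELDS = (
    "title",
    "horizontal_crs",
    "vertical_datum",
    "acquisition_date",
    "positional_accuracy_m",
    "lineage",
)


def missing_metadata(record):
    """Return the required fields that are absent or empty in `record`."""
    return [f for f in REQUIRED_FIELDS if record.get(f) in (None, "")]


record = {
    "title": "Control network 2023",
    "horizontal_crs": "EPSG:26917",
    "vertical_datum": "",            # left blank by the supplier
    "acquisition_date": "2023-06-14",
    "positional_accuracy_m": 0.02,
}
print("missing:", missing_metadata(record))
```

A check like this only confirms that fields are present; whether the values are correct still needs human review.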

Q 12. What are the best practices for data storage and archiving?

Best practices for geodetic data storage and archiving involve a combination of technical and organizational strategies:

- Structured File System: Organize data using a clear and consistent directory structure to facilitate retrieval.

- Data Backup and Redundancy: Regularly back up data to multiple locations using cloud storage or local servers for disaster recovery.

- Data Versioning: Track changes and maintain different versions of the dataset to allow for rollback if needed.

- Metadata Integration: Ensure metadata is properly stored and linked to the corresponding datasets.

- Data Formats: Choose open and widely used formats to ensure long-term accessibility.

- Access Control: Implement security measures to restrict access based on user roles.

A well-organized archive avoids data loss and ensures the long-term usability of valuable geospatial information. A poorly organized archive leads to wasted time searching for files, difficulty in understanding data contents, and potential for loss of vital information.

Q 13. Describe your experience working with different geodetic data formats (e.g., shapefiles, GeoTIFF, GeoJSON).

I have extensive experience working with various geodetic data formats. Shapefiles, though limited, are still commonly used for vector data (points, lines, polygons). GeoTIFF is a standard for raster data (images, elevation models), offering efficient storage and support for georeferencing. GeoJSON is a versatile format suitable for web mapping and data exchange, supporting both vector and attribute information. I’ve used each format extensively in different contexts; for example, I used shapefiles for managing land parcels in a cadastral mapping project, GeoTIFFs for analyzing satellite imagery, and GeoJSON for visualizing data on a web map. My experience also extends to less common formats, such as KML and GML, providing flexibility in handling diverse datasets. Understanding the strengths and weaknesses of each format enables me to choose the optimal one for a given application.

Q 14. How do you assess the completeness and consistency of geodetic datasets?

Assessing the completeness and consistency of geodetic datasets requires a multi-faceted approach:

- Data Validation: Use software tools and scripts to check for inconsistencies, such as overlapping polygons or missing attribute values. GDAL tools provide excellent capabilities for this.

- Spatial Checks: Perform topological checks to identify errors like gaps, slivers, or self-intersections in polygons.

- Attribute Checks: Verify data integrity by examining attribute tables for consistency and accuracy. This includes checking for data type violations and unreasonable values.

- Metadata Review: Carefully examine the metadata to identify potential limitations or errors in data acquisition or processing.

- Visual Inspection: Visualizing the data within a GIS provides a valuable means to detect obvious errors and inconsistencies.

- Accuracy Assessment: Compare the data against known reliable data sources or ground-truth measurements to assess accuracy and completeness.

Consider a dataset of building footprints. Inconsistencies might include overlaps, gaps, or footprints that fall outside their designated lot boundaries. Such errors can lead to miscalculations of building areas, potentially impacting tax assessments or emergency response planning. Rigorous quality control procedures are essential to ensure reliable results.
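A few of these checks can be scripted with nothing more than coordinate lists. The sketch below tests whether a footprint ring is closed and has non-zero area, and whether two attributes look plausible. It assumes planar (projected) coordinates in metres; the attribute names `parcel_id` and `height_m` and the height limit are hypothetical. Overlap and gap detection need a geometry library and are not covered here.

```python
def ring_area(ring):
    """Signed area of a closed ring via the shoelace formula."""
    return 0.5 * sum(
        x1 * y2 - x2 * y1 for (x1, y1), (x2, y2) in zip(ring, ring[1:])
    )


def footprint_issues(ring, attributes):
    """Return a list of human-readable problems found in one footprint."""
    issues = []
    if len(ring) < 4:
        issues.append("ring has fewer than 4 vertices")
    elif ring[0] != ring[-1]:
        issues.append("ring is not closed")
    elif ring_area(ring) == 0:
        issues.append("ring has zero area")

    if not attributes.get("parcel_id"):
        issues.append("missing parcel_id")
    height = attributes.get("height_m")
    # Hypothetical plausibility range for building height in metres
    if not isinstance(height, (int, float)) or not 0 < height < 1000:
        issues.append("height_m missing or out of range")
    return issues


square = [(0, 0), (10, 0), (10, 10), (0, 10), (0, 0)]
print(footprint_issues(square, {"parcel_id": "P-001", "height_m": 7.5}))
print(footprint_issues(square[:-1], {"height_m": -3}))
```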

Q 15. What are the ethical considerations related to the use and distribution of geodetic data?

Ethical considerations in handling geodetic data are paramount, ensuring responsible use and preventing harm. This encompasses several key areas:

- Data Privacy and Security: Geodetic data can indirectly reveal sensitive information about individuals or locations. Robust security measures, access control, and anonymization techniques are vital to protect privacy. For example, precise coordinates near a private residence shouldn’t be publicly available without consent.

- Data Integrity and Accuracy: Presenting inaccurate or manipulated data can have serious consequences, from navigational errors to infrastructure failures. Maintaining data integrity through rigorous quality control and transparent data provenance is essential. Think of the implications of a slightly inaccurate elevation model for a bridge design.

- Data Ownership and Intellectual Property: Clear guidelines regarding data ownership and intellectual property rights are crucial. Proper attribution and licensing agreements prevent misuse and protect the rights of data creators. This is particularly relevant for high-resolution datasets generated through significant investment.

- Bias and Fairness: Geospatial data can reflect existing societal biases. Careful consideration is needed to identify and mitigate biases in data collection, processing, and analysis to ensure equitable outcomes. This might involve addressing discrepancies in data density across different regions.

- Transparency and Openness: Whenever possible, open and transparent data practices promote trust and facilitate collaboration. Making data available under appropriate licenses and providing clear documentation aids reproducibility and accountability.

Ignoring these ethical considerations can lead to legal issues, reputational damage, and significant societal harm. A strong ethical framework is fundamental to responsible geospatial practice.

Q 16. Describe your experience with quality control and quality assurance processes for geodetic data.

My experience in geodetic data quality control (QC) and quality assurance (QA) involves a multi-stage approach. It begins with meticulous planning of data acquisition, selecting appropriate instrumentation and methodologies based on the project’s requirements and accuracy goals. This includes factors like atmospheric corrections, equipment calibration, and appropriate survey design.

During data processing, rigorous QC checks are implemented at each stage. This might involve:

- Gross error detection: Identifying outliers or impossible values through statistical analysis and visual inspection.

- Redundancy checks: Utilizing redundant measurements to detect and resolve inconsistencies. For example, comparing measurements from different survey techniques or instruments.

- Adjustment techniques: Employing least squares adjustment or other methods to reconcile discrepancies and obtain the best-fit solution.

- Accuracy assessment: Calculating precision and accuracy metrics, such as root mean square error (RMSE), to quantify the uncertainty of the data.

Post-processing QA involves a comprehensive review of the processed data, metadata, and documentation to ensure consistency and adherence to project specifications. This includes independent verification and validation checks. I’ve used various software packages, such as Leica Geo Office and Trimble Business Center, which help automate many of these QC/QA procedures. A key aspect is maintaining a detailed audit trail of all processing steps, which provides traceability and makes potential issues easier to locate.
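Two of the QC steps above, gross error detection and RMSE, can be sketched in a few lines. The example compares surveyed values against independent check values, flags residuals larger than a multiple of an assumed a priori standard deviation, and reports RMSE for the remainder. The simple threshold rule, the default multiplier of 3, and the sample numbers are assumptions for illustration; a rigorous adjustment would test standardized residuals instead.

```python
import math


def flag_gross_errors(residuals, sigma, k=3.0):
    """Indices of residuals whose magnitude exceeds k * sigma."""
    return [i for i, r in enumerate(residuals) if abs(r) > k * sigma]


def rmse(residuals):
    """Root mean square error of a list of residuals."""
    return math.sqrt(sum(r * r for r in residuals) / len(residuals))


# Hypothetical residuals (surveyed minus check value) in metres.
residuals = [0.010, -0.020, 0.015, 0.250, -0.005]
suspect = flag_gross_errors(residuals, sigma=0.02)
clean = [r for i, r in enumerate(residuals) if i not in suspect]

print("suspect observations:", suspect)
print(f"RMSE without suspects: {rmse(clean):.4f} m")
print(f"RMSE with suspects:    {rmse(residuals):.4f} m")
```

Flagged observations should be investigated and documented rather than silently dropped, so the audit trail explains why a value was excluded.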

Q 17. How do you address issues related to data uncertainty and error propagation?

Data uncertainty and error propagation are inherent in geodetic data. Addressing these requires a multi-pronged approach starting with a clear understanding of the sources of uncertainty.

Sources of uncertainty include instrument limitations, atmospheric effects, human error, and model approximations. Quantifying these uncertainties, often expressed as standard deviations or covariance matrices, is crucial.

Error propagation is managed through statistical methods. When combining data from multiple sources or performing calculations, uncertainties accumulate. Propagation models, often based on linearization and covariance matrices, are used to estimate the uncertainty in the final results. For instance, if we’re calculating a distance from coordinates with inherent uncertainties, the final distance will also have an associated uncertainty that can be derived via error propagation.

Uncertainty assessment and management techniques, such as Monte Carlo simulation or least squares adjustment with stochastic models, help estimate and reduce the impact of uncertainties. Transparency about uncertainty is critical; results should always be presented along with their associated uncertainties. This allows users to make informed decisions and avoid overconfidence in the data’s accuracy.
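The distance example can be worked through with first-order (linearized) error propagation. This sketch assumes planar coordinates, uncorrelated coordinate errors, and standard deviations that are small relative to the distance; with correlated errors the full covariance matrix would be needed. Names and values are hypothetical.

```python
import math


def distance_with_sigma(p1, p2, s1, s2):
    """Planar distance between p1 and p2 and its propagated standard deviation.

    p1, p2  (x, y) coordinates in metres
    s1, s2  (sigma_x, sigma_y) of each point, assumed uncorrelated
    """
    dx = p2[0] - p1[0]
    dy = p2[1] - p1[1]
    d = math.hypot(dx, dy)
    # Partial derivatives of d are +/- dx/d and +/- dy/d, so the variances
    # of both endpoints add along each axis.
    var = (dx / d) ** 2 * (s1[0] ** 2 + s2[0] ** 2) \
        + (dy / d) ** 2 * (s1[1] ** 2 + s2[1] ** 2)
    return d, math.sqrt(var)


d, sigma_d = distance_with_sigma(
    (0.0, 0.0), (300.0, 400.0), (0.01, 0.01), (0.01, 0.01)
)
print(f"distance = {d:.3f} m +/- {sigma_d:.4f} m")
```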

Q 18. What is your experience with data visualization techniques for geodetic information?

My experience encompasses a range of data visualization techniques for geodetic information, tailored to the specific needs of the project and the target audience. These include:

- 2D mapping: Using GIS software (e.g., ArcGIS, QGIS) to create thematic maps, displaying features like elevation contours, points, lines, and polygons. Different symbologies and color ramps are used to enhance visual clarity.

- 3D visualization: Creating 3D models and terrain surfaces using software like ArcGIS Pro or specialized terrain rendering tools, providing a more immersive and intuitive representation of the data. This is particularly useful for infrastructure planning or impact assessments.

- Interactive dashboards: Developing web-based dashboards that allow users to explore geodetic data dynamically. This may involve integrating map viewers with other data sources, providing tools for filtering and analysis, and incorporating animations for showcasing temporal changes.

- Animation and time series analysis: Creating animations to visualize changes over time. This might include showcasing deformation over a period, illustrating the evolution of a landscape, or displaying the movement of a glacier.

- Virtual and Augmented Reality (VR/AR): Leveraging VR/AR technology to create highly engaging representations that help users experience the data in a more realistic and intuitive way. This is useful for public outreach or specialized training.

Effective data visualization is not just about creating aesthetically pleasing images; it’s about clearly communicating information and facilitating insights. The choice of technique depends heavily on the data and the audience. For example, simple maps are sufficient for a public audience, whereas more detailed 3D visualizations might be required for engineers.

Q 19. Describe your knowledge of different map projections and their suitability for various applications.

Map projections are fundamental to representing the three-dimensional Earth on a two-dimensional surface. Understanding their characteristics is crucial for selecting the appropriate projection for a given application. Different projections have different strengths and weaknesses, impacting shape, area, distance, and direction accuracy.

Examples of Map Projections:

- Mercator: Preserves angles, making it suitable for navigation, but distorts areas significantly at higher latitudes.

- Lambert Conformal Conic: Preserves angles and therefore local shapes, with low distortion across mid-latitude regions that extend mainly east-west. Commonly used for aeronautical charts and regional mapping.

- Albers Equal-Area Conic: Preserves areas, ideal for applications involving land area calculations or resource management, but distorts shapes.

- UTM (Universal Transverse Mercator): Divides the Earth into 60 zones, each 6° of longitude wide, and applies a conformal Transverse Mercator projection within each zone to keep distortion small. Widely used for large-scale mapping.

- WGS 84: Strictly a geodetic datum and reference system rather than a map projection. It defines the ellipsoid and coordinate frame to which projections such as UTM are commonly applied, and it underpins GPS positioning.

The right projection depends on which property the application needs to preserve. For instance, a Mercator projection is suitable for navigation, while an equal-area projection is better for thematic mapping focused on land areas. Choosing the wrong projection can lead to misinterpretations or inaccurate results, so the projection’s properties should always be weighed against the application.
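The area distortion of Mercator can be quantified directly. On a spherical Mercator projection the local scale factor at latitude φ is 1/cos(φ), so areas are exaggerated by the square of that value. The sketch below uses the spherical form as a simplifying assumption; the ellipsoidal formula differs slightly.

```python
import math


def mercator_scale(lat_deg):
    """Local linear scale factor of spherical Mercator at a given latitude."""
    return 1.0 / math.cos(math.radians(lat_deg))


def mercator_area_exaggeration(lat_deg):
    """Factor by which small areas are enlarged relative to the equator."""
    return mercator_scale(lat_deg) ** 2


for lat in (0, 30, 60, 80):
    print(f"lat {lat:2d} deg: scale x{mercator_scale(lat):.2f}, "
          f"area x{mercator_area_exaggeration(lat):.2f}")
```

At 60° latitude, lengths are doubled and areas quadrupled, which is why Mercator is a poor choice for comparing land areas.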

Q 20. How do you ensure the interoperability of geodetic data across different systems and platforms?

Ensuring interoperability of geodetic data across different systems and platforms is critical for seamless data exchange and analysis. This involves adherence to established data standards and the use of consistent formats and metadata.

Key strategies for achieving interoperability include:

- Adoption of standard data formats: Using widely accepted formats like Shapefile, GeoJSON, GeoPackage, or KML ensures compatibility across various software and platforms. These formats provide structured ways to represent geographic features and their attributes.

- Use of consistent coordinate reference systems (CRS): Specifying the CRS unambiguously prevents misinterpretations caused by different coordinate systems. WGS 84 is a common standard, but others are used depending on the region and application.

- Implementation of comprehensive metadata: Providing detailed metadata, adhering to standards like ISO 19115, is crucial for understanding data content, origin, accuracy, and limitations. This metadata acts as a passport for the data, ensuring that users know exactly what they are working with.

- Use of web services: Employing web services like WMS (Web Map Service) and WFS (Web Feature Service) allows for accessing and sharing geodetic data across the internet. These services facilitate dynamic access to data, reducing the need for transferring large files.

- Data transformation and projection: Using appropriate tools to transform data between different coordinate systems and map projections ensures consistency.

Neglecting interoperability can lead to data silos, hindering collaboration and analysis. A well-structured approach to data management, coupled with adherence to standards, is key to successful data integration and sharing.
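As a small example of format and CRS discipline working together, this sketch writes survey points as a GeoJSON FeatureCollection using only the standard library. The GeoJSON specification (RFC 7946) expects WGS 84 coordinates in longitude, latitude order, so the helper rejects values outside those ranges to catch swapped axes where possible. The function names and the sample monument are hypothetical.

```python
import json


def point_feature(lon, lat, properties):
    """Build a GeoJSON Point feature; coordinates are [longitude, latitude]."""
    if not (-180 <= lon <= 180 and -90 <= lat <= 90):
        raise ValueError("expected lon, lat in degrees (WGS 84)")
    return {
        "type": "Feature",
        "geometry": {"type": "Point", "coordinates": [lon, lat]},
        "properties": properties,
    }


def feature_collection(features):
    """Serialize features as a GeoJSON FeatureCollection string."""
    return json.dumps(
        {"type": "FeatureCollection", "features": features}, indent=2
    )


# Hypothetical survey monument
monument = point_feature(-79.3832, 43.6532, {"id": "MON-17", "order": 2})
text = feature_collection([monument])
print(text)
```

The range check cannot detect every axis swap (a latitude of 45 is also a valid longitude), so the source CRS and axis order should still be confirmed from the metadata.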

Q 21. Explain the concept of spatial analysis and its applications in geodetic surveying.

Spatial analysis involves examining the location, spatial relationships, and patterns of geographic features to gain insights and solve problems. In geodetic surveying, it plays a critical role in various applications.

Key Concepts in Spatial Analysis:

- Proximity analysis: Determining distances and identifying features within a certain radius of a point. For example, finding buildings within a certain distance of a proposed road.

- Overlay analysis: Combining different spatial datasets to identify areas that share common characteristics. For instance, overlaying a soil map with a land use map to assess suitable locations for agriculture.

- Network analysis: Analyzing connectivity within a network of features, such as roads or pipelines. This might involve finding the shortest route between two points or identifying critical network components.

- Interpolation: Estimating values at unsampled locations based on known values. This is commonly used to create elevation models from scattered point data.

- Spatial statistics: Employing statistical techniques to analyze spatial patterns and relationships. This might involve testing for spatial autocorrelation or identifying spatial clusters.

Applications in Geodetic Surveying:

- Land use planning: Identifying suitable areas for development or conservation.

- Infrastructure design: Optimizing routes, networks, and locations of infrastructure assets.

- Environmental modeling: Analyzing and predicting environmental processes, like pollution dispersion or landslide risk.

- Precision agriculture: Optimizing farming practices based on spatial variations in soil properties and crop yields.

- Disaster response: Assessing damage, planning relief efforts, and identifying areas at risk.

Spatial analysis empowers geodetic surveyors to move beyond simple measurements and extract valuable information from spatial data, enabling informed decision-making across various fields.
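Interpolation is the easiest of these concepts to show in code. The sketch below implements inverse distance weighting (IDW), one of several interpolation methods, for scattered elevation points. It assumes planar coordinates and a power of 2, which is a common default rather than a rule; the sample values are made up.

```python
import math


def idw(samples, x, y, power=2.0):
    """Inverse distance weighted estimate at (x, y).

    samples  iterable of (x, y, value) tuples in planar coordinates
    """
    numerator = 0.0
    denominator = 0.0
    for sx, sy, value in samples:
        d = math.hypot(x - sx, y - sy)
        if d == 0.0:
            return value  # query coincides with a sample point
        w = 1.0 / d ** power
        numerator += w * value
        denominator += w
    return numerator / denominator


# Hypothetical spot heights: (easting, northing, elevation) in metres
spot_heights = [(0.0, 0.0, 10.0), (10.0, 0.0, 20.0), (0.0, 10.0, 14.0)]
print(f"estimated elevation: {idw(spot_heights, 4.0, 3.0):.2f} m")
```

IDW never predicts values outside the range of the input samples, so it tends to flatten peaks and pits; methods such as kriging or TIN-based interpolation may be more appropriate for terrain with sharp breaks.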

Q 22. Describe your experience with geodetic data modeling and database design.

Geodetic data modeling and database design are crucial for efficiently managing and analyzing vast amounts of spatial information. My experience involves creating robust database schemas that accommodate various geodetic data types, including points, lines, polygons, and surfaces, represented using formats like Shapefiles, GeoJSON, and GeoPackages. I’ve worked extensively with PostGIS, a spatial extension for PostgreSQL, leveraging its powerful spatial functions for querying, analysis, and data manipulation. For example, I designed a database for a national land surveying project, incorporating attributes like elevation, land use, and ownership alongside spatial data, optimizing queries for efficient retrieval of specific data subsets. This involved careful consideration of spatial indexes to improve query performance and data integrity constraints to maintain data quality.

A key aspect of my approach is adhering to relevant data standards (like those from the Open Geospatial Consortium – OGC) to ensure interoperability and data exchange with other systems. I also focus on designing flexible schemas to accommodate future data expansion and changes in data requirements. For instance, I’ve incorporated user-defined types to represent specific geodetic objects (like survey monuments) and created custom functions to handle complex geospatial calculations within the database, improving the overall efficiency of the system.

Q 23. What are the challenges in integrating geodetic data from various sources?

Integrating geodetic data from diverse sources presents several significant challenges. Inconsistencies in coordinate systems (datums, projections) are a major hurdle. Data from different sources might use different horizontal datums (like NAD83 and WGS84) or map projections (UTM, State Plane), requiring complex coordinate transformations to ensure consistency. Another issue is differing data formats and structures – dealing with Shapefiles, GeoJSON, GeoTIFFs, and other formats necessitates careful data transformation and validation procedures. Data quality also varies greatly; some sources might have high accuracy, while others might be less precise, potentially introducing errors in analysis.

Furthermore, metadata discrepancies can complicate integration. Incomplete or inconsistent metadata makes it difficult to understand the source, accuracy, and limitations of each dataset. Finally, resolving discrepancies in feature representations—for example, where boundaries overlap or differ slightly between datasets—requires careful reconciliation and potentially manual intervention. Addressing these challenges requires a robust data integration workflow that incorporates coordinate transformations, data validation, and careful metadata management.
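A first step in such a workflow is often a simple audit of what each source declares about its coordinate system. The sketch below sorts datasets into those already in the project CRS, those needing a transformation, and those with missing CRS metadata. The dataset descriptors and the choice of EPSG:4326 as the target are assumptions made for illustration; in practice the codes would be read from each file's metadata.

```python
from collections import defaultdict

TARGET_EPSG = 4326  # assumed project CRS for this sketch (WGS84 geographic)

# Hypothetical dataset descriptors; file names and codes are illustrative.
datasets = [
    {"name": "parcels.shp", "epsg": 4269},      # NAD83 geographic
    {"name": "roads.geojson", "epsg": 4326},    # WGS84 geographic
    {"name": "control_points.csv", "epsg": None},  # CRS not documented
    {"name": "contours.gpkg", "epsg": 26913},   # NAD83 / UTM zone 13N
]


def plan_integration(datasets, target_epsg):
    """Group dataset names by the action needed before they can be merged."""
    plan = defaultdict(list)
    for ds in datasets:
        if ds["epsg"] is None:
            plan["missing_crs"].append(ds["name"])
        elif ds["epsg"] == target_epsg:
            plan["ready"].append(ds["name"])
        else:
            plan["needs_transform"].append(ds["name"])
    return dict(plan)


for action, names in plan_integration(datasets, TARGET_EPSG).items():
    print(f"{action}: {names}")
```

Datasets that land in `missing_crs` should be resolved with the data provider rather than guessed, since an incorrect CRS assumption silently shifts every coordinate.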

Q 24. How do you ensure the long-term preservation and accessibility of geodetic data?

Long-term preservation and accessibility of geodetic data are paramount. This involves implementing a comprehensive data management strategy that encompasses data backup and archiving, metadata standardization, and the use of open and widely supported data formats. I employ strategies like regular data backups to multiple locations (e.g., cloud storage and on-site servers) to prevent data loss. Employing version control systems (like Git) allows for tracking data changes over time.

Metadata is crucial; detailed and standardized metadata describing the data’s source, accuracy, limitations, and coordinate system must be included. Choosing open formats like GeoPackage, which are designed for long-term preservation and interoperability, is essential. Furthermore, establishing robust data access procedures, perhaps through web services (like WMS or WFS), provides controlled and easy access to data while limiting direct modification to preserve data integrity. Finally, regularly reviewing and updating data and metadata to account for data degradation, new standards, or changing user needs ensures continued relevance and usability.
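One common safeguard against silent data degradation in an archive is a checksum manifest that is recomputed periodically. The following is a minimal sketch of that idea using only the Python standard library; the function names and the sample file are hypothetical, and a production archive would also store the manifest separately from the data it describes.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large rasters do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root):
    """Map each file's path (relative to root) to its SHA-256 checksum."""
    root = Path(root)
    return {
        str(p.relative_to(root)): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def changed_files(root, manifest):
    """List files that were added, removed, or altered since the manifest."""
    current = build_manifest(root)
    names = manifest.keys() | current.keys()
    return sorted(n for n in names if manifest.get(n) != current.get(n))


# Demonstration on a temporary directory with a made-up survey file.
with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "survey.csv"
    sample.write_text("id,lon,lat\n1,-105.10,40.05\n")
    manifest = build_manifest(tmp)
    sample.write_text("id,lon,lat\n1,-105.10,40.06\n")  # simulate a change
    print(changed_files(tmp, manifest))  # ['survey.csv']
```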

Q 25. What are some common industry standards related to geospatial data exchange?

Several standards and de facto formats govern geospatial data exchange, ensuring interoperability and consistency. The Open Geospatial Consortium (OGC) plays a key role, although not every widely used format originates there. Commonly encountered examples include:

- GeoPackage: A portable, self-describing, and open OGC format for storing geospatial data.

- Shapefile: A widely used, albeit somewhat limited, vector data format published by Esri. It is a de facto standard rather than an OGC standard.

- GeoJSON: A text-based (JSON) format for representing geographic features, standardized by the IETF as RFC 7946.

- Web Map Service (WMS): A standard for serving map images over the web.

- Web Feature Service (WFS): A standard for accessing vector features over the web.

In addition to OGC standards, other important standards include the ISO 19100 series of standards for geographic information, which provide comprehensive guidelines for metadata and data quality. Adherence to these standards ensures that geospatial data can be easily shared, integrated, and utilized across different platforms and applications.
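To make one of these formats concrete, here is a minimal sketch that builds a GeoJSON FeatureCollection with Python's standard `json` module. GeoJSON (RFC 7946) stores positions in longitude, latitude order using WGS84; the helper name, the monument name, and the coordinates are hypothetical.

```python
import json


def point_feature(lon, lat, properties):
    """Build a GeoJSON Point feature; note the longitude-first order."""
    if not (-180 <= lon <= 180 and -90 <= lat <= 90):
        raise ValueError("coordinates out of range for lon/lat degrees")
    return {
        "type": "Feature",
        "geometry": {"type": "Point", "coordinates": [lon, lat]},
        "properties": properties,
    }


collection = {
    "type": "FeatureCollection",
    "features": [
        point_feature(-105.10, 40.05, {"name": "BM-001"}),  # made-up point
    ],
}

text = json.dumps(collection, indent=2)
print(text)
```

Swapping latitude and longitude is one of the most common exchange errors, so a range check like the one above catches at least the cases where a longitude beyond ±90 ends up in the latitude slot.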

Q 26. Explain your understanding of coordinate system transformations (e.g., datum transformations).

Coordinate system transformations, especially datum transformations, are vital for integrating geodetic data from different sources. A datum is a reference frame, typically an ellipsoid with a defined origin and orientation, used to define the positions of points on the Earth. The same physical point can have noticeably different coordinates in different datums. For instance, NAD27 and NAD83 (North American Datums) differ by tens of meters in many locations. Transformations involve converting coordinates from one datum to another, using mathematical models that account for the differences between the reference frames.

Common transformation methods include grid-based transformations (using shift grids that store the differences between datums at various locations) and parametric transformations (using mathematical functions to model the differences). Software packages like GDAL/OGR provide functionalities for performing these transformations. For example, using GDAL, one can easily transform coordinates from NAD83 to WGS84 using a suitable transformation parameters file. Choosing the appropriate transformation method depends on the accuracy requirements, geographic region, and available transformation parameters.

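`gdalwarp -t_srs EPSG:4326 input.tif output.tif`

This command-line example uses GDAL to reproject a GeoTIFF image from the source CRS recorded in the file to WGS84 (EPSG:4326). If the file carries no CRS information, the source CRS must be supplied explicitly with the -s_srs option.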
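The parametric approach mentioned above is often a seven-parameter Helmert (similarity) transformation applied to Earth-centered Cartesian coordinates. The sketch below shows the mechanics under stated assumptions: it uses the position-vector rotation convention with the small-angle approximation, and the parameter values in `PARAMS` are made up purely for illustration. Real parameters must come from an authoritative source such as the EPSG registry, and the sign convention of the rotations must match that source.

```python
import math

ARCSEC_TO_RAD = math.pi / (180.0 * 3600.0)


def geodetic_to_ecef(lat_deg, lon_deg, h_m, a=6378137.0, inv_f=298.257223563):
    """Convert geodetic coordinates to ECEF X, Y, Z (defaults: WGS84 ellipsoid)."""
    f = 1.0 / inv_f
    e2 = f * (2.0 - f)  # first eccentricity squared
    lat, lon = math.radians(lat_deg), math.radians(lon_deg)
    n = a / math.sqrt(1.0 - e2 * math.sin(lat) ** 2)  # prime vertical radius
    x = (n + h_m) * math.cos(lat) * math.cos(lon)
    y = (n + h_m) * math.cos(lat) * math.sin(lon)
    z = (n * (1.0 - e2) + h_m) * math.sin(lat)
    return x, y, z


def helmert(xyz, tx, ty, tz, rx_as, ry_as, rz_as, scale_ppm):
    """Seven-parameter transform: translations in m, rotations in arc-seconds,
    scale in ppm. Position-vector convention, small-angle approximation."""
    x, y, z = xyz
    rx, ry, rz = (r * ARCSEC_TO_RAD for r in (rx_as, ry_as, rz_as))
    m = 1.0 + scale_ppm * 1e-6
    return (
        tx + m * (x - rz * y + ry * z),
        ty + m * (rz * x + y - rx * z),
        tz + m * (-ry * x + rx * y + z),
    )


# Hypothetical parameters, NOT a published transformation.
PARAMS = dict(tx=1.0, ty=-2.0, tz=0.5, rx_as=0.01, ry_as=-0.02, rz_as=0.03,
              scale_ppm=0.1)

source = geodetic_to_ecef(40.05, -105.10, 1601.2)
target = helmert(source, **PARAMS)
shift = math.dist(source, target)
print(f"3D shift for this point: {shift:.3f} m")
```

Grid-based methods replace the single global parameter set with shifts interpolated from a grid file, which is why they can model regionally varying distortions that a Helmert transformation cannot.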

Q 27. Describe your experience with GNSS (Global Navigation Satellite Systems) data processing and analysis.

My experience with GNSS data processing and analysis involves using specialized software packages like RTKLIB, Bernese GNSS Software, or commercial solutions to process raw observation data from GNSS receivers. This involves several steps:

- Data Download and Preprocessing: Downloading raw observation data from GNSS receivers and performing initial quality checks to remove obvious errors.

- Precise Point Positioning (PPP): Using precise satellite orbits and clock corrections to obtain highly accurate positions.

- Kinematic Positioning: Determining the position of a receiver as it moves over time, using techniques like Real-Time Kinematic (RTK) or Post-Processed Kinematic (PPK).

- Data Analysis and Validation: Assessing the accuracy of processed positions through statistical analysis and comparison with independent data sources.

For instance, I’ve used RTKLIB to process data from a network of GNSS receivers to create a highly accurate 3D model of a construction site. This involved careful consideration of atmospheric effects (like ionospheric and tropospheric delays), multipath errors, and receiver clock biases. Understanding the principles of GNSS error sources is critical to ensuring the accuracy and reliability of processed results.
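As one example of handling the atmospheric effects mentioned above, dual-frequency receivers can remove the first-order ionospheric delay by forming the ionosphere-free linear combination of two pseudoranges. The sketch below applies that combination to synthetic GPS L1/L2 measurements; the range and delay values are invented for the demonstration, and real processing in tools like RTKLIB involves many additional corrections.

```python
F_L1 = 1575.42e6  # GPS L1 carrier frequency, Hz
F_L2 = 1227.60e6  # GPS L2 carrier frequency, Hz


def ionosphere_free(p1_m, p2_m, f1=F_L1, f2=F_L2):
    """Ionosphere-free pseudorange: (f1^2 * P1 - f2^2 * P2) / (f1^2 - f2^2)."""
    gamma = (f1 / f2) ** 2
    return (gamma * p1_m - p2_m) / (gamma - 1.0)


# Synthetic example: the first-order ionospheric delay scales with 1/f^2,
# so the L2 delay is the L1 delay multiplied by (f1/f2)^2.
true_range_m = 22_000_000.0   # made-up geometric range
iono_l1_m = 5.0               # made-up L1 ionospheric delay
iono_l2_m = iono_l1_m * (F_L1 / F_L2) ** 2

p1 = true_range_m + iono_l1_m
p2 = true_range_m + iono_l2_m
recovered = ionosphere_free(p1, p2)
print(f"residual after combination: {recovered - true_range_m:.6f} m")
```

The combination amplifies measurement noise compared with a single-frequency pseudorange, which is one reason it is usually paired with careful multipath mitigation and long observation sessions.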

Q 28. How do you validate and verify the accuracy of geodetic data?

Validating and verifying the accuracy of geodetic data is crucial for ensuring data quality. This involves a multi-step process combining visual inspection, statistical analysis, and comparison with independent data sources:

- Visual Inspection: Examining the data visually using GIS software to identify obvious errors, such as spatial inconsistencies or unrealistic values.

- Statistical Analysis: Performing statistical analysis to assess the distribution of data and identify outliers or anomalies.

- Comparison with Independent Data Sources: Comparing the data with independent, high-quality data sources, such as other surveys or high-resolution imagery, to assess data accuracy.

- Root Mean Square Error (RMSE): Calculating the RMSE to quantify the overall accuracy of the dataset.

For example, in a recent project involving topographic surveying, I compared our survey data with a LiDAR-derived Digital Elevation Model (DEM). This comparison revealed some discrepancies that were further investigated and corrected. Systematic errors were identified and addressed through adjustments. The process of data validation and verification ensures that the final data is reliable and suitable for its intended use. Documentation of the validation steps is crucial for maintaining transparency and traceability.
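The comparison against an independent source can be sketched in a few lines: compute the RMSE of the differences, the mean error as an indicator of systematic bias, and flag individual points that exceed a tolerance. The elevations and the 0.5 m threshold below are made-up values chosen only to illustrate the calculation.

```python
import math


def rmse(measured, reference):
    """Root mean square error between paired measurements."""
    if len(measured) != len(reference) or not measured:
        raise ValueError("inputs must be non-empty and of equal length")
    squared = [(m - r) ** 2 for m, r in zip(measured, reference)]
    return math.sqrt(sum(squared) / len(squared))


def mean_error(measured, reference):
    """Mean signed difference; a value far from zero suggests systematic bias."""
    return sum(m - r for m, r in zip(measured, reference)) / len(measured)


def flag_outliers(measured, reference, threshold_m):
    """Indices of points whose absolute difference exceeds the threshold."""
    return [
        i for i, (m, r) in enumerate(zip(measured, reference))
        if abs(m - r) > threshold_m
    ]


# Made-up elevations in meters: field survey vs. LiDAR-derived DEM.
survey = [100.02, 101.48, 99.97, 103.10]
dem = [100.00, 101.50, 100.00, 102.50]

print(f"RMSE: {rmse(survey, dem):.3f} m")
print(f"mean error: {mean_error(survey, dem):.3f} m")
print(flag_outliers(survey, dem, threshold_m=0.5))  # [3]
```

Reporting the mean error alongside the RMSE helps separate a consistent offset, which may point to a datum or instrument-height problem, from random scatter.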

Key Topics to Learn for Geodetic Data Standards and Best Practices Interview

- Coordinate Reference Systems (CRS): Understanding different datums (e.g., WGS84, NAD83), projections (e.g., UTM, State Plane), and their implications for data accuracy and interoperability. Practical application: Transforming data between different CRS for analysis and visualization.

- Geospatial Data Formats: Proficiency in common formats like Shapefiles, GeoJSON, GeoTIFF, and their respective strengths and weaknesses. Practical application: Choosing the appropriate format for a specific project based on data size, complexity, and intended use.

- Data Quality and Metadata: Understanding principles of data accuracy, completeness, consistency, and lineage. Importance of creating comprehensive metadata to ensure data discoverability and usability. Practical application: Implementing quality control checks during data processing and creating standardized metadata records.

- Spatial Data Infrastructure (SDI): Knowledge of SDI principles and how they facilitate data sharing and collaboration. Practical application: Understanding the role of metadata catalogs and data access services within an SDI.

- Best Practices for Data Management: Understanding version control, data backup and recovery strategies, and data security protocols. Practical application: Developing a robust workflow for managing geospatial data throughout its lifecycle.

- Error Propagation and Analysis: Understanding sources of error in geodetic data and techniques for error mitigation and analysis. Practical application: Assessing the uncertainty associated with geospatial data products.

- Data Visualization and Presentation: Effective communication of geospatial data through maps, charts, and reports. Practical application: Creating clear and informative visualizations to support decision-making.

Next Steps

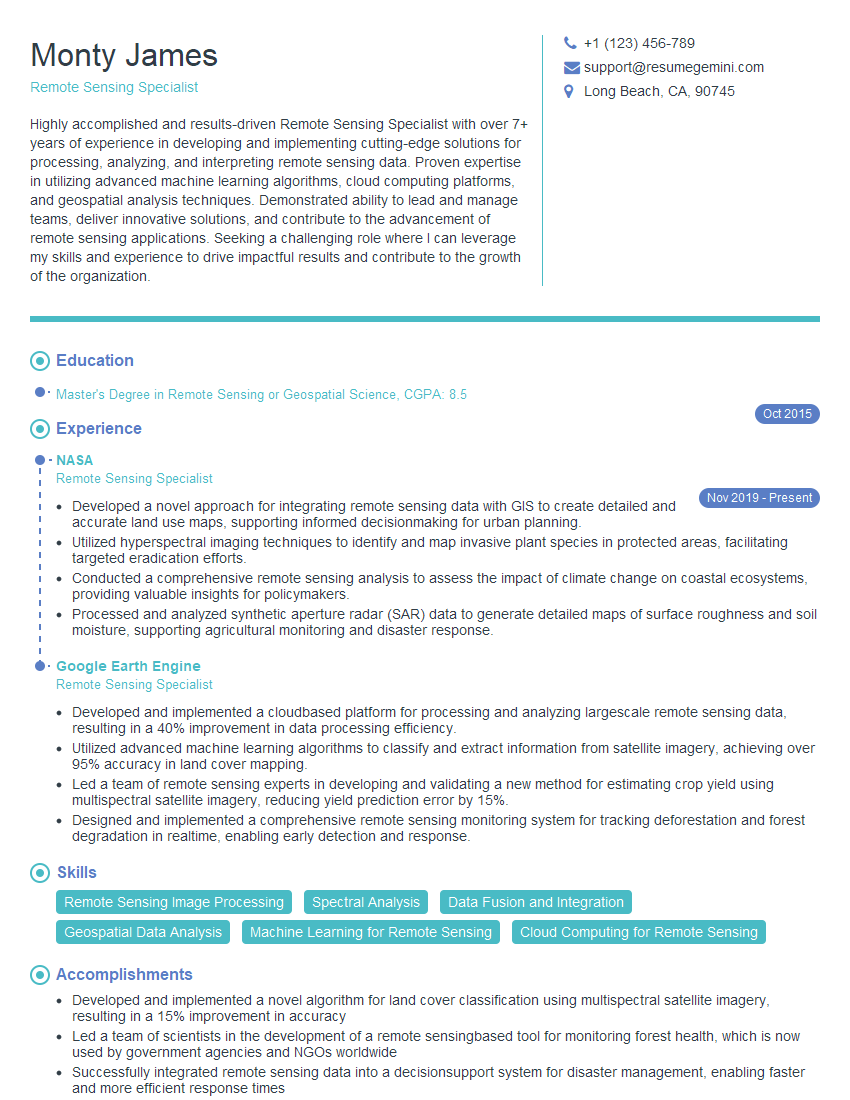

Mastering Geodetic Data Standards and Best Practices is crucial for career advancement in the geospatial field. It demonstrates a commitment to accuracy, efficiency, and collaboration – highly valued attributes in today’s data-driven world. To significantly boost your job prospects, focus on creating an ATS-friendly resume that effectively showcases your skills and experience. ResumeGemini is a trusted resource that can help you build a professional and impactful resume. Examples of resumes tailored to Geodetic Data Standards and Best Practices are available to help you craft your perfect application.
