Unlock your full potential by mastering the most common Distributed System Architecture interview questions. This blog offers a deep dive into the critical topics, ensuring you’re not only prepared to answer but to excel. With these insights, you’ll approach your interview with clarity and confidence.

Questions Asked in Distributed System Architecture Interview

Q 1. Explain the CAP theorem and its implications for distributed system design.

The CAP theorem, also known as Brewer’s theorem, describes a fundamental limitation of distributed data stores. It is often summarized as “pick two of three” among Consistency, Availability, and Partition tolerance. A more precise reading is that when a network partition occurs, the system must give up either consistency or availability; while the network is healthy, it can provide both.

Consistency means that every read returns the most recent write, so all nodes appear to hold the same data at the same time. Imagine a shared whiteboard: consistency ensures everyone sees the same text. Availability means that every request to a non-failing node receives a non-error response, without a guarantee that the data is the most up-to-date. Think of a website that keeps serving a slightly stale cached page while its database is unreachable; an error page would not count as available in the CAP sense. Partition tolerance means the system continues to operate even if communication between nodes is disrupted, for example due to a network failure. This is crucial because network partitions cannot be ruled out in any real distributed system.

The implications are significant: since partitions cannot be avoided, the real choice is how the system behaves during one. For instance, a financial transaction system prioritizes consistency and partition tolerance (CP), sacrificing some availability during network issues. Conversely, a social media feed might prioritize availability and partition tolerance (AP), accepting eventual consistency to ensure users always see something.

Q 2. Describe different consistency models in distributed systems (e.g., strong, eventual).

Consistency models define how data consistency is maintained across a distributed system. Here are a few:

- Strong Consistency: All nodes see the same data at the same time. This provides a simple programming model, but it’s difficult to achieve in a distributed setting with network latency and failures. A classic example would be a bank account balance – you need strong consistency to avoid overdrafts.

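- Sequential Consistency: The result of any execution appears as if all operations were executed in some single sequential order, and the operations of each individual process appear in that order as the process issued them, even if they were actually performed concurrently.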

- Linearizability: A stronger version of sequential consistency where each operation appears to take effect instantaneously at some point between its invocation and response.

- Eventual Consistency: Data will eventually become consistent throughout the system, but there may be temporary inconsistencies. This is common in systems that prioritize availability. Think of email: your sent email may not appear in your recipient’s inbox instantly.

- Causal Consistency: If one operation (A) causally precedes another operation (B), for example B is a reply to a comment written by A, then all nodes observe A before B. Operations with no causal relationship may be observed in different orders on different nodes. This model allows for greater flexibility than strong consistency while still providing a degree of predictability.

Q 3. What are the trade-offs between consistency and availability?

The trade-off between consistency and availability is a core challenge in distributed systems design. It’s essentially the heart of the CAP theorem.

High Consistency, Low Availability: In systems requiring strong consistency (like financial systems), a network partition can lead to reduced availability. To maintain consistency during a partition, the system may have to reject requests to prevent data conflicts, making it unavailable to users.

High Availability, Low Consistency: Systems that prioritize availability (like many web applications) often tolerate temporary inconsistencies. During network partitions, some nodes might continue to process requests, potentially leading to temporary data discrepancies that are resolved later. This approach ensures users can still access the service, even if the data isn’t perfectly consistent.

The decision depends entirely on the application. A system needing absolute data accuracy (e.g., banking) will favour consistency, even if it means temporary unavailability. A social media feed, on the other hand, prioritizes availability to ensure users can always post and read updates, even if there are slight inconsistencies.
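The trade-off can be made concrete with a toy model. The sketch below is illustrative only: the `Replica` class, its `mode` labels, and the `partitioned` flag are hypothetical names, not part of any real database. It shows a CP-style replica refusing a write while cut off from its peers, and an AP-style replica accepting it locally.

```python
from dataclasses import dataclass, field


class Unavailable(Exception):
    """Raised when a CP replica refuses a request during a partition."""


@dataclass
class Replica:
    mode: str                    # "CP" or "AP" (hypothetical labels)
    partitioned: bool = False    # True when cut off from its peers
    data: dict = field(default_factory=dict)

    def write(self, key, value):
        if self.partitioned and self.mode == "CP":
            # Consistency first: refuse rather than risk divergence.
            raise Unavailable("cannot reach peers")
        # AP mode (or a healthy network): accept the write locally.
        self.data[key] = value
        return "ok"


cp = Replica(mode="CP", partitioned=True)
ap = Replica(mode="AP", partitioned=True)

try:
    cp_result = cp.write("balance", 100)
except Unavailable:
    cp_result = "rejected"

ap_result = ap.write("status", "hello")
print(cp_result, ap_result)
```

The AP replica now holds data its peers have not seen, which is exactly the temporary discrepancy that has to be reconciled once the partition heals.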

Q 4. Explain the concept of distributed consensus and its challenges.

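Distributed consensus is the problem of agreeing on a single value among a group of independent, potentially faulty nodes in a distributed system. This is a fundamental problem in building reliable distributed systems. Imagine a committee trying to decide on a single course of action: once a decision is made, every member must end up with the same decision, even those who missed the vote. Practical algorithms only need a majority of nodes to be reachable in order to decide.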

The challenges are numerous:

- Fault Tolerance: The system must continue to operate correctly even if some nodes fail (crash or become unresponsive).

- Network Partitions: The system must handle network disruptions that might isolate subgroups of nodes.

- Byzantine Faults: Handling nodes that act arbitrarily, providing incorrect or inconsistent information, is significantly harder than handling simple crashes.

- Performance: Achieving consensus quickly and efficiently is crucial for many applications.

Solving distributed consensus reliably is complex. It requires sophisticated algorithms that can withstand failures and maintain agreement, even under adverse conditions.

Q 5. Compare and contrast different distributed consensus algorithms (e.g., Paxos, Raft).

Paxos and Raft are two prominent distributed consensus algorithms. Both aim to achieve consensus, but they differ in their approach and complexity:

- Paxos: Paxos is known for its mathematical rigor and proven correctness, but it’s notoriously difficult to understand and implement. In basic (single-decree) Paxos, any node can act as a proposer, and a value is chosen once a majority of acceptors accept it across two phases (prepare and accept). Practical deployments usually run Multi-Paxos, which elects a stable leader so that most operations can skip the first phase. Much of the complexity comes from details the original description leaves open, such as log management and membership changes.

- Raft: Raft is designed to be easier to understand and implement than Paxos. It is leader-based by design and breaks the problem into leader election, log replication, and safety. It offers the same fault tolerance as Paxos (a cluster of 2f + 1 nodes tolerates f crash failures) but prioritizes clarity, making it more approachable for developers.

Comparison: Both solve the same problem, and both need a majority of nodes to make progress. Raft prioritizes simplicity and ease of understanding, and its strong-leader design can be slightly less flexible in some scenarios. Paxos and its many variants leave more room for specialized optimization, at the cost of increased implementation complexity.

In practice: Raft’s simplicity makes it a popular choice for practical implementations (etcd and Consul use it, for example), especially in systems where ease of maintenance and understanding are important. Paxos variants may be preferred where a team needs the flexibility to tailor the protocol and has the expertise to do so.
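To give a feel for why Raft is considered approachable, here is a minimal sketch of the vote-granting rule used during Raft leader election. It assumes a simplified in-memory `Follower` with hypothetical field names and leaves out timers, log replication, and persistence entirely.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Follower:
    current_term: int = 0
    voted_for: Optional[str] = None
    last_log_term: int = 0
    last_log_index: int = 0

    def handle_request_vote(self, term, candidate_id,
                            cand_last_log_term, cand_last_log_index):
        # Reject candidates from an older term.
        if term < self.current_term:
            return False
        # A newer term resets our vote for that term.
        if term > self.current_term:
            self.current_term = term
            self.voted_for = None
        # The candidate's log must be at least as up-to-date as ours:
        # compare last log term first, then last log index.
        log_ok = (cand_last_log_term, cand_last_log_index) >= (
            self.last_log_term, self.last_log_index)
        # At most one vote per term.
        if self.voted_for in (None, candidate_id) and log_ok:
            self.voted_for = candidate_id
            return True
        return False


def wins_election(votes, cluster_size):
    """A candidate needs a strict majority of the cluster."""
    return votes > cluster_size // 2


f = Follower(current_term=2, last_log_term=2, last_log_index=5)
first = f.handle_request_vote(3, "A", 2, 5)    # granted
second = f.handle_request_vote(3, "B", 2, 7)   # denied: already voted in term 3
stale = f.handle_request_vote(2, "C", 9, 9)    # denied: older term
print(first, second, stale)
```

The one-vote-per-term rule combined with the majority requirement is what prevents two leaders from being elected in the same term.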

Q 6. How do you handle failures in a distributed system?

Handling failures in a distributed system is paramount to its reliability and availability. A robust strategy involves multiple layers:

- Detection: Employ mechanisms (heartbeat monitoring, timeouts) to promptly identify failures (node crashes, network partitions).

- Recovery: Implement strategies to restore the system to a consistent state after failures. This may involve restarting failed nodes, replicating data, or using backup systems.

- Redundancy: Employ replication so that data and services remain available even if some components fail. Multiple copies of data are stored across different nodes, ensuring that if one node fails, the data is still accessible from others. Sharding on its own does not add redundancy, but it limits how much data a single node failure affects.

- Fault Tolerance: Design the system to gracefully handle failures. This can involve using techniques like circuit breakers, retries, and fallback mechanisms to mitigate the impact of failures on the user experience.

- Monitoring and Logging: Continuous monitoring and detailed logging are crucial to identify patterns, understand the root causes of failures, and improve the system’s resilience over time.

The exact approach depends heavily on the system’s architecture and the types of failures it’s expected to encounter.
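As an illustration of the detection layer, the following sketch implements a timeout-based heartbeat monitor. The class and method names are made up for this example, and timestamps are passed in explicitly so the behaviour is deterministic; a real detector would read a monotonic clock and receive heartbeats over the network.

```python
class HeartbeatMonitor:
    """Suspects a node once it has been silent longer than timeout_s."""

    def __init__(self, timeout_s):
        self.timeout_s = timeout_s
        self.last_seen = {}   # node name -> time of last heartbeat

    def record_heartbeat(self, node, now):
        self.last_seen[node] = now

    def suspected(self, now):
        # Nodes whose last heartbeat is older than the timeout.
        return sorted(
            node for node, seen in self.last_seen.items()
            if now - seen > self.timeout_s
        )


monitor = HeartbeatMonitor(timeout_s=5.0)
monitor.record_heartbeat("node-a", now=0.0)
monitor.record_heartbeat("node-b", now=4.0)
print(monitor.suspected(now=6.0))   # node-a has been silent for more than 5s
```

A timeout can only suspect a failure: a slow network looks the same as a crashed node, so the timeout value trades detection speed against false positives.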

Q 7. Explain different strategies for fault tolerance in distributed systems.

Fault tolerance is crucial for building reliable distributed systems. Several strategies can be employed:

- Replication: Creating multiple copies of data or services across different nodes. If one node fails, the others can still provide service. This could be active-active (all replicas process requests), or active-passive (a backup replica takes over if the primary fails).

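- Data Sharding: Partitioning data across multiple nodes to distribute the load and improve scalability. Sharding does not make data redundant by itself, but it limits the impact of an individual node failure to the shards that node holds. Combine it with replication so that each shard survives a failure.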

- Redundancy: Using backup components or systems to provide service if the primary components fail. This can include redundant power supplies, network connections, and servers.

- Error Handling and Retries: Implementing robust error handling mechanisms to detect and recover from errors gracefully. This often involves retrying failed operations after a delay, or implementing circuit breakers to prevent repeated attempts when a service is clearly unavailable.

- Checkpointing and Rollback: Periodically saving the system’s state (checkpointing) to allow recovery to a previous consistent state in case of failures. This enables the system to “rollback” to a known good state.

- Self-Healing: Designing the system to automatically detect and correct failures without human intervention. This often uses automated monitoring, recovery mechanisms, and deployment strategies.

The choice of strategies depends on the specific requirements of the system and the types of failures it needs to withstand. Often, a combination of techniques is employed to achieve a high degree of fault tolerance.
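The circuit breaker mentioned under error handling can be sketched in a few lines. This is a simplified version under stated assumptions: the names `CircuitBreaker`, `failure_threshold`, and `reset_after_s` are hypothetical, the clock is passed in as `now`, and there is no thread safety.

```python
class CircuitOpenError(Exception):
    """Raised when the breaker is open and the call is not attempted."""


class CircuitBreaker:
    def __init__(self, failure_threshold, reset_after_s):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.failures = 0
        self.opened_at = None   # time the breaker opened, or None if closed

    def call(self, func, now):
        if self.opened_at is not None:
            if now - self.opened_at < self.reset_after_s:
                # Open: fail fast without touching the struggling service.
                raise CircuitOpenError("failing fast")
            # Otherwise half-open: let this one trial call through.
        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = now   # open (or re-open) the breaker
            raise
        # Success closes the breaker and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Callers wrap each remote call in `breaker.call(...)`. After `failure_threshold` consecutive failures, further calls are rejected immediately until `reset_after_s` has passed, which gives the downstream service room to recover.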

Q 8. Describe different approaches to data replication in distributed systems.

Data replication in distributed systems involves creating copies of data across multiple nodes to enhance availability, performance, and fault tolerance. Several approaches exist, each with its own trade-offs:

- Master-Slave Replication (also called primary-replica or leader-follower): One master node handles all writes, and slave nodes replicate data from the master. This is simple, but the master is a single point of failure for writes. Imagine a database server: one is the main source, and the others mirror it. If the main one fails, one of the others can be promoted to take over, but only after some delay, and with asynchronous replication the most recent writes may be lost.

- Master-Master Replication: Two master nodes both accept writes and replicate to each other, which requires conflict resolution mechanisms (like timestamps or last-write-wins). This is more complex but provides higher write availability than master-slave. Think of a high-availability email system where multiple servers handle incoming emails to reduce delays.

- Multi-Master Replication: The general form of master-master with more than two writable nodes. As the number of masters grows, concurrent conflicting updates become more likely, so conflict handling matters more. Last-write-wins is simple but silently discards one of the conflicting writes, while version vectors or CRDTs can detect or merge them.

- Active-Active Replication: All replicas are active and handle both reads and writes. In practice this is multi-master replication described from the service’s point of view. It offers high availability and low latency but needs conflict resolution or coordination to keep replicas consistent. Think of a service deployed in several regions, where each region serves reads and writes for its local users.

- Chain Replication: Nodes are arranged in a chain. Writes enter at the head and are passed from node to node, and reads are served by the tail, which only sees writes that every node has stored. This gives strong consistency with a simple update path, but write latency grows with chain length, and a node failure requires the chain to be reconfigured before writes can continue.

The best approach depends on the specific needs of the application, considering factors like availability requirements, performance needs, data consistency levels, and the complexity of managing the replication process.
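The following toy model illustrates single-master replication with asynchronous propagation. `Primary` and `ReadReplica` are hypothetical classes, and calling `replicate()` by hand stands in for the background shipping of the write log that a real database would do.

```python
class ReadReplica:
    def __init__(self):
        self.data = {}
        self.applied = 0   # number of log entries applied so far


class Primary:
    def __init__(self, replicas):
        self.data = {}
        self.log = []      # ordered list of (key, value) writes
        self.replicas = replicas

    def write(self, key, value):
        # The write is acknowledged before any replica has seen it.
        self.data[key] = value
        self.log.append((key, value))

    def replicate(self):
        # Ship each replica the log entries it has not applied yet.
        for replica in self.replicas:
            for key, value in self.log[replica.applied:]:
                replica.data[key] = value
            replica.applied = len(self.log)


replica = ReadReplica()
primary = Primary([replica])
primary.write("x", 1)
stale_read = replica.data.get("x")   # replica has not caught up yet
primary.replicate()
fresh_read = replica.data.get("x")
print(stale_read, fresh_read)
```

The window between `write` and `replicate` is the replication lag. If the primary failed inside that window, the write would be lost on failover, which is the main risk of asynchronous replication.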

Q 9. What are the benefits and drawbacks of using microservices architecture?

Microservices architecture involves breaking down a large application into smaller, independent services. Each service focuses on a specific business function and can be developed, deployed, and scaled independently.

Benefits:

- Improved Scalability: Individual services can be scaled independently based on their specific needs.

- Increased Agility: Faster development cycles and easier deployment of new features and updates.

- Technology Diversity: Different services can use the most appropriate technologies.

- Fault Isolation: Failure in one service doesn’t necessarily affect the entire application.

Drawbacks:

- Increased Complexity: Many small services mean more moving parts, more inter-service contracts, and more failure modes to reason about.

- Distributed System Challenges: Issues like data consistency, network latency, and service discovery need careful consideration.

- Testing and Debugging: Testing and debugging distributed systems is more complex than monoliths.

- Operational Overhead: Deploying and monitoring many services requires more infrastructure and tools.

For example, a large e-commerce platform might have separate microservices for user authentication, product catalog, shopping cart, payment processing, and order management. Each service can be developed and deployed independently, providing better scalability and maintainability compared to a monolithic architecture.

Q 10. How do you design a distributed system for high availability?

Designing for high availability focuses on minimizing downtime. Key strategies include:

- Redundancy: Replicate critical components (servers, databases, network devices) across multiple locations or availability zones. If one fails, others take over seamlessly.

- Load Balancing: Distribute incoming requests across multiple servers to prevent overload on any single server.

- Failover Mechanisms: Implement automatic failover mechanisms to quickly switch to backup components in case of failures. This ensures continuous operation with minimal disruption.

- Health Checks: Regularly monitor the health of all components and trigger failovers if necessary. Think of a heartbeat signal constantly checking server status.

- Monitoring and Alerting: Implement comprehensive monitoring and alerting systems to detect and respond to potential issues promptly.

Imagine a website for a major airline – high availability is critical. If the booking system crashes, it can have devastating financial repercussions. Redundancy and failover are therefore crucial. Health checks constantly ensure everything is running smoothly, and alerting systems notify engineers of potential problems instantly.

Q 11. How do you design a distributed system for scalability?

Scalability in distributed systems means handling increasing workloads without compromising performance. Key strategies include:

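- Horizontal Scaling: Adding more servers to handle the increased load. At large scale this is generally more cost-effective than vertical scaling and has no hard upper limit, but the application must be designed to run across many machines.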

- Vertical Scaling: Upgrading existing servers with more powerful hardware (more RAM, faster processors). While easier to implement, it’s limited by the hardware constraints of a single machine.

- Data Partitioning: Distribute data across multiple databases or storage systems to improve performance and reduce contention.

- Caching: Store frequently accessed data in a cache closer to the clients to reduce latency.

- Microservices Architecture: Break down the application into smaller independent services that can be scaled independently.

Consider a social media platform like Twitter. The need to handle millions of tweets simultaneously requires horizontal scaling – adding more servers to process and store this massive data volume.
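Of the strategies above, caching is the easiest to show in code. The sketch below combines a small least-recently-used cache with a read-through helper; `LRUCache`, `read_through`, and the `load_from_db` callback are illustrative names, and the "database" is just a function.

```python
from collections import OrderedDict


class LRUCache:
    def __init__(self, capacity):
        self.capacity = capacity
        self.items = OrderedDict()   # oldest entry first

    def get(self, key):
        if key not in self.items:
            return None
        self.items.move_to_end(key)  # mark as most recently used
        return self.items[key]

    def put(self, key, value):
        self.items[key] = value
        self.items.move_to_end(key)
        if len(self.items) > self.capacity:
            self.items.popitem(last=False)   # evict least recently used


def read_through(cache, key, load_from_db):
    """Serve from the cache, falling back to the slower backing store."""
    value = cache.get(key)
    if value is None:
        value = load_from_db(key)
        cache.put(key, value)
    return value


db_calls = []

def load_from_db(key):
    db_calls.append(key)   # record every trip to the backing store
    return key.upper()

cache = LRUCache(capacity=2)
results = [read_through(cache, k, load_from_db) for k in ["a", "a", "b", "c", "a"]]
print(db_calls)
```

The second read of "a" is served from the cache. The final read misses because "a" was evicted when "c" was added, which shows why cache size and eviction policy matter as much as having a cache at all.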

Q 12. Explain different strategies for load balancing in distributed systems.

Load balancing distributes incoming traffic across multiple servers to prevent overload. Common strategies include:

- Round Robin: Distribute requests sequentially to each server in a circular manner. Simple but may not consider server load.

- Least Connections: Direct requests to the server with the fewest active connections. Effective when request durations vary, but the balancer must track the number of open connections per server.

- Weighted Round Robin: Assign weights to servers based on their capacity. More powerful servers handle more requests. This allows you to account for differences in server capacity.

- IP Hash: Distribute requests based on the client’s IP address, ensuring consistent routing for each client. Useful for maintaining session affinity.

- Consistent Hashing: Maps servers and data to a consistent hash ring, minimizing disruption during server additions or removals.

In a web server farm, least connections or weighted round robin would be effective strategies to handle fluctuating user traffic and server capacities. Consistent hashing helps maintain data locality during scaling.
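The first three strategies are simple enough to sketch directly. The function names below are illustrative, and the weighted variant uses naive expansion (each server is repeated according to its weight), which sends requests in bursts; production balancers typically interleave more smoothly.

```python
import itertools


def round_robin(servers):
    """Yield servers in a repeating cycle."""
    return itertools.cycle(servers)


def least_connections(active):
    """Pick the server with the fewest open connections.

    `active` maps server name -> current connection count.
    """
    return min(active, key=active.get)


def weighted_round_robin(weights):
    """Cycle through servers, repeating each one `weight` times."""
    expanded = [server for server, w in weights.items() for _ in range(w)]
    return itertools.cycle(expanded)


rr = round_robin(["a", "b", "c"])
rr_picks = [next(rr) for _ in range(4)]

lc_pick = least_connections({"a": 3, "b": 1, "c": 2})

wrr = weighted_round_robin({"big": 2, "small": 1})
wrr_picks = [next(wrr) for _ in range(6)]

print(rr_picks, lc_pick, wrr_picks)
```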

Q 13. How do you ensure data consistency across multiple databases in a distributed system?

Ensuring data consistency across multiple databases in a distributed system is a complex problem. Strategies include:

- Two-Phase Commit (2PC): A distributed transaction protocol that ensures atomicity across multiple databases. It adds latency, and participants can block if the coordinator fails after they have voted to commit.

- Paxos or Raft: Consensus algorithms used to keep replicas of the same data in agreement. They are more complex, but they tolerate the failure of a minority of nodes without blocking, unlike 2PC, where a coordinator failure can stall the participants.

- Eventual Consistency: Accept that data might be temporarily inconsistent across databases, but will eventually converge. Suitable for applications that can tolerate some inconsistency (e.g., email systems).

- Data Replication with Conflict Resolution: Use sophisticated replication techniques (e.g., multi-master replication) with conflict resolution mechanisms to manage concurrent updates.

- Database sharding with single-point database write for a shard: Partition data into smaller sets (shards), each stored in a separate database. While consistency is maintained within each shard, there might be consistency challenges between shards.

The choice depends on the application’s requirements for consistency and performance. For financial systems that need atomic updates across several databases, 2PC might be necessary despite its cost to availability, while a social media platform may be able to tolerate eventual consistency.
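A minimal sketch of the two-phase commit flow is shown below. The `Participant` class and its `can_commit` flag are hypothetical stand-ins for real databases, and the sketch ignores timeouts, crashes, and durable logging, which are exactly the parts that make 2PC hard in practice.

```python
class Participant:
    def __init__(self, name, can_commit=True):
        self.name = name
        self.can_commit = can_commit   # whether this participant votes yes
        self.state = "init"

    def prepare(self):
        # Phase 1: vote. A "yes" is a promise to commit if asked.
        self.state = "prepared" if self.can_commit else "aborted"
        return self.can_commit

    def commit(self):
        self.state = "committed"

    def rollback(self):
        self.state = "aborted"


def two_phase_commit(participants):
    # Phase 1: collect votes from every participant.
    votes = [p.prepare() for p in participants]
    # Phase 2: commit only if the vote was unanimous.
    if all(votes):
        for p in participants:
            p.commit()
        return "committed"
    for p in participants:
        p.rollback()
    return "aborted"


orders, payments = Participant("orders"), Participant("payments")
ok_result = two_phase_commit([orders, payments])

inventory = Participant("inventory", can_commit=False)
billing = Participant("billing")
failed_result = two_phase_commit([inventory, billing])
print(ok_result, failed_result)
```

Between voting yes and hearing the decision, a participant can neither commit nor abort on its own. That window is the source of the blocking behaviour described above.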

Q 14. Describe different approaches to distributed tracing.

Distributed tracing helps understand the flow of requests across multiple services in a distributed system. Approaches include:

- OpenTelemetry: A collection of tools, APIs, and SDKs for instrumenting, generating, collecting, and exporting telemetry data (metrics, logs, and traces).

- Zipkin: A distributed tracing system that collects and displays traces from microservices and other distributed systems. Good for visualizing requests across services.

- Jaeger: Another popular distributed tracing system that is highly scalable and efficient for large-scale distributed systems.

- Custom Solutions: Some organizations build custom tracing systems tailored to their specific needs. This approach requires significant engineering effort.

Each approach usually involves injecting unique identifiers (trace IDs and span IDs) into requests to track their journey across multiple services. When a failure occurs, tracing helps identify the root cause by showing the flow of the request through all involved services. Think of it like a detective tracking a case by examining clues found at each location, finally determining where and why the case went wrong.
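The identifier propagation described above can be sketched without any tracing library. Everything here is illustrative: the dictionary layout and the `X-Trace-Id` / `X-Span-Id` header names are assumptions made for this example, not the wire format of OpenTelemetry, Zipkin, or Jaeger.

```python
import uuid


def start_trace():
    """Create the root span of a new trace."""
    return {
        "trace_id": uuid.uuid4().hex,        # shared by every span in the trace
        "span_id": uuid.uuid4().hex[:16],    # unique per unit of work
        "parent_id": None,
    }


def child_span(parent):
    """Create a span for downstream work caused by `parent`."""
    return {
        "trace_id": parent["trace_id"],
        "span_id": uuid.uuid4().hex[:16],
        "parent_id": parent["span_id"],
    }


def inject(span, headers):
    """Copy the trace context into outgoing request headers (hypothetical names)."""
    headers["X-Trace-Id"] = span["trace_id"]
    headers["X-Span-Id"] = span["span_id"]
    return headers


root = start_trace()                 # e.g. the API gateway receives a request
call = child_span(root)              # the gateway calls the order service
headers = inject(call, {})           # context travels with the request
print(headers["X-Trace-Id"] == root["trace_id"])
```

Because every span carries the same trace ID plus its parent’s span ID, a tracing backend can reassemble the full call tree for a request after the fact.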

Q 15. Explain the concept of eventual consistency and when it is appropriate to use it.

Eventual consistency is a data consistency model in which updates to the data are propagated through the system asynchronously. This means that after a write operation, it may take some time before all nodes in the distributed system reflect that update. Think of it like sending a postcard – you drop it in the mail, but it takes a while to reach its destination. The recipient (another node in the system) eventually gets the update, but not immediately.

It’s appropriate to use eventual consistency when:

- High availability is paramount: If the system needs to remain operational even with network partitions, eventual consistency is more fault-tolerant. Immediate consistency may lead to unavailability during network problems.

- Performance is critical: Achieving immediate consistency across a large distributed system can be extremely slow and resource-intensive. Eventual consistency prioritizes speed and scalability.

- Data is not highly sensitive: If slight delays in data consistency are acceptable (e.g., a social media feed), eventual consistency is a reasonable choice. Use cases involving financial transactions or critical systems generally demand stronger consistency guarantees.

Example: Consider a large-scale content delivery network (CDN). When a user uploads a new profile photo, it may be visible immediately from the origin server, but edge nodes around the world can keep serving the old cached copy until their caches expire or are invalidated. All locations eventually converge on the new photo.

Q 16. What are the challenges of debugging distributed systems?

Debugging distributed systems is notoriously challenging compared to debugging single-machine applications. The complexities arise from the distributed nature itself:

- Non-deterministic behavior: The order of events and their timing can vary depending on network conditions and resource availability, making reproducibility difficult.

- Partial failures: A single component might fail, leaving the system in an inconsistent state that’s hard to diagnose.

- Data inconsistency: Different nodes might hold different versions of the data, leading to confusing discrepancies.

- Lack of global state view: It’s difficult to get a holistic view of the entire system’s state at any given moment.

- Tool limitations: Traditional debugging tools are often not designed to handle the scale and complexity of distributed systems. Debugging often requires custom tools and distributed tracing systems.

Effective debugging strategies include distributed tracing, log aggregation with correlation IDs, and careful design of system components to improve observability. Designing operations to be idempotent makes retries safe and removes a whole class of duplicate-processing bugs. Employing robust monitoring and alerting systems can also help in detecting and addressing issues early on.
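Idempotent operations are worth a concrete illustration, since duplicate deliveries are a common source of hard-to-reproduce bugs. The sketch below assumes a hypothetical `PaymentService` where each request carries a client-chosen idempotency key; a real service would store the keys durably and expire them.

```python
class PaymentService:
    def __init__(self):
        self.balance = 0
        self.processed = {}   # idempotency key -> result of the first attempt

    def deposit(self, idempotency_key, amount):
        # A retried request returns the stored result instead of
        # applying the deposit a second time.
        if idempotency_key in self.processed:
            return self.processed[idempotency_key]
        self.balance += amount
        self.processed[idempotency_key] = self.balance
        return self.balance


service = PaymentService()
first = service.deposit("req-1", 50)
retry = service.deposit("req-1", 50)    # e.g. the client timed out and retried
second = service.deposit("req-2", 25)
print(first, retry, second)
```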

Q 17. How do you monitor and manage a distributed system?

Monitoring and managing a distributed system requires a multi-faceted approach leveraging various tools and techniques.

- Metrics collection: Employ tools like Prometheus or Datadog to collect crucial system metrics (CPU usage, memory consumption, network latency, request throughput, error rates), and a tool like Grafana to visualize them. These metrics provide insights into the system’s health and performance.

- Logging and tracing: Implement robust centralized logging (e.g., the ELK stack of Elasticsearch, Logstash, and Kibana, or the EFK variant that swaps in Fluentd) and distributed tracing (e.g., Jaeger, Zipkin) to track requests and identify bottlenecks or errors. This allows you to trace requests across multiple services.

- Alerting: Set up alerts based on predefined thresholds for critical metrics. Immediate notifications about anomalies help quickly address issues.

- Automated scaling and deployment: Leverage tools like Kubernetes or Docker Swarm for automated scaling and deployment of services to handle fluctuating workloads and simplify deployments.

- Centralized dashboards: Integrate metrics, logs, and traces into centralized dashboards to provide a holistic view of the system’s health and status.

Example: If your system is experiencing high latency, metrics might reveal CPU bottlenecks on a specific node, logs might pinpoint errors in a particular service, and tracing can help identify the specific request causing the delay. Automated scaling can then add more resources to address the issue.

Q 18. Explain different strategies for securing a distributed system.

Securing a distributed system requires a layered approach:

- Authentication and Authorization: Implement robust authentication mechanisms (e.g., OpenID Connect for users, mutual TLS or signed tokens such as JWTs for services) to verify the identity of users and services. Authorization controls (e.g., OAuth 2.0 scopes or role-based access control) determine what resources users and services can access.

- Data encryption: Encrypt data both in transit (using TLS) and at rest (using encryption at the database or disk level). This protects data from unauthorized access.

- Input validation and sanitization: Validate and sanitize all user inputs, use parameterized queries, and encode output to prevent injection attacks (SQL injection, cross-site scripting).

- Access control lists (ACLs): Use ACLs to restrict access to sensitive resources.

- Regular security audits and penetration testing: Conduct regular security audits and penetration tests to identify vulnerabilities.

- Secure configuration management: Securely manage system configurations and secrets using tools like HashiCorp Vault.

- Network segmentation: Isolate sensitive components of the system from less sensitive ones.

Example: A microservice architecture might use service-to-service authentication with JWTs to verify the identity of requests. Database encryption protects sensitive user data. Regular security scans and penetration testing proactively identify and resolve vulnerabilities.
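To show the idea behind signed service-to-service tokens, here is a simplified sketch using only the Python standard library. It is not a JWT implementation: the token layout, the `sign` and `verify` helpers, and the shared secret are assumptions for illustration, and it omits expiry, key rotation, and audience checks. A production system should use a vetted library.

```python
import base64
import hashlib
import hmac
import json


def sign(payload, secret):
    """Return '<base64 payload>.<hex HMAC-SHA256 signature>'."""
    body = base64.urlsafe_b64encode(json.dumps(payload, sort_keys=True).encode())
    signature = hmac.new(secret, body, hashlib.sha256).hexdigest()
    return body.decode() + "." + signature


def verify(token, secret):
    """Return the payload if the signature matches, otherwise None."""
    body, _, signature = token.rpartition(".")
    expected = hmac.new(secret, body.encode(), hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    if not hmac.compare_digest(signature, expected):
        return None
    return json.loads(base64.urlsafe_b64decode(body))


secret = b"shared-secret-for-demo-only"
token = sign({"service": "orders", "scope": "payments:write"}, secret)

valid = verify(token, secret)
wrong_key = verify(token, b"some-other-secret")
print(valid, wrong_key)
```

The receiving service trusts the claims only because it can recompute the signature with the shared secret; any change to the payload or use of a different key makes verification fail.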

Q 19. What are the performance implications of different data consistency models?

Different data consistency models have significant performance implications:

- Strict consistency (linearizability, sometimes called atomic consistency): Every read receives the most recent write. This provides the strongest guarantee but has the highest cost in distributed settings. A write must be coordinated with a quorum of replicas (or all of them) before it is acknowledged, leading to higher latency and reduced throughput.

- Sequential consistency: All nodes observe operations in the same order, and that order respects the order in which each client issued its own operations, but it need not match real time. This is stronger than eventual consistency and weaker than strict consistency, so its performance impact is less severe than that of strict consistency.

- Eventual consistency: Reads may not necessarily reflect the most recent writes, trading off strong consistency for improved performance and scalability. This is suitable for many applications that tolerate some data staleness.

In Summary: Strict consistency offers the strongest consistency but the lowest performance; eventual consistency offers the highest performance but the weakest consistency guarantees. The choice depends heavily on the application’s needs and tolerance for data staleness.

Q 20. Describe different approaches to handling network partitions in a distributed system.

Network partitions are inevitable in distributed systems. Several approaches can mitigate their impact:

- Quorum-based techniques: Require a minimum number of nodes (a quorum, typically a majority) to agree before an operation is considered successful. Only the side of a partition that holds a quorum can make progress, which prevents the two sides from accepting conflicting updates.

- Conflict-free replicated data types (CRDTs): These data structures are designed to be updated concurrently without causing conflicts, even in the presence of network partitions. Once connectivity is restored, the different versions of the data can be efficiently merged.

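- Versioning and conflict resolution: Each data update carries version information (a version number, timestamp, or vector clock). When a network partition is resolved, the system compares versions to detect conflicting updates and resolves them according to predefined strategies. Last-write-wins is the simplest but discards the losing update; vector clocks can identify truly concurrent updates so the application can merge them.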

- State machines and consensus protocols: Using state machines with consensus protocols (e.g., Paxos, Raft) ensures agreement among nodes on the system’s state, making it resilient to partitions. These protocols help achieve consistency, albeit with some added complexity.

The best approach often depends on the specific requirements of the application and the nature of the data. For example, CRDTs are well-suited for applications requiring high availability and eventual consistency, while consensus protocols are suitable for applications requiring strong consistency.
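A grow-only counter (G-Counter) is one of the simplest CRDTs and shows how replicas can accept updates on both sides of a partition and still converge. The `GCounter` class below is a minimal sketch with illustrative names: each node increments only its own slot, and merging takes the per-node maximum.

```python
class GCounter:
    def __init__(self, node_id):
        self.node_id = node_id
        self.counts = {}   # node id -> increments observed from that node

    def increment(self, amount=1):
        # A node only ever changes its own entry.
        self.counts[self.node_id] = self.counts.get(self.node_id, 0) + amount

    def value(self):
        return sum(self.counts.values())

    def merge(self, other):
        # Per-node maximum: commutative, associative, and idempotent,
        # so merges can happen in any order and any number of times.
        for node, count in other.counts.items():
            self.counts[node] = max(self.counts.get(node, 0), count)


a, b = GCounter("a"), GCounter("b")
a.increment(2)      # updates accepted on one side of the partition
b.increment(3)      # and independently on the other side

a.merge(b)          # partition heals: exchange state in both directions
b.merge(a)
print(a.value(), b.value())
```

Because merging is idempotent, exchanging state repeatedly or receiving the same state twice does no harm, which is what makes CRDTs a good fit for unreliable networks.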

Q 21. How do you handle distributed transactions in a distributed system?

Handling distributed transactions in a distributed system is crucial for maintaining data consistency and integrity. It’s far more complex than in a single-database system because we must coordinate updates across multiple databases or services.

- Two-phase commit (2PC): A classic approach where a coordinator manages the transaction across multiple participants. It ensures that either all participants commit or all roll back, preserving atomicity. However, 2PC can suffer from performance issues and blocking during failures.

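- Three-phase commit (3PC): Adds a pre-commit phase to 2PC so that participants can make progress if the coordinator crashes. It avoids blocking only under assumptions such as bounded message delays and no network partitions, and it adds a round trip, so it is rarely used in practice.

- Saga pattern: This pattern decomposes the transaction into a series of local transactions, each operating on a single database. If a local transaction fails, compensating transactions are executed to undo the effects of the ones that already completed. A saga does not provide isolation, so intermediate states are visible to other transactions, but because it holds no locks across services it tolerates slow or failed participants better than 2PC.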

- Message queues and event sourcing: Asynchronous communication via message queues allows decoupling the different parts of a transaction. Event sourcing captures the history of changes as a sequence of events, allowing for easier recovery and replay in case of failures.

The choice of approach depends on the system’s requirements for consistency, fault tolerance, and performance. Loosely coupled workflows can often rely on message queues and eventual consistency, while multi-step business processes that must be undone on failure call for a carefully designed saga with compensating transactions. Strict atomicity across distributed nodes requires a commit protocol such as 2PC, which is difficult to implement correctly and efficiently, so it is worth confirming that the requirement is real before adopting it.
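The saga pattern can be sketched as a list of (action, compensation) pairs. The `run_saga` helper and the order-processing steps below are hypothetical, and the sketch assumes compensations always succeed; a real saga also has to persist its progress so it can resume after a crash.

```python
def run_saga(steps):
    """Run each action in order; on failure, undo completed steps in reverse."""
    compensations = []
    for action, compensate in steps:
        try:
            action()
        except Exception:
            for undo in reversed(compensations):
                undo()
            return "compensated"
        compensations.append(compensate)
    return "completed"


log = []

def reserve_stock():
    log.append("reserve stock")

def release_stock():
    log.append("release stock")

def charge_card():
    raise RuntimeError("card declined")

def refund_card():
    log.append("refund card")

result = run_saga([
    (reserve_stock, release_stock),
    (charge_card, refund_card),
])
print(result, log)
```

Only the steps that actually completed are compensated: the stock reservation is released, but no refund is issued because the charge never went through.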

Q 22. Explain different strategies for data partitioning in a distributed system.

Data partitioning, also known as sharding, is a crucial technique in distributed systems for managing large datasets across multiple machines. The goal is to distribute the load and improve performance by avoiding a single point of failure or bottleneck. Several strategies exist, each with its own trade-offs:

- Range Partitioning: Data is divided into ranges based on a key attribute. For example, users with IDs from 1-1000 go to server A, 1001-2000 to server B, and so on. This is simple to implement but can lead to uneven distribution if data is not uniformly distributed across the key range.

- Hash Partitioning: A hash function is applied to the key, and the result determines the partition. This provides a more even distribution than range partitioning, even with skewed key values, but it makes range queries inefficient because adjacent keys land on different partitions. With a simple hash-modulo-N scheme, adding or removing partitions forces most keys to move; consistent hashing limits the movement to a small fraction.

- List Partitioning: Partitions are defined explicitly by listing the keys belonging to each partition. This is flexible but requires manual management and can become cumbersome as the number of partitions grows.

- Composite Partitioning: Combines multiple partitioning strategies. For example, you might use range partitioning for a broad categorization and then hash partitioning within each range for finer distribution. This allows optimization for different aspects of the data.

Choosing the right strategy depends heavily on the data characteristics, query patterns, and scalability requirements. For instance, a social media platform might use composite partitioning, combining user location (range) and user ID (hash) for efficient data access.
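As a rough illustration of the range and hash strategies above, here is a minimal sketch. The function names (`range_partition`, `hash_partition`, `moved_fraction`) and the choice of SHA-256 as the hash are assumptions made for this example, not a prescribed implementation.

```python
import bisect
import hashlib
from typing import List


def range_partition(user_id: int, upper_bounds: List[int]) -> int:
    """Return the index of the first range whose inclusive upper bound covers user_id."""
    idx = bisect.bisect_left(upper_bounds, user_id)
    if idx == len(upper_bounds):
        raise ValueError(f"user_id {user_id} is above the last range")
    return idx


def hash_partition(key: str, num_partitions: int) -> int:
    """Map a key to a partition with a stable hash (Python's built-in hash() is salted per process)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


def moved_fraction(keys: List[str], old_n: int, new_n: int) -> float:
    """Fraction of keys whose partition changes when the partition count changes."""
    if not keys:
        return 0.0
    moved = sum(1 for k in keys if hash_partition(k, old_n) != hash_partition(k, new_n))
    return moved / len(keys)
```

With bounds `[1000, 2000, 3000]`, IDs 1 to 1000 map to partition 0 and 1001 to 2000 map to partition 1, matching the server A and server B example. Calling `moved_fraction` for a change from 4 to 5 partitions shows that most keys move under hash-modulo placement.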

Q 23. Compare and contrast different messaging systems (e.g., Kafka, RabbitMQ).

Kafka and RabbitMQ are both popular message brokers, but they differ significantly in their architectures and use cases.

- Kafka: Designed for high-throughput, distributed streaming platforms. It’s a distributed, fault-tolerant, and scalable system. Data is stored persistently in topics and partitioned for parallel processing. Kafka excels in handling large volumes of real-time data streams, making it ideal for applications like log aggregation, stream processing, and event sourcing. Think of it like a high-speed highway for data, designed for continuous flow.

- RabbitMQ: A general-purpose message broker that supports a wider range of messaging protocols (AMQP, STOMP, MQTT, etc.). It offers features like message queues, exchanges, and bindings for more complex routing scenarios. It’s more flexible than Kafka but might not scale as efficiently for extremely high-throughput scenarios. Imagine it as a well-organized post office, capable of handling various message types and delivery methods but potentially slower for massive volumes.

Key Differences Summarized:

- Scalability: Kafka excels at horizontal scalability for high-throughput streams; RabbitMQ is scalable but might not match Kafka’s performance at the highest volumes.

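- Persistence: Kafka writes every message to a replicated, on-disk log and retains it for a configurable period, so consumers can replay data after a failure; RabbitMQ supports durable queues and persistent messages, but its queues typically delete a message once it has been acknowledged.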

- Message Ordering Guarantees: Kafka guarantees message ordering within a partition, not across a whole topic; RabbitMQ preserves order within a single queue, but redeliveries and multiple competing consumers can cause messages to be processed out of order.

- Use Cases: Kafka is suited for real-time data streaming; RabbitMQ is versatile and works well for various applications, including microservices communication.
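To make the per-partition ordering guarantee concrete, here is a toy in-memory model of a partitioned log. It is not the Kafka client API. The class `PartitionedLog` and its methods are hypothetical, and CRC32 is used only as a stand-in for a real partitioner.

```python
import zlib
from typing import List, Tuple

Record = Tuple[str, str]  # (key, value)


class PartitionedLog:
    """Toy append-only log split into partitions; records with the same key share a partition."""

    def __init__(self, num_partitions: int) -> None:
        self.partitions: List[List[Record]] = [[] for _ in range(num_partitions)]

    def partition_for(self, key: str) -> int:
        # Stable hash of the key, so a key always maps to the same partition.
        return zlib.crc32(key.encode("utf-8")) % len(self.partitions)

    def append(self, key: str, value: str) -> Tuple[int, int]:
        """Append a record and return its (partition, offset)."""
        p = self.partition_for(key)
        self.partitions[p].append((key, value))
        return p, len(self.partitions[p]) - 1

    def read(self, partition: int, offset: int = 0) -> List[Record]:
        """Return records from the offset onward; reading does not remove them."""
        return self.partitions[partition][offset:]
```

Because every record for a given key lands in the same partition with increasing offsets, a consumer of that partition sees the events for that key in the order they were produced. Records in different partitions have no ordering relative to each other. Reading leaves the records in place, which is what makes replay possible.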

Q 24. How do you design a distributed system for low latency?

Designing a low-latency distributed system requires careful consideration of several factors:

- Reduce Network Hops: Minimize the number of network calls required to complete an operation. Co-locating services, using efficient communication protocols (e.g., gRPC instead of REST), and caching frequently accessed data can significantly reduce latency.

- Optimize Data Locality: Ensure data is accessed from the nearest server. Techniques like data partitioning and caching help achieve this. Using Content Delivery Networks (CDNs) for static content can significantly lower latency for geographically dispersed users.

- Efficient Algorithms and Data Structures: Choose algorithms and data structures that minimize computational complexity. Using appropriate indexing techniques in databases can dramatically improve query performance.

- Asynchronous Processing: Handle tasks asynchronously to avoid blocking operations. Message queues are invaluable for decoupling components and ensuring responsiveness, even when handling long-running tasks.

- Load Balancing: Distribute the workload evenly across multiple servers to prevent overloading any single machine. Smart load balancers can direct requests to the least busy server, ensuring consistent low latency.

- Caching: Employ caching strategies at different layers (e.g., client-side caching, server-side caching, database caching) to reduce the need for repeated database or network calls.

For example, consider an online game. Low latency is critical for a smooth gameplay experience. Using a CDN for game assets, efficient data structures for character state management, and a robust message queue for communication between the game server and clients are all crucial for achieving low latency.
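The caching point can be sketched as a small read-through cache with a time-to-live. This is an illustrative example only: `TTLCache`, its `loader` callback, and the injectable `clock` parameter are assumptions made for the sketch, and a real deployment would more likely use an existing cache such as Redis or Memcached.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """Read-through cache: serve fresh entries from memory, otherwise call the loader."""

    def __init__(
        self,
        loader: Callable[[Hashable], Any],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._loader = loader          # slow path, e.g. a database or remote call
        self._ttl = ttl_seconds
        self._clock = clock            # injectable so expiry can be tested
        self._entries: Dict[Hashable, Tuple[Any, float]] = {}
        self.hits = 0
        self.misses = 0

    def get(self, key: Hashable) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[1] > now:
            self.hits += 1
            return entry[0]
        # Missing or expired: take the slow path and remember the result.
        self.misses += 1
        value = self._loader(key)
        self._entries[key] = (value, now + self._ttl)
        return value
```

The TTL bounds how stale a cached value can be, which is the usual trade-off: a longer TTL saves more round trips but serves older data.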

Q 25. What are some common anti-patterns in distributed system design?

Distributed systems are prone to several anti-patterns that can severely impact performance, scalability, and maintainability. Here are some common ones:

- Distributed Monolith: Spreading a monolithic application across multiple servers or services without properly decoupling its parts. The pieces remain tightly coupled and must be deployed together, which leads to complex deployments, and a failure in one part can still bring down the whole system.

- Global Shared State: Relying on a globally shared database or memory space. This creates contention and makes scaling difficult.

- Unnecessary Data Synchronization: Continuously synchronizing data across multiple nodes unnecessarily. This consumes resources and increases latency. Eventual consistency approaches should be preferred where appropriate.

- Centralized Bottlenecks: Having a single point of failure, such as a centralized database or message queue, that becomes a bottleneck as the system scales.

- Ignoring Network Issues: Not accounting for network latency and failures in the system design. This can lead to poor performance or complete system failure.

- Ignoring Operational Complexity: Underestimating the complexity of managing and monitoring a distributed system. Proper monitoring and logging strategies are crucial for maintaining a healthy system.

- Lack of Proper Monitoring and Logging: Failing to implement proper monitoring and logging mechanisms makes troubleshooting and performance analysis extremely difficult.

For example, a shopping website that attempts to maintain a single inventory database across all servers will suffer from contention and scalability issues. This is a classic case of the global shared state anti-pattern. Adopting a distributed database or caching mechanism would improve scalability.
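One common way to account for network failures, rather than ignore them, is to retry transient errors with exponential backoff and jitter. The sketch below is a minimal illustration. The function name `call_with_retries` and its parameters are hypothetical, and it assumes the wrapped operation is idempotent, so that repeating it is safe.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retries(
    operation: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.1,
    max_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry an idempotent operation on transient network errors."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except (ConnectionError, TimeoutError):
            if attempt == max_attempts:
                raise  # give up and surface the last error to the caller
            # Exponential backoff with full jitter, so clients do not retry in lockstep.
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, cap))
    raise AssertionError("unreachable")
```

Capping the number of attempts matters as much as the backoff itself: unbounded retries against a struggling service tend to make an outage worse.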

Q 26. Explain the concept of eventual consistency and when it’s appropriate.

Eventual consistency is a consistency model in which updates propagate to all replicas over time, with no guarantee of immediate consistency. In other words, multiple replicas of the data may temporarily have different values, but once updates stop arriving they will converge to the same value.

It’s appropriate to use eventual consistency when:

- High Availability is paramount: Eventual consistency tolerates network partitions and temporary outages, ensuring high availability even when some nodes are unavailable.

- Strong Consistency is not critical: The application can tolerate some temporary inconsistencies. For example, in a social media platform, the number of likes on a post might show slight discrepancies temporarily across different user devices; eventual consistency would be an acceptable trade-off for high availability and scalability.

- Scalability is a major concern: Eventual consistency architectures often scale better than strongly consistent ones because they avoid the overhead of distributed transactions.

However, eventual consistency is not suitable for applications requiring immediate consistency, such as financial transactions or real-time bidding systems. In those scenarios, strong consistency (e.g., linearizability) is crucial.
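The "likes" example can be made concrete with a grow-only counter, one well-known technique for letting replicas converge without coordination. This is only a sketch of one possible approach, and the class name `GCounter` and its methods are chosen for illustration. Each replica increments its own slot, and merging takes the per-replica maximum.

```python
from typing import Dict


class GCounter:
    """Grow-only counter: each replica tracks its own count; merge takes per-replica maxima."""

    def __init__(self, replica_id: str) -> None:
        self.replica_id = replica_id
        self.counts: Dict[str, int] = {}

    def increment(self, amount: int = 1) -> None:
        if amount < 0:
            raise ValueError("a grow-only counter cannot be decremented")
        self.counts[self.replica_id] = self.counts.get(self.replica_id, 0) + amount

    def value(self) -> int:
        return sum(self.counts.values())

    def merge(self, other: "GCounter") -> None:
        # max() makes merging idempotent and order-independent, so replicas
        # converge regardless of how often or in what order they sync.
        for rid, count in other.counts.items():
            self.counts[rid] = max(self.counts.get(rid, 0), count)
```

Before the replicas exchange state, two users may see different like counts. After a merge in each direction, both replicas report the same total, and merging again changes nothing.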

Q 27. How would you approach designing a globally distributed system?

Designing a globally distributed system presents unique challenges due to geographical distances, varying network conditions, and regulatory constraints. A key strategy is to employ a multi-region architecture, distributing data and services across multiple data centers in different geographical locations.

Here’s a step-by-step approach:

- Region Selection: Choose data center locations based on user proximity, latency requirements, and regulatory compliance.

- Data Replication and Consistency: Implement a robust data replication strategy, choosing between eventual consistency (for high availability and scalability) and strong consistency (for critical data). Replication techniques like multi-master replication or active-passive replication need to be carefully considered.

- Service Placement: Strategically place services in regions that optimize data locality and network performance.

- Network Optimization: Utilize Content Delivery Networks (CDNs) to cache static content closer to users. Implement efficient routing and load balancing strategies to handle regional traffic effectively.

- Fault Tolerance and Disaster Recovery: Build redundancy into the system to ensure availability in case of failures in a particular region. This includes data backups, automatic failover mechanisms, and disaster recovery plans.

- Global Naming and Addressing: Employ a global naming service that allows clients to access services regardless of their physical location.

- Monitoring and Management: Implement comprehensive monitoring and management tools to track performance, identify issues, and manage the globally distributed system effectively. This includes logging, alerting, and centralized dashboards.

For example, a global e-commerce platform needs a globally distributed architecture to ensure low latency for customers across the world and high availability in case of regional outages. They’d need to consider data replication, service placement, and robust disaster recovery mechanisms.
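The routing and failover steps above can be sketched as a small region-selection function. The region names, the `choose_region` function, and the idea of passing in measured latencies and a health set are all assumptions for illustration. In practice this decision is usually made by DNS-based or anycast routing rather than application code.

```python
from typing import Dict, Optional, Set


def choose_region(
    latencies_ms: Dict[str, float],
    healthy: Set[str],
    allowed: Optional[Set[str]] = None,
) -> str:
    """Pick the lowest-latency healthy region, optionally restricted by compliance rules."""
    candidates = [
        region
        for region in latencies_ms
        if region in healthy and (allowed is None or region in allowed)
    ]
    if not candidates:
        raise RuntimeError("no healthy region satisfies the constraints")
    # Tie-break on the region name so the choice is deterministic.
    return min(candidates, key=lambda region: (latencies_ms[region], region))
```

Removing a region from `healthy` models a regional outage: traffic falls over to the next-closest region. The `allowed` set models a data-residency rule that overrides proximity.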

Q 28. Discuss your experience with specific distributed system technologies (e.g., Kubernetes, Apache Kafka).

I have extensive experience with Kubernetes and Apache Kafka, two cornerstone technologies in modern distributed systems.

Kubernetes: I’ve used Kubernetes for orchestrating containerized applications at scale, managing deployments, scaling, and upgrades seamlessly. My experience includes:

- Designing and implementing Kubernetes clusters with multiple nodes, utilizing various network configurations (e.g., Calico, Flannel).

- Using Kubernetes deployments, stateful sets, and daemon sets for managing application state and availability.

- Leveraging Kubernetes services and ingress controllers for service discovery and external access.

- Implementing robust monitoring and logging strategies using tools such as Prometheus and Grafana.

- Automating deployments and rollouts using CI/CD pipelines integrated with Kubernetes.

Apache Kafka: My work with Kafka has focused on building high-throughput, real-time data streaming pipelines. Specifically:

- Designing and implementing Kafka topics, partitions, and consumer groups for efficient data handling.

- Using Kafka Streams or other stream processing frameworks (e.g., Apache Flink) to perform real-time data transformations and aggregations.

- Integrating Kafka with various data sources and sinks (e.g., databases, message queues).

- Managing Kafka clusters, ensuring scalability and high availability.

- Troubleshooting and optimizing Kafka performance based on monitoring data.

In one project, we used Kubernetes to manage a Kafka cluster and the stream processing applications that consumed data from it. This allowed us to automate deployment, scaling, and upgrades, significantly improving operational efficiency and resilience.

Key Topics to Learn for Distributed System Architecture Interview

- Consistency and Fault Tolerance: Explore different consistency models (e.g., strong, eventual) and techniques to ensure data consistency and system resilience in the face of failures. Consider practical applications like distributed databases and leader election algorithms.

- Data Partitioning and Replication: Understand various strategies for partitioning data across multiple nodes (e.g., range partitioning, hash partitioning) and the benefits and trade-offs of different replication techniques (e.g., master-slave, multi-master).

- Distributed Consensus and Agreement: Grasp the complexities of achieving consensus in a distributed environment. Familiarize yourself with algorithms like Paxos and Raft, understanding their strengths and weaknesses in practical scenarios.

- Microservices Architecture: Learn about designing, building, and deploying applications as a collection of small, independent services. Explore service discovery, inter-service communication (e.g., REST, gRPC), and API gateways.

- Message Queues and Stream Processing: Understand the role of message queues (e.g., Kafka, RabbitMQ) in asynchronous communication and distributed systems. Explore stream processing frameworks (e.g., Apache Flink, Kafka Streams) and their applications in real-time data processing.

- Distributed Transaction Management: Explore techniques for managing transactions that span multiple nodes or services, considering concepts like two-phase commit and sagas.

- Security in Distributed Systems: Understand security considerations unique to distributed systems, including authentication, authorization, and data encryption. Explore common security vulnerabilities and mitigation strategies.

- Performance Optimization and Monitoring: Learn techniques for optimizing the performance of distributed systems, including load balancing, caching, and monitoring tools for identifying bottlenecks and issues.

Next Steps

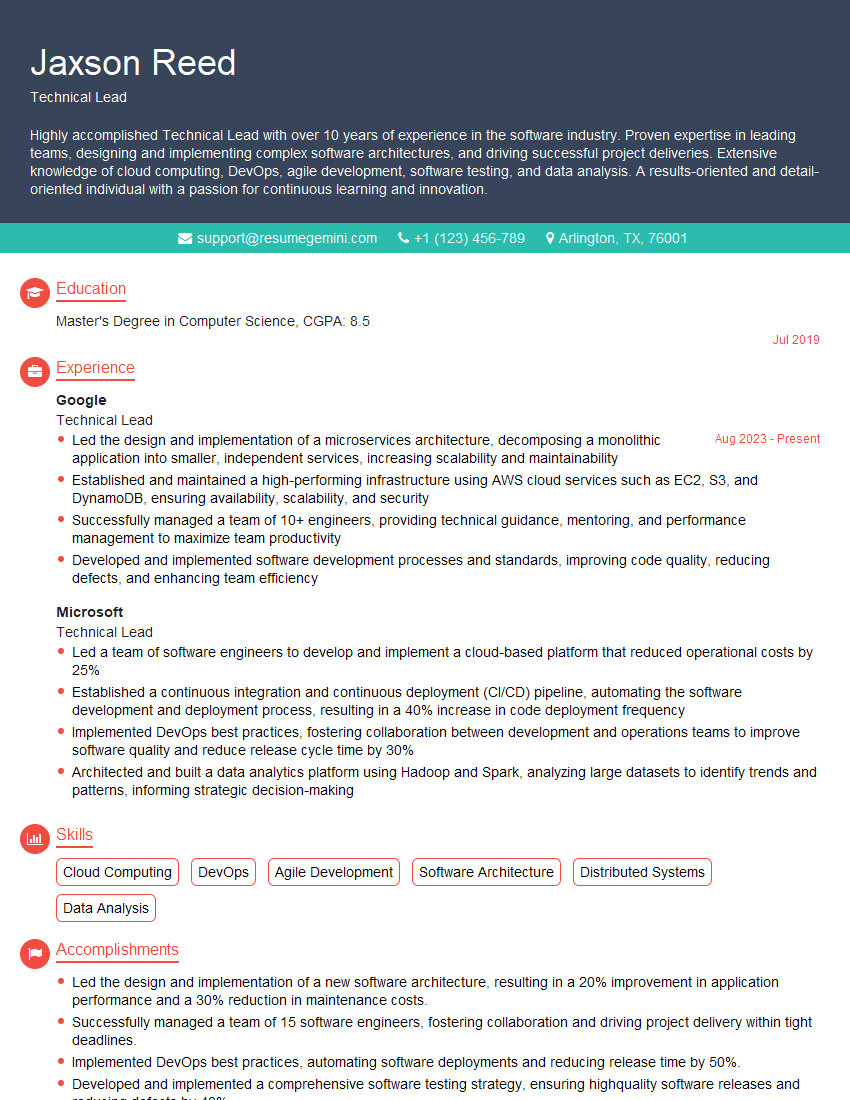

Mastering Distributed System Architecture is crucial for career advancement in today’s technology landscape. It opens doors to high-demand roles with significant impact. To maximize your job prospects, crafting a compelling and ATS-friendly resume is essential. ResumeGemini can significantly enhance your resume-building experience, helping you create a professional document that showcases your skills effectively. ResumeGemini offers examples of resumes tailored to Distributed System Architecture roles, providing valuable templates and guidance to help you stand out from the competition.
