Interviews are opportunities to demonstrate your expertise, and this guide is here to help you shine. Explore the essential Reference Frame Implementation interview questions that employers frequently ask, paired with strategies for crafting responses that set you apart from the competition.

Questions Asked in Reference Frame Implementation Interview

Q 1. Explain the concept of a reference frame and its importance in robotics.

Imagine you’re describing the location of a treasure chest. You wouldn’t just say “it’s over there!” You’d need a reference point, like a specific tree or a landmark. A reference frame in robotics serves the same purpose. It’s a coordinate system that defines a location and orientation in space. Without a reference frame, we can’t accurately describe the position and orientation of a robot or any object within its environment. It’s fundamental to robot navigation, manipulation, and control – think of it as the robot’s map of the world.

For example, a robot arm needs to know its own position relative to the base and the position of an object it’s meant to grasp. This is all defined within different reference frames that allow the robot’s controller to accurately calculate the required movements.

Q 2. Describe different types of reference frames used in robotics (e.g., world, base, camera).

Robotics utilizes several key reference frames:

- World Frame: This is the global, fixed coordinate system. It’s the ultimate reference point – imagine it as the map of the entire workspace.

- Base Frame: Attached to the robot’s base or foundation. For a fixed-base manipulator this frame is stationary relative to the world frame; for a mobile robot it moves with the base.

- End-Effector Frame: Located at the tip of the robot arm (the end-effector), such as a gripper or tool. This frame moves with the end-effector, reflecting its position and orientation.

- Camera Frame: Fixed to a camera, allowing the camera’s viewpoint to be incorporated into the robot’s coordinate system. This is crucial for vision-based tasks.

- Joint Frames: Each joint in a robotic arm has its own frame, describing its position and orientation relative to the previous joint.

These frames help to break down complex robot movements into smaller, more manageable transformations between adjacent frames.

Q 3. How do you perform a coordinate transformation between different reference frames?

Coordinate transformations involve converting the coordinates of a point from one reference frame to another. This is done using a transformation matrix that encapsulates both rotation and translation. Let’s say we have a point P in frame A (coordinates P_A) and we want to find its coordinates P_B in frame B. We would use the transformation matrix B_T_A (the transformation that maps coordinates in frame A to coordinates in frame B) to calculate: P_B = B_T_A * P_A.

This involves combining rotations and translations to account for the differences in position and orientation between the two frames. The specific calculations depend on the type of transformation matrix used (e.g., homogeneous transformation).
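As a minimal sketch of the calculation above, the snippet below applies a rotation and a translation to a point using only the Python standard library. The frame layout (frame A rotated 90 degrees about z and offset 1 m along x relative to frame B) and the names `transform_point`, `R_BA`, and `t_BA` are assumptions chosen for illustration.

```python
import math

def transform_point(R, t, p):
    """Return R * p + t for a 3x3 rotation R (list of rows), translation t, and point p."""
    return [sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)]

# Hypothetical setup: frame A is rotated 90 degrees about z and its origin
# sits 1 m along x when both are expressed in frame B.
theta = math.pi / 2
R_BA = [[math.cos(theta), -math.sin(theta), 0.0],
        [math.sin(theta),  math.cos(theta), 0.0],
        [0.0,              0.0,             1.0]]
t_BA = [1.0, 0.0, 0.0]

p_A = [1.0, 0.0, 0.0]                      # point expressed in frame A
p_B = transform_point(R_BA, t_BA, p_A)     # same point expressed in frame B
print([round(v, 6) for v in p_B])
```

Here the rotation is applied first and the translation second, which is the same ordering a homogeneous transformation encodes.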

Q 4. What are homogeneous transformations, and how are they used in reference frame transformations?

Homogeneous transformations are a powerful mathematical tool for representing both rotation and translation in a single 4×4 matrix. This elegantly combines the rotation matrix (3×3) and the translation vector (3×1) into a compact representation. They’re essential because they allow us to efficiently chain multiple transformations together. For instance, to get the end-effector’s position in the world frame, you’d multiply the homogeneous transformations of each joint sequentially.

Example (simplified):

World_T_EndEffector = World_T_Base * Base_T_Joint1 * Joint1_T_Joint2 * ... * JointN_T_EndEffector

This eliminates the need for separate rotation and translation calculations, simplifying the process significantly.
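The chaining idea can be sketched with plain 4×4 lists. This is an illustrative example, not a library API: `make_transform`, `matmul`, and `invert_transform` are hypothetical helpers, and the rotation is restricted to yaw about z to keep the code short.

```python
import math

def make_transform(yaw, tx, ty, tz):
    """4x4 homogeneous transform: rotation by `yaw` about z plus a translation."""
    c, s = math.cos(yaw), math.sin(yaw)
    return [[c,  -s,  0.0, tx],
            [s,   c,  0.0, ty],
            [0.0, 0.0, 1.0, tz],
            [0.0, 0.0, 0.0, 1.0]]

def matmul(A, B):
    """Multiply two 4x4 matrices stored as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def invert_transform(T):
    """Invert a rigid transform using R^T and -R^T t instead of a general inverse."""
    Rt = [[T[j][i] for j in range(3)] for i in range(3)]
    t = [T[i][3] for i in range(3)]
    ti = [-sum(Rt[i][j] * t[j] for j in range(3)) for i in range(3)]
    return [Rt[0] + [ti[0]], Rt[1] + [ti[1]], Rt[2] + [ti[2]],
            [0.0, 0.0, 0.0, 1.0]]

# Assumed example values: base rotated 90 degrees in the world, tool offset from the base.
world_T_base = make_transform(math.pi / 2, 1.0, 0.0, 0.0)
base_T_tool = make_transform(0.0, 0.5, 0.0, 0.2)

world_T_tool = matmul(world_T_base, base_T_tool)        # chain the two transforms
tool_position = [world_T_tool[i][3] for i in range(3)]  # last column holds the position
print([round(v, 6) for v in tool_position])
```

Because the rotation block of a rigid transform is orthogonal, the inverse only needs a transpose and one matrix-vector product.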

Q 5. Explain the role of rotation matrices in reference frame transformations.

Rotation matrices are 3×3 orthogonal matrices with determinant +1 that describe rotations in three-dimensional space. The elementary rotation matrices each represent a rotation about a single axis (x, y, or z) by a given angle, and a general rotation can be built by multiplying them together. In reference frame transformations, they’re used to represent the orientation of one frame relative to another. They’re a fundamental component of homogeneous transformations, providing the rotational part of the overall transformation.

For example, a rotation matrix can tell us how much the end-effector frame is rotated around each axis compared to the base frame. These matrices are then incorporated into the larger homogeneous transformation matrix for complete coordinate transformation.
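A short sketch of two elementary rotations and their composition, with a check of the properties every valid rotation matrix should satisfy (R * R^T = I and determinant +1). The helper names and the 30 and 45 degree angles are assumptions for illustration.

```python
import math

def rot_x(angle):
    """Elementary rotation about the x axis."""
    c, s = math.cos(angle), math.sin(angle)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]

def rot_z(angle):
    """Elementary rotation about the z axis."""
    c, s = math.cos(angle), math.sin(angle)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def matmul3(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def transpose3(A):
    return [[A[j][i] for j in range(3)] for i in range(3)]

def det3(A):
    return (A[0][0] * (A[1][1] * A[2][2] - A[1][2] * A[2][1])
            - A[0][1] * (A[1][0] * A[2][2] - A[1][2] * A[2][0])
            + A[0][2] * (A[1][0] * A[2][1] - A[1][1] * A[2][0]))

# Compose a rotation about z with a rotation about x (order matters).
R = matmul3(rot_z(math.radians(30)), rot_x(math.radians(45)))

# Sanity checks for a valid rotation matrix.
should_be_identity = matmul3(R, transpose3(R))
determinant = det3(R)
```

Checks like these are cheap to run in unit tests and catch matrices that have drifted away from orthogonality after many multiplications.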

Q 6. How do you represent orientation using quaternions or Euler angles?

Orientation can be represented in several ways:

- Euler Angles: These represent the orientation using three angles representing rotations around the x, y, and z axes (in a specific order, like roll, pitch, yaw). They’re intuitive but suffer from gimbal lock.

- Quaternions: Quaternions are a more robust method. A unit quaternion represents the orientation using four numbers (a scalar part and a three-component vector part). They avoid gimbal lock and provide smooth interpolation between orientations. They are less intuitive mathematically, but composing and interpolating rotations with them is computationally efficient.

The choice depends on the specific application. Euler angles are easier to visualize but have limitations; quaternions are more robust but less intuitive.
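To connect the two representations, here is a hedged sketch that converts roll, pitch, and yaw into a unit quaternion and uses it to rotate a vector. It assumes the common ZYX (yaw, then pitch, then roll) convention and a (w, x, y, z) component order; other conventions need different formulas. The function names are illustrative.

```python
import math

def euler_to_quaternion(roll, pitch, yaw):
    """Convert ZYX Euler angles (radians) to a unit quaternion (w, x, y, z)."""
    cr, sr = math.cos(roll / 2), math.sin(roll / 2)
    cp, sp = math.cos(pitch / 2), math.sin(pitch / 2)
    cy, sy = math.cos(yaw / 2), math.sin(yaw / 2)
    return (cr * cp * cy + sr * sp * sy,
            sr * cp * cy - cr * sp * sy,
            cr * sp * cy + sr * cp * sy,
            cr * cp * sy - sr * sp * cy)

def quat_multiply(a, b):
    """Hamilton product of two quaternions given as (w, x, y, z)."""
    w1, x1, y1, z1 = a
    w2, x2, y2, z2 = b
    return (w1 * w2 - x1 * x2 - y1 * y2 - z1 * z2,
            w1 * x2 + x1 * w2 + y1 * z2 - z1 * y2,
            w1 * y2 - x1 * z2 + y1 * w2 + z1 * x2,
            w1 * z2 + x1 * y2 - y1 * x2 + z1 * w2)

def rotate_vector(q, v):
    """Rotate a 3D vector v by unit quaternion q using q * v * conj(q)."""
    q_conj = (q[0], -q[1], -q[2], -q[3])
    _, x, y, z = quat_multiply(quat_multiply(q, (0.0, v[0], v[1], v[2])), q_conj)
    return (x, y, z)

q_yaw90 = euler_to_quaternion(0.0, 0.0, math.pi / 2)
rotated = rotate_vector(q_yaw90, (1.0, 0.0, 0.0))   # x axis turned 90 degrees about z
```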

Q 7. What are the advantages and disadvantages of using quaternions vs. Euler angles?

Euler Angles:

- Advantages: Intuitive, easy to understand and visualize.

- Disadvantages: Suffer from gimbal lock (loss of one degree of freedom), can lead to numerical instability, and interpolation isn’t always smooth.

Quaternions:

- Advantages: Avoid gimbal lock, compact representation, smooth interpolation, computationally efficient for many operations.

- Disadvantages: Less intuitive to understand and visualize than Euler angles; require more complex mathematical operations.

In summary, while Euler angles are simple to grasp, quaternions are generally preferred in robotics due to their robustness and efficiency in handling complex orientations, especially in applications requiring smooth and continuous movement.
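The smooth interpolation mentioned above is usually done with spherical linear interpolation (slerp). The following is a minimal sketch under the assumption that both inputs are unit quaternions in (w, x, y, z) order; the 0.9995 threshold for falling back to linear interpolation is a conventional but arbitrary choice.

```python
import math

def slerp(q0, q1, u):
    """Interpolate between unit quaternions q0 and q1 for u in [0, 1]."""
    dot = sum(a * b for a, b in zip(q0, q1))
    if dot < 0.0:
        # q and -q encode the same orientation; flip to take the shorter path.
        q1 = [-c for c in q1]
        dot = -dot
    if dot > 0.9995:
        # Nearly identical orientations: linear interpolation avoids dividing by ~0.
        out = [a + u * (b - a) for a, b in zip(q0, q1)]
    else:
        theta = math.acos(dot)
        s0 = math.sin((1.0 - u) * theta) / math.sin(theta)
        s1 = math.sin(u * theta) / math.sin(theta)
        out = [s0 * a + s1 * b for a, b in zip(q0, q1)]
    norm = math.sqrt(sum(c * c for c in out))
    return [c / norm for c in out]

identity = [1.0, 0.0, 0.0, 0.0]
yaw_90 = [math.cos(math.pi / 4), 0.0, 0.0, math.sin(math.pi / 4)]
halfway = slerp(identity, yaw_90, 0.5)   # expected to be a 45 degree yaw
```

Interpolating the three Euler angles independently would not, in general, follow this constant-rate shortest path between the two orientations.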

Q 8. Describe the process of calibrating sensors to a common reference frame.

Calibrating sensors to a common reference frame is crucial for accurate data fusion and reliable system operation. It involves determining the transformation – rotation and translation – between each sensor’s individual coordinate system and a globally defined reference frame. This process typically involves a series of steps:

Choosing a Reference Frame: First, define a common reference frame. This could be the world coordinate system, a robot base frame, or another convenient point. This frame serves as the anchor for all other sensor data.

Sensor Placement and Mounting: Precisely mount each sensor and carefully record its initial position and orientation relative to its immediate surroundings. This information will later be used to calculate transformations.

Calibration Procedure: Execute a specific calibration procedure depending on the sensor type. This could involve moving a known target within the sensor’s field of view (for cameras or laser scanners), or using a precisely positioned calibration artifact (for IMUs).

Data Acquisition: Collect sensor data during the calibration procedure. This data contains information about the sensor’s measurements and its position relative to the calibration target.

Transformation Estimation: Use algorithms (such as least-squares optimization or iterative closest point (ICP)) to estimate the transformation parameters (rotation and translation) between the sensor’s local coordinate frame and the chosen reference frame. This frequently involves solving a system of equations based on the known positions and sensor measurements.

Verification and Refinement: Validate the accuracy of the estimated transformations using various methods, including visual inspection, residual analysis, and repeating the calibration procedure. Iteratively refine the transformation parameters until satisfactory accuracy is achieved.

Example: Imagine calibrating a camera and a lidar mounted on a robot arm. You’d move a checkerboard pattern (calibration target) through the field of view of both sensors. By comparing the known positions of the checkerboard points and the sensor measurements, you can determine the transformation matrices aligning the camera and lidar frames to the robot’s base frame (the reference frame).
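The transformation estimation step can be illustrated in two dimensions, where the least-squares rigid alignment has a closed form. This is a simplified sketch: real camera or lidar calibration works in 3D and typically relies on a library solver. The function `fit_rigid_2d` and the sample points are assumptions, and the point correspondences are taken as already known.

```python
import math

def fit_rigid_2d(sensor_pts, reference_pts):
    """Least-squares rotation angle and translation mapping sensor_pts onto reference_pts."""
    n = len(sensor_pts)
    sx = sum(p[0] for p in sensor_pts) / n
    sy = sum(p[1] for p in sensor_pts) / n
    rx = sum(q[0] for q in reference_pts) / n
    ry = sum(q[1] for q in reference_pts) / n
    num = den = 0.0
    for (px, py), (qx, qy) in zip(sensor_pts, reference_pts):
        ax, ay = px - sx, py - sy          # centered sensor point
        bx, by = qx - rx, qy - ry          # centered reference point
        num += ax * by - ay * bx
        den += ax * bx + ay * by
    theta = math.atan2(num, den)
    c, s = math.cos(theta), math.sin(theta)
    # Translation that maps the rotated sensor centroid onto the reference centroid.
    return theta, (rx - (c * sx - s * sy), ry - (s * sx + c * sy))

# Synthetic check: generate reference points from a known transform, then recover it.
true_theta, true_t = 0.3, (1.0, -2.0)
sensor = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (2.0, 1.0)]
c, s = math.cos(true_theta), math.sin(true_theta)
reference = [(c * x - s * y + true_t[0], s * x + c * y + true_t[1]) for x, y in sensor]

est_theta, est_t = fit_rigid_2d(sensor, reference)
```

With noisy measurements the recovered values would only approximate the true ones, which is why the verification step compares residuals after fitting.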

Q 9. How do you handle sensor noise and uncertainty in reference frame transformations?

Sensor noise and uncertainty are inherent in any measurement system and significantly impact reference frame transformations. Several strategies help mitigate these issues:

Filtering: Applying filters, such as Kalman filters (discussed further in Q 11), smooths noisy sensor data by combining current measurements with predictions based on a model of the system’s dynamics. This reduces the influence of random noise on the transformation estimates.

Data Fusion: Combining data from multiple sensors, as in sensor fusion (Q 10), provides redundancy and helps to reduce uncertainty. If one sensor is noisy, the others can compensate.

Statistical Methods: Employing statistical methods, such as weighted averaging or covariance analysis, allows for a more robust estimation of transformations by considering the uncertainty associated with each sensor measurement.

Robust Estimation Techniques: Utilizing robust estimation techniques, like RANSAC (Random Sample Consensus), helps to identify and reject outliers in the sensor data, which are often caused by noise or errors. This is especially crucial when dealing with large datasets.

Calibration Refinement: Continuous or periodic recalibration helps account for sensor drift and changes in the environment, reducing long-term uncertainty.

In practice, a combination of these techniques is usually employed to effectively handle sensor noise and uncertainty in reference frame transformations. For instance, a Kalman filter could be used to fuse data from multiple sensors, while RANSAC can be used to identify and remove outlier measurements before applying the filter.
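As one concrete instance of the statistical methods listed above, the sketch below fuses independent measurements of the same quantity by inverse-variance weighting. The function name and the example numbers are assumptions; the method itself presumes the measurement errors are independent and unbiased.

```python
def fuse_inverse_variance(measurements):
    """Fuse (value, variance) pairs; return the weighted mean and its variance."""
    weights = [1.0 / var for _, var in measurements]
    total = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, measurements)) / total
    fused_variance = 1.0 / total
    return fused_value, fused_variance

# Hypothetical range readings of the same target from two sensors (metres, metres^2).
readings = [(10.0, 1.0),   # more precise sensor
            (12.0, 4.0)]   # noisier sensor
fused_value, fused_variance = fuse_inverse_variance(readings)
print(fused_value, fused_variance)
```

The fused variance is smaller than either input variance, which is the quantitative sense in which combining sensors reduces uncertainty.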

Q 10. Explain the concept of sensor fusion and its relevance to reference frames.

Sensor fusion is the process of combining data from multiple sensors to obtain a more accurate, reliable, and complete understanding of the environment. Its relevance to reference frames is paramount because it allows us to create a more robust and accurate representation of the world by integrating information from different perspectives and modalities.

For example, imagine using a camera, lidar, and IMU on a self-driving car. The camera provides visual information, the lidar provides distance measurements, and the IMU provides inertial information (orientation and acceleration). By fusing these sources within a common reference frame, the car obtains a significantly more accurate and complete understanding of its surroundings than it would from any single sensor alone.

In a reference frame context, sensor fusion helps to:

Improve Accuracy: Combining data from multiple sensors can reduce noise and uncertainty, leading to more accurate estimates of position, orientation, and other parameters.

Increase Reliability: Sensor fusion provides redundancy. If one sensor fails, others can compensate, ensuring continuous and robust operation.

Enhance Completeness: Different sensors provide complementary information. Sensor fusion enables a more complete picture of the environment by integrating data from various sources.

The key is to transform all sensor data into a common reference frame before fusion, ensuring consistency and enabling meaningful combination of information.

Q 11. Describe common algorithms used for sensor fusion (e.g., Kalman filter, Extended Kalman filter).

Several algorithms are commonly used for sensor fusion, each with its own strengths and weaknesses. Two prominent examples are the Kalman filter and the Extended Kalman filter:

Kalman Filter: The Kalman filter is a powerful algorithm for estimating the state of a dynamic system from a series of noisy measurements. It’s particularly well-suited for linear systems and Gaussian noise. It works by recursively updating a state estimate based on a prediction and a measurement update. The prediction step uses a dynamic model of the system, and the measurement update incorporates new sensor readings, weighting them according to their uncertainty. This process continues iteratively, refining the state estimate over time.

Extended Kalman Filter (EKF): The EKF extends the Kalman filter to handle nonlinear systems. It linearizes the nonlinear system dynamics and measurement models using first-order Taylor series expansions around the current state estimate. This approximation allows the application of the standard Kalman filter equations. However, the accuracy of the EKF depends heavily on the validity of the linearization, which can be problematic for highly nonlinear systems.

Example (Conceptual Kalman Filter):

// Simplified Kalman filter update equations
x_predicted = F * x_previous + u; // Prediction
P_predicted = F * P_previous * F' + Q; // Prediction covariance
K = P_predicted * H' * inv(H * P_predicted * H' + R); // Kalman gain
x_updated = x_predicted + K * (z - H * x_predicted); // Update
P_updated = (I - K * H) * P_predicted; // Update covariance
// where:
// x: state vector (e.g., position, velocity)
// P: covariance matrix
// F: state transition matrix
// u: control input
// Q: process noise covariance
// z: measurement vector
// H: measurement matrix
// R: measurement noise covariance
// K: Kalman gain
// I: identity matrix

The choice between a Kalman filter and an EKF (or other more advanced filters like Unscented Kalman Filter) depends on the linearity of the system and the nature of the noise. For highly nonlinear systems, more sophisticated techniques might be necessary.
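To make the predict and update steps concrete, here is a minimal scalar sketch in Python. It follows the same symbols as the conceptual equations (F, H, Q, R, K), but the numeric values, the function name `kalman_step`, and the measurement list are assumptions chosen only for illustration.

```python
def kalman_step(x, P, z, F=1.0, H=1.0, Q=1e-4, R=0.25, u=0.0):
    """One predict/update cycle of a scalar Kalman filter."""
    # Prediction: propagate the state and its variance through the model.
    x_pred = F * x + u
    P_pred = F * P * F + Q
    # Update: blend prediction and measurement according to their uncertainty.
    K = P_pred * H / (H * P_pred * H + R)
    x_new = x_pred + K * (z - H * x_pred)
    P_new = (1.0 - K * H) * P_pred
    return x_new, P_new

# Hypothetical noisy measurements of a roughly constant quantity near 1.0.
measurements = [1.2, 0.9, 1.1, 1.0, 0.8]
x, P = 0.0, 1.0            # deliberately poor initial guess with large variance
for z in measurements:
    x, P = kalman_step(x, P, z)
print(round(x, 3), round(P, 3))
```

The variance P shrinks with every update, so later measurements move the estimate less than early ones.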

Q 12. How do you deal with singularities in reference frame transformations?

Singularities in reference frame transformations arise when a mapping used in the computation becomes ill-conditioned or non-invertible. A rigid-body transformation matrix itself is always invertible, so in practice the problem shows up in related quantities such as a manipulator’s Jacobian or an Euler-angle orientation representation. This typically occurs when certain configurations lead to a loss of degrees of freedom. For instance, in robotic arms, singularities occur when the arm is fully extended or in certain other joint configurations. These singularities can cause numerical instability and lead to inaccurate or undefined results.

Several strategies address singularities:

Redundant Sensors/Actuators: Using more sensors or actuators than strictly necessary provides redundancy and allows for robust estimation even in singular configurations. If one sensor becomes unreliable, others can still provide sufficient data.

Avoidance Strategies: Designing the system or algorithm to avoid singular configurations during operation is a proactive approach. This involves careful trajectory planning for robots to ensure that their configurations stay within well-conditioned areas.

Regularization: Adding a small damping term to the matrix being inverted (as in damped least squares) improves its condition number, making the computation more numerically stable. This regularizes the inversion process and reduces the impact of singularities, at the cost of a small tracking error.

Pseudoinverse: Using the pseudoinverse instead of the standard inverse for matrix calculations provides a solution even when the matrix is singular. This gives a least-squares solution that minimizes the error.

Alternative Parameterizations: Employing alternative representations of orientation, such as quaternions instead of Euler angles, can reduce or eliminate certain types of singularities.

The best strategy depends on the specific application and the nature of the singularities. A combination of techniques is often most effective.
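A small sketch of one such strategy, a damped least-squares inverse, is shown here on the Jacobian of a planar two-link arm. The arm model, link lengths, damping value, and function names are assumptions for illustration. At the fully extended configuration the Jacobian determinant is zero, yet the damped solution stays finite.

```python
import math

def jacobian(q1, q2, l1=1.0, l2=1.0):
    """Position Jacobian of a planar two-link arm with joint angles q1, q2."""
    s1, c1 = math.sin(q1), math.cos(q1)
    s12, c12 = math.sin(q1 + q2), math.cos(q1 + q2)
    return [[-l1 * s1 - l2 * s12, -l2 * s12],
            [ l1 * c1 + l2 * c12,  l2 * c12]]

def damped_least_squares(J, dx, damping=0.1):
    """Solve dq = J^T (J J^T + damping^2 I)^-1 dx for a 2x2 Jacobian."""
    a = J[0][0] ** 2 + J[0][1] ** 2 + damping ** 2
    b = J[0][0] * J[1][0] + J[0][1] * J[1][1]
    d = J[1][0] ** 2 + J[1][1] ** 2 + damping ** 2
    det = a * d - b * b                    # strictly positive whenever damping > 0
    y0 = (d * dx[0] - b * dx[1]) / det
    y1 = (-b * dx[0] + a * dx[1]) / det
    return [J[0][0] * y0 + J[1][0] * y1,   # multiply by J^T
            J[0][1] * y0 + J[1][1] * y1]

J = jacobian(0.0, 0.0)                     # arm fully extended along x: singular
plain_det = J[0][0] * J[1][1] - J[0][1] * J[1][0]
dq = damped_least_squares(J, [0.0, 0.1])   # small end-effector step along y
```

A larger damping value gives smoother joint motion near the singularity but a larger deviation from the requested end-effector step.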

Q 13. What are the challenges of real-time reference frame transformations in robotics?

Real-time reference frame transformations in robotics present several challenges:

Computational Cost: Transformations, especially those involving complex sensor fusion algorithms, can be computationally expensive. Real-time processing demands efficient algorithms and hardware acceleration to meet stringent timing constraints.

Latency: Delays in processing can lead to inaccurate control actions and instability. Minimizing latency is critical for smooth and responsive robot operation.

Synchronization: Precise synchronization between different sensors is crucial for accurate fusion. Asynchronous data streams can introduce errors and inconsistencies in reference frame transformations.

Dynamic Environments: Changes in the environment, such as object movement or robot relocation, require continuous updating of reference frames. Adapting to these changes rapidly and reliably is challenging.

Sensor Drift and Noise: Sensor noise and drift, as discussed earlier, can accumulate over time, requiring robust filtering and calibration strategies to maintain accuracy.

Addressing these challenges typically involves selecting efficient algorithms, employing specialized hardware (e.g., GPUs, FPGAs), implementing sophisticated error detection and correction mechanisms, and designing robust control strategies capable of handling uncertain information.

Q 14. How do you ensure the accuracy and consistency of reference frames in a dynamic environment?

Ensuring accuracy and consistency of reference frames in dynamic environments requires a multi-faceted approach:

Continuous Calibration: Implementing strategies for continuous or periodic calibration using onboard sensors or external references helps to track and compensate for drift and changes in sensor alignment.

Loop Closure Detection: In scenarios involving mobile robots or autonomous vehicles, loop closure detection algorithms identify when the robot returns to previously visited locations, enabling the creation of a consistent map and reference frame. This is crucial for long-term navigation.

Robust Sensor Fusion: Employing robust sensor fusion techniques as described previously is vital for handling noisy and inconsistent data in dynamic environments.

External Referencing: Using external positioning systems, such as GPS or motion capture systems, provides additional information that can be integrated to improve the accuracy and consistency of the reference frames.

Error Detection and Recovery: Implementing robust error detection mechanisms is crucial. Upon detecting significant errors or inconsistencies, appropriate recovery strategies should be implemented to maintain system stability and accuracy. This could involve re-calibration, sensor re-initialization, or switching to a backup sensor.

The specific methods chosen depend on the application. For example, a self-driving car might use a combination of GPS, IMU, lidar, and cameras, continuously fusing data and recalibrating to maintain a stable and accurate reference frame, while a robotic arm in a controlled environment might rely on less complex calibration strategies.

Q 15. Explain the concept of extrinsic and intrinsic calibration parameters.

Imagine you have a camera and a LiDAR mounted on a robot. Extrinsic and intrinsic calibration parameters define how these sensors are related to each other and to the world. Intrinsic parameters describe the internal characteristics of a single sensor. For a camera, this includes focal length, principal point, and lens distortion coefficients. For a LiDAR, it might include parameters related to its laser beam divergence and range accuracy. These parameters are usually determined through factory calibration or a dedicated self-calibration process.

Extrinsic parameters, on the other hand, describe the spatial relationship between different sensors. Specifically, they define the transformation (rotation and translation) needed to convert coordinates from one sensor’s reference frame to another. For our robot example, extrinsic calibration would determine the precise position and orientation of the camera relative to the LiDAR. This transformation is often represented as a 4×4 homogeneous transformation matrix.

Think of it like this: intrinsic parameters are like the individual specifications of each instrument in an orchestra (e.g., the size and material of a violin), while extrinsic parameters describe the arrangement of the instruments on the stage (e.g., the position of the violin section relative to the brass section).
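The two parameter sets can be seen working together when a LiDAR point is projected into a camera image: the extrinsics move the point into the camera frame, then the intrinsics map it to a pixel. This sketch assumes an ideal pinhole camera with no lens distortion and a LiDAR frame whose axes already follow the camera convention (z forward). All numeric values and the function name `lidar_to_pixel` are hypothetical.

```python
def lidar_to_pixel(p_lidar, R_cam_lidar, t_cam_lidar, fx, fy, cx, cy):
    """Project a LiDAR-frame point to pixel coordinates, or None if behind the camera."""
    # Extrinsic step: express the point in the camera frame.
    x, y, z = [sum(R_cam_lidar[i][j] * p_lidar[j] for j in range(3)) + t_cam_lidar[i]
               for i in range(3)]
    if z <= 0.0:
        return None
    # Intrinsic step: pinhole projection using focal lengths and principal point.
    return (fx * x / z + cx, fy * y / z + cy)

# Assumed calibration values for illustration only.
R_cam_lidar = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
t_cam_lidar = [0.1, 0.0, 0.0]              # LiDAR origin 10 cm along the camera x axis
pixel = lidar_to_pixel([0.4, 0.0, 5.0], R_cam_lidar, t_cam_lidar,
                       fx=600.0, fy=600.0, cx=320.0, cy=240.0)
print(pixel)
```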

Q 16. How do you perform extrinsic calibration of a camera and a LiDAR sensor?

Extrinsic calibration of a camera and LiDAR involves finding the transformation matrix that aligns their coordinate systems. A common approach is to use a calibration target with known geometry (e.g., a checkerboard or a planar target with precisely marked points). The process generally involves these steps:

- Data Acquisition: Capture images from the camera and point cloud data from the LiDAR simultaneously while observing the calibration target from different viewpoints.

- Feature Extraction: Detect and extract corresponding features in both the camera images and the LiDAR point cloud. For the camera, this might involve corner detection on the checkerboard. For the LiDAR, it could be identifying points on the plane of the target.

- Correspondence Establishment: Match the features detected in the camera and LiDAR data. This is a crucial step, and robust algorithms are needed to handle noise and outliers.

- Optimization: Use an optimization algorithm (e.g., Levenberg-Marquardt) to minimize the error between the observed and expected positions of the features. This optimization process estimates the six extrinsic parameters (three for translation and three for rotation) that define the transformation between the camera and LiDAR frames.

Software libraries like OpenCV and PCL provide tools to facilitate these steps. Once the optimization is complete, the resulting transformation matrix can be used to convert points from one sensor’s frame to the other.

//Example Transformation (Conceptual):
lidar_point_in_camera_frame = T_camera_lidar * lidar_point_in_lidar_frame;

Q 17. Discuss the importance of timestamp synchronization in multi-sensor reference frame transformations.

Accurate timestamp synchronization is paramount in multi-sensor systems. Without it, reference frame transformations will be inaccurate and unreliable. Imagine trying to combine data from a camera that captured an image at 10:00:00.001 and a LiDAR scan at 10:00:00.010. The slight time difference, even in milliseconds, can lead to significant errors in the fused data, especially when dealing with moving objects or dynamic environments.

Inaccurate timestamps can result in ghosting effects (objects appearing in the wrong place), misalignment, and incorrect depth estimations. Effective synchronization techniques include hardware synchronization using a shared clock or software synchronization using precise time stamping and interpolation. Careful consideration needs to be given to clock drift and latency in the different sensors.
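Software synchronization by interpolation can be sketched as follows: given two timestamped poses, estimate the pose at the time another sensor took its measurement. The planar (x, y, yaw) pose, the linear motion assumption between samples, and the 20 m/s example speed are all assumptions for illustration.

```python
import math

def interpolate_pose(t0, pose0, t1, pose1, t_query):
    """Linearly interpolate an (x, y, yaw) pose between two timestamps."""
    u = (t_query - t0) / (t1 - t0)
    x = pose0[0] + u * (pose1[0] - pose0[0])
    y = pose0[1] + u * (pose1[1] - pose0[1])
    # Wrap the yaw difference to (-pi, pi] so the interpolation takes the short way round.
    dyaw = math.atan2(math.sin(pose1[2] - pose0[2]), math.cos(pose1[2] - pose0[2]))
    return (x, y, pose0[2] + u * dyaw)

# Hypothetical vehicle moving at 20 m/s along x, with poses logged 100 ms apart.
pose_a = (0.0, 0.0, 0.0)    # at t = 0.000 s
pose_b = (2.0, 0.0, 0.2)    # at t = 0.100 s
# Pose at a measurement taken 9 ms after the first sample.
pose_at_scan = interpolate_pose(0.000, pose_a, 0.100, pose_b, 0.009)
print(tuple(round(v, 4) for v in pose_at_scan))
```

In this example the vehicle travels about 0.18 m during the 9 ms offset, which is the size of the misalignment that would appear if the offset were ignored.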

Q 18. What are common error sources in reference frame implementation?

Numerous error sources can affect reference frame implementations. These include:

- Sensor Noise and Inaccuracies: Imperfect sensors introduce noise and inaccuracies in measurements.

- Calibration Errors: Inaccurate intrinsic and extrinsic calibration parameters.

- Timestamp Synchronization Errors: Asynchronicity between sensors.

- Environmental Factors: Temperature changes can affect sensor performance.

- Numerical Errors: Round-off errors during calculations.

- Transformation Errors: Errors arising from applying the transformation matrix.

Addressing these error sources requires careful sensor selection, rigorous calibration procedures, robust synchronization strategies, and consideration of environmental factors.

Q 19. How do you debug and troubleshoot issues related to reference frame transformations?

Debugging reference frame issues is systematic. Here’s a suggested approach:

- Visual Inspection: Use visualization tools to plot the sensor data in their respective coordinate frames and the transformed data. Look for inconsistencies or unexpected patterns.

- Data Validation: Check for unrealistic values or outliers in the sensor data. Apply data filtering techniques if necessary.

- Calibration Verification: Re-evaluate the accuracy of the intrinsic and extrinsic calibration parameters. Consider recalibrating the sensors.

- Timestamp Analysis: Examine the timestamps to ensure proper synchronization. Check for inconsistencies or delays.

- Incremental Debugging: Break down the transformation process into smaller steps and check the output of each step. This helps isolate the source of error.

- Simulation: Use a simulation environment to test the reference frame implementation with known data, allowing you to isolate and troubleshoot individual components.

Systematic logging of data, intermediate steps and results throughout the processing chain will prove invaluable during debugging.

Q 20. Explain how you would handle coordinate transformations in a multi-robot system.

Handling coordinate transformations in a multi-robot system requires establishing a common global reference frame. Each robot will have its own local coordinate frame. Transformations between local frames and the global frame, and between local frames of different robots, are essential. This often involves:

- Global Positioning System (GPS): Use GPS to obtain the initial global position of each robot.

- Simultaneous Localization and Mapping (SLAM): Employ SLAM techniques to build a map and track each robot’s pose in the map.

- Transformation Matrices: Represent the transformations between different reference frames using homogeneous transformation matrices.

- Data Fusion: Combine data from different robots using appropriate data fusion techniques.

The complexity increases significantly with the number of robots, the dynamic nature of the environment, and the communication latency. Careful consideration of the chosen approach and robust algorithms are crucial.
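A minimal planar sketch of sharing an observation between two robots through the global frame. It assumes each robot already knows its own (x, y, heading) pose in that frame, for example from SLAM; the pose values and the helper names `to_world` and `to_local` are hypothetical.

```python
import math

def to_world(pose, p_local):
    """Express a point given in a robot's local frame in the global frame."""
    x, y, th = pose
    c, s = math.cos(th), math.sin(th)
    return (x + c * p_local[0] - s * p_local[1],
            y + s * p_local[0] + c * p_local[1])

def to_local(pose, p_world):
    """Express a global-frame point in a robot's local frame (inverse of to_world)."""
    x, y, th = pose
    c, s = math.cos(th), math.sin(th)
    dx, dy = p_world[0] - x, p_world[1] - y
    return (c * dx + s * dy, -s * dx + c * dy)

robot_a = (1.0, 1.0, math.pi)        # at (1, 1), facing the negative x direction
robot_b = (2.0, 0.0, math.pi / 2)    # at (2, 0), facing the positive y direction

# Robot B sees an object 1 m straight ahead; relay it to robot A via the global frame.
object_world = to_world(robot_b, (1.0, 0.0))
object_in_a = to_local(robot_a, object_world)
print(tuple(round(v, 6) for v in object_in_a))
```

Routing every exchange through the global frame keeps the number of maintained transforms linear in the number of robots, rather than one per robot pair.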

Q 21. Describe your experience with different software libraries or tools used for reference frame transformations (e.g., ROS, Eigen).

I have extensive experience using ROS (Robot Operating System) and Eigen for reference frame transformations. ROS provides a powerful framework for multi-sensor integration, with its coordinate frame transformation capabilities implemented using tf (transform library). I’ve leveraged tf to manage transformations between various sensors (cameras, LiDAR, IMUs) and robot bases within complex robotic systems. This involves publishing and subscribing to transform messages, utilizing tf trees for efficient transformation lookups, and resolving transformations between frames.

Eigen is a powerful C++ library providing efficient linear algebra functionalities, critical for matrix operations associated with rotations and translations. I have utilized Eigen to perform computations involving homogeneous transformation matrices, including matrix multiplication, inversion, and decomposition. Its efficiency and ease of use in C++ development make it ideal for real-time applications. Combining the high-level organizational capabilities of ROS with the computational power of Eigen provides a robust and flexible framework for handling reference frame transformations.

Q 22. How do you validate the accuracy of your reference frame implementation?

Validating a reference frame implementation requires a multi-pronged approach focusing on both internal consistency and external accuracy. Internally, we check for mathematical correctness in our transformation equations. This involves rigorous unit testing, verifying that transformations are invertible (you can go back and forth between frames without loss of information) and that they conform to known geometric properties (e.g., distances remain consistent after a rotation). External validation involves comparing our computed reference frame data to ground truth measurements. For example, in robotics, we might use a high-precision motion capture system to track the robot’s pose and compare it to the pose estimated using our implemented reference frame. Discrepancies indicate potential errors in sensor calibration, transformation algorithms, or even the underlying physics model used.

We often employ statistical methods, such as calculating root mean squared error (RMSE) between our estimated and ground truth values. This provides a quantitative measure of accuracy. Visualization tools are also crucial; plotting the estimated trajectory alongside the ground truth trajectory can reveal systematic biases or outliers in our reference frame calculations. In some cases, we’ll employ redundancy, using multiple sensors or algorithms to cross-validate the reference frame data and improve robustness.
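The RMSE comparison can be sketched in a few lines. The trajectories below are made-up sample data, and `position_rmse` is an illustrative helper that assumes the estimated and ground truth samples are already time-aligned and expressed in the same frame.

```python
import math

def position_rmse(estimated, ground_truth):
    """Root mean squared Euclidean position error between two equal-length trajectories."""
    if len(estimated) != len(ground_truth):
        raise ValueError("trajectories must have the same number of samples")
    squared_errors = [sum((e - g) ** 2 for e, g in zip(pe, pg))
                      for pe, pg in zip(estimated, ground_truth)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))

# Hypothetical 2D positions in metres.
estimated = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
ground_truth = [(0.0, 0.0), (1.0, 0.3), (2.0, 0.4)]
rmse = position_rmse(estimated, ground_truth)
print(round(rmse, 4))
```

A single RMSE value hides where the error occurs, so it is usually read alongside a plot of the per-sample error.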

Q 23. What are the limitations of using a specific type of reference frame?

The choice of reference frame introduces limitations depending on the application. For instance, using a body-fixed frame simplifies the description of internal robot dynamics but complicates the representation of its interaction with the environment. The equations of motion become more complex when expressed in a frame that is accelerating or rotating. Conversely, an inertial frame simplifies external interactions but makes internal dynamics more challenging to model. Furthermore, using a frame that’s poorly aligned with the system’s dominant motion can lead to numerical instability in algorithms, especially when the orientation approaches a singular configuration of the chosen representation (e.g., pitch near ±90° for roll-pitch-yaw Euler angles).

For example, using a body-fixed frame on a highly maneuverable aircraft simplifies representing control surface movements, but describing the aircraft’s position relative to a ground-based target becomes significantly more complex. This complexity impacts algorithm design and potentially increases computation time. Another limitation involves sensor limitations. If a sensor’s measurements are inherently tied to a specific frame, using a different frame might necessitate complex coordinate transformations, possibly introducing noise and errors. Therefore, choosing the right frame is a careful balancing act tailored to the specific application and its computational resources.

Q 24. Explain the difference between inertial and body-fixed reference frames.

The key difference lies in their nature: an inertial reference frame is non-accelerating and non-rotating, providing a fixed point of reference in space. Think of it as a distant star that appears stationary. In contrast, a body-fixed reference frame is attached to a moving body (e.g., a robot or vehicle), meaning it shares the body’s motion. Imagine a coordinate system painted onto the body of a car.

Inertial frames simplify the description of forces and motion because Newton’s laws of motion apply directly. However, obtaining true inertial measurements can be challenging and often requires complex sensor fusion techniques that estimate the effect of acceleration and rotation. Body-fixed frames make it easier to describe internal dynamics, such as the movements of joints within a robot arm or the orientation of a spacecraft’s components relative to itself. However, the equations describing the object’s interaction with the environment become more complex as they account for the body’s motion.

Q 25. How does the choice of reference frame impact the complexity of control algorithms?

The choice of reference frame profoundly impacts the complexity of control algorithms. Using a body-fixed frame simplifies the control of internal degrees of freedom but makes external control challenging. For example, controlling a robot arm’s position relative to a stationary object is much more complex in a body-fixed frame than in a world frame (a fixed inertial frame). The control algorithm needs to account for the robot’s own movement while simultaneously controlling the end-effector’s position. This typically requires more complex transformations and potentially more computationally intensive algorithms.

Conversely, using an inertial frame simplifies external control but increases the complexity of internal control. Consider controlling the orientation of a spacecraft. While the control algorithms governing attitude are straightforward in body-fixed frames (they directly affect rotation about the spacecraft’s axes), these algorithms become more computationally complex in an inertial frame due to the need for constant transformations accounting for the spacecraft’s current orientation.

Therefore, algorithm complexity is influenced by the frame’s suitability for representing both the controlled variables and their interactions with the environment. A good choice trades off these factors, keeping the control law simple while limiting the number of coordinate transformations that must be computed at run time.

Q 26. Describe your experience with different coordinate systems (Cartesian, spherical, cylindrical).

I have extensive experience working with Cartesian, spherical, and cylindrical coordinate systems, each suited to specific scenarios. Cartesian coordinates (x, y, z) are the most intuitive, representing position with orthogonal axes. They are excellent for describing linear motion and are readily used in many robotics applications, particularly when dealing with straight-line trajectories or linear actuators.

Spherical coordinates (radius, azimuth, elevation) are ideal for representing positions with radial symmetry, such as the location of satellites or the trajectory of projectiles. The calculations simplify when dealing with rotations around a central point. Cylindrical coordinates (radius, azimuth, height) combine aspects of Cartesian and spherical, useful for representing systems with rotational symmetry along a central axis, like the motion of a robotic arm moving along a circular path.

The choice is context-dependent. For example, in simulating planetary motion, spherical coordinates are preferred due to the inherent radial symmetry. In robotic arm kinematics, a combination of Cartesian and cylindrical might be used, where the arm’s base position is described using Cartesian coordinates, while the arm’s configuration is described using cylindrical coordinates to account for the rotational joint.

Q 27. How do you handle transformations between different coordinate systems?

Transformations between coordinate systems are crucial in reference frame implementation. Transformations between two Cartesian frames are linear and are expressed as matrix operations (a rotation plus a translation) that map points and vectors from one frame to another. Conversions between coordinate types are nonlinear: for example, transforming from Cartesian to spherical coordinates involves a square root and inverse trigonometric functions such as atan2, while the reverse conversion uses sine and cosine.

```c
#include <math.h>

// Example: Cartesian to Spherical Transformation (assuming origin at 0,0,0)
double x, y, z;        // Cartesian coordinates (inputs)
double r, theta, phi;  // Spherical coordinates (outputs)

r = sqrt(x*x + y*y + z*z);
theta = atan2(y, x);              // Azimuth angle
phi = atan2(z, sqrt(x*x + y*y));  // Elevation angle above the xy-plane
```

Similarly, transformations from Cartesian to cylindrical or between other coordinate systems involve different but well-defined formulas. Error handling and numerical stability are critical aspects of these transformations, especially near singularities (points where the Jacobian of the transformation becomes singular and the inverse mapping is no longer unique, such as the origin or the polar axis in spherical coordinates). Employing robust numerical techniques and careful consideration of potential singularities is essential for reliable transformations.

Q 28. How would you approach designing a robust reference frame system for an autonomous robot?

Designing a robust reference frame system for an autonomous robot necessitates a holistic approach. First, sensor selection and placement are paramount. We need sensors that provide accurate and complementary data for pose estimation. This might include IMUs (inertial measurement units) for orientation, GPS for global positioning, wheel odometry for relative displacement, and potentially LiDAR or cameras for environmental mapping and localization. Sensor fusion techniques are then essential to combine these diverse sensor readings, accounting for their individual noise characteristics and potential biases. A Kalman filter or extended Kalman filter is a common choice for sensor fusion in such scenarios.
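To illustrate the fusion step, the sketch below shows a scalar (one-dimensional) Kalman filter that combines a relative displacement, such as a wheel odometry increment, with an absolute position fix, such as a GPS reading along one axis. This is a deliberately simplified assumption: a real robot would use a multi-dimensional state and often an extended Kalman filter. The names `Kalman1D`, `kalman_predict`, and `kalman_update` are hypothetical.

```c
/* Scalar Kalman filter state: position estimate and its variance. */
typedef struct { double x; double p; } Kalman1D;

/* Prediction: apply a relative displacement u (e.g. from odometry)
 * and grow the uncertainty by the process noise variance q. */
void kalman_predict(Kalman1D *kf, double u, double q) {
    kf->x += u;
    kf->p += q;
}

/* Update: blend in an absolute measurement z (e.g. from GPS)
 * with measurement noise variance r. */
void kalman_update(Kalman1D *kf, double z, double r) {
    double k = kf->p / (kf->p + r);   /* Kalman gain in [0, 1] */
    kf->x += k * (z - kf->x);
    kf->p *= (1.0 - k);
}
```

The gain `k` weights each source by its noise characteristics: a noisy measurement (large `r`) moves the estimate only slightly, while a confident one pulls it strongly toward the measured value.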

Second, the chosen reference frames must be carefully selected to balance the complexity of control algorithms with the accuracy requirements of the robot’s tasks. A hierarchical system might be used, with different reference frames defined at different levels. For example, a world frame, a robot base frame, and end-effector frames could be defined, with transformations between them calculated efficiently. Finally, rigorous testing and validation procedures are essential to ensure the robustness of the system under various conditions and potential failures. This includes simulations under various scenarios and real-world testing to validate the accuracy and reliability of the reference frame system.

Key Topics to Learn for Reference Frame Implementation Interview

- Coordinate Systems: Understanding different coordinate systems (Cartesian, spherical, cylindrical) and their transformations is fundamental. Practice converting between them and visualizing transformations in 3D space.

- Rotation Matrices: Mastering the creation, manipulation, and application of rotation matrices is crucial. Understand how they represent rotations and how to compose multiple rotations.

- Euler Angles: Learn the advantages and disadvantages of using Euler angles to represent rotations. Be prepared to discuss gimbal lock and alternative approaches.

- Quaternions: Understand the benefits of using quaternions for representing rotations, especially in avoiding gimbal lock. Practice quaternion arithmetic and conversions to other representations.

- Transformation Matrices: Learn how to combine translation and rotation into a single transformation matrix and apply it to points and vectors. Practice applying these transformations in various scenarios.

- Practical Applications: Be ready to discuss the application of reference frame implementation in robotics, computer graphics, aerospace engineering, or other relevant fields. Consider examples involving object tracking, motion planning, or sensor fusion.

- Error Handling and Numerical Stability: Discuss strategies for handling potential errors and maintaining numerical stability when working with transformations and rotations. Understanding the limitations of floating-point arithmetic is important.

- Algorithm Efficiency: Be prepared to analyze the computational complexity of different approaches to reference frame transformations and discuss optimization strategies.

Next Steps

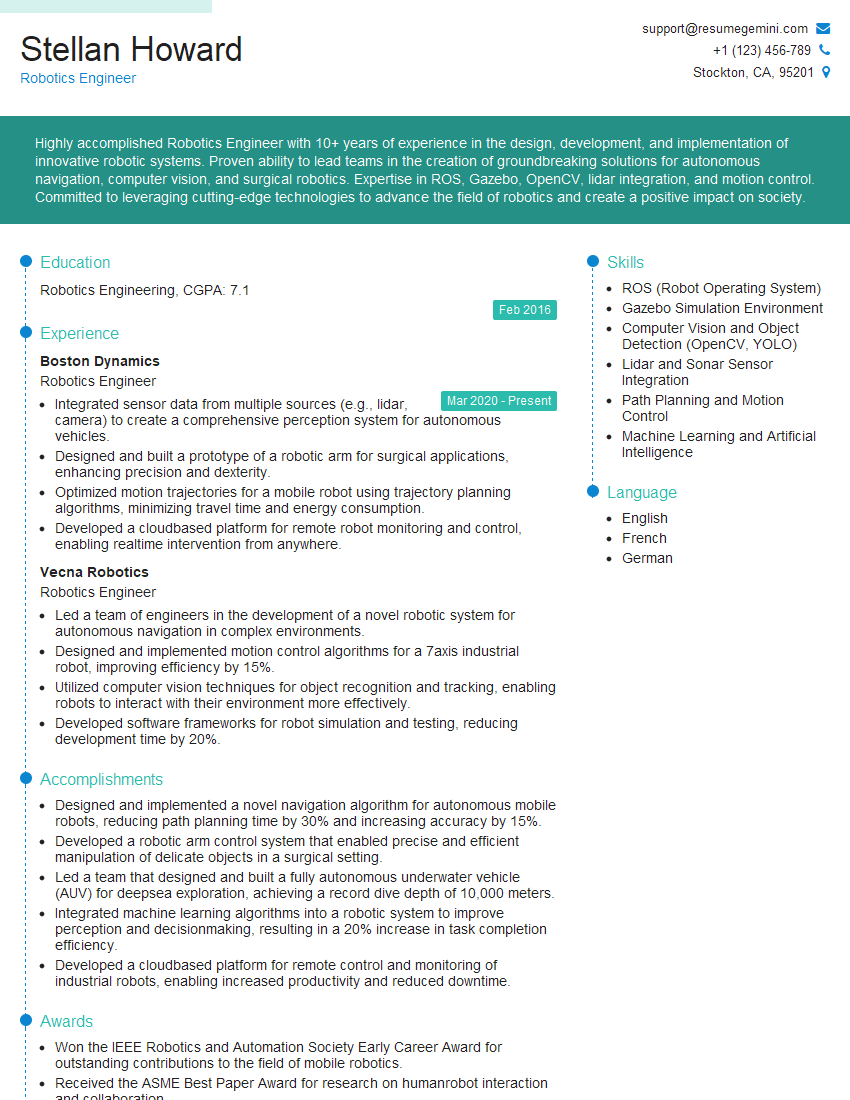

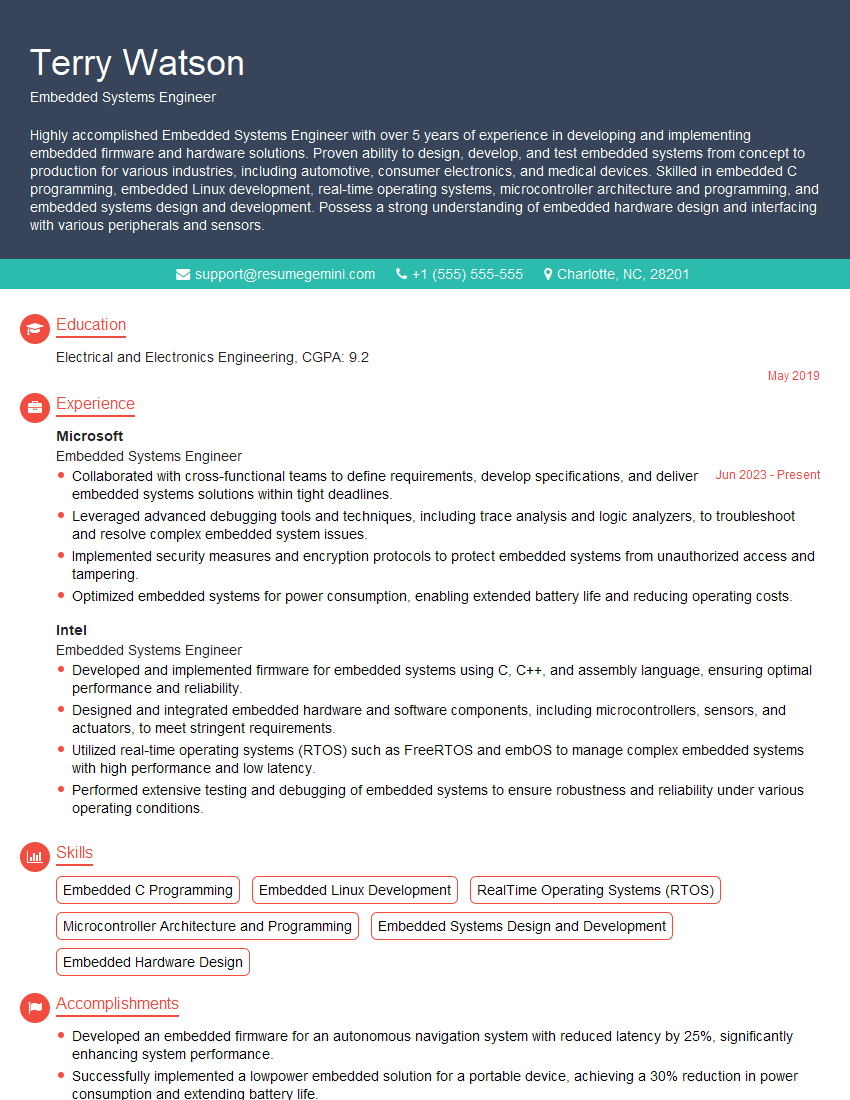

Mastering Reference Frame Implementation significantly enhances your prospects in numerous high-demand technical roles, opening doors to exciting and challenging projects. A well-crafted resume is your key to unlocking these opportunities. To maximize your impact, focus on creating an ATS-friendly resume that highlights your relevant skills and experience effectively. ResumeGemini is a trusted resource that can help you build a professional and impactful resume, showcasing your expertise in Reference Frame Implementation and other key technical skills. Examples of resumes tailored to Reference Frame Implementation are available to help guide your resume creation.
