Every successful interview starts with knowing what to expect. In this blog, we’ll take you through the top interview questions on the ability to interpret and apply scientific data, breaking them down with expert tips to help you deliver impactful answers. Step into your next interview fully prepared and ready to succeed.

Questions Asked in an Interview on the Ability to Interpret and Apply Scientific Data

Q 1. Explain the difference between correlation and causation in scientific data.

Correlation and causation are two distinct concepts in statistics. Correlation refers to a statistical relationship between two or more variables, indicating that they tend to change together. However, correlation does not imply causation; just because two variables are correlated doesn’t mean one causes the other. Causation implies a direct cause-and-effect relationship, where a change in one variable directly leads to a change in another.

Example: Ice cream sales and crime rates might be positively correlated – both tend to increase during the summer. This doesn’t mean that increased ice cream sales cause increased crime, or vice-versa. The underlying factor is the summer heat, which influences both.

To establish causation, we need to demonstrate a plausible mechanism linking the variables and often utilize more rigorous methods like controlled experiments, where extraneous factors are minimized or controlled, to isolate the causal effect.
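The ice cream example can be simulated to show how a shared driver produces a correlation with no causal link. The sketch below uses synthetic data and made-up coefficients (all numbers and the helper names `pearson` and `residuals` are illustrative assumptions): temperature drives both series, and the correlation largely disappears once temperature is accounted for.

```python
import random
import statistics


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def residuals(y, x):
    """Residuals of y after a least-squares straight-line fit on x."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return [b - (my + slope * (a - mx)) for a, b in zip(x, y)]


rng = random.Random(0)
# Synthetic data: temperature drives both series; neither drives the other.
temperature = [rng.uniform(0, 35) for _ in range(500)]
ice_cream = [20 + 3.0 * t + rng.gauss(0, 10) for t in temperature]
crime = [50 + 1.5 * t + rng.gauss(0, 10) for t in temperature]

raw_r = pearson(ice_cream, crime)
# Partial correlation: correlate what is left after removing temperature.
partial_r = pearson(residuals(ice_cream, temperature), residuals(crime, temperature))

print(f"raw correlation:     {raw_r:.2f}")
print(f"partial correlation: {partial_r:.2f}")
```

Adjusting for a measured confounder like this only helps when the confounder is known and recorded, which is one reason controlled experiments remain the stronger tool.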

Q 2. How do you identify outliers in a dataset and determine their impact on analysis?

Outliers are data points that significantly deviate from the rest of the data. Identifying them is crucial because they can disproportionately influence statistical analyses. Several methods exist for outlier detection:

- Visual inspection: Scatter plots, box plots, and histograms can visually highlight points lying far from the main data cluster.

- Statistical methods: Z-scores (measuring the number of standard deviations a point is from the mean) or the Interquartile Range (IQR) method (identifying data points beyond 1.5 times the IQR from the quartiles) can quantitatively identify potential outliers.

Impact on Analysis: Outliers can inflate the mean, skew standard deviation, and distort regression lines, leading to inaccurate conclusions. The impact depends on the outlier’s magnitude and the analysis method used. Before removing them, it’s vital to investigate the reason for their existence – a data entry error, a truly unusual observation, or something else. Simply removing them without justification is inappropriate.

Example: In analyzing student test scores, a single student scoring exceptionally low might be an outlier. If this is due to an illness on the test day, it might be inappropriate to remove the data point. However, if it’s clearly due to data entry error, correction or removal might be warranted.
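Both statistical rules can be sketched in a few lines. The scores below are made up for illustration, and the cut-offs (3 standard deviations, 1.5 times the IQR) are the conventional defaults rather than fixed requirements.

```python
import statistics


def zscore_outliers(values, threshold=3.0):
    """Values lying more than `threshold` sample standard deviations from the mean."""
    mean = statistics.fmean(values)
    sd = statistics.stdev(values)
    return [v for v in values if abs(v - mean) / sd > threshold]


def iqr_outliers(values, k=1.5):
    """Values lying more than k * IQR below the first or above the third quartile."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


# Hypothetical test scores with one very low value.
scores = [72, 75, 78, 80, 81, 83, 85, 86, 88, 90, 12]

print("IQR rule:    ", iqr_outliers(scores))
print("Z-score rule:", zscore_outliers(scores))
```

On this small sample the IQR rule flags the score of 12, while the Z-score rule at a threshold of 3 flags nothing: the extreme value inflates the standard deviation it is measured against. That masking effect is a reason to combine methods and to look at the plots as well.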

Q 3. Describe your experience using statistical software (e.g., R, SPSS, SAS).

I have extensive experience using statistical software, primarily R and SPSS. In R, I’m proficient in data manipulation using dplyr, data visualization with ggplot2, and statistical modeling with base functions like lm() (for linear models) and glm() (for generalized linear models) and packages like lme4 (for mixed-effects models). I’ve used SPSS for similar tasks, particularly its user-friendly interface for descriptive statistics and basic inferential tests. My experience includes:

- Performing exploratory data analysis to understand data patterns and distributions.

- Building and evaluating statistical models to test hypotheses and make predictions.

- Creating compelling visualizations to communicate findings to both technical and non-technical audiences.

For example, I recently used R to analyze a large dataset of patient records to identify risk factors for a specific disease. This involved data cleaning, statistical modeling, and creating informative visualizations for a medical journal publication. My proficiency extends to customizing analyses, interpreting results, and effectively presenting findings.

Q 4. How do you determine the appropriate statistical test for a given research question?

Selecting the appropriate statistical test depends on several factors: the type of data (continuous, categorical, ordinal), the research question (comparing means, exploring relationships, etc.), the number of groups being compared, and whether the assumptions of the test are met.

A structured approach involves:

- Defining the research question: What are you trying to find out?

- Identifying the type of data: Are your variables continuous, categorical, or ordinal?

- Considering the number of groups: Are you comparing two groups, or more?

- Checking assumptions: Many tests assume normality, independence of observations, etc. Are these assumptions met in your data? If not, non-parametric alternatives might be considered.

- Choosing the appropriate test: Based on the above, select a suitable test (e.g., t-test, ANOVA, chi-square test, correlation analysis, regression analysis).

Example: If you want to compare the mean height of men and women, an independent samples t-test would be appropriate if the data meets the necessary assumptions. If you want to explore the relationship between age and income, correlation analysis or regression analysis might be more suitable. Incorrect test selection leads to misleading or inaccurate results. Therefore, careful consideration of these factors is crucial.
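The structured approach above can be written down as a small lookup. This is only a rule-of-thumb sketch: the function name `suggest_test` and its parameters are hypothetical, and it covers just the common cases mentioned here, not every design.

```python
def suggest_test(outcome, n_groups=None, paired=False, normal=True):
    """Return a conventional starting-point test name (a rule of thumb only).

    outcome: "continuous" (compare group means), "categorical" (compare
    proportions or counts), or "relationship" (two continuous variables).
    normal: whether the parametric assumptions look reasonable.
    """
    if outcome == "categorical":
        return "chi-square test of independence"
    if outcome == "relationship":
        return "Pearson correlation / linear regression" if normal else "Spearman rank correlation"
    if outcome == "continuous":
        if n_groups == 2:
            if paired:
                return "paired t-test" if normal else "Wilcoxon signed-rank test"
            return "independent samples t-test" if normal else "Mann-Whitney U test"
        if n_groups is not None and n_groups > 2:
            return "one-way ANOVA" if normal else "Kruskal-Wallis test"
    raise ValueError("no rule of thumb for this combination")


# Mean height of men vs. women, assumptions met.
print(suggest_test("continuous", n_groups=2))
# Relationship between age and income, assumptions doubtful.
print(suggest_test("relationship", normal=False))
```

A lookup like this is a memory aid, not a substitute for checking the assumptions on the actual data.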

Q 5. Explain the concept of p-values and their significance in hypothesis testing.

The p-value is the probability of obtaining results as extreme as, or more extreme than, the observed results, assuming the null hypothesis is true. The null hypothesis is a statement that there is no effect or relationship between variables. A small p-value (typically less than 0.05) suggests that the observed results are unlikely to have occurred by chance alone, providing evidence against the null hypothesis. This leads to the rejection of the null hypothesis, and we conclude there is statistically significant evidence for an effect or relationship.

Important Note: A p-value does not indicate the magnitude or practical significance of the effect. A small p-value could still represent a very small effect size, and a large p-value doesn’t necessarily mean there is no effect; it just means there isn’t enough evidence to reject the null hypothesis with the given data.

Example: Imagine testing whether a new drug lowers blood pressure. A p-value of 0.03 indicates that, if the drug had no effect, there would be only a 3% chance of observing a reduction in blood pressure at least as large as the one seen in the study. At the conventional 0.05 threshold, this would lead us to reject the null hypothesis and conclude that the drug likely lowers blood pressure.
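The definition of a p-value can be made concrete with a permutation test, which builds the "no effect" world directly by shuffling group labels. The readings below are invented for illustration, and the function name `permutation_p_value` is an assumption of this sketch.

```python
import random
import statistics


def permutation_p_value(treated, control, n_permutations=10_000, seed=0):
    """One-sided p-value: share of label shufflings whose mean difference
    (control minus treated) is at least as large as the observed one."""
    rng = random.Random(seed)
    observed = statistics.fmean(control) - statistics.fmean(treated)
    pooled = list(treated) + list(control)
    n_treated = len(treated)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        diff = statistics.fmean(pooled[n_treated:]) - statistics.fmean(pooled[:n_treated])
        if diff >= observed:
            at_least_as_extreme += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (at_least_as_extreme + 1) / (n_permutations + 1)


# Made-up systolic readings (mmHg), for illustration only.
treated = [128, 131, 125, 122, 130, 127, 124, 129]
control = [135, 138, 132, 140, 129, 137, 134, 136]

p = permutation_p_value(treated, control)
print(f"one-sided permutation p-value: {p:.4f}")
```

The result says how often chance alone would produce a gap this large; it says nothing about how large or clinically meaningful the gap is.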

Q 6. How do you interpret confidence intervals?

Confidence intervals provide a range of plausible values for a population parameter (like the mean or a regression coefficient) based on a sample of data. For example, a 95% confidence interval means that if we were to repeat the study many times, 95% of the calculated confidence intervals would contain the true population parameter. It doesn’t mean there’s a 95% chance the true parameter lies within the interval; the true parameter is fixed, the interval is what’s random.

Interpreting: A narrower confidence interval indicates greater precision in estimating the population parameter, whereas a wider interval reflects greater uncertainty. If a confidence interval for a treatment effect does not include zero, it suggests a statistically significant effect at the corresponding level. For instance, if the 95% confidence interval for the difference in mean blood pressure between a treatment and control group is (2, 5), true differences between 2 and 5 mmHg are the values most compatible with the data; because the interval excludes zero, the treatment effect is statistically significant at the 5% level.
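The "repeat the study many times" interpretation can be checked by simulation. This sketch assumes a normal population with a known standard deviation so that a simple z-interval applies; the population values and the function name `coverage` are illustrative assumptions.

```python
import random
import statistics

# Two-sided 95% critical value of the standard normal (about 1.96).
Z_95 = statistics.NormalDist().inv_cdf(0.975)


def coverage(true_mean=120.0, sigma=15.0, n=40, n_studies=2000, seed=1):
    """Fraction of simulated studies whose 95% z-interval contains true_mean."""
    rng = random.Random(seed)
    half_width = Z_95 * sigma / n ** 0.5
    hits = 0
    for _ in range(n_studies):
        sample_mean = statistics.fmean(rng.gauss(true_mean, sigma) for _ in range(n))
        if sample_mean - half_width <= true_mean <= sample_mean + half_width:
            hits += 1
    return hits / n_studies


print(f"share of intervals containing the true mean: {coverage():.3f}")
```

The printed share should land close to 0.95. Each individual interval either contains the true mean or it does not; the 95% describes the procedure across repetitions.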

Q 7. What are the limitations of using regression analysis?

Regression analysis, while powerful, has limitations:

- Assumption violations: Regression models often assume linearity, independence of errors, constant variance, and normality of errors. Violations of these assumptions can lead to biased or inefficient estimates.

- Multicollinearity: High correlation between predictor variables can make it difficult to isolate the effect of each variable.

- Overfitting: Including too many predictor variables can lead to a model that fits the training data well but generalizes poorly to new data.

- Causation vs. correlation: Regression analysis can show associations between variables but doesn’t necessarily imply causation.

- Extrapolation: Making predictions outside the range of the observed data can be unreliable.

- Outlier sensitivity: Outliers can disproportionately influence the regression model.

Addressing these limitations often requires careful data preparation, model diagnostics, and potentially using more robust regression techniques or alternative modeling approaches.
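Outlier sensitivity is easy to demonstrate with a least-squares fit. In this sketch the data are constructed to lie exactly on the line y = 2x + 1, and a single made-up point far from the others is then added; `ols_slope` is a helper written for this example.

```python
import statistics


def ols_slope(x, y):
    """Slope of the ordinary least-squares line of y on x."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


x = [1, 2, 3, 4, 5, 6, 7, 8]
y = [2 * v + 1 for v in x]  # exactly on the line y = 2x + 1

slope_clean = ols_slope(x, y)
# One extra point with an extreme x value and a y value far below the line.
slope_with_outlier = ols_slope(x + [20], y + [5])

print(f"slope without the extra point: {slope_clean:.2f}")
print(f"slope with the extra point:    {slope_with_outlier:.2f}")
```

One high-leverage point pulls the fitted slope from 2 down to nearly zero, which is why diagnostics such as residual and leverage plots belong in any regression workflow.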

Q 8. How do you handle missing data in a scientific dataset?

Missing data is a common challenge in scientific datasets. Ignoring it can lead to biased results, so careful handling is crucial. My approach involves a multi-step process:

- Assessment: First, I identify the extent and pattern of missing data. Is it missing completely at random (MCAR), missing at random (MAR), or missing not at random (MNAR)? This influences the best method for imputation (filling in missing values).

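- Imputation Techniques: For MCAR data, simple imputation methods like mean/median imputation or k-nearest neighbors (k-NN) can be adequate, although mean imputation understates the variability of the data. For MAR data, more sophisticated techniques are necessary, such as multiple imputation or maximum likelihood estimation. For MNAR data, even these methods can remain biased, so the missingness mechanism has to be modeled explicitly (for example, with selection or pattern-mixture models) and the conclusions checked with sensitivity analysis. Multiple imputation is particularly useful as it accounts for the uncertainty introduced by the missing data by generating multiple imputed datasets.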

- Model Selection: The choice of imputation method often depends on the type of data and the analytical model. For instance, using linear regression on data imputed with the mean might be problematic if there are non-linear relationships.

- Sensitivity Analysis: Finally, I perform a sensitivity analysis to check how much the results change depending on the imputation method used. This helps assess the robustness of the findings.

For example, in a study analyzing the effects of a new drug, missing data on patient outcomes might be due to dropouts. If the dropouts are related to the drug’s effectiveness (MNAR), then simply imputing the missing values with the mean outcome would be misleading. Multiple imputation that draws on other observed patient information is a better starting point, but because standard multiple imputation assumes MAR, it should be paired with a sensitivity analysis under plausible MNAR scenarios.
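A small simulation shows one reason simple mean imputation needs care even in the friendliest case. The sketch below generates synthetic values, removes 30% of them completely at random (MCAR), and fills the gaps with the observed mean; all numbers are illustrative assumptions.

```python
import random
import statistics

rng = random.Random(42)
complete = [rng.gauss(100, 15) for _ in range(1000)]
# MCAR: every value has the same 30% chance of being missing.
observed = [v if rng.random() > 0.3 else None for v in complete]

present = [v for v in observed if v is not None]
fill = statistics.fmean(present)
imputed = [fill if v is None else v for v in observed]

sd_present = statistics.stdev(present)
sd_imputed = statistics.stdev(imputed)

print(f"mean of observed values:        {fill:.1f}")
print(f"SD of observed values:          {sd_present:.1f}")
print(f"SD after mean imputation:       {sd_imputed:.1f}")
```

The mean is preserved, but the standard deviation shrinks because every imputed value sits exactly at the center. Standard errors computed from the filled-in data would therefore look more precise than they are, which is the uncertainty that multiple imputation is designed to carry through.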

Q 9. Describe your approach to data visualization and its importance in communication.

Data visualization is essential for effectively communicating scientific findings. It allows us to move beyond raw numbers and present complex relationships in an easily digestible way. My approach emphasizes clarity, accuracy, and context.

- Choosing the Right Chart: I carefully select the most appropriate chart type for the data and the message I want to convey. Scatter plots are good for showing correlations, bar charts for comparisons, and time series plots for trends.

- Clear Labeling and Titles: Axes are always clearly labeled with units, and charts have concise but informative titles. Legends are included where necessary, ensuring readability.

- Contextualization: I avoid misleading visuals by carefully choosing scales and ensuring the context is clearly explained. For instance, I’d avoid truncating the y-axis if it distorts the magnitude of the effect.

- Interactive Visualizations: Where appropriate, I use interactive tools allowing the audience to explore the data further and uncover hidden patterns.

For instance, instead of simply reporting the correlation coefficient between two variables, I would display a scatter plot to show the strength and direction of that relationship visually. This makes it easier for non-experts to understand the finding.

Q 10. Explain the difference between descriptive, inferential, and predictive statistics.

These three branches of statistics serve different purposes:

- Descriptive Statistics: This summarizes and describes the main features of a dataset without making any inferences about a larger population. It involves calculating measures like mean, median, mode, standard deviation, and creating visualizations like histograms and box plots. Think of it as simply describing what you see in your data.

- Inferential Statistics: This goes beyond describing the data at hand and uses it to make inferences about a larger population from which the sample was drawn. It involves hypothesis testing, confidence intervals, and regression analysis. We use inferential statistics to determine if our findings from the sample are generalizable to a bigger group.

- Predictive Statistics: This aims to predict future outcomes or values based on past data. It uses techniques such as machine learning algorithms (like regression and classification models) to build models that make predictions. This is particularly useful in forecasting or making informed decisions.

For example, calculating the average age of participants in a study is descriptive statistics. Testing if there’s a significant difference in blood pressure between two treatment groups is inferential statistics. Predicting future sales based on past sales data is predictive statistics.

Q 11. How do you ensure the reproducibility of your scientific data analysis?

Reproducibility is paramount in science. My approach to ensuring reproducibility involves:

- Detailed Documentation: I meticulously document every step of my analysis, including data cleaning, preprocessing, and the choice of statistical methods. This includes versions of software used and any parameters.

- Version Control: I use version control systems like Git to track changes in my code and data. This allows me to revert to previous versions if necessary and makes collaboration with others easier.

- Open-Source Tools: I prefer using open-source software and tools wherever possible. This ensures that others can access and run the same code.

- Data Sharing: When appropriate and ethical, I share my data and code publicly through repositories like GitHub or data archives. This enhances transparency and allows others to verify my findings.

- Well-Structured Code: I write clean, well-documented code that’s easy to understand and modify. I use comments extensively to explain what each section of the code does.

Imagine another researcher wants to reproduce my analysis. With thorough documentation and accessible code, they should be able to obtain the same (or very similar) results. This is crucial for the validation and advancement of scientific knowledge.
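Two of the habits above, fixing random seeds and recording the software environment, take only a few lines. This is a minimal sketch: `run_analysis` is a stand-in for a real analysis, and the fields recorded are an assumption about what is worth logging.

```python
import json
import platform
import random
import sys

SEED = 12345


def run_analysis(seed):
    """Stand-in for a real analysis: the mean of 100 seeded random draws."""
    rng = random.Random(seed)
    sample = [rng.gauss(0, 1) for _ in range(100)]
    return sum(sample) / len(sample)


# Store the seed and environment next to the result so a rerun can be compared.
record = {
    "seed": SEED,
    "python": sys.version.split()[0],
    "platform": platform.platform(),
    "result": run_analysis(SEED),
}
print(json.dumps(record, indent=2))
```

Using a dedicated `random.Random(seed)` instance rather than the global generator keeps the result independent of any other code that draws random numbers.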

Q 12. What are some common pitfalls to avoid when interpreting scientific data?

Interpreting scientific data can be tricky, and several pitfalls exist:

- Confirmation Bias: This involves favoring data that confirms pre-existing beliefs and ignoring contradictory evidence. It’s important to approach the data objectively and consider all possible interpretations.

- Overfitting: In predictive modeling, overfitting occurs when the model fits the training data too closely and fails to generalize to new data. This leads to poor predictive accuracy on unseen data.

- Ignoring Context: Failing to consider the broader context of the data, including potential confounding factors or limitations of the study design.

- Misinterpreting Correlation as Causation: Just because two variables are correlated doesn’t mean one causes the other. There might be a third, unseen variable influencing both.

- Overemphasizing Statistical Significance: Focusing solely on statistical significance without considering the practical significance or effect size.

- P-hacking: Manipulating the data or analysis to achieve a statistically significant result.

For instance, a researcher might selectively report only the results that support their hypothesis, ignoring those that contradict it. This is a classic example of confirmation bias and can lead to inaccurate conclusions.
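The arithmetic behind p-hacking through many comparisons is short enough to compute directly. The sketch assumes the tests are independent and every null hypothesis is true; the function names are illustrative, and the Bonferroni adjustment is shown as one common (conservative) remedy.

```python
def familywise_error_rate(alpha, n_tests):
    """Chance of at least one false positive across independent tests
    when every null hypothesis is true."""
    return 1 - (1 - alpha) ** n_tests


def bonferroni_alpha(alpha, n_tests):
    """Per-test threshold that keeps the family-wise rate at or below alpha."""
    return alpha / n_tests


for m in (1, 5, 20):
    print(f"{m:>2} tests at alpha = 0.05 -> "
          f"P(at least one false positive) = {familywise_error_rate(0.05, m):.2f}")

print(f"Bonferroni threshold for 20 tests: {bonferroni_alpha(0.05, 20):.4f}")
```

With 20 tests the chance of at least one spurious "significant" result is about 64%, so reporting only the test that crossed 0.05 badly overstates the evidence.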

Q 13. How do you assess the validity and reliability of scientific findings?

Assessing the validity and reliability of scientific findings requires a critical and multi-faceted approach:

- Study Design: Examining the study design to identify potential biases or limitations. Was the sample size adequate? Was the study properly randomized and controlled?

- Methodology: Scrutinizing the methods used for data collection, analysis, and interpretation. Were appropriate statistical methods used? Were the analyses correctly performed?

- Replication: Checking whether the findings can be replicated in independent studies. Replication is a cornerstone of scientific validity.

- Peer Review: Considering the findings in light of peer review comments and publications. Peer review helps identify potential flaws and biases.

- Consistency with Existing Literature: Assessing how the findings fit within the existing body of knowledge. Do they support, contradict, or extend current understanding?

For example, a study claiming a groundbreaking discovery should be viewed with skepticism if it lacks replication, has methodological flaws, or contradicts established scientific principles. A critical appraisal requires examining various aspects of the research.

Q 14. Explain your experience with different data types (e.g., categorical, numerical, time-series).

I have extensive experience working with diverse data types:

- Numerical Data: This includes continuous data (e.g., height, weight, temperature) and discrete data (e.g., number of cars, count of events). I’m proficient in statistical analyses involving means, standard deviations, correlations, and regressions.

- Categorical Data: This involves nominal data (e.g., gender, color) and ordinal data (e.g., education level, satisfaction rating). I use techniques like chi-square tests, contingency tables, and logistic regression to analyze this type of data.

- Time-Series Data: This type of data is ordered chronologically (e.g., stock prices, temperature readings). I’m experienced in using time-series analysis methods, such as ARIMA models, to identify patterns and make predictions.

- Mixed Data Types: Many datasets contain a mixture of data types. I am skilled in handling such situations, often involving data transformation or the use of appropriate statistical methods for mixed-type data.

For example, in analyzing customer data, I might encounter numerical data (age, income), categorical data (gender, location), and time-series data (purchase history). Understanding the characteristics of each data type and selecting appropriate analysis techniques are crucial for drawing meaningful insights.

Q 15. How do you identify and address biases in scientific data?

Identifying and addressing biases in scientific data is crucial for ensuring the validity and reliability of research findings. Bias can creep in at various stages, from study design to data analysis and interpretation. My approach involves a multi-step process:

- Careful Study Design: Pre-empting bias begins with meticulously planning the study. This includes defining a clear research question, selecting a representative sample, and establishing robust methods for data collection. For example, if studying the effectiveness of a new drug, ensuring a randomized controlled trial helps mitigate selection bias.

- Data Collection Scrutiny: Thoroughly examining data collection methods is critical. Were there any systematic errors in data recording or measurement? Were there any potential confounding variables that weren’t accounted for? For instance, if surveying people about their exercise habits, biases might arise from self-reporting inaccuracies.

- Statistical Analysis: Employing appropriate statistical techniques is essential to detect and control for biases. This involves understanding and using techniques such as regression analysis to account for confounding variables and utilizing appropriate statistical tests to compare groups.

- Blind Analyses: Where feasible, employing blind or double-blind studies minimizes observer bias, where the researcher’s expectations influence data interpretation. For instance, in clinical trials, blinding the participants and researchers to treatment allocation prevents biased assessment of outcomes.

- Transparency and Peer Review: Openly sharing data and methods with the scientific community allows for critical evaluation and helps identify potential biases that may have been overlooked. The peer-review process is designed to catch and address these issues before publication.

By systematically addressing these aspects, we can strive towards minimizing bias and achieving more robust and reliable scientific conclusions.

Q 16. Describe a time you had to interpret complex scientific data to solve a problem.

During a project investigating the impact of climate change on coral reef ecosystems, I was faced with a large, complex dataset comprising water temperature readings, coral bleaching events, and various oceanographic parameters collected over several decades. The initial challenge was the sheer volume and heterogeneity of the data, including missing values and inconsistent units.

My approach involved several steps:

- Data Cleaning and Preprocessing: This involved handling missing data using imputation techniques, standardizing units, and checking for outliers. I used R and Python to process this data, leveraging packages such as pandas and scikit-learn.

- Exploratory Data Analysis (EDA): I performed EDA using various visualization techniques to understand the patterns and relationships within the data. Histograms, scatter plots, and time series plots helped reveal correlations between water temperature and coral bleaching frequency.

- Statistical Modeling: I employed generalized linear models (GLMs) to model the relationship between water temperature and coral bleaching, accounting for other relevant variables such as ocean acidity and wave action. The results showed a strong positive association, supporting the hypothesis that rising water temperatures contribute to coral bleaching events.

- Model Validation: I validated my model using techniques like cross-validation to ensure its generalizability and robustness. The validation confirmed the accuracy of the model’s predictions.

This project highlighted the importance of a systematic approach to analyzing complex datasets and the need for robust statistical modeling to draw meaningful conclusions.

Q 17. What are your preferred methods for validating your scientific interpretations?

Validating scientific interpretations is crucial to ensure their reliability and accuracy. My preferred methods involve a combination of approaches:

- Replication: Independent replication of findings by other researchers is the gold standard. If multiple studies using different methods arrive at similar conclusions, it strengthens the validity of the interpretation.

- Cross-Validation: In statistical modeling, cross-validation techniques help assess the generalizability of the model and reduce the risk of overfitting. This involves splitting the data into training and testing sets to evaluate how well the model predicts unseen data.

- Sensitivity Analysis: This involves testing the robustness of the interpretation by slightly altering model parameters or assumptions. If the conclusions remain consistent across a range of inputs, it enhances confidence in the findings.

- Peer Review: Subjecting the interpretation to critical scrutiny by peers in the scientific community provides valuable feedback and helps identify potential flaws or biases.

- External Validation: Comparing findings with data from independent sources or using alternative methods helps ensure that the interpretation is consistent with a broader body of knowledge.

By employing these diverse methods, I aim to ensure that my interpretations are rigorous, reliable, and supported by strong evidence.
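Cross-validation, mentioned above, can be sketched without any external library. The example uses synthetic data and a straight-line model; the helper names (`k_fold_indices`, `fit_line`, `cross_validated_mse`) and all numbers are assumptions made for illustration.

```python
import random
import statistics


def k_fold_indices(n, k, seed=0):
    """Shuffle the indices 0..n-1 and split them into k nearly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def fit_line(x, y):
    """Least-squares slope and intercept of y on x."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return slope, my - slope * mx


def cross_validated_mse(x, y, k=5):
    """Average held-out mean squared error over k folds."""
    fold_errors = []
    for test_idx in k_fold_indices(len(x), k):
        held_out = set(test_idx)
        train = [i for i in range(len(x)) if i not in held_out]
        # Fit on the training folds only, then score on the held-out fold.
        slope, intercept = fit_line([x[i] for i in train], [y[i] for i in train])
        fold_errors.append(
            statistics.fmean((y[i] - (intercept + slope * x[i])) ** 2 for i in test_idx)
        )
    return statistics.fmean(fold_errors)


rng = random.Random(7)
x = [rng.uniform(0, 10) for _ in range(100)]
y = [2.0 * xi + 1.0 + rng.gauss(0, 1.0) for xi in x]  # noise variance is 1.0

print(f"5-fold CV mean squared error: {cross_validated_mse(x, y):.2f}")
```

Because the simulated noise variance is 1.0, a held-out error near 1 indicates the model is capturing the signal without overfitting. In practice a library routine such as scikit-learn's cross-validation utilities would normally replace the hand-written folds.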

Q 18. How do you communicate complex scientific data to a non-technical audience?

Communicating complex scientific data to a non-technical audience requires simplifying concepts without sacrificing accuracy. My approach involves:

- Use of Analogies and Metaphors: Relating scientific concepts to everyday experiences makes them more accessible. For example, explaining the concept of gene expression using the analogy of a recipe book for a cell.

- Visualizations: Employing charts, graphs, and infographics to visually represent data makes it easier to understand. Avoid overly complex or cluttered visualizations.

- Storytelling: Framing the data within a narrative that connects with the audience’s interests and experiences makes the information more engaging and memorable.

- Plain Language: Avoiding technical jargon and using simple, clear language is essential. If technical terms are necessary, define them clearly.

- Interactive Elements: In presentations or online materials, incorporating interactive elements, such as quizzes or simulations, can enhance engagement and learning.

The key is to tailor the communication style to the audience’s level of understanding, ensuring that the message is both accurate and easily comprehensible.

Q 19. How do you stay updated on the latest advancements in data analysis techniques?

Staying current with advancements in data analysis techniques is crucial in this rapidly evolving field. My strategies include:

- Reading Scientific Literature: Regularly reviewing peer-reviewed journals and publications in my field and related areas keeps me abreast of the latest research.

- Attending Conferences and Workshops: Participating in conferences and workshops offers opportunities to learn from leading experts and network with colleagues.

- Online Courses and Webinars: Many online platforms offer courses and webinars on advanced data analysis techniques. I utilize these to deepen my knowledge and skills.

- Following Key Researchers and Organizations: Following influential researchers and organizations on social media and other platforms provides exposure to their latest work and findings.

- Participating in Online Communities: Engaging in online forums and communities dedicated to data analysis allows for sharing knowledge and learning from peers.

A commitment to continuous learning is essential to remain a competent and effective data analyst.

Q 20. What are your strengths and weaknesses in interpreting scientific data?

My strengths lie in my ability to critically assess data, identify potential biases, and select appropriate statistical methods for analysis. I possess a strong foundation in statistical modeling and programming skills to handle complex datasets. I’m also adept at communicating my findings effectively, both to technical and non-technical audiences.

One area I am continually working on is improving my proficiency with cutting-edge machine learning techniques, especially deep learning, for analyzing high-dimensional data. Although I have a foundational understanding, further focused study and practical application are needed to strengthen this aspect of my skill set.

Q 21. How would you approach analyzing a large, unstructured dataset?

Analyzing a large, unstructured dataset requires a systematic approach. My strategy would involve:

- Data Exploration and Cleaning: The first step involves exploring the data to understand its structure and characteristics. This includes identifying missing values, outliers, and inconsistencies. Techniques like regular expressions can be used to clean text data.

- Feature Extraction and Engineering: For unstructured data like text, this involves extracting relevant features, such as word frequencies, sentiment scores, or topic models. For example, using Natural Language Processing (NLP) techniques to analyze text and identify key themes.

- Dimensionality Reduction: Large datasets often have high dimensionality, making analysis challenging. Techniques like Principal Component Analysis (PCA) can reduce dimensionality while preserving most of the variance, and t-SNE is useful for visualizing structure in high-dimensional data.

- Model Selection: Choosing the appropriate model depends on the research question and data characteristics. For example, clustering algorithms might be used to group similar data points, while classification algorithms could be used for prediction tasks. scikit-learn in Python provides a wide range of algorithms.

- Model Evaluation and Validation: Evaluating the model’s performance using appropriate metrics (e.g., accuracy, precision, recall) and validation techniques (e.g., cross-validation) is crucial. This ensures the robustness and generalizability of the findings.

- Visualization and Interpretation: Visualizing the results effectively is critical for understanding the insights derived from the data.

Throughout this process, iterative refinement and validation are key to ensure accurate and meaningful results.
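The cleaning and feature-extraction steps for text can be illustrated with the standard library alone. The sample notes, the tiny stop-word list, and the function names are all invented for this sketch; a real project would more likely use an NLP library.

```python
import re
from collections import Counter

# A deliberately tiny stop-word list, for illustration only.
STOPWORDS = {"the", "a", "an", "and", "of", "to", "in", "is", "was"}


def tokenize(text):
    """Lowercase the text, keep alphabetic runs, and drop stop words."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]


def term_frequencies(documents):
    """One word-count table per document (a simple bag-of-words feature set)."""
    return [Counter(tokenize(doc)) for doc in documents]


notes = [
    "The reef showed severe bleaching in 2016.",
    "Bleaching was mild; the reef recovered.",
]

features = term_frequencies(notes)
overall = sum(features, Counter())
print(overall.most_common(2))
```

The regular expression handles the cleaning (case, punctuation, digits), and the resulting counts are the kind of numeric features that clustering or classification models can consume.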

Q 22. Describe your experience with data cleaning and preprocessing techniques.

Data cleaning and preprocessing are crucial initial steps in any scientific data analysis. Think of it like preparing ingredients before cooking – you wouldn’t start baking a cake with rotten eggs! My experience encompasses a range of techniques, from handling missing values to transforming data for improved model performance.

- Handling Missing Values: I frequently employ methods like imputation (filling in missing data based on existing patterns) using techniques such as mean/median imputation, k-Nearest Neighbors imputation, or more sophisticated model-based imputation. The choice depends on the data’s nature and the potential bias introduced. For instance, using mean imputation for highly skewed data might lead to inaccurate results.

- Outlier Detection and Treatment: Identifying outliers – data points significantly different from the rest – is critical. I use box plots, scatter plots, and statistical methods like the Z-score to detect them. Treatment strategies include removal (if justified and not a substantial portion of the data), winsorizing (capping values at a certain percentile), or transformation (e.g., log transformation for skewed data).

- Data Transformation: This involves converting data into a more suitable format for analysis. Common transformations include standardization (centering data around zero with unit variance), normalization (scaling data to a specific range), and encoding categorical variables (converting text labels into numerical representations using one-hot encoding or label encoding).

- Feature Engineering: This creative process involves creating new features from existing ones to improve model accuracy. For example, in a study of plant growth, I might engineer a feature representing the ratio of height to leaf area.

In a recent project analyzing climate data, I used a combination of imputation for missing rainfall measurements (using a k-NN approach based on spatial proximity) and log transformation to address the right-skewed distribution of temperature readings, significantly improving the model’s predictive power.
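The transformations named above are short enough to write out. This sketch implements standardization, min-max normalization, and one-hot encoding with the standard library; the function names are illustrative, and libraries such as scikit-learn offer equivalent, more robust tools.

```python
import statistics


def standardize(values):
    """Center on zero and scale to unit (population) standard deviation."""
    mean, sd = statistics.fmean(values), statistics.pstdev(values)
    return [(v - mean) / sd for v in values]


def min_max(values):
    """Rescale linearly so the smallest value is 0 and the largest is 1."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]


def one_hot(labels):
    """Return (rows, categories): one 0/1 column per category, sorted by name."""
    categories = sorted(set(labels))
    rows = [[1 if label == c else 0 for c in categories] for label in labels]
    return rows, categories


heights_cm = [150.0, 160.0, 170.0, 180.0, 190.0]
print(standardize(heights_cm))
print(min_max(heights_cm))
print(one_hot(["oak", "pine", "oak"]))
```

Both scaling functions assume the values are not all identical (otherwise the denominator is zero), which is worth checking before applying them to real columns.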

Q 23. What ethical considerations are important when working with scientific data?

Ethical considerations are paramount when working with scientific data. Transparency, data privacy, and responsible data sharing are fundamental. Imagine a medical researcher using patient data without consent – the consequences are severe!

- Informed Consent: When dealing with human subjects, obtaining informed consent is non-negotiable. Participants must understand the study’s purpose, risks, and benefits before agreeing to participate.

- Data Anonymization and Privacy: Protecting participant identity is vital. This involves removing personally identifiable information (PII) such as names, addresses, and medical record numbers whenever possible. Techniques like data masking or de-identification are employed.

- Data Security: Data should be stored securely to prevent unauthorized access, modification, or disclosure. This includes using secure servers, encryption, and access control mechanisms.

- Data Integrity and Accuracy: Maintaining data integrity means ensuring the data is accurate, complete, and consistent throughout the research process. This includes proper documentation, version control, and data validation techniques.

- Bias and Fairness: Being aware of potential biases in data collection, analysis, and interpretation is critical. We need to strive for fairness and avoid perpetuating harmful stereotypes or inequalities.

- Data Sharing and Transparency: Open and transparent data sharing practices promote reproducibility and accountability. Researchers should make their data and methods readily available (within ethical and legal constraints).

In my work, I always prioritize these ethical considerations. For instance, in a study involving genomic data, I rigorously followed anonymization protocols, ensuring no individual could be identified from the analyzed data.
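
As a rough sketch of the de-identification step mentioned above, the snippet below drops direct identifiers and replaces the record ID with a keyed hash. The field names, the `pseudonymize` helper, and the sample record are hypothetical, and this is pseudonymization rather than full anonymization: it is one possible building block, not a complete protocol.

```python
import hashlib
import hmac

# Hypothetical field names; adapt to the study's actual schema.
DIRECT_IDENTIFIERS = {"name", "address", "medical_record_number"}

def pseudonymize(record, secret_key, id_field="medical_record_number"):
    """Drop direct identifiers and add a keyed-hash pseudonym.

    The same input ID always maps to the same pseudonym, so records can
    still be linked, but the ID cannot be recomputed without the key.
    """
    digest = hmac.new(secret_key, str(record[id_field]).encode("utf-8"),
                      hashlib.sha256).hexdigest()
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    cleaned["subject_id"] = digest[:16]
    return cleaned

# Placeholder key: in real use, generate it randomly and store it securely.
key = b"replace-with-a-randomly-generated-secret"
patient = {"name": "Jane Doe", "address": "1 Example St",
           "medical_record_number": "MRN-0042", "age": 57, "genotype": "AG"}

safe = pseudonymize(patient, key)
print(safe)
```

Remaining fields such as age or rare genetic variants can still act as quasi-identifiers, so a step like this would normally sit alongside access controls and a review of re-identification risk.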

Q 24. How do you determine the appropriate level of significance for a hypothesis test?

The level of significance (alpha) in a hypothesis test represents the probability of rejecting the null hypothesis when it’s actually true (Type I error). It’s like setting a threshold for how much evidence you need to be convinced to reject a claim.

The most commonly used significance level is 0.05, meaning that when the null hypothesis is true, there’s a 5% chance of rejecting it in error (a Type I error). However, the appropriate alpha level depends on the context of the study and the relative consequences of a Type I error versus a Type II error (failing to reject a false null hypothesis).

- High-Risk Scenarios: In situations with high stakes (e.g., medical testing or drug approval), a more stringent alpha level (e.g., 0.01 or even 0.001) might be preferred to minimize the risk of false positives.

- Low-Risk Scenarios: In exploratory or screening work, where a false positive is cheap to follow up but missing a true effect is costly, a less stringent alpha (e.g., 0.1) might be acceptable.

- Prior Knowledge: Pre-existing knowledge about the phenomenon under study can also influence the choice of alpha. If there’s strong prior evidence supporting the alternative hypothesis, a slightly higher alpha might be justifiable.

- Power Analysis: Power analysis relates four quantities: sample size, effect size, alpha, and power (1-beta, where beta is the probability of a Type II error). Fixing any three determines the fourth, so it is used when planning a study to find the sample size needed to detect an effect of a given size, and to see what a stricter alpha costs in sample size or power.

Ultimately, choosing the right alpha involves a balance between the risk of false positives and false negatives. It’s a crucial decision that should be carefully considered before conducting a hypothesis test.
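
To make the trade-off concrete, here is a small sketch of how the required sample size grows as alpha becomes stricter. It assumes a two-sided, two-sample comparison of means and uses the normal approximation; the function name `sample_size_per_group` and the effect size of 0.5 are illustrative choices, and an exact t-based calculation would give slightly larger numbers.

```python
import math
from statistics import NormalDist

def sample_size_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-sample comparison of means.

    Normal approximation: n = 2 * ((z_{1-alpha/2} + z_{power}) / d)^2,
    where d is the standardized effect size (Cohen's d).
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)

# Same effect size and power, progressively stricter alpha.
for alpha in (0.10, 0.05, 0.01, 0.001):
    n = sample_size_per_group(effect_size=0.5, alpha=alpha)
    print(f"alpha={alpha}: about {n} participants per group")
```

Running it shows that lowering alpha at fixed power always requires more participants, which is why the choice of alpha is usually made together with the sample-size plan rather than after the data are in.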

Q 25. Explain your understanding of different sampling methods and their implications.

Sampling methods are essential for collecting data efficiently and representatively. Imagine trying to measure the average height of every tree in a forest – impossible! Sampling allows us to make inferences about the population based on a smaller subset.

- Simple Random Sampling: Each member of the population has an equal chance of being selected. This is like drawing names from a hat. It’s straightforward, but when the population is heterogeneous a small sample can under-represent minority subgroups purely by chance.

- Stratified Sampling: The population is divided into strata (subgroups) based on relevant characteristics (e.g., age, gender), and random samples are drawn from each stratum. This ensures representation from all subgroups.

- Cluster Sampling: The population is divided into clusters (groups), and a random sample of clusters is selected. All members within the selected clusters are then included in the sample. This is efficient, but because members of the same cluster tend to resemble each other, it usually gives less precise estimates than a simple random sample of the same size.

- Systematic Sampling: Every kth member of the population is selected after a random starting point. This is simple but can be biased if the population’s ordering has a periodic pattern that lines up with the interval k.

- Convenience Sampling: Samples are selected based on ease of access. This is often used in pilot studies but is prone to bias and shouldn’t be used for definitive conclusions.

The choice of sampling method significantly impacts the generalizability of the results. For example, using convenience sampling to study the opinions of voters might lead to a biased estimate of the overall electorate’s preferences.
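
The four probability-based methods above can be sketched with Python's `random` module. The forest of 1,000 trees, the species and plot fields, and the helper names are all hypothetical and chosen only to make the differences visible.

```python
import random
from collections import defaultdict

def simple_random_sample(population, n, rng):
    """Every unit has the same chance of selection."""
    return rng.sample(population, n)

def stratified_sample(population, stratum_of, fraction, rng):
    """Draw the same fraction at random from every stratum."""
    strata = defaultdict(list)
    for unit in population:
        strata[stratum_of(unit)].append(unit)
    sample = []
    for members in strata.values():
        k = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, k))
    return sample

def systematic_sample(population, k, rng):
    """Take every kth unit after a random start in [0, k)."""
    start = rng.randrange(k)
    return population[start::k]

def cluster_sample(population, cluster_of, n_clusters, rng):
    """Pick whole clusters at random and keep all of their members."""
    clusters = defaultdict(list)
    for unit in population:
        clusters[cluster_of(unit)].append(unit)
    chosen = rng.sample(sorted(clusters), n_clusters)
    return [unit for c in chosen for unit in clusters[c]]

# Hypothetical forest: every 10th tree is an oak, plots hold 50 trees each.
rng = random.Random(42)
trees = [{"id": i, "species": "oak" if i % 10 == 0 else "pine", "plot": i // 50}
         for i in range(1000)]

srs = simple_random_sample(trees, 50, rng)
strat = stratified_sample(trees, lambda t: t["species"], 0.05, rng)
syst = systematic_sample(trees, 20, rng)
clus = cluster_sample(trees, lambda t: t["plot"], 2, rng)

def oak_count(sample):
    return sum(t["species"] == "oak" for t in sample)

for name, s in [("simple", srs), ("stratified", strat),
                ("systematic", syst), ("cluster", clus)]:
    print(f"{name}: n={len(s)}, oaks={oak_count(s)}")
```

In this toy data the oaks fall on every 10th position, so the systematic sample with k = 20 ends up containing either only oaks or no oaks at all, depending on the random start. The stratified sample, by contrast, always contains exactly 5% oaks by construction.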

Q 26. How do you evaluate the accuracy and precision of scientific measurements?

Accuracy and precision are critical aspects of scientific measurements. Accuracy reflects how close a measurement is to the true value, while precision reflects the reproducibility of the measurement (how close repeated measurements are to each other).

Imagine shooting arrows at a target. High accuracy means the arrows land, on average, on the bullseye, while high precision means the arrows are tightly clustered together, regardless of whether that cluster is near the bullseye.

- Assessing Accuracy: Accuracy is often evaluated by comparing measurements to a known standard or reference value. The difference between the measured value and the true value is the error. The magnitude of the error indicates the accuracy.

- Assessing Precision: Precision is typically assessed by calculating the standard deviation or variance of repeated measurements. A smaller standard deviation indicates higher precision.

- Error Propagation: When measurements are combined in calculations, errors propagate. Understanding how errors combine is crucial for evaluating the overall uncertainty of the results. For independent errors, absolute uncertainties add in quadrature for sums and differences, and relative uncertainties add in quadrature for products and quotients.

- Calibration: Regular calibration of instruments helps ensure accuracy by comparing the instrument’s readings to a known standard.

- Control Experiments: Including control experiments in a study helps assess the accuracy and reliability of the experimental procedures and measurements.

In my work, I always carefully consider both accuracy and precision. For instance, when using a spectrophotometer to measure absorbance, I ensure the instrument is properly calibrated and perform multiple measurements to assess precision and calculate the associated uncertainty.
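
A short sketch of how these quantities might be computed from replicate readings follows. The absorbance values, the certified reference value of 0.500, and the calibration slope are hypothetical numbers, and the propagation formula assumes the two uncertainties are independent.

```python
import math
import statistics

def bias(measurements, reference):
    """Accuracy: mean deviation from a known reference value."""
    return statistics.fmean(measurements) - reference

def precision(measurements):
    """Precision: sample standard deviation of repeated measurements."""
    return statistics.stdev(measurements)

def standard_error(measurements):
    """Uncertainty of the mean of the repeated measurements."""
    return statistics.stdev(measurements) / math.sqrt(len(measurements))

def quotient_with_uncertainty(a, sigma_a, b, sigma_b):
    """Value and uncertainty of a / b, assuming independent errors
    (relative uncertainties add in quadrature)."""
    value = a / b
    relative = math.sqrt((sigma_a / a) ** 2 + (sigma_b / b) ** 2)
    return value, abs(value) * relative

# Hypothetical replicate absorbance readings of a standard certified at 0.500.
readings = [0.512, 0.509, 0.511, 0.510, 0.513]

print("bias:", round(bias(readings, 0.500), 4))
print("standard deviation:", round(precision(readings), 4))

# Hypothetical calibration slope (absorbance per mg/L) with its uncertainty.
slope, slope_sigma = 0.0250, 0.0005
conc, conc_sigma = quotient_with_uncertainty(
    statistics.fmean(readings), standard_error(readings), slope, slope_sigma)
print(f"concentration: {conc:.2f} +/- {conc_sigma:.2f} mg/L")
```

These readings are tightly grouped but sit consistently above the reference, which is the "precise but not accurate" case: the small standard deviation says nothing about the offset, and only the comparison with the standard reveals that recalibration is needed.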

Q 27. Describe your experience with using scientific databases and literature.

My experience with scientific databases and literature is extensive. I am proficient in using various databases like PubMed (for biomedical literature), Web of Science (for multidisciplinary research), and specialized databases relevant to my specific field of research (e.g., GenBank for genetic sequences).

I’m adept at formulating effective search strategies using keywords, Boolean operators (AND, OR, NOT), and advanced search features to retrieve relevant publications and data. I critically evaluate the quality and reliability of the information obtained from these sources.

- Literature Reviews: I routinely conduct comprehensive literature reviews to stay updated on the latest research findings and identify knowledge gaps in my area of expertise. I use reference management software to organize and cite the literature appropriately.

- Data Mining: I’m skilled in extracting relevant data from scientific databases, such as extracting gene expression data from Gene Expression Omnibus (GEO) or protein-protein interaction data from STRING.

- Data Validation: I always validate data obtained from external sources before using it in my analysis. This involves checking data consistency, accuracy, and completeness, and cross-referencing with other reliable sources.

- Citation Management: I employ tools like Zotero or Mendeley to manage references and ensure accurate and consistent citations in my publications and reports.

In a recent research project, I used PubMed to conduct a systematic review of existing literature on a specific disease, extracting relevant data from numerous studies to build a comprehensive understanding of the disease’s pathogenesis and potential treatment strategies.
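
As an illustration of the Boolean search strategy described above, the sketch below assembles a PubMed-style query and the corresponding request URL. The `boolean_query` helper and the search terms are hypothetical; the `esearch` endpoint and its `db`, `term`, `retmax`, and `retmode` parameters belong to NCBI's public E-utilities API, and the snippet only builds the URL without sending a request.

```python
from urllib.parse import urlencode

def boolean_query(all_of=(), any_of=(), none_of=(), field="Title/Abstract"):
    """Combine terms with AND / OR / NOT, each restricted to one search field."""
    def tag(term):
        return f'"{term}"[{field}]'

    parts = [tag(t) for t in all_of]
    if any_of:
        parts.append("(" + " OR ".join(tag(t) for t in any_of) + ")")
    if not parts:
        raise ValueError("at least one required or optional term is needed")
    query = " AND ".join(parts)
    if none_of:
        query += " NOT (" + " OR ".join(tag(t) for t in none_of) + ")"
    return query

# Illustrative terms only; a real review would derive them from its protocol.
query = boolean_query(all_of=["type 2 diabetes"],
                      any_of=["metformin", "insulin"],
                      none_of=["pediatric"])

ESEARCH = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"
url = ESEARCH + "?" + urlencode(
    {"db": "pubmed", "term": query, "retmax": 20, "retmode": "json"})

print(query)
print(url)
```

Keeping the query construction in one function makes the search reproducible: the exact string can be recorded in the methods section of a systematic review and rerun later.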

Key Topics to Learn for Ability to Interpret and Apply Scientific Data Interviews

- Data Visualization and Interpretation: Understanding various chart types (bar graphs, scatter plots, histograms, etc.), identifying trends, outliers, and correlations within datasets. Practicing critical analysis of presented data to draw meaningful conclusions.

- Statistical Analysis Fundamentals: Grasping core statistical concepts like mean, median, mode, standard deviation, and their application in interpreting data significance. Understanding p-values and confidence intervals.

- Experimental Design and Methodology: Analyzing experimental setups, identifying potential biases and limitations, and evaluating the validity and reliability of research findings. This includes understanding different study designs (e.g., randomized controlled trials, observational studies).

- Data Presentation and Communication: Effectively communicating scientific findings through clear and concise presentations, reports, or visualizations. Practicing explaining complex data in a way that is understandable to both technical and non-technical audiences.

- Problem-Solving using Scientific Data: Applying learned concepts to real-world scenarios. Practice analyzing case studies and hypothetical scenarios, and developing data-driven solutions to scientific problems.

- Specific Software Proficiency (if applicable): Demonstrate your skills with relevant software packages like R, Python (with libraries like Pandas and Matplotlib), SPSS, or others commonly used in your field.

Next Steps

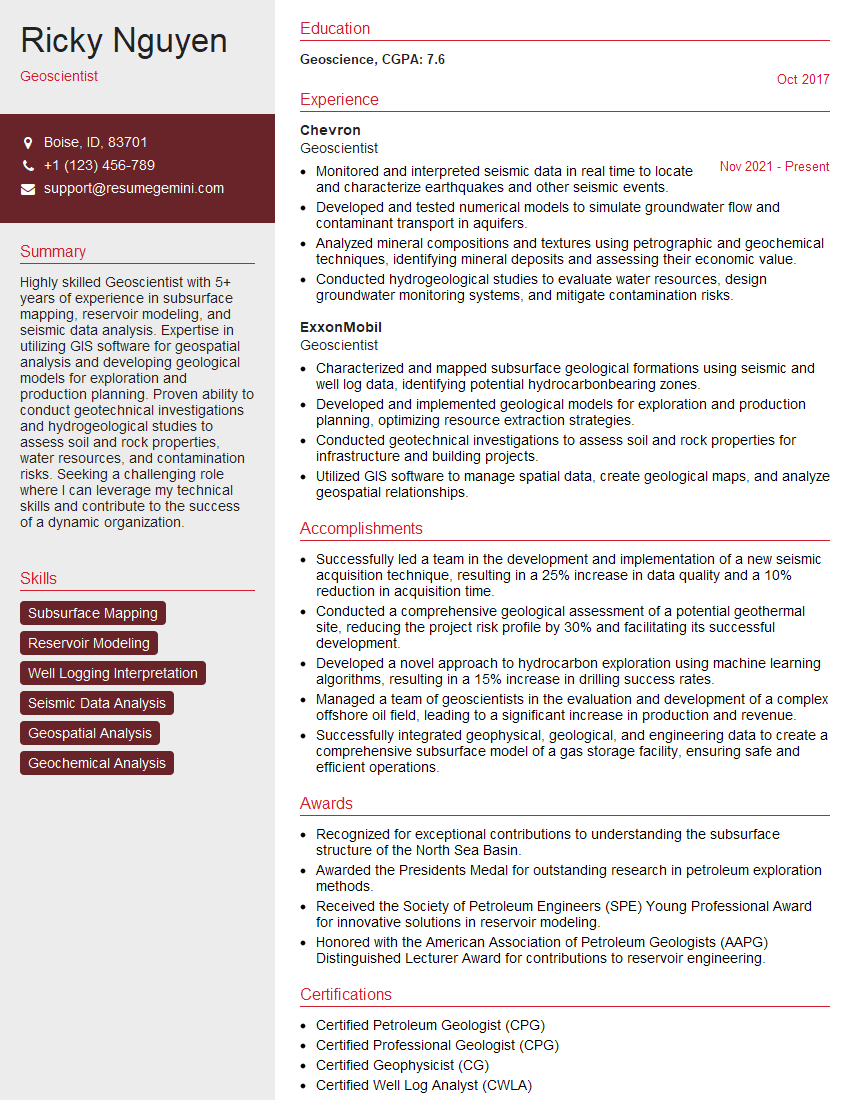

Mastering the ability to interpret and apply scientific data is crucial for career advancement in virtually any scientific field. It demonstrates critical thinking, problem-solving skills, and a deep understanding of your chosen discipline. To maximize your job prospects, create an ATS-friendly resume that clearly highlights these skills. ResumeGemini is a trusted resource that can help you build a professional and impactful resume tailored to your specific experience and target roles. Sample resumes that showcase expertise in interpreting and applying scientific data are available through ResumeGemini to guide your own.
