Every successful interview starts with knowing what to expect. In this blog, we’ll take you through the top Aircraft Test Execution interview questions, breaking them down with expert tips to help you deliver impactful answers. Step into your next interview fully prepared and ready to succeed.

Questions Asked in Aircraft Test Execution Interview

Q 1. Explain the different phases of aircraft testing.

Aircraft testing is a rigorous process, broken down into distinct phases to ensure safety and functionality. Think of it like building a house – you wouldn’t put on the roof before the foundation is secure! These phases are typically:

- Design Verification Testing (DVT): This early stage focuses on verifying the design’s basic functionality through simulations, component testing, and early hardware-in-the-loop (HIL) testing. We’re checking that the individual parts work as intended before combining them.

- System Integration Testing (SIT): Once individual components pass DVT, they are integrated. This phase tests the interaction between various systems (e.g., flight controls, avionics, propulsion) to ensure seamless operation. Think of it as making sure the plumbing and electrical systems work together in the house.

- Functional Testing: This phase validates that the aircraft performs its intended functions according to specifications. For example, we’d check if the landing gear deploys correctly, or if the flaps extend smoothly at specific speeds.

- Performance Testing: This phase assesses the aircraft’s performance parameters – speed, altitude, rate of climb, fuel consumption – against design targets. This is crucial for verifying the aircraft’s overall efficiency and capability.

- Endurance Testing: We push the aircraft to its limits to determine its operational lifespan and ability to withstand prolonged use. Think of it as a stress test to identify weaknesses.

- Environmental Testing: This involves subjecting the aircraft to extreme temperature, humidity, and altitude conditions to check its resilience in various climates.

- Flight Testing: The ultimate test! This involves actual flights to validate the aircraft’s performance and handling characteristics in real-world conditions. This involves many test flights, each focusing on specific parameters.

Each phase builds upon the previous one, creating a comprehensive approach to ensuring a safe and reliable aircraft.

Q 2. Describe your experience with flight test instrumentation.

My experience with flight test instrumentation is extensive. I’ve worked with a wide range of sensors and data acquisition systems (DAS), including:

- Accelerometers: Measuring aircraft acceleration and g-forces.

- Rate Gyros: Measuring angular rates of rotation (pitch, roll, yaw).

- Air Data Systems (ADS): Providing airspeed, altitude, and atmospheric pressure data.

- GPS receivers: For accurate position tracking.

- Strain gauges: Measuring stress on structural components.

- Temperature sensors: Monitoring various temperatures throughout the aircraft.

I’m proficient in configuring DAS, calibrating sensors, troubleshooting data acquisition problems, and using specialized software for data analysis. In one project, we integrated a new sensor system to monitor engine vibration in real-time, which significantly improved our ability to detect potential engine issues during flight testing.

Q 3. How do you ensure test data integrity and traceability?

Data integrity and traceability are paramount in aircraft testing. We achieve this through a combination of rigorous processes and tools:

- Calibration Procedures: All sensors and instruments are calibrated before and after each test run, ensuring accurate measurements. Calibration certificates are meticulously maintained.

- Data Acquisition Systems (DAS): Modern DAS are designed with redundancy, so data is still recorded accurately if a component fails. Timestamping is crucial for precise data correlation.

- Data Validation: Automated scripts check for inconsistencies and outliers in the data. Manual validation is also done by experienced engineers.

- Chain of Custody: We maintain a detailed record of who handled the data at each stage, preventing tampering or loss of data. This includes timestamps and electronic signatures.

- Version Control: All test plans, procedures, data, and analysis reports are managed with version control systems (e.g., Git), allowing us to track changes and revert to previous versions if needed.

By implementing these procedures, we can ensure the accuracy and reliability of our test data, providing a solid foundation for making critical design decisions.
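As a rough illustration of the automated validation and tamper-detection ideas above, here is a minimal Python sketch. The `Sample` type, the range limits, and the text encoding used for the hash are assumptions made for this example; they do not come from any particular DAS.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    t: float      # timestamp in seconds since start of recording
    value: float  # measured value in engineering units


def find_issues(samples, lo, hi):
    """Return (index, reason) pairs for samples that fail basic sanity checks."""
    issues = []
    for i, s in enumerate(samples):
        if not lo <= s.value <= hi:
            issues.append((i, "out of range"))
        if i > 0 and s.t <= samples[i - 1].t:
            issues.append((i, "timestamp not increasing"))
    return issues


def fingerprint(samples):
    """SHA-256 digest of the samples; any later change to the data changes the digest."""
    h = hashlib.sha256()
    for s in samples:
        h.update(f"{s.t:.6f},{s.value:.6f}\n".encode("ascii"))
    return h.hexdigest()


# Hypothetical recording with one bad sample at index 2.
run = [Sample(0.0, 101.2), Sample(0.1, 101.3), Sample(0.1, 999.0), Sample(0.3, 101.1)]
print(find_issues(run, lo=0.0, hi=500.0))
print(fingerprint(run))
```

Storing the digest with the chain-of-custody record lets a reviewer confirm later that the analyzed file is the one that was recorded.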

Q 4. What are the key differences between unit, integration, and system testing in aircraft development?

The three levels of testing – unit, integration, and system – are distinct but interconnected stages in aircraft development. They can be thought of as progressively assembling and testing the ‘house’, from the bricks to the whole structure:

- Unit Testing: This involves testing individual components or modules in isolation. For example, testing a specific software function or a single sensor. We ensure each ‘brick’ is strong.

- Integration Testing: This focuses on verifying the interactions between different units or modules. For example, ensuring the flight control system interacts correctly with the navigation system. This is like building the walls and making sure they are connected properly.

- System Testing: This is the highest level of testing, where the entire system (the complete aircraft) is evaluated as a whole. We check the complete ‘house’ for leaks, cracks, and similar defects. This ensures all systems work together seamlessly.

Each level builds upon the previous one, allowing for early detection and correction of errors, preventing major issues from arising during later stages. Failing to properly execute these stages could lead to costly rework, delays, and even safety risks.

Q 5. Explain your experience with different types of aircraft testing (e.g., functional, performance, endurance).

My experience spans a range of aircraft testing types:

- Functional Testing: I’ve been involved in testing various aircraft systems, including flight controls, landing gear, and avionics. For example, I verified the functionality of an advanced autopilot system in a new fighter jet.

- Performance Testing: I’ve conducted numerous flight tests to measure speed, rate of climb, fuel efficiency, and other key performance parameters. This involved analyzing the data to determine the aircraft’s adherence to design specifications. In one case, I determined the optimal approach speeds for a new passenger plane design.

- Endurance Testing: I’ve participated in long-duration flight tests to assess an aircraft’s ability to withstand prolonged operation. This included monitoring the aircraft’s structural integrity, systems performance, and crew fatigue.

- Handling Qualities Testing: This involves evaluating how the aircraft responds to pilot inputs, which directly affects the safety and efficiency of the aircraft.

These different types of testing often overlap, as a single test flight may encompass multiple objectives. For example, an endurance test can also be used to assess performance and handling qualities simultaneously.

Q 6. How do you handle unexpected test results or failures?

Unexpected results or failures are inevitable in aircraft testing. Our response is systematic and follows a structured approach:

- Immediate Action: The first priority is to ensure the safety of the aircraft and crew. If the failure poses an immediate risk, emergency procedures are initiated.

- Data Collection: All relevant data is meticulously collected, including sensor readings, flight recorder data, and pilot reports. This will help trace the origin of the error.

- Failure Analysis: A team of engineers analyzes the data to identify the root cause of the failure. This may involve simulations, modeling, and component-level inspections.

- Corrective Actions: Based on the analysis, corrective actions are implemented to address the failure. This might involve software updates, hardware modifications, or changes to testing procedures.

- Retesting: Once corrective actions are implemented, retesting is conducted to verify that the issue has been resolved and the aircraft is functioning correctly. The tests are repeated to ensure the correction is permanent.

We use fault tree analysis and root cause analysis as our core tools. Once, during a performance test, we observed unexpectedly high vibrations at a specific altitude and speed. By meticulously analyzing flight data and sensor readings, we traced them to a design flaw in a wing component that we were able to correct quickly.

Q 7. Describe your experience with test planning and execution.

Test planning and execution are critical for successful aircraft testing. My approach involves:

- Defining Test Objectives: Clearly stating the goals and scope of the testing, ensuring we target specific aircraft performance or system capabilities.

- Developing Test Plans: Creating comprehensive test plans that outline test procedures, test cases, and expected results. These plans document the method used to achieve the test objectives.

- Resource Allocation: Identifying and allocating the necessary resources, including personnel, equipment, and facilities. The right resources are essential to achieve test objectives.

- Test Execution: Supervising and executing the tests according to the plan, ensuring adherence to safety regulations. This involves coordinating various teams, monitoring progress, and documenting results.

- Data Analysis: Analyzing the test data to verify compliance with specifications, and identifying areas for improvement. This helps optimize the aircraft design or operational procedures.

- Reporting: Generating comprehensive test reports that summarize the findings and recommendations.

In a recent project, I led the planning and execution of a series of flight tests to evaluate a new navigation system. We meticulously defined test cases covering various scenarios, including normal operations, failures, and emergencies, ensuring that the system is robust and reliable.

Q 8. What software tools are you proficient in for aircraft test execution?

Proficiency in software tools is crucial for efficient aircraft test execution. My expertise spans several categories. For test management and planning, I’m highly skilled in tools like Jama Software and DOORS, which allow for meticulous tracking of requirements, test cases, and defects. These tools are invaluable for maintaining traceability throughout the testing lifecycle. For data acquisition and analysis, I’m proficient with National Instruments DIAdem and similar data visualization and processing software. These are essential for interpreting the massive datasets generated during flight tests and simulations. Finally, for automating repetitive tasks and creating scripts, I have strong experience with Python and MATLAB, often used to interface with test equipment and analyze data. For example, I once used Python to automate the process of comparing flight test data against predicted values, significantly reducing the time required for initial data analysis.

- Jama Software: Requirement management and test case tracking.

- DOORS (Dynamic Object-Oriented Requirements System): Requirement management and traceability.

- NI DIAdem: Data acquisition, visualization, and analysis.

- Python: Automation, data processing, and scripting.

- MATLAB: Data analysis, modeling, and simulation.
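The kind of Python automation mentioned above, comparing flight test data against predicted values, could be sketched roughly as follows. The function name, the relative tolerance, and the sample climb-rate numbers are all hypothetical and serve only to illustrate the idea.

```python
def compare_to_prediction(measured, predicted, rel_tol):
    """Return (index, measured, predicted, relative_error) for points outside tolerance."""
    if len(measured) != len(predicted):
        raise ValueError("measured and predicted must have the same length")
    flagged = []
    for i, (m, p) in enumerate(zip(measured, predicted)):
        if p == 0:
            raise ValueError("relative error is undefined for a zero prediction")
        rel_err = abs(m - p) / abs(p)
        if rel_err > rel_tol:
            flagged.append((i, m, p, rel_err))
    return flagged


# Hypothetical climb-rate data in ft/min at three test points.
measured_fpm = [1980.0, 2050.0, 1700.0]
predicted_fpm = [2000.0, 2000.0, 2000.0]

for i, m, p, err in compare_to_prediction(measured_fpm, predicted_fpm, rel_tol=0.05):
    print(f"test point {i}: measured {m}, predicted {p}, error {err:.1%}")
```

A script like this only flags points for review; deciding whether a deviation matters still takes engineering judgment.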

Q 9. How do you manage risk during aircraft testing?

Risk management in aircraft testing is paramount, as safety is the highest priority. My approach is systematic, integrating risk assessment throughout the testing process. It begins with identifying potential hazards using techniques like Failure Modes and Effects Analysis (FMEA) and Test Hazard Analysis (THA). For example, we might identify the risk of engine failure during a high-speed flight test. We then analyze the probability and severity of each identified risk, quantifying the potential consequences of its occurrence. Mitigation strategies are then developed and implemented. These strategies might involve redundancy in critical systems, increased monitoring, or modifications to the test plan. Risk is continuously monitored and reassessed during testing, and the test plan is dynamically adjusted as needed. This might involve introducing additional safety checks or limiting the test envelope in response to unexpected findings. Comprehensive documentation throughout the process ensures traceability and accountability.
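To make the probability-and-severity step concrete, here is a small Python sketch of a risk scoring scheme. The 1 to 5 scales, the score thresholds, and the example hazards are assumptions chosen for illustration; a real program would use its own organization's risk matrix.

```python
from dataclasses import dataclass


@dataclass
class Hazard:
    name: str
    probability: int  # 1 (improbable) to 5 (frequent); hypothetical scale
    severity: int     # 1 (negligible) to 5 (catastrophic); hypothetical scale

    @property
    def score(self):
        return self.probability * self.severity


def classify(hazard, medium_at=6, high_at=15):
    """Bucket a hazard by score; the thresholds are illustrative assumptions."""
    if hazard.score >= high_at:
        return "high"
    if hazard.score >= medium_at:
        return "medium"
    return "low"


hazards = [
    Hazard("Telemetry dropout", probability=3, severity=2),
    Hazard("Engine failure at high speed", probability=2, severity=5),
    Hazard("Bird strike on approach", probability=1, severity=4),
]

# Review the highest-scoring hazards first.
ranked = sorted(hazards, key=lambda h: h.score, reverse=True)
for h in ranked:
    print(f"{h.name}: score {h.score}, {classify(h)}")
```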

Q 10. What is your experience with test automation in an aircraft testing environment?

Automation is essential for increasing efficiency and reducing costs in aircraft testing. I have significant experience in automating various aspects of the testing process, including test case execution, data acquisition, and data analysis. I’ve worked extensively with frameworks like pytest and Robot Framework. These frameworks allow for the creation of reusable test scripts that can be easily integrated into continuous integration/continuous deployment (CI/CD) pipelines. For example, I developed a suite of automated tests for a flight control system using pytest. These tests verified the system’s response to various inputs and fault conditions, significantly reducing testing time and increasing test coverage. The automated tests also allowed for early detection of regressions during software updates, ensuring system reliability. I also have experience integrating test automation with HIL simulations, further increasing the efficiency and realism of testing.
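A pytest-style suite of the kind described might look something like the sketch below. The `elevator_command` function is a stand-in written for this example, not a real flight control law, and the travel limit and fail-safe behavior are assumptions. pytest collects functions whose names start with `test_`, so saving this as a `test_*.py` file and running `pytest` would execute all three checks.

```python
MAX_ELEVATOR_DEG = 25.0  # hypothetical surface travel limit


def elevator_command(stick, sensor_valid=True):
    """Hypothetical control law: map stick input in [-1, 1] to elevator degrees."""
    if not sensor_valid:
        return 0.0  # assumed fail-safe: neutral elevator when the input is flagged invalid
    stick = max(-1.0, min(1.0, stick))  # saturate out-of-range inputs
    return stick * MAX_ELEVATOR_DEG


def test_nominal_input_scales_linearly():
    assert elevator_command(0.5) == 12.5


def test_out_of_range_input_is_saturated():
    assert elevator_command(3.0) == MAX_ELEVATOR_DEG
    assert elevator_command(-3.0) == -MAX_ELEVATOR_DEG


def test_invalid_sensor_commands_neutral():
    assert elevator_command(0.8, sensor_valid=False) == 0.0
```

Because each test is a plain function with assertions, the same file can run unchanged on a developer machine or in a CI pipeline after every software update.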

Q 11. How do you ensure compliance with regulatory standards (e.g., FAA, EASA)?

Compliance with regulatory standards like those from the FAA (Federal Aviation Administration) and EASA (European Union Aviation Safety Agency) is non-negotiable. My experience encompasses meticulous adherence to these regulations in all phases of aircraft testing. This starts with understanding the specific certification requirements for the aircraft being tested, such as those in 14 CFR Part 25 (and its EASA counterpart, CS-25) for transport category airplanes. Throughout the testing process, rigorous documentation is maintained, including test plans, test procedures, test results, and deviations. Traceability matrices are used to link test cases to requirements and ensure that all requirements are adequately verified. Data integrity is ensured through the use of calibrated equipment and validated data acquisition systems. All processes and data are subjected to independent audits and reviews to ensure compliance with regulatory guidelines. For example, in a recent project, we ensured compliance with DO-178C (Software Considerations in Airborne Systems and Equipment Certification) for software testing, carefully documenting our procedures and adhering to all safety regulations. Non-compliance is immediately reported and addressed, preventing potential safety hazards.
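A traceability matrix is, at its core, a mapping from requirements to the test cases that verify them. The following Python sketch shows one minimal way to build it and find coverage gaps. The requirement and test case IDs are hypothetical, and in practice this data would usually live in a requirements management tool rather than a script.

```python
def trace_matrix(requirements, test_cases):
    """Map each requirement ID to the sorted list of test cases that verify it."""
    matrix = {req: [] for req in requirements}
    for tc_id, req_ids in sorted(test_cases.items()):
        for req in req_ids:
            if req not in matrix:
                raise ValueError(f"{tc_id} references unknown requirement {req}")
            matrix[req].append(tc_id)
    return matrix


def uncovered(matrix):
    """Return requirement IDs that no test case verifies."""
    return [req for req, tcs in matrix.items() if not tcs]


# Hypothetical IDs for illustration only.
requirements = ["REQ-001", "REQ-002", "REQ-003"]
test_cases = {
    "TC-11": ["REQ-001", "REQ-003"],
    "TC-10": ["REQ-001"],
}

matrix = trace_matrix(requirements, test_cases)
print(matrix)
print("Not yet verified:", uncovered(matrix))
```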

Q 12. Explain your experience with Hardware-in-the-Loop (HIL) testing.

Hardware-in-the-Loop (HIL) testing is a critical component of aircraft system testing, allowing for the safe and efficient testing of complex systems in a simulated environment. My experience includes designing, executing, and analyzing HIL tests for a variety of aircraft systems, including flight control systems, engine control units, and avionics. I’m proficient in using various HIL simulators and working with real-time operating systems. For example, I participated in a project testing a new autopilot system using a dSPACE HIL simulator. This involved developing test scenarios that replicated various flight conditions, including normal operation, failures, and emergencies. The HIL system allowed us to test the autopilot’s responses in a realistic environment without the risks and costs associated with flight testing. Data analysis from HIL tests helps identify design flaws and verify that the system meets its performance requirements. This allows for early detection and resolution of potential issues, saving both time and money during the development process.

Q 13. How do you interpret and analyze test data?

Interpreting and analyzing test data is a crucial aspect of aircraft test execution. This involves not only understanding the raw data but also using statistical methods and engineering judgment to extract meaningful insights. My approach begins with thorough data validation to ensure accuracy and identify any outliers or anomalies. I then use various statistical techniques like regression analysis and time-series analysis to understand trends and relationships within the data. Visualization tools, such as those in NI DIAdem, are essential in identifying patterns and potential issues. I often compare test results against predicted values derived from models and simulations to determine whether the system is performing as expected. In addition to quantitative analysis, I also utilize qualitative data, such as pilot reports and observations, to gain a comprehensive understanding of system behavior. For instance, analyzing flight test data revealed an unexpected vibration at a particular flight condition. Using data visualization and analysis, we were able to pinpoint the source of the vibration and implement a corrective action.
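As a simple illustration of the regression step, the sketch below fits a straight line by ordinary least squares and reports the residuals, which is where unexpected behavior tends to show up. The airspeed and fuel-flow numbers are invented for the example, and a real analysis would usually rely on a numerical library rather than hand-written formulas.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need at least two (x, y) pairs of equal length")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def residuals(x, y, slope, intercept):
    """Difference between each measurement and the fitted line."""
    return [yi - (slope * xi + intercept) for xi, yi in zip(x, y)]


# Hypothetical data: fuel flow (lb/h) recorded at several airspeeds (kt).
airspeed_kt = [200.0, 220.0, 240.0, 260.0, 280.0]
fuel_flow = [1010.0, 1095.0, 1205.0, 1290.0, 1400.0]

slope, intercept = fit_line(airspeed_kt, fuel_flow)
print(f"slope={slope:.3f} lb/h per kt, intercept={intercept:.1f} lb/h")
print([round(r, 1) for r in residuals(airspeed_kt, fuel_flow, slope, intercept)])
```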

Q 14. Describe your experience with fault isolation and troubleshooting.

Fault isolation and troubleshooting are critical skills in aircraft testing. My approach is systematic and methodical, starting with a thorough review of the test data and any available logs to identify the symptom and its context. I then use diagnostic tools and techniques to isolate the root cause of the problem. This may involve analyzing sensor data, reviewing system logs, and performing targeted tests. I often use a combination of top-down and bottom-up approaches, starting with a high-level overview of the system and gradually drilling down to the component level. Good communication and teamwork are also vital; collaboration with engineers from different disciplines helps to understand the system’s complexities and identify the source of a malfunction. For example, during a flight test, an unexpected anomaly was observed in the flight control system. By systematically analyzing sensor data, logs, and pilot reports, we were able to trace the issue to a faulty sensor. The issue was resolved, demonstrating the ability to effectively isolate faults and implement corrective actions.

Q 15. What is your experience with different types of testing environments (e.g., lab, flightline)?

My experience encompasses both laboratory and flightline testing environments. Lab testing, often using simulators or hardware-in-the-loop (HIL) setups, allows for controlled testing of individual systems or components under various conditions. This is crucial for early-stage testing and debugging, allowing us to isolate problems before moving to more expensive and complex flight tests. For example, I’ve extensively used lab environments to test the performance of a new flight control system under simulated turbulence conditions, identifying and rectifying software glitches before risking a real flight.

Flightline testing, on the other hand, involves conducting tests on the actual aircraft. This allows us to validate system performance in a real-world environment and verify the integration of all systems. For example, I’ve participated in flight tests evaluating the performance of a new avionics suite during various flight maneuvers, including take-off, climb, cruise, and landing. The flightline environment presents unique challenges such as weather variability and unpredictable circumstances which are important factors to consider during testing and reporting.

Q 16. Describe your experience with using test procedures and specifications.

Test procedures and specifications are the backbone of any successful test execution. They provide a structured approach, ensuring consistency and repeatability. My experience involves creating, reviewing, and executing these documents. A well-written test procedure outlines the test objectives, steps to be followed, expected results, and the acceptance criteria. Specifications define the requirements the system must meet. For example, a test procedure might detail the steps for verifying the aircraft’s autopilot system’s response to specific wind shear conditions. A related specification might define the acceptable limits for deviations from the desired flight path under those same conditions. Any deviation from the procedure is carefully documented and reported, highlighting the importance of meticulous adherence to the defined process.

Q 17. How do you document test results and generate reports?

Documentation and reporting are paramount. We use a combination of methods to document test results. This often includes detailed logs capturing sensor data, system responses, and any observed anomalies. We also utilize specialized software tools to collect, analyze, and visualize test data, creating comprehensive reports. These reports present the results against the defined requirements and specifications. For instance, in a flight test evaluating fuel efficiency, we meticulously document the fuel consumed at different flight phases and compare that to the expected values, clearly indicating whether the aircraft met or exceeded the efficiency targets. We use charts and graphs to make complex data easily digestible and accessible for all stakeholders.

Q 18. How do you prioritize test cases?

Prioritizing test cases involves a strategic approach. We consider several factors, including risk, criticality, and dependencies. High-risk test cases, those that could lead to significant safety or performance issues if they fail, receive top priority. Critical functionalities, like flight control systems, are also given precedence. We also prioritize tests based on their dependencies; some tests might require others to be completed first. We often employ a risk-based approach using techniques like Failure Modes and Effects Analysis (FMEA) to identify the most critical areas needing testing. This ensures that resources are focused on the most important aspects of the system, balancing the need for thorough testing with available time and resources.
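One possible way to combine risk scores with dependencies is a topological ordering that always picks the highest-risk test among those whose prerequisites are complete. The Python sketch below assumes hypothetical test IDs and risk scores, and assumes every prerequisite is itself a scored test.

```python
import heapq


def order_tests(risk, depends_on):
    """Order tests so prerequisites run first and, among ready tests, highest risk runs first."""
    remaining = {t: set(depends_on.get(t, ())) for t in risk}
    dependents = {t: [] for t in risk}
    for test, deps in remaining.items():
        for dep in deps:
            dependents[dep].append(test)

    # Min-heap on negative risk, so the highest-risk ready test pops first.
    ready = [(-risk[t], t) for t, deps in remaining.items() if not deps]
    heapq.heapify(ready)

    order = []
    while ready:
        _, test = heapq.heappop(ready)
        order.append(test)
        for child in dependents[test]:
            remaining[child].discard(test)
            if not remaining[child]:
                heapq.heappush(ready, (-risk[child], child))

    if len(order) != len(risk):
        raise ValueError("dependency cycle detected")
    return order


# Hypothetical test IDs and risk scores.
risk = {"TC-GEAR": 6, "TC-FCS-FAULT": 20, "TC-POWER-ON": 3, "TC-AUTOPILOT": 12}
depends_on = {"TC-FCS-FAULT": ["TC-POWER-ON"], "TC-AUTOPILOT": ["TC-FCS-FAULT"]}
print(order_tests(risk, depends_on))
```

This ordering is greedy: it does not look ahead, so a low-risk prerequisite that unlocks a high-risk test may be scheduled later than a planner would like. Treat the output as a starting point for review rather than a final schedule.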

Q 19. What is your experience with managing test budgets and timelines?

Managing test budgets and timelines requires careful planning and execution. We start by creating detailed budget breakdowns for each test phase and activity. This involves estimating the cost of personnel, equipment, materials, and travel. Timelines are meticulously planned, considering the dependencies between different test activities. Regular monitoring and reporting help us track progress against the budget and timeline and identify any potential deviations early on. For example, if unexpected problems arise during lab testing, leading to schedule delays, we immediately reassess the plan, explore alternative solutions, and communicate potential impacts to stakeholders, enabling proactive mitigation of issues.

Q 20. Explain your understanding of aircraft systems architecture.

My understanding of aircraft systems architecture is comprehensive. I know that it involves a complex interplay of various subsystems, including flight control, propulsion, avionics, and environmental control systems. These systems interact extensively, and their proper integration is crucial for the safe and efficient operation of the aircraft. I’m familiar with avionics data networks such as ARINC 664 Part 7 (commonly known as AFDX), and I understand the importance of data integrity and redundancy. For example, I understand how changes to one system, such as a new navigation system, can impact other systems and require thorough testing to ensure seamless integration and compatibility. A deep understanding of this architecture is vital for effective test planning and execution.

Q 21. How do you collaborate with other engineering teams during testing?

Collaboration is key. During testing, I actively engage with various engineering teams, including design, software, and systems engineers. Clear communication and regular meetings are essential. We utilize collaborative tools to share data, track progress, and resolve issues. For example, if a software bug is discovered during flight testing, I collaborate with the software engineers to investigate the root cause, develop a fix, and retest the affected functionality. Open communication and a collaborative mindset are essential for effectively addressing challenges and achieving the testing objectives.

Q 22. How familiar are you with different aircraft communication systems?

My familiarity with aircraft communication systems is extensive, encompassing both legacy and modern technologies. I’m proficient in understanding and troubleshooting various systems, including VHF/UHF radios, satellite communication systems (SATCOM), Air Traffic Control (ATC) systems, and data link systems like ADS-B. I understand the protocols, data formats, and operational intricacies of these systems. For instance, I have hands-on experience with VHF communications, including frequency coordination, the different types of transmission (e.g., voice, data), and troubleshooting interference issues. I have also worked with ADS-B (Automatic Dependent Surveillance-Broadcast) systems and understand their role in providing real-time aircraft position and other data to air traffic controllers. This knowledge is crucial for ensuring safe and efficient test flights, as communication is paramount to flight safety and data acquisition.

- VHF/UHF Radios: Understanding frequency allocation, power levels, and communication protocols.

- SATCOM: Knowledge of satellite constellations, data rates, and security protocols.

- ATC Systems: Familiarity with communication procedures and regulations.

- Data Link Systems (ADS-B): Understanding data formats, accuracy, and applications in flight testing.

Q 23. What is your experience with real-time data acquisition systems?

My experience with real-time data acquisition systems (RTDAS) is considerable. I’ve worked with various systems, from simple data loggers to sophisticated, integrated systems capable of handling massive datasets from numerous sensors. I’m adept at configuring, calibrating, and troubleshooting these systems, ensuring data integrity and accuracy. This includes understanding the hardware components (sensors, data acquisition units, etc.), the software used for data processing and visualization, and the overall system architecture. For example, I’ve worked extensively with systems that collect flight data parameters like airspeed, altitude, engine performance data, and structural loads. I’m also experienced in data validation techniques that keep the collected data reliable and meaningful and guard against false positives and false negatives, which is essential for any analysis and conclusions drawn from the data.

A recent project involved integrating a new RTDAS into a flight test program. This required close collaboration with software engineers, test engineers, and flight crew. We carefully planned the data acquisition strategy, considering sensor placement, data sampling rates, and data storage capacity. We successfully integrated the system, validated its performance, and collected high-quality data throughout the flight test campaign.
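The trade-off between sampling rates and storage capacity mentioned above comes down to simple arithmetic. The sketch below estimates the raw storage needed for one flight; the channel counts, rates, sample sizes, and flight duration are hypothetical, and the estimate ignores packet headers, timestamps, and compression.

```python
from dataclasses import dataclass


@dataclass
class ChannelGroup:
    count: int             # number of channels in the group
    rate_hz: int           # samples per second per channel
    bytes_per_sample: int  # size of one raw sample


def storage_bytes(groups, duration_s):
    """Raw storage needed to record all channel groups for duration_s seconds."""
    bytes_per_second = sum(g.count * g.rate_hz * g.bytes_per_sample for g in groups)
    return bytes_per_second * duration_s


# Hypothetical instrumentation plan for a three-hour flight.
plan = [
    ChannelGroup(count=40, rate_hz=1000, bytes_per_sample=2),  # e.g. vibration and strain
    ChannelGroup(count=200, rate_hz=50, bytes_per_sample=4),   # e.g. temperatures, air data
]
needed = storage_bytes(plan, duration_s=3 * 3600)
print(f"{needed / 1e9:.3f} GB raw")  # 1.296 GB raw
```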

Q 24. How do you contribute to continuous improvement in aircraft testing processes?

My contribution to continuous improvement in aircraft testing processes involves several key strategies. I actively participate in post-test reviews, analyzing data to identify areas for optimization. This includes evaluating test procedures, data analysis methods, and overall efficiency. I also leverage data analysis to pinpoint inefficiencies and propose improvements to streamline processes. For example, I have used statistical analysis to demonstrate that a specific testing sequence could be significantly shortened without compromising safety or data quality. Furthermore, I proactively seek opportunities to adopt new technologies and best practices to enhance the testing process. I also contribute to the development and maintenance of test procedures, ensuring they are clear, concise, and aligned with evolving regulatory requirements and best practices. Finally, a commitment to knowledge sharing through mentoring junior engineers and actively participating in team discussions is crucial for driving continuous improvement.

Q 25. Describe a challenging aircraft testing situation you faced and how you overcame it.

During a high-altitude flight test, we encountered an unexpected sensor malfunction that affected crucial flight data parameters. This threatened to compromise the objectives of the test, and the pressure to deliver results was significant. My approach involved a systematic troubleshooting process. First, we isolated the affected sensor by comparing data from redundant sensors and cross-referencing it with other systems. We quickly determined the malfunction wasn’t a total system failure, but a specific sensor issue. Next, we explored alternative methods to obtain the required data and decided to use a secondary, less precise sensor as a temporary replacement. This involved recalibrating the system to account for the lower precision of that sensor, which could affect the accuracy of the analysis. This allowed us to salvage a significant part of the test plan. Though our initial data was impacted, we successfully completed the flight test and obtained valuable data by mitigating the impact of the sensor failure. Post-flight analysis helped us understand the root cause of the malfunction, allowing for preventative measures to be implemented in future tests.
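The redundant-sensor comparison described above can be illustrated with a simple median cross-check: any sensor that disagrees with the median of its peers by more than an allowed deviation is flagged. The sensor names, readings, and threshold below are hypothetical, and the approach assumes at least three sensors so that a single faulty one cannot shift the median.

```python
from statistics import median


def suspect_sensors(readings, max_dev):
    """Return names of sensors whose reading differs from the median by more than max_dev."""
    mid = median(readings.values())
    return sorted(name for name, value in readings.items() if abs(value - mid) > max_dev)


# Hypothetical altitude readings (ft) taken at the same instant.
altitude_ft = {"alt_1": 35010.0, "alt_2": 34990.0, "alt_3": 31200.0}
print(suspect_sensors(altitude_ft, max_dev=200.0))  # ['alt_3']
```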

Q 26. Explain your knowledge of different types of flight simulators and their applications in testing.

My understanding of flight simulators encompasses various types, including Hardware-in-the-Loop (HIL) simulators, Full-Flight Simulators (FFS), and lower-fidelity simulators. HIL simulators are crucial for testing aircraft systems in a controlled environment, providing realistic interactions between the system under test and simulated aircraft dynamics. FFS are used for pilot training and certification, but also offer significant value in flight testing by allowing pilots to rehearse complex procedures and test responses in different scenarios before real-world flight. Lower-fidelity simulators offer cost-effective ways to test basic functionalities and perform preliminary tests. The choice of simulator depends on the specific testing needs, budget, and the level of realism required. For instance, HIL simulators are ideal for testing avionics and control systems, ensuring seamless integration and flawless performance, while FFS are beneficial for complex scenarios where pilot interaction and response analysis are crucial.

Q 27. How do you ensure the safety of personnel during aircraft testing operations?

Ensuring personnel safety during aircraft testing is paramount. This involves rigorous adherence to safety protocols, risk assessments, and emergency procedures. We utilize comprehensive pre-flight checklists, thoroughly inspecting aircraft systems and equipment. Flight crews receive extensive training on emergency procedures and are equipped with personal protective equipment (PPE) tailored to the specific test environment. Communication channels are maintained throughout the test, with a clear chain of command and established communication protocols. The test environment is carefully monitored, considering factors like weather conditions and potential hazards. Emergency response plans are in place and regularly tested, ensuring swift and effective action in case of unforeseen circumstances. Regular safety meetings and reviews are conducted to continuously refine our processes and improve safety standards, reducing human error as well as mitigating risk.

Q 28. Explain your understanding of the software development lifecycle (SDLC) and its role in aircraft testing.

The Software Development Lifecycle (SDLC) typically includes requirements gathering, design, coding, testing, deployment, and maintenance, and each of these stages directly shapes the aircraft testing process. In aircraft development, testing is built up in levels: unit testing first, then integration testing, system testing, and ultimately flight testing. This progression ensures that software components function correctly both in isolation and when integrated into the overall aircraft system. The development methodology, such as Agile or Waterfall, also influences when and how testing is performed. Close integration of testing and software development is crucial to system reliability, safety, and airworthiness certification. A solid understanding of the SDLC enables me to identify potential issues early in development, reducing the risk of costly rework and delays later in the lifecycle. For example, engaging early in requirements gathering helps prevent misunderstandings and makes it possible to write tests that verify the software against every specification.
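The difference between unit-level and integration-level testing can be sketched in a few lines. The example below is illustrative only: the stall-warning threshold, the `stall_warning` and `voted_aoa` functions, and the sensor values are all hypothetical and do not come from any real aircraft or standard.

```python
import statistics

STALL_AOA_DEG = 14.0  # hypothetical threshold, for illustration only


def stall_warning(aoa_deg: float) -> bool:
    """Unit under test: warn at or above the threshold."""
    return aoa_deg >= STALL_AOA_DEG


def voted_aoa(sensor_readings_deg):
    """Median vote across redundant sensors to reject a single bad reading."""
    return statistics.median(sensor_readings_deg)


def test_unit_stall_warning():
    # Unit level: the function alone, checked on both sides of the boundary.
    assert stall_warning(13.9) is False
    assert stall_warning(14.0) is True


def test_integration_voting_feeds_warning():
    # Integration level: one failed sensor reading high must not cause a false warning.
    assert stall_warning(voted_aoa([5.0, 5.2, 40.0])) is False
    # Two sensors agreeing above the threshold must trigger the warning.
    assert stall_warning(voted_aoa([15.0, 15.1, 0.0])) is True


if __name__ == "__main__":
    test_unit_stall_warning()
    test_integration_voting_feeds_warning()
    print("all checks passed")
```

The unit test pins down the requirement boundary for one component, while the integration test checks behavior that only appears when components are combined, here the handling of a single faulty sensor.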

Key Topics to Learn for Aircraft Test Execution Interview

- Test Planning & Methodology: Understanding development methodologies (e.g., Waterfall, Agile) and how they shape test planning in aircraft testing. Creating comprehensive test plans, defining test cases, and managing test environments.

- Flight Test Instrumentation & Data Acquisition: Familiarization with various sensors, data acquisition systems, and their integration into aircraft. Understanding data validation and error handling.

- Test Procedure Execution & Reporting: Following established procedures meticulously, documenting test results accurately, and creating concise, informative reports. Identifying and escalating critical issues effectively.

- Aircraft Systems Knowledge: Demonstrating a foundational understanding of aircraft systems (e.g., flight controls, avionics, propulsion) relevant to the specific aircraft being tested.

- Data Analysis & Interpretation: Proficiency in analyzing test data, identifying trends, and drawing meaningful conclusions. Using appropriate tools and techniques for data visualization and presentation.

- Problem Solving & Troubleshooting: Ability to diagnose and resolve technical issues encountered during test execution. Demonstrating systematic troubleshooting approaches and effective communication during problem resolution.

- Safety & Compliance: Understanding and adhering to relevant safety regulations and compliance standards throughout the entire testing process.

- Software & Tools: Familiarity with common software and tools used in aircraft test execution (e.g., data analysis software, test management tools).
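As a small illustration of the data validation topic above, the following sketch flags dropouts, out-of-range values, and sudden spikes in a recorded channel. The function name, the limits, and the airspeed samples are made up for this example, and real flight test data systems apply far more elaborate checks.

```python
import math


def validate_samples(samples, low, high, max_step):
    """Return (index, reason) pairs for samples that fail basic sanity checks."""
    issues = []
    last_good = None  # spikes are judged against the last accepted sample
    for i, value in enumerate(samples):
        if value is None or math.isnan(value):
            issues.append((i, "dropout"))
            continue
        if not (low <= value <= high):
            issues.append((i, "out_of_range"))
            continue
        if last_good is not None and abs(value - last_good) > max_step:
            issues.append((i, "spike"))
            continue
        last_good = value
    return issues


if __name__ == "__main__":
    # Made-up airspeed samples in knots, with three deliberate defects.
    airspeed_kt = [120.0, 121.5, float("nan"), 122.0, 480.0, 123.0, 250.0, 124.0]
    for index, reason in validate_samples(airspeed_kt, low=0.0, high=400.0, max_step=20.0):
        print(f"sample {index}: {reason}")
```

Comparing against the last accepted sample keeps a single spike from also flagging the sample that follows it. The trade-off is that a genuine step change larger than `max_step` would be flagged repeatedly, so that limit has to be chosen per channel.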

Next Steps

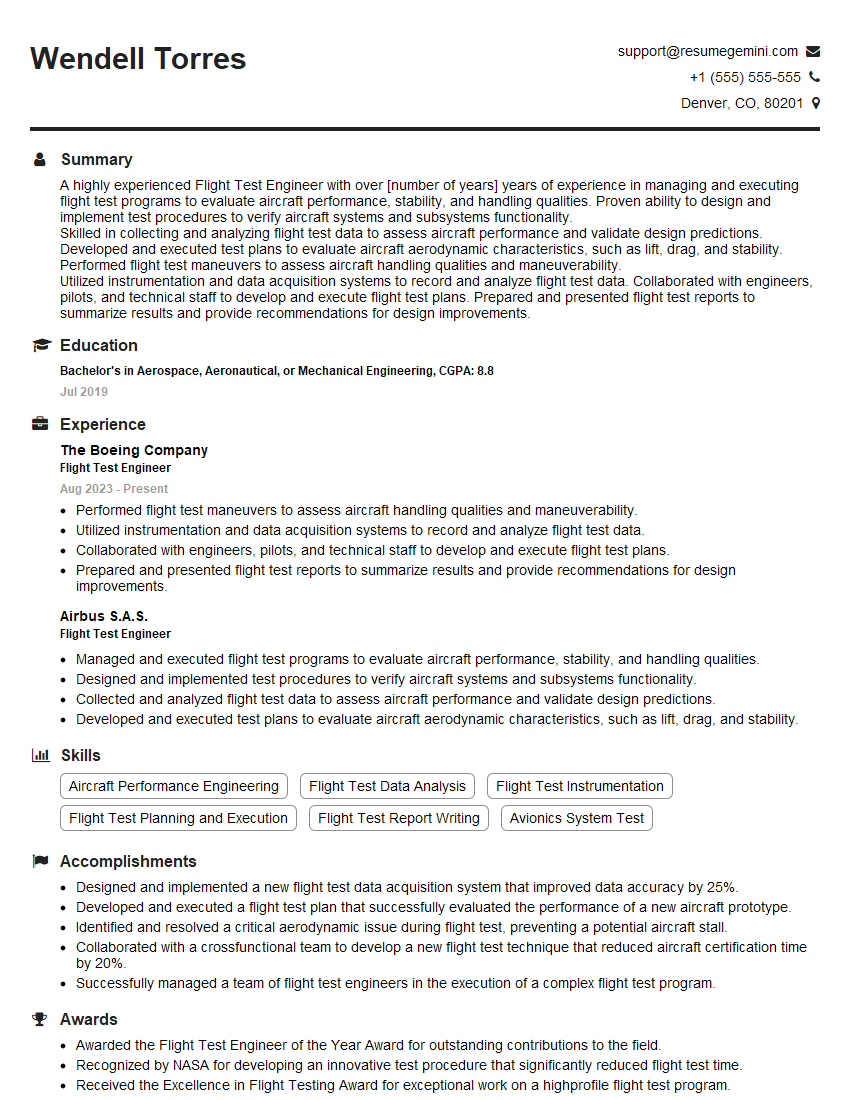

Mastering Aircraft Test Execution opens doors to exciting careers in the aerospace industry, with room for growth and specialization. A strong resume is crucial for showcasing your skills and experience to potential employers, and an ATS-friendly resume significantly increases the chances that your application gets noticed. ResumeGemini is a trusted resource that can help you build a professional, impactful resume tailored to the aerospace industry, and it includes example resumes written specifically for Aircraft Test Execution roles. Invest time in crafting a compelling resume, because it is your first impression!
