Cracking a skill-specific interview, like one for Aircraft Test Planning, requires understanding the nuances of the role. In this blog post, we present the questions you’re most likely to encounter, along with insights into how to answer them effectively. Let’s ensure you’re ready to make a strong impression.

Questions Asked in Aircraft Test Planning Interview

Q 1. Explain the difference between a flight test plan and a test procedure.

A flight test plan is a high-level document outlining the overall strategy for testing a new aircraft or system. It defines the objectives, scope, resources, and schedule for the entire test program. Think of it as the roadmap for the entire journey. A test procedure, on the other hand, is a detailed, step-by-step instruction manual for conducting a specific test. It describes the exact steps, data to be collected, acceptance criteria, and safety precautions for a single test case. It’s like the detailed instructions for navigating a single leg of the journey.

For example, a flight test plan might state the objective is to verify the aircraft’s stall characteristics within a specified flight envelope. A corresponding test procedure would then detail the specific maneuvers, instrumentation to be used, pilot actions, and the criteria for determining a successful stall test.

Q 2. Describe your experience with risk assessment in aircraft test planning.

Risk assessment is crucial in aircraft test planning. It involves identifying potential hazards, analyzing their likelihood and severity, and implementing mitigation strategies. My experience includes using established frameworks like HAZOP (Hazard and Operability Study) and FTA (Fault Tree Analysis) to systematically evaluate risks. For instance, on a project involving a new high-lift device, we used HAZOP to identify potential issues like unintended deployment or structural failure during high-g maneuvers. We assigned a risk level to each hazard, which guided our decisions on safety protocols and test procedures, and developed mitigation strategies such as redundancy in the control system and stringent pre-flight inspections.

This involves considering not only potential failures of the aircraft system itself but also the wider operational context: pilot error, weather conditions, and failures of ground-based support systems. Quantifying these risks helps justify the allocation of resources and prioritize the safety measures that protect the flight crew and test personnel.
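A qualitative risk matrix of the kind described above can be sketched in a few lines of Python. This is a minimal illustration, not a HAZOP or FTA tool: the five-point likelihood and severity scales, the multiplicative score, and the high/medium/low thresholds are all assumptions chosen for the example; real programs take them from their own safety plan.

```python
from dataclasses import dataclass

# Illustrative 1-5 scales (assumed; a real program takes these from its safety plan).
LIKELIHOOD = {"improbable": 1, "remote": 2, "occasional": 3, "probable": 4, "frequent": 5}
SEVERITY = {"negligible": 1, "minor": 2, "major": 3, "hazardous": 4, "catastrophic": 5}


@dataclass
class Hazard:
    name: str
    likelihood: str
    severity: str

    @property
    def score(self) -> int:
        # Risk score = likelihood index x severity index, giving 1..25.
        return LIKELIHOOD[self.likelihood] * SEVERITY[self.severity]

    @property
    def level(self) -> str:
        # Assumed banding thresholds; not taken from any standard.
        if self.score >= 15:
            return "high"
        if self.score >= 5:
            return "medium"
        return "low"


hazards = [
    Hazard("Unintended high-lift device deployment", "probable", "hazardous"),
    Hazard("Structural failure during high-g maneuver", "improbable", "catastrophic"),
    Hazard("Telemetry dropout", "occasional", "negligible"),
]

# Review the hazards from highest to lowest risk.
for h in sorted(hazards, key=lambda h: h.score, reverse=True):
    print(f"{h.score:>2}  {h.level:<6}  {h.name}")
```

A purely multiplicative score can understate rare but catastrophic hazards, which is one reason many programs use an explicit lookup table for the matrix instead of a product.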

Q 3. How do you prioritize test cases to maximize efficiency and coverage?

Prioritizing test cases requires a balanced approach considering risk, criticality, and dependency. I typically use a combination of techniques. Firstly, risk-based prioritization: tests addressing higher-risk components or functionalities are done first. Secondly, criticality prioritization: tests verifying the most crucial aircraft functions or performance parameters are performed earlier. Lastly, dependency prioritization: tests that rely on other tests’ results are scheduled after the tests they depend on. For example, tests verifying the flight control system stability would be prioritized over tests of the in-flight entertainment system.

A risk matrix helps visualize the interplay between risk and criticality. Using it, we run first the tests that deliver the greatest risk reduction or assure critical functions, then proceed systematically through the remaining functionality, so coverage is maximized efficiently.
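The three criteria above can be combined in a small scheduler. The sketch below is a minimal illustration, assuming each test case already has a single numeric priority (for example, derived from the risk matrix) and a list of prerequisite tests; the test names and priority values are hypothetical. It uses Kahn's topological-sort algorithm with a max-priority queue, so a test never runs before its prerequisites, and among tests that are ready, the most important one runs first.

```python
import heapq


def schedule(priority: dict[str, int], depends_on: dict[str, list[str]]) -> list[str]:
    """Order test cases so prerequisites run first and, among ready tests,
    the highest-priority one runs next (Kahn's algorithm with a max-heap)."""
    # Count unmet prerequisites per test and record which tests each one unlocks.
    remaining = {t: 0 for t in priority}
    unlocks: dict[str, list[str]] = {t: [] for t in priority}
    for test, prereqs in depends_on.items():
        for p in prereqs:
            remaining[test] += 1
            unlocks[p].append(test)

    # heapq is a min-heap, so store negative priority; the name breaks ties.
    ready = [(-priority[t], t) for t, n in remaining.items() if n == 0]
    heapq.heapify(ready)
    order = []
    while ready:
        _, test = heapq.heappop(ready)
        order.append(test)
        for nxt in unlocks[test]:
            remaining[nxt] -= 1
            if remaining[nxt] == 0:
                heapq.heappush(ready, (-priority[nxt], nxt))
    if len(order) != len(priority):
        raise ValueError("circular dependency between test cases")
    return order


# Hypothetical test cases: higher number = higher risk/criticality.
priority = {"FCS_stability": 10, "FCS_envelope_expansion": 9,
            "autopilot_modes": 6, "IFE_checkout": 1}
depends_on = {"FCS_envelope_expansion": ["FCS_stability"],
              "autopilot_modes": ["FCS_stability"]}
print(schedule(priority, depends_on))
```

Priority only decides the order among tests that are ready, so a low-priority prerequisite of a critical test still runs early. A dependency cycle raises an error rather than silently dropping tests.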

Q 4. What are the key considerations for developing a test plan for a new aircraft system?

Developing a test plan for a new aircraft system necessitates meticulous attention to numerous factors. Firstly, a clear definition of the system’s requirements and specifications is paramount. Secondly, the regulatory landscape – particularly the requirements set by organizations such as the FAA or EASA – must be carefully considered. Thirdly, resource allocation – personnel, equipment, facilities, and budget – must be realistically planned. Fourthly, a well-defined testing methodology is needed, selecting appropriate test techniques (e.g., functional, performance, endurance tests) based on the system’s complexity and functionality.

For instance, when testing a new autopilot system, we would start by defining specific performance requirements for accuracy, stability, and response time. We’d then design test cases to verify these aspects in different flight conditions. Thorough risk assessment is critical, identifying potential failure modes and implementing safeguards. Finally, the regulatory compliance aspect is addressed by ensuring adherence to relevant airworthiness standards throughout the process.

Q 5. What methods do you use to track test progress and identify potential issues?

Tracking test progress and identifying potential issues requires a robust system. We use a combination of methods, including specialized test management software (like Jama or Jira) to log test cases, record results, and monitor progress. These tools allow for real-time updates on test execution, facilitating early identification of delays or discrepancies. Regular status meetings with all stakeholders are essential for open communication and prompt problem-solving. We also utilize data visualization tools to track key metrics, highlighting areas requiring attention. For instance, a trend analysis of flight data might reveal a gradual degradation in system performance, indicating a potential problem needing immediate investigation.

Furthermore, automated data logging and test reporting improve accuracy and speed and reduce the risk of human error in tracking and analysis. This allows problems to be detected and resolved before they cause significant delays.
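The trend analysis mentioned above can be as simple as fitting a least-squares line to a per-flight metric and flagging a slope steeper than an agreed threshold. The metric, the data, and the `ALERT_SLOPE` threshold below are hypothetical; in practice both would come from the system's requirements and its known run-to-run scatter.

```python
def trend_slope(values: list[float]) -> float:
    """Ordinary least-squares slope of values against their index (flight number)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two points")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxy = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(values))
    sxx = sum((i - x_mean) ** 2 for i in range(n))
    return sxy / sxx


# Hypothetical per-flight metric, e.g. a system's measured response margin (%).
margin_by_flight = [12.1, 12.0, 11.8, 11.9, 11.5, 11.3, 11.1]
ALERT_SLOPE = -0.1  # assumed threshold: % of margin lost per flight that warrants review

slope = trend_slope(margin_by_flight)
if slope < ALERT_SLOPE:
    print(f"Degrading trend: {slope:.3f} per flight, flag for investigation")
```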

Q 6. How do you handle unexpected issues or deviations from the test plan during flight testing?

Unexpected issues during flight testing are inevitable. Our response involves a structured approach. Firstly, safety is paramount: any situation compromising safety necessitates immediate termination of the test and a thorough investigation. Secondly, a deviation report is documented, recording the issue, its context, and the actions taken. Thirdly, a root cause analysis is conducted to understand the underlying reasons for the deviation. Fourthly, the test plan might need revision to incorporate the lessons learned, and potentially additional test cases added to address the uncovered issues. Fifthly, the impact on subsequent tests and the overall program is evaluated, making adjustments as needed.

For example, if an unexpected sensor malfunction occurred during a flight test, the test would be immediately aborted. A deviation report would detail the sensor’s failure, the flight conditions, and the actions taken by the pilot and test crew. A root cause analysis would investigate potential causes like an internal sensor fault, wiring issues, or software bugs. The test plan would be updated, incorporating new tests to validate the sensor’s reliability and explore contingency measures.

Q 7. Explain your understanding of the regulatory requirements for aircraft testing.

My understanding of regulatory requirements for aircraft testing is extensive. These requirements, primarily defined by bodies like the FAA (Federal Aviation Administration) in the US and EASA (European Union Aviation Safety Agency) in Europe, ensure safety and airworthiness. These regulations cover numerous aspects, including the design and conduct of tests, the documentation required (test plans, procedures, reports), the qualification of personnel involved, and the certification process. Compliance also involves meticulous adherence to industry guidance such as RTCA DO-178C (airborne software) and RTCA DO-254 (airborne electronic hardware), which the FAA and EASA recognize as acceptable means of compliance and which define the processes used in developing and verifying flight-critical systems.

For example, testing a new flight control system requires strict adherence to DO-178C, specifying rigorous processes for software development, verification, and validation. Failure to meet these standards could lead to delays or even rejection of the aircraft certification. Understanding and applying these regulations is fundamental to ensure a safe and successful aircraft certification.

Q 8. Describe your experience with test data analysis and reporting.

Test data analysis and reporting are crucial for validating aircraft performance and identifying potential issues. My experience encompasses the entire process, from data acquisition to generating comprehensive reports for stakeholders. This involves:

- Data Cleaning and Validation: Identifying and correcting anomalies or errors in the raw data, ensuring accuracy and reliability. For example, I’ve used scripting languages like Python to automate outlier detection and data smoothing for flight test data.

- Statistical Analysis: Employing statistical methods to analyze data trends, correlations, and significant deviations from expected values. This might involve calculating confidence intervals, performing regression analysis, or using ANOVA to compare different test conditions. A specific example includes analyzing engine performance data to assess its compliance with certification standards.

- Report Generation: Creating clear, concise reports that summarize findings, highlight key results, and present recommendations. This often involves MATLAB or dedicated reporting tools, presenting data visually through charts and graphs to make complex information easily understandable for engineers and management.

- Data Visualization: Transforming raw data into easily interpretable visual representations (charts, graphs, etc.). This allows for quick identification of trends, anomalies, and areas needing further investigation. For instance, I’ve created 3D plots visualizing aircraft trajectory and comparing it to the planned flight path.
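As a rough sketch of the automated outlier detection and smoothing described in the first item, the Python below flags spikes with a Hampel-style filter (a rolling median plus the median absolute deviation, scaled by 1.4826 to approximate a standard deviation) and applies a centered moving average. Window sizes, the 3-sigma threshold, and the sample values are assumptions for illustration; real flight-test pipelines tune these per parameter and sample rate.

```python
from statistics import median


def hampel_outliers(x: list[float], half_window: int = 3, n_sigmas: float = 3.0) -> list[int]:
    """Indices of points farther from the local median than n_sigmas robust sigmas."""
    k = 1.4826  # scales MAD to a standard deviation for Gaussian noise
    flagged = []
    for i in range(len(x)):
        lo, hi = max(0, i - half_window), min(len(x), i + half_window + 1)
        window = x[lo:hi]
        med = median(window)
        mad = median([abs(v - med) for v in window])
        # Simplification: a window with zero MAD (constant data) flags nothing.
        if mad > 0 and abs(x[i] - med) > n_sigmas * k * mad:
            flagged.append(i)
    return flagged


def moving_average(x: list[float], width: int = 3) -> list[float]:
    """Centered moving average; the window shrinks at the ends of the record."""
    half = width // 2
    out = []
    for i in range(len(x)):
        window = x[max(0, i - half): i + half + 1]
        out.append(sum(window) / len(window))
    return out


pitch_rate = [1.0, 1.1, 0.9, 1.0, 9.5, 1.1, 0.9, 1.0, 1.05]  # illustrative samples, deg/s
print(hampel_outliers(pitch_rate))  # → [4]
```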

In a recent project, I used statistical process control (SPC) charts to monitor engine parameters during a series of high-altitude tests, enabling early detection of a subtle but potentially critical trend that could have led to an in-flight engine malfunction. This proactive approach saved significant time and resources.
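The SPC approach in this example can be sketched with an individuals (I-MR) chart: control limits come from a baseline set of runs, with sigma estimated as the average moving range divided by the constant d2 = 1.128, and new data is checked for points beyond the limits and for a run of eight consecutive points on one side of the center line (one of the Western Electric rules). The engine parameter and all values below are hypothetical.

```python
def imr_limits(baseline: list[float]) -> tuple[float, float, float]:
    """Individuals-chart limits: center +/- 3 * (average moving range / 1.128)."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline points")
    center = sum(baseline) / len(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    sigma = (sum(moving_ranges) / len(moving_ranges)) / 1.128
    return center - 3 * sigma, center, center + 3 * sigma


def spc_signals(values, lcl, center, ucl, run_length=8):
    """Return (indices beyond the limits, indices where a same-side run reaches run_length)."""
    beyond = [i for i, v in enumerate(values) if v < lcl or v > ucl]
    runs = []
    side, count = 0, 0
    for i, v in enumerate(values):
        s = (v > center) - (v < center)  # +1 above, -1 below, 0 exactly on the line
        if s != 0 and s == side:
            count += 1
        else:
            count = 1 if s != 0 else 0
        side = s
        if count >= run_length:
            runs.append(i)
    return beyond, runs


# Hypothetical exhaust gas temperature (deg C) at a fixed test condition.
baseline_egt = [600, 602, 598, 601, 599, 600, 603, 597]
test_egt = [601, 601.5, 602, 602, 602.5, 603, 603, 603.5, 604, 609]

lcl, center, ucl = imr_limits(baseline_egt)
beyond, runs = spc_signals(test_egt, lcl, center, ucl)
print(f"limits {lcl:.1f}..{ucl:.1f}, beyond: {beyond}, run rule: {runs}")
```

With these numbers the run rule fires at index 7, two points before the first limit breach at index 9, which is why run rules are useful for catching slow drifts.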

Q 9. How familiar are you with different test methodologies (e.g., waterfall, agile)?

I’m proficient in both waterfall and agile methodologies for aircraft test planning. The choice depends on the project’s specific needs and complexity.

- Waterfall: Suitable for projects with well-defined requirements and minimal anticipated changes. This approach is structured and sequential, with each phase (requirements, design, implementation, testing) completed before moving to the next. I’ve utilized waterfall successfully in legacy aircraft modification programs where changes to the test plan are less frequent.

- Agile: Better suited for projects with evolving requirements or where iterative development and frequent feedback are crucial. Agile’s iterative nature allows for flexibility and adaptation throughout the testing process. I’ve successfully integrated agile principles in new aircraft development programs, making use of sprint cycles to deliver test results and adapt to changing design requirements.

In practice, a hybrid approach, combining the strengths of both methodologies, is often the most effective solution for complex aircraft testing. For instance, the overall framework may follow a waterfall structure, but specific aspects could use agile principles, allowing iterative refinement of test procedures and analysis methods.

Q 10. What software tools do you use for test planning and management?

My experience spans various software tools for test planning and management. The specific tools employed often depend on the project and company standards but commonly include:

- Requirements Management Tools: DOORS (Dynamic Object-Oriented Requirements System) or Jama Software for capturing, tracing, and managing aircraft test requirements.

- Test Management Tools: Jira or HP ALM for test case creation, execution, and defect tracking. These tools are invaluable for organizing test activities and maintaining a clear record of testing progress.

- Data Acquisition and Analysis Software: This includes specialized software like NI DIAdem or MATLAB, along with custom Python scripts or LabVIEW programs for automating data processing and analysis. Specific tools depend on the sensors and data acquisition hardware used.

- Flight Test Simulation Software: Flight simulators coupled with data acquisition tools allow the execution of virtual flight tests, decreasing costs and risks associated with real-world flight tests. This might include tools like X-Plane or other high-fidelity flight simulators.

For example, in a recent project, we used Jama to manage the requirements, Jira to track test progress, and MATLAB to analyze the resulting flight data. The combination of these tools allowed for seamless collaboration and efficient management of the testing process.

Q 11. Explain your experience with flight test instrumentation and data acquisition.

My experience with flight test instrumentation and data acquisition is extensive. I’m familiar with various sensor technologies, data acquisition systems, and data processing techniques. This includes:

- Sensor Selection and Placement: Choosing appropriate sensors (accelerometers, pressure transducers, gyroscopes, etc.) and strategically placing them on the aircraft to capture the necessary data. This requires a thorough understanding of the test objectives and the aircraft’s structure.

- Data Acquisition Systems: Working with various data acquisition systems (DAS), understanding their capabilities and limitations, and configuring them to acquire data at the required sampling rates and accuracy. This includes understanding data transmission protocols, data storage methods and potential data loss issues.

- Data Conditioning and Calibration: Processing raw sensor data to remove noise, correct for biases, and calibrate data to ensure accuracy. This often involves using signal processing techniques and specialized calibration equipment.

- Data Telemetry: Utilizing telemetry systems for real-time data transmission during flight tests, enabling monitoring of aircraft parameters and early identification of potential problems.
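Calibration of the kind described under Data Conditioning and Calibration often starts with a linear fit between the raw sensor output and a reference standard. The sketch below fits gain and offset by ordinary least squares and reports the residuals; the transducer, the ADC counts, and the reference pressures are invented for illustration, and real sensors may need higher-order or temperature-dependent models.

```python
def fit_linear_calibration(raw: list[float], reference: list[float]) -> tuple[float, float]:
    """Least-squares gain and offset so that reference ~= gain * raw + offset."""
    n = len(raw)
    mx, my = sum(raw) / n, sum(reference) / n
    sxx = sum((x - mx) ** 2 for x in raw)
    sxy = sum((x - mx) * (y - my) for x, y in zip(raw, reference))
    gain = sxy / sxx
    return gain, my - gain * mx


# Hypothetical pressure transducer: raw ADC counts recorded while a
# reference standard applied known pressures (kPa).
counts = [410, 1230, 2050, 2870, 3690]
pressure_kpa = [0.0, 25.0, 50.0, 75.0, 100.0]

gain, offset = fit_linear_calibration(counts, pressure_kpa)
residuals = [p - (gain * c + offset) for c, p in zip(counts, pressure_kpa)]
print(f"gain={gain:.5f} kPa/count, offset={offset:.2f} kPa, "
      f"max residual={max(map(abs, residuals)):.3f} kPa")
```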

I recall a project where we had to design a custom sensor array for measuring aerodynamic loads on a new wing design. This involved selecting suitable sensors, integrating them into the wing structure, and developing custom data acquisition and analysis software to manage the large volume of data generated. The process demanded meticulous planning, attention to detail and close collaboration with sensor manufacturers.

Q 12. How do you ensure the safety of personnel and equipment during aircraft testing?

Safety is paramount in aircraft testing. My approach to ensuring the safety of personnel and equipment involves:

- Risk Assessment and Mitigation: Conducting thorough risk assessments to identify potential hazards and develop mitigation strategies. This includes considering factors such as flight conditions, equipment malfunctions, and human error.

- Emergency Procedures: Establishing clear emergency procedures and ensuring that all personnel are adequately trained to respond effectively in case of an incident. This includes regular drills and simulations.

- Safety Equipment and Procedures: Utilizing appropriate safety equipment (e.g., parachutes, fire suppression systems) and adhering to strict safety protocols throughout the testing process. This often involves collaboration with safety officers and adherence to regulatory guidelines.

- Data Monitoring and Real-time Alerts: Implementing systems for real-time data monitoring during flight tests, allowing for quick identification and response to any critical deviations from expected values. The use of automated warning systems is paramount.
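A minimal sketch of real-time limit monitoring: each telemetry frame is compared against a two-tier (caution/warning) upper limit per parameter. The parameter names, limit values, and two-tier scheme are assumptions for illustration, not values from any aircraft; a real system would also handle lower limits, rate limits, and persistence filtering to avoid nuisance alerts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limit:
    parameter: str
    caution: float  # assumed two-tier scheme: caution first, then warning
    warning: float


# Hypothetical upper limits for illustration only.
LIMITS = [
    Limit("nz_g", caution=3.5, warning=4.0),
    Limit("egt_c", caution=850.0, warning=900.0),
    Limit("wing_root_bending_pct", caution=80.0, warning=95.0),
]


def check_frame(frame: dict[str, float], limits: list[Limit]) -> list[tuple[str, str, float]]:
    """Compare one telemetry frame against upper limits; return (level, parameter, value)."""
    alerts = []
    for lim in limits:
        value = frame.get(lim.parameter)
        if value is None:
            # A silent channel is treated as an alert, not skipped.
            alerts.append(("DATA LOSS", lim.parameter, float("nan")))
        elif value >= lim.warning:
            alerts.append(("WARNING", lim.parameter, value))
        elif value >= lim.caution:
            alerts.append(("CAUTION", lim.parameter, value))
    return alerts


print(check_frame({"nz_g": 3.7, "egt_c": 810.0}, LIMITS))
```

Reporting a missing parameter as DATA LOSS is a deliberate choice: during a test point, losing a monitored channel is itself a reason to pause.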

For example, before every flight test, we conduct a comprehensive pre-flight inspection, reviewing the aircraft’s condition and the functionality of all equipment, and ensuring all personnel understand their roles and responsibilities. Where applicable, for example on uncrewed test vehicles or under range safety requirements, we also use flight termination systems as a last-resort safety measure.

Q 13. Describe your experience with test environment setup and configuration.

Setting up and configuring the test environment is a critical step that requires meticulous planning and execution. My experience encompasses:

- Test Facility Selection: Selecting the appropriate test facility based on the test objectives and the required resources. This could range from a dedicated flight test range to a wind tunnel or a ground-based test facility.

- Instrumentation Setup: Installing and calibrating all necessary sensors and equipment, ensuring that they are correctly integrated with the data acquisition system. This includes wiring, cabling, and power supply setup.

- Software Configuration: Configuring the data acquisition software, flight control systems and any supporting software to meet the test requirements. This usually includes thorough testing of the setup before any actual flight tests occur.

- Environmental Considerations: Accounting for environmental factors (temperature, humidity, wind speed, etc.) that could impact the test results. This might involve conducting tests under specific environmental conditions or making corrections for environmental variations.

In one project, we had to set up a complex ground test facility to simulate high-altitude conditions for testing a new engine. This required integrating numerous specialized pieces of equipment, including environmental control chambers and sophisticated data acquisition systems. The entire process needed meticulous planning, close coordination between several teams, and a thorough understanding of the equipment’s capabilities and limitations.
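One common form of environmental correction is referring measured engine data to standard-day conditions with the ratios θ = T/288.15 K and δ = p/101,325 Pa (ISA sea-level values). The sketch below applies the conventional first-order corrections, N/√θ for rotor speed and W_f/(δ√θ) for fuel flow; the input values are hypothetical, and engine-specific programs often use empirically fitted exponents instead.

```python
import math

T0_K = 288.15      # ISA sea-level standard temperature
P0_PA = 101_325.0  # ISA sea-level standard pressure


def corrected_engine_params(n_rpm: float, fuel_flow_kg_s: float,
                            inlet_temp_k: float, inlet_pressure_pa: float) -> tuple[float, float]:
    """Refer measured speed and fuel flow to ISA sea-level conditions
    using first-order theta/delta corrections."""
    theta = inlet_temp_k / T0_K
    delta = inlet_pressure_pa / P0_PA
    corrected_speed = n_rpm / math.sqrt(theta)
    corrected_fuel_flow = fuel_flow_kg_s / (delta * math.sqrt(theta))
    return corrected_speed, corrected_fuel_flow


# Hypothetical reading from a simulated high-altitude test cell condition.
speed_c, wf_c = corrected_engine_params(9500.0, 0.5, inlet_temp_k=250.0, inlet_pressure_pa=30_000.0)
print(f"corrected speed {speed_c:.0f} rpm, corrected fuel flow {wf_c:.3f} kg/s")
```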

Q 14. How do you collaborate with different teams (engineering, manufacturing, etc.) during test planning?

Collaboration is essential in aircraft test planning. Effective communication and coordination with different teams (engineering, manufacturing, operations, etc.) are vital for successful testing. My approach includes:

- Regular Meetings and Communication: Holding regular meetings with all stakeholders to discuss test plans, progress, and any issues that arise. Effective communication channels are crucial for keeping everyone informed and aligned.

- Clear Roles and Responsibilities: Defining clear roles and responsibilities for each team to avoid duplication of effort and ensure accountability. This includes establishing clear reporting lines and decision-making processes.

- Document Sharing and Version Control: Utilizing version control systems to manage all test-related documents (plans, reports, data) and ensure everyone is working with the latest version. This avoids confusion and ensures that everyone is on the same page.

- Issue Tracking and Resolution: Establishing a process for tracking and resolving issues or conflicts that arise during testing. This often involves using issue tracking software and a collaborative approach to problem-solving.

For instance, during a flight test program, I worked closely with the design engineering team to understand design changes impacting test requirements. We collaborated with manufacturing to ensure that the aircraft was correctly instrumented and prepared for testing, and with flight operations to coordinate flight schedules and safety procedures. This close collaboration was crucial in delivering the flight test program on time and within budget.

Q 15. What are your strategies for managing test timelines and budgets?

Managing test timelines and budgets for aircraft testing requires a proactive, multi-faceted approach. It begins with a meticulous initial planning phase, involving detailed breakdown of tasks, accurate resource estimation (personnel, equipment, flight hours), and contingency planning for potential delays. We leverage tools like Earned Value Management (EVM) to track progress against the baseline plan and identify potential cost overruns early.

My strategy includes:

- Detailed Work Breakdown Structure (WBS): Breaking down the entire test program into smaller, manageable tasks, assigning responsible parties, and estimating time and cost for each. This allows for better monitoring and control.

- Risk Management: Identifying potential risks (e.g., weather delays, equipment malfunctions, software bugs) and creating mitigation strategies, including buffer time in the schedule and budget reserves. This ensures we are prepared for unforeseen challenges.

- Regular Monitoring & Reporting: Consistent tracking of progress against the schedule and budget, using both EVM and regular status meetings. Early identification of variances allows for timely corrective action.

- Collaboration & Communication: Maintaining open communication with all stakeholders – engineers, pilots, management – to ensure everyone is informed and any issues are addressed promptly.

For instance, in a recent project, we anticipated potential delays due to weather constraints in the test location. We built a 20% buffer into the schedule and allocated a corresponding budget reserve, effectively mitigating the impact of these delays on the overall timeline and cost.
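The EVM tracking mentioned at the start of this answer reduces to a few standard ratios: the cost performance index CPI = EV/AC, the schedule performance index SPI = EV/PV, and, assuming current cost efficiency persists, an estimate at completion EAC = BAC/CPI. The sketch below computes them; the budget figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EvmStatus:
    bac: float  # budget at completion
    pv: float   # planned value: budgeted cost of work scheduled to date
    ev: float   # earned value: budgeted cost of work actually performed
    ac: float   # actual cost of the work performed

    @property
    def cpi(self) -> float:
        return self.ev / self.ac  # below 1.0 means over budget

    @property
    def spi(self) -> float:
        return self.ev / self.pv  # below 1.0 means behind schedule

    @property
    def eac(self) -> float:
        return self.bac / self.cpi  # assumes the current CPI persists

    @property
    def vac(self) -> float:
        return self.bac - self.eac  # negative means a projected overrun


status = EvmStatus(bac=2_000_000, pv=800_000, ev=700_000, ac=760_000)
print(f"CPI={status.cpi:.2f} SPI={status.spi:.3f} EAC={status.eac:,.0f} VAC={status.vac:,.0f}")
```

With these numbers the program is behind schedule (SPI = 0.875) and over budget (CPI of about 0.92), projecting an overrun of roughly 171,000, which is the kind of early signal that triggers corrective action.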

Q 16. Describe a time when you had to revise a test plan due to unforeseen circumstances.

During a flight test program for a new autopilot system, we experienced a significant software anomaly during a critical maneuver. The autopilot unexpectedly disengaged, requiring immediate intervention by the test pilot. This was not covered in our initial test plan.

Our response involved several steps:

- Immediate Safety Review: The flight was immediately aborted, and a thorough investigation into the cause of the anomaly began.

- Root Cause Analysis: We used a combination of flight data recorder analysis, software debugging, and simulation to identify the source of the software error.

- Test Plan Revision: The original test plan was revised to include additional test cases specifically designed to stress-test the identified weakness in the autopilot system. This involved adding new test points, flight conditions, and safety protocols.

- Retesting and Verification: After the software bug was fixed and thoroughly tested in simulation, we repeated the affected test maneuvers to validate the fix and ensure the autopilot functioned as intended.

This experience highlighted the importance of flexibility and adaptability in test planning. While a detailed plan is crucial, the ability to react effectively to unforeseen challenges and revise the plan accordingly is essential for successful aircraft testing.

Q 17. How do you ensure traceability between requirements, test cases, and test results?

Traceability between requirements, test cases, and test results is crucial for demonstrating test completeness and achieving certification. We achieve this through a rigorous requirement management process and the use of a dedicated test management tool.

The process typically involves:

- Requirement Identification & Decomposition: Clearly defined and documented requirements are broken down into smaller, testable units. Each requirement is uniquely identified (e.g., using a requirement ID).

- Test Case Development: For each testable requirement, specific test cases are designed to verify its functionality. These test cases are linked to the corresponding requirement ID using a requirements traceability matrix (RTM).

- Test Execution & Result Recording: During test execution, the results of each test case are carefully documented, including pass/fail status, observed behavior, and any anomalies. The results are directly linked back to the test cases and, by extension, the related requirements.

- Test Management Tool: We use a dedicated test management tool to facilitate the management of requirements, test cases, and test results. These tools often provide features for automatic generation of traceability reports, ensuring consistent and accurate tracking.

Think of it like a chain: each requirement links to the test cases that verify it, and each test case links to its recorded results. A break anywhere, such as a requirement with no test case or a test case with no recorded result, leaves part of the system unverified and compromises the overall integrity of the evidence.
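A requirements traceability matrix can be represented as links from test cases to requirement IDs, with results attached to test cases. The sketch below derives a status for each requirement (not covered, failed, not run, or verified) so gaps in the chain show up immediately. All IDs and results are hypothetical; the test management tools mentioned above typically generate comparable reports from their own databases.

```python
def coverage_report(requirements: list[str],
                    test_links: dict[str, list[str]],
                    results: dict[str, str]) -> dict[str, str]:
    """Per-requirement status derived from test-case links and recorded results."""
    status = {}
    for req in requirements:
        linked = [tc for tc, reqs in test_links.items() if req in reqs]
        if not linked:
            status[req] = "not covered"   # broken link: no test verifies it
        elif any(results.get(tc) == "fail" for tc in linked):
            status[req] = "failed"
        elif all(results.get(tc) == "pass" for tc in linked):
            status[req] = "verified"
        else:
            status[req] = "not run"       # at least one linked test has no result yet
    return status


requirements = ["REQ-101", "REQ-102", "REQ-103", "REQ-104"]
test_links = {"TC-01": ["REQ-101"], "TC-02": ["REQ-101", "REQ-102"],
              "TC-03": ["REQ-103"], "TC-04": ["REQ-102"]}
results = {"TC-01": "pass", "TC-02": "pass", "TC-03": "fail"}
print(coverage_report(requirements, test_links, results))
```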

Q 18. Explain your understanding of different types of aircraft testing (e.g., functional, performance, endurance).

Aircraft testing encompasses various types, each serving a specific purpose in validating the aircraft’s design, performance, and safety. Some key types include:

- Functional Testing: Verifying that each system and subsystem of the aircraft functions as specified in its design documentation. This includes things like flight controls, avionics, and engine performance.

- Performance Testing: Evaluating the aircraft’s overall performance characteristics, such as speed, climb rate, range, and fuel consumption. This often involves measuring parameters against predicted values.

- Endurance Testing: Assessing the aircraft’s ability to sustain flight for extended durations, typically to identify potential fatigue issues or system limitations over long periods. This might involve sustained flight at various altitudes and speeds.

- Environmental Testing: Testing the aircraft’s response to extreme environmental conditions, such as high temperatures, low temperatures, high altitude, icing, and extreme wind conditions.

- Structural Testing: Assessing the structural integrity of the aircraft through rigorous stress and fatigue tests to ensure it can withstand the loads it will encounter during operation.

In a typical flight test program, these different types of testing are integrated to provide a comprehensive evaluation of the aircraft’s capabilities and safety.

Q 19. How do you validate and verify test results?

Validating and verifying test results are crucial steps in ensuring the accuracy and reliability of the test program. Verification confirms that the measured results meet the predicted values or requirements, while validation confirms that the test itself was sound (appropriate methods, calibrated equipment, representative conditions), so the results can be trusted to reflect the aircraft’s actual performance.

Verification involves comparing test results to the predicted values or expected behavior defined in the requirements. This might involve analyzing flight data, comparing sensor readings, and examining system logs. We use statistical analysis techniques to determine if the observed performance falls within acceptable tolerances.

Validation involves confirming the test methods were appropriate and the test environment accurately represented real-world operating conditions. This involves reviewing test procedures, ensuring proper calibration of equipment, and assessing the overall quality of the test data. Peer reviews of test plans and results are critical for validation.

For example, after conducting a series of performance tests, we would verify that the measured speed and altitude are within the design specifications. Simultaneously, we would validate that the wind conditions during the test were accurately measured and accounted for in the analysis of the results.
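The statistical tolerance check described under verification can be sketched as follows: compute a confidence interval on the mean of repeated, wind-corrected measurements and require the whole interval to lie within the predicted value plus or minus the tolerance. The speed values, the ±3 kt tolerance, and the choice of a 95% Student-t interval (critical value 2.776 for 4 degrees of freedom) are assumptions for illustration.

```python
from math import sqrt
from statistics import mean, stdev


def verify_within_tolerance(samples: list[float], predicted: float, tolerance: float,
                            t_crit: float) -> tuple[bool, tuple[float, float]]:
    """Pass only if the confidence interval on the mean lies inside predicted +/- tolerance.
    t_crit is the two-sided Student-t value for len(samples) - 1 degrees of freedom."""
    m = mean(samples)
    half_width = t_crit * stdev(samples) / sqrt(len(samples))
    lo, hi = m - half_width, m + half_width
    passed = predicted - tolerance <= lo and hi <= predicted + tolerance
    return passed, (lo, hi)


# Hypothetical wind-corrected maximum level-flight speed from five runs (kt).
speeds_kt = [249.1, 250.4, 248.7, 249.9, 250.2]
passed, (lo, hi) = verify_within_tolerance(speeds_kt, predicted=250.0, tolerance=3.0, t_crit=2.776)
print(f"95% CI {lo:.2f}..{hi:.2f} kt, pass={passed}")
```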

Q 20. What are the key performance indicators (KPIs) you use to measure the success of a test program?

Key Performance Indicators (KPIs) for a successful aircraft test program are carefully selected to reflect the program’s goals and objectives. These KPIs should be specific, measurable, achievable, relevant, and time-bound (SMART).

Examples of important KPIs include:

- Test Completion Rate: The percentage of planned tests completed within the allocated time and budget.

- Defect Detection Rate: The number of defects found per unit of test effort (e.g., per test hour or per test case executed), indicating how effectively the test program identifies potential issues.

- Test Cycle Time: The time taken to complete each phase of testing, including planning, execution, and reporting.

- Cost Efficiency: The cost of testing per unit of work completed, demonstrating economical use of resources.

- On-Time Delivery: Whether the test program was completed within the scheduled timeframe.

- Safety Record: A record of incidents, mishaps, or unsafe events that occur during testing and any mitigation strategies used.

Regular monitoring of these KPIs allows us to track progress, identify areas for improvement, and make data-driven decisions to ensure the program’s success.
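Several of these KPIs are simple ratios that can be computed from a program snapshot. The sketch below is illustrative only: the field names and numbers are hypothetical, and it normalizes defects by test hours so that periods with different amounts of test activity can be compared.

```python
from dataclasses import dataclass


@dataclass
class ProgramSnapshot:
    planned_tests: int    # total test points in the plan
    planned_to_date: int  # test points the schedule expected to be done by now
    completed_tests: int
    defects_found: int
    test_hours: float

    def kpis(self) -> dict[str, float]:
        return {
            # Share of the whole plan completed so far.
            "completion_rate_pct": 100.0 * self.completed_tests / self.planned_tests,
            # Below 100 means the program is behind its planned pace.
            "schedule_adherence_pct": 100.0 * self.completed_tests / self.planned_to_date,
            # Defects normalized by test effort.
            "defects_per_test_hour": self.defects_found / self.test_hours,
        }


snapshot = ProgramSnapshot(planned_tests=240, planned_to_date=210,
                           completed_tests=198, defects_found=33, test_hours=110.0)
print(snapshot.kpis())
```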

Q 21. Explain your experience with fault isolation and troubleshooting during flight testing.

Fault isolation and troubleshooting during flight testing is a critical skill requiring systematic problem-solving and a deep understanding of aircraft systems. It often involves a combination of real-time data analysis, post-flight data review, and collaborative teamwork.

My approach typically involves:

- Data Acquisition and Review: We monitor real-time telemetry during the flight and, after landing, review data from the flight data recorders (FDRs) and other onboard sensors to identify anomalies and potential causes of the fault.

- Systematic Diagnosis: Using fault trees and diagnostic flow charts, we systematically eliminate potential causes of the failure until the root cause is isolated. This involves considering multiple factors like sensor readings, system logs, and pilot reports.

- Simulation and Modeling: We use flight simulators to reproduce the flight conditions and test different hypotheses about the cause of the fault. This can help identify potential issues without further flight tests.

- Collaboration and Expertise: Troubleshooting complex issues often involves collaboration between pilots, engineers from different disciplines (software, hardware, systems), and maintenance crews. Their experience and varied perspectives are invaluable in diagnosing the problems.
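The fault-tree step can be sketched as computing minimal cut sets (the smallest combinations of basic events that produce the top event) and then discarding any cut set that contains an event already cleared by checks. The tree below, for an uncommanded flight-path deviation, is hypothetical and far simpler than a real one.

```python
from itertools import product

# Hypothetical fault tree: gates are ("AND" | "OR", [children]); leaves are basic events.
TREE = ("OR", [
    ("AND", ["air_data_sensor_A_fault", "air_data_sensor_B_fault"]),
    "control_law_software_error",
    ("OR", ["actuator_fault", "actuator_wiring_fault"]),
])


def cut_sets(node) -> list[frozenset]:
    """Minimal cut sets of a node, sorted by size and then by event names."""
    if isinstance(node, str):
        return [frozenset([node])]
    gate, children = node
    child_sets = [cut_sets(c) for c in children]
    if gate == "OR":
        sets = [s for cs in child_sets for s in cs]  # any child's cut set suffices
    elif gate == "AND":
        # One cut set from every child must occur together.
        sets = [frozenset().union(*combo) for combo in product(*child_sets)]
    else:
        raise ValueError(f"unknown gate {gate!r}")
    unique = set(sets)
    minimal = [s for s in unique if not any(o < s for o in unique)]  # drop supersets
    return sorted(minimal, key=lambda s: (len(s), sorted(s)))


def consistent_causes(tree, cleared: set[str]) -> list[frozenset]:
    """Cut sets still possible after checks have cleared some basic events."""
    return [cs for cs in cut_sets(tree) if not (cs & cleared)]


print(consistent_causes(TREE, {"actuator_fault", "actuator_wiring_fault",
                               "air_data_sensor_A_fault"}))
```

With the actuator path and one air data sensor cleared, only the software cause remains, mirroring how systematic elimination narrows the search to a root cause.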

For example, during a flight test, we experienced an unexpected deviation in the aircraft’s flight path. By carefully reviewing the FDR data and correlating sensor readings, we discovered a small but significant software error in the flight control system. This was then successfully replicated and resolved in simulation, thus reducing the need for further risk-bearing flight testing.

Q 22. How do you handle conflicts or disagreements among team members during test planning?

Conflict resolution is crucial in aircraft test planning, where diverse teams with varying expertise often collaborate. My approach is proactive and focuses on open communication and collaborative problem-solving. I start by creating a safe and respectful environment where team members feel comfortable expressing their opinions and concerns. When a disagreement arises, I facilitate a structured discussion, ensuring everyone has a chance to articulate their perspective. This often involves clarifying the root cause of the conflict, identifying shared goals, and exploring various solutions together.

For instance, during the planning phase of a flight test for a new autopilot system, disagreement arose on the best methodology for verifying its performance in turbulent conditions. One team favored a purely simulation-based approach, while another advocated for real-world flight tests in increasingly turbulent conditions. I facilitated a meeting where both teams presented their rationale, highlighting the advantages and risks of each approach. We ultimately agreed on a hybrid approach, combining simulation with carefully controlled flight tests, mitigating risks and satisfying everyone’s concerns.

If a disagreement persists, I escalate it to higher management, but only as a last resort. The emphasis is always on collaborative solutions that respect diverse viewpoints and reach a consensus that supports safety, efficiency, and program success.

Q 23. Describe your experience with integrating new aircraft systems into existing platforms.

Integrating new aircraft systems requires meticulous planning and execution. My experience covers a range of integration projects, from installing new avionics suites in legacy aircraft to incorporating advanced flight control systems into next-generation platforms. The process usually begins with a comprehensive requirements analysis to understand the new system's functions and its impact on the existing platform. This includes verifying compatibility with existing hardware and software, resolving potential interface issues, and ensuring the new system doesn't compromise the safety or performance of the existing aircraft.

For example, during the integration of a new weather radar system into a regional jet, we had to carefully consider power requirements, data bus communication protocols, and integration with the existing cockpit displays. We created detailed interface control documents (ICDs) to specify the communication protocols and data formats between the new system and the existing aircraft infrastructure. Extensive testing, including laboratory testing, software-in-the-loop simulations, and flight tests, confirmed that the new radar system worked correctly without adversely affecting other aircraft systems. Thorough documentation, including test reports, change requests, and updates to existing manuals, was crucial throughout this process.
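An ICD entry is ultimately a precise contract about how each value is represented on the bus. The Python sketch below models one hypothetical parameter definition (name, units, range, resolution, field width) and encodes and decodes values as scaled two's-complement integers. The parameter, its scaling, and the 12-bit field are illustrative assumptions, not taken from any real radar ICD or data bus standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IcdParameter:
    """One hypothetical ICD parameter definition."""
    name: str
    units: str
    min_value: float
    max_value: float
    resolution: float  # engineering units per least significant bit
    bits: int          # width of the signed integer field on the bus

    def encode(self, value: float) -> int:
        """Convert an engineering value to the raw field value (two's complement)."""
        if not self.min_value <= value <= self.max_value:
            raise ValueError(f"{self.name}={value} {self.units} is outside the ICD range")
        raw = round(value / self.resolution)
        lo, hi = -(1 << (self.bits - 1)), (1 << (self.bits - 1)) - 1
        if not lo <= raw <= hi:
            raise ValueError(f"{self.name} does not fit in {self.bits} bits")
        return raw & ((1 << self.bits) - 1)  # mask to the field width

    def decode(self, field: int) -> float:
        """Convert a raw field value back to engineering units."""
        sign_bit = 1 << (self.bits - 1)
        raw = (field & (sign_bit - 1)) - (field & sign_bit)  # sign-extend
        return raw * self.resolution


# Hypothetical antenna tilt parameter: +/-15 deg, 0.01 deg per bit, 12-bit signed field.
TILT = IcdParameter("antenna_tilt", "deg", -15.0, 15.0, 0.01, 12)

if __name__ == "__main__":
    raw = TILT.encode(-1.25)
    print(f"{TILT.name}: -1.25 {TILT.units} -> raw 0x{raw:03X} -> {TILT.decode(raw):.2f} {TILT.units}")
```

Range and round-trip checks of this kind are one way interface tests can confirm that both sides of an interface interpret each value the way the ICD specifies.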

Q 24. How do you prepare for regulatory audits and compliance reviews?

Preparing for regulatory audits and compliance reviews is a critical aspect of aircraft test planning. My approach involves meticulous documentation and proactive compliance measures throughout the entire program lifecycle. We maintain a comprehensive quality management system (QMS) that documents all aspects of the testing process, from initial planning to final report generation. This system helps ensure that we meet the applicable regulatory requirements, such as those set by the FAA (Federal Aviation Administration) or EASA (European Union Aviation Safety Agency). We also conduct regular internal audits to identify potential compliance gaps before external audits occur.

We create checklists and templates for all aspects of the testing process: test plans, procedures, reports, and data management. This makes documentation quick to locate and maintains a clear audit trail. We use version control systems to track modifications, ensuring that the documentation reflects the current status of the testing activities. Before an audit, we conduct a mock audit to identify and address any deficiencies in our documentation or processes. We also schedule pre-audit meetings with the regulatory agency to align expectations and keep the agency informed about our testing program.

Q 25. How do you document and maintain test artifacts throughout the lifecycle of a test program?

Maintaining a robust system for documenting and managing test artifacts is essential for efficient test planning and successful program completion. I utilize a combination of digital and physical archiving methods, tailored to the specific needs of each project. We employ a dedicated test management system (TMS) that provides a centralized repository for all test-related documents and data. This allows for easy access, version control, and collaborative work among team members.

The TMS stores test plans, procedures, test cases, test scripts, test results, defect reports, and any other relevant documentation. We follow a strict naming convention and file organization system to ensure easy retrieval of information. Physical archives are also maintained for critical records, such as engineering drawings and flight data, in compliance with regulatory requirements for long-term storage. Regular backups are performed to prevent data loss, and access controls ensure that only authorized personnel can access sensitive information.
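A naming convention only helps retrieval if it is enforced consistently. The Python sketch below checks file names against a hypothetical convention, `<PROGRAM>-<DOCTYPE>-<NNNN>_rev<X>.<ext>` (for example `XJ100-TP-0042_revC.pdf`). The program code format, document-type codes, and allowed extensions are assumptions for illustration only.

```python
import re

# Hypothetical document-type codes: test plan, test procedure, test report, defect report.
DOC_TYPES = {"TP", "PR", "TR", "DR"}

# <PROGRAM>-<DOCTYPE>-<4-digit number>_rev<letter>.<extension>
NAME_PATTERN = re.compile(
    r"^(?P<program>[A-Z]{2}\d{3})-(?P<doctype>[A-Z]{2})-(?P<number>\d{4})"
    r"_rev(?P<rev>[A-Z])\.(?P<ext>pdf|docx|csv)$"
)


def check_name(filename: str) -> list[str]:
    """Return a list of problems with `filename`; an empty list means it conforms."""
    match = NAME_PATTERN.match(filename)
    if match is None:
        return [f"{filename}: does not match <PROGRAM>-<DOCTYPE>-<NNNN>_rev<X>.<ext>"]
    problems = []
    if match["doctype"] not in DOC_TYPES:
        problems.append(f"{filename}: unknown document type {match['doctype']!r}")
    return problems


if __name__ == "__main__":
    for name in ["XJ100-TP-0042_revC.pdf", "XJ100-ZZ-0042_revC.pdf", "test plan final v2.docx"]:
        print(name, "->", check_name(name) or "OK")
```

A check like this could run when files are added to the repository, so nonconforming names are caught before they reach the archive.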

Additionally, we maintain a comprehensive traceability matrix that links requirements, test cases, and test results, so that any gaps in test coverage are visible. This structured approach also simplifies audits and regulatory compliance reviews.
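The coverage check that a traceability matrix supports can be sketched as a simple mapping. This minimal Python example assumes hypothetical requirement IDs and test records that carry a pass/fail/not-run result. It reports requirements with no linked test, requirements whose linked tests have not all passed, and links to requirement IDs that are not in the baseline.

```python
from dataclasses import dataclass


@dataclass
class TestRecord:
    case_id: str
    requirement_ids: list[str]  # requirements this test case verifies
    result: str                 # "pass", "fail", or "not_run"


def coverage_report(requirement_ids, test_records):
    """Build a requirement -> test record matrix and summarize coverage."""
    matrix = {req: [] for req in requirement_ids}
    unknown_links = []  # links to requirement IDs that are not in the baseline
    for record in test_records:
        for req in record.requirement_ids:
            if req in matrix:
                matrix[req].append(record)
            else:
                unknown_links.append((record.case_id, req))
    uncovered = [req for req, records in matrix.items() if not records]
    not_yet_verified = [
        req for req, records in matrix.items()
        if records and any(r.result != "pass" for r in records)
    ]
    coverage = (len(matrix) - len(uncovered)) / len(matrix) if matrix else 0.0
    return {
        "coverage": coverage,
        "uncovered": uncovered,
        "not_yet_verified": not_yet_verified,
        "unknown_links": unknown_links,
    }


if __name__ == "__main__":
    reqs = ["REQ-001", "REQ-002", "REQ-003"]
    records = [
        TestRecord("TC-01", ["REQ-001"], "pass"),
        TestRecord("TC-02", ["REQ-002"], "fail"),
        TestRecord("TC-03", ["REQ-002", "REQ-999"], "pass"),
    ]
    print(coverage_report(reqs, records))
```

In this sketch a requirement counts as covered as soon as any test is linked to it, and as verified only when every linked test has passed; a real program may define these states differently.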

Q 26. What are your strategies for continuous improvement in aircraft test planning?

Continuous improvement is paramount in aircraft test planning, and I take a data-driven approach to identifying areas for optimization. Post-test reviews are crucial: we analyze data from completed tests, including test execution time, resource utilization, and defect rates, to identify inefficiencies and potential risks for future tests. The lessons learned are documented and incorporated into the planning process for subsequent tests.

We actively seek feedback from all stakeholders, including engineers, pilots, technicians, and regulatory agencies, to identify areas for enhancement, and we apply methodologies such as Six Sigma and Lean to streamline processes and improve efficiency. For example, by analyzing historical data, we might identify recurring issues during a specific test phase and build preventive measures into future test plans. This proactive approach not only increases efficiency and reduces cost but also enhances the overall safety and reliability of the testing process.
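The historical analysis described in this answer can start very simply. The Python sketch below assumes a hypothetical log of completed test points, each recording its test phase, planned and actual hours, and the issue category raised (if any). It computes a schedule overrun ratio per phase and flags issue categories that recur within a phase at least a chosen number of times. The record fields and the threshold are assumptions, not a prescribed method.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class TestPointRecord:
    phase: str                   # e.g. "ground", "flight"
    planned_hours: float
    actual_hours: float
    issue: Optional[str] = None  # issue category raised during the test point, if any


def analyze(records, recurrence_threshold=2):
    """Return (overrun ratio per phase, recurring issue categories per phase)."""
    planned = defaultdict(float)
    actual = defaultdict(float)
    issues = defaultdict(Counter)
    for r in records:
        planned[r.phase] += r.planned_hours
        actual[r.phase] += r.actual_hours
        if r.issue:
            issues[r.phase][r.issue] += 1
    # A ratio above 1.0 means the phase took longer than planned.
    overrun = {phase: actual[phase] / planned[phase] for phase in planned if planned[phase] > 0}
    recurring = {}
    for phase, counts in issues.items():
        # most_common() lists categories from most to least frequent.
        found = [issue for issue, n in counts.most_common() if n >= recurrence_threshold]
        if found:
            recurring[phase] = found
    return overrun, recurring


if __name__ == "__main__":
    log = [
        TestPointRecord("ground", 2.0, 2.5, "instrumentation dropout"),
        TestPointRecord("ground", 2.0, 3.0, "instrumentation dropout"),
        TestPointRecord("ground", 1.0, 1.0),
        TestPointRecord("flight", 4.0, 4.0, "weather hold"),
    ]
    overrun, recurring = analyze(log)
    print("overrun:", overrun)
    print("recurring:", recurring)
```

Phases with a high overrun ratio and a recurring issue category are natural candidates for preventive measures in the next test plan.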

Q 27. Describe your experience with different aircraft types and their unique testing considerations.

My experience spans a range of aircraft types, including commercial airliners, military transport aircraft, and general aviation aircraft. Each type presents unique testing considerations. Commercial airliners, for example, require extensive flight testing to meet stringent certification standards, encompassing a wide array of performance, systems, and safety tests. Military aircraft often have specialized testing requirements related to weapons integration, operational suitability, and survivability. General aviation aircraft have different certification processes and may involve simpler testing but still require careful consideration for safety and airworthiness.

Testing a large commercial airliner demands significantly more resources and coordination than testing a smaller general aviation aircraft. Furthermore, the complexity of systems and the regulatory oversight vary greatly. For instance, testing a new flight control system on a fighter jet involves rigorous simulations and flight tests in challenging conditions. The focus is on performance under stress, maneuverability, and pilot workload. In contrast, the testing of a new autopilot system on a small propeller aircraft may involve simpler flight maneuvers and a less rigorous certification process. Understanding these nuances, and adapting my approach accordingly, is critical for efficient and successful test planning.

Key Topics to Learn for Aircraft Test Planning Interview

- Test Objectives and Requirements Definition: Understanding how to translate high-level program goals into specific, measurable, achievable, relevant, and time-bound (SMART) test objectives. This includes identifying key performance parameters and acceptance criteria.

- Test Plan Development: Creating a comprehensive test plan that outlines the scope, methodology, resources, schedule, and risks associated with aircraft testing. This involves selecting appropriate test methods (e.g., flight testing, simulation, ground testing) and defining test procedures.

- Test Case Design and Execution: Developing detailed test cases that cover various operational scenarios and potential failure modes. This includes designing tests to verify system functionality, performance, and safety. Practical application involves writing clear, concise, and executable test cases.

- Risk Management and Mitigation: Identifying and assessing potential risks throughout the testing process (e.g., weather, equipment malfunctions, safety concerns). Developing mitigation strategies to minimize the impact of these risks on the overall test program.

- Data Acquisition and Analysis: Understanding the various methods for acquiring and analyzing test data, including the use of specialized sensors, data acquisition systems, and data analysis software. Interpreting results to determine whether the aircraft meets performance requirements.

- Test Reporting and Documentation: Preparing comprehensive test reports that summarize the test results, identify any discrepancies, and recommend corrective actions. This also involves maintaining meticulous records and documentation throughout the testing process.

- Flight Test Instrumentation and Telemetry: Understanding the principles of flight test instrumentation and the use of telemetry systems for data acquisition during flight tests. This includes knowledge of sensor selection, calibration, and data validation.

- Regulatory Compliance: Familiarizing yourself with relevant aviation regulations and standards (e.g., FAA, EASA) that govern aircraft testing and certification.
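Sensor calibration and data validation, listed above under flight test instrumentation, can be illustrated with a simple linear calibration. This Python sketch assumes a sensor whose output is approximately linear in the measured quantity. It fits gain and offset by ordinary least squares from hypothetical reference points, reports the worst fit residual, and flags converted samples outside a plausible range. Real instrumentation may need nonlinear or temperature-dependent calibration, and all values here are illustrative.

```python
def fit_linear_calibration(raw, reference):
    """Ordinary least-squares fit of reference = gain * raw + offset.

    Returns (gain, offset, max_abs_residual)."""
    if len(raw) != len(reference) or len(raw) < 2:
        raise ValueError("need at least two paired calibration points")
    n = len(raw)
    mean_x = sum(raw) / n
    mean_y = sum(reference) / n
    sxx = sum((x - mean_x) ** 2 for x in raw)
    if sxx == 0:
        raise ValueError("calibration points must span more than one raw value")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(raw, reference))
    gain = sxy / sxx
    offset = mean_y - gain * mean_x
    # The worst residual indicates how well a straight line describes the sensor.
    max_residual = max(abs(y - (gain * x + offset)) for x, y in zip(raw, reference))
    return gain, offset, max_residual


def convert_and_validate(raw_samples, gain, offset, valid_min, valid_max):
    """Convert raw counts to engineering units and flag out-of-range samples by index."""
    converted = [gain * x + offset for x in raw_samples]
    flagged = [i for i, v in enumerate(converted) if not valid_min <= v <= valid_max]
    return converted, flagged


if __name__ == "__main__":
    # Hypothetical calibration points: raw counts vs. reference standard readings.
    gain, offset, worst = fit_linear_calibration([0, 1000, 2000, 3000], [10.0, 30.0, 50.0, 70.0])
    values, bad = convert_and_validate([500, 5000], gain, offset, valid_min=0.0, valid_max=80.0)
    print(f"gain={gain:.4f} offset={offset:.2f} worst residual={worst:.2e}")
    print("converted:", [round(v, 2) for v in values], "out of range at indices:", bad)
```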

Next Steps

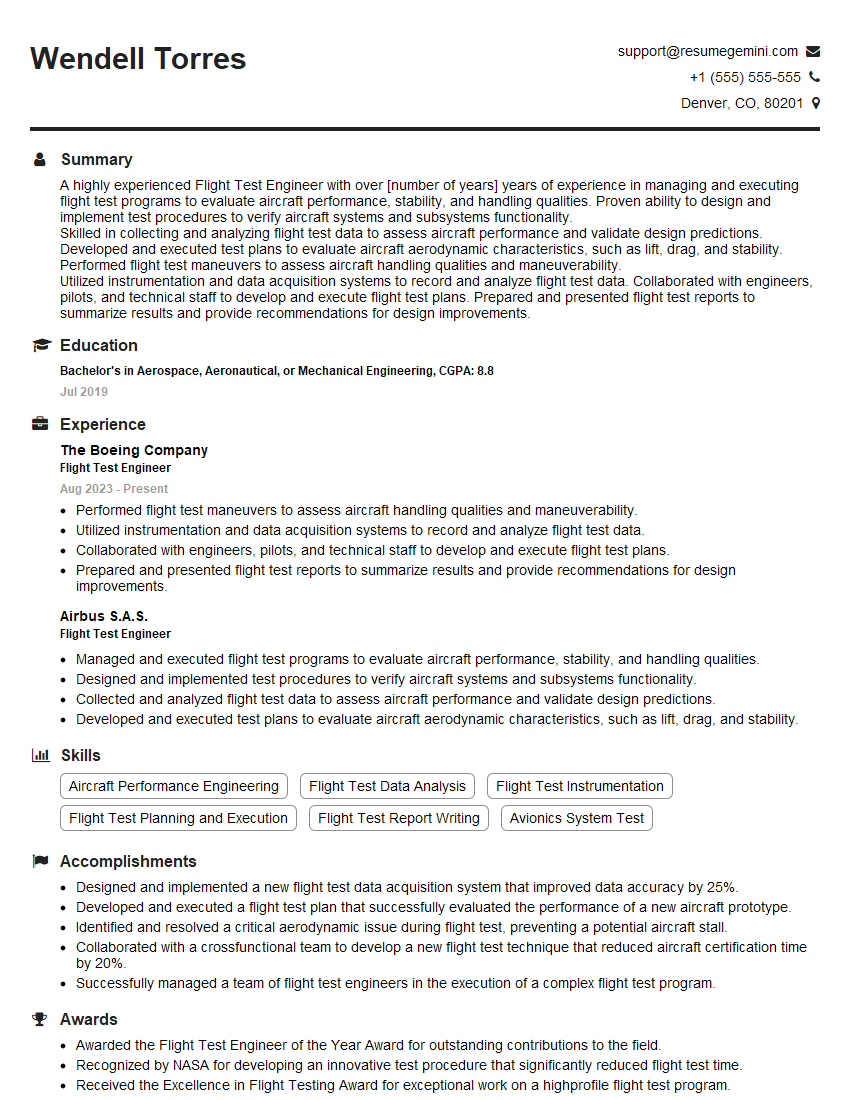

Mastering Aircraft Test Planning is crucial for career advancement in the aerospace industry, opening doors to leadership roles and specialized expertise. A strong, ATS-friendly resume is your key to unlocking these opportunities. ResumeGemini offers a trusted platform to craft a compelling resume that highlights your skills and experience effectively. We provide examples of resumes tailored to Aircraft Test Planning to guide you in building a professional and impactful document that showcases your capabilities to potential employers.
