Interviews are opportunities to demonstrate your expertise, and this guide is here to help you shine. Explore the essential FPGA and ASIC Design interview questions that employers frequently ask, paired with strategies for crafting responses that set you apart from the competition.

Questions Asked in FPGA and ASIC Design Interviews

Q 1. Explain the difference between FPGA and ASIC.

FPGA (Field-Programmable Gate Array) and ASIC (Application-Specific Integrated Circuit) are both integrated circuits used for digital logic implementation, but they differ significantly in their flexibility, cost, and performance. Think of it like this: an FPGA is like a blank canvas – you can reprogram it many times to create different designs. An ASIC, on the other hand, is a custom-designed painting – highly optimized for a specific task but inflexible once created.

- FPGA: Offers flexibility and reprogrammability. Ideal for prototyping, testing, and low-volume production. FPGAs cost more per unit, but their up-front (non-recurring engineering, NRE) cost is far lower because no custom masks or fabrication run are needed.

- ASIC: Offers higher performance, lower power consumption, and smaller size once produced. Ideal for high-volume production where the upfront design cost is amortized over many units. Requires a significant upfront investment and longer design time.

In short, FPGAs are ideal for rapid prototyping and smaller production runs, while ASICs are the best choice for high-volume products requiring optimal performance and power efficiency.
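The cost trade-off above comes down to simple arithmetic: the ASIC wins once its lower unit cost has paid back its much larger NRE. The Python sketch below finds that break-even volume. All figures are hypothetical placeholders, not real vendor or foundry prices, and the model ignores factors such as time-to-market and re-spin risk.

```python
# Hypothetical cost model: every number below is an illustrative assumption,
# not vendor or foundry pricing.

def total_cost(nre, unit_cost, volume):
    """Total program cost = one-time NRE + per-unit cost * volume."""
    return nre + unit_cost * volume


def break_even_volume(fpga_nre, fpga_unit, asic_nre, asic_unit):
    """Volume above which the ASIC becomes cheaper than the FPGA.

    Solves fpga_nre + fpga_unit * v == asic_nre + asic_unit * v for v.
    """
    if fpga_unit <= asic_unit:
        raise ValueError("ASIC never breaks even unless its unit cost is lower")
    return (asic_nre - fpga_nre) / (fpga_unit - asic_unit)


if __name__ == "__main__":
    # Assumed figures: FPGA has low NRE but costly units; ASIC the reverse
    fpga_nre, fpga_unit = 50_000.0, 120.0
    asic_nre, asic_unit = 2_000_000.0, 8.0
    v = break_even_volume(fpga_nre, fpga_unit, asic_nre, asic_unit)
    print(f"break-even at about {v:,.0f} units")
```

With these assumed numbers the script prints a break-even point of about 17,411 units; below that volume the FPGA is cheaper overall, above it the ASIC is.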

Q 2. Describe the process of RTL design.

RTL (Register-Transfer Level) design is the process of describing a digital circuit using a hardware description language (HDL) such as VHDL or Verilog. It focuses on the transfer of data between registers and the operations performed on that data. It’s like creating a blueprint for your circuit, specifying what happens at a higher level of abstraction without getting bogged down in the minute details of gate-level implementation.

The process typically involves:

- System Specification: Defining the functionality and requirements of the design.

- Architectural Design: Creating a high-level block diagram outlining the major components and their interactions.

- RTL Coding: Writing the HDL code to describe the behavior of each component.

- RTL Simulation: Verifying the functionality of the RTL code through simulation using testbenches.

- Synthesis: Translating the HDL code into a gate-level netlist, a representation of the circuit using logic gates.

For example, a simple counter in Verilog might look like this:

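```verilog
// Counter with synchronous reset
always @(posedge clk) begin
  if (reset)
    count <= 0;
  else
    count <= count + 1;
end
```

This describes a counter that increments on every rising edge of clk and returns to zero when reset is asserted. Because reset is only sampled on the clock edge, this is a synchronous reset.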
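RTL describes what each register will hold after the next clock edge. A cycle-based Python model of the counter above, the kind of thing that could serve as a reference model in a testbench, makes this explicit. It assumes a 4-bit register that powers up at 0 (an uninitialized Verilog reg would simulate as X), and the class and method names are illustrative.

```python
class Counter:
    """Cycle-based model of the RTL counter, assuming a width-bit register.

    As with a Verilog nonblocking assignment, the next value is computed
    from the current register value and committed at the clock edge.
    """

    def __init__(self, width=4):
        self.width = width
        self.count = 0  # power-up value assumed to be 0 for this model

    def posedge(self, reset):
        """Apply one rising clock edge and return the new register value."""
        if reset:
            next_count = 0
        else:
            # Hardware addition wraps modulo 2**width (the carry is dropped)
            next_count = (self.count + 1) % (1 << self.width)
        self.count = next_count
        return self.count


if __name__ == "__main__":
    c = Counter(width=4)
    trace = [c.posedge(reset=(cycle == 0)) for cycle in range(18)]
    print(trace)
```

Running it produces 0 on the reset cycle, counts 1 through 15, then wraps back to 0, because a 4-bit adder discards the carry.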

Q 3. What are the different types of FPGA architectures?

FPGA architectures vary significantly between vendors (like Xilinx, Intel, Microsemi) and even within a vendor's product line. However, some common architectural elements include:

- Look-Up Tables (LUTs): These are small memory blocks that implement logic functions. They are the fundamental building blocks of most FPGAs.

- Flip-Flops (FFs): These are memory elements that store data. They are used to register data and create sequential logic.

- Configurable Logic Blocks (CLBs): These are groupings of LUTs and FFs that can be configured to implement complex logic functions.

- Interconnects: These are the routing resources that connect the CLBs and other components. The efficiency and density of the interconnect heavily influence the FPGA's performance and capacity.

- Embedded Memory Blocks: Many FPGAs include dedicated blocks of SRAM (block RAM) for storing data; these blocks can also be initialized at configuration time to act as ROM.

- Hardened Processors (e.g., ARM cores): Many modern FPGAs incorporate hard processor cores, allowing for heterogeneous processing and system-on-chip integration.

Different architectures focus on different priorities. Some architectures prioritize density (lots of logic elements), others prioritize speed (fast clock rates), and still others prioritize power efficiency.
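To make the "LUTs are small memories" point concrete: a k-input LUT stores a 2^k-entry truth table and simply uses its inputs as the read address. The Python sketch below models that. The bit ordering (input 0 as the least significant address bit) is an assumption for illustration, since each vendor's primitives define their own INIT conventions.

```python
def lut_eval(init, inputs):
    """Evaluate a k-input LUT.

    init   -- truth table packed into an integer; bit i holds the output
              for the input combination whose binary value is i
    inputs -- sequence of k bits, inputs[0] being the least significant
              address bit
    """
    address = 0
    for position, bit in enumerate(inputs):
        address |= (bit & 1) << position
    # Reading the LUT is just a memory read at that address
    return (init >> address) & 1


def lut_init_from_function(func, k):
    """Build the truth-table word for any Boolean function of k inputs."""
    init = 0
    for address in range(1 << k):
        bits = [(address >> i) & 1 for i in range(k)]
        if func(*bits):
            init |= 1 << address
    return init


if __name__ == "__main__":
    # A 4-input LUT programmed as (a AND b) XOR (c OR d)
    init = lut_init_from_function(lambda a, b, c, d: (a & b) ^ (c | d), 4)
    print(hex(init))
    print(lut_eval(init, [1, 1, 0, 0]))  # a=1, b=1, c=0, d=0
```

For this function the truth-table word works out to 0x7778. Loading a different word implements a different function on the same hardware, which is what makes the fabric reconfigurable.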

Q 4. Explain the concept of timing closure in FPGA/ASIC design.

Timing closure is the process of ensuring that all timing constraints of an FPGA or ASIC design are met. In simpler terms, it's making sure all the signals arrive at their destinations on time. Imagine it like an orchestra – every instrument needs to play its note at the precise moment for the music to sound correct. If a signal is delayed too much (violates setup time) or arrives too early (violates hold time), the circuit may malfunction.

Achieving timing closure involves several steps, including:

- Defining timing constraints: Specifying the required clock frequencies and delays.

- Place and Route: Physically assigning components and routing signals on the chip. This step has a significant impact on timing.

- Static Timing Analysis (STA): Analyzing the timing of the design to identify timing violations.

- Optimization: Modifying the design or the place and route to meet timing requirements. This could involve techniques like pipelining, clock tree synthesis, or using faster components.

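Failure to achieve timing closure means the design cannot be guaranteed to work at the target clock frequency: it may fail intermittently, fail only at certain temperatures or voltages, or have to run at a lower clock rate.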
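The setup and hold conditions described above reduce to two slack equations for a register-to-register path. The Python sketch below computes them under simplifying assumptions: a single clock, ideal clock edges apart from a fixed skew term, and no clock uncertainty or on-chip-variation derating, all of which real STA tools add. The field names and delay values are illustrative.

```python
from dataclasses import dataclass


@dataclass
class RegToRegPath:
    """Launch flop -> combinational logic -> capture flop. Times in ns."""
    t_clk2q: float      # launch flop clock-to-Q delay
    t_logic_max: float  # slowest combinational delay (setup check)
    t_logic_min: float  # fastest combinational delay (hold check)
    t_setup: float      # capture flop setup requirement
    t_hold: float       # capture flop hold requirement
    skew: float = 0.0   # capture clock arrival minus launch clock arrival


def setup_slack(p, period):
    # Data launched at one edge must settle t_setup before the NEXT capture edge
    required = period + p.skew - p.t_setup
    arrival = p.t_clk2q + p.t_logic_max
    return required - arrival


def hold_slack(p):
    # Data must not change until t_hold after the SAME capture edge
    arrival = p.t_clk2q + p.t_logic_min
    required = p.skew + p.t_hold
    return arrival - required


def min_period(p):
    # Smallest period with zero setup slack; fmax = 1 / min_period
    return p.t_clk2q + p.t_logic_max + p.t_setup - p.skew


if __name__ == "__main__":
    path = RegToRegPath(t_clk2q=0.3, t_logic_max=3.2, t_logic_min=0.1,
                        t_setup=0.4, t_hold=0.1)
    print(f"setup slack @ 4 ns: {setup_slack(path, 4.0):+.2f} ns")
    print(f"hold slack:         {hold_slack(path):+.2f} ns")
    print(f"fmax: {1e3 / min_period(path):.0f} MHz")
```

With these assumed delays the path has 0.10 ns of setup slack at 250 MHz and a maximum frequency of about 256 MHz. Negative slack on either check is a violation. Lengthening the clock period fixes setup but never hold, which is why hold violations are fixed by adding delay rather than slowing the clock.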

Q 5. How do you perform static timing analysis (STA)?

Static Timing Analysis (STA) is a process that verifies the timing correctness of a design by analyzing the delays on all paths in the circuit *without* actually running the circuit or applying test vectors. It's like checking the blueprint of a building to ensure all the structural elements meet the requirements before constructing the building. It is typically run on the gate-level netlist after synthesis and again after place and route, when the actual interconnect delays are known.

The process typically involves:

- Reading design data: Loading the gate-level netlist, the technology library, and the constraints (clock definitions, input/output delays, etc.).

- Delay Calculation: Calculating the propagation delays of signals through the circuit based on the technology library (which defines the delays of individual gates).

- Path Analysis: Computing arrival and required times on every timing path and identifying the critical paths, those with the least slack. Setup checks use the longest (maximum) path delays; hold checks use the shortest (minimum) delays.

- Violation Reporting: Identifying any paths that violate timing constraints (setup or hold time violations).

STA tools provide detailed reports indicating the severity and location of timing violations, which are crucial for design optimization.
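Delay calculation and path analysis amount to a longest-path search on a directed acyclic graph: each node's arrival time is the worst arrival among its drivers plus the connecting delay. The Python sketch below shows the idea for setup checks only, using the standard-library graphlib module (Python 3.9+). The graph, node names, and delays are hypothetical, every node without drivers is assumed to be a listed startpoint, and a real STA engine also handles multiple clocks, rise/fall delays, slews, and hold (minimum-delay) analysis.

```python
from graphlib import TopologicalSorter


def setup_analysis(edges, launch_times, endpoints, required):
    """Propagate worst-case (max) arrival times through a timing DAG.

    edges        -- {(driver, load): delay_ns}, a hypothetical netlist graph
    launch_times -- {startpoint: arrival_ns}, e.g. flop clock-to-Q times
    endpoints    -- nodes where data is captured (flop D pins, outputs)
    required     -- required arrival time at every endpoint, in ns
    Returns (slack per endpoint, critical path as a list of nodes).
    """
    fanin = {}
    for driver, load in edges:
        fanin.setdefault(load, set()).add(driver)
        fanin.setdefault(driver, set())

    arrival, worst_driver = {}, {}
    # Visit every node only after all of its drivers have been timed
    for node in TopologicalSorter(fanin).static_order():
        if node in launch_times:
            arrival[node] = launch_times[node]
            continue
        # Setup checks keep the latest arrival over all fan-in edges
        driver = max(fanin[node], key=lambda d: arrival[d] + edges[(d, node)])
        arrival[node] = arrival[driver] + edges[(driver, node)]
        worst_driver[node] = driver

    slack = {ep: required - arrival[ep] for ep in endpoints}

    # Walk back from the endpoint with the least slack to recover its path
    node = min(slack, key=slack.get)
    path = [node]
    while node in worst_driver:
        node = worst_driver[node]
        path.append(node)
    return slack, path[::-1]


if __name__ == "__main__":
    # Hypothetical netlist fragment: two launch flops, two capture flops
    edges = {
        ("ff1/Q", "u1"): 0.5, ("ff2/Q", "u1"): 0.2,
        ("u1", "u2"): 1.0, ("ff2/Q", "u2"): 0.4,
        ("u2", "ff3/D"): 0.6, ("ff1/Q", "ff4/D"): 0.9,
    }
    launch = {"ff1/Q": 0.3, "ff2/Q": 0.3}  # clock-to-Q arrivals, ns
    slack, path = setup_analysis(edges, launch, ["ff3/D", "ff4/D"], 2.5)
    print(slack)
    print(" -> ".join(path))
```

Here the critical path is ff1/Q -> u1 -> u2 -> ff3/D with about 0.1 ns of slack, while the ff4/D endpoint has about 1.3 ns to spare.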

Q 6. What are the different levels of verification in ASIC design?

Verification in ASIC design is a crucial process to ensure the design functions as intended. It's a multi-level process involving several stages, each with its own set of techniques and tools:

- Unit Verification: Verifying individual modules or components using directed and random testbenches.

- Integration Verification: Verifying the interaction between different modules after integration.

- System Verification: Verifying the entire system-level functionality, often using transaction-level modeling (TLM) and sophisticated verification techniques.

- Formal Verification: Using mathematical methods to prove or disprove specific properties of the design.

These levels build upon each other, offering a robust verification flow that increases confidence in the correctness of the design before manufacturing. Each level uses different methods, from simple testbenches to complex assertions and formal property checking. The choice of verification methods depends on factors such as complexity, criticality, and budget constraints.

Q 7. Explain different coding styles for HDL (VHDL/Verilog).

Coding styles in HDL (VHDL/Verilog) are crucial for readability, maintainability, and efficient synthesis. There's no single “best” style, but several common practices promote good design.

- Clear Naming Conventions: Use descriptive names for signals, registers, and modules to improve code understanding. For instance, clk for clock, data_in for input data, data_out for output data.

- Proper Indentation and Formatting: Consistent indentation enhances readability and makes it easy to identify code blocks. Most HDL editors provide auto-formatting features.

- Modularity: Divide complex designs into smaller, independent modules. This improves code reusability and reduces complexity.

- Comments: Add comments to explain the purpose and function of code sections. Especially crucial for complex logic or algorithms.

- Data Types: Use appropriate data types (e.g., signed/unsigned integers, vectors, arrays) to efficiently represent data.

- Synthesizable Coding: Avoid constructs that synthesis tools do not support. For example, explicit delays such as #10 are ignored by synthesis, and simulation-only system tasks such as $display and file I/O belong in testbenches rather than in design code.

Different projects and teams might adopt their preferred coding style guidelines. Maintaining consistency is paramount within a project.

Q 8. Describe your experience with synthesis tools.

Synthesis is the crucial process of translating a design written in a hardware description language (HDL) such as Verilog or VHDL into a netlist: a description of interconnected logic gates. I've extensively used synthesis tools like Synopsys Design Compiler and Xilinx Vivado throughout my career. My experience encompasses not just running the tools but also optimizing the synthesis process for area, power, and performance. For instance, in a recent project designing a high-speed packet processor, I leveraged Design Compiler's advanced optimization options to reduce the chip area by 15% while maintaining the required clock frequency, significantly impacting the final cost and performance.

My workflow typically involves:

- HDL code review: Ensuring the code is synthesizable and follows best practices for optimal results.

- Constraint definition: Setting timing constraints, area constraints, and other design requirements to guide the synthesis tool.

- Synthesis run and analysis: Running the synthesis tool, analyzing the reports (timing, area, power), and iteratively refining the design to meet specifications.

- Optimization techniques: Employing techniques such as clock gating, register balancing, and hierarchical synthesis to improve performance and resource utilization.

Understanding the synthesis tool's capabilities and limitations is paramount. For example, I know when to leverage specific synthesis directives or explore different synthesis strategies to overcome challenges like timing closure or excessive resource usage.

Q 9. How do you handle clock domain crossing?

Clock domain crossing (CDC) is a critical issue in digital design where signals need to transition between asynchronous clock domains. The primary concern is metastability, where a signal caught in an indeterminate state can cause unpredictable behavior. My approach to handling CDC involves a multi-layered strategy:

- Asynchronous FIFOs: For high-throughput data transfer, asynchronous FIFOs provide a robust solution. They handle data transfer between clock domains reliably, mitigating metastability risks. I've used this extensively in high-speed communication interfaces.

- Synchronization stages: For single-bit signals or low-data-rate transfers, multiple flip-flops (typically two or more) are used in the receiving clock domain to synchronize the signal. The probability of metastability propagating through multiple stages is significantly reduced. I've successfully employed this method in various control signal synchronization scenarios.

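- Gray codes: For multi-bit counters and pointers (for example, the read and write pointers of an asynchronous FIFO) and for state machines that step through their states sequentially, Gray coding guarantees that consecutive values differ in exactly one bit. If the receiving domain samples the value mid-transition, it sees either the old or the new value, never an unrelated one, which limits the impact of metastability.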

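- Formal verification: Structural CDC checks and formal verification can prove that every crossing uses an approved synchronization scheme and that multi-bit crossings cannot be sampled inconsistently. Metastability itself cannot be eliminated, but these checks catch missing or incorrect synchronizers. This is an increasingly important part of my design process for critical systems.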

Choosing the appropriate CDC method depends on factors like data rate, data width, and the criticality of the design. Thorough simulation and analysis are always crucial to validate the chosen solution.
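The single-bit-change property of Gray codes is easy to check in software. The Python sketch below converts between binary and Gray code and confirms that every step of a 4-bit count, including the wrap from 15 back to 0, flips exactly one bit, whereas plain binary flips four bits going from 7 to 8. In hardware, the Gray value is typically registered in the source domain before it enters the synchronizer so that no combinational glitches cross the boundary; the function names here are illustrative.

```python
def bin_to_gray(n):
    # Each Gray bit is the XOR of two adjacent binary bits
    return n ^ (n >> 1)


def gray_to_bin(g):
    # Undo the XOR chain by folding in successively shifted copies
    n = g
    shift = g >> 1
    while shift:
        n ^= shift
        shift >>= 1
    return n


def bits_changed(x, y):
    # Number of bit positions that toggle between two values
    return bin(x ^ y).count("1")


if __name__ == "__main__":
    width = 4
    size = 1 << width
    gray_steps = [bits_changed(bin_to_gray(i), bin_to_gray((i + 1) % size))
                  for i in range(size)]
    binary_steps = [bits_changed(i, (i + 1) % size) for i in range(size)]
    print("max bits changed per step, Gray:  ", max(gray_steps))
    print("max bits changed per step, binary:", max(binary_steps))
```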

Q 10. Explain your experience with constraint files (XDC, SDC).

Constraint files, like XDC (Xilinx Design Constraints) and SDC (Synopsys Design Constraints), are essential for guiding the synthesis, place-and-route, and timing analysis tools. They specify design requirements such as clock frequencies, input/output delays, and timing exceptions. I have extensive experience defining and managing these files for complex FPGAs and ASICs.

My expertise includes:

- Creating timing constraints: Accurately defining clock periods, input and output delays, and other timing parameters based on the system specifications and physical board characteristics.

- Generating and using I/O constraints: Defining the pin assignments, slew rates, and drive strengths for the external interfaces. I've used this to optimize signal integrity and achieve the required communication speeds.

- Managing false paths: Identifying and constraining false paths (signal paths that should not be considered during timing analysis) to avoid unnecessary timing violations and improve timing closure.

- Using constraints for physical design: Incorporating area and placement constraints to manage resource allocation and optimize the physical layout.

- Debugging timing violations: Analyzing timing reports generated by the tools, identifying timing violations, and adjusting constraints or design to resolve them.

A well-defined constraint file is critical for successful implementation. In one project, a poorly defined constraint file resulted in significant timing violations. By meticulously reviewing and refining the constraints, I successfully closed the timing and delivered a functional design on time.
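Because constraint files are plain text, the clock, I/O-delay, and clock-group rules described above are often generated from a single specification rather than written by hand. The Python sketch below emits standard SDC commands (create_clock, set_input_delay, set_output_delay, set_clock_groups), which XDC also accepts. The port names and delay values are hypothetical, clocks are assumed to be named after their ports, and only -max delays are emitted; a complete constraint set normally adds -min values for hold analysis.

```python
def make_constraints(clocks, inputs, outputs, async_groups=()):
    """Emit SDC/XDC-style timing constraints from a small specification.

    clocks       -- {port_name: period_ns}; each clock is named after its port
    inputs       -- {port_name: (clock_name, max_delay_ns)}
    outputs      -- {port_name: (clock_name, max_delay_ns)}
    async_groups -- pairs of clock names with no timing relationship
    """
    lines = []
    for name, period in clocks.items():
        lines.append(f"create_clock -name {name} -period {period:.3f} [get_ports {name}]")
    for port, (clk, delay) in inputs.items():
        lines.append(f"set_input_delay -clock {clk} -max {delay:.3f} [get_ports {port}]")
    for port, (clk, delay) in outputs.items():
        lines.append(f"set_output_delay -clock {clk} -max {delay:.3f} [get_ports {port}]")
    for a, b in async_groups:
        # Tells the tools not to time paths between these clocks
        lines.append(f"set_clock_groups -asynchronous "
                     f"-group [get_clocks {a}] -group [get_clocks {b}]")
    return "\n".join(lines)


if __name__ == "__main__":
    print(make_constraints(
        clocks={"sys_clk": 10.0, "eth_clk": 8.0},
        inputs={"data_in": ("sys_clk", 2.0)},
        outputs={"data_out": ("sys_clk", 1.5)},
        async_groups=[("sys_clk", "eth_clk")],
    ))
```

For asynchronous clocks, set_clock_groups -asynchronous is a common alternative to writing a pair of set_false_path commands, one in each direction.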

Q 11. What is metastability and how can it be mitigated?

Metastability is a phenomenon that occurs when a flip-flop's data input changes within the setup/hold window around the active clock edge. The flip-flop can then enter an unpredictable state, neither a '0' nor a '1', which can persist for an indeterminate time. If that state propagates through the design, it can cause intermittent failures. It’s like trying to catch a ball when your hand isn't fully ready: you might sometimes catch it, but other times the ball might slip.

Mitigation strategies:

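- Multiple flip-flops: Asynchronous signals should be synchronized with a chain of two or more flip-flops in the target clock domain. Each additional stage gives the previous one roughly another clock period to resolve, so the probability of metastability propagating further drops exponentially.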

- Asynchronous FIFOs: These provide robust synchronization for data transfers between asynchronous clocks.

- Careful clocking strategies: Proper clock distribution and management can minimize the chance of setup/hold violations.

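- Robust design techniques: Designing with sufficient timing margin lessens the impact of metastability. For example, giving the synchronizer more resolution time (extra stages, or a slower capture clock where the system allows it) improves reliability; a faster clock shortens the resolution time and makes failures more likely.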

- Metastability analysis: Structural CDC analysis tools and formal verification can help identify unsynchronized crossings and analyze potential metastability issues.

It is crucial to remember that metastability cannot be completely eliminated, but its probability can be significantly reduced using these methods.
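The effect of these mitigations can be estimated with the standard synchronizer MTBF formula, MTBF = e^(t_r/τ) / (T_w · f_clk · f_data), where t_r is the time the first flop has to resolve, τ is the flop's resolution time constant, and T_w is its metastability window. The Python sketch below evaluates it. The τ, T_w, and setup-time values are hypothetical placeholders (real values come from device characterization data), and the model treats the resolution time of a two-flop synchronizer as one clock period minus the second flop's setup time.

```python
import math


def synchronizer_mtbf(t_resolve_s, tau_s, t_window_s, f_clk_hz, f_data_hz):
    """Classic synchronizer MTBF estimate in seconds.

    MTBF = exp(t_r / tau) / (T_w * f_clk * f_data)
    """
    return math.exp(t_resolve_s / tau_s) / (t_window_s * f_clk_hz * f_data_hz)


if __name__ == "__main__":
    tau, t_w = 50e-12, 100e-12   # hypothetical device parameters
    t_setup = 0.2e-9             # hypothetical setup time of the second flop
    f_data = 10e6                # asynchronous input toggles at 10 MHz
    seconds_per_year = 3600 * 24 * 365
    for f_clk in (100e6, 400e6):
        # Two-flop synchronizer: first flop gets about one period to resolve
        t_r = 1.0 / f_clk - t_setup
        mtbf = synchronizer_mtbf(t_r, tau, t_w, f_clk, f_data)
        print(f"{f_clk / 1e6:.0f} MHz clock: MTBF ~ {mtbf / seconds_per_year:.1e} years")
```

Because t_r sits in the exponent, each extra synchronizer stage multiplies MTBF by roughly e^(T_clk/τ), and quadrupling the clock frequency cuts MTBF by many orders of magnitude rather than by a factor of four.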

Q 12. Describe your experience with simulation tools (ModelSim, VCS).

I have extensive experience using industry-standard simulation tools like ModelSim and VCS for verifying the functionality and timing of my FPGA and ASIC designs. My expertise encompasses both behavioral and RTL simulations.

In ModelSim, I frequently use:

- Testbench development: Creating comprehensive testbenches to stimulate the design and verify its behavior under various conditions. I'm adept at using SystemVerilog for advanced testbench development.

- Debugging using waveforms: Analyzing waveforms to identify and debug functional issues.

- Code coverage analysis: Determining the level of code coverage to ensure thorough testing.

Similarly, with VCS, I leverage its capabilities for advanced verification including:

- Functional coverage: Ensuring that all the intended functionality of the design is verified.

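- Gate-level simulation with timing: Simulating the synthesized or placed-and-routed netlist with SDF back-annotated delays to catch timing-related issues that RTL simulation cannot reveal, as a complement to static timing analysis.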

- SystemVerilog support: Using SystemVerilog features such as constrained random verification for more efficient and comprehensive testing.

Choosing between ModelSim and VCS often depends on project requirements and existing infrastructure; however, my proficiency with both enables adaptability to diverse environments.
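The constrained-random and functional-coverage ideas above are tool-independent, so they can be sketched in plain Python. Below, a hypothetical 8-bit saturating adder stands in for the design under test, a separate golden model checks every result, the stimulus is constrained to hit corner values more often than uniform random would, and coverage counters record which scenarios were exercised. In a real flow the DUT would be RTL running in ModelSim or VCS, and the constraints and covergroups would be written in SystemVerilog.

```python
import random

WIDTH = 8
MAX_VAL = (1 << WIDTH) - 1  # 255 for 8 bits


def reference_sat_add(a, b):
    """Golden model: unsigned add that clamps at the maximum value."""
    return min(a + b, MAX_VAL)


def dut_sat_add(a, b):
    """Stand-in for the design under test (the RTL in a real flow)."""
    total = a + b
    return MAX_VAL if total > MAX_VAL else total


def constrained_operand(rng):
    # Constraint: 30% of draws come from corner values that uniform
    # random stimulus would rarely hit; the rest are uniform.
    if rng.random() < 0.3:
        return rng.choice([0, 1, MAX_VAL - 1, MAX_VAL])
    return rng.randint(0, MAX_VAL)


def run(num_tests=2000, seed=1):
    rng = random.Random(seed)  # fixed seed makes failures reproducible
    coverage = {"a_zero": 0, "b_max": 0, "saturated": 0,
                "exact_max": 0, "no_overflow": 0}
    mismatches = []
    for _ in range(num_tests):
        a, b = constrained_operand(rng), constrained_operand(rng)
        expected, actual = reference_sat_add(a, b), dut_sat_add(a, b)
        if actual != expected:
            mismatches.append((a, b, expected, actual))
        # Functional coverage: count how often each scenario occurred
        coverage["a_zero"] += int(a == 0)
        coverage["b_max"] += int(b == MAX_VAL)
        coverage["saturated"] += int(a + b > MAX_VAL)
        coverage["exact_max"] += int(a + b == MAX_VAL)
        coverage["no_overflow"] += int(a + b < MAX_VAL)
    return mismatches, coverage


if __name__ == "__main__":
    mismatches, coverage = run()
    print("mismatches:", len(mismatches))
    print("coverage holes:", [name for name, hits in coverage.items() if hits == 0])
```

A coverage bin that stays at zero marks a hole: a scenario the random stimulus never exercised, which usually calls for a new constraint or a directed test.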

Q 13. Explain the concept of power optimization in FPGA/ASIC design.

Power optimization is a critical aspect of modern FPGA and ASIC design, especially for battery-powered or high-density applications. The goal is to minimize power consumption without compromising performance or functionality. My approach to power optimization includes several key strategies:

- Clock gating: Disabling clock signals to inactive parts of the design. This is a highly effective technique I often use, especially in designs with large clock trees.

- Power-aware synthesis and place-and-route: Utilizing the power optimization capabilities of synthesis and place-and-route tools. For example, these tools can keep cells connected by high-activity nets close together, shortening those wires and reducing the capacitance that switches every cycle.

- Low-power libraries and cells: Employing specialized low-power cells and libraries provided by the FPGA/ASIC vendors.

- Voltage scaling: Reducing the operating voltage to lower power consumption, although this requires careful analysis to ensure proper functionality.

- Multi-voltage domains: Dividing the design into multiple voltage domains, allowing sections with lower activity to run at lower voltages.

- Design architectural improvements: Optimizing the design architecture itself to reduce switching activity and power dissipation.

In a recent project involving a low-power sensor, I successfully reduced power consumption by 30% using a combination of clock gating, power-aware place-and-route, and design architectural changes, while adhering to the stringent performance requirements.
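Most of these techniques target the dynamic switching power P = α · C · V² · f, where α is the activity factor, C the switched capacitance, V the supply voltage, and f the clock frequency. The Python sketch below shows why clock gating (a lower effective α) and voltage scaling (quadratic in V) are so effective. All values are hypothetical, and the model ignores leakage power, the overhead of the gating cells, and the frequency reduction that a lower voltage often requires.

```python
def dynamic_power(alpha, c_farads, vdd, f_hz):
    """Dynamic switching power in watts: P = alpha * C * V^2 * f."""
    return alpha * c_farads * vdd ** 2 * f_hz


if __name__ == "__main__":
    # Hypothetical block: 1 nF switched capacitance, 0.9 V, 500 MHz
    base = dynamic_power(alpha=0.15, c_farads=1e-9, vdd=0.9, f_hz=500e6)

    # Clock gating: assume the block is idle (clock stopped) 60% of the time
    gated = dynamic_power(alpha=0.15 * 0.4, c_farads=1e-9, vdd=0.9, f_hz=500e6)

    # Voltage scaling: same activity, supply lowered from 0.9 V to 0.8 V
    scaled = dynamic_power(alpha=0.15, c_farads=1e-9, vdd=0.8, f_hz=500e6)

    for label, p in (("baseline", base), ("clock gated", gated), ("0.8 V", scaled)):
        print(f"{label:12s} {p * 1e3:6.2f} mW")
```

With these assumed numbers, gating cuts dynamic power from 60.75 mW to 24.30 mW, and lowering the supply from 0.9 V to 0.8 V alone saves about 21%, since (0.8/0.9)² ≈ 0.79.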

Q 14. What are different methods for debugging FPGA designs?

Debugging FPGA designs can be challenging due to the inherent complexity of the hardware. My approach involves a combination of techniques:

- Simulation: Using simulation tools to verify functionality before implementing the design on the FPGA. This helps catch errors early in the design process.

- Hardware debugging tools: Using logic analyzers, oscilloscopes, and in-circuit emulators to observe signals on the FPGA board directly.

- FPGA vendor tools: Leveraging integrated debugging features in FPGA vendor tools (e.g., Xilinx Vivado's ILA (Integrated Logic Analyzer) and VIO (Virtual Input/Output)). These tools allow monitoring internal signals without external equipment.

- Signal tracing and analysis: Systematically tracing signals through the design to identify the root cause of errors using debugging tools.

- Timing analysis: Using timing analysis reports to identify timing violations that might contribute to erratic behavior.

- Formal verification: For highly critical designs, using formal verification techniques to prove correctness and identify potential issues before testing on the physical hardware.

A methodical approach and a good understanding of the design are key to effective debugging. Often, a combination of these techniques needs to be applied. I also prioritize documenting each step and outcome to facilitate troubleshooting and future modifications.

Q 15. How do you perform floorplanning and placement in ASIC design?

Floorplanning and placement in ASIC design are crucial steps that significantly impact performance, power consumption, and manufacturing cost. Floorplanning involves the high-level arrangement of major functional blocks (like processors, memories, and I/O) on the chip, while placement determines the precise location of individual logic gates and cells within those blocks. Think of it like designing a city: floorplanning is deciding where to put residential areas, industrial parks, and commercial centers, while placement is deciding where each individual building goes within those zones.

The process typically starts with a top-down approach. We analyze the design's critical paths and power requirements to strategically position critical blocks. For example, a high-speed processor would be placed close to its memory controller to minimize latency. Similarly, high-power components would be strategically positioned to improve thermal management. We utilize tools like Synopsys IC Compiler or Cadence Innovus which use algorithms like simulated annealing and force-directed placement to optimize the layout. These tools consider factors like wire length, congestion, and timing constraints. We iteratively refine the placement based on the results of timing analysis and signal integrity checks. Manual intervention is sometimes necessary to fine-tune the placement, especially for critical paths or sensitive analog circuits. The goal is to create a balanced layout that meets all design specifications while minimizing area and maximizing performance.

Once the placement is finalized, a detailed analysis is performed, often through static timing analysis (STA) to verify that the design meets its timing requirements. Any timing violations are then addressed through further placement adjustments or by adding buffers or other timing optimization techniques.
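Placement tools need a fast estimate of wire length to compare candidate layouts, and half-perimeter wirelength (HPWL), the width plus height of the bounding box around each net's pins, is a widely used one. The Python sketch below computes total HPWL for a toy placement and shows that moving a memory controller next to the processor, as in the example above, lowers it. The block names, coordinates, and the use of block centers as pin locations are assumptions; production placers combine a wirelength model like this with timing, density, and congestion terms.

```python
def hpwl(pins):
    """Half-perimeter wirelength of one net: bounding-box width + height."""
    xs = [x for x, _ in pins]
    ys = [y for _, y in pins]
    return (max(xs) - min(xs)) + (max(ys) - min(ys))


def total_hpwl(nets, placement):
    """Sum HPWL over all nets; placement maps block name -> (x, y)."""
    return sum(hpwl([placement[block] for block in blocks])
               for blocks in nets.values())


if __name__ == "__main__":
    # Hypothetical nets, each listing the blocks it connects
    nets = {"cpu_mem": ["cpu", "memctl"], "mem_io": ["memctl", "io", "cpu"]}
    spread_out = {"cpu": (0, 0), "memctl": (10, 0), "io": (10, 10)}
    clustered = {"cpu": (0, 0), "memctl": (2, 1), "io": (10, 10)}
    print("spread out:", total_hpwl(nets, spread_out))
    print("clustered: ", total_hpwl(nets, clustered))
```

Here the clustered placement scores 23 against 30 for the spread-out one, because the cpu_mem net shrinks while the bounding box of mem_io is unchanged.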

Q 16. Explain your experience with routing and signal integrity.

Routing and signal integrity are intrinsically linked. Routing involves connecting the placed components via physical interconnects (metal layers) on the chip. Signal integrity, on the other hand, is about ensuring that signals arrive at their destination cleanly and without distortion. It deals with issues like reflections, crosstalk, and electromagnetic interference (EMI). Poor signal integrity can lead to malfunctioning circuits, data corruption, and timing violations.

My experience encompasses using advanced routing tools like those found in Synopsys IC Compiler and Cadence Innovus. These tools employ sophisticated algorithms to find optimal routes that minimize wire length, delay, and congestion. I've been actively involved in analyzing signal integrity using circuit simulators such as HSPICE to identify and mitigate potential problems. This involves analyzing signal waveforms, calculating impedance matching, and implementing techniques like shielding, controlled impedance lines, and termination resistors to minimize reflections and crosstalk. In one project, we were able to resolve a critical timing violation by strategically routing a high-speed signal on a lower metal layer with improved shielding and impedance control.

Understanding the importance of various routing metrics—such as via count, total wire length, and congestion—is key to creating a robust and reliable design. I actively use reports generated by the routing tools to identify potential problem areas and proactively address them. We need to ensure that the routed design meets timing closure requirements and adheres to strict EMI/EMC guidelines. This often involves several iterations of routing and signal integrity analysis before the final design is deemed acceptable.
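Reflections are governed by the mismatch between a line's characteristic impedance Z0 and the impedance Z_L at its end, through the reflection coefficient Γ = (Z_L - Z0) / (Z_L + Z0). The Python sketch below evaluates Γ for a few loads and computes a series-termination resistor under the common approximation that driver output impedance plus the series resistor should equal Z0. The 50 Ω line and 15 Ω driver are hypothetical values.

```python
import math


def reflection_coefficient(z_load, z0):
    """Fraction of an incident wave reflected at a load: (ZL - Z0) / (ZL + Z0)."""
    if math.isinf(z_load):
        return 1.0  # open circuit, e.g. an unterminated high-impedance input
    return (z_load - z0) / (z_load + z0)


def series_termination(z0, r_driver):
    """Series resistor so that driver impedance + resistor matches the line."""
    return max(z0 - r_driver, 0.0)


if __name__ == "__main__":
    z0 = 50.0  # assumed trace impedance, ohms
    for label, zl in (("matched 50 ohm", 50.0), ("75 ohm load", 75.0),
                      ("short", 0.0), ("open / CMOS input", math.inf)):
        print(f"{label:18s} gamma = {reflection_coefficient(zl, z0):+.2f}")
    print(f"series resistor for a 15 ohm driver: {series_termination(z0, 15.0):.0f} ohm")
```

A high-impedance receiver reflects almost the entire wave (Γ close to +1). Series termination tolerates that reflection because the matched source end absorbs the returning wave instead of bouncing it back down the line.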

Q 17. What are your experiences with different FPGA vendors (Xilinx, Intel, Microsemi)?

I have extensive experience with Xilinx, Intel (formerly Altera), and Microsemi FPGAs. Each vendor offers a unique architecture and toolset. Xilinx devices, known for their 7-series and UltraScale architectures, excel in high-performance computing and embedded applications. I've worked extensively with their Vivado Design Suite and am proficient in HDL coding (Verilog and VHDL), constraint management (XDC), and IP integration. I've leveraged Xilinx's extensive IP library, including high-speed serial interfaces like PCIe and Ethernet.

Intel FPGAs, with their Stratix and Arria families, are known for their power efficiency and density. I'm familiar with the Quartus Prime software, using its features for design entry, synthesis, and timing analysis. I've successfully implemented designs using Intel's embedded processors and transceivers. Microsemi's FPGAs, notably the PolarFire family, are robust and suitable for harsh environments. I've worked with their Libero SoC software, integrating their devices into secure and reliable systems.

The key difference in my experience lies in the specific tools and design methodologies. Each vendor’s design flow has subtle nuances that require familiarity and adaptability. For example, Xilinx’s Vivado emphasizes a more integrated flow, while Quartus Prime provides greater control over specific design steps. Understanding these differences allows for efficient and effective design implementation.

Q 18. What is your experience with formal verification tools?

Formal verification is a crucial part of ensuring design correctness and preventing costly errors late in the design cycle. I've used tools like JasperGold and Cadence Conformal for property checking and equivalence checking. Property checking involves specifying design properties using a formal language like SystemVerilog Assertions (SVA) and then using the tool to mathematically prove that these properties hold for all possible inputs and reachable states. Equivalence checking compares two different versions of a design (e.g., an RTL design and a synthesized netlist) to verify that they behave identically.

My experience includes developing and debugging SVA properties to verify complex data paths and control logic. Formal verification is particularly useful for catching subtle errors that might be missed by simulation-based verification. For instance, in one project, formal verification helped us uncover a race condition in a state machine that would have been difficult to detect through simulation alone. The use of formal verification significantly reduces the risk of releasing a faulty design, saving time and resources during the later stages of the design process.

Formal verification isn’t a replacement for simulation, but rather a complementary technique. The combination provides a more comprehensive verification strategy.
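Equivalence checking asks whether two descriptions produce the same outputs for every input. For a tiny combinational cone this can be shown by brute-force enumeration, as in the Python sketch below, which compares an RTL-style mux/XOR against a restructured gate-level form and then against a deliberately buggy variant. The functions are hypothetical. Commercial equivalence checkers typically rely on SAT/BDD-based reasoning and register mapping rather than enumeration, which would be hopeless for real designs.

```python
from itertools import product


def rtl_mux_xor(sel, a, b, c):
    # "Golden" RTL behavior: out = sel ? (a ^ b) : (a ^ c)
    return (a ^ b) if sel else (a ^ c)


def netlist_mux_xor(sel, a, b, c):
    # Restructured gate form: a ^ (sel ? b : c), mux built from AND/OR/NOT
    mux = (sel & b) | ((1 - sel) & c)
    return a ^ mux


def netlist_buggy(sel, a, b, c):
    # Faulty restructuring: the select term on c was dropped
    mux = (sel & b) | c
    return a ^ mux


def equivalent(f, g, num_inputs):
    """Return (True, None), or (False, counterexample), by trying every input."""
    for bits in product((0, 1), repeat=num_inputs):
        if f(*bits) != g(*bits):
            return False, bits
    return True, None


if __name__ == "__main__":
    print(equivalent(rtl_mux_xor, netlist_mux_xor, 4))
    print(equivalent(rtl_mux_xor, netlist_buggy, 4))
```

The first comparison reports equivalence; the second returns the counterexample (sel, a, b, c) = (1, 0, 0, 1), which is the kind of concrete failing input an equivalence checker hands back for debugging.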

Q 19. Describe your experience with scripting languages (Tcl, Python) in the context of FPGA/ASIC design.

Scripting languages like Tcl and Python are essential for automating repetitive tasks and improving efficiency in FPGA/ASIC design flows. Tcl is deeply integrated into most EDA tools, making it ideal for customizing workflows and automating design tasks such as generating constraints, running simulations, and analyzing results. Python, with its extensive libraries, is a powerful tool for higher-level automation, data analysis, and creating custom design tools.

I've used Tcl extensively to automate the generation of XDC constraints for timing closure, write scripts to manage design files, and automate the synthesis and implementation process within Vivado and Quartus. I've used Python for tasks such as analyzing timing reports, generating testbenches, and creating custom scripts to interact with EDA tools through their APIs. For example, I developed a Python script that automatically extracts timing information from a Vivado timing report and creates a customized visualization of critical paths, which greatly accelerated our timing closure efforts.

Proficiency in scripting is crucial for optimizing the design process. It enables efficient reuse of code, reduces manual intervention, and promotes consistency. By leveraging the power of scripting languages, design engineers can spend less time on tedious tasks and focus more on problem-solving and creative design.
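As a small example of the report-analysis scripts described above, the Python sketch below computes worst negative slack (WNS), total negative slack (TNS), and the worst paths from timing data. It assumes the paths have already been exported to CSV with hypothetical columns startpoint, endpoint, and slack_ns, one row per endpoint; parsing a specific tool's native report is deliberately left out, since those formats vary by tool and version.

```python
import csv
import io

# Hypothetical export: one row per endpoint, slack in nanoseconds
SAMPLE_CSV = """startpoint,endpoint,slack_ns
ff_a/Q,ff_b/D,-0.250
ff_c/Q,ff_d/D,0.120
ff_e/Q,ff_f/D,-0.075
ff_g/Q,ff_h/D,1.300
"""


def summarize_slack(csv_text, top_n=3):
    """Return (WNS, TNS, worst rows) from CSV text with a slack_ns column."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    for row in rows:
        row["slack_ns"] = float(row["slack_ns"])
    rows.sort(key=lambda r: r["slack_ns"])  # most negative slack first
    wns = rows[0]["slack_ns"] if rows else 0.0
    # TNS sums only the failing (negative) slacks
    tns = sum(r["slack_ns"] for r in rows if r["slack_ns"] < 0)
    return wns, tns, rows[:top_n]


if __name__ == "__main__":
    wns, tns, worst = summarize_slack(SAMPLE_CSV)
    print(f"WNS {wns:.3f} ns, TNS {tns:.3f} ns")
    for r in worst:
        print(f"{r['slack_ns']:+.3f}  {r['startpoint']} -> {r['endpoint']}")
```

For the sample data, WNS is -0.250 ns and TNS is -0.325 ns, with ff_b/D reported as the worst endpoint.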

Q 20. How do you manage large and complex FPGA/ASIC projects?

Managing large and complex FPGA/ASIC projects requires a structured approach. We utilize a combination of project management methodologies, version control, and collaborative tools. I've successfully employed Agile methodologies, breaking down large projects into smaller, manageable tasks with clearly defined milestones and deliverables. This iterative approach allows for early detection and resolution of issues, reducing risks and delays. Utilizing tools like Jira or similar project management software helps us track progress, manage tasks, and ensure on-time delivery.

Version control using Git is essential for managing the design files and ensuring collaboration among team members. We maintain a well-defined branching strategy to accommodate parallel development and facilitate easy merging of changes. We regularly use code reviews to improve code quality and ensure consistency across the project. We adhere to a rigorous design methodology, using a top-down approach to decompose the project into well-defined modules. This modular design approach simplifies the verification and integration process.

Communication and collaboration are paramount. Regular meetings, design reviews, and detailed documentation ensure that everyone is on the same page. Careful planning, risk assessment, and proactive issue management are key to successfully delivering complex projects on time and within budget.

Q 21. Explain the different types of memory in FPGA/ASIC designs.

FPGA and ASIC designs utilize various types of memory, each with its own performance characteristics and trade-offs. These memories are essential for storing data and instructions, impacting overall system performance and power consumption. The choice of memory depends on factors such as access speed, capacity, cost, and power requirements.

- SRAM (Static Random-Access Memory): SRAM offers fast access times but relatively low density and higher power consumption. It's commonly used for register files, caches, and buffers in high-speed data paths.

- DRAM (Dynamic Random-Access Memory): DRAM is denser than SRAM, offering higher capacity at lower cost. However, it has slower access times and requires periodic refreshing. It's used for main memory in systems.

- Embedded Block RAM (BRAM): FPGAs include dedicated BRAM blocks that are configurable as either single- or dual-port memories. They provide a balance between speed, density, and power efficiency, suitable for implementing data buffers, lookup tables, and other applications.

- Register Files: These are arrays of flip-flops used for storing data within a processor or other data-intensive units. They offer very fast access times.

- FIFO (First-In, First-Out): FIFOs buffer data between a producer and a consumer. Synchronous FIFOs absorb rate differences within one clock domain, while asynchronous (dual-clock) FIFOs provide a reliable way to pass data between modules operating on different clocks.

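- ROM (Read-Only Memory): ROM stores pre-loaded data or firmware that doesn't need to be modified during operation. In an ASIC, a mask ROM is non-volatile and retains its contents without power; in SRAM-based FPGAs, a ROM is usually block RAM initialized from the configuration bitstream, so its contents are reloaded at each power-up.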

The selection of appropriate memory types for each component is a crucial part of the design process, impacting the overall system performance and resource utilization. Careful consideration of memory access patterns and bandwidth requirements is needed to achieve optimal results.
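The FIFO entry above hides a classic design detail: with read and write pointers one bit wider than the address, equal pointers mean empty, and pointers that differ only in the extra wrap bit mean full. The Python sketch below models a single-clock FIFO this way. The class name, the depth, and the choice to raise an exception on overflow or underflow (hardware usually ignores or flags such requests) are assumptions. An asynchronous FIFO uses the same extra-wrap-bit idea but Gray-codes the pointers before synchronizing them into the other clock domain.

```python
class SyncFifo:
    """Behavioral model of a synchronous FIFO with depth = 2**addr_bits."""

    def __init__(self, addr_bits=2):
        self.depth = 1 << addr_bits
        self.mem = [None] * self.depth
        # Pointers are addr_bits + 1 wide; the extra MSB counts wrap-arounds
        self.mask = (1 << (addr_bits + 1)) - 1
        self.wr_ptr = 0
        self.rd_ptr = 0

    def empty(self):
        return self.wr_ptr == self.rd_ptr

    def full(self):
        # Same address bits, opposite wrap bits: writer is one lap ahead
        return (self.wr_ptr ^ self.rd_ptr) == self.depth

    def push(self, value):
        if self.full():
            raise OverflowError("write to full FIFO")
        self.mem[self.wr_ptr & (self.depth - 1)] = value
        self.wr_ptr = (self.wr_ptr + 1) & self.mask

    def pop(self):
        if self.empty():
            raise IndexError("read from empty FIFO")
        value = self.mem[self.rd_ptr & (self.depth - 1)]
        self.rd_ptr = (self.rd_ptr + 1) & self.mask
        return value


if __name__ == "__main__":
    fifo = SyncFifo(addr_bits=2)  # depth 4
    for word in (10, 11, 12, 13):
        fifo.push(word)
    print("full:", fifo.full())
    print(fifo.pop(), fifo.pop())
    fifo.push(14)  # write pointer wraps around the memory
    fifo.push(15)
    print([fifo.pop() for _ in range(4)], "empty:", fifo.empty())
```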

Q 22. What are your experiences with different design methodologies (e.g., top-down, bottom-up)?

Throughout my career, I've extensively utilized both top-down and bottom-up design methodologies, tailoring my approach to the specific project requirements and complexity. Top-down design starts with a high-level architectural specification, progressively refining it into lower-level modules and ultimately, the gate-level netlist. This approach is excellent for managing complex projects, ensuring early identification of potential issues. Think of building a house – you start with the overall blueprint before detailing individual rooms. I've used this extensively in designing high-bandwidth data processing systems, where the initial focus was on data flow and overall latency requirements, before diving into individual block implementations.

Conversely, bottom-up design starts with pre-designed, verified modules that are integrated to create a larger system. This is effective when reusing existing IP blocks or for projects with well-defined, smaller components. It's like assembling a pre-fabricated home, where individual modules (walls, windows) are combined. I've employed this strategy when integrating a pre-verified Ethernet MAC into a larger SoC design. The choice between these methodologies often depends on project constraints – time, complexity, available resources, and the reuse of IP.

Q 23. Describe your experience with physical design tools.

My experience with physical design tools spans several leading EDA suites, including Synopsys IC Compiler, Cadence Innovus, and Mentor Graphics Olympus-SoC. I'm proficient in all stages of the physical design flow, from floorplanning and placement to routing and timing closure. Floorplanning involves strategically placing the major blocks within the chip, considering power distribution, signal integrity, and thermal constraints. Placement optimizes the location of individual cells to minimize wire length and improve performance. Routing connects the cells, considering signal integrity, timing, and routing congestion. Finally, timing closure ensures the design meets the required speed and timing specifications.
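Placers cannot afford exact routed wire lengths at every optimization step, so a widely used estimate is half-perimeter wirelength (HPWL): for each net, half the perimeter of the bounding box around its pins. The sketch below computes total HPWL for a toy placement. The cell names, coordinates, and netlist are hypothetical, and commercial tools such as those named above use their own, more elaborate cost models.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def net_hpwl(pins: List[Point]) -> float:
    """Half-perimeter of the bounding box enclosing all pins of one net."""
    xs = [x for x, _ in pins]
    ys = [y for _, y in pins]
    return (max(xs) - min(xs)) + (max(ys) - min(ys))


def total_hpwl(placement: Dict[str, Point], nets: List[List[str]]) -> float:
    """Sum HPWL over all nets, looking up each cell's placed location."""
    return sum(net_hpwl([placement[cell] for cell in net]) for net in nets)


# Hypothetical placement (cell -> (x, y) in micrometres) and two nets.
placement = {"u1": (0.0, 0.0), "u2": (4.0, 1.0), "u3": (2.0, 5.0)}
nets = [["u1", "u2"], ["u1", "u2", "u3"]]
print(total_hpwl(placement, nets))  # 14.0
```

A placer evaluates candidate cell moves or swaps by how much they change this total, alongside congestion and timing terms.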

I've successfully tackled challenging physical design tasks, including handling high-speed serial interfaces like PCIe and SerDes. These often require careful consideration of signal integrity, electromagnetic interference (EMI), and clock distribution. A particular project involved optimizing the routing of a high-speed bus in a high-performance computing chip, where I used techniques such as clock tree synthesis and advanced routing algorithms to achieve optimal signal integrity and timing performance.

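Example: In IC Compiler, I use commands such as 'place_opt' for placement and optimization and 'route_opt' for routing and post-route optimization. Timing constraints are supplied in SDC (Synopsys Design Constraints) files, while LEF (Library Exchange Format) files describe the technology and cell abstracts and DEF (Design Exchange Format) files carry the floorplan, placement, and routing data.

Q 24. How do you ensure design security in your FPGA/ASIC designs?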

Design security is paramount, especially in applications where intellectual property (IP) protection or data security is critical. My approach to securing FPGA/ASIC designs involves a multi-layered strategy. First, I use obfuscation techniques to make reverse engineering more difficult. One such technique is logic locking (also called logic encryption), in which key-controlled gates are inserted so that the circuit behaves correctly only when the correct secret key is applied. Second, I employ secure IP cores and carefully manage access controls to prevent unauthorized modification of the design. Third, for critical designs, I use formal verification to check security properties, such as the absence of unintended information flow from secret data to observable outputs. Physical side channels such as power analysis are harder to rule out formally and typically also need measurement-based evaluation.
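Logic encryption, also known as logic locking, is commonly implemented by inserting XOR/XNOR key gates into the netlist so that only the correct key makes the locked circuit match the original function. The sketch below locks a tiny hypothetical 3-input function with two key gates. The circuit, the 2-bit key width, and the key value are assumptions for illustration only; practical schemes insert many more key gates and must also withstand known attacks such as SAT-based key recovery.

```python
from itertools import product


def original(a: int, b: int, c: int) -> int:
    # Hypothetical function being protected: (a AND b) OR c
    return (a & b) | c


def locked(a: int, b: int, c: int, k0: int, k1: int) -> int:
    # Key gate 1: XOR on an internal wire, transparent only when k0 == 0.
    n1 = (a & b) ^ k0
    # Key gate 2: XNOR on the output, transparent only when k1 == 1.
    return 1 - ((n1 | c) ^ k1)


def key_is_correct(k0: int, k1: int) -> bool:
    """Exhaustively compare the locked circuit against the original."""
    return all(
        locked(a, b, c, k0, k1) == original(a, b, c)
        for a, b, c in product((0, 1), repeat=3)
    )


CORRECT_KEY = (0, 1)  # hypothetical secret key, stored on chip in tamper-resistant memory
```

Every wrong key corrupts the output for at least one input pattern, which is what makes an unlocked copy of the netlist functionally useless to someone without the key.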

Furthermore, I'm familiar with hardware security modules (HSMs) and their integration into designs for enhanced security. These can be used for key management and cryptographic operations. In the context of FPGA designs, bitstream encryption and secure configuration methods are crucial to prevent unauthorized reprogramming. The specific techniques used are tailored to the security requirements and constraints of each project, and often involve close collaboration with security experts.

Q 25. Explain your experience with different design verification methodologies (e.g., UVM).

I have extensive experience with various design verification methodologies, with a strong focus on Universal Verification Methodology (UVM). UVM is a standardized framework that significantly improves the efficiency and reusability of verification components. I've used UVM to create robust verification environments for complex designs, including SoC verification. UVM’s key features, such as transaction-level modeling (TLM) interfaces, the factory pattern, and phase-based execution, enable efficient verification of complex interfaces and protocols.

Beyond UVM, I'm also experienced with other techniques like functional verification using SystemVerilog assertions (SVA), formal verification using tools like Jasper, and simulation-based verification using SystemVerilog testbenches. The choice of methodology often depends on factors like design complexity, verification budget, and time constraints. For instance, for complex protocols like PCIe, a combination of UVM-based simulation and formal verification might be ideal to ensure thorough verification.
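The scoreboard concept that UVM environments rely on (predict expected results with a reference model, then compare them with what a monitor observes at the DUT outputs) is language-independent. The Python analogue below is not UVM code; the 8-bit adder "DUT", its width, and the deliberately injected saturation bug are hypothetical, chosen only to show how a scoreboard flags mismatches.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque, Iterable, List, Tuple

WIDTH = 8
MASK = (1 << WIDTH) - 1


def reference_model(a: int, b: int) -> int:
    # Golden model: 8-bit wrap-around addition.
    return (a + b) & MASK


def buggy_dut(a: int, b: int) -> int:
    # Stand-in for the design under test, with a deliberate bug:
    # it saturates on overflow instead of wrapping.
    return min(a + b, MASK)


@dataclass
class Scoreboard:
    expected: Deque[int] = field(default_factory=deque)
    mismatches: List[Tuple[int, int]] = field(default_factory=list)

    def write_expected(self, value: int) -> None:
        # Predictions derived from the stimulus (input-monitor side).
        self.expected.append(value)

    def write_actual(self, value: int) -> None:
        # Observed DUT outputs, compared in order (output-monitor side).
        exp = self.expected.popleft()
        if exp != value:
            self.mismatches.append((exp, value))


def run(dut: Callable[[int, int], int], stimulus: Iterable[Tuple[int, int]]) -> Scoreboard:
    sb = Scoreboard()
    for a, b in stimulus:
        sb.write_expected(reference_model(a, b))
        sb.write_actual(dut(a, b))
    return sb
```

Running the buggy DUT with stimulus that includes overflow cases, such as (200, 100), records (expected, actual) pairs for every failing transaction, while small operands pass silently. This is why directed corner cases or constrained-random stimulus matter as much as the checker itself.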

Example: In UVM, a common approach involves creating a testbench with a test sequence, driver, monitor, scoreboard, and environment components interacting via TLM.

Q 26. Describe your experience with low-power design techniques.

Low-power design is a critical aspect of modern FPGA and ASIC design. My experience encompasses several techniques, from architectural level optimizations to gate-level power reduction. At the architectural level, I focus on optimizing the design's clock frequency, reducing the amount of active circuitry, and using power gating to turn off inactive parts of the design. This often involves careful analysis of the design's power consumption using tools like Synopsys PrimeTime PX.

At the logic level, I use techniques such as multi-voltage operation (different supply voltages for different parts of the chip), low-power cell libraries, and optimized register transfer level (RTL) coding styles. I'm familiar with various power estimation and analysis methodologies, enabling accurate prediction and optimization of power consumption. In several projects, power was a key design constraint, and I reduced power consumption by 20-30% through careful application of these techniques. For instance, in a mobile device application, power reduction was essential, and I focused on clock gating, power gating, and low-power cell selection.
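Why voltage scaling, frequency reduction, and clock gating all pay off follows from the standard first-order model of dynamic (switching) power, P_dyn = α·C·V²·f, where α is the activity factor, C the switched capacitance, V the supply voltage, and f the clock frequency. The sketch below plugs in hypothetical numbers; it ignores leakage power, which is what power gating targets instead.

```python
def dynamic_power(alpha: float, cap_farads: float, vdd: float, freq_hz: float) -> float:
    """First-order switching power P = alpha * C * V^2 * f, in watts."""
    return alpha * cap_farads * vdd ** 2 * freq_hz


# Hypothetical block: 1 nF switched capacitance, 15% activity, 1.0 V, 500 MHz.
base = dynamic_power(0.15, 1e-9, 1.0, 500e6)    # about 75 mW

# Lowering the supply to 0.9 V scales power by 0.9^2 = 0.81 (a 19% saving).
low_v = dynamic_power(0.15, 1e-9, 0.9, 500e6)

# Clock gating idle registers lowers the effective activity, e.g. 0.15 -> 0.10.
gated = dynamic_power(0.10, 1e-9, 1.0, 500e6)
```

Because voltage enters squared, a 10% supply reduction saves more than a 10% frequency reduction, although a lower supply also slows the logic and may force a lower f.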

Q 27. How familiar are you with different testing methodologies for FPGA/ASICs?

My familiarity with testing methodologies for FPGAs and ASICs includes both simulation-based testing and hardware-based testing. Simulation-based testing uses techniques like functional verification (as discussed earlier with UVM) to identify design flaws before manufacturing. For FPGAs, I'm experienced with in-system testing using JTAG (Joint Test Action Group) interfaces, allowing me to test and debug the design directly on the target hardware. This is particularly important for identifying timing-related issues and verifying the design's behavior in the target environment.
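In-system JTAG access is driven by the IEEE 1149.1 TAP controller, a 16-state machine that advances on each TCK rising edge according to the value of TMS. The table-driven Python model below follows that state diagram; the dictionary and helper names are hypothetical. It also demonstrates a useful property: holding TMS high for five clocks returns the controller to Test-Logic-Reset from any state.

```python
# Next-state table: state -> (next state if TMS = 0, next state if TMS = 1)
TAP_NEXT = {
    "TEST_LOGIC_RESET": ("RUN_TEST_IDLE", "TEST_LOGIC_RESET"),
    "RUN_TEST_IDLE":    ("RUN_TEST_IDLE", "SELECT_DR_SCAN"),
    "SELECT_DR_SCAN":   ("CAPTURE_DR", "SELECT_IR_SCAN"),
    "CAPTURE_DR":       ("SHIFT_DR", "EXIT1_DR"),
    "SHIFT_DR":         ("SHIFT_DR", "EXIT1_DR"),
    "EXIT1_DR":         ("PAUSE_DR", "UPDATE_DR"),
    "PAUSE_DR":         ("PAUSE_DR", "EXIT2_DR"),
    "EXIT2_DR":         ("SHIFT_DR", "UPDATE_DR"),
    "UPDATE_DR":        ("RUN_TEST_IDLE", "SELECT_DR_SCAN"),
    "SELECT_IR_SCAN":   ("CAPTURE_IR", "TEST_LOGIC_RESET"),
    "CAPTURE_IR":       ("SHIFT_IR", "EXIT1_IR"),
    "SHIFT_IR":         ("SHIFT_IR", "EXIT1_IR"),
    "EXIT1_IR":         ("PAUSE_IR", "UPDATE_IR"),
    "PAUSE_IR":         ("PAUSE_IR", "EXIT2_IR"),
    "EXIT2_IR":         ("SHIFT_IR", "UPDATE_IR"),
    "UPDATE_IR":        ("RUN_TEST_IDLE", "SELECT_DR_SCAN"),
}


def clock_tms(state: str, tms_bits) -> str:
    """Apply a sequence of TMS values (one per TCK edge); return the final state."""
    for tms in tms_bits:
        state = TAP_NEXT[state][tms]
    return state


# From Run-Test/Idle, TMS = 1, 0, 0 walks Select-DR-Scan -> Capture-DR -> Shift-DR.
print(clock_tms("RUN_TEST_IDLE", [1, 0, 0]))  # SHIFT_DR
```

A debug probe uses exactly these TMS sequences to load an instruction into the IR and then shift data through the selected data register.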

For ASICs, hardware-based testing typically involves creating dedicated test structures in the chip itself, such as boundary scan and built-in self-test (BIST) circuits. These enable comprehensive testing at the wafer level and post-packaging. I'm familiar with various fault models and the use of Automatic Test Pattern Generation (ATPG) tools to create efficient test vectors. Furthermore, I'm well-versed in different test methodologies, including scan testing, memory BIST, and embedded test compression to reduce the test time and cost.
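Memory BIST engines commonly run March algorithms in hardware. The Python sketch below applies March C-, ⇕(w0); ⇑(r0,w1); ⇑(r1,w0); ⇓(r0,w1); ⇓(r1,w0); ⇕(r0), to a simulated bit-oriented memory. The memory model and the stuck-at-1 cell injected for the demonstration are hypothetical; a real MBIST controller would implement the same sequence as a small state machine next to the memory.

```python
from typing import List, Optional


class FaultyMemory:
    """Bit-oriented memory model with an optional stuck-at-1 cell (hypothetical)."""

    def __init__(self, size: int, stuck_at_1: Optional[int] = None):
        self.cells = [0] * size
        self.stuck_at_1 = stuck_at_1

    def write(self, addr: int, bit: int) -> None:
        self.cells[addr] = 1 if addr == self.stuck_at_1 else bit

    def read(self, addr: int) -> int:
        return self.cells[addr]


def march_c_minus(mem: FaultyMemory) -> List[int]:
    """Run March C- and return the sorted addresses where any read mismatched."""
    size = len(mem.cells)
    up = range(size)
    down = range(size - 1, -1, -1)
    failures = set()
    # Each element: (address order, operations applied at every address).
    # The first and last elements may use either order; ascending is used here.
    elements = [
        (up,   [("w", 0)]),
        (up,   [("r", 0), ("w", 1)]),
        (up,   [("r", 1), ("w", 0)]),
        (down, [("r", 0), ("w", 1)]),
        (down, [("r", 1), ("w", 0)]),
        (up,   [("r", 0)]),
    ]
    for order, ops in elements:
        for addr in order:
            for op, bit in ops:
                if op == "w":
                    mem.write(addr, bit)
                elif mem.read(addr) != bit:
                    failures.add(addr)
    return sorted(failures)
```

A fault-free memory returns an empty list, while the injected stuck-at-1 cell fails its first "read 0" and is reported by address.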

Q 28. What is your experience with high-speed serial interfaces (e.g., PCIe, SerDes)?

I have significant experience with high-speed serial interfaces, specifically PCIe and the SerDes (Serializer/Deserializer) blocks at their physical layer. These interfaces are crucial for achieving high bandwidth and low latency in modern systems. My experience covers all layers of the design, from the physical layer (signal integrity, equalization, and jitter) to the protocol layer (implementing the PCIe protocol stack). I'm adept at using advanced techniques to meet the stringent requirements of these high-speed interfaces, such as channel equalization, clock and data recovery (CDR), and signal integrity analysis using channel simulators and electromagnetic field solvers.
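To put "high bandwidth" into numbers: PCIe Gen1 and Gen2 signal at 2.5 and 5 GT/s per lane with 8b/10b encoding, while Gen3 and Gen4 signal at 8 and 16 GT/s with 128b/130b encoding. Raw bandwidth per direction is therefore the transfer rate times the encoding efficiency times the lane count, divided by 8 bits per byte. This back-of-the-envelope helper (its name is hypothetical) accounts for line encoding only, not packet and protocol overhead such as TLP headers, DLLPs, and flow control, so real throughput is lower.

```python
# Per-generation line rate (GT/s) and encoding efficiency (payload bits / line bits).
PCIE_GENS = {
    1: (2.5, 8 / 10),      # 8b/10b
    2: (5.0, 8 / 10),      # 8b/10b
    3: (8.0, 128 / 130),   # 128b/130b
    4: (16.0, 128 / 130),  # 128b/130b
}


def pcie_raw_gbytes_per_s(gen: int, lanes: int) -> float:
    """Raw bandwidth in GB/s per direction, after line encoding only."""
    rate_gt_s, efficiency = PCIE_GENS[gen]
    return rate_gt_s * efficiency * lanes / 8


# Gen4 x1 is about 1.97 GB/s per direction; Gen4 x16 is about 31.5 GB/s.
```

The jump from 8b/10b (20% overhead) to 128b/130b (about 1.5% overhead) is why Gen3 nearly doubled usable bandwidth over Gen2 despite a smaller increase in line rate.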

A key project involved designing a PCIe Gen4 interface for a high-performance data acquisition system. This required careful management of signal integrity issues such as attenuation, reflections, and crosstalk. The design incorporated advanced equalization techniques to compensate for channel impairments, resulting in a robust and reliable interface. Understanding and optimizing critical parameters like eye diagrams, bit error rates, and jitter is crucial for successful high-speed interface implementation. I’ve also worked with various SerDes technologies, such as those used in optical communication systems, integrating them into FPGA and ASIC designs.
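Bit error rate is typically measured by transmitting a pseudo-random bit sequence (PRBS) and comparing the received bits against a locally regenerated copy. The sketch below uses PRBS7 from a 7-bit LFSR with feedback from register bits 6 and 5 (the common x^7 + x^6 + 1 form, period 127). The injected error positions stand in for a noisy channel and are hypothetical, and a real checker would first lock its generator to the incoming sequence rather than assume alignment.

```python
from itertools import islice


def prbs7(seed: int = 0x7F):
    """Yield PRBS7 bits from a 7-bit Fibonacci LFSR (taps at bits 6 and 5)."""
    state = seed & 0x7F
    if state == 0:
        raise ValueError("seed must be non-zero")  # all-zero state never leaves zero
    while True:
        new_bit = ((state >> 6) ^ (state >> 5)) & 1
        state = ((state << 1) | new_bit) & 0x7F
        yield new_bit


def measure_ber(received, seed: int = 0x7F) -> float:
    """Compare received bits against an aligned local PRBS7 reference."""
    reference = prbs7(seed)
    errors = sum(r != e for r, e in zip(received, reference))
    return errors / len(received)


tx = list(islice(prbs7(), 1270))   # ten PRBS7 periods
rx = tx.copy()
for pos in (10, 500, 900):         # hypothetical bit errors from the channel
    rx[pos] ^= 1
ber = measure_ber(rx)              # 3 errors in 1270 bits
```

In practice, confidently demonstrating a BER around 1e-12 requires on the order of 1e12 or more error-free bits, which is why such tests run in hardware checkers rather than in simulation.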

Key Topics to Learn for FPGA and ASIC Design Interview

- Digital Logic Design Fundamentals: Mastering Boolean algebra, logic gates, combinational and sequential circuits is foundational. Understand how these translate into practical hardware implementations.

- HDL (VHDL/Verilog): Gain proficiency in writing, simulating, and synthesizing HDL code. Practice designing modular and reusable code for efficient designs.

- FPGA Architecture and Implementation: Understand the internal architecture of FPGAs, including lookup tables (LUTs), flip-flops, and routing resources. Learn about place and route tools and optimization techniques.

- ASIC Design Flow: Familiarize yourself with the entire ASIC design flow, from specification to fabrication, including synthesis, static timing analysis (STA), and physical design.

- Timing Analysis and Constraints: Develop a strong understanding of timing closure and the techniques used to meet timing requirements in both FPGA and ASIC designs. Learn about clock domain crossing (CDC) considerations.

- Verification Methodologies: Understand different verification techniques, including simulation, formal verification, and emulation. Know how to write effective testbenches and debug complex designs.

- Memory Architectures: Gain knowledge of different memory types (SRAM, DRAM, Flash) and their applications in FPGA and ASIC designs. Understand memory controllers and interface protocols.

- High-Level Synthesis (HLS): Explore HLS tools and their capabilities for accelerating the design process. Understand the trade-offs between high-level design and low-level implementation details.

- Power Optimization Techniques: Learn about power consumption in digital circuits and various techniques to minimize power dissipation in FPGA and ASIC designs.

- Specific Application Domains (Optional): Depending on the job description, familiarize yourself with relevant application domains, such as high-speed data processing, image processing, or machine learning acceleration.
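For the timing analysis topic, it helps to be able to work a setup and hold check by hand. The sketch below uses the usual single-cycle, flop-to-flop formulas with hypothetical delay values. It assumes clock skew is defined as capture-clock arrival minus launch-clock arrival, uses one clock-to-Q value for both checks, and ignores clock uncertainty and on-chip variation, all of which a real STA tool would include.

```python
from dataclasses import dataclass


@dataclass
class Path:
    clk_to_q: float    # launch flop clock-to-Q delay (ns)
    logic_max: float   # slowest combinational delay between the flops (ns)
    logic_min: float   # fastest combinational delay between the flops (ns)
    setup: float       # capture flop setup time (ns)
    hold: float        # capture flop hold time (ns)
    skew: float = 0.0  # capture clock arrival minus launch clock arrival (ns)


def setup_slack(p: Path, period: float) -> float:
    # Data must arrive a setup time before the next capturing edge.
    required = period + p.skew - p.setup
    arrival = p.clk_to_q + p.logic_max
    return required - arrival


def hold_slack(p: Path) -> float:
    # Data must stay stable until a hold time after the same capturing edge.
    arrival = p.clk_to_q + p.logic_min
    required = p.hold + p.skew
    return arrival - required


# Hypothetical path checked at 250 MHz (4 ns period).
path = Path(clk_to_q=0.3, logic_max=3.2, logic_min=0.4, setup=0.2, hold=0.1, skew=0.1)
```

Here setup slack is about 0.4 ns and hold slack about 0.5 ns. Positive skew helps setup but eats into hold, which is why fixing one check by adjusting the clock tree can break the other.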

Next Steps

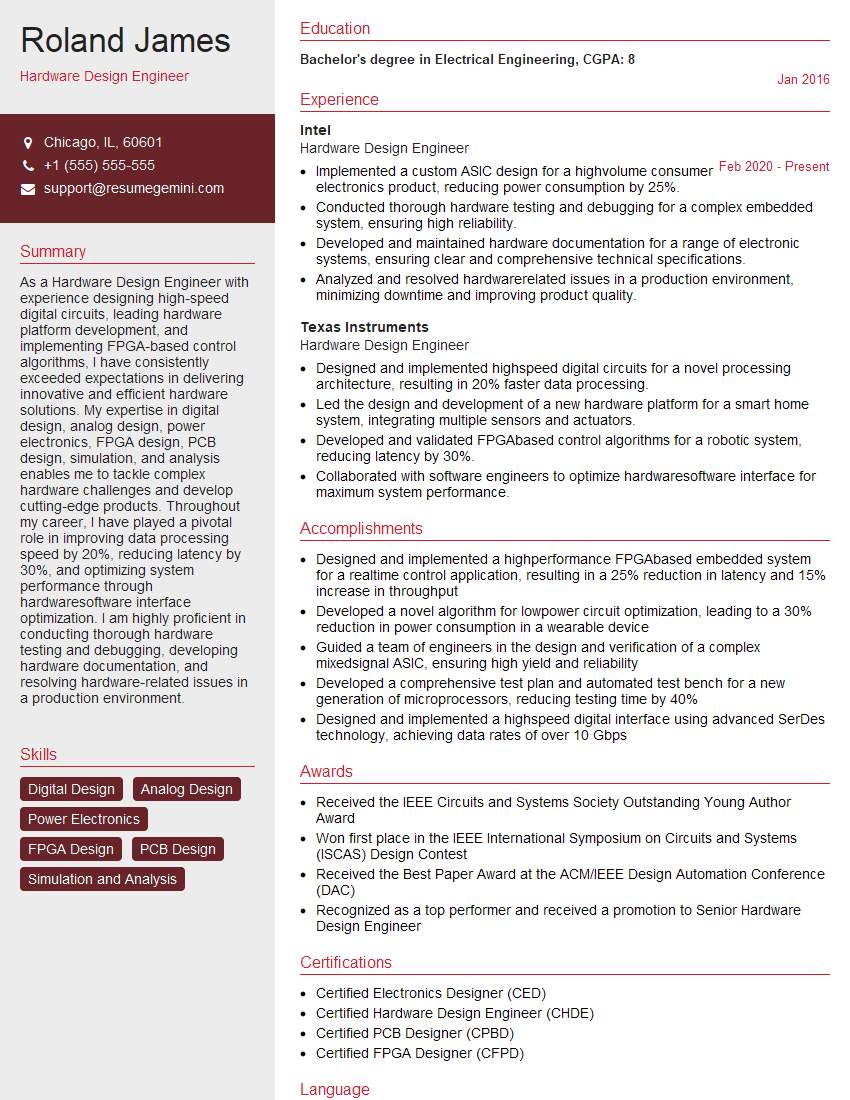

Mastering FPGA and ASIC design opens doors to exciting and rewarding careers in cutting-edge technology. Companies highly value professionals with these skills, offering competitive salaries and growth opportunities. To maximize your job prospects, it's crucial to have an ATS-friendly resume that showcases your expertise effectively. ResumeGemini can help you create a professional and impactful resume that highlights your skills and experience. They offer examples of resumes tailored to FPGA and ASIC design, ensuring your application stands out. Invest time in crafting a strong resume; it's your first impression and a key to unlocking your career potential.
