Interviews are opportunities to demonstrate your expertise, and this guide is here to help you shine. Explore the essential Music Production and Engineering interview questions that employers frequently ask, paired with strategies for crafting responses that set you apart from the competition.

Questions Asked in Music Production and Engineering Interview

Q 1. Explain the difference between mixing and mastering.

Mixing and mastering are both crucial post-production stages, but they serve distinct purposes. Mixing is like assembling a puzzle – you’re taking individual tracks (vocals, guitars, drums, etc.) and arranging them in the stereo field to create a balanced and cohesive song. This involves adjusting levels, EQ, compression, reverb, and other effects to shape the sound of each instrument and vocal, ensuring clarity and a pleasing sonic landscape. Mastering, on the other hand, is more like polishing a finished sculpture. It’s the final stage where the entire mixed track is optimized for playback across various platforms. A mastering engineer focuses on overall loudness, dynamic range, stereo imaging, and frequency balance to ensure the track translates well on different systems (car stereos, headphones, large speaker systems).

Think of it this way: Mixing is about creating the best possible sound *within* the track, while mastering is about creating the best possible sound *of* the entire track, preparing it for the wider world.

Q 2. Describe your experience with various Digital Audio Workstations (DAWs).

I’m proficient in several DAWs, including Pro Tools, Logic Pro X, and Ableton Live. My experience with Pro Tools spans over a decade, working on everything from large-scale orchestral projects to intimate singer-songwriter recordings. Its extensive plugin support and powerful editing capabilities are unmatched for many tasks. Logic Pro X’s intuitive interface and impressive built-in instrument library make it a go-to for quick prototyping and composing. Ableton Live’s session view and flexible workflow are excellent for experimental music and live performance setups, perfect for electronic music production.

In each DAW, my expertise extends beyond basic operation to advanced techniques like automation, MIDI editing, and efficient workflow management. I adapt my approach to the specific project requirements and the strengths of each DAW, always prioritizing the best outcome for the music.

Q 3. What are your preferred plugins for compression, EQ, and reverb, and why?

My plugin choices depend heavily on the specific context, but I frequently reach for several reliable workhorses. For compression, I often use either the Waves CLA-76 for its punchy, vintage vibe or the FabFilter Pro-C for its precise and transparent control. The CLA-76 is great for adding warmth and character to vocals and drums, while the Pro-C allows for surgical precision when needed, excellent for controlling dynamics in a mix without losing the nuances of a performance.

For EQ, I rely heavily on the FabFilter Pro-Q 3 for its clarity and its dynamic EQ bands. Being able to target specific frequencies with surgical precision, and to have a band react only when a problem frequency gets too loud, helps me sculpt the sound and remove muddiness or harshness without sacrificing important harmonic content. For reverb, I frequently use ValhallaRoom for its natural-sounding spaces and its versatility. It can create anything from subtle ambience to massive, cavernous soundscapes.

Ultimately, plugin choice is subjective and depends on both the task and the sound you’re after. But I find that these plugins provide a solid foundation for most mixing scenarios, offering both versatility and reliability.

Q 4. How do you approach troubleshooting audio issues during a recording session?

Troubleshooting audio issues during a session is a critical skill. My approach is systematic and prioritizes identifying the root cause before applying solutions. I begin by checking the most obvious things: levels (are they too hot or too low?), cabling (are connections secure?), and interface settings (is the correct input/output selected?). Then, I carefully listen to identify the issue: is it a hum, crackle, low frequency rumble, or something else?

If it’s a hum, I’ll check grounding and eliminate any potential ground loops. Crackles often indicate a faulty cable or connector, which I’ll test and replace if needed. A low-frequency rumble usually points to mechanical or environmental noise, such as HVAC, traffic, or vibration travelling up a mic stand; a shock mount, repositioning the microphone, or engaging a high-pass filter typically deals with it. For more complex problems, I’ll work through a process of elimination: temporarily disconnecting components one by one to pinpoint the source. A digital multimeter can confirm cable faults with a continuity test, and monitoring on headphones while soloing each input helps isolate the source more quickly.

Documentation and careful organization are vital. Keeping track of every change and setting allows me to retrace my steps and eliminate uncertainty.

Q 5. Explain the concept of phase cancellation and how to avoid it.

Phase cancellation occurs when two identical sound waves are out of sync, resulting in a reduction or complete cancellation of the signal. Imagine two waves – one at the peak and the other at the trough. When they combine, they effectively negate each other, leading to a loss of sound energy and a thinner, less powerful overall sound.

This often happens when several microphones record the same source from different distances, because the sound reaches each microphone at a slightly different time. It can also occur when layered sounds occupy the same frequency range, such as a kick drum and a bass. To avoid it, place microphones deliberately: keep multiple mics on one source at matching distances, or follow the 3:1 rule, where the distance between two microphones is at least three times the distance from each microphone to its source. A correlation meter helps identify phase issues in the mix, and checking the mix in mono quickly reveals cancellation.

In practice, coincident stereo techniques such as XY, flipping the polarity on one channel (for example, a bottom snare mic), and time-aligning tracks in the DAW all significantly reduce the risk of phase cancellation.
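
As a rough illustration of the cancellation described above, the following Python sketch sums two copies of a 1 kHz tone, one delayed by half a cycle, and computes a simple correlation value like the one a correlation meter displays. The sample rate, tone frequency, and helper names are assumptions chosen for the example, not taken from any particular meter or DAW.

```python
import math

SAMPLE_RATE = 48_000  # samples per second (assumed for this sketch)


def sine(freq_hz, n_samples, delay_samples=0, polarity=1.0):
    """Return a sine wave, optionally delayed and/or polarity-inverted."""
    return [
        polarity * math.sin(2 * math.pi * freq_hz * (n - delay_samples) / SAMPLE_RATE)
        for n in range(n_samples)
    ]


def peak(signal):
    """Largest absolute sample value."""
    return max(abs(s) for s in signal)


def correlation(a, b):
    """Normalized correlation: +1 fully in phase, -1 fully out of phase."""
    dot = sum(x * y for x, y in zip(a, b))
    energy = math.sqrt(sum(x * x for x in a) * sum(y * y for y in b))
    return dot / energy if energy else 0.0


n = 4800  # 0.1 s of audio
close_mic = sine(1000, n)

# A second mic whose signal arrives 24 samples (0.5 ms, roughly 17 cm) later.
# At 1 kHz that is exactly half a cycle, so the two signals cancel when summed.
far_mic = sine(1000, n, delay_samples=24)
summed = [x + y for x, y in zip(close_mic, far_mic)]

print("single mic peak:", round(peak(close_mic), 3))
print("summed peak:", round(peak(summed), 3))
print("correlation:", round(correlation(close_mic, far_mic), 3))
```

The summed peak comes out close to zero and the correlation close to -1. Real signals contain many frequencies, so a fixed delay cancels some of them and reinforces others (comb filtering) rather than silencing the track entirely.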

Q 6. Describe your workflow for mixing a pop song.

My workflow for mixing a pop song is iterative and involves several key stages. It begins with gain staging: setting appropriate levels for each track to prevent clipping and ensure headroom. Then I focus on foundational elements: drums, bass, and vocals. I’ll EQ these to ensure clarity and cut out any unwanted frequencies.

Next, I address other instrumental tracks, adjusting their EQ, compression, and reverb to create a cohesive sound. I’ll pay close attention to the stereo field, making sure instruments aren’t masking each other or creating unwanted muddiness. Throughout this process, I use automation to dynamically shape the sound, adjusting parameters like volume, EQ, and effects over time to ensure excitement and variation.

Once the basic mix is balanced, I’ll introduce effects such as delay, chorus, and flanger for added texture and interest. I’ll then refine the overall mix, making subtle adjustments to the EQ, compression, and levels to achieve a polished and radio-ready sound. Regular listening on different playback systems and checking the stereo width are crucial steps throughout the process. The whole process is usually iterative, involving multiple passes through the mix, each time refining and enhancing the sonic image until the result meets my and the client’s expectations.
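
The gain-staging step at the start of this workflow comes down to simple decibel arithmetic. The sketch below measures a track's peak level in dBFS and works out the gain change needed to reach a target peak. The -12 dBFS target and the function names are assumptions for illustration; engineers use different targets depending on the project.

```python
import math


def peak_dbfs(samples):
    """Peak level in dBFS for samples normalized to the range -1.0..1.0."""
    peak = max(abs(s) for s in samples)
    return 20 * math.log10(peak) if peak > 0 else float("-inf")


def gain_to_target(samples, target_dbfs=-12.0):
    """Gain in dB that moves the track's peak to the target level."""
    return target_dbfs - peak_dbfs(samples)


def db_to_linear(gain_db):
    """Convert a gain in dB to a linear multiplier."""
    return 10 ** (gain_db / 20)


# Hypothetical track whose loudest sample sits at half of full scale.
track = [0.1, -0.5, 0.25, 0.05]
change = gain_to_target(track)

print(f"peak: {peak_dbfs(track):.1f} dBFS")
print(f"trim needed: {change:.1f} dB (x{db_to_linear(change):.2f})")
```

Peak level is only one view of a signal. Many engineers also watch RMS or loudness meters, which this sketch does not cover.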

Q 7. How do you handle difficult clients or creative disagreements?

Handling difficult clients or creative disagreements requires diplomacy and strong communication skills. My first step is to listen actively and try to understand their perspective. I will try to find common ground and identify the core concerns. Often, disagreements stem from a misunderstanding or differing artistic visions.

I’ll present different options or approaches, explaining the technical reasoning behind my choices. I might offer A/B comparisons of different mixes to illustrate the impact of specific decisions. Ultimately, I aim for a collaborative approach. It’s a shared creative process; a clear and open line of communication helps to navigate and resolve any conflicting ideas effectively. If there are irreconcilable differences, then it is important to set clear expectations and boundaries to ensure a satisfactory resolution, possibly even recommending a mediator if the differences are substantial. Remember, maintaining a professional and respectful demeanor throughout is key.

Q 8. What is your experience with microphone selection and placement?

Microphone selection and placement are crucial for capturing high-quality audio. The choice of microphone depends heavily on the source and desired sound. For example, a large-diaphragm condenser microphone excels at capturing smooth, detailed vocals, while a dynamic microphone is more robust and suitable for loud instruments like drums or amplified guitars. Placement is equally important. For vocals, a cardioid microphone placed a few inches from the mouth, slightly off-axis, often yields the best results: the angle reduces plosives and sibilance, while backing off a little tames the proximity effect (the bass boost that directional mics produce at close range). For acoustic guitar, experimenting with placement is key: close miking emphasizes detail, while more distant placement provides a more natural room sound. I typically use a combination of techniques, such as stereo miking (using two mics to capture a wider soundstage) or employing multiple microphones on a single source (e.g., a close mic on a snare drum for attack plus a room mic for ambience).

Consider this scenario: I’m recording a vocalist. I might start with a Neumann U 87 (a large-diaphragm condenser) positioned about 6-12 inches from the singer’s mouth, angled slightly away. I’d then listen carefully, adjusting placement until the sound is full and natural, without harshness or excessive bass.

Q 9. Explain your understanding of room acoustics and their impact on recording quality.

Room acoustics significantly affect recording quality. Uncontrolled reflections and resonances can muddy the sound, making it difficult to achieve a clean, transparent mix. A poorly treated room might exhibit standing waves (areas of constructive and destructive interference) at certain frequencies, resulting in uneven tonal balance. This is why professional studios often employ acoustic treatment, such as bass traps (to absorb low-frequency sounds) and diffusion panels (to scatter sound waves) to control reflections and create a more neutral listening environment. Recording in a naturally reverberant space can sometimes be desirable, however, for creating ambience and depth, but it requires careful control and processing in post-production.

Imagine recording a piano in a large, untreated hall. The resulting recording would likely have excessive reverb and a lack of clarity. In contrast, recording in a well-treated studio would provide a more balanced and defined sound. Acoustic treatment helps prevent coloration and ensures that the microphones capture the essence of the instrument rather than the characteristics of the room.
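
The standing waves mentioned above can be estimated with a well-known formula for axial room modes, f = n * c / (2 * L), where c is the speed of sound and L is one room dimension. The sketch below applies it to a hypothetical 5 m x 4 m x 2.5 m room; the dimensions are assumptions for the example, and real rooms also have tangential and oblique modes that this does not cover.

```python
SPEED_OF_SOUND = 343.0  # m/s in air at about 20 degrees C


def axial_modes(dimension_m, count=4):
    """First few axial mode frequencies (Hz) along one room dimension."""
    return [n * SPEED_OF_SOUND / (2 * dimension_m) for n in range(1, count + 1)]


# Hypothetical room dimensions in metres.
room = {"length": 5.0, "width": 4.0, "height": 2.5}

for name, size in room.items():
    freqs = ", ".join(f"{f:.1f}" for f in axial_modes(size))
    print(f"{name} ({size} m): {freqs} Hz")
```

Frequencies that appear for more than one dimension, or that cluster closely together, are the ones most likely to need bass trapping.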

Q 10. How do you monitor your mixes to ensure accurate translation across various playback systems?

Accurate mix translation across different playback systems is paramount. I achieve this through a multi-faceted approach. First, I monitor my mixes on multiple playback systems: near-field monitors (for detailed analysis), headphones (to catch subtle nuances), and even car audio and smaller Bluetooth speakers (to assess the mix’s behavior in less ideal environments). Second, I ensure a well-calibrated monitoring environment in my studio, minimizing room anomalies through careful acoustic treatment. Third, I often reference commercially released tracks in the same genre, level-matched to my mix, to gain a comparative perspective on dynamics and overall sonic balance. Finally, I employ techniques like spectral analysis (using a spectrum analyzer to identify frequency imbalances, then correcting them with EQ) and careful gain staging (maintaining appropriate signal levels throughout the mix) to create a more balanced and robust mix.

For example, I might compare my mix to a similarly produced track on several systems, checking how the bass frequencies translate on different speakers and how the overall clarity holds up on small speakers, addressing any issues to improve the mix’s ability to translate effectively.

Q 11. What are your preferred methods for noise reduction and audio restoration?

Noise reduction and audio restoration are essential for creating professional-sounding recordings. For noise reduction, I use both simple and advanced tools. A noise gate, whether a hardware unit or a plugin, attenuates the signal whenever it falls below a threshold. This hides background sounds such as air conditioning or refrigerator hum between phrases, but it does not remove them while the source is sounding. Spectral noise reduction tools (e.g., iZotope RX) go further: they analyze the noise and reduce it throughout the recording with minimal effect on the desired audio. Audio restoration involves repairing or enhancing damaged audio, often requiring more sophisticated techniques. This might include declicking (removing pops and clicks), decrackling (reducing static and crackle), and spectral repair (attenuating or resynthesizing damaged regions of the spectrogram). Choosing the right tools depends on the nature of the noise or damage. I always prefer non-destructive editing whenever possible, using plugins to manipulate the signal without permanently altering the original audio file.

Imagine a vocal recording with a consistent hum. I might first use a gate to remove the hum during silent sections. Then, if any residual hum remains, I’d employ a spectral noise reduction plugin to carefully address the issue without affecting the quality of the vocals.
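
To make the gating step concrete, here is a deliberately simplified noise gate in Python. It mutes any block of samples whose RMS level falls below a threshold. The block size, the -50 dBFS threshold, and the function names are assumptions for the example; real gates add attack, hold, and release times so the gain changes smoothly instead of switching abruptly.

```python
import math


def rms_db(block):
    """RMS level of a block in dBFS (samples normalized to -1.0..1.0)."""
    if not block:
        return float("-inf")
    rms = math.sqrt(sum(s * s for s in block) / len(block))
    return 20 * math.log10(rms) if rms > 0 else float("-inf")


def gate(samples, threshold_db=-50.0, block_size=256):
    """Mute every block whose RMS level is below the threshold."""
    out = []
    for start in range(0, len(samples), block_size):
        block = samples[start:start + block_size]
        if rms_db(block) < threshold_db:
            out.extend([0.0] * len(block))  # gate closed: silence the block
        else:
            out.extend(block)               # gate open: pass audio unchanged
    return out


# Hypothetical signal: one block of low-level noise, then one block of "vocal".
noise_only = [0.001] * 256   # about -60 dBFS
vocal = [0.5] * 256          # about -6 dBFS
gated = gate(noise_only + vocal)

print("noise block after gate:", max(abs(s) for s in gated[:256]))
print("vocal block after gate:", max(abs(s) for s in gated[256:]))
```

The vocal block passes through untouched, including any hum underneath it, which is why a gate is usually followed by spectral noise reduction when the noise is audible during the performance.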

Q 12. Describe your experience with different types of audio effects processing (e.g., delay, chorus, flanger).

I have extensive experience with various audio effects, including delay, chorus, and flanger. Delay creates echoes or repetitions of the audio signal. The parameters controlling delay are delay time, feedback, and mix. Chorus creates a thicker, richer sound by adding slightly detuned copies of the original signal. It’s used to add fullness and width. Flanger creates a sweeping, jet-like effect by modulating the delay time of a delayed signal, often resulting in dramatic and interesting sounds. I choose the appropriate effects based on the desired musical style and emotional impact. For example, a subtle delay can add depth to vocals, while a more pronounced delay effect might be used for creating atmospheric textures. A chorus effect can add richness to a guitar part, and a flanger effect might be used to create a psychedelic effect or textural shifts.

Think of a lead guitar solo. Adding a short, slightly delayed repeat can enhance the melody. Alternatively, a chorus effect adds fullness and width, blending with the mix better. A carefully-placed flanger could make the solo sound more intense and experimental.
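
The three delay parameters named above (delay time, feedback, and mix) map directly onto a few lines of code. The following is a minimal feedback delay sketch in Python, with delay time expressed in samples; the function name and default values are assumptions for illustration and do not reflect any specific plugin.

```python
def feedback_delay(dry, delay_samples, feedback=0.4, mix=0.3):
    """Apply a simple feedback delay to a list of samples.

    delay_samples: delay time in samples (must be at least 1)
    feedback:      fraction of the delayed signal fed back into the delay line
    mix:           0.0 = fully dry, 1.0 = fully wet
    """
    if delay_samples < 1:
        raise ValueError("delay_samples must be at least 1")
    buffer = [0.0] * delay_samples  # circular delay line
    out = []
    for i, x in enumerate(dry):
        idx = i % delay_samples
        delayed = buffer[idx]                 # sample written delay_samples ago
        buffer[idx] = x + delayed * feedback  # write input plus feedback
        out.append((1 - mix) * x + mix * delayed)
    return out


# A single click followed by silence shows the decaying repeats.
click = [1.0] + [0.0] * 9
print(feedback_delay(click, delay_samples=3, feedback=0.5, mix=0.5))
```

Each repeat is quieter than the last by the feedback factor. Chorus and flanger build on the same delay line by modulating a very short delay time with a slow oscillator, which this sketch does not implement.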

Q 13. How familiar are you with different audio file formats (e.g., WAV, AIFF, MP3)?

I’m very familiar with various audio file formats, including WAV, AIFF, and MP3. WAV (Waveform Audio File Format) and AIFF (Audio Interchange File Format) typically store uncompressed PCM audio, so no audio data is discarded when the file is written or read. These are preferred for recording, mixing, mastering, and archiving. MP3 (MPEG Audio Layer III) is a lossy format: it compresses audio by discarding information judged less audible, resulting in a much smaller file but some loss of quality, especially at low bitrates. Its efficiency and broad device compatibility make it convenient for distribution. The choice of format depends on the intended use. WAV and AIFF are best for studio work where preserving audio quality is paramount, while MP3 suits situations where smaller file sizes matter. I work in WAV or AIFF during production and convert to MP3 for final delivery only if needed.

For example, I would store my original recordings as WAV files for maximum quality. I would then create MP3 versions for online distribution where needed, choosing a bitrate that balances quality and file size based on the intended use (e.g., 256-320 kbps for music, lower for spoken-word podcasts).
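
The size trade-off between formats is easy to estimate. The sketch below compares an uncompressed stereo 16-bit, 44.1 kHz file with constant-bitrate MP3 files of the same duration. It ignores file headers and metadata, and the 3.5-minute song length is an assumption for the example.

```python
def pcm_size_mb(seconds, sample_rate=44_100, bit_depth=16, channels=2):
    """Approximate size of uncompressed PCM audio in megabytes."""
    return seconds * sample_rate * bit_depth * channels / 8 / 1_000_000


def mp3_size_mb(seconds, bitrate_kbps):
    """Approximate size of a constant-bitrate MP3 in megabytes."""
    return seconds * bitrate_kbps * 1000 / 8 / 1_000_000


song_seconds = 210  # hypothetical 3.5-minute song

print(f"WAV 16-bit/44.1 kHz: {pcm_size_mb(song_seconds):.1f} MB")
for kbps in (320, 192, 128):
    print(f"MP3 {kbps} kbps: {mp3_size_mb(song_seconds, kbps):.1f} MB")
```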

Q 14. Explain your understanding of signal flow in a recording studio.

Understanding signal flow in a recording studio is fundamental. Signal flow describes the path an audio signal takes from its source to the final output. It typically starts with the instrument or voice, which is captured by a microphone. The microphone signal then passes through a preamplifier, which boosts the signal level and shapes its tonal character. From the preamp, the signal might go through an equalizer (EQ) for tonal adjustments, a compressor for dynamic control, and other effects processors like reverb or delay. After processing, the signal is routed to an audio interface, which converts the analog signal to a digital format for recording and processing. The signal is then recorded on a digital audio workstation (DAW), where further editing and mixing occurs. Finally, the mixed signal is sent to a master bus for final processing and mastering before being exported to various formats.

Imagine a simple recording of a vocal track: the singer’s voice is captured by a microphone, amplified by a preamp, and converted to digital by the audio interface. In the DAW, the recorded vocal passes through EQ, compressor, and reverb plugins, is routed to the DAW’s mixer, and is combined with other tracks. This chain of stages is the signal flow, and every link in it affects the final product.

Q 15. What is your experience with automated mixing and automation techniques?

Automation in mixing is crucial for efficiency and creative control. It involves using software to control various parameters over time, like fader levels, panning, effects sends, and even plugin parameters. Think of it as programming your mix to evolve dynamically throughout a song. I’m proficient in using automation lanes within DAWs (Digital Audio Workstations) like Pro Tools, Logic Pro X, and Ableton Live. For instance, I might automate a vocal’s volume to subtly duck under a guitar solo, creating space and sonic interest, or automate a reverb send to build intensity during a chorus.

My experience extends to both writing automation from scratch and using pre-made templates to quickly achieve certain effects. I find that understanding the underlying principles of sound design and mixing informs my automation choices greatly; automation is not just about moving faders but about crafting a musical narrative.

For example, on a recent project, I used automation to create a sweeping, cinematic effect on a string section. By subtly adjusting the panning and reverb of individual string instruments across the track, it created the illusion of movement and space. This approach not only saved time but also resulted in a richer, more dynamic performance than manually adjusting levels throughout the entire mix.
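
Under the hood, an automation lane is essentially a list of breakpoints that the DAW interpolates between during playback. The sketch below shows linear interpolation between breakpoints for a fader level in dB. The breakpoint format and function name are assumptions for illustration; DAWs also offer curved and stepped automation shapes.

```python
def automation_value(breakpoints, t):
    """Linearly interpolate an automation lane at time t (seconds).

    breakpoints: list of (time_seconds, value) pairs sorted by time,
                 with no two points sharing the same time.
    """
    if t <= breakpoints[0][0]:
        return breakpoints[0][1]
    for (t0, v0), (t1, v1) in zip(breakpoints, breakpoints[1:]):
        if t0 <= t <= t1:
            return v0 + (v1 - v0) * (t - t0) / (t1 - t0)
    return breakpoints[-1][1]  # hold the last value after the final point


# Hypothetical reverb-send ride: build over 4 seconds into a chorus, then hold.
reverb_send_db = [(0.0, -20.0), (4.0, -6.0), (8.0, -6.0)]

for second in (0, 1, 2, 3, 4, 6):
    print(f"{second} s: {automation_value(reverb_send_db, second):.1f} dB")
```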

Q 16. How do you ensure the quality and consistency of your work?

Maintaining quality and consistency is paramount. My approach involves a multi-faceted strategy. Firstly, I establish clear communication with the artist or client at the outset, defining the overall sonic goal and the desired stylistic approach. This ensures that everyone’s on the same page from the start.

Secondly, I employ meticulous workflow processes. This includes creating detailed session templates, implementing robust file management strategies, and saving incremental versions of each session so I can track changes and roll back if needed. I regularly check my work against reference tracks (professional recordings in a similar style) to ensure that I’m meeting industry standards.

Thirdly, I utilize quality control tools and techniques, such as gain staging and metering, throughout the entire process. For instance, careful gain staging minimizes clipping and noise, ensuring a professional-sounding mix. I utilize plugins for spectral analysis to catch any frequency clashes or muddiness in the mix. Finally, I always take a break from a project and listen back with fresh ears before finalizing my work. This helps catch any subtle errors or inconsistencies that might have been missed earlier.

Q 17. Describe your experience with MIDI and virtual instruments.

MIDI (Musical Instrument Digital Interface) and virtual instruments are integral to my workflow. I’m highly proficient in programming MIDI sequences, using them to create and control virtual instruments and drum machines. I’m familiar with a broad range of virtual instruments, including synthesizers, samplers, and orchestral libraries from companies like Native Instruments, Spitfire Audio, and EastWest.

My experience encompasses both using pre-made sounds and designing entirely custom sounds using synthesis techniques. I’m comfortable with subtractive synthesis, additive synthesis, wavetable synthesis, and FM (Frequency Modulation) synthesis, tailoring sounds to fit the specific musical context. For instance, I might design a custom bassline using subtractive synthesis to give it a specific punch and warmth, or program a complex drum beat using MIDI drum editor to lay down rhythms.

Furthermore, I use MIDI controllers and keyboards for more intuitive performance and composition. This enables a more expressive and spontaneous approach to creating musical parts. Working with MIDI allows for quick experimentation and iteration, enabling me to explore various musical ideas efficiently and effectively.
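
MIDI itself carries note numbers rather than audio, and a virtual instrument converts those numbers to pitch. In standard twelve-tone equal temperament, note 69 is A4, and each semitone multiplies the frequency by the twelfth root of two. A small sketch, with the function name and the 440 Hz default chosen for illustration:

```python
def midi_to_hz(note, a4_hz=440.0):
    """Frequency in Hz of a MIDI note number in equal temperament."""
    return a4_hz * 2 ** ((note - 69) / 12)


for name, note in (("A3", 57), ("C4", 60), ("A4", 69), ("A5", 81)):
    print(f"{name} (MIDI {note}): {midi_to_hz(note):.2f} Hz")
```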

Q 18. What are your preferred methods for creating a balanced and dynamic mix?

Creating a balanced and dynamic mix requires a comprehensive approach. I begin with careful gain staging and frequency balancing of individual tracks, ensuring that each instrument has its own space in the frequency spectrum without excessive muddiness or harshness. I utilize equalization (EQ) to sculpt the tone of each instrument, cutting unwanted frequencies and boosting desirable ones.

Next, I use compression to control dynamics: it turns down the loudest parts, and with make-up gain applied afterwards the quieter details come forward, which helps to improve the overall clarity and punch. Then, I add subtle stereo widening and panning to create a sense of space and depth. I rely heavily on referencing professional mixes in a similar genre to guide my decisions and ensure I’m achieving a professional sound.

Finally, I utilize mastering techniques, such as limiting and loudness maximization, to polish the mix and prepare it for distribution, ensuring it’s optimized for various playback systems. Throughout the entire process, I constantly monitor the mix on multiple playback systems, including studio monitors, headphones, and car speakers, to ensure that it translates well across different listening environments. The ultimate goal is to create a mix that is both sonically pleasing and commercially viable.
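
The compression step in this approach can be summarized by its static level curve: below the threshold the signal is unchanged, and above it every extra decibel of input yields only 1/ratio decibels of output. The sketch below shows that curve with optional make-up gain. The threshold, ratio, and function name are assumptions for the example, and attack and release behavior is left out.

```python
def compressed_level_db(input_db, threshold_db=-18.0, ratio=4.0, makeup_db=0.0):
    """Output level of a hard-knee compressor for a steady input level."""
    if input_db <= threshold_db:
        output_db = input_db
    else:
        output_db = threshold_db + (input_db - threshold_db) / ratio
    return output_db + makeup_db


quiet, loud = -30.0, -6.0  # hypothetical quiet and loud passages, in dBFS

print("before:", quiet, loud, "range:", loud - quiet, "dB")
out_quiet = compressed_level_db(quiet, makeup_db=3.0)
out_loud = compressed_level_db(loud, makeup_db=3.0)
print("after: ", out_quiet, out_loud, "range:", out_loud - out_quiet, "dB")
```

With these settings the loud passage is pulled down while the quiet passage rises with the make-up gain, so the gap between them narrows.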

Q 19. How do you manage large audio projects and maintain organization?

Managing large audio projects requires a structured approach. I use a combination of strategies to ensure organization and efficiency. Firstly, I create a detailed project folder structure, organizing files by type (audio, MIDI, video, etc.) and date. This makes it easy to find specific files when needed.

Secondly, I utilize the session management features within my DAW. This includes creating separate sessions for the various stages of the project (pre-production, tracking, mixing, mastering) and using track grouping and color-coding by instrument type (drums, bass, vocals, etc.) within each session to maintain clarity and reduce visual clutter.

Thirdly, I frequently save and backup my project files. I use external hard drives for backups and cloud storage to ensure that my work is protected from loss. For very large projects, I might use audio archiving software to consolidate my sessions and minimize storage space usage.
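
A folder structure like the one described can be created the same way for every project with a short script. The sketch below is one possible layout; the subfolder names and the date-prefixed project name are assumptions for illustration, not a standard.

```python
from pathlib import Path
import tempfile

# Hypothetical subfolders, organized by file type.
SUBFOLDERS = ["Audio", "MIDI", "Video", "Bounces", "Session Backups"]


def create_project_tree(root, project_name):
    """Create a project folder with one subfolder per file type."""
    project = Path(root) / project_name
    for name in SUBFOLDERS:
        (project / name).mkdir(parents=True, exist_ok=True)
    return project


# Demonstrated in a temporary directory so nothing is left on disk.
with tempfile.TemporaryDirectory() as tmp:
    project = create_project_tree(tmp, "2024-05-01_Example-Song")
    for folder in sorted(p.name for p in project.iterdir()):
        print(folder)
```

Because `exist_ok=True` is set, running the script again on an existing project does not raise an error or overwrite anything.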

Q 20. What is your experience with audio editing software?

I’m highly proficient in several industry-standard audio editing software applications. My primary DAW is Pro Tools, but I also have extensive experience with Logic Pro X, Ableton Live, and Studio One. My proficiency extends beyond basic editing functions; I’m adept at advanced techniques like audio restoration, noise reduction, time stretching, and pitch correction.

I regularly use plugins for specialized tasks, such as spectral editing, dynamic processing, and sound design. My experience encompasses both linear and non-linear editing workflows, allowing me to adapt to the specific requirements of each project. I’m comfortable working with various audio formats, including WAV, AIFF, MP3, and others.

I understand the nuances of different software and how their strengths can be leveraged in a project. For example, Pro Tools’ power for large projects and its robust features for post-production are perfectly matched to large-scale commercial tasks, while Ableton’s unique session view is very helpful for composing and live performance scenarios.

Q 21. Describe your experience working with various recording techniques (e.g., close miking, ambient recording).

My experience with recording techniques is extensive and spans various styles and approaches. Close miking is a technique where the microphone is placed very close to the sound source to capture a detailed and intimate sound with little room tone; it is useful for sources like vocals, acoustic guitar, or drums. For instance, close-miking a snare drum provides a focused, punchy sound, whereas a more distant ambient mic captures the resonance of the room, adding warmth and natural reverb. Blending the two is invaluable for capturing a realistic sound, particularly for acoustic instruments in a live setting.

Ambient recording, on the other hand, aims to capture the overall atmosphere of a space, incorporating natural reverberation and room tone. This might involve placing microphones strategically throughout a room, capturing the ambiance of a concert hall or the outdoors, adding spaciousness to a recording. I also utilize techniques such as stereo recording using XY and AB microphone techniques to capture a wide and immersive stereo image.

I understand how microphone choice greatly impacts the sound. For example, I might use a large-diaphragm condenser microphone for a smooth vocal recording, while using a dynamic microphone for louder instruments like a guitar amplifier to prevent overload. I carefully consider microphone placement, room acoustics, and signal processing to optimize sound quality, tailoring my recording approach to the specific characteristics of each instrument and the desired aesthetic of the project.

Q 22. How do you use EQ to shape the tone of an instrument?

EQ, or equalization, is a crucial tool for shaping the tone of an instrument by adjusting its frequency balance. Think of it like a sculptor refining a clay figure: you’re not changing what the instrument played, only reshaping the balance of what’s already there. We use EQ to boost or cut specific frequencies to highlight desirable characteristics or reduce unwanted ones.

For example, a muddy bass guitar might benefit from a cut in the low-mid frequencies (around 250-500Hz), which often cause muddiness. Conversely, a slightly dull snare drum might need a boost in the high-mid range (around 2-4kHz) to add some punch and clarity. I typically use parametric EQs, allowing precise control over the center frequency, gain (boost or cut), and Q (which sets the bandwidth) of each adjustment. A low Q value gives a wide band that affects a broad range of frequencies, whereas a high Q value gives a narrow band that targets a specific frequency. I always listen critically, making subtle adjustments until the instrument sits well in the mix and contributes to the overall sound.

Another example would be a vocal track. If there’s harsh sibilance (an ‘s’ sound that’s too bright), I might use a narrow cut around 5-8kHz, or a de-esser that acts only when the sibilance occurs, to smooth it out without affecting other frequencies. The process is iterative; I’ll often use multiple EQ stages to achieve a natural and refined sound.
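
The relationship between Q and bandwidth can be put in numbers. Using the common definition Q = center frequency / bandwidth, the sketch below converts a Q setting into a bandwidth in Hz and the band edges around the center frequency. Individual EQ plugins may define Q slightly differently for bell filters, so treat the figures as approximate; the function names are assumptions for the example.

```python
import math


def bandwidth_hz(center_hz, q):
    """Bandwidth in Hz for a given center frequency and Q."""
    return center_hz / q


def band_edges(center_hz, q):
    """Lower and upper band edges, placed geometrically around the center."""
    half = 1 / (2 * q)
    root = math.sqrt(1 + half * half)
    return center_hz * (root - half), center_hz * (root + half)


# A broad low-mid cut on bass versus a narrow cut for sibilance.
for label, center, q in (("bass mud cut", 350, 0.7), ("sibilance cut", 6500, 8.0)):
    low, high = band_edges(center, q)
    print(f"{label}: Q={q} -> {bandwidth_hz(center, q):.0f} Hz wide "
          f"({low:.0f} Hz to {high:.0f} Hz)")
```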

Q 23. What are your methods for optimizing audio for different playback environments (e.g., headphones, car stereo, home theater)?

Optimizing audio for different playback systems requires understanding their frequency responses and limitations. Headphones, for instance, often have a boosted bass response, while car stereos can lack high-frequency detail. Home theaters usually have a wider frequency range but can vary greatly in their sound quality.

My approach involves a combination of techniques. First, I ensure a balanced mix that sounds good on reference monitors – this is my primary baseline. Then, I utilize specialized plugins and techniques to tailor the sound. For example, I might use a low-cut filter to remove subsonic frequencies that can cause unnecessary strain on smaller speaker systems like those found in cars. Conversely, I may subtly boost high frequencies for playback systems known for lacking detail in the high-end. For headphones, I’ll often listen for harshness in the upper midrange and make necessary adjustments to ensure a smooth and comfortable listening experience. The process often involves multiple rounds of listening and tweaking on various playback systems. I’m also acutely aware of loudness and dynamics, adjusting the overall level and compression according to the playback environment.

Ultimately, it’s a balancing act between maintaining the artistic intent of the original mix and ensuring it translates well across different systems. It’s rarely about making drastic changes; instead, it’s about subtle adjustments that enhance the listening experience on various devices.

Q 24. Explain your experience with different types of microphones (e.g., dynamic, condenser, ribbon).

My experience encompasses a wide range of microphone types, each with its unique characteristics and applications. Dynamic microphones, like the Shure SM57, are robust, reliable, and handle high sound pressure levels well – making them ideal for loud instruments like snare drums and guitar amps. Their lower sensitivity means they’re less prone to picking up unwanted noise.

Condenser microphones, such as the Neumann U 87, are known for their detail and sensitivity. They capture a wider frequency range, often with a more transparent and detailed sound, making them perfect for recording vocals, acoustic instruments, and delicate sounds. However, they are more sensitive to handling noise and typically require phantom power or another external power supply.

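Ribbon microphones, exemplified by the Royer R-121, offer a unique, often warmer and smoother sound due to their different transduction method. They’re particularly well-suited for capturing the subtle nuances of instruments such as guitars and orchestral instruments. Ribbons are typically more fragile than other types: strong blasts of air can stretch the ribbon, and some passive designs can be damaged if phantom power reaches them through a faulty cable or patchbay. Modern designs such as the R-121 are rated for high SPLs, which is one reason they are popular on guitar amps.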

Choosing the right microphone is crucial. It depends entirely on the sound source, the desired tone, and the acoustic environment. For example, a dynamic mic might be preferred for a live, energetic drum recording, while a condenser mic would be chosen for a clean, detailed vocal recording in a controlled studio setting.

Q 25. How do you approach creating a compelling soundscape for a film or game?

Creating a compelling soundscape for film or games requires a deep understanding of storytelling and emotional impact. It’s more than just adding music; it’s about crafting an immersive sonic environment that enhances the narrative and emotional experience. I approach this by first carefully studying the script or game design to understand the mood, setting, and character arcs.

My workflow often begins with creating a mood board and identifying key sonic elements. This might involve researching relevant sound effects, composing original scores, and selecting pre-existing sound libraries. I also pay close attention to spatialization, using techniques like surround sound and 3D audio to create depth and realism. For instance, a character walking through a forest might have footsteps that are initially close and then gradually fade into the distance. This spatial manipulation engages the listener and adds realism.
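As a rough illustration of the footsteps example, the fade with distance can be approximated by the inverse-distance law, under which the level drops by about 6 dB each time the distance doubles. This is a minimal sketch that assumes a point source in a free field; the function names are hypothetical, and real game engines usually expose configurable rolloff curves and add reverb and filtering on top.

```python
import math


def distance_gain_db(distance_m: float, reference_m: float = 1.0) -> float:
    """Level change in dB relative to the level heard at reference_m.

    Assumes a point source in a free field (inverse-distance law).
    """
    if distance_m <= 0 or reference_m <= 0:
        raise ValueError("distances must be positive")
    return -20.0 * math.log10(distance_m / reference_m)


def db_to_linear(gain_db: float) -> float:
    """Convert a gain in dB to a linear amplitude multiplier."""
    return 10.0 ** (gain_db / 20.0)


if __name__ == "__main__":
    # Footsteps walking away from the listener, doubling the distance each step.
    for distance in (1, 2, 4, 8, 16):
        gain_db = distance_gain_db(distance)
        print(f"{distance:>2} m: {gain_db:6.1f} dB, linear gain {db_to_linear(gain_db):.3f}")
```

The linear multiplier is what would be applied to the footstep samples; the dB figure is what you would read on a fader or meter.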

For film, I’d closely collaborate with the director and editor to ensure the sound design complements the visuals and narrative. This involves using sound effects to build tension, enhance dialogue clarity, and underscore emotional moments. In game design, I’d work closely with the game designers and programmers to integrate sounds seamlessly within the game engine, paying attention to how the sounds respond dynamically to player interactions. The goal is always to create a cohesive and immersive sonic world that transports the audience to another reality.

Q 26. What are some common mistakes to avoid during the mixing and mastering process?

Several common mistakes can hinder the mixing and mastering process. One major pitfall is neglecting proper gain staging – setting appropriate levels throughout the signal chain from input to output. Improper gain staging can lead to distortion, noise, or a weak overall signal. Another common issue is overusing plugins, especially compression and reverb. Too much compression can make the mix sound lifeless and dull, while excessive reverb can create a muddy and unclear soundscape.
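The arithmetic behind gain staging is simple enough to sketch. The example below assumes every stage applies a fixed gain in dB and treats 0 dBFS as the ceiling, as it is at converters and fixed-point stages; the chain and its values are hypothetical, and a real compressor would also reduce peaks before its makeup gain is applied.

```python
import math


def linear_to_dbfs(amplitude: float) -> float:
    """Convert a linear amplitude (1.0 = full scale) to dBFS."""
    if amplitude <= 0:
        return float("-inf")
    return 20.0 * math.log10(amplitude)


def stage_levels(input_peak_dbfs, stage_gains_db):
    """Return (stage name, peak level in dBFS) after each stage in the chain."""
    levels = []
    level = input_peak_dbfs
    for name, gain_db in stage_gains_db:
        level += gain_db  # gains expressed in dB simply add
        levels.append((name, level))
    return levels


if __name__ == "__main__":
    # Hypothetical chain: each entry is (stage name, static gain in dB).
    chain = [("preamp trim", -6.0), ("EQ boost", 4.0), ("compressor makeup", 6.0)]
    for name, level in stage_levels(-3.0, chain):
        status = "over 0 dBFS" if level > 0.0 else "ok"
        print(f"{name:<18} {level:+5.1f} dBFS  {status}")
```

Walking the chain this way shows where the headroom goes: each stage looks harmless on its own, but the last one pushes the peak past full scale.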

Frequency clashes are also a frequent problem. Instruments might compete for the same frequency range, resulting in a muddy or cluttered mix. Careful EQ is crucial to ensure instruments occupy distinct frequency spaces. Ignoring phase issues is another significant error; phase cancellations can lead to a loss of low-end energy or other unwanted artifacts. Finally, insufficient monitoring is a major problem; listening only on headphones or a single set of speakers can lead to inaccurate decisions. Using multiple monitoring setups, with various loudness and frequency characteristics, is crucial for producing a well-balanced and accurate master.
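To see why phase problems tend to cost low-end energy, consider the same source picked up by two microphones, one of them slightly farther away. The sketch below is an idealized assumption (pure sine tones, equal levels, a hypothetical 5 ms delay, which is roughly 1.7 m of extra path at about 343 m/s) and sums the two signals to measure what survives.

```python
import math

SAMPLE_RATE = 48_000


def sine(freq_hz, n_samples, delay_s=0.0):
    """Unit-amplitude sine tone, optionally delayed by delay_s seconds."""
    return [
        math.sin(2.0 * math.pi * freq_hz * (n / SAMPLE_RATE - delay_s))
        for n in range(n_samples)
    ]


def rms(samples):
    """Root-mean-square level of a block of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def summed_rms(freq_hz, delay_s, n_samples=SAMPLE_RATE):
    """RMS of a tone mixed with a delayed copy of itself."""
    direct = sine(freq_hz, n_samples)
    delayed = sine(freq_hz, n_samples, delay_s)
    return rms([a + b for a, b in zip(direct, delayed)])


if __name__ == "__main__":
    delay = 0.005  # hypothetical 5 ms arrival difference between the two mics
    for freq in (50, 100, 200):
        print(f"{freq:>3} Hz: summed RMS = {summed_rms(freq, delay):.3f}")
```

With this delay, 100 Hz arrives half a cycle late and essentially cancels, while 200 Hz arrives a full cycle late and reinforces. Moving a microphone or nudging a track in time shifts which frequencies fall into the notches.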

I always prioritize a clean signal path, proper gain staging, and careful EQ and compression to avoid these pitfalls. Regular listening checks on multiple playback systems are crucial in catching these errors early on.

Q 27. Describe your familiarity with different audio metering techniques.

Audio metering is crucial for ensuring the quality and loudness of the final product. I’m proficient with several metering techniques, including: peak metering to measure the highest level of the audio signal, preventing clipping; RMS (Root Mean Square) metering to measure the average level, essential for gauging overall loudness; LUFS (Loudness Units relative to Full Scale) metering, which is crucial for complying with broadcast standards and streaming services; and spectrum analysis, which provides a visual representation of the frequency content of the audio, helping identify potential frequency imbalances or clashes. I also use correlation meters, which help identify phase cancellation issues.
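Three of these meters are easy to sketch from their definitions. The code below is a simplified illustration with hypothetical function names: it measures sample peak rather than true (inter-sample) peak, and it does not attempt LUFS, which additionally requires the frequency weighting and gating defined in ITU-R BS.1770.

```python
import math

SAMPLE_RATE = 48_000


def sine_tone(freq_hz, amplitude, seconds=1.0):
    """Generate a sine test tone as a list of float samples."""
    count = int(SAMPLE_RATE * seconds)
    return [amplitude * math.sin(2.0 * math.pi * freq_hz * n / SAMPLE_RATE)
            for n in range(count)]


def peak_dbfs(samples):
    """Sample peak in dBFS (not true peak)."""
    peak = max(abs(s) for s in samples)
    return 20.0 * math.log10(peak) if peak > 0 else float("-inf")


def rms_dbfs(samples):
    """Average (RMS) level in dBFS."""
    mean_square = sum(s * s for s in samples) / len(samples)
    return 10.0 * math.log10(mean_square) if mean_square > 0 else float("-inf")


def correlation(left, right):
    """Stereo correlation: +1 is mono-compatible, -1 is fully out of phase."""
    cross = sum(l * r for l, r in zip(left, right))
    energy = math.sqrt(sum(l * l for l in left) * sum(r * r for r in right))
    return cross / energy if energy > 0 else 0.0


if __name__ == "__main__":
    tone = sine_tone(1000, 0.5)
    inverted = [-s for s in tone]
    print(f"peak: {peak_dbfs(tone):.2f} dBFS, RMS: {rms_dbfs(tone):.2f} dBFS")
    print(f"correlation in phase: {correlation(tone, tone):+.2f}")
    print(f"correlation inverted: {correlation(tone, inverted):+.2f}")
```

For a sine wave the RMS reading sits about 3 dB below the peak; on real program material the gap (the crest factor) is larger and shrinks as compression and limiting are applied.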

For example, LUFS metering is especially important during mastering. Streaming platforms normalize playback loudness toward their own targets, so a master that is louder than the target is typically just turned down and gains nothing from the dynamic range sacrificed to get there, while broadcast deliverables that miss the specified loudness can be rejected outright. Therefore, mastering engineers often target a specific LUFS level based on the platform’s guidelines.
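To make the idea of a loudness target concrete, the adjustment a normalizing player would apply is just the difference between the target and the measured integrated loudness. The sketch below uses -14 LUFS purely as an illustrative figure; actual targets vary by platform and change over time, and how a platform treats quiet masters (boosting, limiting, or leaving them alone) is also platform-specific.

```python
def normalization_gain_db(measured_lufs: float, target_lufs: float) -> float:
    """Gain in dB needed to bring a track from its measured loudness to the target."""
    return target_lufs - measured_lufs


def peak_after_gain(peak_dbtp: float, gain_db: float) -> float:
    """Peak level after the normalization gain is applied."""
    return peak_dbtp + gain_db


if __name__ == "__main__":
    TARGET_LUFS = -14.0  # illustrative value only, not any specific platform's spec

    # Hypothetical masters: (name, integrated loudness in LUFS, peak in dBTP)
    masters = [("loud master", -9.0, -0.5), ("dynamic master", -18.0, -1.0)]
    for name, lufs, peak in masters:
        gain = normalization_gain_db(lufs, TARGET_LUFS)
        new_peak = peak_after_gain(peak, gain)
        note = "peak would exceed 0 dBTP" if new_peak > 0.0 else "peak stays below 0 dBTP"
        print(f"{name}: gain {gain:+.1f} dB, resulting peak {new_peak:+.1f} dBTP ({note})")
```

The loud master is simply turned down, while boosting the quieter one would push its peaks over full scale, which is why a positive normalization gain is often capped or paired with a limiter.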

My familiarity with these various metering techniques allows for a comprehensive approach to mixing and mastering, ensuring that the final product is not only creatively engaging but also technically compliant and consistent across different platforms.

Q 28. How do you stay current with advancements in music production technology?

Staying current in music production is vital. I actively participate in online communities and forums, subscribe to industry publications like Sound on Sound magazine, and attend webinars or online courses offered by platforms such as Udemy and Coursera. Following prominent producers, engineers, and technology reviewers on social media keeps me up-to-date on new software, hardware, and techniques. I regularly test and experiment with new plugins and DAW features.

Moreover, I engage with other professionals through networking events and online communities. Exchanging ideas and experiences with peers is invaluable for broadening my knowledge and perspective. I also prioritize experimenting on personal projects to apply new techniques and tools I learn about. This hands-on approach solidifies my understanding and helps me adapt new workflows effectively.

Continuous learning and experimentation are key to maintaining a high level of proficiency in this rapidly evolving field. It’s not just about knowing the tools but also understanding their applications and adapting to new developments within the industry.

Key Topics to Learn for Music Production and Engineering Interview

- Digital Audio Workstations (DAWs): Understanding the core functionalities of popular DAWs like Pro Tools, Logic Pro X, Ableton Live, etc., including session setup, track management, mixing, and mastering workflows.

- Signal Flow and Processing: Practical application of signal flow diagrams, understanding the impact of different plugins (compressors, EQs, reverbs, delays) on audio, and troubleshooting common signal chain issues.

- Microphone Techniques: Knowledge of different microphone types (dynamic, condenser, ribbon), polar patterns, placement techniques for various instruments and vocals, and microphone pre-amplification.

- Audio Editing and Mixing: Proficiency in editing techniques (cutting, splicing, time stretching, pitch correction), applying mixing principles (EQ, compression, panning, automation), and achieving a balanced and polished mix.

- Mastering Principles: Understanding the goals of mastering (loudness, dynamic range, stereo imaging), familiarity with mastering plugins and processes, and preparing tracks for distribution.

- Music Theory Fundamentals: A solid grasp of music theory concepts (scales, chords, rhythm, harmony) to better understand musical composition and arrangement, facilitating effective collaboration with musicians.

- Acoustic Treatment and Room Acoustics: Knowledge of principles of room acoustics, understanding the importance of acoustic treatment in recording spaces, and identifying common acoustic problems and solutions.

- Hardware and Software Troubleshooting: Diagnosing and resolving technical issues related to both hardware (microphones, interfaces, monitors) and software (DAW crashes, plugin errors).

- Collaboration and Communication: Effective communication with musicians, engineers, and producers, understanding different roles within a project, and managing expectations.

- Audio for Visual Media: Understanding the unique challenges and techniques of sound design and mixing for film, television, and video games.

Next Steps

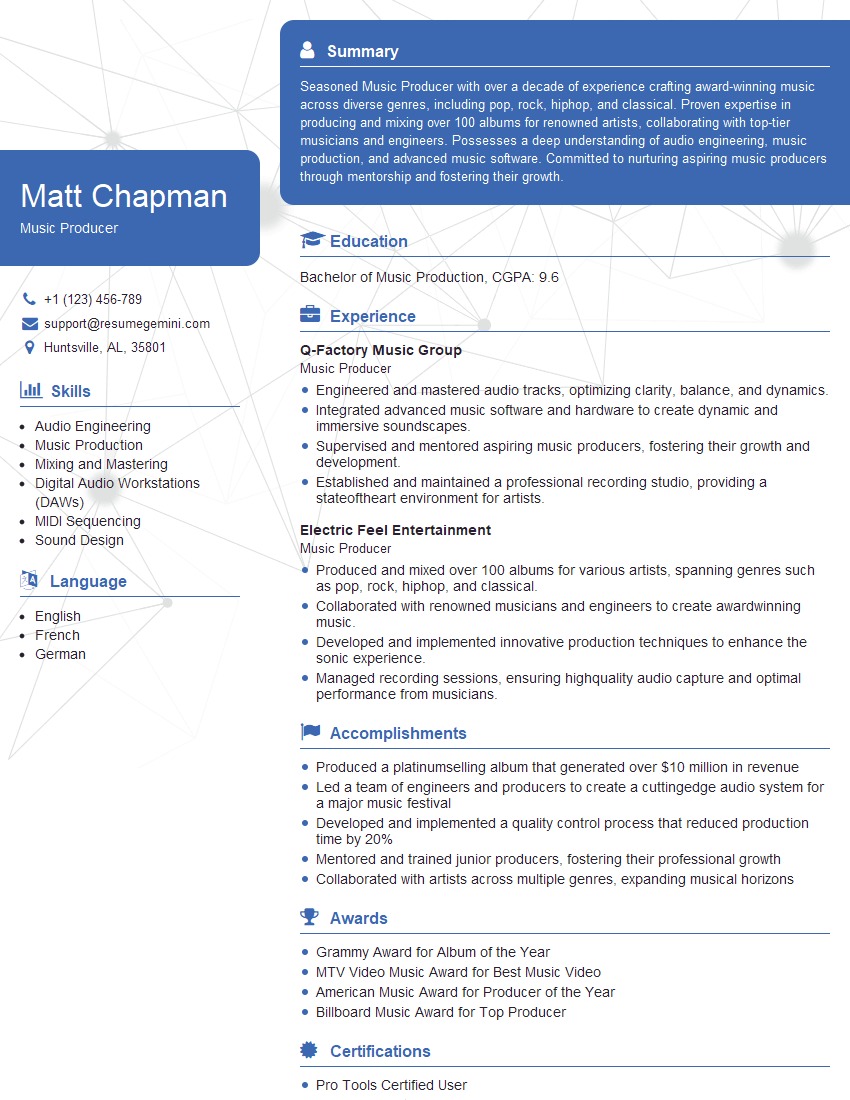

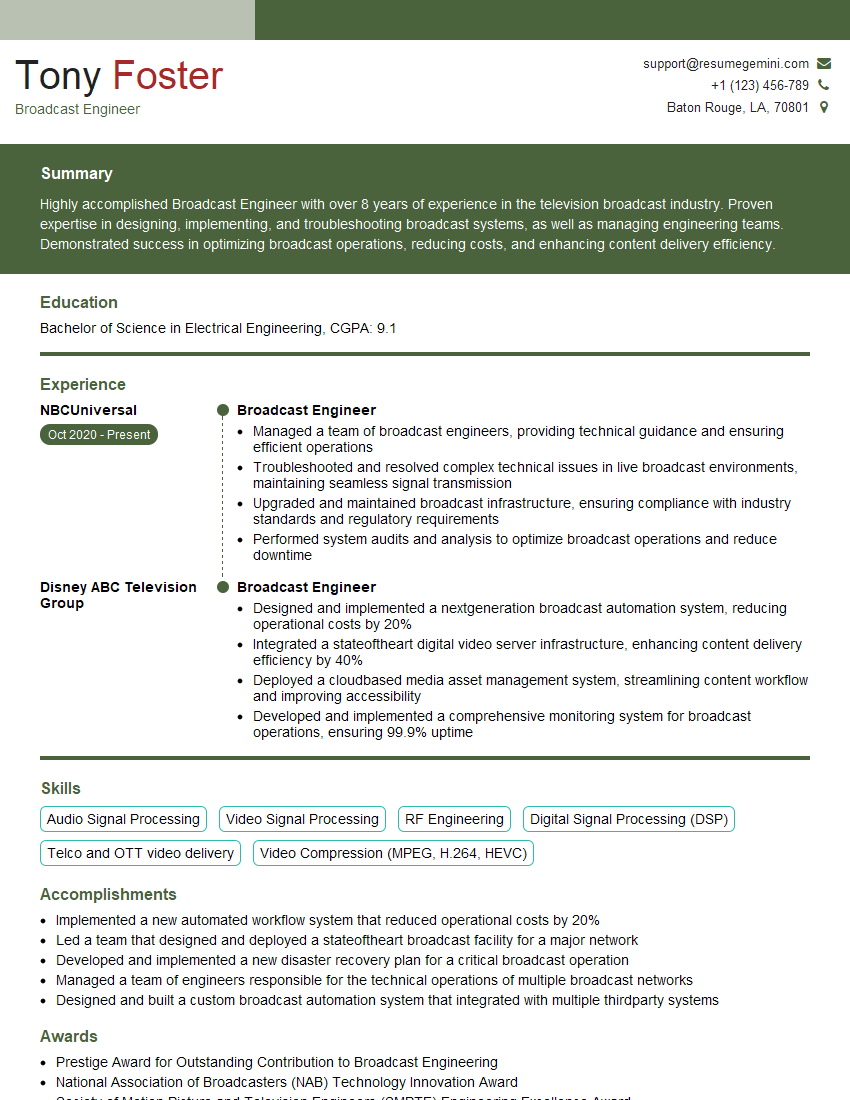

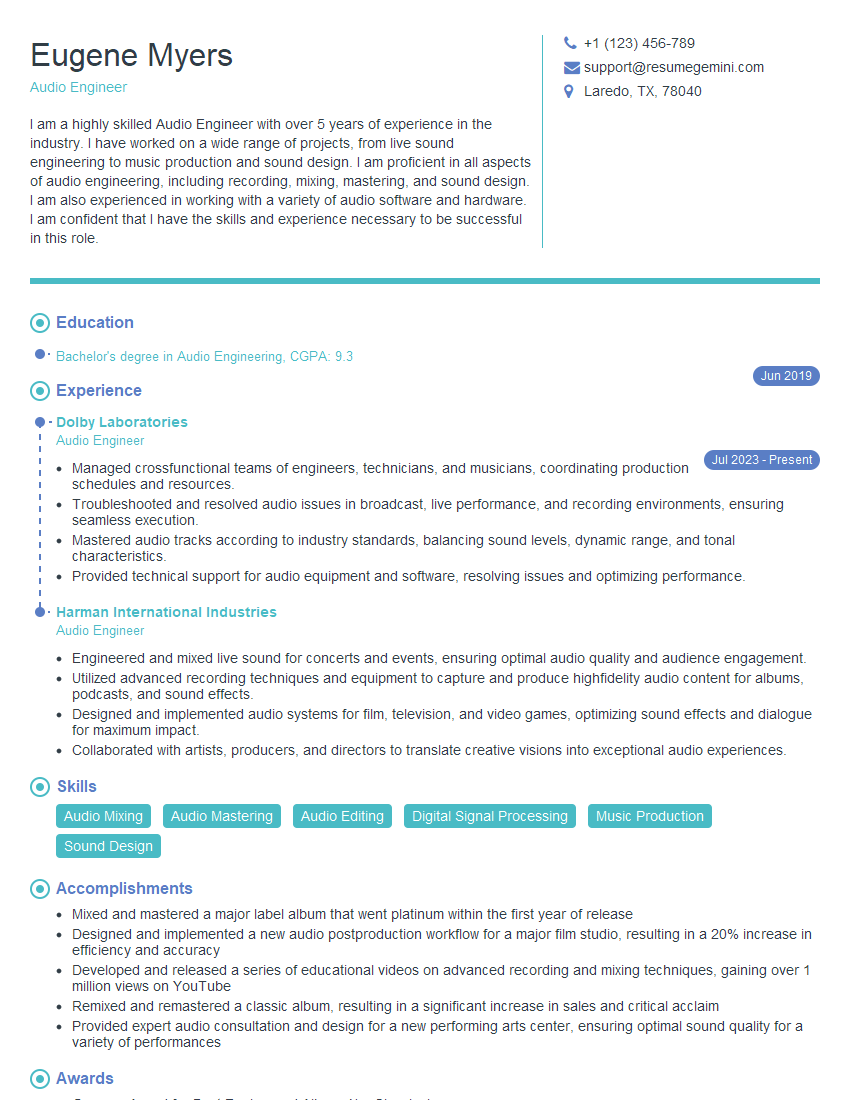

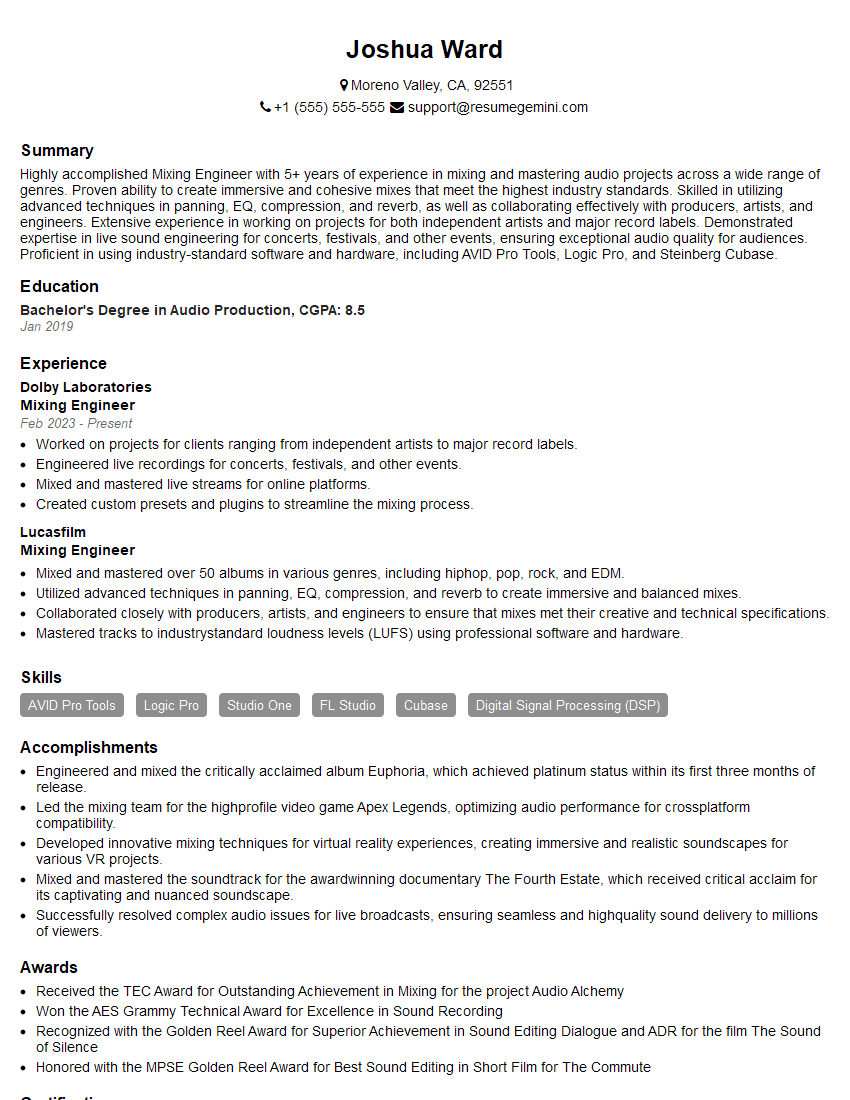

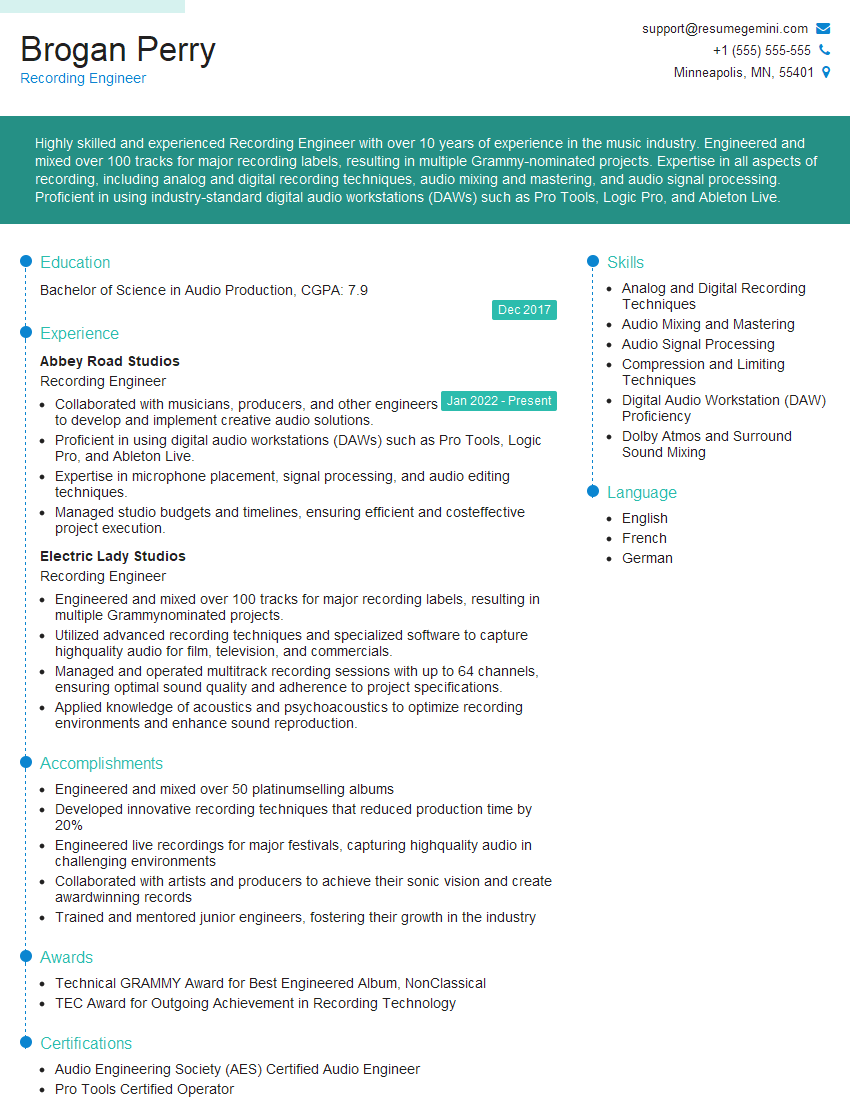

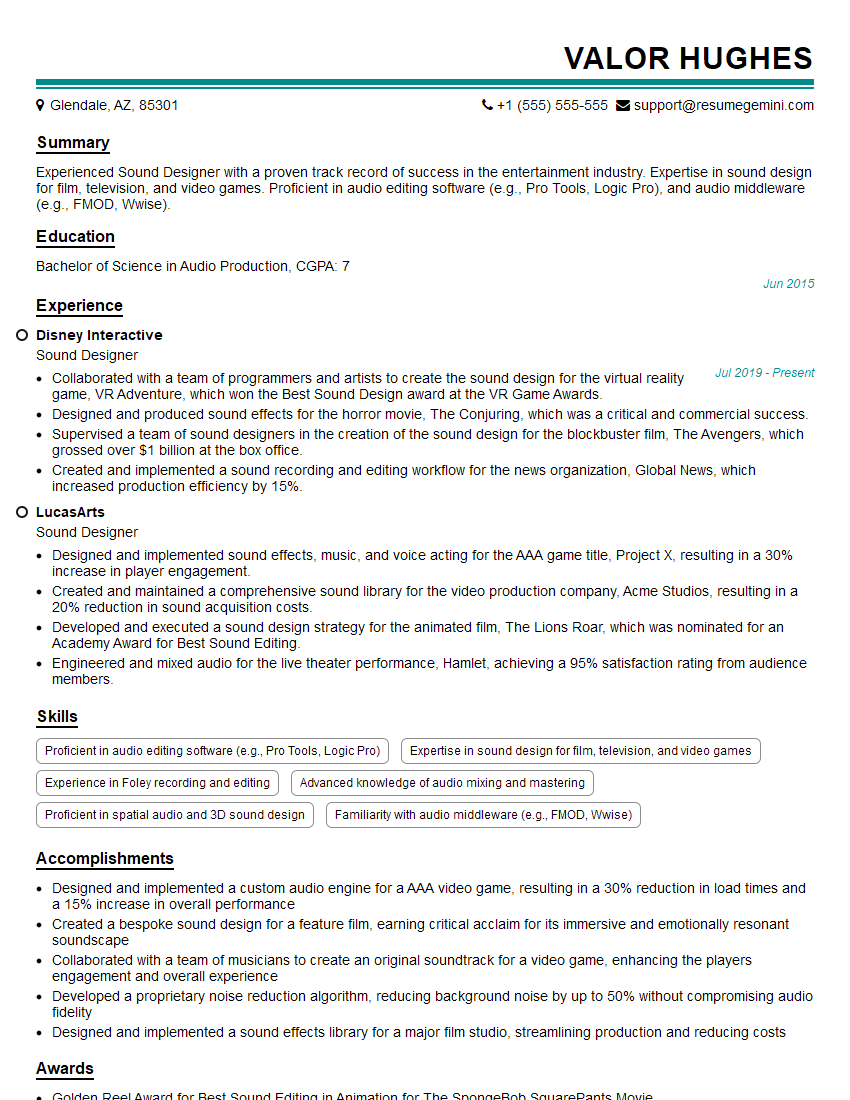

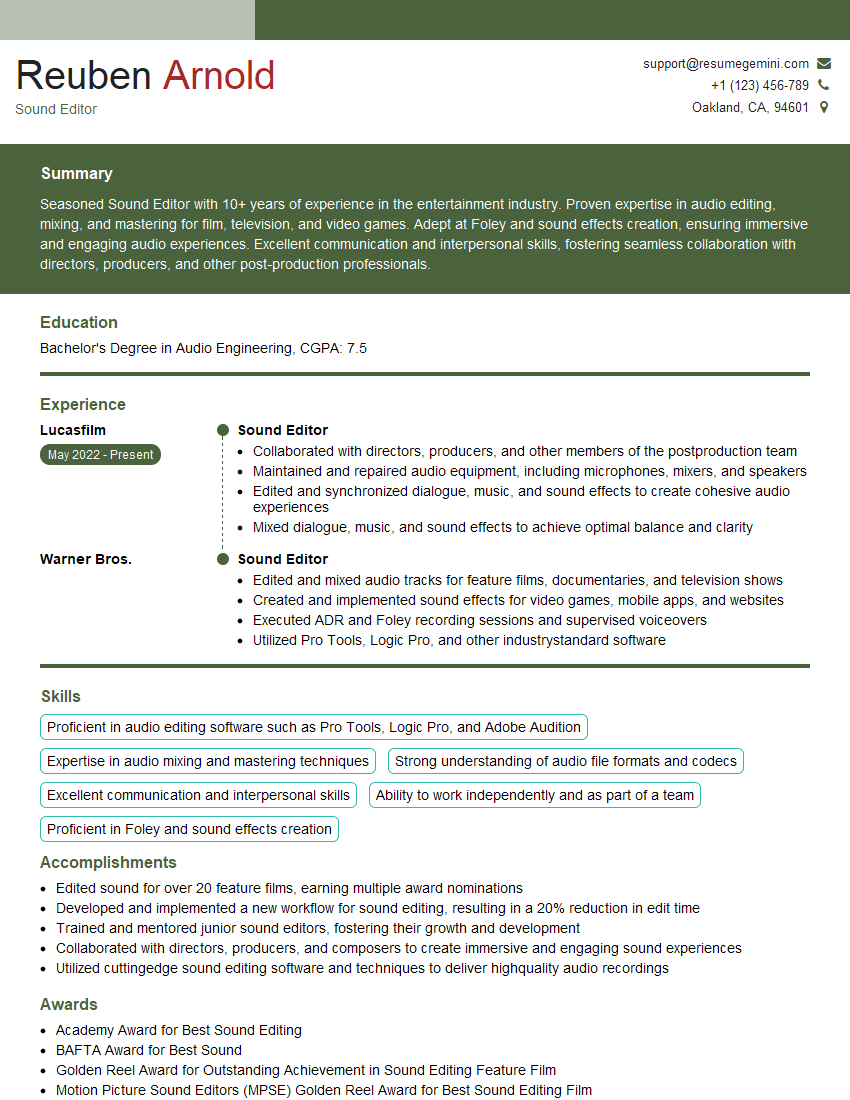

Mastering Music Production and Engineering opens doors to diverse and exciting career paths, from studio work and freelance projects to roles in film, game audio, and post-production. To maximize your job prospects, creating an ATS-friendly resume is vital. ResumeGemini is a trusted resource that can significantly enhance your resume-building experience, helping you present your skills and experience effectively. Examples of resumes tailored to Music Production and Engineering are available to guide you.
