This post works through the most common interview questions for roles that combine 3D modeling with scripting and programming in languages such as Python and C++. It covers the core topics in enough depth that you can answer with clarity and confidence rather than from memorized lines.

Questions Asked in 3D Modeling and Scripting (e.g., Python, C++) Interviews

Q 1. Explain the difference between polygon modeling and NURBS modeling.

Polygon modeling and NURBS modeling are two fundamental approaches in 3D modeling, each with its strengths and weaknesses. Think of polygon modeling like building with LEGOs – you assemble many small, flat polygons (triangles and quadrilaterals) to create complex shapes. NURBS (Non-Uniform Rational B-Splines), on the other hand, uses mathematically defined curves and surfaces. It’s more like sculpting with clay, allowing for smooth, organic forms.

Polygon Modeling: This method is versatile and widely used in games and real-time rendering due to its efficiency. Polygons are simple to compute, making them ideal for performance-critical applications. However, achieving smooth, curved surfaces requires a large number of polygons, potentially impacting performance.

NURBS Modeling: This approach excels in creating precise, smooth curves and surfaces. It’s commonly used in CAD (Computer-Aided Design), animation, and architectural visualization where high precision is essential. NURBS models are often converted to polygons for rendering in games, involving a process called tessellation. The downside is that NURBS models can be computationally more expensive than polygon models, particularly for complex scenes.

In short: Choose polygon modeling for efficiency and detail in hard-surface modeling, and NURBS modeling for precise, smooth curves and surfaces where performance is less critical.
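
To make the tessellation step concrete, here is a minimal sketch that converts a curved primitive into a polyline. It uses a rational quadratic Bezier arc, a simple special case of a NURBS curve, with weights chosen so that it traces an exact quarter circle. The function names and the segment count are illustrative assumptions, not part of any modeling package.

```python
import math

def rational_bezier_point(control_points, weights, t):
    """Evaluate a rational quadratic Bezier curve at parameter t in [0, 1]."""
    # Quadratic Bernstein basis functions.
    basis = [(1 - t) ** 2, 2 * t * (1 - t), t ** 2]
    denom = sum(b * w for b, w in zip(basis, weights))
    x = sum(b * w * p[0] for b, w, p in zip(basis, weights, control_points)) / denom
    y = sum(b * w * p[1] for b, w, p in zip(basis, weights, control_points)) / denom
    return (x, y)

def tessellate(control_points, weights, segments):
    """Sample the curve at evenly spaced parameters to get a polyline."""
    return [
        rational_bezier_point(control_points, weights, i / segments)
        for i in range(segments + 1)
    ]

# Control points and weights for a quarter of the unit circle.
QUARTER_CIRCLE_POINTS = [(1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
QUARTER_CIRCLE_WEIGHTS = [1.0, math.sqrt(2) / 2, 1.0]

polyline = tessellate(QUARTER_CIRCLE_POINTS, QUARTER_CIRCLE_WEIGHTS, 8)
```

Increasing `segments` trades more vertices for a closer approximation, which is the same fidelity-versus-cost decision described above.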

Q 2. Describe your experience with different 3D modeling software packages (e.g., Blender, Maya, 3ds Max).

I’ve extensively used several 3D modeling packages throughout my career. My experience with Blender, Maya, and 3ds Max provides me with a robust skillset for tackling diverse modeling challenges.

- Blender: A powerful, open-source package. I’ve used it for personal projects, prototyping, and even some professional tasks where budget was a constraint. Its node-based material system and sculpting tools are particularly strong. I’ve successfully created complex characters and environments using its versatile tools.

- Maya: This industry-standard software is my go-to for high-end projects. Its robust animation and rigging tools, combined with its powerful modeling capabilities, make it perfect for complex character animation and VFX. I’ve worked on numerous projects requiring advanced sculpting, surfacing, and texturing techniques within Maya’s environment.

- 3ds Max: I’m proficient in 3ds Max, especially for architectural visualization and game environment creation. Its robust modeling tools, particularly for creating hard-surface models, are excellent. I’ve utilized its procedural modeling capabilities and powerful render integration to streamline workflows for large-scale projects.

My experience with these packages extends beyond basic modeling. I’m also comfortable with their respective scripting and plugin ecosystems, which has enabled me to automate tasks and customize workflows for greater efficiency.

Q 3. How do you optimize 3D models for real-time rendering?

Optimizing 3D models for real-time rendering is crucial for maintaining a smooth frame rate in games and interactive applications. It’s a balancing act between visual fidelity and performance. Here’s how I approach it:

- Polygon Reduction: Using techniques like decimation and remeshing, I reduce the polygon count while preserving essential details. Tools within modeling software and dedicated programs like MeshLab allow efficient polygon reduction. This is akin to simplifying a drawing by reducing the number of lines while retaining its core form.

- Level of Detail (LOD): I create multiple versions of the model with varying polygon counts. The game engine switches between these LODs based on the distance from the camera, ensuring performance optimization without significantly sacrificing visual quality. Far-away objects will use low-poly versions, while close-up objects utilize higher-poly versions.

- Texture Optimization: I use appropriately sized textures (e.g., using power-of-two dimensions) and compression techniques (like DXT compression) to minimize texture memory footprint. Overly large textures impact performance significantly.

- Mesh Optimization: I ensure proper topology – clean edge loops, well-distributed polygons – to reduce rendering overhead. Avoid very thin or elongated polygons.

- Normal Mapping and related techniques: Normal maps add apparent surface detail without increasing polygon count. They cost an extra texture sample and some per-pixel math, which is usually far cheaper than the geometry they replace.

Profiling tools within game engines help identify bottlenecks, allowing for targeted optimization. The goal is to achieve the best possible visual quality within the performance constraints of the target platform.
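
The LOD switching described above can be sketched in a few lines. This is only an illustration of the idea: the switch distances are hypothetical values, and real engines usually base the decision on screen-space size and add hysteresis to avoid popping.

```python
import bisect

# Hypothetical camera distances at which the model drops to the next LOD.
LOD_SWITCH_DISTANCES = [10.0, 30.0, 80.0]

def select_lod(distance, switch_distances=LOD_SWITCH_DISTANCES):
    """Return the LOD index for a camera distance (0 is the most detailed)."""
    # bisect_right counts how many thresholds the distance has reached.
    return bisect.bisect_right(switch_distances, distance)

near_lod = select_lod(5.0)    # closest range, full-detail mesh
far_lod = select_lod(200.0)   # beyond the last threshold, coarsest mesh
```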

Q 4. Explain your understanding of UV mapping and texture unwrapping.

UV mapping is the process of projecting a 2D image (a texture) onto a 3D model’s surface. Texture unwrapping is the technique used to create that 2D projection. Imagine trying to flatten a globe onto a piece of paper; you’ll inevitably introduce some distortion. That’s essentially what unwrapping is about – finding the best way to represent the 3D model’s surface in 2D with minimal distortion.

The Process: Each vertex of the mesh is assigned a UV coordinate, which defines its position on the 2D texture. These coordinates are generated by cutting the surface along seams and flattening it, using techniques such as planar, cylindrical, or spherical projection, or automated unwrap algorithms. The goal is to minimize stretching and distortion while producing a clean, efficiently packed UV layout. Poor UV unwrapping leads to distorted textures, visible seams, and artifacts on the rendered model.

Example: A simple cube might be unwrapped into six separate squares, each corresponding to one of its faces. More complex models require more sophisticated techniques to avoid excessive distortion. Professional tools offer many features to help with this process, like seams placement, relaxation algorithms, and UV island organization.
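
As an illustration of one of the projection techniques mentioned above, the following sketch computes spherical UV coordinates for a vertex. The axis convention (Y up, U wrapping around the Y axis) and the function name are assumptions; packages differ in orientation and in where they place the seam.

```python
import math

def spherical_uv(x, y, z):
    """Map a point (relative to the sphere center) to UV in [0, 1] x [0, 1]."""
    length = math.sqrt(x * x + y * y + z * z)
    if length == 0.0:
        raise ValueError("cannot project the center point")
    nx, ny, nz = x / length, y / length, z / length
    # Longitude around the Y axis becomes U; the seam sits where U wraps.
    u = 0.5 + math.atan2(nz, nx) / (2.0 * math.pi)
    # Latitude becomes V; clamp to guard against rounding just outside [-1, 1].
    v = 0.5 + math.asin(max(-1.0, min(1.0, ny))) / math.pi
    return (u, v)

equator_uv = spherical_uv(1.0, 0.0, 0.0)
pole_uv = spherical_uv(0.0, 2.0, 0.0)
```

Every point near a pole maps to almost the same V with very different U values, which is exactly the kind of distortion the globe analogy describes.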

Q 5. Describe your experience with normal mapping and its benefits.

Normal mapping is a technique that adds surface detail to a low-polygon model without increasing the polygon count. Instead of explicitly modeling every bump and groove, a normal map stores surface normal information in a texture. Each pixel in the normal map represents the direction of a surface normal at that point, simulating bumps and indentations.

Benefits: The primary benefit is significantly improved visual fidelity at a fraction of the performance cost of adding extra polygons, which makes normal mapping a vital technique for real-time applications. Think of it as painting surface detail onto the model instead of sculpting it directly.

Implementation: Normal maps are used in conjunction with a base model (often a low-poly model). During rendering, the engine samples the normal map and uses the stored normal, instead of the interpolated geometric normal, in the lighting calculation for each pixel. The illusion is effective because shading depends heavily on the surface normal; the silhouette, however, remains that of the low-poly mesh.
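
A minimal sketch of that per-pixel step, assuming an 8-bit tangent-space normal map and a light direction that is already expressed in tangent space. The function names are illustrative; in practice this runs in a fragment shader rather than in Python.

```python
import math

def decode_normal(r, g, b):
    """Convert an 8-bit RGB texel to a unit normal (each channel maps to [-1, 1])."""
    n = [c / 255.0 * 2.0 - 1.0 for c in (r, g, b)]
    length = math.sqrt(sum(c * c for c in n))
    return tuple(c / length for c in n)

def lambert(normal, light_dir):
    """Diffuse term: cosine of the angle between normal and light, clamped at 0."""
    return max(0.0, sum(n * l for n, l in zip(normal, light_dir)))

light = (0.0, 0.0, 1.0)  # light shining straight down onto the surface

# The typical "flat" texel color (128, 128, 255) decodes to roughly +Z.
flat_intensity = lambert(decode_normal(128, 128, 255), light)
# A texel that tilts the normal sideways receives far less light.
tilted_intensity = lambert(decode_normal(255, 128, 128), light)
```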

Q 6. How do you handle complex scenes with a large number of polygons?

Handling complex scenes with a large number of polygons requires a strategic approach that combines modeling optimization techniques (discussed earlier) with efficient scene management strategies:

- Level of Detail (LOD): This is paramount. Switching to lower-poly versions of objects as the camera distance increases is crucial for maintaining performance.

- Occlusion Culling: This technique skips objects that are inside the camera’s view but completely hidden behind other geometry. For instance, objects fully hidden behind a wall are not rendered, which can improve performance drastically in dense scenes.

- Frustum Culling: Objects outside the camera’s view frustum (the visible region) are not rendered. This is an important initial step in eliminating unnecessary objects early.

- Instancing: If the same object is repeated many times (e.g., trees in a forest), instancing dramatically reduces draw calls and memory use. The mesh data is stored once, and the GPU draws it many times in a single call, each instance with its own transform (and optionally other per-instance data such as color).

- Batching: Grouping similar objects with similar materials helps the rendering engine render them more efficiently.

- Use of Game Engine Optimization Tools: Modern game engines often include profiling and optimization tools that help identify performance bottlenecks in complex scenes.

The key is to combine these techniques systematically. Profiling the scene to understand the performance bottlenecks is crucial before implementing any optimization strategy.
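
Frustum culling from the list above is often implemented as a bounding-sphere test against the planes of the view volume. The sketch below assumes each plane is given as `(nx, ny, nz, d)` with a unit normal pointing into the visible region; for brevity the example volume is a simple box rather than a true perspective frustum.

```python
def sphere_in_frustum(center, radius, planes):
    """Return False only when the sphere lies entirely outside some plane."""
    for nx, ny, nz, d in planes:
        signed_distance = nx * center[0] + ny * center[1] + nz * center[2] + d
        if signed_distance < -radius:
            return False  # completely behind this plane, safe to cull
    return True  # inside or intersecting; conservatively keep it

# Hypothetical view volume: the cube from -1 to 1 on every axis.
BOX_PLANES = [
    (1, 0, 0, 1), (-1, 0, 0, 1),
    (0, 1, 0, 1), (0, -1, 0, 1),
    (0, 0, 1, 1), (0, 0, -1, 1),
]

visible = sphere_in_frustum((0.0, 0.0, 0.0), 0.5, BOX_PLANES)
culled = not sphere_in_frustum((3.0, 0.0, 0.0), 0.5, BOX_PLANES)
```

The test is conservative: it may keep a sphere near a corner that is actually outside, but it never culls something visible.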

Q 7. What are your preferred methods for creating realistic materials?

Creating realistic materials involves understanding the interaction of light with different surface properties. My preferred methods involve a combination of techniques:

- Physically Based Rendering (PBR): I use PBR workflows extensively. PBR materials are defined by physically motivated parameters such as base color, roughness, and metalness. This leads to more predictable and realistic results across different lighting conditions, and I find the parameters consistent and intuitive to work with.

- Texture Mapping: I utilize various texture maps (diffuse, normal, specular, roughness, etc.) to create detailed and realistic surface appearance. This allows me to add fine detail, variation, and imperfections.

- Procedural Techniques: For specific effects, such as wood grain or marble patterns, I utilize procedural textures. These generate textures based on mathematical algorithms, offering flexibility and control over the material’s appearance.

- Subsurface Scattering: For materials like skin, wax, or marble, I incorporate subsurface scattering to simulate the way light penetrates the material and scatters within it, creating a more realistic look.

- Reference Images and Real-World Observations: I always refer to real-world images or samples of the material I’m trying to create. Careful observation of the way light interacts with the material is vital for achieving realism.

Experimentation and iteration are key to achieving believable results. Rendering tests and careful comparisons with real-world examples are essential throughout the process.

Q 8. Explain your experience with rigging and skinning characters.

Rigging and skinning are crucial steps in creating believable animated characters in 3D. Rigging is the process of creating a skeleton—a hierarchical structure of bones—that defines the character’s pose and movement. Skinning is the process of attaching the character’s geometry (the mesh) to this skeleton, so that when the bones move, the mesh deforms realistically.

My experience encompasses building rigs from scratch using both industry-standard software like Maya and Blender, as well as using custom Python scripts for more complex or automated rigging tasks. I’m proficient in various rigging techniques, including inverse kinematics (IK) for limb control, forward kinematics (FK) for precise control, and blend shapes for facial animation. For skinning, I ensure smooth weight painting to avoid artifacts or distortions during animation. For example, I’ve worked on a project where creating a highly detailed dragon character required a complex rig to manage the wings, tail, and multiple limbs, each necessitating specific IK and FK solutions for optimal animation control. The skinning process required careful weight painting to ensure smooth transitions between the scales and the underlying musculature, achieving a convincing, lifelike animation.
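
The role of skin weights is easiest to see in linear blend skinning, the most common skinning method. The sketch below is a simplified 2D version with hypothetical names: each bone is represented by a function that moves a rest-pose point to its posed position, and the vertex ends up at the weighted average of those results.

```python
import math

def rotate_about(pivot, angle):
    """Return a bone transform that rotates points around a pivot."""
    s, c = math.sin(angle), math.cos(angle)
    def transform(point):
        dx, dy = point[0] - pivot[0], point[1] - pivot[1]
        return (pivot[0] + c * dx - s * dy, pivot[1] + s * dx + c * dy)
    return transform

def identity(point):
    """Transform of a bone that has not moved from its rest pose."""
    return point

def skin_vertex(rest_position, influences):
    """Blend bone-transformed positions; influences is [(weight, transform)]."""
    posed = [(w, t(rest_position)) for w, t in influences]
    return (sum(w * p[0] for w, p in posed), sum(w * p[1] for w, p in posed))

# Upper arm stays still; forearm bends 90 degrees at the elbow (1, 0).
forearm = rotate_about((1.0, 0.0), math.pi / 2)

wrist = skin_vertex((2.0, 0.0), [(0.0, identity), (1.0, forearm)])
near_elbow = skin_vertex((1.5, 0.0), [(0.5, identity), (0.5, forearm)])
```

The blended vertex near the elbow lands between the two bone results, which is why weights that change gradually across a joint give smooth deformation.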

Q 9. Describe your workflow for creating a 3D model from concept to final render.

My workflow for creating a 3D model starts with a solid concept. This usually involves creating concept art or sketches and refining them in 2D painting software. Next, I move to 3D modeling, starting with a base mesh and then gradually adding details using techniques like sculpting and retopology. I pay close attention to topology, making sure it’s clean and efficient so that the mesh deforms well in animation. UV unwrapping and texturing follow, which is crucial for realistic and consistent surface detail. Then, I move into rigging and animation if the model requires it. Finally, I set up lighting and rendering, experimenting with various lighting setups and render settings to achieve the desired look and feel. The last step is compositing and post-processing to produce the final image. I often use a combination of Maya, ZBrush, Substance Painter, and the Arnold or Octane renderers, but I’m adaptable to different software packages depending on project requirements.

Q 10. How do you use Python or C++ to automate tasks in 3D modeling?

Python and C++ are powerful tools for automating repetitive tasks in 3D modeling, saving significant time and effort. For instance, using Python with libraries like PyMEL (for Maya) or Blender’s Python API, I can automate tasks like batch processing, model cleanup, rigging, and texture generation.

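```python
# Example Python script (PyMEL); runs inside Maya's Python environment.
import pymel.core as pm

# Smooth every selected object with two subdivision levels.
for obj in pm.ls(sl=True):
    pm.polySmooth(obj, dv=2)
```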

This simple script smooths selected polygons in Maya.

In C++, you typically work against the SDK that the 3D application exposes (for Maya, its C++ API), or use a general-purpose library to manipulate the data outside the application. This gives you more control, at the cost of longer development time. C++ provides significant performance advantages for very heavy computations or large datasets. I’ve used C++ to write plugins for Maya to implement custom tools and functionality not available in the standard toolset. This is particularly useful for highly specialized tasks that require performance optimization and efficient memory management.

Q 11. Explain your understanding of different file formats used in 3D modeling (e.g., FBX, OBJ, 3DS).

Different file formats play distinct roles in the 3D modeling pipeline. FBX (Filmbox) is a versatile format supporting geometry, animation, and material and texture references, making it well suited to exchanging data between different software packages. OBJ (Wavefront OBJ) is a simpler, plain-text format that stores geometry (vertices, normals, UVs, faces), with basic materials kept in a companion MTL file; it is suitable for sharing static models between programs but has no support for animation or rigging. 3DS (Autodesk 3DS) is an older format, now largely superseded by FBX and OBJ. Each format has its strengths and weaknesses, and choosing the right one depends on the needs of the project and the software involved. For example, when collaborating with a team using different software, FBX is preferred for its versatility, while OBJ is often sufficient for sharing a static model.
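
Because OBJ is plain text, its basic structure is easy to show. The following sketch reads only vertex positions (`v`) and faces (`f`) and ignores everything else; it is a simplified reader written for illustration, and it does not handle relative (negative) indices or other less common features of the format.

```python
def parse_obj(text):
    """Return (vertices, faces) from OBJ text; face indices become 0-based."""
    vertices, faces = [], []
    for line in text.splitlines():
        parts = line.split()
        if not parts or parts[0].startswith("#"):
            continue  # skip blank lines and comments
        if parts[0] == "v":
            vertices.append(tuple(float(c) for c in parts[1:4]))
        elif parts[0] == "f":
            # Each corner is v, v/vt, v//vn, or v/vt/vn; keep the position index.
            faces.append(tuple(int(p.split("/")[0]) - 1 for p in parts[1:]))
    return vertices, faces

SAMPLE_OBJ = """\
# a single triangle
v 0.0 0.0 0.0
v 1.0 0.0 0.0
v 0.0 1.0 0.0
vn 0.0 0.0 1.0
f 1//1 2//1 3//1
"""

vertices, faces = parse_obj(SAMPLE_OBJ)
```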

Q 12. Describe your experience with version control systems (e.g., Git) in a 3D modeling pipeline.

Version control using Git is essential for managing large 3D modeling projects, especially collaborative ones. It allows tracking changes, reverting to previous versions, and merging work from multiple team members. I use Git for all of my projects, carefully structuring my repositories to include all relevant assets (models, textures, scripts, etc.). I use branching strategies to manage parallel development and ensure smooth integration. For example, I might create a separate branch for experimenting with different rigging techniques, allowing me to revert if necessary without affecting the main development branch. Using Git also helps to troubleshoot issues when there are conflicting changes, allowing for efficient restoration of previous working states.

Q 13. How do you troubleshoot common 3D modeling issues?

Troubleshooting in 3D modeling often involves methodical investigation. Issues can range from simple geometry problems to complex rendering glitches. I approach it systematically. First, I identify the specific error, paying attention to error messages, visual artifacts (like overlapping geometry or missing textures), or unexpected behavior. Then, I isolate the source of the problem by systematically testing different aspects of the model or the scene, potentially temporarily disabling elements to see their impact. Next, I check common issues like: incorrect normals, overlapping faces, non-manifold geometry, UV issues. For rendering problems, I examine lighting setups, render settings, and material definitions. Online resources, forums, and the software’s documentation are invaluable for finding solutions. If I can’t find an answer, I break down the problem into smaller parts, making incremental changes until it’s solved. I also maintain detailed notes on the troubleshooting process to aid in future similar issues.

Q 14. What are your preferred methods for lighting and rendering scenes?

My lighting and rendering approach depends on the project’s style and desired mood. I often begin with a basic lighting setup, focusing on key, fill, and rim lights to define the character or object’s form. I experiment with different light types (point lights, directional lights, area lights) and their properties (color temperature, intensity, shadows). For rendering, I prefer using physically based renderers like Arnold or OctaneRender for their realistic results. I’m comfortable utilizing both, and adjusting settings like ray tracing, global illumination, and ambient occlusion to improve scene realism. For stylized projects, I may use different approaches, such as cel shading or toon shading, adjusting the lighting and rendering techniques accordingly to meet the aesthetic goals. For example, I may use HDR images to create realistic environmental lighting and reflection effects in a photorealistic scene while utilizing a simplified lighting setup with a toon shader for stylistic, comic-book effects. Post-processing in software like Photoshop or Nuke can further enhance the final renders.

Q 15. Explain your understanding of different rendering techniques (e.g., ray tracing, rasterization).

Rendering techniques determine how a 3D model is displayed on a screen. Two primary methods are ray tracing and rasterization. Rasterization, the technique that has long dominated real-time graphics, works by projecting 3D polygons onto a 2D screen and filling in the pixels they cover. Think of it like a painter filling in a canvas with colors. Ray tracing, on the other hand, simulates light rays bouncing off surfaces. It’s more computationally expensive but produces highly realistic images with accurate lighting, reflections, and shadows. Imagine tracing each light ray’s path individually to see what it hits and how it reflects.

Rasterization: Faster, good for real-time applications like video games. However, it cannot capture effects such as accurate reflections and indirect lighting directly and has to approximate them. It’s like painting quickly: some details are missed for the sake of speed.

Ray Tracing: Slower, ideal for high-quality, photorealistic images. Excellent for complex lighting and reflection effects; think of it as meticulously crafting a detailed painting.

In practice, many modern rendering engines blend both techniques for optimal performance and visual fidelity – using rasterization for the base image and ray tracing for specific details.
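
The core operation of a ray tracer is finding where a ray hits a surface. As a small illustration, the sketch below intersects a ray with a sphere by solving the resulting quadratic; it assumes the ray direction is a unit vector, and the function name is illustrative.

```python
import math

def ray_sphere_hit(origin, direction, center, radius):
    """Return the nearest non-negative hit distance along the ray, or None."""
    oc = [o - c for o, c in zip(origin, center)]
    # With a unit direction, the quadratic is t^2 + 2*b*t + c = 0.
    b = sum(d * o for d, o in zip(direction, oc))
    c = sum(o * o for o in oc) - radius * radius
    discriminant = b * b - c
    if discriminant < 0:
        return None  # the ray misses the sphere
    root = math.sqrt(discriminant)
    for t in (-b - root, -b + root):
        if t >= 0:
            return t
    return None  # the sphere is entirely behind the ray origin

hit = ray_sphere_hit((0, 0, 0), (0, 0, -1), (0, 0, -5), 1.0)
miss = ray_sphere_hit((0, 0, 0), (0, 0, -1), (3, 0, -5), 1.0)
```

A renderer repeats this kind of test for every pixel and every bounce, which is where the cost of ray tracing comes from.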

Q 16. Describe your experience with particle systems and their applications.

Particle systems are powerful tools for simulating natural phenomena like smoke, fire, water, or even crowds. I’ve extensively used them in various projects. The basic concept involves creating and managing numerous small particles, each with its properties (position, velocity, life span, etc.). These properties are then updated over time according to predefined rules or simulations, creating dynamic and realistic effects.

For instance, I once used a particle system to simulate a realistic volcanic eruption for a documentary. We modeled the ash particles with properties dictating their size, velocity, and lifespan, and then simulated their interaction with wind forces. This required careful consideration of physics and visual realism.

Another application was in creating a magical spell effect for a game, where particles with glowing textures and random movement patterns were used to represent the magical energy. Each particle’s properties (color, size, transparency) changed during its lifespan to add a sense of dynamism.

The programming often involves libraries or custom-built systems in languages like C++ or Python, with graphics APIs such as OpenGL or Vulkan used to simulate and render the vast number of particles efficiently. Techniques like spatial partitioning or hierarchical data structures are crucial for performance when particles interact with each other or with the scene.
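
The update loop at the heart of a particle system can be sketched as follows. This is a CPU-side illustration with hypothetical names; it applies only gravity, uses semi-implicit Euler integration (velocity first, then position), and drops particles whose lifetime has run out.

```python
from dataclasses import dataclass

GRAVITY = (0.0, -9.81, 0.0)  # assumed constant acceleration

@dataclass
class Particle:
    position: tuple
    velocity: tuple
    life: float  # remaining lifetime in seconds

def step(particles, dt):
    """Advance all particles by dt seconds and return the survivors."""
    survivors = []
    for p in particles:
        life = p.life - dt
        if life <= 0:
            continue  # expired particles are removed
        velocity = tuple(v + g * dt for v, g in zip(p.velocity, GRAVITY))
        position = tuple(x + v * dt for x, v in zip(p.position, velocity))
        survivors.append(Particle(position, velocity, life))
    return survivors

particles = [Particle((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), 1.0)]
after_one_step = step(particles, 0.5)
after_two_steps = step(after_one_step, 0.5)
```

Production systems typically keep particle data in flat arrays and run this update on the GPU, but the per-particle logic has the same shape.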

Q 17. How do you approach the creation of realistic human characters?

Creating realistic human characters involves a multi-faceted approach combining anatomical accuracy, meticulous texturing, and advanced rendering techniques. It starts with a good base mesh, usually sculpted in ZBrush or Blender, ensuring accurate proportions and musculature. This is followed by high-resolution sculpting of details like wrinkles, pores, and hair. A key aspect is understanding human anatomy. Reference images, anatomical studies, and even medical scans are often used to achieve accuracy. Then, UV unwrapping (mapping 2D textures to 3D models) ensures textures are applied correctly and efficiently.

High-quality texturing is crucial. This involves creating detailed skin, eye, and hair textures, utilizing techniques like normal and displacement mapping for increased detail. Finally, realistic rendering requires advanced lighting setups and potentially subsurface scattering for a convincing skin appearance.

For example, in one project, I spent considerable time studying facial muscle structure to accurately model subtle facial expressions. We used photogrammetry (creating 3D models from photos) to capture realistic textures and used specialized shaders to simulate the way light interacts with skin at a microscopic level.

Q 18. What are your strengths and weaknesses as a 3D modeler?

Strengths: I excel at creating detailed, anatomically accurate models, particularly characters. I’m proficient in a variety of modeling software, including ZBrush, Maya, Blender, and Houdini, and adept at using Python and C++ for scripting and procedural generation. My strong understanding of lighting and rendering allows me to produce high-quality visuals. I collaborate effectively within teams.

Weaknesses: While I’m competent in rigging and animation, it’s not my primary area of expertise; I’d prefer to focus on modeling and texturing. I’m always striving to improve my knowledge of the latest advancements in real-time rendering techniques.

Q 19. Explain your understanding of 3D modeling algorithms.

3D modeling algorithms cover a wide range of techniques, from basic geometric primitives (cubes, spheres, etc.) to complex surface representations. Examples include:

- Mesh generation: Algorithms like Marching Cubes extract isosurfaces from volumetric data. This is commonly used in medical imaging or creating organic shapes.

- Subdivision surfaces: These refine coarse meshes into smoother, more detailed ones (e.g., Catmull-Clark subdivision). They’re excellent for creating smooth, organic forms.

- Boolean operations: These combine or subtract shapes to create complex geometry. This is useful for creating mechanical parts or architectural structures.

- NURBS (Non-Uniform Rational B-Splines): Used to define curves and surfaces precisely, often found in CAD software. They allow for smooth, easily controlled shapes.

- Procedural generation: Using algorithms to create geometry automatically, often based on rules or randomness. This is ideal for creating large-scale environments or organic patterns.

My understanding of these algorithms allows me to choose the most appropriate method for a given project and optimize the modeling process for efficiency and quality.

Q 20. Describe a challenging 3D modeling project you worked on and how you overcame its difficulties.

One challenging project involved creating a highly detailed, historically accurate replica of a medieval castle for a video game. The difficulty stemmed from the sheer scale and complexity of the model, along with the need to balance visual fidelity with performance constraints. The castle included intricate details like individual bricks, window frames, and gargoyles, resulting in a model with millions of polygons.

To overcome these challenges, I employed several strategies: modular modeling—breaking the castle into smaller, reusable sections—and level of detail (LOD) techniques. LODs use different levels of detail based on the camera’s distance, ensuring high visual fidelity when close and performance optimization at a distance. I leveraged Python scripting to automate repetitive tasks, like generating variations of building components, and used optimized mesh formats to reduce file size. Close collaboration with the game engine programmers was essential to ensure performance met the game’s standards.

Q 21. How do you stay up-to-date with the latest advancements in 3D modeling technology?

Staying up to date involves several strategies: I actively follow industry blogs and publications like 80.lv, and attend online conferences and workshops (SIGGRAPH, GDC). I also participate in online communities and forums, engaging in discussions with other professionals. Experimenting with new software and techniques on personal projects is crucial for practical experience. I regularly review academic papers and research on new rendering techniques and algorithms, particularly those focusing on real-time rendering and physically based rendering.

Q 22. Explain your understanding of different shading models (e.g., Phong, Blinn-Phong).

Shading models determine how light interacts with a surface in a 3D scene, impacting its appearance. The Phong and Blinn-Phong models are two classic examples, both building upon the basic Lambertian diffuse reflection model.

Lambertian Reflection: This model assumes a perfectly diffuse surface, where light is scattered equally in all directions, so the surface looks equally bright from every viewpoint. The intensity depends solely on the cosine of the angle between the surface normal and the direction to the light.

Phong Shading: The Phong reflection model adds a specular highlight to simulate shiny surfaces. The highlight is brightest when the view direction lines up with the mirror reflection of the light direction about the surface normal, and it diminishes as the angle between the reflection vector and the view vector increases. A specular exponent controls the highlight: a higher exponent produces a smaller, sharper highlight.

Blinn-Phong Shading: Blinn-Phong is a modification of Phong. Instead of the angle between the reflection vector and the view vector, it uses the angle between the surface normal and the halfway vector (the normalized sum of the light and view vectors). This avoids computing the reflection vector, which can be cheaper (notably when the light and viewer are treated as infinitely distant, so the halfway vector is constant), and it behaves better at grazing angles, where the Phong highlight can be cut off abruptly.

In Summary:

- Lambertian: Diffuse reflection only.

- Phong: Diffuse + specular reflection (using reflection vector).

- Blinn-Phong: Diffuse + specular reflection (using halfway vector – more efficient and smoother highlights).

I’ve implemented these models numerous times in various projects, often choosing Blinn-Phong for its efficiency and better visual results. For instance, in a recent project creating a realistic car model, Blinn-Phong allowed for the efficient rendering of the car’s shiny metallic surfaces.
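
The difference between the two specular terms is small in code. The sketch below assumes that `n`, `l`, and `v` are unit vectors pointing away from the surface (normal, direction to the light, direction to the viewer) and that `l` and `v` are not exactly opposite; the function names and the exponent are illustrative.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def phong_specular(n, l, v, shininess):
    """Phong: compare the mirror reflection of l about n with the view vector."""
    n_dot_l = dot(n, l)
    r = tuple(2.0 * n_dot_l * nc - lc for nc, lc in zip(n, l))
    return max(0.0, dot(r, v)) ** shininess

def blinn_phong_specular(n, l, v, shininess):
    """Blinn-Phong: compare the halfway vector between l and v with the normal."""
    h = normalize(tuple(lc + vc for lc, vc in zip(l, v)))
    return max(0.0, dot(n, h)) ** shininess

n = (0.0, 0.0, 1.0)
l = normalize((1.0, 0.0, 1.0))
v = (0.0, 0.0, 1.0)  # viewer looking straight down the normal

phong = phong_specular(n, l, v, 32)
blinn = blinn_phong_specular(n, l, v, 32)
```

With the same exponent, Blinn-Phong gives a broader highlight than Phong, so the exponent usually needs to be raised when switching models to keep a similar look.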

Q 23. How familiar are you with game engines (e.g., Unity, Unreal Engine) and their integration with 3D models?

I have extensive experience with both Unity and Unreal Engine. My familiarity extends beyond basic model import; I’ve worked extensively with their respective scripting APIs (C# for Unity and C++ for Unreal) to integrate and manipulate 3D models programmatically.

In Unity, I’ve worked on projects involving character animation, procedural level generation, and optimization for mobile platforms. I’ve leveraged Unity’s built-in physics engine for realistic character interactions and environmental dynamics. A recent project involved creating a mobile game where I optimized character models and animations for smooth performance on a range of devices.

In Unreal Engine, my experience lies primarily in creating high-fidelity environments and characters, making use of its powerful material editor and Blueprints visual scripting system. I’ve also integrated custom shaders to achieve unique visual effects. For example, in a recent project recreating a historical setting, I utilized Unreal’s tools to generate realistic lighting and shadow effects, enhancing the overall atmosphere.

My experience spans model importing, rigging, animation, material creation, and scripting, allowing me to effectively utilize both game engines to bring my 3D models to life.

Q 24. Explain your understanding of collision detection and physics simulation in 3D environments.

Collision detection and physics simulation are crucial for creating interactive and realistic 3D environments. Collision detection algorithms determine whether two objects are intersecting, while physics simulation governs how objects behave under the influence of forces like gravity and momentum.

Collision Detection: Various techniques exist, such as:

- Bounding Volume Hierarchies (BVHs): Efficiently test for collisions by using simpler shapes (bounding boxes, spheres) to represent complex objects. This avoids expensive triangle-to-triangle comparisons.

- Axis-Aligned Bounding Boxes (AABBs): Simple and fast, but can be inaccurate for complex shapes. Suitable for broad-phase collision detection.

- Ray Casting: Useful for determining intersections between a ray (like a camera ray) and objects in the scene.

Physics Simulation: This typically involves numerical integration techniques (e.g., Euler, Runge-Kutta) to solve equations of motion. Factors like mass, friction, and restitution influence object behavior.

Real-world Application: I’ve utilized these techniques extensively. In a robotics simulation project, I implemented a BVH for efficient collision detection between robotic arms and their environment. In another project, developing a physics-based puzzle game, I implemented a physics engine from scratch, using the Euler method for integration to simulate object interactions.

Understanding both collision detection and physics simulation allows for the creation of engaging and believable interactions within a 3D world.
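
The broad-phase AABB test mentioned above reduces to a per-axis interval comparison. The sketch below builds a box from a point set and checks two boxes for overlap; the helper names are illustrative, and boxes that merely touch are counted as overlapping.

```python
def aabb_from_points(points):
    """Return (min_corner, max_corner) of the box enclosing the points."""
    mins = tuple(min(p[i] for p in points) for i in range(3))
    maxs = tuple(max(p[i] for p in points) for i in range(3))
    return mins, maxs

def aabb_overlap(a, b):
    """Two boxes overlap only if their intervals overlap on every axis."""
    (a_min, a_max), (b_min, b_max) = a, b
    return all(a_min[i] <= b_max[i] and b_min[i] <= a_max[i] for i in range(3))

box_a = aabb_from_points([(0, 0, 0), (1, 2, 1)])
box_b = aabb_from_points([(0.5, 1, 0.5), (3, 3, 3)])
box_c = aabb_from_points([(5, 5, 5), (6, 6, 6)])

a_hits_b = aabb_overlap(box_a, box_b)
a_hits_c = aabb_overlap(box_a, box_c)
```

Only pairs that pass this cheap test need to go on to an exact, more expensive narrow-phase check.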

Q 25. Describe your experience with procedural generation techniques in 3D modeling.

Procedural generation is a powerful technique for creating vast and varied 3D content automatically, avoiding the manual creation of every asset. It’s especially valuable in game development for generating levels, terrain, and even characters.

Techniques:

- Perlin Noise: Used to generate realistic-looking textures and terrain. I’ve used it to create detailed landscapes with natural variations.

- L-systems: Create branching structures like trees and plants. I’ve successfully used L-systems to procedurally generate forests in a game project.

- Random Walk Algorithms: Useful for creating cave systems or city layouts. These algorithms can be guided by constraints to create more controlled outputs.

- Grammar-based systems: Define rules for generating complex structures based on formal grammars.
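
The rewriting step behind L-systems and other grammar-based generators is small enough to sketch directly. The function name `expand_lsystem` and the bracketed plant rule below are illustrative assumptions; turning the resulting string into geometry (for example with turtle-style drawing commands) is a separate step not shown here.

```python
from typing import Dict


def expand_lsystem(axiom: str, rules: Dict[str, str], iterations: int) -> str:
    """Apply the rewrite rules to every symbol in parallel, `iterations` times."""
    current = axiom
    for _ in range(iterations):
        # Symbols without a rule (such as brackets) are copied unchanged.
        current = "".join(rules.get(symbol, symbol) for symbol in current)
    return current


# Lindenmayer's classic "algae" system: A -> AB, B -> A.
ALGAE_RULES = {"A": "AB", "B": "A"}

# A simple branching rule: F draws a segment, [ and ] push and pop the
# drawing state, + and - turn. The exact rule is a hypothetical example.
PLANT_RULES = {"F": "F[+F]F[-F]F"}
```

Calling `expand_lsystem("F", PLANT_RULES, n)` grows the string quickly with `n`, which is why a few iterations are usually enough for a recognizable plant.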

Example: In a recent project, I implemented a system for generating random dungeon levels using a combination of cellular automata and random walk algorithms. This allowed for the creation of a large variety of unique and challenging levels without manual design. This significantly reduced development time and allowed for greater replayability.
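
One way such a combination might look is sketched below in Python: a random walk carves floor cells out of solid rock, and a single cellular-automaton pass then smooths the result. This is an illustrative sketch, not the project's actual code; the function names, the grid symbols, and the five-neighbour wall threshold are all assumptions.

```python
import random
from typing import List

WALL, FLOOR = "#", "."
Grid = List[List[str]]


def carve_random_walk(width: int, height: int, steps: int, seed: int = 0) -> Grid:
    """Start from solid rock and carve floor cells along a random walk."""
    rng = random.Random(seed)  # seeded so a level can be regenerated exactly
    grid = [[WALL] * width for _ in range(height)]
    x, y = width // 2, height // 2
    grid[y][x] = FLOOR
    for _ in range(steps):
        dx, dy = rng.choice([(1, 0), (-1, 0), (0, 1), (0, -1)])
        # Clamp so the outer border always stays solid wall.
        x = min(max(x + dx, 1), width - 2)
        y = min(max(y + dy, 1), height - 2)
        grid[y][x] = FLOOR
    return grid


def smooth(grid: Grid) -> Grid:
    """One cellular-automaton pass: an interior cell becomes wall when
    five or more of its eight neighbours are wall, otherwise floor."""
    height, width = len(grid), len(grid[0])
    result = [row[:] for row in grid]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            walls = sum(
                grid[y + dy][x + dx] == WALL
                for dy in (-1, 0, 1)
                for dx in (-1, 0, 1)
                if (dx, dy) != (0, 0)
            )
            result[y][x] = WALL if walls >= 5 else FLOOR
    return result
```

Because the smoothing rule fills in narrow corridors, a real generator would typically tune the threshold and check connectivity afterwards.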

My proficiency in procedural generation enables the efficient creation of large-scale and diverse 3D environments.

Q 26. How do you optimize 3D models for different platforms (e.g., mobile, desktop, VR)?

Optimizing 3D models for different platforms involves balancing visual fidelity with performance. Different platforms have varying processing power and memory constraints.

Techniques:

- Level of Detail (LOD): Use multiple versions of a model with different polygon counts. The engine switches to lower-detail models at greater distances to improve performance. I’ve implemented LODs in several projects to optimize for mobile devices.

- Mesh Simplification: Reduce the polygon count of a model using decimation algorithms, such as edge collapse guided by quadric error metrics. This allows for faster rendering and lower memory usage.

- Texture Compression: Use GPU-compressed texture formats (e.g., DXT on desktop, ETC on mobile) to reduce texture size on disk and in video memory without significant visual loss. I routinely employ texture compression in my mobile development projects.

- Shader Optimization: Write efficient shaders that minimize the number of calculations performed. This is especially critical on mobile platforms.

- Batching: Group objects together for rendering to reduce the number of draw calls.
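
Two of the techniques above, LOD switching and batching, come down to simple bookkeeping that can be sketched in a few lines of Python. The function names and the idea of grouping purely by material name are simplifying assumptions; engines generally apply further criteria (shared mesh, shader, render state) before merging draw calls.

```python
import bisect
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def select_lod(distance: float, thresholds: Sequence[float]) -> int:
    """Pick a LOD index from camera distance.

    `thresholds` must be sorted ascending. Index 0 is the most detailed
    model; each threshold reached switches to the next coarser level.
    """
    return bisect.bisect_right(thresholds, distance)


def batch_by_material(objects: Sequence[Tuple[str, str]]) -> Dict[str, List[str]]:
    """Group (object_name, material_name) pairs so that objects sharing a
    material can be submitted together."""
    batches: Dict[str, List[str]] = defaultdict(list)
    for name, material in objects:
        batches[material].append(name)
    return dict(batches)
```

With thresholds of `[10, 30, 80]`, a model would use its full-detail mesh below 10 units and its coarsest mesh from 80 units onward.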

Example: When optimizing a character model for mobile, I reduced the polygon count by 50% using a mesh simplification algorithm and compressed the textures to ETC format. These optimizations significantly improved performance without noticeable visual degradation.

Q 27. Explain your experience with implementing custom shaders in a rendering pipeline.

Implementing custom shaders provides fine-grained control over the rendering process, enabling unique visual effects that aren’t possible with built-in shaders.

Process: This typically involves writing shader code (using languages like GLSL or HLSL) that defines how vertices and fragments (pixels) are processed. These shaders are then integrated into the rendering pipeline.

Example: I’ve implemented a custom cel-shading shader to give a cartoonish look to a 3D character. This involved creating a shader that quantizes the lighting values into a few discrete bands, producing flat, stepped shading; the characteristic dark outline is typically added in a separate pass. I’ve also developed shaders for volumetric fog, subsurface scattering, and physically based rendering (PBR).
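
The quantization step at the heart of cel shading is easy to show outside a shader. The Python sketch below mirrors what a fragment shader would compute per pixel in GLSL or HLSL; the function names and the default of four bands are assumptions made for illustration.

```python
import math
from typing import Sequence


def lambert(normal: Sequence[float], light_dir: Sequence[float]) -> float:
    """Diffuse term N.L clamped to [0, 1]; both vectors are assumed unit length."""
    n_dot_l = sum(n * l for n, l in zip(normal, light_dir))
    return max(0.0, min(1.0, n_dot_l))


def toon_shade(normal: Sequence[float], light_dir: Sequence[float],
               bands: int = 4) -> float:
    """Snap the smooth diffuse term down to the nearest multiple of 1/bands."""
    intensity = lambert(normal, light_dir)
    return math.floor(intensity * bands) / bands
```

Because nearby intensities collapse to the same value, the surface shows a few flat tones with hard edges between them instead of a smooth gradient.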

Challenges: Writing efficient and correct shader code can be complex. Debugging shaders often requires careful analysis of the rendering pipeline and can involve utilizing rendering tools to visualize the results at each stage.

My shader development experience ranges from relatively simple modifications to complex, highly-optimized shaders tailored to specific rendering needs and hardware limitations.

Q 28. Describe your understanding of different types of cameras and their properties in a 3D scene.

Cameras in 3D scenes are fundamental for defining the viewpoint and perspective. Different camera types offer different properties and are suited to various purposes.

Types:

- Perspective Camera: Simulates human vision, with objects appearing smaller in the distance. Defined by field of view (FOV), aspect ratio, and near/far clipping planes. This is the most common type of camera.

- Orthographic Camera: Objects retain their size regardless of distance. Useful for technical drawings or top-down views. Defined by view size, aspect ratio, and near/far clipping planes.

- First-Person Camera: Typically used in games to represent the player’s viewpoint. Usually attached to a player character and moves with it.

- Third-Person Camera: Provides a view of the character from a distance. Offers more flexibility in positioning and showing the environment.

Properties: Common camera properties include position, orientation (rotation), aspect ratio, near and far clipping planes, and projection type (perspective or orthographic). Perspective cameras additionally have a field of view, while orthographic cameras use a view size instead. I have extensive experience utilizing and manipulating these properties to achieve specific visual effects and camera behaviors in my projects.
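
The difference between the two projection types shows up clearly in their matrices. The sketch below builds both in plain Python, assuming the OpenGL convention (camera looking down the negative Z axis, normalized depth in [-1, 1]); other APIs use different depth ranges and handedness, and the function names are hypothetical.

```python
import math
from typing import List, Sequence

Matrix = List[List[float]]


def perspective(fov_y_deg: float, aspect: float, near: float, far: float) -> Matrix:
    """OpenGL-style perspective matrix built from a vertical field of view."""
    f = 1.0 / math.tan(math.radians(fov_y_deg) / 2.0)
    return [
        [f / aspect, 0.0, 0.0, 0.0],
        [0.0, f, 0.0, 0.0],
        [0.0, 0.0, (far + near) / (near - far), 2.0 * far * near / (near - far)],
        [0.0, 0.0, -1.0, 0.0],
    ]


def orthographic(half_height: float, aspect: float, near: float, far: float) -> Matrix:
    """Symmetric orthographic matrix defined by half the view height."""
    half_width = half_height * aspect
    return [
        [1.0 / half_width, 0.0, 0.0, 0.0],
        [0.0, 1.0 / half_height, 0.0, 0.0],
        [0.0, 0.0, -2.0 / (far - near), -(far + near) / (far - near)],
        [0.0, 0.0, 0.0, 1.0],
    ]


def project(matrix: Matrix, point: Sequence[float]) -> List[float]:
    """Transform a camera-space point to normalized device coordinates."""
    x, y, z = point
    clip = [row[0] * x + row[1] * y + row[2] * z + row[3] for row in matrix]
    w = clip[3]  # perspective divide; w is 1 for orthographic projection
    return [clip[0] / w, clip[1] / w, clip[2] / w]
```

Projecting the same point at two different depths shrinks its screen position under `perspective` but leaves it unchanged under `orthographic`, which is exactly the behavior described for the two camera types.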

Understanding camera properties and types is crucial for creating compelling and effective 3D visualizations. I routinely adjust camera parameters to create the desired shot composition and perspective in my 3D scenes.

Key Topics to Learn for Proficient in using 3D modeling languages (e.g., Python, C++) Interview

- Data Structures and Algorithms: Understanding fundamental data structures like arrays, linked lists, trees, and graphs is crucial for efficient 3D model manipulation and optimization in Python or C++. Practice implementing common algorithms in the context of 3D modeling tasks.

- 3D Math Fundamentals: Master linear algebra (vectors, matrices, transformations) and trigonometry. These are the building blocks of any 3D modeling system. Be prepared to discuss concepts like rotations, translations, scaling, and projections.

- Object-Oriented Programming (OOP): If using C++, demonstrate a strong understanding of OOP principles (encapsulation, inheritance, polymorphism). In Python, show proficiency in using classes and objects to represent 3D models and their components effectively.

- Specific Library Proficiency: Depending on the job description, deeply understand the relevant graphics APIs (e.g., OpenGL, DirectX) or game engine APIs (e.g., Unity, Unreal Engine). Be prepared to discuss your experience using specific functions and techniques within them.

- File Formats and Data Exchange: Familiarity with common 3D file formats (OBJ, FBX, STL) and the ability to import, export, and manipulate data between them is important. Understanding how different formats represent 3D data is valuable.

- Optimization Techniques: Demonstrate knowledge of strategies to optimize 3D model performance, such as level of detail (LOD) techniques, mesh simplification, and efficient rendering algorithms. This shows an understanding of real-world constraints.

- Debugging and Problem-Solving: Be ready to discuss your approach to debugging complex 3D modeling issues. This might involve using debugging tools, analyzing code for performance bottlenecks, and effectively communicating solutions.

Next Steps

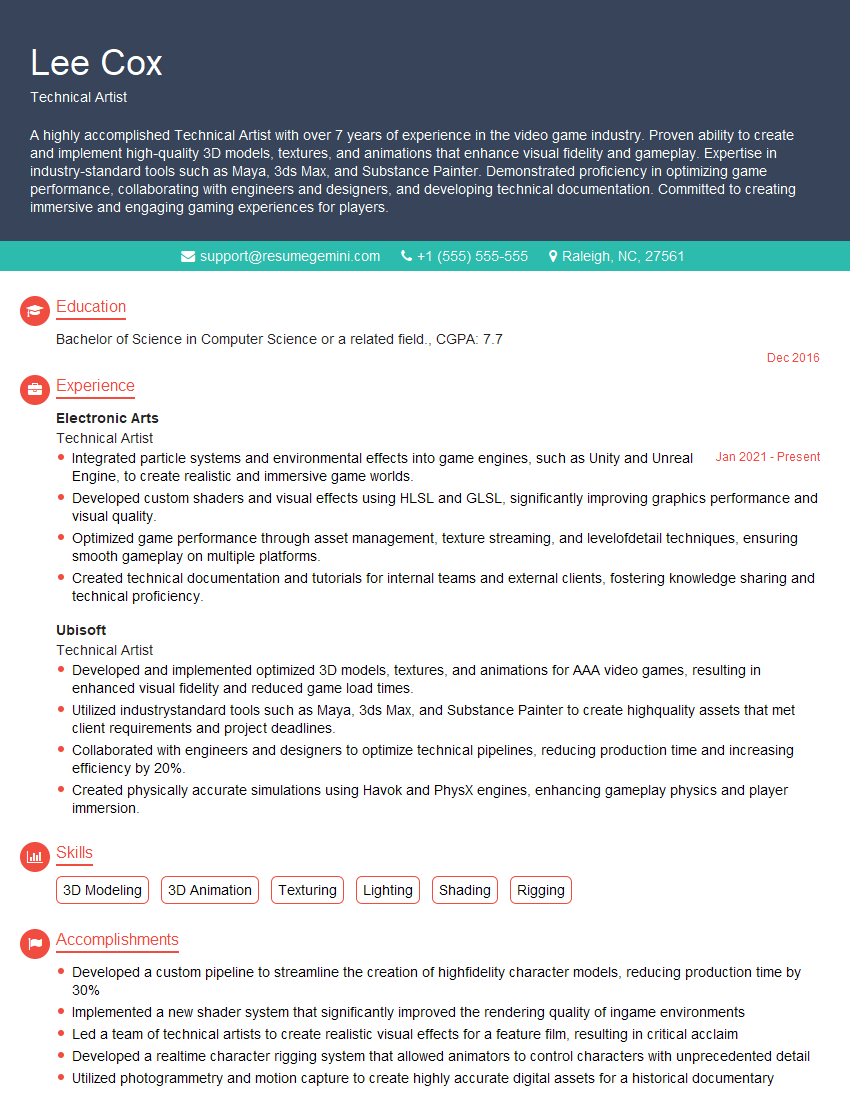

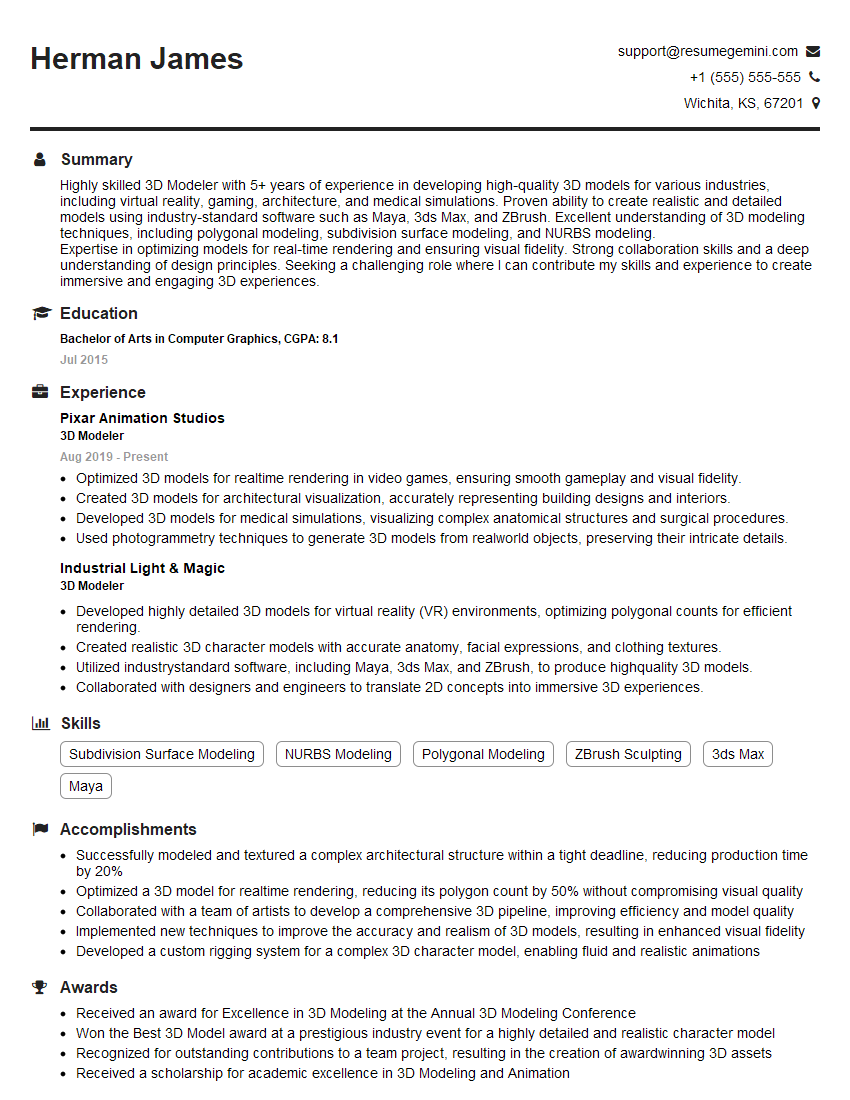

Mastering 3D modeling languages like Python and C++ significantly enhances your career prospects in game development, animation, computer-aided design (CAD), and many other fields. To stand out, create an ATS-friendly resume that highlights your skills and experience effectively. ResumeGemini is a trusted resource that can help you build a professional and impactful resume tailored to your specific skills. Examples of resumes tailored to proficiency in 3D modeling languages (Python and C++) are available to guide you. Invest time in crafting a strong resume; it’s your first impression on potential employers.
