Are you ready to stand out in your next interview? Understanding and preparing for Software Design and Architecture interview questions is a game-changer. In this blog, we’ve compiled key questions and expert advice to help you showcase your skills with confidence and precision. Let’s get started on your journey to acing the interview.

Questions Asked in Software Design and Architecture Interview

Q 1. Explain the difference between microservices and monolithic architecture.

Monolithic architecture and microservices represent two fundamentally different approaches to software design. A monolith is a single, self-contained application where all components – user interface, business logic, and data access – are built and deployed together and are often tightly coupled. Imagine a giant, complex machine where everything is interconnected. In contrast, a microservices architecture decomposes the application into small, independent services, each focusing on a specific business function. Think of it as a collection of smaller, specialized machines working together.

The key difference lies in their deployment and scalability. Monoliths are deployed as a single unit; updating any part requires redeploying the entire application. Microservices, however, are independently deployable, allowing for more frequent and targeted updates. This modularity also enhances scalability; you can scale individual services based on their specific needs, rather than scaling the entire application.

Example: A monolithic e-commerce application might handle everything from user authentication to product catalog management within a single codebase. A microservices-based equivalent would break this down into separate services such as User Service, Product Catalog Service, Order Service, etc., each with its own database and deployment pipeline.

Q 2. Describe your experience with different design patterns (e.g., Singleton, Factory, Observer).

I have extensive experience with several design patterns, including Singleton, Factory, and Observer. The Singleton pattern ensures that a class has only one instance and provides a global point of access to it. This is useful for managing resources like database connections or logging services, preventing multiple instances from conflicting. However, overuse can lead to tight coupling and make testing difficult.

The Factory pattern provides an interface for creating objects without specifying their concrete classes. This allows for flexibility in object creation, allowing the system to easily switch between different object implementations without altering the client code. It’s commonly used in scenarios where you need to create objects based on configuration or user input.

The Observer pattern defines a one-to-many dependency between objects, where a change in one object automatically notifies its dependents. Think of a stock ticker – the stock price (subject) updates, and all subscribers (observers) receive the update. This pattern is excellent for managing event-driven architectures and decoupling components.
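To make the stock ticker analogy concrete, here is a minimal sketch of the Observer pattern. The names `StockTicker` and `PriceListener` are hypothetical and chosen only for illustration; a real system would likely add error handling around each listener.

```typescript
// Hypothetical names for illustration: StockTicker is the subject,
// PriceListener is the observer callback.
type PriceListener = (symbol: string, price: number) => void;

class StockTicker {
  private listeners: PriceListener[] = [];

  // Register an observer; the returned function unsubscribes it again.
  subscribe(listener: PriceListener): () => void {
    this.listeners.push(listener);
    return () => {
      this.listeners = this.listeners.filter((l) => l !== listener);
    };
  }

  // The subject changes and notifies every registered observer.
  publish(symbol: string, price: number): void {
    this.listeners.forEach((listener) => listener(symbol, price));
  }
}

const ticker = new StockTicker();
const received: string[] = [];
const unsubscribe = ticker.subscribe((symbol, price) => {
  received.push(`${symbol}@${price}`);
});

ticker.publish("ACME", 101.5); // delivered to the subscriber
unsubscribe();
ticker.publish("ACME", 99); // not delivered: the observer was removed
```

The subject never needs to know what its observers do with an update, which is where the decoupling comes from.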

Example (Factory Pattern):

    // Common interface that every shape implements.
    interface Shape {
      draw(): void;
    }

    class Circle implements Shape {
      draw(): void {
        console.log('Drawing a circle');
      }
    }

    class Square implements Shape {
      draw(): void {
        console.log('Drawing a square');
      }
    }

    // The factory hides which concrete class gets instantiated.
    class ShapeFactory {
      getShape(shapeType: string): Shape | null {
        if (shapeType === 'CIRCLE') return new Circle();
        if (shapeType === 'SQUARE') return new Square();
        return null; // unknown shape type
      }
    }

    const factory = new ShapeFactory();
    const circle = factory.getShape('CIRCLE');
    circle?.draw(); // Output: Drawing a circle

Q 3. How do you handle database scalability in a high-traffic application?

Handling database scalability in high-traffic applications requires a multi-pronged approach. The key is to anticipate growth and design for it from the start. Simply scaling vertically (adding more resources to a single database server) has limitations. Instead, we often employ strategies like horizontal scaling (sharding, read replicas).

Sharding involves partitioning the database across multiple servers. This distributes the load and prevents a single server from becoming a bottleneck. However, careful planning is crucial to ensure data consistency and efficient query routing. For example, you might shard based on geographical location or user ID.
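As a rough illustration of query routing by user ID, the sketch below hashes a key and maps it onto one of several shards. The shard names and the `shardFor` helper are assumptions made for this example, not part of any particular database product.

```typescript
// Hypothetical shard list; a real deployment would load this from configuration.
const SHARDS: string[] = ["db-shard-0", "db-shard-1", "db-shard-2", "db-shard-3"];

// Deterministic 32-bit FNV-1a-style hash over the key's UTF-16 code units.
function hashKey(key: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < key.length; i++) {
    hash ^= key.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return hash >>> 0; // force an unsigned 32-bit result
}

// Route a user ID to a shard: the same ID always lands on the same shard.
function shardFor(userId: string): string {
  return SHARDS[hashKey(userId) % SHARDS.length];
}

console.log(shardFor("user-42"));
```

One design caveat: with plain modulo hashing, changing the number of shards remaps most keys. Schemes such as consistent hashing are commonly used to limit how much data moves when shards are added or removed.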

Read replicas are copies of the primary database that serve read queries. This offloads read traffic from the primary, improving performance. Writes still go to the primary and are replicated out, usually asynchronously, so a replica can briefly lag behind and return stale data. Reads that must see the latest write should be routed to the primary.

Caching is another essential technique. Storing frequently accessed data in a fast, in-memory cache like Redis or Memcached reduces the load on the database. We need to choose carefully what to cache, and to update or invalidate entries when the underlying data changes, so that the cache does not serve stale data.
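A common way to apply this is the cache-aside pattern: look in the cache first, fall back to the database on a miss, then store the result with an expiry. The sketch below uses an in-memory `Map` as a stand-in for Redis or Memcached; `TtlCache`, `loadUserFromDb`, and `getUser` are hypothetical names for illustration.

```typescript
interface User {
  id: string;
  name: string;
}

// Hypothetical stand-in for a slow database lookup; counts calls for illustration.
let dbReads = 0;
function loadUserFromDb(id: string): User {
  dbReads++;
  return { id, name: `user-${id}` };
}

interface CacheEntry<T> {
  value: T;
  expiresAt: number;
}

// Minimal in-memory cache with a time-to-live per entry.
class TtlCache<T> {
  private entries = new Map<string, CacheEntry<T>>();

  constructor(private ttlMs: number, private now: () => number = () => Date.now()) {}

  get(key: string): T | undefined {
    const entry = this.entries.get(key);
    if (entry === undefined) return undefined;
    if (entry.expiresAt <= this.now()) {
      this.entries.delete(key); // expired: treat as a miss
      return undefined;
    }
    return entry.value;
  }

  set(key: string, value: T): void {
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
  }

  invalidate(key: string): void {
    this.entries.delete(key);
  }
}

const userCache = new TtlCache<User>(60000);

// Cache-aside read: try the cache, fall back to the database, then populate.
function getUser(id: string): User {
  const cached = userCache.get(id);
  if (cached !== undefined) return cached;
  const user = loadUserFromDb(id);
  userCache.set(id, user);
  return user;
}

getUser("7"); // miss: reads the database
getUser("7"); // hit: served from the cache
```

On a write, the application would call `userCache.invalidate(id)` (or overwrite the entry) so that later reads do not see the old value.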

Finally, database optimization is crucial. Proper indexing, query optimization, and database tuning ensure that the database performs efficiently, even with a large data set. Regular monitoring and performance testing are also essential for identifying and resolving bottlenecks.

Q 4. What are the key considerations when designing a RESTful API?

Designing a RESTful API involves adhering to several key principles. The most important are:

- Resource-based URLs: URLs should represent resources (e.g., /users/{userId}).

- HTTP methods: Using appropriate HTTP verbs (GET, POST, PUT, DELETE) for different operations (read, create, update, delete).

- Statelessness: Each request should contain all the information necessary for processing, making the API easier to scale and maintain.

- Standard media types: Using standard formats like JSON or XML for data exchange.

- Cacheability: Employing HTTP caching mechanisms (e.g., Cache-Control and ETag headers) to improve performance.

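- Layered system: The client should not need to know whether it is talking directly to the origin server or to an intermediary such as a load balancer, proxy, or gateway. This lets you add or change those layers without changing clients.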

- Hypermedia controls (HATEOAS): While often overlooked, including hyperlinks in responses to guide clients through the API and improve discoverability.

Example: A RESTful API endpoint to retrieve a user by ID might look like this:

GET /users/123

The response would be a JSON representation of the user data, often including links to related resources (HATEOAS).
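As a sketch of what such a response could look like, the code below builds a user representation with embedded links. The `_links` field name, the `ResourceLink` shape, and the `/users/{id}/orders` path are assumptions for illustration; real APIs pick their own hypermedia format.

```typescript
// Hypothetical link shape; field names are illustrative, not a standard.
interface ResourceLink {
  href: string;
  method: "GET" | "POST" | "PUT" | "DELETE";
}

interface UserResource {
  id: number;
  name: string;
  _links: Record<string, ResourceLink>;
}

// Build the representation returned by GET /users/{id}.
function toUserResource(user: { id: number; name: string }): UserResource {
  return {
    id: user.id,
    name: user.name,
    _links: {
      self: { href: `/users/${user.id}`, method: "GET" },
      // Hypothetical related resource, shown only to illustrate discoverability.
      orders: { href: `/users/${user.id}/orders`, method: "GET" },
    },
  };
}

const userResource = toUserResource({ id: 123, name: "Ada" });
console.log(JSON.stringify(userResource, null, 2));
```

A client that follows `_links.orders.href` does not need to hard-code the URL structure, which is the main point of hypermedia controls.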

Q 5. Explain your understanding of SOLID principles.

The SOLID principles are five design principles intended to make software designs more understandable, flexible, and maintainable. They are:

- Single Responsibility Principle (SRP): A class should have only one reason to change. This promotes modularity and reduces complexity.

- Open/Closed Principle (OCP): Software entities (classes, modules, functions, etc.) should be open for extension, but closed for modification. This allows adding new functionality without altering existing code.

- Liskov Substitution Principle (LSP): Subtypes should be substitutable for their base types without altering the correctness of the program. This ensures that inheritance is used correctly and prevents unexpected behavior.

- Interface Segregation Principle (ISP): Clients should not be forced to depend upon interfaces they don’t use. This avoids creating large, bloated interfaces that clients are forced to implement.

- Dependency Inversion Principle (DIP): High-level modules should not depend on low-level modules. Both should depend on abstractions. Abstractions should not depend on details. Details should depend on abstractions. This promotes loose coupling and makes code more reusable.

Following SOLID principles results in code that is easier to understand, maintain, test, and extend over time. It’s a cornerstone of good object-oriented design.
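To illustrate one of these, the sketch below applies the Dependency Inversion Principle: the high-level `OrderNotifier` depends on a `MessageSender` abstraction rather than on a concrete sender. All class and interface names here are hypothetical.

```typescript
// Abstraction that both the high-level policy and the low-level details depend on.
interface MessageSender {
  send(to: string, body: string): void;
}

// Low-level detail: one concrete way of sending (simulated with console output).
class ConsoleEmailSender implements MessageSender {
  send(to: string, body: string): void {
    console.log(`Email to ${to}: ${body}`);
  }
}

// Another detail, useful in tests: records messages instead of sending them.
class RecordingSender implements MessageSender {
  sent: string[] = [];
  send(to: string, body: string): void {
    this.sent.push(`${to}: ${body}`);
  }
}

// High-level policy: knows only the abstraction, never a concrete sender.
class OrderNotifier {
  constructor(private sender: MessageSender) {}

  orderShipped(customer: string, orderId: number): void {
    this.sender.send(customer, `Order ${orderId} has shipped`);
  }
}

// Production-style wiring and test-style wiring use the same OrderNotifier.
new OrderNotifier(new ConsoleEmailSender()).orderShipped("ada@example.com", 42);

const recorder = new RecordingSender();
new OrderNotifier(recorder).orderShipped("ada@example.com", 42);
```

Adding an SMS sender later would mean writing a new `MessageSender` implementation without touching `OrderNotifier`, which also fits the Open/Closed Principle.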

Q 6. How do you ensure code maintainability and scalability in your designs?

Ensuring code maintainability and scalability involves several key practices:

- Modular Design: Breaking down the application into smaller, independent modules with well-defined interfaces. This makes the code easier to understand, test, and modify.

- Clean Code Practices: Writing clear, concise, and well-documented code following established coding standards. This improves readability and reduces the likelihood of errors.

- Version Control (Git): Using a robust version control system to track changes, collaborate effectively, and revert to previous versions if necessary.

- Automated Testing: Implementing comprehensive unit, integration, and end-to-end tests to catch bugs early and ensure that changes don’t introduce regressions.

- Continuous Integration/Continuous Delivery (CI/CD): Automating the build, test, and deployment process to streamline the software development lifecycle and enable frequent releases.

- Code Reviews: Having peer reviews of code before merging it into the main branch to identify potential issues and ensure code quality.

- Design Patterns: Using appropriate design patterns to address recurring design problems and improve code structure and maintainability.

By consistently applying these practices, we can build software systems that are robust, adaptable, and easy to maintain over their entire lifecycle.

Q 7. Describe your experience with different architectural styles (e.g., MVC, MVP, MVVM).

I’ve worked with various architectural styles, including MVC, MVP, and MVVM. Model-View-Controller (MVC) is a classic pattern that separates the application into three interconnected parts: the Model (data and business logic), the View (user interface), and the Controller (handling user input and updating the model and view). It’s widely used but can become complex in large applications.

Model-View-Presenter (MVP) is a variation of MVC where the Presenter acts as an intermediary between the Model and the View. The Presenter handles user interactions, updates the Model, and updates the View accordingly. This improves testability and reduces the coupling between the View and the Model.

Model-View-ViewModel (MVVM) is another variation well-suited for modern UI frameworks, particularly those employing data binding. The ViewModel acts as a bridge between the Model and the View, exposing data and commands to the View, making the view logic simpler and easier to test.

The choice of architectural style depends heavily on the specific needs of the application, the size of the development team, and the technologies being used. Each style has its strengths and weaknesses, and there are various variations and hybrid approaches.
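As a small illustration of why MVP helps testability, here is a sketch in which the Presenter talks to the View and Model only through interfaces, so it can be exercised without any real UI. `LoginView`, `UserModel`, and `LoginPresenter` are hypothetical names invented for this example.

```typescript
// The View is a passive interface; the Presenter decides what it shows.
interface LoginView {
  showError(message: string): void;
  showWelcome(userName: string): void;
}

// The Model encapsulates data and business rules.
interface UserModel {
  authenticate(userName: string, password: string): boolean;
}

class LoginPresenter {
  constructor(private view: LoginView, private model: UserModel) {}

  // Called by the View when the user submits the form.
  onSubmit(userName: string, password: string): void {
    if (userName.trim() === "") {
      this.view.showError("Username is required");
      return;
    }
    if (this.model.authenticate(userName, password)) {
      this.view.showWelcome(userName);
    } else {
      this.view.showError("Invalid credentials");
    }
  }
}

// Fake View and Model: enough to test the Presenter in isolation.
class FakeLoginView implements LoginView {
  messages: string[] = [];
  showError(message: string): void {
    this.messages.push(`error: ${message}`);
  }
  showWelcome(userName: string): void {
    this.messages.push(`welcome: ${userName}`);
  }
}

const fakeModel: UserModel = {
  authenticate: (userName, password) => userName === "ada" && password === "s3cret",
};

const fakeView = new FakeLoginView();
const presenter = new LoginPresenter(fakeView, fakeModel);
presenter.onSubmit("", "anything");
presenter.onSubmit("ada", "wrong");
presenter.onSubmit("ada", "s3cret");
```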

Q 8. How do you approach system design for high availability and fault tolerance?

Designing for high availability and fault tolerance is paramount for building robust systems. It involves anticipating potential points of failure and implementing strategies to mitigate their impact. This is achieved through a multi-layered approach.

- Redundancy: This is the cornerstone. We replicate critical components – servers, databases, network connections – across multiple locations or availability zones. If one fails, others seamlessly take over. Think of it like having backup singers in a band; if one gets sick, the show goes on.

- Load Balancing: Distributing traffic across multiple servers prevents overload on any single instance. This ensures even resource utilization and prevents cascading failures. Imagine a crowded restaurant; load balancing is like having multiple servers to ensure no single waiter is overwhelmed.

- Failover Mechanisms: These are automated processes that switch to backup systems upon detection of a failure. Heartbeat monitoring, for example, constantly checks the health of components and triggers failover if necessary. This is like having a backup generator in case of a power outage.

- Circuit Breakers: These prevent cascading failures by stopping requests to failing services. If a service is down, circuit breakers temporarily block further requests to it, preventing a wider system outage. It’s like a traffic controller diverting traffic away from a closed road.

- Health Checks: Regular health checks actively monitor the status of individual components and the system as a whole. This allows for proactive identification of potential issues before they escalate into major outages. It’s like a regular medical checkup for your system.

In practice, I’d employ technologies like Kubernetes for container orchestration, cloud-based solutions (AWS, Azure, GCP) for geographic redundancy, and robust monitoring tools (Prometheus, Grafana) for real-time system health visibility.
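The circuit breaker idea from the list above can be sketched as a small state machine. This is a simplified, synchronous illustration under assumed parameters (a failure threshold and a reset timeout); the `CircuitBreaker` class and its method names are hypothetical, and production libraries handle concurrency and metrics that are omitted here.

```typescript
type BreakerState = "closed" | "open" | "half-open";

class CircuitBreaker {
  private failures = 0;
  private state: BreakerState = "closed";
  private openedAt = 0;

  constructor(
    private failureThreshold: number,
    private resetTimeoutMs: number,
    private now: () => number = () => Date.now()
  ) {}

  // Run the operation, or fail fast with the fallback while the circuit is open.
  call<T>(operation: () => T, fallback: () => T): T {
    if (this.state === "open") {
      if (this.now() - this.openedAt < this.resetTimeoutMs) {
        return fallback(); // still open: do not touch the failing service
      }
      this.state = "half-open"; // timeout elapsed: allow one trial request
    }
    try {
      const result = operation();
      this.failures = 0;
      this.state = "closed";
      return result;
    } catch (_err) {
      this.failures++;
      if (this.state === "half-open" || this.failures >= this.failureThreshold) {
        this.state = "open";
        this.openedAt = this.now();
      }
      return fallback();
    }
  }

  currentState(): BreakerState {
    return this.state;
  }
}

// Demo with a controllable clock so the behavior is deterministic.
let clock = 0;
const breaker = new CircuitBreaker(2, 1000, () => clock);
const failing = (): string => {
  throw new Error("service down");
};
const fallback = (): string => "cached response";

breaker.call(failing, fallback); // first failure
breaker.call(failing, fallback); // second failure: the circuit opens
```

While open, calls return the fallback immediately; once the reset timeout has passed, a single trial call decides whether the circuit closes again.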

Q 9. What are your preferred tools and technologies for software design and modeling?

My toolset is adaptable to the project’s needs, but some favorites include:

- UML Modeling Tools: Enterprise Architect, Lucidchart, or draw.io for creating class diagrams, sequence diagrams, and state diagrams to visually represent system architecture and behavior. UML provides a common language for communication among team members.

- Architectural Description Languages (ADLs): While less common for smaller projects, ADLs (like ArchiMate) are invaluable for large, complex systems, offering a more formal and structured approach to architectural modeling.

- IDE Integrated Modeling: Most modern IDEs (IntelliJ, Visual Studio) have built-in diagramming capabilities that aid in visualizing code structure and relationships.

- Prototyping Tools: Figma, Adobe XD, or even simple HTML/CSS mockups for rapid prototyping and user interface design. This lets me test designs early and get valuable user feedback before committing to development.

I prioritize choosing tools that foster collaboration and allow for easy sharing and version control of design artifacts. The goal is to ensure that everyone involved understands the system design clearly.

Q 10. Explain your experience with Agile development methodologies and their impact on design.

My experience with Agile, specifically Scrum, has profoundly shaped my approach to design. The iterative nature of Agile encourages incremental design and development, allowing for continuous feedback and adaptation.

- Evolutionary Design: Instead of upfront, comprehensive design, we focus on designing just enough to get started and then iteratively refine it based on user feedback and evolving requirements. This minimizes waste and reduces the risk of building the wrong thing.

- Close Collaboration: Agile methodologies emphasize close collaboration between designers, developers, and stakeholders. This ensures the design accurately reflects user needs and is technically feasible.

- Continuous Integration/Continuous Delivery (CI/CD): Agile often involves CI/CD pipelines, which necessitate a design that is modular, testable, and easily deployable. This pushes architectural decisions toward microservices or modular monoliths.

- Adaptive Planning: Agile allows for adjustments to the design based on changing priorities or new insights. This flexibility is critical in today’s dynamic business environment.

For example, in a recent project, we initially designed a monolithic architecture. Through iterative development and user feedback, we realized a microservices architecture would better suit scalability and maintainability, so we adapted our design mid-project.

Q 11. How do you balance technical feasibility with business requirements during the design process?

Balancing technical feasibility with business requirements is a constant juggling act. It requires clear communication, compromise, and a deep understanding of both domains. I use a few key strategies:

- Prioritization: Clearly identify and prioritize business requirements. This helps focus development efforts on the most impactful features first. The Pareto principle (80/20 rule) often applies here; 80% of the value often comes from 20% of the features.

- Proof of Concept (PoC): For technically uncertain aspects, I advocate building PoCs to validate feasibility before committing significant resources. This helps avoid costly rework later.

- Technical Debt Management: Acknowledge that some compromises might be necessary. Document these as technical debt, with a plan for addressing them later. This is crucial for maintaining long-term system health.

- Trade-off Analysis: Explicitly document the trade-offs involved in each design decision, weighing the technical complexity against business benefits. This transparency ensures everyone understands the rationale behind choices.

For instance, if a business wants a specific feature requiring a complex technology, I’d present alternative solutions, comparing their technical feasibility, cost, and time-to-market, to allow for an informed decision.

Q 12. Describe your experience with different database technologies (e.g., SQL, NoSQL).

My experience spans both SQL and NoSQL databases. The choice depends heavily on the specific application’s needs.

- SQL Databases (e.g., PostgreSQL, MySQL): Ideal for applications requiring strong data consistency, ACID properties (Atomicity, Consistency, Isolation, Durability), and relational data modeling. They excel at complex queries and transactions. I’ve used them extensively for transactional systems and applications where data integrity is paramount.

- NoSQL Databases (e.g., MongoDB, Cassandra, Redis): Better suited for applications requiring high scalability, flexibility, and horizontal scaling. They handle large volumes of unstructured or semi-structured data effectively. I’ve used them for applications with high write loads, real-time data processing, and large-scale data storage.

Often, a hybrid approach is best, leveraging the strengths of both. For example, a system might use a relational database for core transactional data and a NoSQL database for user profiles or session data. The choice is always driven by the specific needs of the application, considering factors such as data volume, transaction requirements, and query patterns.

Q 13. How do you handle performance bottlenecks in a complex system?

Handling performance bottlenecks requires a systematic approach involving profiling, analysis, and optimization. My process typically involves:

- Profiling: Using profiling tools to identify the specific parts of the system consuming the most resources (CPU, memory, I/O). This provides concrete data to guide optimization efforts.

- Code Optimization: Improving code efficiency through techniques like algorithm optimization, data structure selection, and efficient use of resources. This often involves careful analysis of code performance using profiling tools.

- Database Optimization: Optimizing database queries, indexing, and schema design. Inefficient database queries can significantly impact performance.

- Caching: Implementing caching mechanisms to store frequently accessed data in memory or a dedicated cache, reducing the load on the database or other back-end services. This is akin to having a well-stocked pantry in a restaurant kitchen.

- Load Testing: Simulating real-world usage patterns to identify bottlenecks under stress. This ensures the system can handle expected traffic loads.

- Hardware Upgrades: In some cases, improving hardware (more memory, faster CPUs, SSDs) may be necessary to handle increased load.

A common example involves a slow database query. Profiling might reveal a poorly designed index or a missing index. Addressing this through database optimization can dramatically improve overall system performance.

Q 14. What are the trade-offs between different architectural choices?

Choosing the right architecture is crucial, and involves careful consideration of trade-offs. There’s no one-size-fits-all solution. Here are a few key examples:

- Monolithic vs. Microservices: Monolithic architectures are simpler to develop and deploy initially, but can become difficult to scale and maintain as complexity grows. Microservices offer better scalability and flexibility but introduce complexities in deployment, communication, and monitoring. The choice depends on the expected system scale and complexity.

- Client-Server vs. Peer-to-Peer: Client-server architectures provide centralized control and easier management, but can be susceptible to single points of failure. Peer-to-peer architectures are more resilient but harder to manage and may have scalability challenges.

- Synchronous vs. Asynchronous Communication: Synchronous communication is simpler to implement, but it can block operations and lead to performance bottlenecks. Asynchronous communication is more complex, but it enables better scalability and responsiveness.

- Relational vs. NoSQL Databases: Relational databases offer data integrity and consistency, but might not scale as well as NoSQL databases for specific use cases. NoSQL databases offer high scalability, but might compromise data consistency depending on the specific database choice.

The best choice depends on factors like scalability requirements, data consistency needs, development team expertise, and overall project constraints. A thorough evaluation of these factors guides the selection of the most appropriate architectural style.

Q 15. How do you design for security considerations at the architectural level?

Security is paramount in software architecture. It shouldn’t be an afterthought, but rather a core principle woven into every layer. My approach involves a multi-layered defense strategy, incorporating security considerations from the initial design phase.

- Principle of Least Privilege: Restricting access to data and resources to only what’s absolutely necessary. For example, a user authentication system should only grant access to the specific functionalities the user is authorized for, rather than providing blanket access.

- Input Validation and Sanitization: Preventing malicious input from compromising the system. This includes validating user inputs, sanitizing data before processing, and escaping output to prevent cross-site scripting (XSS) attacks.

- Secure Authentication and Authorization: Implementing robust methods for user authentication and authorization, such as multi-factor authentication (MFA) and OAuth 2.0, and storing passwords only as salted hashes produced by a deliberately slow algorithm such as bcrypt, scrypt, or Argon2.

- Data Encryption: Protecting data both in transit and at rest using strong encryption algorithms like AES-256. This is crucial for sensitive information like user credentials and payment details.

- Regular Security Audits and Penetration Testing: Proactively identifying vulnerabilities through regular security assessments and penetration testing. This helps uncover weaknesses before malicious actors can exploit them.

- Defense in Depth: Employing multiple layers of security controls. If one layer fails, others are in place to mitigate the risk. This includes firewalls, intrusion detection systems, and web application firewalls (WAFs).

For example, in designing an e-commerce application, I’d use HTTPS for all communication, use parameterized queries together with input validation on order forms to prevent SQL injection, and encrypt sensitive customer data stored in the database.
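As one concrete piece of the list above, the sketch below shows output escaping for text placed into HTML element content, which is the basic defense against XSS mentioned earlier. The `escapeHtml` and `renderComment` helpers are hypothetical; in practice a templating engine or UI framework with automatic escaping would usually do this for you.

```typescript
// Characters that are significant in HTML text and quoted attribute values.
const HTML_ESCAPES: Record<string, string> = {
  "&": "&amp;",
  "<": "&lt;",
  ">": "&gt;",
  '"': "&quot;",
  "'": "&#39;",
};

// Replace each significant character with its HTML entity.
function escapeHtml(untrusted: string): string {
  return untrusted.replace(/[&<>"']/g, (ch) => HTML_ESCAPES[ch]);
}

// Untrusted values are escaped at the point where they are written into markup.
function renderComment(author: string, text: string): string {
  return `<p><b>${escapeHtml(author)}</b>: ${escapeHtml(text)}</p>`;
}

console.log(renderComment("Mallory", '<script>alert("x")</script>'));
```

This only covers HTML body and quoted-attribute contexts. Values placed into URLs, inline JavaScript, or CSS need context-specific encoding instead.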

Q 16. Explain your understanding of CAP theorem.

The CAP theorem, also known as Brewer’s theorem, states that a distributed data store cannot provide all three of the following guarantees at the same time:

- Consistency: All nodes see the same data at the same time. Think of this as everyone having the same, up-to-date view of the truth.

- Availability: Every request received by a non-failing node gets a response, even if that response may not reflect the most recent write. The system is always ‘on’ and ready to serve.

- Partition tolerance: The system continues to operate even if communication between nodes is disrupted (a network partition). This is crucial in distributed systems since network outages are inevitable.

Because network partitions cannot be ruled out in a distributed system, the practical choice is between consistency and availability while a partition lasts. Which one to prioritize depends heavily on the application’s specific needs. For instance, a banking application might prioritize consistency, accepting reduced availability during a network partition to ensure data accuracy. On the other hand, a social media platform might prioritize availability, accepting eventual consistency to provide a seamless user experience, even if data might momentarily be slightly out of sync across servers.
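The trade-off can be illustrated with a deliberately simplified toy model: a replica that, while cut off from its peers, either refuses to answer (favoring consistency) or answers with data that may be stale (favoring availability). The `Replica` class and its `mode` flag are assumptions for this sketch and do not model any real database.

```typescript
type PartitionPolicy = "CP" | "AP";

type ReadResult =
  | { ok: true; value: string; maybeStale: boolean }
  | { ok: false; error: string };

class Replica {
  // Set to true while this node cannot reach the rest of the cluster.
  partitioned = false;

  constructor(private mode: PartitionPolicy, private localValue: string) {}

  read(): ReadResult {
    if (!this.partitioned) {
      return { ok: true, value: this.localValue, maybeStale: false };
    }
    if (this.mode === "CP") {
      // Consistency first: refuse rather than risk returning outdated data.
      return { ok: false, error: "unavailable: cannot confirm the latest value" };
    }
    // Availability first: answer from local state and flag it as possibly stale.
    return { ok: true, value: this.localValue, maybeStale: true };
  }
}

const cp = new Replica("CP", "balance=100");
const ap = new Replica("AP", "balance=100");
cp.partitioned = true;
ap.partitioned = true;

const cpRead = cp.read(); // rejected during the partition
const apRead = ap.read(); // answered, but possibly stale
```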

Q 17. How do you ensure data consistency in a distributed system?

Ensuring data consistency in a distributed system is a complex challenge. The approach depends on the chosen CAP trade-off. Several strategies can be employed:

- Two-phase commit (2PC): A traditional approach where a transaction coordinator ensures all participating nodes agree on the outcome before committing changes. However, 2PC suffers from performance limitations and blocking issues.

- Paxos and Raft: Consensus algorithms that help distributed nodes reach agreement on the current state of data, enhancing consistency. These are complex to implement but highly effective for critical applications requiring strong consistency.

- Versioning and Conflict Resolution: Assigning unique versions to data elements and using conflict resolution mechanisms (e.g., last-write-wins, conflict detection and manual resolution) to manage inconsistencies. This is frequently used in collaborative editing applications.

- Eventual Consistency: Data is eventually consistent, meaning inconsistencies might exist temporarily but resolve themselves over time. This approach is suitable for applications where immediate consistency isn’t crucial, such as many social media platforms.

- Data Replication and Synchronization: Employing techniques like primary-replica (master-slave) replication or multi-master replication to spread data across multiple nodes, improving availability and facilitating consistency, depending on the replication strategy chosen.

Choosing the right strategy requires a careful evaluation of the application’s requirements, considering factors like the level of consistency needed, performance requirements, and the complexity of implementation.
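As an example of the versioning and conflict resolution strategy listed above, here is a minimal last-write-wins merge. The `Versioned` shape and the `mergeLww` function are hypothetical names; the tie-break on `nodeId` is an assumption added so that every replica picks the same winner.

```typescript
// A value tagged with the time and node of its last write.
interface Versioned<T> {
  value: T;
  timestamp: number; // assumed to come from reasonably synchronized clocks
  nodeId: string;
}

// Last-write-wins: keep the newer write; break timestamp ties by node ID
// so that all replicas converge on the same result.
function mergeLww<T>(a: Versioned<T>, b: Versioned<T>): Versioned<T> {
  if (a.timestamp !== b.timestamp) {
    return a.timestamp > b.timestamp ? a : b;
  }
  return a.nodeId > b.nodeId ? a : b;
}

// Two replicas accepted different writes for the same record.
const fromNodeA: Versioned<string> = { value: "alice@old.example", timestamp: 1000, nodeId: "a" };
const fromNodeB: Versioned<string> = { value: "alice@new.example", timestamp: 1005, nodeId: "b" };

// Merging in either order yields the same winner.
const mergedOnA = mergeLww(fromNodeA, fromNodeB);
const mergedOnB = mergeLww(fromNodeB, fromNodeA);
console.log(mergedOnA.value, mergedOnB.value);
```

The simplicity has a cost: the losing write is silently discarded, and the outcome depends on clock accuracy. When that is unacceptable, conflict detection with explicit resolution is the safer choice.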

Q 18. How do you manage technical debt in a project?

Technical debt is unavoidable in software development, but uncontrolled debt can cripple a project. Managing it effectively is crucial for long-term maintainability and success. My approach involves a multi-pronged strategy:

- Identification and Prioritization: Regularly identify technical debt through code reviews, static analysis tools, and feedback from developers. Prioritize based on impact and risk, focusing on high-impact, high-risk debt first.

- Documentation: Track technical debt in a central repository, describing the debt, its impact, and a proposed plan for addressing it. This transparency improves team awareness and facilitates planning.

- Strategic Refactoring: Dedicate time for refactoring during development sprints, addressing manageable amounts of debt incrementally. Avoid large-scale refactoring unless absolutely necessary.

- Automated Testing: Maintain a robust suite of automated tests to ensure refactoring efforts don’t introduce new bugs and to provide confidence in making changes.

- Continuous Integration/Continuous Deployment (CI/CD): Employ CI/CD to automate the build, testing, and deployment process, making it easier to safely introduce changes and refactor code.

For instance, if a crucial module is poorly structured and difficult to maintain (a significant technical debt), we’d prioritize refactoring it, allocating sufficient time and resources while ensuring thorough testing to minimize disruptions.

Q 19. Describe your experience with version control systems (e.g., Git).

I have extensive experience with Git, utilizing it daily for version control. I’m proficient in branching strategies (like Gitflow or GitHub Flow), creating pull requests, resolving merge conflicts, and using Git for collaborative development. I’m comfortable using command-line Git as well as GUI clients. My workflow usually involves creating feature branches for new developments, regularly committing changes with descriptive messages, and using pull requests for code reviews before merging into the main branch.

I’ve used Git to manage projects of varying scales, from small personal projects to large enterprise applications. I understand the importance of a well-structured Git repository with clear commit messages to ensure traceability and maintainability. I’m also familiar with using Git to manage releases and rollbacks if needed.

Q 20. How do you conduct code reviews and ensure code quality?

Code reviews are a cornerstone of ensuring code quality and maintainability. My approach is collaborative and focused on both finding defects and improving coding standards. I typically follow these steps:

- Clear Review Guidelines: Establish clear guidelines for code reviews, focusing on aspects like readability, maintainability, adherence to coding standards, and security considerations.

- Focused Reviews: Reviews should be focused on specific aspects, not a complete overhaul of the code. Smaller, more frequent reviews are often more effective than large, infrequent ones.

- Constructive Feedback: Providing constructive, actionable feedback, highlighting potential problems and suggesting concrete improvements rather than just pointing out errors.

- Collaborative Approach: The review process should be collaborative, with the reviewer and author engaging in a dialogue to clarify issues and find solutions.

- Tools and Automation: Utilize code review tools like GitHub or GitLab that facilitate the review process and help automate checks (e.g., linters, static analysis).

In my experience, code reviews not only improve the quality of the code but also serve as a crucial knowledge-sharing mechanism, promoting learning and improving overall team skills.

Q 21. Explain your approach to testing and quality assurance in software design.

Testing and quality assurance (QA) are integral parts of my software design process. I employ a multi-faceted approach that combines different testing strategies:

- Unit Testing: Testing individual components or modules in isolation to ensure they function correctly. This helps catch errors early in the development cycle.

- Integration Testing: Testing the interaction between different components to ensure they work together seamlessly. This helps identify issues related to communication and data exchange.

- System Testing: Testing the entire system as a whole to verify it meets the specified requirements. This includes functional, performance, security, and usability testing.

- User Acceptance Testing (UAT): Involving end-users in the testing process to ensure the system meets their needs and expectations.

- Automated Testing: Automating as much of the testing process as possible to improve efficiency, consistency, and reduce manual effort. This typically involves using testing frameworks and CI/CD pipelines.

Choosing the appropriate testing methods and tools depends on the specific project, its complexity, and risk tolerance. I always prioritize building in testability from the start, ensuring code is modular, well-documented, and easily tested.

Q 22. How do you incorporate user feedback into the design process?

Incorporating user feedback is crucial for iterative design and building a successful product. It’s not a one-time event but a continuous process integrated throughout the design lifecycle. I typically employ a multi-faceted approach:

- Early and Frequent Feedback: I start gathering feedback early on, even during the prototyping phase. This could be through usability testing with representative users, conducting surveys, or simply observing how people interact with early mockups.

- Diverse Feedback Channels: I utilize various methods to capture feedback, including user interviews, focus groups, A/B testing, and analyzing user data from analytics dashboards. Each channel provides a unique perspective.

- Prioritization and Analysis: Not all feedback is created equal. I prioritize feedback based on its frequency, impact, and feasibility. This involves carefully analyzing the data, identifying trends, and understanding the underlying user needs.

- Iteration and Refinement: Based on the analyzed feedback, I iterate on the design, making adjustments and improvements. This iterative process allows for continuous refinement and optimization of the product.

- Transparency and Communication: I maintain open communication with the development team and stakeholders, sharing user feedback and explaining design decisions. This ensures everyone is aligned and working towards a common goal.

For example, in a recent project, user feedback revealed that a key feature was difficult to find. By analyzing user interactions and conducting interviews, we identified the problem and redesigned the navigation, resulting in a significant improvement in user satisfaction.

Q 23. Describe your experience with cloud platforms (e.g., AWS, Azure, GCP).

I have extensive experience with the major cloud platforms – AWS, Azure, and GCP – having used them on various projects. My expertise spans several service categories, including compute (EC2, Azure VMs, Compute Engine), storage (S3, Azure Blob Storage, Cloud Storage), databases (RDS, Azure SQL Database, Cloud SQL), and serverless computing (Lambda, Azure Functions, Cloud Functions).

On one project, we chose AWS for its mature ecosystem and wide range of services. We leveraged EC2 for our application servers, S3 for static assets, and RDS for our relational database. Another project required the flexibility of Azure’s hybrid cloud capabilities, allowing us to seamlessly integrate our on-premises infrastructure with cloud resources.

My experience goes beyond just using individual services; I’m proficient in designing cloud-native architectures, understanding the nuances of each platform’s pricing models, and optimizing for cost-effectiveness and performance. I’m also familiar with security best practices specific to each cloud provider.

Q 24. How do you design for scalability in a cloud environment?

Designing for scalability in a cloud environment involves anticipating growth and ensuring your application can handle increasing user demand and data volume without performance degradation. Key strategies include:

- Horizontal Scaling: Instead of scaling vertically (increasing the resources of a single server), we scale horizontally, adding more instances of the application to distribute the load. In the cloud this is usually the more flexible option: capacity can grow past the limits of a single machine, instances can be added or removed as demand changes, and losing one instance does not take the whole service down.

- Microservices Architecture: Breaking down the application into smaller, independent services allows for individual scaling based on the specific needs of each service. If one service experiences high demand, only that service needs to be scaled.

- Load Balancing: Distributing incoming traffic across multiple instances of your application using a load balancer ensures no single instance is overwhelmed. This can be done using cloud-provided load balancers or custom solutions.

- Stateless Applications: Designing applications to be stateless simplifies scaling because instances can be added or removed without affecting the application’s state. Session data is typically stored externally, such as in a database or a distributed cache.

- Caching: Implementing caching mechanisms, such as Redis or Memcached, reduces the load on databases and other backend systems by storing frequently accessed data in memory. This significantly improves response times and scalability.

- Database Scaling: Choosing the right database and scaling it appropriately is vital. Options include using cloud-managed databases that offer automatic scaling features or employing techniques like sharding to distribute data across multiple databases.

For example, I designed a system that used auto-scaling groups in AWS to dynamically adjust the number of EC2 instances based on real-time demand. This ensured that the application remained responsive even during peak hours.
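The caching strategy above is often implemented as a cache-aside read path. The following is a minimal sketch of that idea, not a production design: `TTLCache` is a small in-process stand-in for a real cache such as Redis or Memcached, and `get_product` and `load_from_db` are hypothetical names used only for illustration.

```python
import time


class TTLCache:
    """Tiny in-process stand-in for a cache such as Redis or Memcached."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries = {}   # key -> (value, expiry time)

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            # Entry is stale: drop it and report a miss.
            del self._entries[key]
            return None
        return value

    def set(self, key, value):
        self._entries[key] = (value, self._clock() + self._ttl)


def get_product(product_id, cache, load_from_db):
    """Cache-aside read: try the cache, fall back to the database, then populate the cache.

    A None result is treated as a miss, so missing products are not cached here.
    """
    cached = cache.get(product_id)
    if cached is not None:
        return cached
    product = load_from_db(product_id)
    cache.set(product_id, product)
    return product
```

Because the cache sits outside the request handler, the handler itself stays stateless, and repeated reads within the TTL never reach the database.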

Q 25. What are the security implications of cloud-based architectures?

Cloud-based architectures introduce unique security considerations. The shared responsibility model is key: the cloud provider is responsible for the security *of* the cloud (physical infrastructure, network), while the customer is responsible for security *in* the cloud (data, applications, configurations).

- Data Encryption: Encrypting data both in transit (using TLS, for example HTTPS) and at rest (using encryption services offered by the cloud provider) is paramount.

- Access Control: Implementing strong access control mechanisms, such as IAM roles in AWS or Azure RBAC, limits access to resources only to authorized users and applications.

- Network Security: Utilizing virtual private clouds (VPCs), security groups, and network firewalls to restrict network access and prevent unauthorized connections is crucial.

- Vulnerability Management: Regularly scanning for and addressing vulnerabilities in the application and infrastructure is essential. Using automated vulnerability scanning tools is highly recommended.

- Logging and Monitoring: Implementing comprehensive logging and monitoring systems allows for detecting and responding to security incidents promptly.

- Compliance: Adhering to relevant security and compliance standards (e.g., ISO 27001, SOC 2) is often a necessity.

Failing to address these security implications can lead to data breaches, system compromises, and reputational damage. A robust security strategy needs to be incorporated from the very beginning of the design phase.

Q 26. Explain your experience with containerization technologies (e.g., Docker, Kubernetes).

I have significant experience with containerization technologies like Docker and Kubernetes. Docker provides a consistent way to package and run applications in containers, isolating them from the underlying infrastructure. Kubernetes is an orchestration platform that automates the deployment, scaling, and management of containerized applications across a cluster of machines.

I’ve used Docker to create lightweight, portable containers for various applications, simplifying deployment and improving consistency across different environments (development, testing, production). My experience with Kubernetes includes designing and managing Kubernetes clusters, defining deployments and services, managing configurations, and implementing monitoring and logging. I’m also familiar with concepts like pod scheduling, namespaces, and secrets management.

In one project, we used Docker and Kubernetes to deploy a microservices architecture. The containers were built using Dockerfiles, and Kubernetes managed the deployment, scaling, and health of each microservice, greatly simplifying the deployment process and improving resilience. This allowed for independent scaling of individual services based on demand, enhancing the overall system efficiency.

Q 27. How would you design a system to handle a sudden surge in users?

Handling a sudden surge in users requires a system designed for elasticity and resilience. The key is to anticipate potential bottlenecks and employ strategies to mitigate their impact. My approach involves:

- Horizontal Scaling: Leverage auto-scaling capabilities of cloud platforms (like AWS Auto Scaling or Azure Autoscale) to automatically add more instances of your application when demand increases.

- Caching: Use a distributed caching system (like Redis or Memcached) to store frequently accessed data in memory, reducing the load on databases and improving response times.

- Queueing: Implement a message queue (like RabbitMQ or Kafka) to handle incoming requests during peak periods. This decouples the application from the immediate processing of requests, preventing overload.

- Load Balancing: Use a robust load balancer to distribute traffic efficiently across multiple instances of your application.

- Database Optimization: Ensure your database is properly configured for high availability and performance. Consider using read replicas to handle read-heavy workloads.

- Circuit Breakers: Implement circuit breakers to prevent cascading failures. If a service becomes unresponsive, the circuit breaker will prevent further requests from being sent to it, allowing other services to continue functioning.

- Rate Limiting: Implement rate limiting to prevent abuse and protect your system from denial-of-service attacks.
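Rate limiting can be implemented in several ways; one common choice is a token bucket, sketched below under simple assumptions (a single process, one bucket per client, no locking). The `TokenBucket` class and its parameter names are illustrative and not taken from any particular library.

```python
import time


class TokenBucket:
    """Allows bursts up to `capacity` requests, refilled at a steady rate."""

    def __init__(self, capacity, refill_per_second, clock=time.monotonic):
        self._capacity = capacity
        self._refill_per_second = refill_per_second
        self._clock = clock  # injectable so tests do not need to sleep
        self._tokens = float(capacity)
        self._last_refill = clock()

    def allow(self):
        """Return True if the request may proceed, False if it should be rejected."""
        now = self._clock()
        elapsed = now - self._last_refill
        # Add tokens for the time that has passed, never exceeding capacity.
        self._tokens = min(self._capacity, self._tokens + elapsed * self._refill_per_second)
        self._last_refill = now
        if self._tokens >= 1:
            self._tokens -= 1
            return True
        return False
```

A request handler would call `allow()` per client and answer with HTTP 429 when it returns `False`. In a multi-instance deployment the bucket state would need to live in a shared store rather than in process memory.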

A combination of these strategies ensures the system remains responsive and available even during unexpected traffic spikes. For example, using a combination of auto-scaling, caching, and queueing, I helped a client’s e-commerce platform successfully handle a Black Friday traffic surge without any significant performance degradation.
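As one illustration of these strategies, the circuit breaker idea can be sketched in a few lines. This is a simplified, single-threaded version written for this article; `CircuitBreaker`, `CircuitOpenError`, and the threshold and timeout parameters are hypothetical names, and production libraries typically add per-endpoint state, metrics, and thread safety.

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold, reset_timeout, clock=time.monotonic):
        self._failure_threshold = failure_threshold
        self._reset_timeout = reset_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self._reset_timeout:
                # Still open: fail fast without touching the downstream service.
                raise CircuitOpenError("circuit open; failing fast")
            # Timeout elapsed: half-open, let one trial call through.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._opened_at is not None or self._failures >= self._failure_threshold:
                # Open the circuit, or re-open it after a failed trial call.
                self._opened_at = self._clock()
            raise
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

Wrapping each downstream call in `breaker.call(...)` means that once the failure threshold is reached, callers get an immediate `CircuitOpenError` instead of waiting on timeouts, which gives the struggling service room to recover.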

Key Topics to Learn for Software Design and Architecture Interview

- Design Patterns: Understand common design patterns (e.g., Singleton, Factory, Observer) and their practical applications in solving recurring design problems. Consider when to apply each pattern and the trade-offs involved.

- Microservices Architecture: Explore the principles of microservices, including service discovery, communication patterns (REST, gRPC), and deployment strategies. Be prepared to discuss the advantages and disadvantages compared to monolithic architectures.

- Databases and Data Modeling: Master relational and NoSQL databases. Practice designing efficient database schemas and understand normalization principles. Discuss your experience with different database technologies and their suitability for various applications.

- API Design and RESTful Principles: Understand the core principles of RESTful API design, including resource representation, HTTP methods, and status codes. Be prepared to discuss API design best practices and security considerations.

- Scalability and Performance: Discuss strategies for designing scalable and high-performing systems. This includes topics like load balancing, caching, and database optimization. Be ready to analyze potential bottlenecks and propose solutions.

- Software Architecture Styles: Familiarize yourself with different architectural styles, such as layered, event-driven, and message-queue-based architectures. Understand their strengths, weaknesses, and when each is most appropriate.

- Testing and Deployment: Discuss your experience with various testing methodologies (unit, integration, system) and deployment strategies (CI/CD). Understanding the importance of robust testing and efficient deployment is crucial.

- Security Considerations: Demonstrate an understanding of security best practices throughout the software development lifecycle, including authentication, authorization, and data protection.

Next Steps

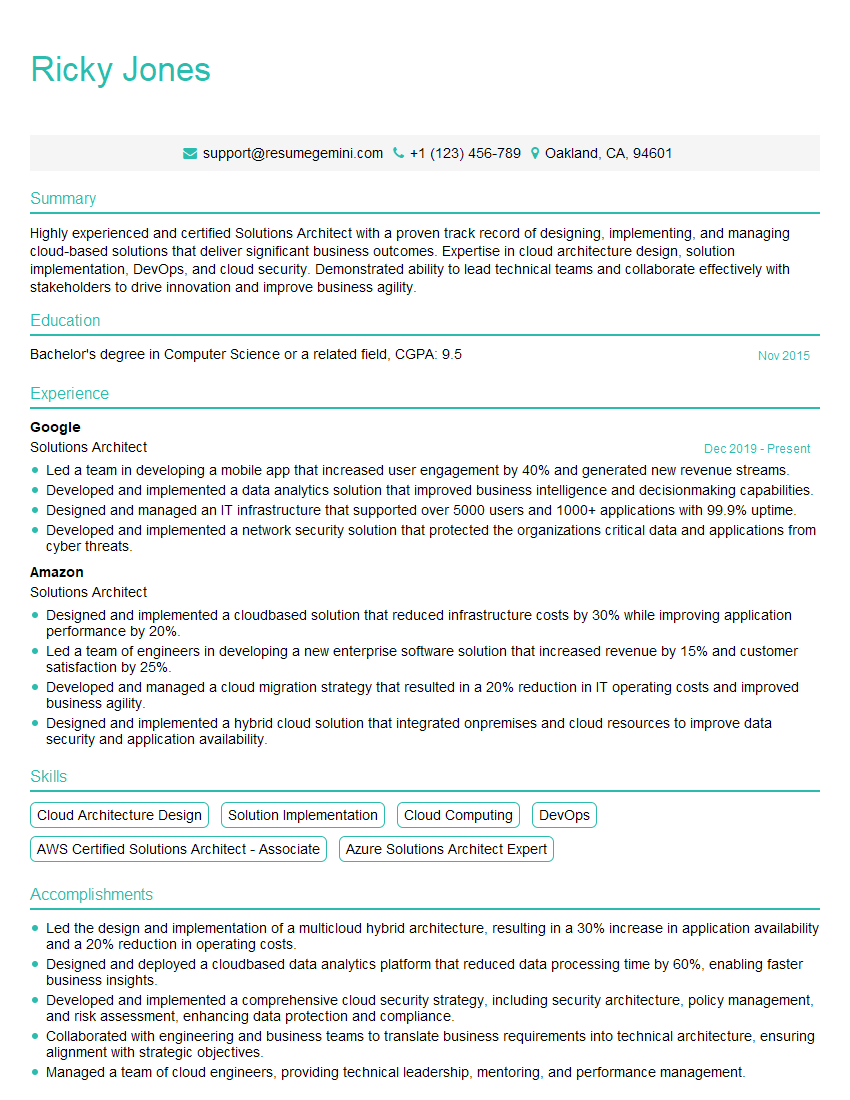

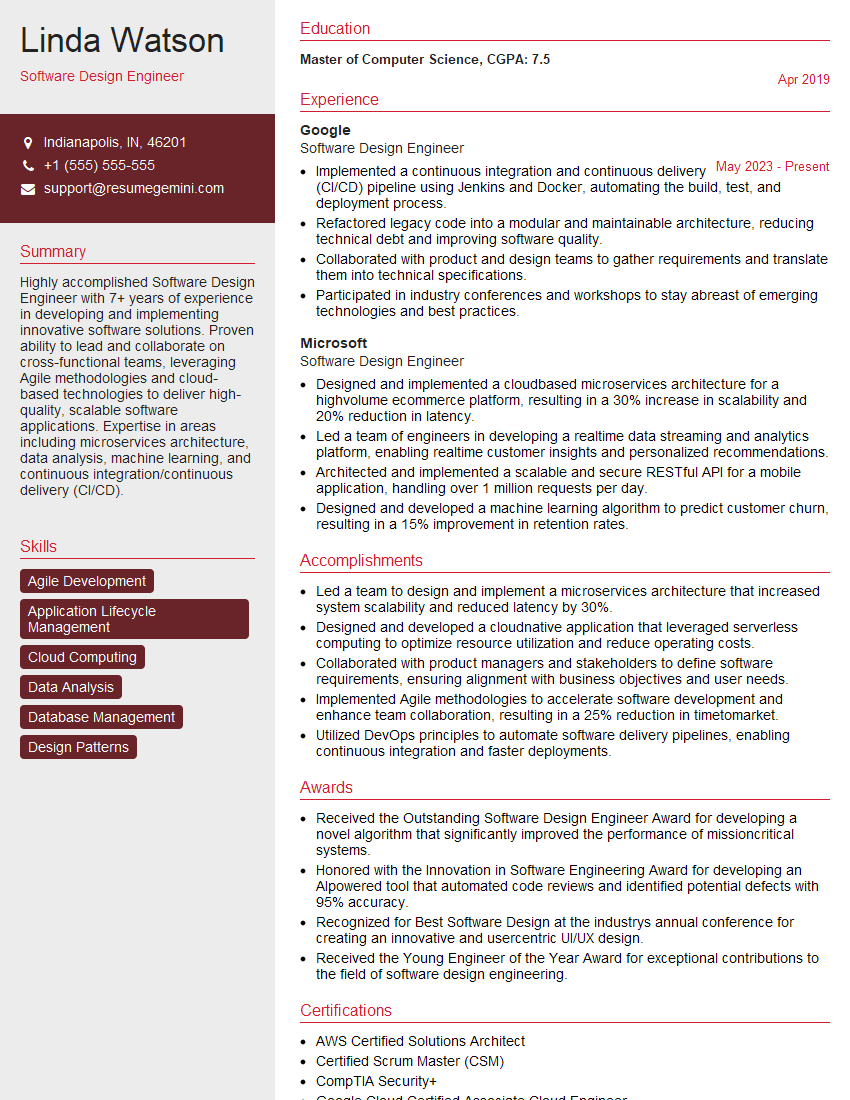

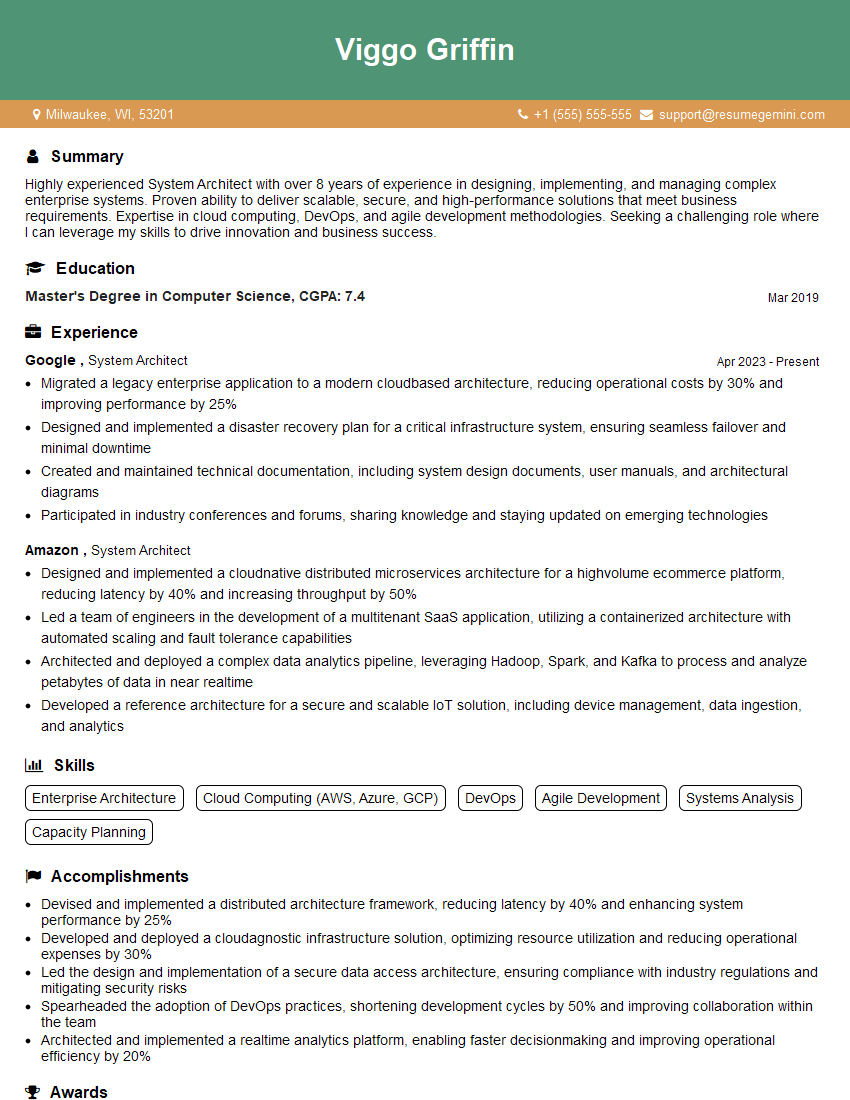

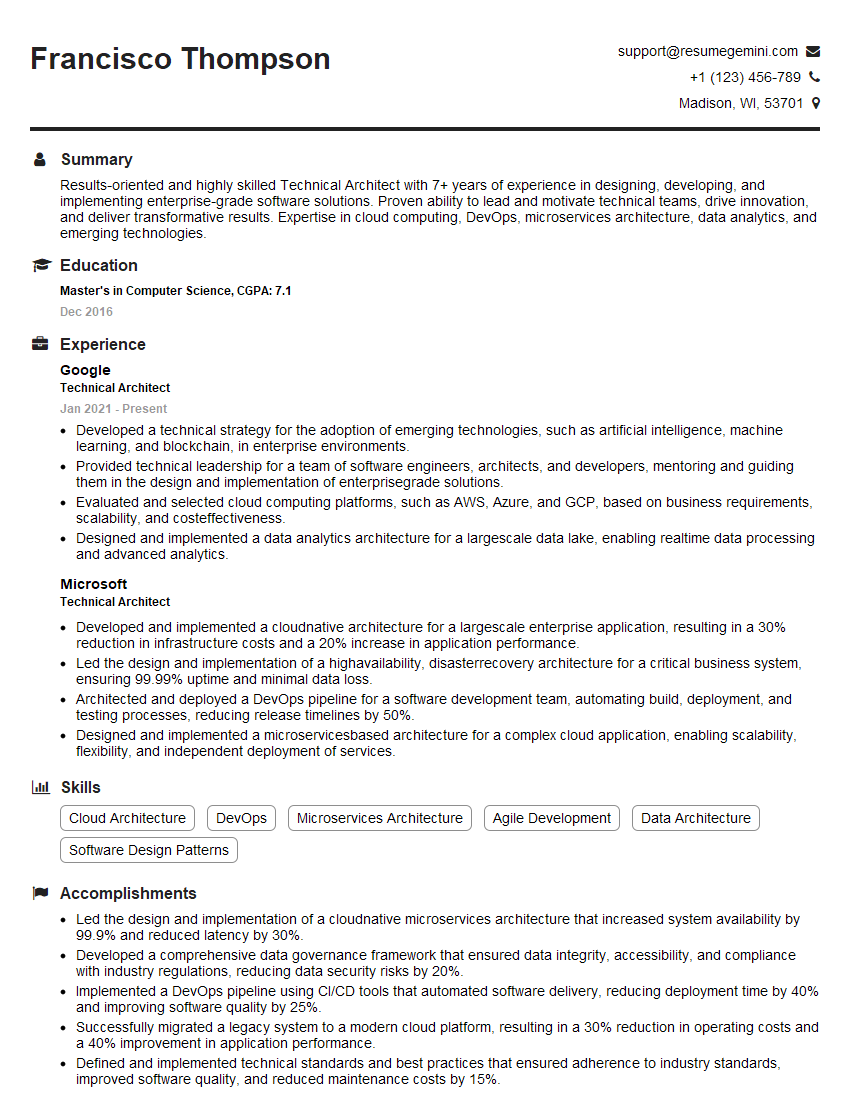

Mastering Software Design and Architecture is pivotal for advancing your career in the tech industry. It demonstrates a deep understanding of building robust, scalable, and maintainable systems – highly sought-after skills in today’s competitive market. To maximize your job prospects, it’s crucial to create a compelling and ATS-friendly resume that effectively showcases your expertise. ResumeGemini is a trusted resource to help you build a professional and impactful resume. We offer examples of resumes tailored to Software Design and Architecture roles to guide you through the process. Invest time in crafting a resume that highlights your skills and experience; it’s your first impression with potential employers.
