Feeling uncertain about what to expect in your upcoming interview? We’ve got you covered! This blog highlights the most important Accuracy Control & Verification interview questions and provides actionable advice to help you stand out as the ideal candidate. Let’s pave the way for your success.

Questions Asked in Accuracy Control & Verification Interview

Q 1. Explain your understanding of accuracy control methodologies.

Accuracy control methodologies are the systematic processes and techniques used to ensure data integrity and reliability. Think of it as building a strong foundation for any data-driven decision. These methodologies encompass various stages, from data acquisition and entry to analysis and reporting, all aimed at minimizing errors and maximizing the trustworthiness of the information.

- Data Validation Rules: These are predefined rules that check data against specific criteria (e.g., range checks, format checks, data type checks). For instance, a rule could ensure that an age field only contains positive integers.

- Data Cleansing: This involves identifying and correcting inconsistencies, errors, and inaccuracies in the data. This could be as simple as correcting a misspelled name or as complex as handling missing values using statistical imputation techniques.

- Source Verification: This is the process of confirming the authenticity and reliability of data sources. Imagine verifying the source of a financial report before making investment decisions – cross-referencing with other sources adds confidence.

- Regular Audits: Periodic reviews of data accuracy and control procedures are crucial. These audits help identify weaknesses and potential improvements in the system.

For example, in a clinical trial, accuracy control ensures that patient data is accurately recorded, reducing the risk of misleading results impacting treatment decisions.

Q 2. Describe your experience with data validation techniques.

My experience with data validation techniques is extensive. I’ve employed a wide range of methods, adapting my approach based on the specific data context and the potential for errors. Effective data validation is like a multi-layered security system, each layer providing an additional check.

- Range Checks: I verify that numerical data falls within acceptable limits. For example, a body temperature reading should fall within a biologically plausible range.

- Format Checks: I ensure data adheres to specified formats (e.g., date, phone number). A date should be in YYYY-MM-DD format to avoid inconsistencies.

- Data Type Checks: I verify data aligns with its intended type (integer, string, etc.). Trying to perform arithmetic operations on a text field will lead to errors.

- Cross-field Validation: This involves checking consistency across different data fields. For example, verifying that the sum of individual line items matches the total in an invoice.

- Consistency Checks: I compare data against previously validated data or reference data to identify inconsistencies.

- Lookup Tables: I utilize lookup tables to verify the existence of values in predefined sets. Think of a country code lookup table ensuring only valid country codes are entered.

In a recent project involving customer data, I implemented cross-field validation to ensure consistency between customer addresses recorded in different databases, significantly improving data accuracy and reducing duplication.
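
As a rough illustration of how several of these checks might look in code, here is a minimal Python sketch. The record layout, the field names (`age`, `signup_date`, `country`, `line_items`, `total`), and the `VALID_COUNTRIES` set are assumptions invented for this example, not part of any particular system.

```python
import re
from datetime import datetime

# Hypothetical lookup table; a real one would come from reference data.
VALID_COUNTRIES = {"US", "GB", "DE", "IN"}


def validate_record(record):
    """Return a list of validation error messages for one record (a dict)."""
    errors = []

    # Data type check, then range check: age must be a plausible integer.
    age = record.get("age")
    if not isinstance(age, int) or isinstance(age, bool):
        errors.append("age: not an integer")
    elif not 0 < age <= 120:
        errors.append("age: out of range")

    # Format check: strictly YYYY-MM-DD, and a real calendar date.
    date_text = str(record.get("signup_date"))
    try:
        if not re.fullmatch(r"\d{4}-\d{2}-\d{2}", date_text):
            raise ValueError("wrong shape")
        datetime.strptime(date_text, "%Y-%m-%d")
    except ValueError:
        errors.append("signup_date: not YYYY-MM-DD")

    # Lookup table check: country code must be in the predefined set.
    if record.get("country") not in VALID_COUNTRIES:
        errors.append("country: unknown code")

    # Cross-field check: line items (integer cents) must sum to the total.
    if sum(record.get("line_items", [])) != record.get("total"):
        errors.append("total: does not match line items")

    return errors
```

Returning a list of messages rather than raising on the first failure lets one pass report every problem in a record, which tends to be more useful when reviewing a batch.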

Q 3. How do you identify and mitigate risks related to data inaccuracy?

Identifying and mitigating risks related to data inaccuracy is a proactive process. It’s like preventing a house fire before it starts—better to be prepared than to deal with the aftermath.

- Risk Assessment: I begin by identifying potential sources of error. This could involve analyzing data collection methods, data entry processes, and the reliability of data sources.

- Data Profiling: I analyze data characteristics to identify potential issues such as missing values, outliers, and inconsistencies. This gives me a clearer picture of potential problems.

- Error Detection Mechanisms: I build in automated checks and alerts to flag potential errors early in the data pipeline. Imagine an alert system notifying you immediately if a value falls outside an acceptable range.

- Data Governance Policies: I develop and implement clear policies that define data quality standards and responsibilities. These policies provide structure and accountability.

- Error Handling Procedures: I define clear procedures for handling and correcting errors, including tracking, documentation, and escalation protocols.

In a financial modeling scenario, failing to identify and correct data inaccuracies can lead to incorrect financial projections, potentially resulting in significant losses. By implementing robust risk mitigation strategies, such as cross-checking financial data from multiple reliable sources, we can reduce this risk.

Q 4. What are the key performance indicators (KPIs) you use to measure accuracy?

Key Performance Indicators (KPIs) are essential for measuring accuracy and demonstrating continuous improvement. They provide objective measures that allow us to assess the effectiveness of our accuracy control efforts.

- Data Accuracy Rate: This measures the percentage of data points that are accurate and free from errors. A higher rate indicates better data quality.

- Completeness Rate: This measures the percentage of data fields that are filled. Missing data can lead to inaccurate conclusions.

- Error Rate: This measures the percentage of data points containing errors. A lower rate is always the goal.

- Timeliness of Data Correction: This measures how quickly errors are identified and corrected. Faster correction reduces the impact of inaccurate data.

- Number of Data Errors Identified in Audits: This tracks the effectiveness of our internal audits in discovering and addressing errors.

For example, in a customer relationship management (CRM) system, a high data accuracy rate is crucial for successful marketing campaigns and personalized customer service. Tracking the error rate over time helps identify trends and focus improvement efforts.
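
To make the first three KPIs concrete, the sketch below computes them for a list of records. It is only an illustration: the function name `quality_kpis`, the per-record definition of accuracy, and the caller-supplied `is_accurate` check are assumptions made for this example.

```python
def quality_kpis(records, required_fields, is_accurate):
    """Compute simple data quality KPIs for a list of dict records.

    `is_accurate` is a caller-supplied (hypothetical) function that decides
    whether a single record counts as accurate.
    """
    if not records or not required_fields:
        raise ValueError("need at least one record and one required field")

    # Completeness: share of required fields that are actually filled in.
    filled = sum(
        1
        for record in records
        for field in required_fields
        if record.get(field) not in (None, "")
    )
    completeness_rate = filled / (len(records) * len(required_fields))

    # Accuracy and error rate are complements of each other.
    accurate = sum(1 for record in records if is_accurate(record))
    accuracy_rate = accurate / len(records)

    return {
        "accuracy_rate": accuracy_rate,
        "error_rate": 1 - accuracy_rate,
        "completeness_rate": completeness_rate,
    }
```

Computing the same figures on a schedule and storing them with a timestamp is one simple way to track the trend over time.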

Q 5. Explain your experience with different types of verification methods.

Verification methods ensure that the data is accurate and reliable. These methods provide independent confirmation of the data’s validity. Think of it like getting a second opinion from a trusted expert.

- Manual Verification: This involves manually reviewing and checking data for accuracy. While time-consuming, it can be effective for smaller datasets or critical data points.

- Automated Verification: This uses software tools and algorithms to automatically check data for accuracy. This is particularly useful for large datasets where manual verification is impractical.

- Cross-Referencing: This involves comparing data from multiple sources to verify consistency, for example checking the same information across multiple databases.

- Reconciliation: This involves comparing data against a known reliable source, such as a bank statement or official record.

- Statistical Sampling: This involves selecting a representative sample of data for verification. This allows for efficient verification of large datasets.

In a financial auditing context, using reconciliation methods to compare reported transactions against bank statements is a cornerstone of accuracy verification, helping to uncover potential discrepancies and fraud.
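
A reconciliation of this kind can be sketched as a comparison of two keyed datasets. The example below is a simplified illustration that assumes each side is a mapping from a transaction ID to an amount in integer cents; the function name `reconcile` and that data shape are assumptions for the example.

```python
def reconcile(ledger, statement):
    """Compare two {transaction_id: amount_in_cents} mappings.

    Returns the IDs missing on either side and the IDs whose amounts differ.
    """
    ledger_ids, statement_ids = set(ledger), set(statement)
    return {
        "missing_from_statement": sorted(ledger_ids - statement_ids),
        "missing_from_ledger": sorted(statement_ids - ledger_ids),
        "amount_mismatch": sorted(
            txn for txn in ledger_ids & statement_ids
            if ledger[txn] != statement[txn]
        ),
    }
```

Storing amounts as integer cents avoids floating-point rounding noise that would otherwise show up as false mismatches.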

Q 6. How do you handle discrepancies in data sources?

Handling discrepancies in data sources requires a systematic approach. It’s like solving a detective mystery – you need to carefully gather clues and determine the most reliable source.

- Identify the Source of Discrepancy: The first step is to pinpoint the exact location and nature of the discrepancy. Is it a formatting issue, a data entry error, or a genuine difference in data definition?

- Investigate the Discrepancy: Once identified, investigate the cause. Were there data entry errors? Are the data definitions different? Are there inconsistencies in measurement methodologies?

- Prioritize Data Sources: Determine which data source is more reliable based on factors like data source authority, data quality, and known error rates, and treat that source as the reference when values conflict.

- Resolve the Discrepancy: Once the source and cause are understood, apply appropriate corrections. This might involve data cleansing, updating the less reliable source, or flagging the discrepancy for further review.

- Document the Resolution: Maintain a clear record of the identified discrepancy, the investigation process, and the resolution implemented. This aids future analysis.

For example, in supply chain management, discrepancies between inventory records from different warehouses might be resolved by a physical inventory check, leading to a more accurate representation of stock levels and optimized supply decisions.

Q 7. Describe your experience in implementing accuracy control procedures.

Implementing accuracy control procedures requires a structured and phased approach. It is like building a sturdy house – you need a strong foundation, well-defined plans, and regular maintenance.

- Needs Assessment: Define the specific accuracy requirements for the data and the potential consequences of inaccuracies. This is the first crucial step before building your system.

- Procedure Design: Develop clear and comprehensive procedures for data entry, validation, verification, and correction. Detailed instructions help ensure consistency.

- System Implementation: Implement the procedures using appropriate tools and technologies. Automated systems help streamline processes.

- Training: Train personnel on the new procedures and systems. Well-trained staff are essential for success.

- Monitoring and Evaluation: Establish a system for monitoring data accuracy and evaluating the effectiveness of the control procedures. Regular review and adjustments are key.

- Continuous Improvement: Regularly review and improve the accuracy control procedures based on monitoring and evaluation results. The systems are not static; they adapt over time.

In a manufacturing environment, implementing robust accuracy control procedures for production data ensures that production targets are met and that the quality of manufactured goods is maintained.

Q 8. How do you ensure data integrity throughout a process?

Ensuring data integrity throughout a process is paramount for reliable results. It involves implementing a robust system of checks and balances at each stage, from data acquisition to analysis and reporting. Think of it like building a strong bridge – each component needs to be sound to prevent collapse.

- Data Source Verification: We start by validating the credibility and reliability of the source. This includes verifying data provenance, checking for known biases, and confirming the accuracy of data collection methods.

- Data Validation Rules: During data entry or import, we implement strict validation rules. These include data type checks (ensuring a date field only contains valid dates), range checks (limiting values to a reasonable range), and consistency checks (comparing data across different fields for internal coherence). For example, a rule might check that an age value is positive.

- Data Transformation & Cleaning: Data cleaning addresses inconsistencies, handles missing values (using imputation techniques like mean/median substitution or more advanced methods), and standardizes data formats. We document each transformation step meticulously to ensure traceability.

- Version Control & Audit Trails: Implementing version control for datasets and maintaining comprehensive audit trails allows us to track changes, identify the source of errors, and easily revert to previous versions if necessary.

- Regular Data Backups: Robust backup strategies protect against data loss and ensure business continuity. This includes regularly backing up both the raw data and processed data to separate, secure locations.

In a recent project involving customer database analysis, we uncovered inconsistencies in address data due to varying input formats. Implementing a standardized format and data validation checks at the entry point significantly reduced errors and improved data quality.
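
To illustrate how a cleaning step and its documentation can travel together, here is a minimal sketch of median imputation that records what it changed. The function name `impute_median` and the shape of the log entries are assumptions made for this example, not a standard format.

```python
from statistics import median


def impute_median(values, log):
    """Replace None entries with the median of the observed values.

    Appends a description of the change to `log` so the step stays traceable.
    """
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("cannot impute: no observed values")

    fill_value = median(observed)
    filled_rows = [i for i, v in enumerate(values) if v is None]

    # Record what was done and where, so the transformation can be audited.
    log.append(
        {"step": "median_imputation", "fill_value": fill_value, "rows": filled_rows}
    )
    return [fill_value if v is None else v for v in values]
```

Because the function returns a new list and leaves the input untouched, the raw data remains available for comparison or rollback.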

Q 9. What is your experience with error detection and correction techniques?

My experience with error detection and correction techniques spans various methods, ranging from simple visual inspections to sophisticated statistical algorithms. It’s like being a detective, searching for clues to pinpoint inaccuracies.

- Visual Inspection & Data Profiling: This initial step helps to identify obvious errors and gain insights into data distribution, revealing potential outliers or anomalies. For example, using histograms to visualize data distribution and identify unusual peaks.

- Data Consistency Checks: Comparing data across different sources or fields helps identify discrepancies. For instance, checking if a customer’s age matches the birthdate provided.

- Statistical Process Control (SPC): SPC tools such as control charts help monitor the stability of a process and detect shifts in the mean or variance, signifying potential errors. I’ll elaborate on SPC in a later answer.

- Error Correction Techniques: After error detection, we apply appropriate correction techniques such as data imputation for missing values, outlier treatment (capping, winsorizing, or removal), and data transformation (log transformation, standardization).

- Root Cause Analysis: It’s crucial to understand the underlying cause of errors. This often involves analyzing the process itself, identifying weaknesses in procedures or data acquisition methods.

In one project, we used SPC charts to monitor the accuracy of a production process. The charts highlighted a period of increased variability, leading us to investigate and identify a faulty machine component as the root cause.
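
As one example of the outlier treatment mentioned above, the sketch below flags values outside the interquartile-range (IQR) fences and caps them at the fence. The 1.5 multiplier is a common convention rather than a rule, and the function name `cap_outliers` is an assumption for this example.

```python
from statistics import quantiles


def cap_outliers(values, k=1.5):
    """Cap values lying outside the IQR fences; also report their positions.

    Returns (capped_values, outlier_indices). The input list is not modified.
    """
    q1, _, q3 = quantiles(values, n=4)  # quartiles of the data
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr

    outlier_indices = [i for i, v in enumerate(values) if v < low or v > high]
    capped = [min(max(v, low), high) for v in values]
    return capped, outlier_indices
```

Reporting the affected positions alongside the capped values keeps the correction visible, so the flagged points can still be investigated for a root cause.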

Q 10. How do you prioritize accuracy control tasks?

Prioritizing accuracy control tasks requires a risk-based approach. We consider the potential impact of errors on business decisions and downstream processes. It’s about focusing on the ‘highest-value’ data.

- Risk Assessment: We assess the potential consequences of inaccurate data in different areas. Data critical for financial reporting or regulatory compliance would naturally receive higher priority.

- Data Criticality: We analyze the importance of each data element within the context of the overall project or business processes. Essential data receives more scrutiny.

- Error Probability: Data sources or processes with a higher historical error rate are prioritized for more rigorous accuracy checks.

- Cost-Benefit Analysis: Balancing the cost of implementing accuracy controls with the potential cost of errors is critical. We aim for an optimal balance.

Imagine a scenario where we’re analyzing sales data. Data related to revenue figures would naturally receive a higher priority for accuracy checks than data on customer preferences, as revenue errors have more direct financial implications.
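
One simple way to turn this into a ranking is to score each dataset by impact multiplied by historical error rate. The sketch below is only an illustration: the 1 to 5 impact scale, the field names, and the scoring formula are assumptions for this example, and a real assessment would likely weigh more factors.

```python
def prioritize(datasets):
    """Order datasets by a simple risk score: impact x historical error rate.

    Each dataset is a dict with hypothetical keys `name`, `impact` (1-5),
    and `error_rate` (0.0-1.0). Highest risk comes first.
    """
    return sorted(
        datasets,
        key=lambda d: d["impact"] * d["error_rate"],
        reverse=True,
    )
```

For instance, a revenue dataset with impact 5 and a 2% error rate would rank above a customer-preferences dataset with impact 2 and a 4% error rate.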

Q 11. Describe your experience using quality control software or tools.

I have extensive experience with various quality control software and tools. These tools significantly enhance our ability to detect, track, and resolve data quality issues.

- Data Profiling Tools: Tools like OpenRefine or Talend Open Studio allow for detailed data profiling, identifying data quality issues such as missing values, inconsistencies, and outliers.

- Data Validation Tools: These tools allow us to define data validation rules and automatically check data against these rules during import or processing, ensuring data integrity.

- Statistical Software Packages: Packages like R and Python (with libraries like Pandas and NumPy) provide powerful tools for statistical analysis, including SPC and outlier detection.

- Database Management Systems (DBMS): Built-in features of DBMS such as constraints and triggers can automatically enforce data integrity rules within the database.

In a past project, we used R to develop custom scripts for automated data quality checks and outlier detection. This significantly improved the efficiency and accuracy of our data analysis workflow.
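
To show how database constraints can enforce integrity rules on their own, here is a small sketch using Python's built-in `sqlite3` module with an in-memory database. The `customers` table, its columns, and the helper names are hypothetical and exist only for this example.

```python
import sqlite3


def make_db():
    """Create an in-memory database with integrity rules on a sample table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """
        CREATE TABLE customers (
            id    INTEGER PRIMARY KEY,
            email TEXT    NOT NULL UNIQUE,
            age   INTEGER NOT NULL CHECK (age > 0 AND age <= 120)
        )
        """
    )
    return conn


def try_insert(conn, email, age):
    """Attempt an insert; return False if the database rejects the row."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO customers (email, age) VALUES (?, ?)", (email, age)
            )
        return True
    except sqlite3.IntegrityError:
        return False
```

Because the rules live in the schema, they apply to every application that writes to the table, not just the one that happens to validate its input.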

Q 12. How do you document and report accuracy control findings?

Documenting and reporting accuracy control findings is crucial for transparency and accountability. Think of it as creating a detailed case file for each data quality investigation.

- Detailed Documentation: We maintain detailed records of all accuracy control procedures, including data sources, validation rules, error detection methods, and correction steps.

- Data Quality Reports: We generate reports summarizing the results of our accuracy checks, including the number of errors detected, their severity, and the corrective actions taken. These reports usually include tables and charts illustrating key findings.

- Traceability: Each finding is linked to specific data sources and processes, allowing for easy traceability and root cause analysis.

- Communication: We communicate findings to relevant stakeholders in a clear and concise manner, using appropriate visualizations to aid understanding.

A typical report might include a summary of data quality metrics (e.g., percentage of missing values, percentage of outliers), a description of detected errors, and recommendations for improvement. Visualizations like heatmaps can be used to highlight areas of high error concentration.

Q 13. Explain your understanding of statistical process control (SPC).

Statistical Process Control (SPC) is a powerful methodology used to monitor and control the variability of processes. It helps identify trends, patterns, and special causes of variation, ensuring consistent data quality and reducing errors. Imagine it as a continuous quality checkup for your data pipeline.

- Control Charts: Control charts (like X-bar and R charts, p-charts, c-charts) are visual tools that track data over time. They help distinguish between common cause variation (inherent in the process) and special cause variation (indicating a problem that needs addressing).

- Process Capability Analysis: This analysis determines whether a process is capable of meeting specified requirements. It helps assess if the process variability is within acceptable limits.

- Acceptance Sampling: In some cases, we may use acceptance sampling techniques to check the quality of a batch or sample of data before incorporating it into the main dataset.

For example, in manufacturing, SPC charts might track the dimensions of a product over time. If the data points exceed the control limits, it signals a problem (e.g., machine malfunction) requiring immediate attention. Similarly, in data processing, SPC can monitor the error rate of a particular automated data validation step.
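
For the data processing case, a p-chart on the error rate of a validation step can be sketched as follows. This is a simplified illustration that assumes every batch has the same size and that the baseline batches come from a stable period; the function names are assumptions for this example. The limits use the usual three-sigma formula for a proportion, p-bar plus or minus 3 * sqrt(p-bar * (1 - p-bar) / n).

```python
from math import sqrt


def p_chart_limits(baseline_error_counts, sample_size):
    """Return (lower limit, center line, upper limit) for a p-chart.

    `baseline_error_counts` holds the number of errors found in each
    baseline batch; every batch is assumed to contain `sample_size` records.
    """
    total_checked = len(baseline_error_counts) * sample_size
    p_bar = sum(baseline_error_counts) / total_checked
    sigma = sqrt(p_bar * (1 - p_bar) / sample_size)
    # A proportion cannot fall below 0 or rise above 1.
    return max(0.0, p_bar - 3 * sigma), p_bar, min(1.0, p_bar + 3 * sigma)


def out_of_control(error_counts, sample_size, limits):
    """Return the indices of batches whose error rate is outside the limits."""
    lower, _, upper = limits
    return [
        i
        for i, count in enumerate(error_counts)
        if not lower <= count / sample_size <= upper
    ]
```

A batch flagged here signals possible special cause variation and is a prompt to investigate, not proof that something is wrong.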

Q 14. How do you manage and resolve conflicts regarding data accuracy?

Managing and resolving conflicts regarding data accuracy requires a collaborative and objective approach. It’s about finding common ground and building consensus.

- Establish Clear Data Governance Procedures: Define clear roles and responsibilities for data management, validation, and dispute resolution.

- Data Validation Protocol: Have a standardized protocol for validating disputed data points, involving multiple parties if necessary.

- Objective Evidence: Resolve conflicts based on objective evidence, such as documentation, data sources, and statistical analysis.

- Mediation & Collaboration: Facilitate communication and collaboration between conflicting parties, finding common ground and agreeing on a resolution.

- Escalation Procedures: Establish a clear escalation process for resolving conflicts that cannot be resolved at lower levels.

In a situation where two departments disagreed on the accuracy of sales data, we used a standardized data reconciliation process, reviewing source documents and applying statistical tests to validate the data. This collaborative approach led to a mutually agreeable resolution.

Q 15. What experience do you have with root cause analysis related to data errors?

Root cause analysis for data errors is crucial for preventing future inaccuracies. My approach involves a systematic investigation using methods like the 5 Whys and Fishbone diagrams. I start by clearly defining the error, then systematically drill down to understand the underlying causes. This often involves examining data sources, processes, and systems involved in data collection, transformation, and storage.

For example, if we find inconsistent customer addresses, the 5 Whys might go like this:

- Why are addresses inconsistent? Because data entry is inconsistent.

- Why is data entry inconsistent? Because training on data entry standards is lacking.

- Why is training lacking? Because there’s no dedicated training program.

- Why is there no training program? Because the importance of data accuracy wasn’t prioritized.

- Why wasn’t data accuracy prioritized? Because there weren’t clear metrics and accountability tied to data quality.

By identifying the root cause (lack of prioritization and training), we can implement targeted solutions, such as creating a standardized data entry process and a comprehensive training program, preventing similar errors in the future.

Q 16. How familiar are you with industry-specific accuracy standards or regulations?

My familiarity with industry-specific accuracy standards and regulations is extensive. I’ve worked with various standards, including HIPAA (for healthcare data), GDPR (for personal data in Europe), and SOX (for financial data). Understanding these regulations is critical because non-compliance can lead to significant legal and financial repercussions. For instance, a breach of HIPAA can result in hefty fines and reputational damage.

I’m adept at interpreting these regulations and translating them into practical, actionable accuracy control procedures. This includes developing and implementing data validation rules, implementing audit trails, and establishing appropriate data governance frameworks. I also stay updated on changes and updates to these regulations through professional organizations and industry publications.

Q 17. How do you ensure the accuracy of data from various sources?

Ensuring data accuracy from multiple sources requires a multi-faceted approach. It’s not a ‘one-size-fits-all’ solution. My strategy combines several key techniques:

- Data Validation: Implementing robust validation rules at the point of data entry to catch errors early. This involves using data type checks, range checks, and consistency checks.

- Source Verification: Evaluating the reliability and trustworthiness of each data source. This includes assessing data quality metrics, reviewing data documentation, and potentially auditing the source systems.

- Data Reconciliation: Comparing data from different sources to identify discrepancies and inconsistencies. This may involve using automated tools or manual reconciliation processes.

- Data Transformation and Cleaning: Applying data transformation techniques to standardize, cleanse, and enhance data quality. This might include handling missing values, removing duplicates, and correcting inconsistencies.

- Data Governance Framework: Establishing clear roles, responsibilities, and processes for data management, including data quality control and verification.

For example, if we’re integrating data from a CRM and an ERP system, we’d implement data reconciliation checks to ensure customer IDs match across both systems and address discrepancies before integrating the data into a reporting system.

Q 18. Describe a time you identified a critical data inaccuracy. How did you handle it?

In a previous role, we discovered a critical inaccuracy in sales figures due to a coding error in our reporting system. This error significantly overstated sales for the previous quarter, impacting financial forecasting and strategic decision-making.

My response involved several steps:

- Immediate Containment: We immediately froze the reporting system to prevent further dissemination of incorrect data.

- Root Cause Analysis: We performed a thorough investigation, tracing the error back to a faulty calculation within a specific script.

- Corrective Action: The coding error was swiftly corrected, and the data was recalculated.

- Impact Assessment: We assessed the impact of the error on key decisions and financial statements.

- Communication: We communicated the error and its correction to all relevant stakeholders, including leadership, finance, and sales teams.

- Preventive Measures: We implemented stricter code review processes and added automated data validation checks to prevent similar errors in the future.

This experience highlighted the critical need for rigorous testing, code reviews, and data validation at every stage of the data lifecycle.

Q 19. Explain your approach to training others on accuracy control procedures.

My approach to training others on accuracy control procedures is practical and hands-on. I believe in a blended learning approach that combines theoretical knowledge with practical application.

The training would include:

- Theoretical Instruction: Lectures and presentations covering concepts such as data validation, error detection, root cause analysis, and relevant industry regulations.

- Practical Exercises: Hands-on exercises and case studies that allow trainees to practice applying learned concepts in simulated scenarios.

- On-the-Job Training: Mentoring and shadowing opportunities that allow trainees to work alongside experienced professionals and apply their knowledge in real-world settings.

- Regular Assessments: Regular quizzes and assessments to evaluate understanding and identify areas requiring further training.

- Feedback and Reinforcement: Continuous feedback and reinforcement to ensure that trainees master the skills and processes.

I tailor the training content and delivery method to the specific needs and learning styles of the trainees. The goal is to empower them to independently manage and maintain data accuracy.

Q 20. How do you stay updated on best practices for accuracy control and verification?

Staying updated on best practices is vital in this field. I utilize several strategies:

- Professional Organizations: Membership in organizations like the American Statistical Association or relevant industry groups provides access to research, publications, and conferences focused on data quality and accuracy.

- Industry Publications: Following reputable industry journals, blogs, and newsletters that publish articles on data quality and accuracy control techniques.

- Webinars and Conferences: Attending webinars and conferences to learn about the latest advancements and best practices in data accuracy and verification.

- Online Courses and Certifications: Taking online courses and pursuing certifications in relevant areas, like data management or data analytics, to enhance my skill set.

- Networking: Engaging with professionals in the field through networking events and online communities to exchange ideas and best practices.

Continuous learning ensures I remain at the forefront of developments and can provide the most effective accuracy control solutions.

Q 21. How would you develop and implement an accuracy control plan for a new project?

Developing and implementing an accuracy control plan for a new project involves a structured, phased approach:

- Project Scope and Data Requirements: Clearly define the project’s objectives and identify all data sources, types, and quality requirements. This might involve creating a data dictionary.

- Risk Assessment: Identify potential data quality risks, considering factors such as data source reliability, data entry methods, and data transformation processes.

- Accuracy Control Procedures: Define specific procedures for data collection, validation, transformation, and verification. This might involve developing data validation rules, implementing data quality checks, and establishing data reconciliation processes.

- Data Governance Framework: Establish roles and responsibilities for data management, including data quality control and verification. This might involve establishing a data quality team or assigning data stewardship responsibilities.

- Monitoring and Evaluation: Implement mechanisms to monitor data quality and evaluate the effectiveness of the accuracy control procedures. This might involve setting up data quality metrics and conducting regular data quality audits.

- Documentation: Document all procedures, data quality metrics, and related information to facilitate future reference and continuous improvement.

This structured approach ensures that the accuracy control plan is comprehensive, tailored to the specific needs of the project, and effectively mitigates potential data quality risks.

Q 22. What are the common challenges in maintaining data accuracy in your field?

Maintaining data accuracy is a constant challenge, akin to navigating a complex maze. Common pitfalls include human error (data entry mistakes, misinterpretations), system limitations (software bugs, outdated technology), data integration issues (inconsistencies across different systems), and the sheer volume of data involved. Imagine a large hospital – manual entry of patient data into multiple systems increases the risk of errors. Similarly, integrating data from various medical devices can lead to inconsistencies if not carefully managed. Another significant challenge is the lack of clear data governance procedures, leaving room for ambiguity and variability in data handling practices.

- Human Error: Data entry errors, especially when dealing with large datasets or repetitive tasks. A simple typo in a patient’s medical record can have serious consequences.

- System Limitations: Bugs in software, or limitations in the capacity or functionality of the systems used to store and process data. For example, an outdated database system may not be able to handle the volume of data generated by a rapidly growing business.

- Data Integration Issues: Inconsistent formats or definitions across various systems lead to discrepancies and inaccuracies. A customer’s address may be stored differently in the sales system versus the customer service system.

- Data Volume: The sheer volume of data makes manual verification impractical. It’s simply not feasible to manually check every data point in a petabyte-sized dataset.

Q 23. How do you assess the effectiveness of your accuracy control measures?

Assessing the effectiveness of accuracy control measures requires a multi-faceted approach. We use a combination of methods, including:

- Key Performance Indicators (KPIs): We track metrics like error rates, data completeness, and timeliness. For example, we might monitor the percentage of records with missing values or the number of data entry errors detected.

- Regular Audits: Scheduled audits provide an independent verification of our accuracy control procedures. These audits involve checking a sample of data for accuracy and identifying any weaknesses in our processes.

- Data Quality Reports: We generate automated reports that highlight potential accuracy issues. These reports might identify outliers, inconsistencies, or unexpected data patterns.

- User Feedback: We solicit feedback from data users to identify areas where accuracy is a concern. This can help us pinpoint specific problems and improve our processes.

These methods are combined to create a comprehensive picture of how effective our control measures are, enabling continuous improvement. It is much like quality control in a manufacturing plant: no one relies on a single method there either. Inspection, testing, and feedback are used together to ensure product quality.
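The KPIs mentioned above, such as completeness and error rate, could be computed along these lines. This is a rough sketch: the function names, the record layout, and the sample figures are assumptions made for illustration only.

```python
def completeness_rate(records: list[dict], field: str) -> float:
    """Share of records whose `field` is present and non-empty."""
    if not records:
        return 0.0
    filled = sum(1 for r in records if r.get(field) not in (None, ""))
    return filled / len(records)


def error_rate(errors_found: int, records_checked: int) -> float:
    """Errors detected per record checked."""
    if records_checked <= 0:
        raise ValueError("records_checked must be positive")
    return errors_found / records_checked


# Hypothetical sample data.
records = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": ""},
    {"id": 3, "email": None},
    {"id": 4, "email": "d@example.com"},
]

email_completeness = completeness_rate(records, "email")
sample_error_rate = error_rate(errors_found=3, records_checked=200)
print(f"completeness={email_completeness:.1%}, error rate={sample_error_rate:.2%}")
```

Tracking these values over time, rather than as one-off numbers, is what shows whether a control measure is actually working.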

Q 24. What is your experience with auditing processes and procedures?

My experience with auditing processes includes both performing and overseeing audits in diverse settings. I’m proficient in developing audit plans, selecting appropriate audit samples, and performing detailed data analysis to identify inconsistencies and violations of established standards. I’m also skilled in documenting audit findings, communicating these findings to stakeholders, and recommending corrective actions. This experience encompasses various data types, ranging from financial records to operational metrics to scientific research data. A key aspect of my work is ensuring the audit process is consistent with regulatory requirements and internal policies, emphasizing objectivity and impartiality.

For example, in a recent audit of a financial database, I identified an anomaly in the reconciliation process that resulted in a significant discrepancy. Through systematic investigation, I traced the source of the error to a misconfiguration in the automated reconciliation tool. This was communicated to the relevant stakeholders and the issue was promptly corrected.
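Selecting an audit sample, as mentioned in this answer, can be sketched as a seeded random draw so that the selection is unbiased yet reproducible for reviewers. The function name, the seed, and the record IDs are hypothetical; many audits use stratified or risk-based sampling instead of a simple random sample.

```python
import random


def select_audit_sample(record_ids: list[int], sample_size: int, seed: int) -> list[int]:
    """Draw a simple random sample without replacement.
    A fixed seed lets another auditor reproduce the exact same selection."""
    if sample_size > len(record_ids):
        raise ValueError("sample_size cannot exceed the number of records")
    rng = random.Random(seed)
    return sorted(rng.sample(record_ids, sample_size))


# Hypothetical population of 1,000 record IDs.
population = list(range(1, 1001))
audit_sample = select_audit_sample(population, sample_size=25, seed=2024)
print(len(audit_sample))
```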

Q 25. Describe your approach to investigating and resolving data discrepancies.

Investigating data discrepancies involves a systematic approach. First, we identify the nature and extent of the discrepancy. Then, we trace the data’s origin, verifying its source and path through the system. Next, we analyze the data for patterns or anomalies that might indicate the root cause. This often involves examining related data sources and systems. Finally, once the root cause is identified, we implement corrective actions to prevent similar discrepancies in the future and document the entire process for future reference.

For instance, if we detect an unexpected spike in sales figures for a particular product, we would examine the sales transactions for that product, review the inventory management system, and potentially look at marketing campaigns to see if any promotional activities could explain the increase. If the discrepancy is due to a system error, we would work with IT to correct the error and prevent it from recurring.
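The first step, identifying the nature and extent of a discrepancy, often amounts to comparing the same records in two systems. Below is a minimal sketch assuming both sources can be reduced to dictionaries keyed by a shared identifier; the order IDs and amounts are invented for illustration.

```python
def find_discrepancies(source_a: dict, source_b: dict) -> dict:
    """Classify differences between two keyed datasets."""
    shared_keys = source_a.keys() & source_b.keys()
    return {
        "only_in_a": sorted(source_a.keys() - source_b.keys()),
        "only_in_b": sorted(source_b.keys() - source_a.keys()),
        "mismatched": sorted(k for k in shared_keys if source_a[k] != source_b[k]),
    }


# Hypothetical order totals from two systems.
sales_system = {"ORD-1": 120.0, "ORD-2": 75.5, "ORD-3": 40.0}
ledger = {"ORD-1": 120.0, "ORD-2": 57.5, "ORD-4": 10.0}

report = find_discrepancies(sales_system, ledger)
print(report)
```

Separating missing records from mismatched values helps narrow the root cause: missing records often point to a transfer or timing problem, while mismatched values suggest an entry or transformation error.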

Q 26. How do you balance the speed of processing with the need for accuracy?

Balancing speed and accuracy requires a thoughtful approach. It’s not about choosing one over the other; instead, it’s about optimizing the process to achieve both. We achieve this by employing techniques like:

- Automation: Automating data entry and validation processes reduces human error and speeds up processing. Automated checks can catch errors before they propagate.

- Data Validation Rules: Implementing rigorous data validation rules during data entry helps to prevent inaccurate data from entering the system in the first place. For example, a rule could prevent the entry of a negative value for an order quantity.

- Parallel Processing: Where feasible, we utilize parallel processing techniques to speed up processing without compromising accuracy. Think of multiple computers working simultaneously on different parts of a dataset.

- Prioritization: We prioritize data accuracy in critical areas, accepting slightly lower speed in those areas for the sake of accuracy, while leveraging speed in areas with lower risk.

This balanced approach ensures that we maintain both efficiency and data integrity. It’s like building a house; you can build it quickly, but compromising quality will lead to problems later. A more measured approach ensures a solid foundation for long-term success.
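The validation rule described above (rejecting a negative order quantity) can be sketched as a small check that runs at the point of entry. The field names and error messages are assumptions for illustration; an actual system would follow its own schema.

```python
def validate_order(order: dict) -> list[str]:
    """Return a list of rule violations; an empty list means the order passes."""
    errors = []
    quantity = order.get("quantity")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(quantity, bool) or not isinstance(quantity, int):
        errors.append("quantity must be an integer")
    elif quantity < 0:
        errors.append("quantity must not be negative")
    # Treat a missing or whitespace-only product_id as absent.
    if not str(order.get("product_id") or "").strip():
        errors.append("product_id is required")
    return errors


print(validate_order({"product_id": "SKU-1", "quantity": 3}))
print(validate_order({"product_id": "SKU-1", "quantity": -2}))
```

Because the check is cheap, it can run on every record without slowing processing noticeably, which is how automated rules support both speed and accuracy.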

Q 27. How do you communicate effectively about accuracy control issues to stakeholders?

Effective communication about accuracy control issues is critical. I use a variety of methods depending on the audience and the severity of the issue. For minor issues, I might use email or informal updates to keep stakeholders informed. For more significant issues, I would prepare formal reports detailing the problem, its impact, and proposed solutions. These reports would be presented to relevant stakeholders in a clear and concise manner, using visual aids such as charts and graphs where appropriate.

I always ensure that my communication is accurate, timely, and appropriate to the audience’s level of understanding. For technical audiences, I may use more technical language, while for non-technical audiences, I would use simpler terms and analogies to ensure clear understanding. Transparency and proactive communication are key to maintaining trust and fostering collaboration.

Q 28. Describe your experience with using different data analysis tools to identify accuracy issues.

I have extensive experience using various data analysis tools to identify accuracy issues. These tools range from basic spreadsheet software like Excel to sophisticated statistical packages like R and specialized data quality tools. I’m proficient in using SQL for querying and analyzing large databases. I also use data visualization tools to identify patterns and anomalies in data. For example, scatter plots can highlight outliers, while histograms can show the distribution of data and identify unexpected values.
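Outliers that a scatter plot or histogram would reveal visually can also be flagged numerically. Here is a minimal sketch using the common 1.5 × IQR rule with Python's standard library; the rule, the function name, and the sample sales figures are illustrative choices, not a method prescribed here.

```python
import statistics


def iqr_outliers(values: list[float]) -> list[float]:
    """Flag values outside 1.5 * IQR of the first and third quartiles."""
    q1, _median, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return [v for v in values if v < low or v > high]


# Hypothetical daily sales counts with one suspicious entry.
daily_sales = [102, 98, 105, 97, 101, 99, 103, 100, 480]
print(iqr_outliers(daily_sales))
```

A flagged value is a prompt for investigation rather than proof of an error, since a genuine spike can look the same as a typo.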

Specific tools I’ve used include:

- SQL: For querying and analyzing large relational databases to identify inconsistencies and anomalies.

- R: For performing statistical analyses to detect outliers and patterns in the data.

- Data Quality Tools: Specialized software designed to identify and address data quality issues, including profiling, cleansing, and matching capabilities.

- Data Visualization Tools (Tableau, Power BI): To create charts and graphs to visualize data and identify patterns and anomalies.

Example: SELECT COUNT(*) FROM Customers WHERE Address IS NULL; -- SQL query to find records with missing address information

The choice of tools depends on the nature and volume of the data as well as the specific issues being investigated.
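To show the missing-address query in a form that can be run end to end, here is a small sketch using Python's built-in sqlite3 module with an in-memory database. The table layout and sample rows are assumptions; the second query, which also counts blank strings, is an extension beyond the original query, since empty values are a common variant of missing data.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE Customers (CustomerId INTEGER PRIMARY KEY, Name TEXT, Address TEXT)"
)
# Hypothetical sample rows: one valid address, one NULL, one empty string.
conn.executemany(
    "INSERT INTO Customers (Name, Address) VALUES (?, ?)",
    [("Ada", "1 Example Road"), ("Ben", None), ("Cara", "")],
)

# The query from the text: records where Address is NULL.
missing_null = conn.execute(
    "SELECT COUNT(*) FROM Customers WHERE Address IS NULL"
).fetchone()[0]

# Extension (assumption): also treat blank strings as missing.
missing_or_blank = conn.execute(
    "SELECT COUNT(*) FROM Customers WHERE Address IS NULL OR TRIM(Address) = ''"
).fetchone()[0]

conn.close()
print(missing_null, missing_or_blank)
```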

Key Topics to Learn for Accuracy Control & Verification Interview

- Data Validation Techniques: Understanding and applying various methods for ensuring data integrity, including range checks, format checks, and cross-referencing.

- Error Detection and Correction: Exploring strategies for identifying and resolving discrepancies in data, such as using checksums, parity bits, or automated reconciliation processes. Practical application: Describe your experience in identifying and resolving data inconsistencies in a past role.

- Quality Assurance Methodologies: Familiarity with different QA frameworks (e.g., Six Sigma) and their application in accuracy control and verification processes. Think about how you’ve used statistical methods to analyze data accuracy.

- Data Governance and Compliance: Understanding the importance of adhering to data standards and regulations relevant to the industry. Consider how you’ve ensured data compliance in your previous work.

- Automation and Tools: Proficiency in using software and tools designed for data verification and quality control. Be prepared to discuss your experience with specific tools or technologies.

- Root Cause Analysis: Developing skills in identifying the underlying causes of data inaccuracies and implementing preventative measures. Prepare examples demonstrating your problem-solving abilities in this area.

- Process Improvement: Understanding lean methodologies and how they apply to improving the accuracy and efficiency of verification processes. Be prepared to discuss how you have applied them.
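For the error detection topic above, here is a brief sketch of two of the techniques named: a checksum (SHA-256 is used here as one common choice) and an even parity bit. The function names and sample data are illustrative assumptions.

```python
import hashlib


def sha256_checksum(data: bytes) -> str:
    """Hex digest used to detect any change to the data."""
    return hashlib.sha256(data).hexdigest()


def even_parity_bit(value: int) -> int:
    """Bit that makes the total number of 1 bits even."""
    return bin(value).count("1") % 2


# Hypothetical file contents before and after transfer.
original = b"id,amount\n1,100.00\n2,250.50\n"
recorded_checksum = sha256_checksum(original)

received_intact = b"id,amount\n1,100.00\n2,250.50\n"
received_altered = b"id,amount\n1,100.00\n2,205.50\n"  # two digits transposed

intact_ok = sha256_checksum(received_intact) == recorded_checksum
altered_ok = sha256_checksum(received_altered) == recorded_checksum
print(intact_ok, altered_ok, even_parity_bit(0b1011))
```

A checksum tells you that something changed but not what changed, so it is typically paired with a reconciliation step to locate the actual discrepancy.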

Next Steps

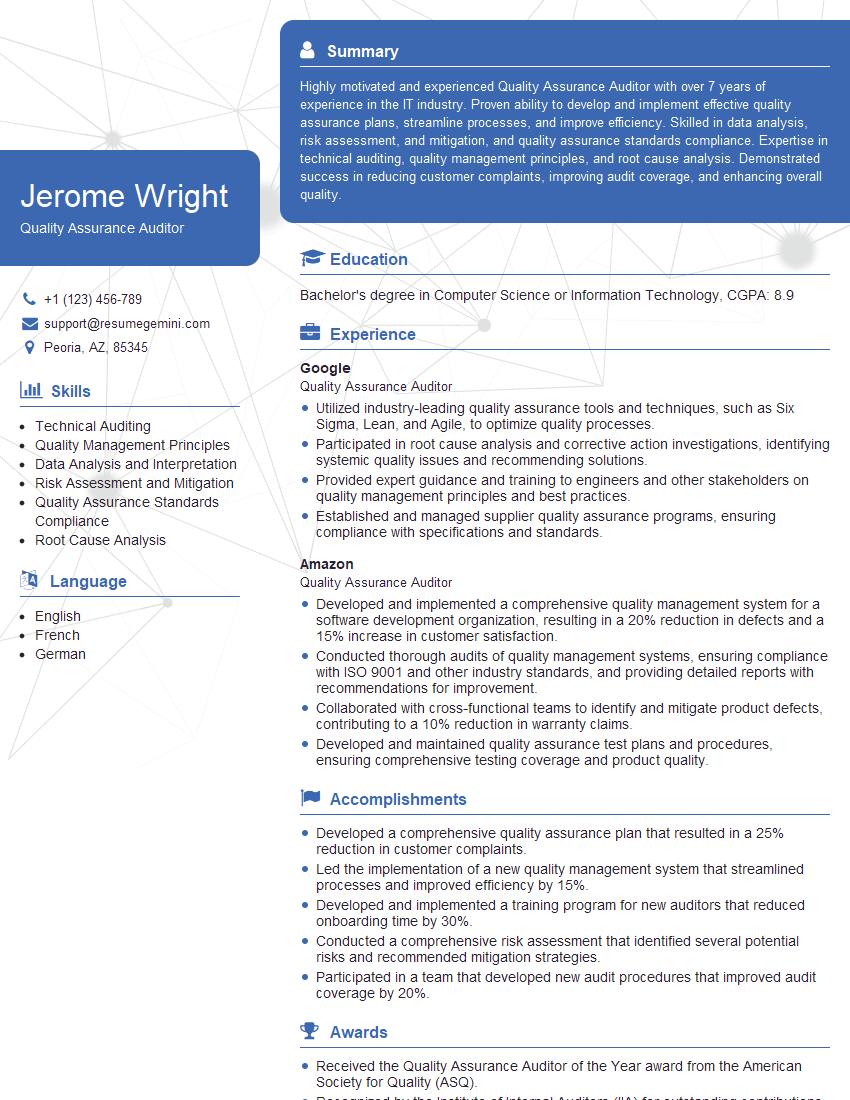

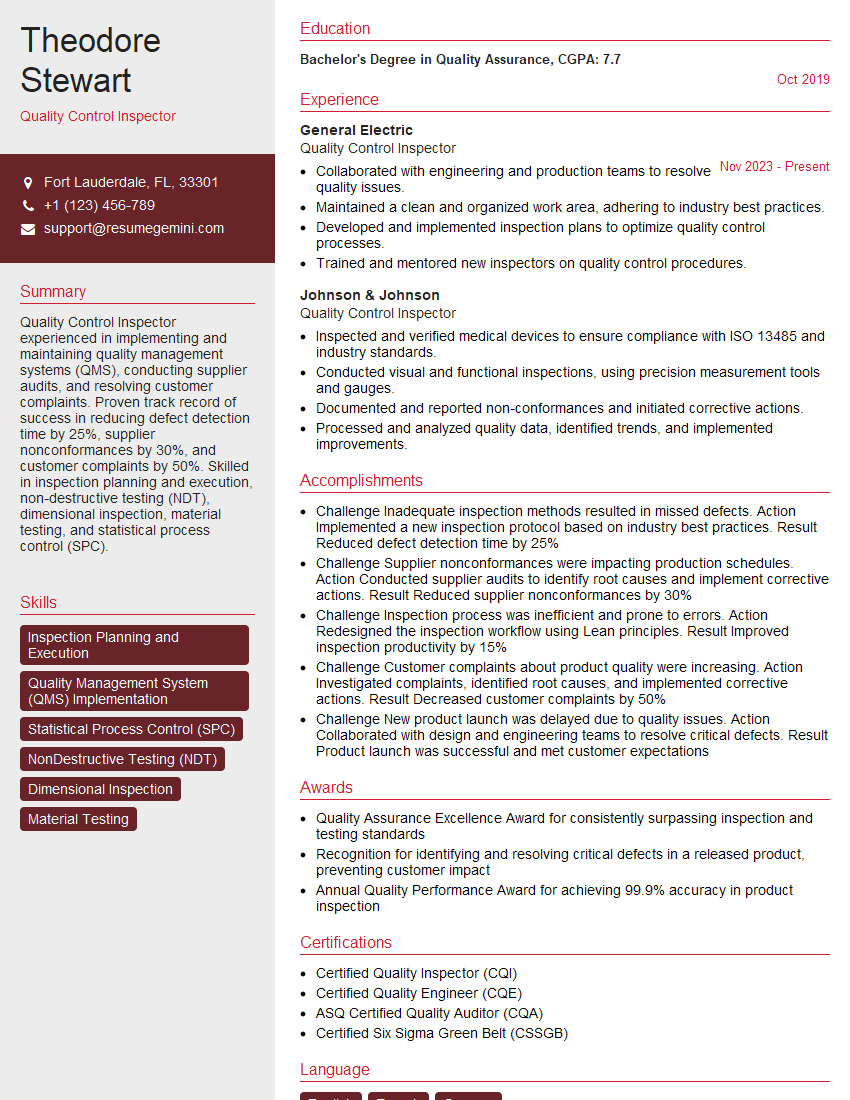

Mastering Accuracy Control & Verification is crucial for career advancement in many fields, opening doors to specialized roles with higher earning potential and increased responsibility. A well-crafted resume is your key to unlocking these opportunities. Building an ATS-friendly resume is paramount to getting your application noticed by recruiters. To ensure your resume shines and effectively highlights your skills and experience, leverage the power of ResumeGemini. ResumeGemini provides a trusted platform for creating professional, impactful resumes, and we offer examples of resumes tailored specifically to Accuracy Control & Verification to help you get started. Take the next step in your career journey – build a resume that gets you noticed!
