Unlock your full potential by mastering the most common Advanced Masking Techniques interview questions. This blog offers a deep dive into the critical topics, ensuring you’re not only prepared to answer but to excel. With these insights, you’ll approach your interview with clarity and confidence.

Questions Asked in Advanced Masking Techniques Interview

Q 1. Explain the difference between data masking and data anonymization.

Data masking and data anonymization are both crucial for protecting sensitive information, but they achieve this through different methods. Data masking replaces sensitive data elements with non-sensitive substitutes while preserving the original data’s structure and format. Think of it like disguising a person – you change their appearance (e.g., using a pseudonym), but their underlying identity (the data structure) remains intact. Data anonymization, on the other hand, aims to remove or transform identifying information to the point where individuals can no longer be identified. It’s like completely changing a person’s identity to the point where it’s nearly impossible to trace them back to their original identity. The key difference lies in the level of privacy preservation; anonymization provides a stronger guarantee of privacy than masking, but often at the cost of data usability.

Example: Let’s say we have a credit card number: 1234-5678-9012-3456. Data masking might replace it with XXXX-XXXX-XXXX-3456, preserving the last four digits for validation purposes. Data anonymization might completely remove the credit card number from the dataset.
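To make the masking half of this example concrete, here is a minimal Python sketch of partial masking. The function name `mask_card_number` and its parameters are illustrative assumptions, not part of any particular masking tool.

```python
def mask_card_number(card_number: str, visible: int = 4, mask_char: str = "X") -> str:
    """Mask every digit except the last `visible` ones, keeping separators in place."""
    total_digits = sum(ch.isdigit() for ch in card_number)
    digits_to_mask = max(total_digits - visible, 0)
    masked = []
    for ch in card_number:
        if ch.isdigit() and digits_to_mask > 0:
            masked.append(mask_char)   # hide this digit
            digits_to_mask -= 1
        else:
            masked.append(ch)          # keep separators and the trailing digits
    return "".join(masked)


print(mask_card_number("1234-5678-9012-3456"))  # XXXX-XXXX-XXXX-3456
```

The format (length and separators) is preserved, which is what lets downstream systems that validate the field's shape keep working.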

Q 2. What are the different types of data masking techniques?

Data masking techniques are diverse, catering to different levels of data sensitivity and utility requirements. Some common types include:

- Shuffling: Randomly swapping values within a column. For example, re-ordering customer IDs.

- Pseudonymization: Replacing identifying values (like names or email addresses) with pseudonyms or surrogate keys. This allows linkage of masked data for analysis while protecting the original identity.

- Character Masking/Redaction: Replacing characters within a value with masked characters like ‘X’ or ‘*’. For example, masking all but the last four digits of a phone number.

- Data Subset Masking: Hiding certain columns or rows from a dataset. A useful strategy for restricting access to the most sensitive information.

- Data Perturbation: Adding noise to numerical data by altering values slightly, preventing exact values from being revealed while still maintaining the overall distribution of the data.

- Tokenization: Replacing sensitive data with non-sensitive tokens, maintaining a secure mapping between the token and the original data. This method maintains data utility for certain operations.

The choice of technique depends on the specific sensitivity of the data, the purpose of the masked data, and the regulatory requirements.
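Two of the techniques above, shuffling and data perturbation, are simple enough to sketch in a few lines of Python. The helper names and the 5% noise bound are assumptions chosen for illustration; a real deployment would tune the noise level and would not use a fixed seed.

```python
import random


def shuffle_column(values, rng):
    """Shuffling: return the same values in a random order."""
    shuffled = list(values)
    rng.shuffle(shuffled)
    return shuffled


def perturb(values, rng, max_fraction=0.05):
    """Perturbation: shift each number by up to +/- max_fraction of its value."""
    return [round(v * (1 + rng.uniform(-max_fraction, max_fraction)), 2) for v in values]


rng = random.Random(42)  # fixed seed only so the sketch is repeatable
salaries = [52000.0, 61000.0, 58500.0, 70250.0]

print(shuffle_column(salaries, rng))  # same values, different row order
print(perturb(salaries, rng))         # similar values, none guaranteed exact
```

Shuffling keeps the column's exact distribution but breaks the link between a value and its row; perturbation keeps the row link but blurs the value.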

Q 3. Describe the process of implementing a data masking strategy.

Implementing a data masking strategy is a multi-step process requiring careful planning and execution. It begins with:

- Data Discovery and Classification: Identifying sensitive data elements and categorizing them based on their sensitivity level.

- Masking Technique Selection: Choosing the appropriate masking technique for each data category, considering the balance between data privacy and utility.

- Masking Tool Selection: Selecting a suitable masking tool, considering factors like scalability, performance, and compliance.

- Masking Policy Definition: Defining rules and guidelines for how data should be masked, including specifics for different data types and categories.

- Testing and Validation: Thoroughly testing the masking process to ensure the accuracy and effectiveness of the techniques employed, including testing for reversibility and potential vulnerabilities.

- Deployment and Monitoring: Deploying the chosen masking solution and continuously monitoring the effectiveness of the masking process to ensure ongoing data security.

Each step is crucial for ensuring a robust and effective data masking solution.

Q 4. How do you choose the appropriate masking technique for a given dataset?

The choice of masking technique is dictated by several factors. You need to consider:

- Sensitivity of the data: Highly sensitive data like credit card numbers or social security numbers might require stronger techniques like tokenization or character masking, while less sensitive data may only require shuffling or data perturbation.

- Data usability requirements: If the masked data needs to be used for analytical purposes, techniques that preserve data patterns and distributions (like data perturbation) might be preferred. If data utility is not a concern, stronger techniques can be used.

- Compliance requirements: Regulations like GDPR or HIPAA might dictate specific masking requirements, influencing the choice of technique.

- Data volume and performance: For large datasets, computationally efficient masking techniques are important. The chosen tool’s capability to handle large datasets must also be considered.

For example, for a dataset containing customer names, addresses, and credit card numbers, you might use pseudonymization for names and addresses, character masking for parts of addresses, and tokenization for credit card numbers.
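One way to express such a per-column choice is a small policy table that maps each column to a masking function. The sketch below is an assumption-laden illustration: the key, the column names, and the helpers are hypothetical, and partial character masking stands in for tokenization of the card number to keep the example short.

```python
import hashlib
import hmac

DEMO_KEY = b"demo-key-not-for-production"  # hypothetical; real keys belong in a secrets manager


def pseudonymize(value):
    """Keyed hash so the same input always yields the same surrogate."""
    digest = hmac.new(DEMO_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "PSN-" + digest[:12]


def mask_all_but_last4(value):
    """Replace every digit except those in the last four characters."""
    return "".join("X" if ch.isdigit() else ch for ch in value[:-4]) + value[-4:]


# Column name -> masking function; columns not listed pass through unchanged.
POLICY = {
    "name": pseudonymize,
    "address": pseudonymize,
    "card_number": mask_all_but_last4,
}


def mask_record(record):
    return {col: POLICY.get(col, lambda v: v)(val) for col, val in record.items()}


row = {
    "name": "Alice Smith",
    "address": "1 Main St",
    "card_number": "1234-5678-9012-3456",
    "country": "DE",
}
print(mask_record(row))
```

Keeping the policy as data rather than code makes it easier to review against the classification results and to adjust when requirements change.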

Q 5. What are the challenges in implementing data masking in a large-scale environment?

Implementing data masking at a large scale presents significant challenges:

- Performance: Processing massive datasets with complex masking techniques can be computationally intensive and time-consuming.

- Scalability: The chosen solution must be able to handle increasing data volumes and user demands.

- Data Consistency: Maintaining data consistency and integrity across multiple masked datasets can be complex.

- Integration with Existing Systems: Integrating a data masking solution with existing data pipelines and applications can be challenging, requiring careful planning and execution.

- Cost: Large-scale implementations can be expensive, involving software licenses, infrastructure costs, and professional services.

Addressing these challenges requires a well-defined strategy, appropriate tool selection, and strong project management.

Q 6. How do you ensure the effectiveness of data masking techniques?

Ensuring the effectiveness of data masking techniques involves a combination of strategies:

- Regular Audits: Periodically auditing the masked data to ensure the masking techniques are still effective and that no vulnerabilities have emerged.

- Automated Testing: Implementing automated tests to verify the accuracy and completeness of the masking process.

- Penetration Testing: Simulating attacks to identify any weaknesses in the masking process.

- Monitoring and Logging: Tracking masking activities and monitoring for any anomalies or suspicious behavior.

- Data Loss Prevention (DLP) tools: Utilizing DLP tools to detect and prevent the leakage of sensitive data.

- Compliance Checks: Regular checks to ensure compliance with relevant regulations and standards.

Proactive monitoring and a robust testing regimen are critical for verifying the ongoing effectiveness of data masking measures.
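An automated test of this kind can be as simple as scanning masked output for values that still look like unmasked data. The following sketch assumes 16-digit card numbers and a list-of-dicts row format; both are illustrative assumptions rather than a general-purpose detector.

```python
import re

# Assumption: cards are 16 digits, optionally grouped in fours by "-" or " ".
CARD_PATTERN = re.compile(r"\b\d{4}[- ]?\d{4}[- ]?\d{4}[- ]?\d{4}\b")


def find_unmasked_cards(rows):
    """Return (row_index, column) pairs where a full card number survived masking."""
    leaks = []
    for index, row in enumerate(rows):
        for column, value in row.items():
            if isinstance(value, str) and CARD_PATTERN.search(value):
                leaks.append((index, column))
    return leaks


masked_rows = [
    {"name": "PSN-1a2b", "card": "XXXX-XXXX-XXXX-3456"},
    {"name": "PSN-9f8e", "notes": "paid with 1234-5678-9012-3456"},
]
print(find_unmasked_cards(masked_rows))  # [(1, 'notes')]
```

The second row shows a common failure mode: the dedicated card column was masked, but a free-text field still carries the original value.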

Q 7. Explain the concept of data masking tokenization.

Data masking tokenization replaces sensitive data with unique, non-sensitive tokens. The mapping between each token and its original value is stored securely in a separate, encrypted database (often called a token vault). This is different from simple substitution because a randomly generated token doesn’t reveal any information about the original data; it has no mathematical relationship to the value it stands for. This allows for data access and manipulation while maintaining a strong level of security.

Example: A credit card number could be replaced with a random token like ‘TKN-12345’. Only the tokenization system knows the mapping between ‘TKN-12345’ and the actual credit card number. This provides a crucial layer of security, as even if a database is breached, the tokens provide no direct access to the original sensitive information.

This technique is particularly useful in scenarios requiring data utility, such as testing and development, where access to real data is needed but not direct exposure.
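The idea can be sketched with a tiny in-memory vault. The class `TokenVault` and the `TKN-` prefix are hypothetical; a production system would keep the mapping in a hardened, access-controlled store rather than in process memory.

```python
import secrets


class TokenVault:
    """In-memory stand-in for a secured token vault (illustration only)."""

    def __init__(self):
        self._token_to_value = {}
        self._value_to_token = {}

    def tokenize(self, value):
        """Return the existing token for a value, or mint a new random one."""
        if value in self._value_to_token:
            return self._value_to_token[value]
        token = "TKN-" + secrets.token_hex(8)
        while token in self._token_to_value:  # extremely unlikely collision
            token = "TKN-" + secrets.token_hex(8)
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token):
        """Look up the original value; only the vault can do this."""
        return self._token_to_value[token]


vault = TokenVault()
token = vault.tokenize("1234-5678-9012-3456")
print(token)                    # random token, different on every run
print(vault.detokenize(token))  # 1234-5678-9012-3456
```

Because the token is random, someone who obtains only the tokenized dataset has nothing to reverse; the mapping exists solely inside the vault.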

Q 8. What are the security considerations related to data masking?

Data masking, while enhancing security, introduces its own set of considerations. A primary concern is ensuring the masked data remains sufficiently obfuscated to prevent sensitive information leakage. This requires choosing the right masking technique based on the sensitivity level of the data. For instance, simply replacing names with ‘John Doe’ is insufficient for highly sensitive data. We must also consider the potential for data inference attacks. Even with masking, an attacker might deduce sensitive information by combining multiple masked datasets or exploiting patterns in the masked data. Therefore, robust masking strategies should minimize the risk of both direct and indirect disclosure. Furthermore, the process itself should be secure, preventing unauthorized access to the original or masked data during the masking process. Finally, data masking needs to be balanced against the usability of the data for its intended purpose – overly aggressive masking can render the data useless for analysis or testing.

- Data leakage through insufficient masking: Weak techniques, like simple substitution, can easily be reversed.

- Inference attacks: Combining multiple masked datasets could reveal patterns and compromise privacy.

- Security of the masking process itself: Ensuring the masking tool and its environment are secured against unauthorized access.

Q 9. How do you handle sensitive data during the testing phase of a data masking project?

Handling sensitive data during testing demands a layered approach. We use a combination of techniques: First, we employ a comprehensive data masking strategy to create test datasets that mirror the structure and statistical properties of the original data, but without the actual sensitive information. This might involve techniques like data shuffling, character swapping, or creating pseudonymous identifiers. Second, we restrict access to these masked datasets on a need-to-know basis. This usually involves controlling access permissions at both the system and database levels. Third, we monitor access to masked data to quickly detect any suspicious activity. We use audit trails to record all data accesses, providing a means of tracking potential misuse. Finally, we adhere strictly to the principle of least privilege: users only have access to the masked data necessary for their tasks. For example, a tester working on a payment processing module might only have access to masked payment amounts and transaction IDs, not the full customer information.

Example: Instead of using real credit card numbers, we might use a data masking tool to generate synthetic credit card numbers that follow the correct format but don’t map to any real card.

Q 10. Describe your experience with different data masking tools.

My experience spans various data masking tools, each with its strengths and weaknesses. I’ve worked extensively with tools like IBM InfoSphere Information Server, Informatica Data Masking, and several open-source solutions. IBM InfoSphere excels in large-scale deployments and complex data masking scenarios and is especially suitable for enterprise environments. Informatica provides comprehensive functionality and integrates smoothly with other Informatica ecosystem products. Open-source tools, on the other hand, offer flexibility and cost-effectiveness, but often require more customization and expertise in their setup and maintenance. I’ve found that the ideal choice depends on the specific requirements of the project, considering factors such as scale, complexity, budget, and available expertise. In one project, we chose an open-source tool to tailor the masking strategy exactly to the unique needs of our dataset, while in another, we opted for IBM InfoSphere due to the large volume of data and enterprise-grade security requirements.

Q 11. How do you ensure compliance with data privacy regulations when implementing data masking?

Compliance with data privacy regulations like GDPR, CCPA, and HIPAA is paramount. When implementing data masking, we build a robust compliance framework that considers the specific requirements of each relevant regulation. This begins with a thorough data discovery and classification process to identify all sensitive data elements. We then select masking techniques that meet the required level of privacy protection based on the sensitivity of the data. This ensures that masking methods align with the regulations and the defined privacy thresholds. Furthermore, we maintain comprehensive documentation about the data masking process, including the rationale behind chosen techniques, data lineage and audit trails. This documentation provides verifiable evidence of our compliance efforts and enables easier auditing. Finally, we regularly review and update our data masking strategy to ensure ongoing compliance with evolving regulations and best practices.

Q 12. How do you balance the need for data security with the usability of masked data?

Balancing data security with usability is a crucial aspect of data masking. Overly aggressive masking can render data unusable for analysis, testing, or reporting. Conversely, insufficient masking compromises security. We address this challenge by employing a risk-based approach. First, we carefully assess the sensitivity of each data element and the potential impact of its exposure. Then, we select masking techniques that provide the necessary security without unduly compromising usability. For example, for less sensitive data, we might use character swapping or partial masking. For more sensitive data, we might use stronger techniques like tokenization or encryption, carefully considering the impact on downstream processes. Additionally, we might develop custom masking rules to handle specific data patterns and requirements. The goal is to find an optimal balance based on the intended use of the masked data, the risk assessment, and the regulatory requirements.

Q 13. Explain the concept of differential privacy in the context of data masking.

Differential privacy adds a layer of protection beyond traditional data masking. It focuses on adding carefully calibrated noise, making it difficult to infer individual data points, even when combining the released results with external knowledge. This limits how much any release can increase the risk of identifying an individual. Instead of completely replacing or obfuscating data, differential privacy typically adds random noise to aggregate statistics computed from the data, so that the result looks almost the same whether or not any one person’s record is included. The amount of noise is controlled by a privacy budget, commonly denoted epsilon: a smaller epsilon means more noise and stronger privacy, at the cost of accuracy. The key is to ensure that this noise doesn’t significantly impact the analytical value of the data. Think of it as adding a small amount of static to a radio broadcast. You still get the overall message but it’s more difficult to pinpoint individual words or sounds. Implementing differential privacy requires a solid understanding of statistics and of how the privacy budget trades privacy against accuracy.
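As a rough illustration, the sketch below applies the Laplace mechanism to a counting query. The function names and the sample data are assumptions; the noise is drawn as the difference of two exponential samples, which follows a Laplace distribution, and the scale 1/epsilon reflects that adding or removing one record changes a count by at most 1.

```python
import random


def laplace_noise(scale, rng):
    """Laplace(0, scale) sample: the difference of two i.i.d. exponential samples."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon, rng):
    """Noisy count; a counting query has sensitivity 1, so the scale is 1/epsilon."""
    true_count = sum(1 for record in records if predicate(record))
    return true_count + laplace_noise(1.0 / epsilon, rng)


rng = random.Random()  # unseeded: the noise must not be predictable
ages = [23, 35, 41, 52, 67, 44]

# True answer is 4; each call returns a slightly different noisy value.
print(private_count(ages, lambda age: age >= 40, epsilon=1.0, rng=rng))
print(private_count(ages, lambda age: age >= 40, epsilon=0.1, rng=rng))  # noisier
```

Note that every query spends part of the privacy budget, so repeatedly asking the same question and averaging the answers would erode the guarantee; real systems track the cumulative epsilon.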

Q 14. What are the key performance indicators (KPIs) for evaluating a data masking solution?

Evaluating a data masking solution requires a set of key performance indicators (KPIs). These KPIs fall into several categories: Security KPIs measure the effectiveness of the masking techniques. These include metrics like the probability of re-identification or the amount of information leakage. Performance KPIs reflect the efficiency and speed of the masking process. This involves metrics like the time taken to mask a dataset and the resource utilization during the masking process. Usability KPIs assess how well the masked data serves its intended purpose. Examples are the accuracy of analytical results using masked data, or the effectiveness of testing with the masked data. Finally, Compliance KPIs demonstrate the solution’s adherence to relevant data privacy regulations. These metrics track the percentage of data elements masked appropriately or the completeness of the audit trails. By tracking these KPIs, we can effectively evaluate the performance, efficiency, security, and compliance of a data masking solution.

Q 15. How do you handle dynamic data masking in a database?

Dynamic data masking in a database handles the masking of sensitive data on-the-fly, meaning the data is masked only when it’s accessed, rather than being permanently altered. This is crucial for maintaining data integrity while still allowing access for authorized users.

Imagine a scenario where you need to share a customer database with a third-party analytics team. You don’t want to expose sensitive information like credit card numbers or social security numbers. With dynamic data masking, we can configure the database to mask these fields only when they are accessed by the analytics team. The original data remains unchanged in the database, ensuring data integrity.

Implementation typically involves using database features like views, stored procedures, or built-in masking policies. For example, a view could be created that masks the credit card number field, replacing it with a series of Xs, while the underlying table remains unchanged. This approach is often less disruptive than static masking because no masked copy of the data has to be created and maintained. Built-in features such as Dynamic Data Masking in Azure SQL Database, and similar offerings from other vendors, often allow for granular control, even per-user or per-role. Advanced techniques involve using context-aware masking, which adjusts the level of masking based on the requesting user’s permissions.
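The view-based approach can be tried out with Python’s built-in `sqlite3` module. The table and view names here are hypothetical, and SQLite has no per-user permissions, so the sketch only shows the masking itself; in a server database you would additionally grant the analytics role access to the view but not to the base table.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, card_number TEXT)"
)
conn.execute(
    "INSERT INTO customers (name, card_number) VALUES (?, ?)",
    ("Alice Smith", "1234-5678-9012-3456"),
)

# The view masks on read; the stored value is never modified.
conn.execute(
    """
    CREATE VIEW customers_masked AS
    SELECT id,
           name,
           'XXXX-XXXX-XXXX-' || substr(card_number, -4) AS card_number
    FROM customers
    """
)

masked = conn.execute("SELECT card_number FROM customers_masked").fetchall()
raw = conn.execute("SELECT card_number FROM customers").fetchall()
print(masked)  # [('XXXX-XXXX-XXXX-3456',)]
print(raw)     # [('1234-5678-9012-3456',)]
```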

Q 16. What is the role of data masking in data governance?

Data masking plays a vital role in data governance by ensuring compliance with regulations like GDPR and CCPA, as well as protecting sensitive information from unauthorized access or breaches. It’s a key component of a broader data security strategy.

Think of it as a gatekeeper. Data governance aims to define who can access what data and under what circumstances. Data masking acts as a technical control, enforcing these access restrictions. By masking sensitive data, organizations can reduce the risk of data breaches, comply with regulations, and allow for legitimate data access without compromising confidentiality.

In a real-world scenario, a company might have various departments needing access to customer data. The marketing department might need access to names and email addresses for campaigns, but not credit card details. Data masking ensures only the necessary data is visible to each department, improving data security and minimizing risk while fulfilling data governance requirements. Regular audits and monitoring ensure that the masking policies are effective and updated according to evolving regulatory compliance needs.

Q 17. How do you manage the lifecycle of masked data?

Managing the lifecycle of masked data involves a structured approach to ensure data remains protected and compliant throughout its existence. This goes beyond simply applying a masking technique.

- Planning & Design: Identifying sensitive data, selecting appropriate masking techniques, and defining policies based on sensitivity levels and regulations.

- Implementation: Applying the chosen masking techniques, often using specialized tools, and documenting the process.

- Monitoring & Auditing: Regularly checking the effectiveness of the masking, ensuring policies are adhered to, and auditing the masked data to detect anomalies or potential leakage.

- Unmasking (if necessary): Establishing a controlled and secure process for unmasking data, only when absolutely necessary and with strict authorization and logging.

- Retirement & Deletion: When masked data is no longer needed, a secure process for its deletion or archiving is crucial. This requires careful consideration of retention policies.

Consider a scenario where masked data is used for testing. After the testing phase, it’s critical to delete the masked data or securely archive it according to the retention policy. Failure to do so can lead to unnecessary risk and potential compliance violations.

Q 18. Describe your experience with different types of masking algorithms.

My experience encompasses a range of masking algorithms, each with its strengths and weaknesses. The choice depends on the sensitivity of the data and the desired level of protection.

- Shuffling: Replacing values with other values from the same data set. Useful for preserving data distribution but less effective for highly sensitive data.

- Substitution: Replacing values with predefined substitutes (e.g., ‘X’ for characters, ‘0’ for numbers). Simple but can reveal data patterns.

- Character Masking: Replacing certain characters with other characters. For example, masking parts of an email address or phone number.

- Data Redaction: Removing or deleting portions of the data entirely. Effective but can lose some data context.

- Tokenization: Replacing sensitive data with non-sensitive tokens that can be later reversed. Offers stronger protection and reversibility, but adds complexity.

- Pseudonymization: Replacing identifying information with pseudonyms, allowing for analysis while preserving privacy. This often requires careful design to ensure privacy and data utility.

For example, in a customer database, credit card numbers might be tokenized, while names could be pseudonymized, and email addresses partially masked to protect against phishing attacks. The selection of the appropriate algorithm is critical to achieving the required level of security without hindering the utility of the data.

Q 19. How do you address the challenges of masking unstructured data?

Masking unstructured data presents unique challenges due to its lack of predefined format and structure. Traditional database-centric masking techniques don’t directly apply.

Approaches often involve techniques like:

- Data Classification: Identifying sensitive information within unstructured data (e.g., documents, emails) using natural language processing (NLP) or machine learning (ML) techniques. This step is crucial to understanding what needs masking.

- Regular Expressions: Using regular expressions to find and mask specific patterns of sensitive information (e.g., credit card numbers, social security numbers).

- Optical Character Recognition (OCR): If data is in image format, OCR can extract text, enabling subsequent masking techniques.

- Specialized Tools: Many data masking tools offer functionalities for handling unstructured data. These tools may leverage NLP or ML models for advanced identification and masking of sensitive information.

Consider a scenario involving sensitive information embedded in PDFs. We would utilize OCR to extract the text, then apply regular expressions or specialized tools to identify and mask sensitive data elements, finally rebuilding the document with the masked information. This requires significant attention to detail to prevent accidental disclosure of the original information.
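The regular-expression step might look like the following sketch, which runs on text that has already been extracted (for example by OCR). The patterns are deliberately simple assumptions: US-style social security numbers, 16-digit card numbers, and a loose email pattern. Real documents usually need broader patterns plus NLP-based detection for names and addresses.

```python
import re

# Simplified patterns for illustration; they will miss many real-world variants.
CARD = re.compile(r"\b(?:\d{4}[- ]?){3}\d{4}\b")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
EMAIL = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")


def mask_text(text):
    """Replace each detected pattern with a fixed placeholder."""
    text = CARD.sub("[CARD]", text)
    text = SSN.sub("[SSN]", text)
    text = EMAIL.sub("[EMAIL]", text)
    return text


sample = "Contact jane.doe@example.com, SSN 123-45-6789, card 1234 5678 9012 3456."
print(mask_text(sample))
# Contact [EMAIL], SSN [SSN], card [CARD].
```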

Q 20. Explain your understanding of k-anonymity and l-diversity.

k-anonymity and l-diversity are privacy-enhancing techniques that focus on limiting the re-identification risk in datasets. They are commonly used in data anonymization and de-identification.

k-anonymity ensures that each record in a dataset is indistinguishable from at least k-1 other records based on a set of quasi-identifiers (attributes that could potentially identify an individual). For instance, if k=10, every record must have at least 9 other identical records with regard to the quasi-identifiers. This prevents re-identification based on those attributes alone.

l-diversity builds upon k-anonymity by adding a diversity requirement. It stipulates that for each k-anonymous group, there should be at least l well-represented values for a sensitive attribute. This addresses the limitations of k-anonymity, which may still allow re-identification if there is homogeneity in sensitive attributes within a k-anonymous group. Imagine a hospital dataset. k-anonymity might group patients with the same age and zip code. l-diversity would ensure that within that group, there’s enough diversity in diagnoses to prevent inference of an individual’s condition.

Both are important for protecting sensitive information, but they are often insufficient in the presence of background knowledge. Advanced privacy models like t-closeness go further by requiring the distribution of the sensitive attribute within each group to stay close (within a threshold t) to its distribution in the whole dataset, which offers stronger privacy guarantees.
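Both properties can be measured directly on a table. The sketch below uses a made-up hospital dataset with generalized age and zip code as quasi-identifiers, and it checks the simplest variant of l-diversity (the number of distinct sensitive values per group); the function names are illustrative.

```python
from collections import defaultdict


def group_by_quasi_identifiers(rows, quasi_ids):
    """Group rows that share the same quasi-identifier values."""
    groups = defaultdict(list)
    for row in rows:
        groups[tuple(row[q] for q in quasi_ids)].append(row)
    return groups


def k_anonymity(rows, quasi_ids):
    """Largest k for which the table is k-anonymous: the smallest group size."""
    groups = group_by_quasi_identifiers(rows, quasi_ids)
    return min(len(group) for group in groups.values())


def distinct_l_diversity(rows, quasi_ids, sensitive):
    """Smallest number of distinct sensitive values found in any group."""
    groups = group_by_quasi_identifiers(rows, quasi_ids)
    return min(len({row[sensitive] for row in group}) for group in groups.values())


patients = [
    {"age": "30-39", "zip": "130**", "diagnosis": "flu"},
    {"age": "30-39", "zip": "130**", "diagnosis": "asthma"},
    {"age": "30-39", "zip": "130**", "diagnosis": "flu"},
    {"age": "40-49", "zip": "148**", "diagnosis": "diabetes"},
    {"age": "40-49", "zip": "148**", "diagnosis": "diabetes"},
]

print(k_anonymity(patients, ["age", "zip"]))                        # 2
print(distinct_l_diversity(patients, ["age", "zip"], "diagnosis"))  # 1
```

The table is 2-anonymous, yet the second group is homogeneous: anyone known to be aged 40-49 in zip 148** is revealed to have diabetes. That is exactly the gap l-diversity is meant to close.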

Q 21. How do you prevent data leakage during the masking process?

Preventing data leakage during the masking process is paramount. It requires a multi-layered approach that goes beyond simply applying a masking algorithm.

- Comprehensive Data Discovery: Thoroughly identifying all sensitive data elements before applying any masking techniques. This minimizes the risk of overlooking critical information.

- Robust Masking Algorithms: Choosing appropriate algorithms that provide strong protection against various attacks. Simply replacing data with ‘X’s is often inadequate.

- Regular Audits and Testing: Regularly auditing the masking process and testing its effectiveness. Penetration testing can reveal vulnerabilities in the process.

- Secure Logging and Monitoring: Tracking and monitoring all activities related to the masking process. This helps detect anomalies and potential leaks.

- Principle of Least Privilege: Granting only necessary access to masked data. Restricting access is essential to control who can view or use the data.

- Secure Storage & Handling: Ensuring that masked data is stored and handled securely, using encryption and access control mechanisms.

For instance, a rigorous audit might reveal an unexpected pattern in the masked data, indicating that the masking algorithm wasn’t effective enough. Identifying and addressing this weakness promptly is crucial to preventing data leakage.

Q 22. What is the difference between static and dynamic data masking?

Static and dynamic data masking differ fundamentally in when the masking occurs. Static masking applies transformations to data once, usually during a data copy or migration process. Think of it like creating a photocopy – the original remains untouched, but the copy has sensitive information obscured. This is efficient for one-time masking needs, such as creating a test or development database. Dynamic masking, on the other hand, masks data on-the-fly, each time it’s accessed. It’s like a magic trick – the original data remains intact, but anyone accessing it sees only the masked version. This is ideal for ongoing protection and is particularly useful in production environments where real-time data access is paramount.

For instance, if you’re creating a development copy of a customer database, static masking would be suitable. You’d transform credit card numbers into ‘XXXX-XXXX-XXXX-1234’ once, and that’s it. However, if you need to provide read-only access to a sensitive customer attribute in your production system, dynamic masking allows users to see only the masked value, even if the underlying data remains unchanged.

Q 23. How do you integrate data masking with other security tools?

Data masking integrates seamlessly with other security tools to create a robust defense. It often works hand-in-hand with Data Loss Prevention (DLP) tools. DLP systems can monitor data movement and identify sensitive information, while data masking can prevent that information from leaving the system or being seen by unauthorized users. Additionally, masking complements access control systems by limiting the scope of damage even if an unauthorized individual gains access. If a user doesn’t have proper permissions but somehow bypasses access controls, the data they see will be masked, further minimizing risk. Integration with audit and logging systems is also crucial for tracking masking activities and ensuring compliance. In some cases, the masking tool itself provides robust logging, which you then integrate into your overall SIEM (Security Information and Event Management) solution.

For example, we integrated our data masking solution with a DLP system by setting up alerts for any attempts to access sensitive fields. If a masked field was accessed without proper authorization, the DLP and masking tools would generate a security alert, which was then logged and acted on by our security team. This layered approach helps to identify and respond to security incidents much faster than relying on a single tool.

Q 24. Describe your experience with data masking in cloud environments.

My experience with data masking in cloud environments centers around leveraging the features offered by various cloud providers like AWS, Azure, and GCP. These platforms provide managed services and tools that simplify data masking implementation, significantly reducing the overhead of managing infrastructure. I have extensive experience in using these managed services for both static and dynamic masking scenarios, including setting up masking rules using their respective APIs and integrating these services with existing cloud-based security solutions.

In one project, we migrated a large on-premises database to AWS. To ensure data security during the migration and in the cloud environment, we employed AWS’s data masking capabilities. We defined masking rules for specific data types, like Personally Identifiable Information (PII), and scheduled the masking process as part of our migration pipeline. We configured the AWS service to automatically mask the data at rest and in transit, ensuring regulatory compliance and data security.

Q 25. Explain your experience with implementing data masking for various data types (e.g., PII, PHI).

Implementing data masking for different data types requires tailored strategies. PII (Personally Identifiable Information), such as names, addresses, and social security numbers, demands sophisticated masking techniques to comply with regulations like GDPR and CCPA. This often involves techniques like tokenization or substitution, ensuring the masked data is statistically similar to the original but doesn’t reveal identifying details. PHI (Protected Health Information), governed by HIPAA, requires even stricter controls, often involving encryption and rigorous access control measures in addition to masking. Other sensitive data types, like financial data or intellectual property, require a similar level of care based on their respective sensitivities and governing regulations. I’ve worked extensively on projects involving all these types of data, developing strategies to meet regulatory compliance requirements while maintaining data usability.

For example, when masking PHI, we used a combination of data masking and encryption. We’d mask the patient’s name and address using character substitution while encrypting their medical records. This allows authorized personnel to access the data after decryption, but only after passing the required authentication and authorization checks.

Q 26. How do you handle data masking in a multi-tenant environment?

Handling data masking in a multi-tenant environment requires robust isolation between tenants. Each tenant’s data must be masked independently, so that nothing leaks from one tenant to another. This usually means configuring the masking solution with tenant-specific masking rules and keys. The masking process also needs to be integrated with tenant management, so that users can reach only the data of their own tenant, and only in its masked form where masking applies. Proper access controls and tenant-specific masking configurations are crucial to prevent unauthorized access.

We once worked on a SaaS application with multiple clients, where each client’s data had to be masked independently to prevent data leakage. Our solution was to use one database per tenant and to implement role-based access control that restricted each user to their own tenant’s data, with masking applied on top of that.
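Tenant-specific rules and keys can be sketched as follows. This is a simplified illustration under stated assumptions: `derive_tenant_key`, `TenantMaskingConfig`, and `TenantMasker` are hypothetical names, and deriving keys from a single master key with HMAC stands in for what would normally be a key management service or a proper key-derivation function.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Dict, Optional, Set


def derive_tenant_key(master_key: bytes, tenant_id: str) -> bytes:
    """Derive a per-tenant key so tokens never match across tenants."""
    message = b"tenant:" + tenant_id.encode("utf-8")
    return hmac.new(master_key, message, hashlib.sha256).digest()


@dataclass
class TenantMaskingConfig:
    tenant_id: str
    masked_columns: Set[str]  # columns this tenant wants masked


class TenantMasker:
    def __init__(self, master_key: bytes, configs: Dict[str, TenantMaskingConfig]):
        self._master_key = master_key
        self._configs = configs

    def mask_row(
        self, tenant_id: str, row: Dict[str, Optional[str]]
    ) -> Dict[str, Optional[str]]:
        """Mask a row using only the rules and key of the given tenant."""
        config = self._configs.get(tenant_id)
        if config is None:
            # Fail closed: never return data for an unconfigured tenant.
            raise KeyError(f"no masking config for tenant {tenant_id!r}")
        key = derive_tenant_key(self._master_key, tenant_id)
        return {
            column: self._token(key, value)
            if column in config.masked_columns and value is not None
            else value
            for column, value in row.items()
        }

    @staticmethod
    def _token(key: bytes, value: str) -> str:
        return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]
```

Because each tenant has its own derived key, the same email address produces different tokens for different tenants, which prevents masked values from being correlated across tenant boundaries.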

Q 27. What are your strategies for auditing and monitoring masked data?

Auditing and monitoring masked data are crucial for ensuring the effectiveness and security of the masking process. This involves maintaining logs of all masking activities, including who masked the data, when it was masked, which techniques were used, and any exceptions or errors. Regular audits are necessary to verify the integrity of the masked data and ensure compliance with relevant regulations. We utilize automated tools and processes to collect these logs, analyze them for anomalies, and generate reports that highlight potential issues. This proactive approach allows us to identify and address potential data breaches or compliance violations before they can cause significant harm.

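Specifically, we implemented a system where every masking operation was logged, including the timestamp, the user ID, the type of masking used, and a reference to the values before and after masking. Storing raw pre-masking values in the log would itself expose sensitive data, so those references should be fingerprints or record identifiers rather than the original values. The logs were then analyzed to detect unusual patterns or unauthorized access attempts, and regular audits verified the accuracy and effectiveness of the masking process.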
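An audit trail of this kind might look like the sketch below. The field names, `mask_with_audit`, and `users_over_threshold` are hypothetical, and the log is an in-memory list for brevity. One design choice in this sketch is an assumption rather than something stated above: it records keyed fingerprints of the before and after values instead of the values themselves, since a log holding the originals would need the same protection as the source data.

```python
import hashlib
import hmac
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, List


@dataclass
class AuditEvent:
    timestamp: str
    user_id: str
    column: str
    technique: str
    record_id: str
    before_fingerprint: str
    after_fingerprint: str


def fingerprint(value: str, key: bytes) -> str:
    """Keyed hash that lets auditors compare values without seeing them."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def mask_with_audit(
    value: str,
    *,
    masker: Callable[[str], str],
    technique: str,
    user_id: str,
    column: str,
    record_id: str,
    audit_key: bytes,
    log: List[AuditEvent],
) -> str:
    """Mask a value and append one audit event describing the operation."""
    masked = masker(value)
    log.append(
        AuditEvent(
            timestamp=datetime.now(timezone.utc).isoformat(),
            user_id=user_id,
            column=column,
            technique=technique,
            record_id=record_id,
            before_fingerprint=fingerprint(value, audit_key),
            after_fingerprint=fingerprint(masked, audit_key),
        )
    )
    return masked


def users_over_threshold(log: List[AuditEvent], threshold: int) -> List[str]:
    """Very simple anomaly check: users with more operations than expected."""
    counts = Counter(event.user_id for event in log)
    return sorted(user for user, n in counts.items() if n > threshold)
```

A matching before and after fingerprint is a useful signal in its own right, because it indicates a value that passed through the masking step unchanged.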

Key Topics to Learn for Advanced Masking Techniques Interview

- Image Masking Fundamentals: Understanding different masking techniques like layer masks, vector masks, and clipping masks. Knowing the strengths and weaknesses of each approach.

- Advanced Selection Techniques: Mastering the use of tools like the pen tool, quick selection tool, and refine edge for precise selections, especially in complex imagery.

- Frequency Separation: Understanding the theory and practical application of frequency separation for non-destructive retouching and preserving image detail.

- Color Range Masking: Utilizing color range selection for efficient masking of specific color ranges within an image.

- Advanced Layer Blending Modes: Proficient use of blending modes to achieve seamless integration of masked elements.

- Practical Applications: Discussing real-world scenarios like product masking for e-commerce, image compositing for advertising, and photo retouching for editorial purposes.

- Problem-Solving Approaches: Demonstrating your ability to troubleshoot common masking challenges, such as halo effects, jagged edges, and color inconsistencies.

- Workflow Optimization: Explaining efficient workflows for complex masking tasks, including the use of actions and keyboard shortcuts.

- Software Proficiency: Showcasing expertise in relevant software like Photoshop, GIMP, or Affinity Photo.

Next Steps

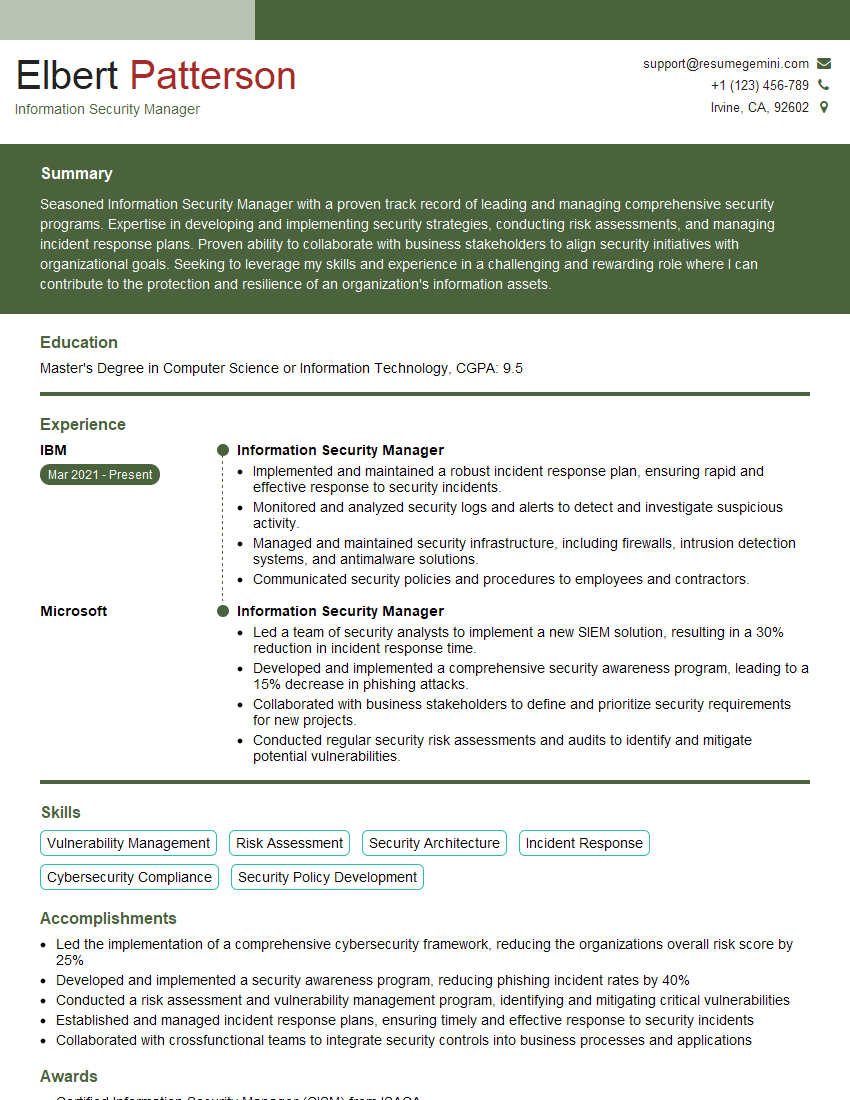

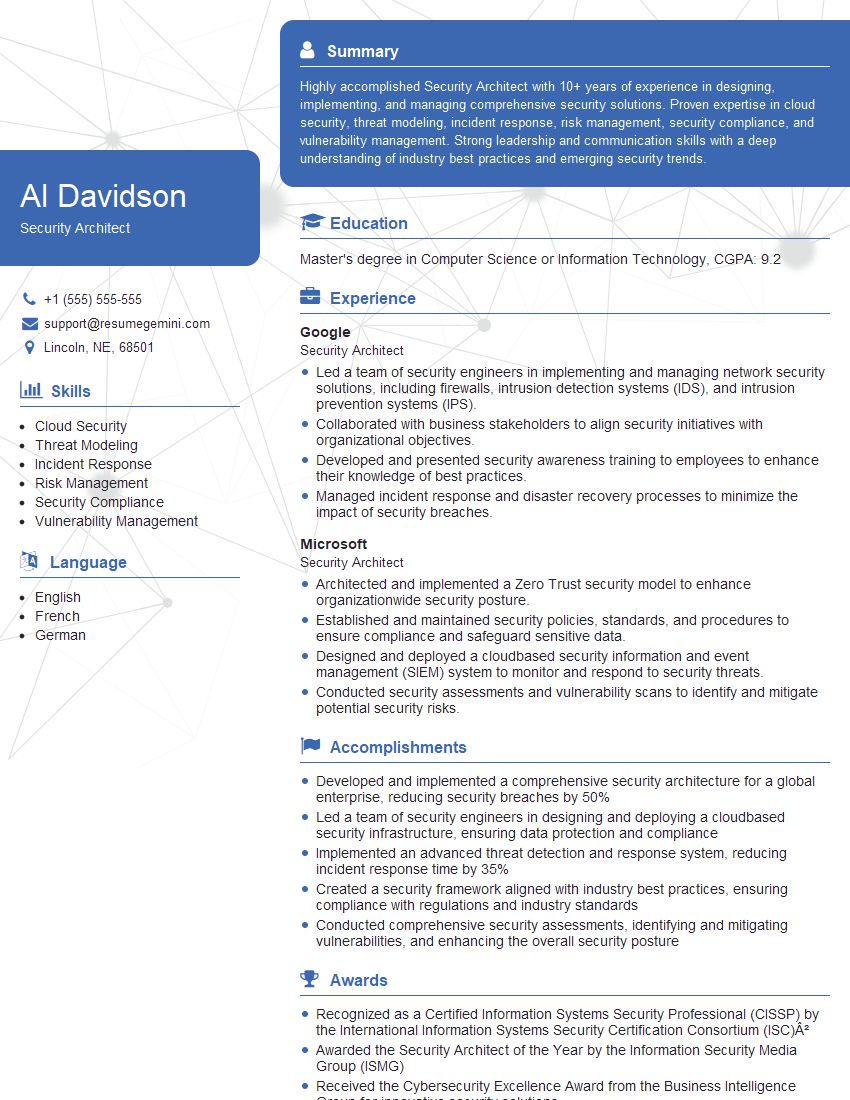

Mastering advanced masking techniques is crucial for career advancement in fields like graphic design, photography, and visual effects. A strong understanding of these techniques significantly enhances your value to potential employers. To maximize your job prospects, crafting an ATS-friendly resume is essential. ResumeGemini can help you build a professional and effective resume that highlights your skills and experience in Advanced Masking Techniques. Examples of resumes tailored to this specialization are available to help you get started.
