Preparation is the key to success in any interview. In this post, we’ll explore crucial Earth Observation Analysis interview questions and equip you with strategies to craft impactful answers. Whether you’re a beginner or a pro, these tips will elevate your preparation.

Questions Asked in Earth Observation Analysis Interview

Q 1. Explain the difference between passive and active remote sensing.

The core difference between passive and active remote sensing lies in where the measured energy comes from. Passive remote sensing detects energy that is naturally available: sunlight reflected by the Earth’s surface or thermal radiation emitted by it. Think of it like taking a photograph in daylight: you’re capturing the light that’s already there. Examples include the Landsat and MODIS sensors, which observe reflected sunlight in various wavelengths. Active remote sensing, on the other hand, emits its own energy and then measures the portion returned to the sensor. This is like shining a flashlight at an object and observing how much light bounces back. LiDAR (Light Detection and Ranging) and radar are prime examples: LiDAR is widely used for creating high-resolution elevation models, while radar can penetrate cloud cover and operate at night.

In essence, passive systems are ‘observers’ while active systems are ‘illuminators’ and ‘observers’. The choice between the two depends on the application, the desired resolution, and the atmospheric conditions.

Q 2. Describe the electromagnetic spectrum and its relevance to remote sensing.

The electromagnetic (EM) spectrum encompasses all types of electromagnetic radiation, ranging from very long radio waves to very short gamma rays. Remote sensing utilizes a portion of this spectrum, primarily the visible, near-infrared (NIR), shortwave infrared (SWIR), thermal infrared (TIR), and microwave regions. Each part of the spectrum interacts differently with the Earth’s surface features. For example:

- Visible light is what our eyes see and is crucial for identifying features based on color and texture.

- Near-infrared is highly sensitive to vegetation health.

- Thermal infrared detects heat emitted by objects, allowing us to map temperature variations and monitor things like volcanic activity or wildfires.

- Microwave radiation can penetrate clouds and even some vegetation, making it useful for all-weather monitoring.

The relevance to remote sensing is that different materials reflect, absorb, and emit different amounts of energy at specific wavelengths. By analyzing the spectral signature – the pattern of energy reflected or emitted across the spectrum – we can identify and classify various features on the Earth’s surface, such as vegetation types, soil types, water bodies, and urban areas. This spectral analysis forms the foundation of remote sensing applications.

Q 3. What are the different spatial resolutions available in satellite imagery?

Spatial resolution refers to the size of the smallest discernible detail in a satellite image. It’s essentially the level of ‘zoom’. High spatial resolution means you can see finer details, while low spatial resolution shows larger, more generalized features. There’s a wide range available:

- Very High Resolution (VHR): Less than 1 meter. Examples include imagery from GeoEye-1, WorldView-3, providing incredibly detailed images that can be used for applications like urban planning and precise mapping.

- High Resolution (HR): 1-10 meters. Examples include SPOT-6/7 (1.5 m panchromatic), PlanetScope (roughly 3 m), and the 10 m visible and near-infrared bands of Sentinel-2. This range suits detailed land cover classification, deforestation monitoring, and field-level agricultural assessments.

- Medium Resolution (MR): 10-100 meters. Landsat 8 (30 m multispectral, 15 m panchromatic) falls into this range and is widely used for regional land cover classification, monitoring deforestation, and agricultural assessments.

- Low Resolution (LR): Greater than 100 meters. Sensors like MODIS (250 m to 1 km) offer this resolution and are ideal for regional to global scale monitoring of environmental changes like vegetation growth and sea surface temperature, providing a broad overview of large areas.

The choice of spatial resolution depends entirely on the application. If you need to identify individual trees, you’ll need VHR. For monitoring large-scale climate change effects, LR might suffice.

Q 4. How do atmospheric effects influence remote sensing data?

Atmospheric effects significantly influence remote sensing data by scattering and absorbing electromagnetic radiation as it travels between the sensor and the Earth’s surface. This can lead to reduced image clarity and inaccuracies in spectral measurements. Several atmospheric components play a role:

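- Scattering: This occurs when radiation interacts with atmospheric gas molecules and particles (aerosols, water droplets), causing it to change direction. Rayleigh scattering, caused by molecules much smaller than the wavelength, affects shorter wavelengths such as blue most strongly (its strength scales roughly with the inverse fourth power of wavelength). Mie scattering, caused by particles comparable in size to the wavelength such as dust, smoke, and haze, depends far less on wavelength. Both lead to hazy images and reduced contrast.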

- Absorption: Certain gases like ozone and water vapor absorb specific wavelengths of radiation, leading to gaps or distortions in the spectral signature of the surface features.

These effects need to be accounted for through atmospheric correction techniques to obtain accurate and reliable information about the Earth’s surface.
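To make the wavelength dependence of Rayleigh scattering concrete, here is a minimal sketch that assumes the idealized inverse-fourth-power law. The function name and the band-center wavelengths are illustrative choices, not values taken from a specific sensor.

```python
def rayleigh_relative_scattering(wavelength_nm, reference_nm=550.0):
    """Scattering strength relative to a reference wavelength (lambda^-4 law)."""
    return (reference_nm / wavelength_nm) ** 4

# Approximate band centers, chosen only for illustration.
blue = rayleigh_relative_scattering(450.0)
red = rayleigh_relative_scattering(650.0)
nir = rayleigh_relative_scattering(850.0)

print(f"blue scatters about {blue / red:.1f}x more strongly than red")
print(f"red scatters about {red / nir:.1f}x more strongly than near-infrared")
```

This is why haze tends to be most visible in the blue band and why atmospheric correction usually matters more at shorter wavelengths.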

Q 5. Explain the concept of radiometric resolution and its importance.

Radiometric resolution refers to the sensitivity of a sensor to detect differences in electromagnetic energy. It determines the number of distinct levels of brightness a sensor can record for each pixel in an image. Higher radiometric resolution means more subtle variations in brightness can be detected, resulting in a more detailed and nuanced image. For example, an 8-bit image can represent 256 different brightness levels (2^8), while a 16-bit image can represent 65,536 levels (2^16).

The importance of radiometric resolution lies in its ability to capture subtle variations in surface features, enabling more accurate classification and analysis. Higher radiometric resolution is especially important for detecting subtle changes in vegetation health, identifying different types of minerals, and monitoring thermal anomalies.
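The effect of bit depth can be illustrated with a small sketch. It assumes a simple linear quantization between zero and a maximum radiance; the function names and radiance values are hypothetical and real sensors use their own calibration.

```python
def brightness_levels(bit_depth):
    """Number of distinct digital numbers a sensor with this bit depth can record."""
    return 2 ** bit_depth

def quantize(radiance, max_radiance, bit_depth):
    """Map a radiance in [0, max_radiance] to an integer digital number (linear scaling)."""
    top = brightness_levels(bit_depth) - 1
    scaled = round(radiance / max_radiance * top)
    return max(0, min(top, scaled))

# Two slightly different radiances (hypothetical units and values).
a, b, max_radiance = 100.0, 100.3, 400.0

for bits in (8, 12):
    print(bits, "bit:", quantize(a, max_radiance, bits), quantize(b, max_radiance, bits))
```

With these values the 8-bit sensor assigns both radiances the same digital number, while the 12-bit sensor separates them, which is the practical meaning of higher radiometric resolution.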

Q 6. What are the various methods for atmospheric correction?

Various methods exist for atmospheric correction, aiming to remove or minimize the effects of atmospheric scattering and absorption on remote sensing data. These methods range from simple to complex, depending on the data and desired accuracy:

- Dark Object Subtraction (DOS): A simple method assuming the darkest pixel in an image represents the atmospheric contribution. It’s relatively easy to implement but can be inaccurate.

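- Empirical Line Methods: These methods fit a linear relationship between the radiance (or digital numbers) recorded by the sensor and the known surface reflectance of reference targets, usually bright and dark calibration sites measured on the ground, and then apply that relationship to the whole image.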

- Radiative Transfer Models (RTMs): Sophisticated methods like MODTRAN or 6S simulate the passage of radiation through the atmosphere, providing highly accurate correction but requiring detailed atmospheric information.

- Look-up Tables (LUTs): Pre-calculated tables that link atmospheric conditions to correction factors. They require significant pre-processing but can improve efficiency during correction.

The choice of atmospheric correction method depends on the specific application, the available data, and the required accuracy. RTMs are generally preferred for high-accuracy applications, while simpler methods might suffice for less demanding tasks.
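Dark Object Subtraction is simple enough to sketch in a few lines. The version below assumes that the darkest pixel in a band should have near-zero reflectance, so its value is treated as the atmospheric contribution. The band is a plain nested list with made-up digital numbers; in practice an array library would be used and the correction applied per band.

```python
def dark_object_subtraction(band):
    """Subtract the darkest pixel value of a single band from every pixel."""
    dark_value = min(min(row) for row in band)
    return [[pixel - dark_value for pixel in row] for row in band]

# Hypothetical digital numbers for one band of a tiny 3x3 image.
band = [
    [52, 60, 75],
    [48, 90, 120],
    [55, 66, 200],
]

corrected = dark_object_subtraction(band)
print(corrected)
```

A common refinement is to use a low percentile instead of the absolute minimum, which makes the estimate less sensitive to noisy or defective pixels.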

Q 7. Describe different image processing techniques used in Earth Observation.

Image processing techniques are crucial for enhancing, analyzing, and extracting information from Earth Observation data. They span a wide range of methods, including:

- Geometric Correction: Correcting for distortions in the image caused by sensor geometry, Earth’s curvature, and satellite movement. This involves processes like orthorectification, which ensures accurate spatial representation.

- Atmospheric Correction: (discussed earlier) Removing or minimizing the effects of the atmosphere on the image.

- Image Enhancement: Techniques like histogram equalization, contrast stretching, and filtering are used to improve the visual quality and interpretability of images. For example, sharpening can help delineate boundaries.

- Image Classification: Assigning pixels to different categories (e.g., land cover types) based on their spectral signatures. Supervised classification (using training data) and unsupervised classification (finding patterns in the data) are common methods.

- Change Detection: Analyzing images acquired at different times to identify changes in land cover, urban expansion, or other phenomena. This can involve simple image differencing or more sophisticated methods.

- Object-Based Image Analysis (OBIA): Grouping pixels into meaningful objects (e.g., buildings, trees) based on spectral and spatial characteristics, allowing for more complex analysis than pixel-based methods.

These techniques are essential for transforming raw satellite imagery into meaningful information that can be used for a vast array of applications, from environmental monitoring and urban planning to precision agriculture and disaster response.

Q 8. What is image classification and explain different classification methods.

Image classification in Earth Observation is the process of assigning predefined categories or classes to pixels or groups of pixels in a satellite or aerial image. Think of it like sorting a box of LEGOs – each brick represents a pixel, and we need to sort them into categories like ‘red brick,’ ‘blue brick,’ etc. This allows us to map land cover (forests, urban areas), identify objects (buildings, vehicles), or monitor environmental changes.

Supervised Classification: This is the most common method. We ‘train’ the algorithm by providing it with labeled samples – that is, pixels of known classes. The algorithm then learns the spectral characteristics (the unique ‘color’ signature) of each class and assigns new, unlabeled pixels to the most likely class. Examples include Maximum Likelihood Classification (MLC) and Support Vector Machines (SVM).

Unsupervised Classification: Here, the algorithm identifies natural groupings of pixels based on their spectral similarity without any prior labeling. This is useful for exploratory analysis when we don’t have prior knowledge of the classes. K-means clustering is a popular unsupervised method.

Object-Based Image Analysis (OBIA): This combines image segmentation with classification. Instead of classifying individual pixels, OBIA classifies image objects, which are groups of spatially connected pixels with similar characteristics (explained in more detail in the next answer). This is often more accurate for complex landscapes.

For example, in a supervised classification of agricultural land, we might train the algorithm with samples of corn fields, soybean fields, and fallow land. The algorithm would then use this training data to automatically classify the entire image, generating a map showing the distribution of these crop types.
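As a rough illustration of the supervised workflow, the sketch below uses a minimum-distance (nearest-centroid) classifier, which is a simpler stand-in for MLC or SVM rather than either of those methods. The two-band (red, near-infrared) reflectance values and class names are invented for the example.

```python
import math

def class_means(training):
    """Average the training spectra of each class, band by band."""
    means = {}
    for label, spectra in training.items():
        n_bands = len(spectra[0])
        means[label] = [
            sum(s[b] for s in spectra) / len(spectra) for b in range(n_bands)
        ]
    return means

def classify(pixel, means):
    """Assign the pixel to the class whose mean spectrum is closest (Euclidean)."""
    return min(means, key=lambda label: math.dist(pixel, means[label]))

# Hypothetical (red, NIR) reflectance samples per class.
training = {
    "corn": [(0.05, 0.50), (0.06, 0.55)],
    "soybean": [(0.04, 0.40), (0.05, 0.42)],
    "fallow": [(0.20, 0.25), (0.22, 0.28)],
}

means = class_means(training)
print(classify((0.21, 0.26), means))
print(classify((0.055, 0.52), means))
```

Applying `classify` to every pixel of an image would produce the kind of crop map described above; more capable classifiers also model the spread of each class, not just its mean.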

Q 9. Explain the concept of object-based image analysis (OBIA).

Object-based image analysis (OBIA) moves beyond pixel-by-pixel classification by considering the spatial context and relationships between pixels. Instead of treating each pixel individually, OBIA first segments the image into meaningful objects (or patches). These objects are then classified based on their spectral, spatial, and contextual characteristics. Imagine classifying a forest – with pixel-based methods, you might misclassify individual trees, while OBIA would group them into a single ‘forest’ object based on shape, texture, and spectral signature. This leads to better accuracy, especially in heterogeneous landscapes.

The process typically involves:

- Image Segmentation: This step divides the image into meaningful objects. Common algorithms include watershed segmentation and region growing.

- Object Feature Extraction: Each object is characterized by a range of features, including spectral properties (e.g., mean, variance, etc.), shape parameters (e.g., area, perimeter, compactness), and textural measures.

- Object Classification: These features are then used to classify each object into pre-defined classes using machine learning techniques like Support Vector Machines (SVMs) or Random Forests.

For example, in urban planning, OBIA can accurately delineate individual buildings from satellite images, while pixel-based methods would struggle to differentiate them from neighboring structures or roads.

Q 10. What are the advantages and disadvantages of using LiDAR data?

LiDAR (Light Detection and Ranging) data provides highly accurate three-dimensional information about the Earth’s surface. It’s like having a detailed 3D model of the landscape.

Advantages:

- High Accuracy: LiDAR provides precise measurements of elevation and surface characteristics.

- Penetration Capability: Some laser pulses pass through gaps in the vegetation canopy and reach the ground, offering information about the ground surface even under dense forests.

- Detailed 3D Models: LiDAR creates detailed point clouds and digital elevation models (DEMs), enabling the creation of highly accurate 3D models.

- Applications: LiDAR is crucial for various applications, such as mapping terrain, assessing flood risk, monitoring deforestation, and creating highly accurate city models.

Disadvantages:

- Cost: LiDAR data acquisition is expensive compared to other remote sensing methods.

- Data Processing: Processing large LiDAR datasets requires specialized software and expertise.

- Weather Sensitivity: LiDAR data collection can be affected by adverse weather conditions.

- Limited Coverage: Acquiring LiDAR data for large areas can be time-consuming.

For instance, imagine assessing damage after a hurricane. LiDAR data can pinpoint the precise location and extent of flooding and building damage, providing critical information for disaster response and recovery.

Q 11. How do you handle cloud cover in satellite imagery?

Cloud cover is a major challenge in satellite imagery analysis because clouds obscure the ground surface, making it impossible to see the features of interest. Several strategies can be employed to handle this:

Image Selection: The simplest approach is to choose images with minimal cloud cover. This requires access to a large archive of images and often involves compromising on acquisition dates.

Cloud Masking: This involves identifying and removing cloud-covered areas from the image. Algorithms can detect clouds based on their spectral signatures (e.g., high reflectance in the visible and near-infrared bands combined with low temperatures in the thermal bands). The cloud-masked image will have gaps where the clouds were.

Cloud Removal: More sophisticated techniques attempt to ‘fill in’ cloud-covered areas using interpolation or data from neighboring clear pixels. These techniques can introduce artifacts, requiring careful validation.

Multi-temporal Image Analysis: Combining images from different dates can help overcome cloud cover issues. If a feature is obscured by clouds in one image, it may be visible in another. Sophisticated techniques can fuse these multiple images to improve overall scene coverage.

For example, in monitoring deforestation, cloud cover often obscures large parts of the Amazon rainforest in satellite images. Cloud masking techniques are used to remove the cloudy areas and focus analysis on the visible forest regions. Combining images from multiple dates can significantly improve the coverage of cloud-free forest area.
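One simple way to combine multiple dates is a per-pixel median of the cloud-free observations. The sketch below assumes that a cloud mask has already been applied and that masked pixels are marked with `None`; the reflectance values are made up.

```python
from statistics import median

CLOUD = None  # marker used in this sketch for pixels flagged by a cloud mask

def median_composite(stack):
    """Per-pixel median of the cloud-free values across all dates."""
    rows, cols = len(stack[0]), len(stack[0][0])
    result = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            clear = [img[r][c] for img in stack if img[r][c] is not CLOUD]
            # A pixel that is cloudy on every date stays masked.
            out_row.append(median(clear) if clear else CLOUD)
        result.append(out_row)
    return result

# Three hypothetical acquisition dates of the same 2x2 area.
date1 = [[0.30, CLOUD], [0.10, CLOUD]]
date2 = [[0.32, 0.50], [CLOUD, CLOUD]]
date3 = [[0.31, 0.54], [0.12, CLOUD]]

composite = median_composite([date1, date2, date3])
print(composite)
```

The median is a common choice because it is also robust to residual cloud or shadow pixels that the mask missed.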

Q 12. What are the various data formats used in remote sensing?

Remote sensing data comes in various formats, reflecting the sensor type, data processing steps, and intended use.

Raster Formats: These formats represent data as a grid of pixels, such as GeoTIFF (.tif), ERDAS Imagine (.img), and HDF (.hdf). GeoTIFF is particularly common because it stores geospatial information alongside the pixel values.

Vector Formats: These formats represent data as points, lines, or polygons. Shapefiles (.shp) are a widely used vector format for storing geographic features. Vector data is often created to represent the results of image analysis such as classified polygons.

Point Cloud Formats: These formats store three-dimensional point data, frequently used with LiDAR data. Common formats include LAS (.las) and XYZ (.xyz) files.

Specialized Formats: Many sensors have their own proprietary data formats, requiring specialized software for processing.

Choosing the right format depends on the application. For example, GeoTIFF is well-suited for storing and sharing imagery due to its wide support and geospatial metadata. Shapefiles are often used to represent categorized areas resulting from image classification.

Q 13. Explain the concept of georeferencing and its importance.

Georeferencing is the process of assigning real-world coordinates in a defined coordinate reference system (for example, latitude and longitude, or projected easting and northing) to each pixel in a remotely sensed image or other geospatial data. It’s like putting a map grid on top of a photograph, so we know exactly where each point in the image is located on the Earth’s surface.

Importance:

- Spatial Accuracy: Georeferencing ensures that data from different sources can be accurately overlaid and analyzed together. This is crucial for applications such as land-cover mapping, where we need to know exactly where different land cover types are located.

- Data Integration: Georeferencing allows for seamless integration of remote sensing data with other geospatial data, such as topographic maps, census data, or GPS tracks.

- Spatial Analysis: It enables various spatial analyses, such as measuring distances, areas, and calculating spatial relationships between features.

Imagine creating a map of deforestation using satellite images. If the images are not georeferenced, we cannot accurately determine the precise location of deforestation events or measure their extent. Georeferencing allows us to link the image to a real-world coordinate system, enabling accurate spatial analysis and mapping.
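For a north-up image, the link between pixel positions and map coordinates is often stored as six affine coefficients. The sketch below follows the ordering used by GDAL-style geotransforms (origin x, pixel width, row rotation, origin y, column rotation, pixel height); the coefficient values are hypothetical and assume a projected coordinate system in meters.

```python
def pixel_to_map(col, row, gt):
    """Convert pixel (col, row) to map (x, y) with a six-coefficient affine transform."""
    x = gt[0] + col * gt[1] + row * gt[2]
    y = gt[3] + col * gt[4] + row * gt[5]
    return x, y

# Hypothetical transform: upper-left corner at (500000, 4600000), 30 m pixels.
# The pixel height is negative because row numbers increase southward.
geotransform = (500000.0, 30.0, 0.0, 4600000.0, 0.0, -30.0)

corner = pixel_to_map(10, 20, geotransform)      # upper-left corner of the pixel
center = pixel_to_map(10.5, 20.5, geotransform)  # center of the same pixel
print(corner, center)
```

Once every image carries such a transform (plus the coordinate reference system it refers to), layers from different sources can be overlaid and distances or areas measured in real-world units.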

Q 14. How do you assess the accuracy of remote sensing data?

Accuracy assessment in remote sensing is crucial for evaluating the reliability of the data and the results of image analysis. It involves comparing the classified or extracted information with known ground truth data. This is similar to checking your answers on a test to see how many are correct.

Common methods include:

Error Matrix (Confusion Matrix): This matrix compares the classified values to the reference data, showing the number of correctly and incorrectly classified pixels for each class. From this, we can calculate overall accuracy (the share of all pixels classified correctly), producer’s accuracy (the share of reference pixels of a class that were classified as that class, which reflects omission errors), user’s accuracy (the share of pixels labeled as a class that truly belong to it, which reflects commission errors), and the kappa coefficient, which measures the agreement between classification and reference data while accounting for chance agreement.

Root Mean Square Error (RMSE): This is often used to assess the accuracy of continuous data, such as elevation models derived from LiDAR data. A lower RMSE indicates higher accuracy.

Field Surveys and Ground Truth Data: Accuracy assessment usually requires independent ground truth data collected through field surveys or other sources. These data provide the ‘correct’ values to compare with the remotely sensed data.

For example, in classifying land cover types, we might compare our classified map with field observations from GPS-recorded points across the study area. The error matrix would then show the percentage of correctly and incorrectly classified pixels for each land cover type.
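The standard accuracy measures can be computed directly from an error matrix. This sketch assumes rows hold the classified (map) labels and columns hold the reference labels; the two-class counts are invented for illustration.

```python
def accuracy_metrics(matrix):
    """matrix[i][j] = pixels classified as class i whose reference class is j."""
    n = len(matrix)
    total = sum(sum(row) for row in matrix)
    diagonal = [matrix[i][i] for i in range(n)]
    row_totals = [sum(matrix[i]) for i in range(n)]
    col_totals = [sum(matrix[i][j] for i in range(n)) for j in range(n)]

    overall = sum(diagonal) / total
    users = [diagonal[i] / row_totals[i] for i in range(n)]      # commission side
    producers = [diagonal[j] / col_totals[j] for j in range(n)]  # omission side
    # Agreement expected by chance, from the row and column marginals.
    expected = sum(row_totals[i] * col_totals[i] for i in range(n)) / total ** 2
    kappa = (overall - expected) / (1 - expected)
    return overall, producers, users, kappa

# Hypothetical counts for two classes (e.g. forest, non-forest).
matrix = [
    [45, 5],
    [10, 40],
]

overall, producers, users, kappa = accuracy_metrics(matrix)
print(overall, producers, users, kappa)
```

Reporting the per-class producer's and user's accuracies alongside overall accuracy matters because a high overall figure can hide a poorly mapped minority class.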

Q 15. What are the different types of map projections and their uses?

Map projections are mathematical transformations that translate the three-dimensional Earth’s surface onto a two-dimensional map. No projection is perfectly accurate; all involve some distortion of area, shape, distance, or direction. The choice of projection depends heavily on the intended use.

- Cylindrical Projections (e.g., Mercator): These project the Earth onto a cylinder. The Mercator projection is useful for navigation because it preserves direction, but it drastically distorts area at higher latitudes (Greenland appears much larger than it actually is). Example: The Mercator projection, commonly used in web maps.

- Conical Projections (e.g., Albers Equal-Area): Project the Earth onto a cone. They are good for mid-latitude regions; Albers preserves area while keeping shape distortion low near its standard parallels. Example: Albers Equal-Area Conic projection is often used for mapping large areas, like continents.

- Planar Projections (e.g., Azimuthal Equidistant): Project the Earth onto a plane, typically tangent at a single point (pole or equator). Useful for mapping polar regions or showing great-circle distances from a central point. Example: Azimuthal equidistant projection is useful for showing air routes.

- Pseudocylindrical Projections (e.g., Robinson): A compromise projection that attempts to minimize distortions in several aspects. It’s often used for world maps as it provides a visually appealing representation. Example: Robinson projection, frequently seen in atlases.

Choosing the right projection is crucial. For instance, a land cover analysis requiring accurate area calculations would benefit from an equal-area projection, while a navigation app needs a projection that preserves direction, even at the cost of distorted areas.
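The area distortion of the Mercator projection can be quantified with the spherical forward equations. The sketch below assumes a spherical Earth with a mean radius, which is an approximation; the function names are illustrative.

```python
import math

EARTH_RADIUS_M = 6371000.0  # mean spherical radius, an approximation

def mercator_forward(lat_deg, lon_deg, radius=EARTH_RADIUS_M):
    """Spherical Mercator: longitude maps linearly, latitude is stretched poleward."""
    lat, lon = math.radians(lat_deg), math.radians(lon_deg)
    x = radius * lon
    y = radius * math.log(math.tan(math.pi / 4 + lat / 2))
    return x, y

def mercator_area_scale(lat_deg):
    """Factor by which areas are inflated at a given latitude (1 / cos^2)."""
    return 1.0 / math.cos(math.radians(lat_deg)) ** 2

for lat in (0, 45, 60, 72):
    print(lat, round(mercator_area_scale(lat), 2))
```

At 60 degrees latitude areas are inflated by a factor of four, and by roughly ten at 72 degrees, which is why area statistics should be computed in an equal-area projection instead.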

Q 16. Explain the concept of spatial autocorrelation.

Spatial autocorrelation describes the degree to which the values of a variable at nearby locations are related. In simpler terms, it measures how much nearby locations tend to be alike. Positive spatial autocorrelation means nearby locations have similar values (e.g., areas of high population density tend to cluster together). Autocorrelation near zero means values show no spatial pattern (e.g., a randomly scattered distribution of tree species), while negative autocorrelation means neighboring values tend to differ, as on a checkerboard.

Understanding spatial autocorrelation is critical in Earth Observation analysis because it influences the reliability of statistical analyses. Ignoring it can lead to incorrect conclusions. For example, if we are studying soil erosion, a high spatial autocorrelation indicates that the erosion rate in a particular area will likely be similar to the rate in neighboring areas. We need to account for this when designing sampling strategies and conducting statistical analyses to avoid pseudoreplication (treating spatially correlated data points as independent). Statistics such as Moran’s I quantify spatial autocorrelation, and spatial lag or spatial error regression models account for it explicitly; Geographically Weighted Regression (GWR) addresses the related problem of relationships that vary from place to place.
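A common way to quantify spatial autocorrelation is Moran's I. The sketch below computes it for a small raster, assuming binary weights with rook adjacency (cells sharing an edge are neighbors); other weighting schemes are equally valid, and the grids are toy data.

```python
def morans_i(grid):
    """Moran's I with rook adjacency (shared edges) and binary weights."""
    rows, cols = len(grid), len(grid[0])
    values = [v for row in grid for v in row]
    n = len(values)
    mean = sum(values) / n
    denominator = sum((v - mean) ** 2 for v in values)

    numerator = 0.0
    weight_sum = 0
    for r in range(rows):
        for c in range(cols):
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < rows and 0 <= cc < cols:
                    numerator += (grid[r][c] - mean) * (grid[rr][cc] - mean)
                    weight_sum += 1
    return (n / weight_sum) * (numerator / denominator)

clustered = [[1, 1, 0, 0] for _ in range(4)]
checkerboard = [[(r + c) % 2 for c in range(4)] for r in range(4)]

print(round(morans_i(clustered), 3))
print(round(morans_i(checkerboard), 3))
```

Values near +1 indicate clustering of similar values, values near 0 indicate no spatial pattern, and values near -1 indicate that neighbors tend to differ.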

Q 17. What is a digital elevation model (DEM) and its applications?

A Digital Elevation Model (DEM) is a digital representation of the Earth’s surface elevation, typically stored as a raster grid in which each cell holds an elevation value. It’s often created from remote sensing data like LiDAR, stereo pairs of satellite imagery, or radar interferometry. Beyond displaying elevation, DEMs serve as the input for a wide range of terrain analyses.

Applications:

- Hydrological Modeling: Predicting floodplains, drainage basins, and water flow.

- Slope and Aspect Analysis: Determining steepness and direction of slopes for understanding erosion, landslide risk, and habitat suitability.

- Volume Calculations: Estimating earthworks, rock volume, or changes in glacier mass.

- Visualizations: Creating 3D terrain visualizations, fly-throughs, or shaded relief maps for improved understanding and communication.

- Line-of-Sight Analysis: Determining visibility between points, crucial for planning communication towers or infrastructure.

For instance, a DEM can be used to assess the impact of deforestation on landslide risk by analyzing slope stability changes after tree removal. The fine detail provided by a high-resolution DEM allows for a much more accurate assessment compared to traditional methods.
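Slope analysis is one of the simplest DEM derivatives to sketch. The version below uses plain central differences on an interior cell, which is a simplification: many GIS packages use a weighted 3x3 neighborhood instead. The elevation grids and cell size are hypothetical.

```python
import math

def slope_degrees(dem, row, col, cell_size):
    """Slope at an interior cell from central differences of its four neighbors."""
    dz_dx = (dem[row][col + 1] - dem[row][col - 1]) / (2 * cell_size)
    dz_dy = (dem[row + 1][col] - dem[row - 1][col]) / (2 * cell_size)
    return math.degrees(math.atan(math.hypot(dz_dx, dz_dy)))

# Hypothetical elevations in meters on a 10 m grid.
ramp = [
    [0.0, 10.0, 20.0],
    [0.0, 10.0, 20.0],
    [0.0, 10.0, 20.0],
]
flat = [[100.0] * 3 for _ in range(3)]

print(slope_degrees(ramp, 1, 1, cell_size=10.0))
print(slope_degrees(flat, 1, 1, cell_size=10.0))
```

The ramp rises 10 m for every 10 m of horizontal distance, so its slope is 45 degrees; note that the cell size must be in the same units as the elevations for the result to be meaningful.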

Q 18. How do you perform change detection using remote sensing data?

Change detection using remote sensing involves identifying and quantifying changes in the Earth’s surface over time by comparing images acquired at different dates. Several methods exist, each with strengths and weaknesses:

- Image differencing: Subtracting pixel values of two images to highlight changes. Simple, but sensitive to atmospheric effects and radiometric inconsistencies.

- Image ratioing: Dividing pixel values of two images to reduce the effects of illumination variations. More robust to illumination differences than simple differencing.

- Post-classification comparison: Classifying both images separately and then comparing the classification maps to identify areas of change. This approach allows for more complex change detection.

- Vegetation Indices: Analyzing changes in vegetation indices like NDVI (Normalized Difference Vegetation Index) over time to detect changes in vegetation cover.

For example, to monitor deforestation, we might compare Landsat images from different years. Image differencing or post-classification comparison could highlight areas where forest cover has been lost. Vegetation indices would help quantitatively assess changes in biomass.

Preprocessing steps, such as atmospheric correction and geometric correction, are critical to ensure accurate change detection. The choice of method depends on the type of change, data availability, and desired level of detail.
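Combining a vegetation index with image differencing gives a compact change-detection sketch. It assumes the two dates are already co-registered and atmospherically corrected; the reflectance values and the change threshold of -0.2 are hypothetical and would need tuning for real data.

```python
def ndvi(red, nir):
    """Normalized Difference Vegetation Index for one pixel."""
    total = nir + red
    return (nir - red) / total if total else 0.0

def vegetation_loss_mask(red_t1, nir_t1, red_t2, nir_t2, threshold=-0.2):
    """True where NDVI fell by more than the (hypothetical) threshold between dates."""
    mask = []
    for r1, n1, r2, n2 in zip(red_t1, nir_t1, red_t2, nir_t2):
        row = []
        for a, b, c, d in zip(r1, n1, r2, n2):
            row.append(ndvi(c, d) - ndvi(a, b) < threshold)
        mask.append(row)
    return mask

# One row of two pixels: the first stays forested, the second is cleared.
red_t1, nir_t1 = [[0.05, 0.05]], [[0.50, 0.50]]
red_t2, nir_t2 = [[0.05, 0.20]], [[0.50, 0.25]]

mask = vegetation_loss_mask(red_t1, nir_t1, red_t2, nir_t2)
print(mask)
```

Differencing an index rather than raw band values reduces sensitivity to overall brightness differences between the two acquisitions, although seasonal effects still need to be considered.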

Q 19. What are the ethical considerations in using Earth Observation data?

Ethical considerations in using Earth Observation data are crucial. The widespread availability and power of this data demand responsible usage:

- Privacy: High-resolution imagery can potentially reveal sensitive information about individuals or properties, raising privacy concerns. Data anonymization and appropriate access controls are essential.

- Bias and Fairness: Algorithms used to process and analyze Earth Observation data can perpetuate existing biases, leading to unfair or discriminatory outcomes. Careful algorithm design and validation are necessary.

- Data Security: Protecting Earth Observation data from unauthorized access, modification, or destruction is vital, especially for sensitive national security or environmental monitoring applications.

- Data Transparency and Accessibility: Promoting open access to Earth Observation data while also ensuring data security and responsible use is a key challenge.

- Environmental Justice: Ensuring that the benefits and risks of Earth Observation technologies are distributed fairly across different communities and avoiding exacerbation of existing inequalities.

For instance, using high-resolution imagery to monitor individuals’ movements without consent is a clear ethical violation. Similarly, algorithms used for land-use planning should be carefully scrutinized to avoid biases that may disadvantage certain groups.

Q 20. Explain the concept of time series analysis in remote sensing.

Time series analysis in remote sensing involves analyzing a sequence of remotely sensed images acquired over time to monitor changes in Earth’s surface features. It allows us to track dynamic processes like deforestation, urban sprawl, glacial retreat, or seasonal crop growth.

Methods include analyzing temporal trends in pixel values, vegetation indices, or classified land cover data. Techniques such as change detection, trend analysis, and seasonal decomposition are employed to understand the patterns and magnitudes of changes over time. For example, we might use a time series of MODIS imagery to study changes in vegetation greenness across a large region over a decade, revealing seasonal patterns and long-term trends. Advanced techniques involve using time series models (e.g., ARIMA) to forecast future changes or to decompose the time series to isolate different contributing factors.

The primary benefit of time series analysis is its capacity to monitor gradual changes that might be missed using only two or a few images. This continuous monitoring allows for proactive intervention and informed decision-making in resource management and environmental protection.
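The simplest form of trend analysis is an ordinary least-squares line fitted through a per-pixel or per-region time series. The sketch below assumes one value per year; the NDVI values are invented to show a gradual decline.

```python
def linear_trend(times, values):
    """Least-squares slope and intercept of values against times."""
    n = len(times)
    t_mean = sum(times) / n
    v_mean = sum(values) / n
    covariance = sum((t - t_mean) * (v - v_mean) for t, v in zip(times, values))
    variance = sum((t - t_mean) ** 2 for t in times)
    slope = covariance / variance
    intercept = v_mean - slope * t_mean
    return slope, intercept

# Hypothetical annual mean NDVI for one region.
years = [2010, 2011, 2012, 2013, 2014]
annual_ndvi = [0.70, 0.68, 0.66, 0.64, 0.62]

slope, intercept = linear_trend(years, annual_ndvi)
print(f"NDVI change per year: {slope:.3f}")
```

For sub-annual data, the seasonal cycle would normally be removed or modeled first, since otherwise it dominates the fitted trend.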

Q 21. Describe your experience with specific remote sensing software (e.g., ENVI, ERDAS IMAGINE, ArcGIS).

I have extensive experience with various remote sensing software packages, including ENVI, ArcGIS, and QGIS. In my previous role, I utilized ENVI for atmospheric correction, image classification (e.g., supervised and unsupervised classification methods), and spectral analysis of hyperspectral data for precision agriculture applications. Specifically, I used ENVI’s tools to create and analyze vegetation indices from multispectral satellite data (Landsat and Sentinel). I also have experience with ArcGIS for geospatial data management, spatial analysis (e.g., overlay analysis, proximity analysis), and creating thematic maps. My proficiency in ArcGIS included integrating remote sensing data with other geographic datasets like vector and raster data to support complex analyses. I’ve used QGIS extensively for open-source geospatial data analysis, creating custom scripts for automating processes and batch processing large amounts of raster data. In one project, I used QGIS to generate elevation profiles from DEMs and integrate those with hydrological models for flood risk assessment. This required working with various plugins and integrating the processing results with external data sources.

Q 22. How do you handle large datasets in remote sensing?

Handling massive remote sensing datasets requires a multi-pronged approach focusing on efficient data storage, processing, and analysis. Think of it like organizing a massive library – you can’t just throw everything on the floor and expect to find anything.

- Cloud Computing: Platforms like Google Earth Engine, AWS, and Azure provide scalable cloud-based storage and processing capabilities. This allows for parallel processing of large datasets, significantly reducing processing time. For example, I’ve used Google Earth Engine to process terabytes of Landsat imagery for deforestation monitoring across the Amazon, a task impossible on a single workstation.

- Data Compression and Formats: Using efficient data formats like GeoTIFF with appropriate compression (e.g., LZW, DEFLATE) significantly reduces storage space and improves I/O speed. Choosing the right format is crucial to balance data size and access speed.

- Big Data Tools: Tools like Apache Spark, Hadoop, and Python libraries (e.g., Dask, Vaex) are designed to handle large datasets efficiently. These tools allow for distributed computing, enabling parallel processing across multiple machines.

- Data Subsetting and Pre-processing: Before analysis, focusing on a specific area of interest or time period through subsetting significantly reduces the data volume. Pre-processing steps like cloud masking and atmospheric correction can also streamline later analysis.

The key is to choose the right combination of tools and techniques based on the specific dataset size, available resources, and the desired analysis.
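Whatever platform is used, large rasters are usually processed in tiles rather than loaded whole. The sketch below only generates the tile windows; the function name, raster dimensions, and tile size are hypothetical, and reading each window would be done with whichever raster library is available.

```python
def iter_windows(height, width, tile_size):
    """Yield (row_offset, col_offset, n_rows, n_cols) windows covering the raster."""
    for row_off in range(0, height, tile_size):
        for col_off in range(0, width, tile_size):
            yield (
                row_off,
                col_off,
                min(tile_size, height - row_off),  # edge tiles may be smaller
                min(tile_size, width - col_off),
            )

# Hypothetical raster of 2500 rows by 4000 columns, processed in 1024-pixel tiles.
windows = list(iter_windows(2500, 4000, 1024))
print(len(windows), windows[-1])
```

Because each window is independent, the same list can be handed to a pool of workers or a distributed framework, which is what makes tiling a natural fit for parallel processing.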

Q 23. Explain your experience with data visualization and presentation.

Data visualization is crucial for communicating insights from Earth Observation analysis. It’s about turning raw data into compelling narratives. I’m proficient in various visualization tools and techniques to effectively communicate complex findings to both technical and non-technical audiences.

- Software: I’m experienced with ArcGIS Pro, QGIS, and ENVI for creating maps, charts, and other visualizations. I also use Python libraries like Matplotlib, Seaborn, and Plotly for creating custom visualizations.

- Techniques: I utilize a range of techniques including choropleth maps (for showing spatial variation of a variable), time-series plots (for showing changes over time), and interactive dashboards (for exploring data dynamically). For example, I created an interactive dashboard showing changes in urban sprawl over several decades using Landsat data. Users could zoom in on specific areas, choose different time periods, and even compare different land-use categories.

- Presentation Skills: I tailor my presentations to the audience, employing clear and concise language, visually appealing graphics, and effective storytelling to communicate complex findings in a digestible way. I have presented my research at numerous conferences and workshops, receiving positive feedback on both the content and the presentation style.

Q 24. Describe a challenging project involving Earth Observation analysis and how you overcame it.

One challenging project involved mapping agricultural drought severity using Sentinel-2 data in a region with persistent cloud cover. Clear, cloud-free imagery was hard to obtain, which degraded the accuracy of our vegetation indices, and those indices are critical for assessing drought conditions.

To overcome this, we implemented a two-pronged approach:

- Cloud Masking and Temporal Smoothing: We used cloud masking algorithms to identify and remove cloudy pixels from the Sentinel-2 images. Then, we applied temporal smoothing to fill the resulting gaps, estimating each missing value from clear observations of the same pixel on nearby dates. This approach leverages the temporal continuity of satellite data to reconstruct a mostly cloud-free time series. It’s akin to putting together a jigsaw puzzle, where some pieces are missing, but you can still get a good overall picture.

- Data Fusion: We combined the Sentinel-2 observations with independent sources such as rainfall records and soil moisture maps. Because these sources do not depend on a clear view of the surface, they gave us a more robust drought indicator than vegetation indices alone.

This combined approach significantly improved the accuracy and reliability of our drought maps, demonstrating the importance of creative problem-solving when working with imperfect datasets.
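As a rough illustration of the gap-filling step, here is a minimal sketch that uses plain linear interpolation in time for a single pixel. This is an assumed, simplified stand-in, not the method used in the project, and the function name `fill_gaps` is hypothetical. Cloud-masked observations are represented as `None`, and acquisition days are assumed to be strictly increasing.

```python
from typing import List, Optional, Sequence


def fill_gaps(days: Sequence[float],
              values: Sequence[Optional[float]]) -> List[Optional[float]]:
    """Fill None entries by linear interpolation between valid neighbours in time.

    Gaps before the first or after the last valid observation are left as None,
    because this sketch deliberately does not extrapolate.
    """
    if len(days) != len(values):
        raise ValueError("days and values must have the same length")

    valid = [i for i, v in enumerate(values) if v is not None]
    filled: List[Optional[float]] = list(values)

    # Walk over consecutive pairs of valid observations and fill what lies between.
    for left, right in zip(valid, valid[1:]):
        span = days[right] - days[left]  # assumed > 0 (strictly increasing days)
        for i in range(left + 1, right):
            weight = (days[i] - days[left]) / span
            filled[i] = values[left] + weight * (values[right] - values[left])
    return filled


if __name__ == "__main__":
    acquisition_days = [0, 5, 10, 20]
    ndvi = [0.2, None, 0.6, None]  # None marks a cloud-masked observation
    print(fill_gaps(acquisition_days, ndvi))
```

Linear interpolation is the simplest choice; smoother filters or harmonic fits are common alternatives when the time series is long enough to support them.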

Q 25. What are your preferred methods for validating remote sensing results?

Validating remote sensing results is paramount to ensure their reliability. My validation methods vary depending on the application, but generally involve a combination of approaches:

- Ground Truthing: This involves collecting in-situ measurements, such as field surveys or ground-based instrument readings, to compare with the remote sensing data. It’s a fundamental approach, providing the ‘ground truth’ against which our results are compared.

- Comparison with Existing Datasets: I often compare my results with existing datasets from other sources, such as high-resolution imagery, census data, or data from other remote sensing missions. Agreement across datasets increases confidence in results.

- Accuracy Assessment Metrics: For classifications, I build a confusion matrix and report overall accuracy, producer’s accuracy, user’s accuracy, and the kappa coefficient. For continuous estimates such as biomass or surface temperature, I use root mean squared error (RMSE). These metrics provide a statistical measure of how well our results agree with the reference data.

- Uncertainty Analysis: I also consider sources of uncertainty, like sensor limitations, atmospheric effects, and variations in data quality, and incorporate that into the interpretation and reporting of results. Knowing the limits of your data is crucial for making responsible interpretations.

A rigorous validation process ensures the credibility of our findings and enhances the impact of our research.
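To make the accuracy metrics concrete, here is a minimal sketch that derives them from a confusion matrix. It assumes rows are reference (ground truth) classes and columns are mapped classes; some texts use the transposed layout, in which case producer’s and user’s accuracy swap. The function names are illustrative.

```python
from math import sqrt
from typing import Dict, List, Sequence


def classification_metrics(matrix: Sequence[Sequence[int]]) -> Dict[str, object]:
    """Accuracy metrics from a square confusion matrix.

    Assumed layout: matrix[i][j] = samples of reference class i mapped as class j.
    """
    n = len(matrix)
    total = sum(sum(row) for row in matrix)
    if total == 0:
        raise ValueError("confusion matrix is empty")

    row_totals = [sum(row) for row in matrix]                            # reference totals
    col_totals = [sum(matrix[r][c] for r in range(n)) for c in range(n)]  # mapped totals
    diagonal = [matrix[i][i] for i in range(n)]

    overall = sum(diagonal) / total
    # Producer's accuracy: share of each reference class that was mapped correctly.
    producers = [d / t if t else float("nan") for d, t in zip(diagonal, row_totals)]
    # User's accuracy: share of each mapped class that is correct on the ground.
    users = [d / t if t else float("nan") for d, t in zip(diagonal, col_totals)]

    # Cohen's kappa: agreement beyond what chance alone would produce.
    expected = sum(r * c for r, c in zip(row_totals, col_totals)) / (total * total)
    kappa = (overall - expected) / (1 - expected) if expected < 1 else float("nan")

    return {"overall": overall, "producers": producers, "users": users, "kappa": kappa}


def rmse(reference: Sequence[float], predicted: Sequence[float]) -> float:
    """Root mean squared error for continuous estimates."""
    if len(reference) != len(predicted) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    return sqrt(sum((p - r) ** 2 for r, p in zip(reference, predicted)) / len(reference))


if __name__ == "__main__":
    confusion = [[40, 10],
                 [5, 45]]
    print(classification_metrics(confusion))
    print(rmse([2.0, 4.0], [1.0, 5.0]))
```

In an interview it is worth stating which matrix orientation you assume, since mixing up producer’s and user’s accuracy is a common mistake.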

Q 26. How familiar are you with different satellite platforms (e.g., Landsat, Sentinel, MODIS)?

I have extensive experience with various satellite platforms, including Landsat, Sentinel, and MODIS. Each platform has its own strengths and weaknesses depending on the application.

- Landsat: Provides a consistent archive going back to 1972, making it ideal for studying long-term environmental change. Its 30 m multispectral resolution suits many applications, offering a good balance between detail and coverage. I’ve used Landsat extensively for land cover mapping and change detection.

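- Sentinel: The Sentinel family covers several sensor types, including Sentinel-1 (C-band radar) and Sentinel-2 (optical, with bands at up to 10 m resolution). The combination of fine spatial detail and frequent revisits makes it excellent for monitoring dynamic phenomena like floods, wildfires, and agricultural growth. Sentinel’s free and open access also makes it very appealing. I’ve used Sentinel data for precision agriculture applications and disaster response.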

- MODIS: Strictly speaking, MODIS is an instrument flown on the Terra and Aqua satellites rather than a platform. Its primary advantage is near-daily global coverage thanks to a broad swath width, making it well suited to large-scale monitoring of processes such as vegetation dynamics, sea surface temperature, and snow cover. Its coarser spatial resolution (250 m to 1 km) restricts it to regional or global-scale analyses.

My familiarity extends to understanding the characteristics of each sensor (spectral bands, spatial resolution, temporal resolution), processing workflows, and common data formats. I can select the most appropriate platform for the given task, understanding the trade-offs between resolution, coverage, and data availability.

Q 27. What are your future aspirations in the field of Earth Observation?

My future aspirations in Earth Observation involve leveraging the latest advancements in remote sensing technology and artificial intelligence to address pressing global challenges.

- AI and Machine Learning: I aim to further develop my expertise in applying AI and machine learning algorithms to improve the accuracy and efficiency of remote sensing data analysis, automating tasks and extracting more meaningful insights from complex datasets.

- Data Fusion and Integration: I want to explore novel approaches to integrating data from diverse sources (remote sensing, in-situ measurements, crowdsourced data) to create more comprehensive and accurate representations of Earth systems.

- Environmental Monitoring and Sustainability: I am particularly interested in applying my skills to contribute to environmental monitoring efforts, focusing on issues such as climate change, deforestation, and natural disaster response. I believe Earth Observation holds the key to better understanding and addressing these critical issues.

Ultimately, my goal is to contribute to the development of innovative solutions that leverage the power of Earth Observation to improve our understanding of the planet and support sustainable decision-making.

Key Topics to Learn for Earth Observation Analysis Interview

- Remote Sensing Fundamentals: Understanding the electromagnetic spectrum, sensor types (optical, radar, lidar), and data acquisition techniques. Practical application: Analyzing multispectral imagery to identify land cover changes.

- Image Processing and Analysis: Mastering techniques like atmospheric correction, geometric correction, and image classification. Practical application: Developing algorithms for automated feature extraction from satellite imagery.

- Geographic Information Systems (GIS): Proficiency in GIS software (e.g., ArcGIS, QGIS) for data visualization, spatial analysis, and map creation. Practical application: Integrating Earth Observation data with other geospatial datasets for comprehensive analysis.

- Data Analysis and Interpretation: Developing strong skills in statistical analysis, data mining, and interpretation of results within the context of Earth Observation applications. Practical application: Using time series analysis to monitor deforestation rates.

- Specific EO Applications: Gaining expertise in a specific area of interest, such as precision agriculture, disaster response, environmental monitoring, or climate change studies. Practical application: Applying EO techniques to assess the impact of a natural disaster.

- Programming and Scripting: Familiarity with programming languages like Python, R, or MATLAB for automating tasks, analyzing large datasets, and developing custom algorithms. Practical application: Creating a script to process and analyze a large volume of satellite imagery data.

- Cloud Computing Platforms: Understanding cloud-based platforms (e.g., Google Earth Engine, AWS) for processing and analyzing large Earth Observation datasets. Practical application: Leveraging cloud computing resources for efficient processing of high-resolution imagery.

Next Steps

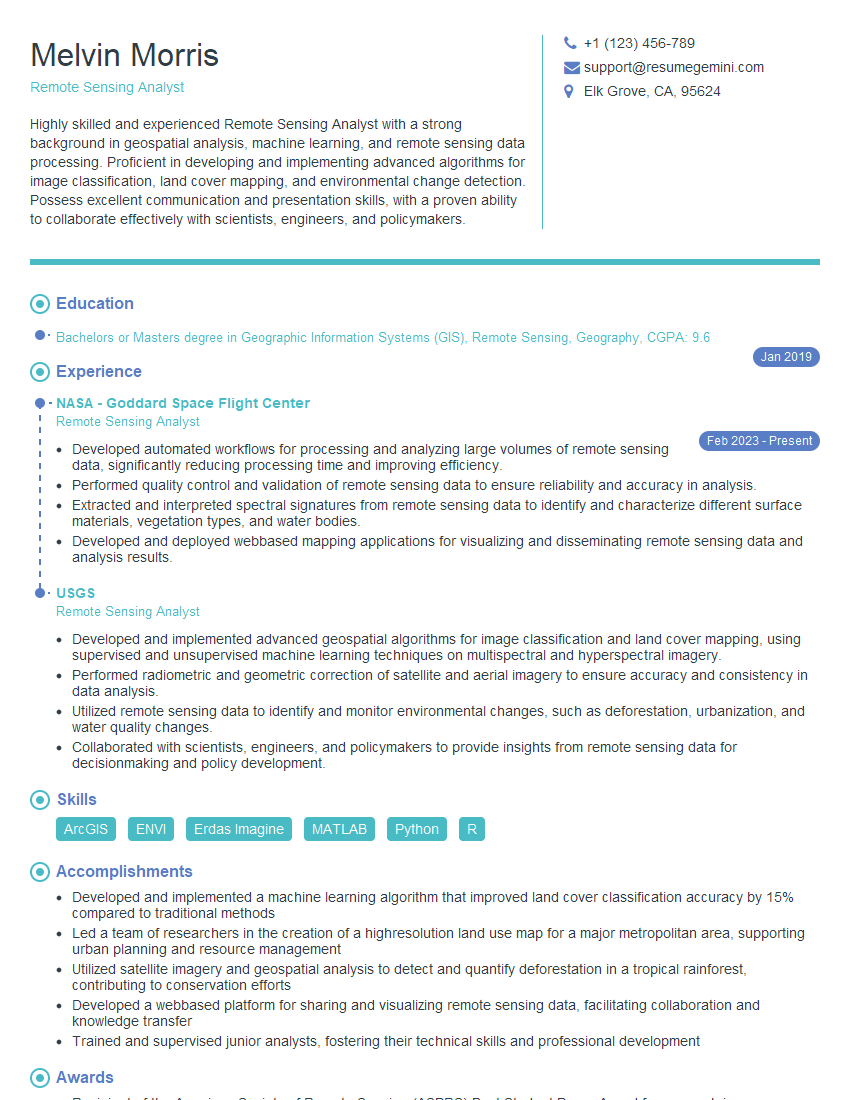

Mastering Earth Observation Analysis opens doors to exciting and impactful careers in various sectors. To maximize your job prospects, a well-crafted, ATS-friendly resume is crucial. ResumeGemini is a trusted resource that can help you build a professional and compelling resume tailored to showcase your skills and experience effectively. Examples of resumes specifically tailored to Earth Observation Analysis are available to help guide you. Invest time in creating a strong resume – it’s your first impression on potential employers.
