The right preparation can turn an interview into an opportunity to showcase your expertise. This guide to Error Propagation Analysis interview questions is your ultimate resource, providing key insights and tips to help you ace your responses and stand out as a top candidate.

Questions Asked in Error Propagation Analysis Interview

Q 1. Explain the difference between random and systematic errors.

Random and systematic errors are two fundamental types of errors encountered in measurements. Random errors are unpredictable fluctuations that vary from one measurement to the next, even under identical conditions. Think of it like slightly misjudging the starting point on a ruler each time you make a measurement. They usually follow a normal (Gaussian) distribution, meaning that small errors are more common than large ones, and positive and negative errors occur with equal probability. Systematic errors, on the other hand, are consistent and repeatable biases. They are caused by a flaw in the experimental setup or a fault in the measuring instrument. Imagine a scale that consistently reads 1kg too high – every measurement will be off by the same amount. The key difference lies in their predictability and behavior: random errors are inherently unpredictable, while systematic errors are repeatable and, in principle, correctable once identified.

- Random Error Example: Repeatedly measuring the length of a table using a ruler might yield slightly different results due to inconsistencies in human judgment or minor vibrations.

- Systematic Error Example: A poorly calibrated thermometer consistently gives readings that are 2°C lower than the actual temperature.

Q 2. Describe the method of propagation of uncertainty for addition and subtraction.

For addition and subtraction, uncertainty propagates by adding the absolute uncertainties. This is based on the principle that the maximum possible error in the sum or difference is the sum of the individual maximum possible errors. Consider two measurements, x and y, with associated uncertainties Δx and Δy, respectively. If z = x + y or z = x – y, the uncertainty in z, denoted as Δz, is given by:

Δz = Δx + Δy

This is a conservative estimate, as it assumes that the individual errors are always in the same direction, thus maximizing the total error. In practice, the errors often partially cancel each other, so this method provides an upper bound on the uncertainty. When the errors are independent and random, the more common choice is to add them in quadrature: Δz = √((Δx)² + (Δy)²).

Example: If you measure a length of 10.2 ± 0.1 cm and add it to another length of 5.5 ± 0.2 cm, the total length is 15.7 cm, and the uncertainty is 0.1 cm + 0.2 cm = 0.3 cm. Therefore, the result is expressed as 15.7 ± 0.3 cm.
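The arithmetic in this example can be checked with a few lines of Python. The sketch below is illustrative only (the function names are made up for this guide) and also shows the quadrature combination that applies when the two errors are independent.

```python
import math

def add_worst_case(dx, dy):
    """Upper bound: absolute uncertainties add linearly."""
    return dx + dy

def add_quadrature(dx, dy):
    """Independent random errors: absolute uncertainties add in quadrature."""
    return math.hypot(dx, dy)

x, dx = 10.2, 0.1   # cm
y, dy = 5.5, 0.2    # cm

total = x + y
worst = add_worst_case(dx, dy)
quad = add_quadrature(dx, dy)
print(f"sum = {total:.1f} cm, worst case ±{worst:.1f} cm, quadrature ±{quad:.2f} cm")
```

The quadrature value is smaller than the worst-case value because independent errors are unlikely to all push in the same direction at once.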

Q 3. How do you propagate uncertainty for multiplication and division?

Uncertainty propagation for multiplication and division involves using relative uncertainties. The relative uncertainty is the ratio of the absolute uncertainty to the measured value. For multiplication and division, the relative uncertainty of the result is approximately the sum of the individual relative uncertainties. Let’s consider z = x * y or z = x / y. The relative uncertainty in z is given by:

Δz/z ≈ Δx/x + Δy/y

This formula provides a good approximation, particularly when the relative uncertainties are small (typically less than 10%). Again, this is a conservative estimate that often overestimates the true uncertainty.

Example: If you measure the area of a rectangle by multiplying length (10.2 ± 0.1 cm) and width (5.5 ± 0.2 cm), the area is 56.1 cm². The relative uncertainty is (0.1/10.2) + (0.2/5.5) ≈ 0.046. The absolute uncertainty is 0.046 * 56.1 ≈ 2.6 cm². Thus the area is approximately 56.1 ± 2.6 cm².
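As a rough check of the rectangle example, the following sketch computes the relative uncertainty both ways: the conservative linear sum used above and, as an assumption for comparison, the quadrature sum that is appropriate when the length and width errors are independent.

```python
import math

length, d_length = 10.2, 0.1   # cm
width, d_width = 5.5, 0.2      # cm

area = length * width

# Conservative rule: relative uncertainties add linearly.
rel_worst = d_length / length + d_width / width

# Independent errors: relative uncertainties add in quadrature.
rel_quad = math.hypot(d_length / length, d_width / width)

print(f"area = {area:.1f} cm^2")
print(f"worst case: ±{rel_worst * area:.1f} cm^2 ({rel_worst:.1%})")
print(f"quadrature: ±{rel_quad * area:.1f} cm^2 ({rel_quad:.1%})")
```

The width term dominates both results because 0.2/5.5 is almost four times larger than 0.1/10.2.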

Q 4. What is the significance of the Gaussian distribution in error propagation?

The Gaussian distribution (or normal distribution) plays a crucial role in error propagation because many random errors follow this distribution. The central limit theorem states that the suitably normalized sum (or average) of a large number of independent random variables with finite variance tends toward a Gaussian distribution, largely regardless of their individual distributions. Since many measurements are affected by many small, independent random errors, their combined effect often approximates a Gaussian distribution. This makes the Gaussian distribution a fundamental model in calculating confidence intervals and propagating uncertainties. Using the standard deviation (a measure of the spread of the Gaussian distribution) allows us to quantify the uncertainty in our measurements and their propagated uncertainties.

In error propagation, knowing the standard deviation of the underlying Gaussian distribution allows us to estimate the probability of a measured value falling within a certain range.

Q 5. Explain how to propagate uncertainty for more complex functions (e.g., exponential, logarithmic).

Propagating uncertainty for complex functions like exponentials and logarithms requires using the method of partial derivatives. This approach generalizes the addition/subtraction and multiplication/division rules. If z = f(x, y), then the uncertainty in z, Δz, can be estimated using:

(Δz)² ≈ (∂f/∂x)²(Δx)² + (∂f/∂y)²(Δy)²

where ∂f/∂x and ∂f/∂y are the partial derivatives of f with respect to x and y, respectively. These partial derivatives represent the sensitivity of the function z to changes in x and y. The squared terms add in quadrature, which assumes the errors in x and y are independent.

Example: If z = e^x and x has uncertainty Δx, then ∂z/∂x = e^x. Therefore, Δz ≈ e^x Δx.

Similarly, for z = ln(x), ∂z/∂x = 1/x, resulting in Δz ≈ (Δx)/x.
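When the derivatives are tedious to work out by hand, they can be approximated numerically. The sketch below is one possible approach, not a standard library routine: the helper name `propagate` and the step size are assumptions, and the inputs are treated as independent. It reproduces the exponential and logarithm results quoted above.

```python
import math

def propagate(f, values, sigmas, rel_step=1e-6):
    """First-order propagation for independent inputs.

    Partial derivatives are estimated with central differences.
    """
    variance = 0.0
    for i, (v, s) in enumerate(zip(values, sigmas)):
        h = rel_step * max(abs(v), 1.0)
        up = list(values)
        down = list(values)
        up[i] = v + h
        down[i] = v - h
        dfdx = (f(*up) - f(*down)) / (2 * h)
        variance += (dfdx * s) ** 2
    return math.sqrt(variance)

x, dx = 2.0, 0.05
dz_exp = propagate(math.exp, [x], [dx])   # compare with e^x * dx
dz_log = propagate(math.log, [x], [dx])   # compare with dx / x

print(dz_exp, math.exp(x) * dx)
print(dz_log, dx / x)
```

Each printed pair should agree to several decimal places, which gives a quick sanity check on hand-derived formulas.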

Q 6. What are the limitations of linear error propagation?

Linear error propagation, which uses formulas like those derived for addition/subtraction and multiplication/division, relies on a fundamental assumption: that the relationship between the measured quantities and the result is approximately linear within the range of uncertainties. This is not always true. For non-linear functions, linear error propagation can significantly misestimate the actual uncertainty, and it ignores any shift in the mean of the result. The approximation breaks down when uncertainties are large compared to the measured values or when the function has significant curvature within the uncertainty range. In such cases, more sophisticated methods like Monte Carlo simulation are needed for accurate uncertainty propagation.

Example: For z = x², a change Δx in x produces an exact change of 2xΔx + (Δx)² in z, while the linear approximation keeps only 2xΔx. The neglected term (Δx)² is negligible when Δx/x is small but becomes comparable to the linear term when the relative uncertainty in x is large, so the linear approximation falls short.
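To put numbers on this, the sketch below compares the first-order estimate for z = x² with the exact standard deviation, under the added assumption that x is Gaussian with mean μ and standard deviation σ. In that case Var(x²) = 4μ²σ² + 2σ⁴ and the mean of x² is μ² + σ², not μ².

```python
import math

def sigma_linear(mu, sigma):
    """First-order estimate for z = x**2: |dz/dx| * sigma."""
    return abs(2 * mu) * sigma

def sigma_exact(mu, sigma):
    """Exact standard deviation of x**2 when x ~ Normal(mu, sigma)."""
    return math.sqrt(4 * mu**2 * sigma**2 + 2 * sigma**4)

mu = 1.0
for rel in (0.01, 0.1, 0.5):
    sigma = rel * mu
    lin = sigma_linear(mu, sigma)
    exact = sigma_exact(mu, sigma)
    bias = sigma**2  # shift of the mean of x**2 away from mu**2
    print(f"rel. uncertainty {rel:.0%}: linear {lin:.4f}, "
          f"exact {exact:.4f}, mean shift {bias:.4f}")
```

At 1% relative uncertainty the two estimates are nearly identical; at 50% the linear estimate is noticeably too small and the mean shift is no longer negligible.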

Q 7. When is Monte Carlo simulation a suitable method for error propagation?

Monte Carlo simulation is particularly useful when dealing with complex functions, large uncertainties, or correlations between input variables where linear error propagation is insufficient. It’s a computational technique that involves repeatedly generating random samples from the probability distributions of the input variables (e.g., using a Gaussian distribution for normally distributed errors). For each sample set, the function is evaluated, and the resulting distribution of outputs gives an estimate of the uncertainty in the result. This method can handle non-linear relationships and correlations between input variables accurately, providing a robust estimate of the overall uncertainty.

Example: If you have a model with many parameters, each with uncertainty, and the model involves complex non-linear calculations, Monte Carlo simulation can efficiently estimate the overall uncertainty of the model’s output. You generate many random combinations of input parameter values (within their uncertainties), run the model for each combination, and analyze the distribution of the output.
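A minimal Monte Carlo sketch, using only the Python standard library, might look like the following. It assumes independent Gaussian inputs and reuses the rectangle from Q 3 as a stand-in for "the model"; the helper name `monte_carlo`, the sample count, and the seed are arbitrary choices for illustration.

```python
import random
import statistics

def monte_carlo(f, means, sigmas, n_samples=50_000, seed=0):
    """Sample independent Gaussian inputs; return (mean, std) of f's output."""
    rng = random.Random(seed)
    outputs = []
    for _ in range(n_samples):
        sample = [rng.gauss(m, s) for m, s in zip(means, sigmas)]
        outputs.append(f(*sample))
    return statistics.fmean(outputs), statistics.stdev(outputs)

def area(length, width):
    return length * width

mc_mean, mc_sigma = monte_carlo(area, [10.2, 5.5], [0.1, 0.2])
print(f"area = {mc_mean:.2f} ± {mc_sigma:.2f} cm^2")
```

For this nearly linear case the simulated spread should land close to the quadrature result of about 2.1 cm². The value of the method shows up when `area` is replaced by a strongly non-linear function, since nothing in the sampling loop depends on linearity.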

Q 8. How do you handle correlated errors in propagation of uncertainty?

Correlated errors occur when the uncertainties in different measured quantities are not independent. Ignoring correlation can lead to either an underestimate or an overestimate of the overall uncertainty in the derived quantity, depending on the sign of the covariance term. Handling correlated errors requires considering the covariance between the variables. Instead of simply summing the variances (as in uncorrelated errors), we need to include the covariance terms. This is done using the law of propagation of uncertainty, which for many variables is conveniently expressed in matrix form. Imagine you’re measuring the length and width of a rectangle to calculate its area. If you use the same ruler for both measurements, an error in the ruler itself shifts both the length and the width measurements in the same direction; this is correlation.

For example, let’s say we’re calculating z = f(x, y), where x and y are measured quantities with uncertainties. The variance of z (σz²) is given by:

σz² = (∂f/∂x)²σx² + (∂f/∂y)²σy² + 2(∂f/∂x)(∂f/∂y)cov(x,y)

where σx² and σy² are the variances of x and y, and cov(x,y) is their covariance. The covariance captures the degree of correlation. A positive covariance indicates that x and y tend to vary in the same direction, while a negative covariance indicates an opposite variation. If x and y are uncorrelated, cov(x,y) = 0, and the formula simplifies to the standard formula for uncorrelated errors.

In practice, determining the covariance might require careful consideration of the measurement process and statistical analysis of the data. Sometimes, repeated measurements under varied conditions can help estimate the covariance. Software packages specializing in uncertainty analysis frequently handle covariance matrices directly.
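The two-variable formula above can be sketched directly in Python. The example below writes the covariance as ρ·σx·σy, where ρ is the correlation coefficient; the function name and the chosen ρ values are illustrative assumptions. It applies the formula to a product (area) and a ratio of the same rectangle measurements to show that positive correlation inflates one and shrinks the other.

```python
import math

def propagate_correlated(dfdx, dfdy, sx, sy, rho=0.0):
    """Two-variable propagation; rho is the correlation coefficient of x and y."""
    cov = rho * sx * sy
    var = (dfdx * sx) ** 2 + (dfdy * sy) ** 2 + 2 * dfdx * dfdy * cov
    return math.sqrt(max(var, 0.0))  # guard against tiny negative rounding

length, s_length = 10.2, 0.1
width, s_width = 5.5, 0.2

for rho in (0.0, 0.5, 1.0):
    # Product: dA/dl = w, dA/dw = l (both positive)
    s_area = propagate_correlated(width, length, s_length, s_width, rho)
    # Ratio r = l / w: dr/dl = 1/w, dr/dw = -l / w**2 (opposite signs)
    s_ratio = propagate_correlated(1 / width, -length / width**2,
                                   s_length, s_width, rho)
    print(f"rho = {rho:.1f}: sigma_area = {s_area:.2f}, sigma_ratio = {s_ratio:.4f}")
```

With ρ = 1 the area uncertainty equals the worst-case linear sum from Q 3, while the ratio uncertainty drops below its uncorrelated value because the shared error partly cancels.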

Q 9. Explain the concept of sensitivity analysis in the context of error propagation.

Sensitivity analysis in error propagation quantifies how much the uncertainty in a derived quantity changes in response to variations in the uncertainties of the input variables. It essentially helps prioritize which input measurements need the most improvement to reduce the overall uncertainty. Imagine you are building a model to predict crop yield, and you have uncertainties in rainfall, temperature and fertilizer amount. Sensitivity analysis would tell you which of these factors has the biggest influence on your prediction’s uncertainty. This then allows you to focus resources on improving the measurement of that particular factor.

This is typically done by calculating the partial derivatives of the derived quantity with respect to each input variable. For example, if z = f(x, y), the sensitivity of z to x is given by ∂f/∂x. The derivative alone is not the whole story: the contribution of x to the uncertainty in z is |∂f/∂x|·Δx, so an input matters most when a large derivative is combined with a large uncertainty. The inputs with the largest contributions are the ones whose precision is most worth improving. Software packages can automate this process and graphically present sensitivity indices.
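One simple way to rank the inputs is an uncertainty budget: each input's squared contribution divided by the total variance. The sketch below assumes independent inputs and uses the rectangle measurements from Q 3; the helper name `uncertainty_budget` is made up for this example.

```python
def uncertainty_budget(partials, sigmas, names):
    """Return each input's share of the output variance (independent inputs)."""
    terms = [(p * s) ** 2 for p, s in zip(partials, sigmas)]
    total = sum(terms)
    return {name: term / total for name, term in zip(names, terms)}

length, d_length = 10.2, 0.1   # cm
width, d_width = 5.5, 0.2      # cm

# For A = length * width: dA/d(length) = width, dA/d(width) = length
budget = uncertainty_budget(
    partials=[width, length],
    sigmas=[d_length, d_width],
    names=["length", "width"],
)
for name, share in budget.items():
    print(f"{name}: {share:.0%} of the variance")
```

Here the width measurement accounts for the large majority of the variance, so a better width measurement would reduce the area uncertainty far more than a better length measurement.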

Q 10. What are some common sources of systematic errors in experimental measurements?

Systematic errors are consistent, repeatable errors that are not due to random fluctuations. They’re often caused by flaws in the measurement apparatus or procedure. Identifying and mitigating them is crucial for accurate measurements. Some common sources include:

- Instrument Calibration Errors: An improperly calibrated instrument will consistently produce biased results. For example, a miscalibrated scale will always weigh objects incorrectly.

- Environmental Factors: Temperature, humidity, or pressure changes can systematically affect measurements. An instrument used at an ambient temperature that consistently differs from its calibration temperature is a classic case.

- Observer Bias: The observer’s expectations or techniques can introduce bias. For instance, consistently reading a meter slightly high or low. This is often subtle and harder to eliminate.

- Imperfect Experimental Design: Flaws in the experiment’s setup can lead to systematic errors. For example, a poorly designed experiment to measure the speed of sound might inadvertently introduce wind effects.

- Data Processing Errors: Errors in data analysis, such as applying an incorrect formula or neglecting to account for a systematic effect in the data manipulation phase.

Addressing systematic errors often involves careful instrument calibration, environmental control, using multiple measurement techniques, and rigorously validating the experimental design and data processing methods. Blind testing can minimize observer bias.

Q 11. How do you determine the uncertainty in a derived quantity?

The uncertainty in a derived quantity is determined using the law of propagation of uncertainty. This law considers the uncertainties in the input variables and how they combine to affect the final result. The method depends on whether the errors are correlated or uncorrelated. For uncorrelated errors, we use the formula involving the squares of the partial derivatives and the variances of the input variables. For correlated errors, we need to incorporate covariances. Let’s consider an example. Suppose we measure the length (l) and width (w) of a rectangle to calculate its area (A = l*w). The uncertainty in A (δA) is calculated as:

δA = A * sqrt[ (δl/l)² + (δw/w)² ]

where δl and δw are the uncertainties in l and w, respectively. This formula assumes uncorrelated errors. If the errors were correlated, a covariance term would be added, as explained in the answer to Q 8.

In more complex cases, where the derived quantity is a function of multiple variables, partial derivatives are needed, potentially necessitating numerical methods or computer programs for their calculation. The software packages dedicated to uncertainty analysis can handle these complex calculations efficiently, providing both numerical results and graphical visualizations of uncertainty contributions from different input parameters.

Q 12. What is the difference between accuracy and precision?

Accuracy refers to how close a measurement is to the true value, while precision refers to how close repeated measurements are to each other. A simple analogy: imagine you’re throwing darts at a dartboard.

- High accuracy, high precision: All darts cluster tightly near the bullseye.

- High accuracy, low precision: Darts are scattered around the bullseye, but their average is close to it.

- Low accuracy, high precision: Darts cluster tightly, but far from the bullseye.

- Low accuracy, low precision: Darts are scattered all over the board.

In scientific measurements, we strive for both high accuracy and high precision. Accuracy addresses systematic errors, while precision reflects random errors. A precise measurement is not necessarily accurate (it could consistently miss the target), and vice versa (a single, accurate measurement is not proof of consistent accuracy).

Q 13. How do you assess the quality of your error propagation analysis?

Assessing the quality of an error propagation analysis involves several checks:

- Reasonable Uncertainties: Are the resulting uncertainties plausible given the uncertainties of the input variables? Unreasonably large or small uncertainties could indicate errors in the calculations or assumptions.

- Sensitivity Analysis: A sensitivity analysis helps identify the most significant contributors to the overall uncertainty. This can guide efforts towards improving the accuracy of the most influential measurements.

- Correlation Considerations: Were correlations between input variables properly accounted for? Ignoring correlation can lead to an underestimate or an overestimate of the uncertainty, depending on the sign of the covariance terms.

- Multiple Methods: If possible, compare results obtained using different methods or software packages for error propagation. This can help to validate the results and identify potential errors.

- Validation with Data: If possible, compare the propagated uncertainty with the observed variability in the data. Substantial disagreement might indicate issues with the error propagation analysis or the underlying model.

Documenting the entire error propagation process, including all assumptions, formulas, and calculations is essential for transparency and reproducibility. Peer review can also help identify potential flaws in the analysis.

Q 14. Explain the concept of degrees of freedom in uncertainty analysis.

Degrees of freedom in uncertainty analysis represent the number of independent pieces of information available to estimate a parameter. It’s related to the number of measurements and the number of constraints or parameters estimated from those measurements. The concept is crucial in determining the appropriate statistical distribution to use when calculating confidence intervals or uncertainties. A simple example: if you have ‘n’ independent measurements, then the degrees of freedom for estimating the sample standard deviation is ‘n-1’ because one degree of freedom is used to estimate the sample mean. Intuitively, you lose one degree of freedom because once you know the mean and ‘n-1’ data points, you can find the remaining data point.

Degrees of freedom influence the shape of the uncertainty distribution. For example, when estimating the uncertainty of a mean from a small number of measurements, using a t-distribution (which accounts for the degrees of freedom) is more appropriate than using a normal distribution. The t-distribution has heavier tails, reflecting the greater uncertainty associated with a smaller sample size and thus fewer degrees of freedom. The importance of degrees of freedom lies in ensuring that the calculated confidence intervals accurately reflect the uncertainty associated with the limited number of available data.
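The n-1 divisor can be seen directly in a short calculation. The readings below are made-up values for illustration; the sketch shows that the residuals about the sample mean always sum to zero (the one constraint that costs a degree of freedom) and that dividing by n-1 matches the standard library's sample standard deviation.

```python
import math
import statistics

readings = [9.8, 10.1, 10.0, 9.9, 10.2]   # hypothetical repeated measurements
n = len(readings)
mean = statistics.fmean(readings)

residuals = [r - mean for r in readings]
sum_sq = sum(res**2 for res in residuals)

s_n_minus_1 = math.sqrt(sum_sq / (n - 1))  # sample standard deviation
s_n = math.sqrt(sum_sq / n)                # divides by n, biased low

print(f"sum of residuals = {sum(residuals):.1e}")
print(f"divide by n-1: {s_n_minus_1:.4f}  (statistics.stdev: {statistics.stdev(readings):.4f})")
print(f"divide by n:   {s_n:.4f}")
```

Because the residuals are tied together by the zero-sum constraint, only n-1 of them carry independent information about the spread.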

Q 15. Describe different methods for expressing measurement uncertainty (e.g., standard deviation, confidence intervals).

Measurement uncertainty quantifies the doubt associated with a measurement result. Several methods express this doubt. Standard deviation is a common metric, representing the spread of data around the mean. A larger standard deviation implies greater uncertainty. Confidence intervals provide a range that is expected to contain the true value at a stated confidence level (e.g., a 95% confidence interval is built by a procedure that captures the true value in about 95% of repeated experiments). Other methods include standard error (which reflects uncertainty in the estimation of a mean), and percent relative uncertainty, which expresses uncertainty as a percentage of the measurement value.

Example: Imagine measuring the length of a table multiple times. The standard deviation of these measurements reflects the variability in our readings. A 95% confidence interval would give us a range where we are 95% confident the true length lies.

Q 16. How do you choose an appropriate method for propagating uncertainty in a given situation?

Selecting the right uncertainty propagation method depends on the function relating the measurements and the nature of the uncertainties. For simple linear functions, straightforward formulas work well. For complex non-linear functions, the most accurate approach often involves Monte Carlo simulation. If uncertainties are small relative to the measurements, a linear approximation (e.g., using partial derivatives) usually suffices. However, when uncertainties are significant, a non-linear approach is necessary to avoid inaccuracies. Always consider the correlation between measured quantities. If measurements are correlated, neglecting this correlation can lead to an underestimate or an overestimate of the overall uncertainty.

Example: Calculating the area of a rectangle (Area = length * width). If length and width measurements are independent, we use a specific formula for propagating the uncertainties. If they are somehow correlated (e.g., a systematic error in measuring both), we need to consider that correlation in our calculations to get a more realistic uncertainty value.

Q 17. Explain the concept of covariance in error propagation.

Covariance measures the degree to which two variables change together. In error propagation, it’s crucial when uncertainties in different measured quantities are correlated. A positive covariance indicates that when one variable’s measurement is higher than expected, the other is also likely higher. A negative covariance suggests an inverse relationship. Ignoring covariance when it’s present biases the overall uncertainty: it can be underestimated or overestimated, depending on the signs of the covariance and of the partial derivatives.

Example: Measuring the mass and volume of an object to calculate its density. If the volume is itself obtained with the same scale (e.g., by weighing displaced water) and the scale consistently reads high, both mass and volume are shifted in the same direction, exhibiting positive covariance. Because density is a ratio, these shared errors partially cancel, so treating the two measurements as independent would overestimate the uncertainty in the calculated density; for a product of the same two quantities, it would underestimate it.

Q 18. What is the significance of the standard deviation of the mean?

The standard deviation of the mean (also called the standard error) quantifies the uncertainty in estimating the population mean based on a sample. For n > 1 measurements it equals the sample standard deviation divided by √n, so it is smaller than the standard deviation of the sample itself and shrinks as more measurements are averaged. This is because averaging multiple measurements reduces random error. The smaller the standard error, the more precise our estimate of the population mean.

Example: Measuring the average height of students in a class. The standard deviation reflects the variation in individual heights. The standard deviation of the mean tells us how precisely we’ve estimated the average height of the entire student population based on our sample measurements. A smaller standard error means a more precise estimate of the average height.
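The relationship between the two quantities is a one-line calculation: the standard deviation of the mean is the sample standard deviation divided by the square root of the number of measurements. The heights below are invented values used only to illustrate the arithmetic.

```python
import math
import statistics

heights_cm = [162.0, 170.5, 168.0, 175.5, 159.0, 181.0, 166.5, 172.0]  # hypothetical

n = len(heights_cm)
mean = statistics.fmean(heights_cm)
s = statistics.stdev(heights_cm)   # spread of individual heights
sem = s / math.sqrt(n)             # uncertainty of the estimated mean

print(f"mean = {mean:.1f} cm")
print(f"standard deviation = {s:.1f} cm")
print(f"standard deviation of the mean = {sem:.1f} cm (n = {n})")
```

Quadrupling the number of measurements would halve the standard deviation of the mean, while the standard deviation of individual heights would stay roughly the same.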

Q 19. What is the difference between type A and type B uncertainty evaluations?

Type A and Type B uncertainty evaluations represent different approaches to quantifying uncertainties. Type A involves statistically analyzing multiple measurements of the same quantity. We calculate the standard deviation from repeated measurements to represent the uncertainty. Type B relies on other sources of information, like instrument specifications, prior knowledge, or manufacturer’s data sheets. Type B uncertainty is often expressed as a standard deviation based on a reasonable distribution of possible values.

Example: Imagine measuring the temperature with a thermometer. Type A would involve taking multiple readings and calculating the standard deviation. Type B would involve looking at the thermometer’s resolution and precision specification to estimate the uncertainty in a single reading. We then combine both Type A and Type B uncertainties to obtain a comprehensive uncertainty estimate.

Q 20. How do you combine uncertainties from different sources?

Combining uncertainties from different sources (e.g., Type A and Type B) requires considering whether they are correlated. If they are independent (uncorrelated), we combine them in quadrature (square root of the sum of squares). If correlated, covariance terms must be included. The general formula for combining uncertainties involves a covariance matrix, taking into account correlations between different sources.

Example: Suppose we measure the length and width of a rectangle with different instruments, leading to independent Type B uncertainties. Then we take multiple measurements, generating a Type A uncertainty. To get the overall uncertainty in the area, we combine the uncertainties from these various sources in quadrature (for independent sources).
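As a small numerical sketch of combining in quadrature, the example below borrows the thermometer from Q 19 rather than the rectangle. All numbers are hypothetical. The Type B term assumes the manufacturer's ± limit describes a rectangular (uniform) distribution, in which case the standard uncertainty is the half-width divided by √3.

```python
import math
import statistics

# Type A: statistics of repeated readings (hypothetical values, in °C)
readings_c = [21.3, 21.5, 21.4, 21.6, 21.4]
u_a = statistics.stdev(readings_c) / math.sqrt(len(readings_c))

# Type B: hypothetical data-sheet limit of ±0.5 °C, assumed uniform
half_width = 0.5
u_b = half_width / math.sqrt(3)

# Independent sources combine in quadrature
u_c = math.hypot(u_a, u_b)

print(f"Type A: {u_a:.3f} °C, Type B: {u_b:.3f} °C, combined: {u_c:.3f} °C")
```

In this made-up case the Type B term dominates, so taking more readings would barely change the combined uncertainty; a better-specified instrument would.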

Q 21. How do you deal with outliers in your data when propagating uncertainties?

Outliers can significantly affect uncertainty propagation. Handling them requires careful consideration. Simply discarding outliers is not always appropriate, as they may reflect genuine variation or unexpected events rather than mistakes. Methods for dealing with outliers include robust statistical methods that are less sensitive to extreme values. One could use the median instead of the mean, or a trimmed mean (which excludes some of the most extreme values). Investigating the cause of the outlier is crucial. If it’s due to a clear error (e.g., a recording mistake), it should be removed. If the cause is unknown, robust methods might be more appropriate to avoid bias.

Example: If while measuring the diameter of a pipe, one reading is significantly different from others, we’d check if there was an issue during that measurement (e.g., incorrect placement of the caliper). If no clear error is identified, we could use a robust method like the median instead of the mean to calculate the central tendency and propagate uncertainty.
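The effect of a single outlier on mean-based versus median-based statistics can be sketched as follows. The diameters are invented, and the robust spread uses the median absolute deviation (MAD) scaled by 1.4826, a conventional factor that makes it comparable to a standard deviation for Gaussian data.

```python
import statistics

diameters_mm = [50.1, 50.0, 50.2, 49.9, 50.1, 53.0]  # last reading is suspect

mean = statistics.fmean(diameters_mm)
stdev = statistics.stdev(diameters_mm)

median = statistics.median(diameters_mm)
mad = statistics.median([abs(d - median) for d in diameters_mm])
robust_sigma = 1.4826 * mad  # MAD rescaled to be comparable with a std. dev.

print(f"mean   = {mean:.2f} mm, std dev      = {stdev:.2f} mm")
print(f"median = {median:.2f} mm, robust sigma = {robust_sigma:.2f} mm")
```

The mean and standard deviation are pulled strongly by the one suspect reading, while the median and MAD stay close to the bulk of the data.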

Q 22. What are some software tools used for error propagation analysis?

Several software tools can assist with error propagation analysis, ranging from simple spreadsheets to sophisticated statistical packages. The choice depends on the complexity of the analysis and the user’s familiarity with programming.

- Spreadsheets (e.g., Microsoft Excel, Google Sheets): These are suitable for simpler calculations involving a few variables. You can use built-in functions or create custom formulas to propagate uncertainties. However, for complex models, spreadsheets can become cumbersome and prone to errors.

- Statistical Software (e.g., R, Python with SciPy/NumPy): These offer powerful tools for handling large datasets, performing complex statistical analyses, and visualizing results. In Python, NumPy and SciPy supply the building blocks (random sampling, statistics, numerical differentiation), and add-on packages such as the uncertainties package automate first-order propagation. R also boasts a rich ecosystem of packages for statistical computing.

- Specialized Software (e.g., LabVIEW, MATLAB): Software packages tailored to specific scientific domains (e.g., engineering, physics) often incorporate error propagation functionalities directly into their toolsets. This can streamline the process, especially for applications requiring extensive data analysis and simulation.

- Monte Carlo Simulation Software: For extremely complex systems with many interacting variables and non-linear relationships, Monte Carlo simulation provides a robust approach. It involves generating numerous random samples within the uncertainty bounds of input variables and then observing the distribution of output values to estimate the uncertainty in the final result.

The best tool for the job always depends on the context of the analysis and the expertise of the analyst. For instance, a simple linear model might be easily handled in a spreadsheet, while a complex non-linear model may necessitate the power of Python’s SciPy library or a Monte Carlo simulation.

Q 23. Describe a situation where you had to perform error propagation analysis. What challenges did you face and how did you overcome them?

During a project involving the calibration of a sensor used to measure atmospheric pressure, I had to perform a thorough error propagation analysis. The sensor’s output voltage was a non-linear function of pressure, and several contributing uncertainties existed: sensor noise, temperature fluctuations impacting the sensor’s calibration, and the accuracy of the pressure standard used for calibration.

The main challenge was dealing with the non-linearity. Simple linear error propagation formulas were inadequate. To overcome this, I employed a Monte Carlo simulation. I modeled each source of uncertainty with appropriate probability distributions (e.g., Gaussian for sensor noise, uniform for uncertainties in the calibration standard). I then generated thousands of random samples for each input variable, propagated them through the non-linear pressure-voltage relationship, and analyzed the resulting distribution of calculated pressures. This approach allowed me to estimate not only the standard deviation but also the full probability distribution of the uncertainty in the final pressure measurement.

Another challenge was properly quantifying the different uncertainty sources. This involved carefully reviewing sensor specifications, analyzing temperature data, and estimating the uncertainty associated with the calibration standard. The process required rigorous documentation to ensure traceability and transparency.

Q 24. Explain the concept of a confidence interval and its significance in error propagation.

A confidence interval is a range of values around a point estimate, constructed so that it contains the true value of a parameter at a specified confidence level. In error propagation, the confidence interval quantifies the uncertainty in a calculated result. For instance, if we calculate the average speed of a car as 60 mph with a 95% confidence interval of ± 2 mph, the interval from 58 to 62 mph was produced by a procedure that captures the true average speed in about 95% of repeated experiments; informally, we are 95% confident that the true value lies in that range. The choice of confidence level (e.g., 95%, 99%) reflects the desired degree of certainty.

The significance of confidence intervals in error propagation lies in providing a more complete picture of the uncertainty than simply stating the standard deviation or standard error. It allows us to make probabilistic statements about the likely range of the true value, which is crucial for making informed decisions based on the analysis. For example, in a manufacturing setting, confidence intervals can help determine if a product meets specifications with a certain degree of confidence.
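What a 95% confidence level means in practice can be checked by simulation. The sketch below repeats a made-up experiment many times (a true mean of 60, Gaussian noise with a known standard deviation of 3, ten readings per experiment) and counts how often the interval around the sample mean contains the true mean. The function name, seed, and all numbers are illustrative assumptions.

```python
import math
import random
import statistics

def coverage(true_mean=60.0, sigma=3.0, n=10, trials=2000, level=0.95, seed=1):
    """Fraction of simulated experiments whose interval contains true_mean."""
    rng = random.Random(seed)
    z = statistics.NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for 95%
    half_width = z * sigma / math.sqrt(n)                 # sigma assumed known
    hits = 0
    for _ in range(trials):
        sample = [rng.gauss(true_mean, sigma) for _ in range(n)]
        if abs(statistics.fmean(sample) - true_mean) <= half_width:
            hits += 1
    return hits / trials

frac = coverage()
print(f"{frac:.1%} of the simulated intervals contain the true mean")
```

The fraction should come out near 95%. If the standard deviation had to be estimated from each small sample instead of being known, the z value would need to be replaced by the corresponding t-distribution value to keep that coverage.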

Q 25. How do you present and interpret the results of an error propagation analysis?

Presenting and interpreting the results of error propagation analysis requires clarity and transparency. The method used for propagation should be explicitly stated (e.g., linear approximation, Monte Carlo simulation). Results should be presented using appropriate statistical measures:

- Point estimate: The best estimate of the quantity being measured.

- Uncertainty: The standard deviation, standard error, or a confidence interval, clearly indicating the level of confidence.

- Probability distribution (if applicable): This may be a histogram or a probability density function showing the complete range of plausible values.

For example, one might report: “The calculated density is 2.7 g/cm³ with a 95% confidence interval of 2.68 – 2.72 g/cm³. This uncertainty is primarily due to the measurement error in the mass (60%), and to a lesser degree to the measurement of volume (40%).”

Interpretation should focus on the magnitude of the uncertainty relative to the measured value. A large uncertainty compared to the measured value suggests that more precise measurements or a more accurate method are needed. Understanding the contributions of individual sources of uncertainty helps identify areas where improvement is most needed.

Q 26. What is the impact of neglecting error propagation in scientific research?

Neglecting error propagation in scientific research can have serious consequences, leading to:

- Misleading conclusions: If uncertainties are ignored, calculated results might seem more precise than they actually are, potentially leading to false conclusions and flawed interpretations. A study might claim a significant effect when, in reality, the uncertainty is so large that the effect isn’t statistically significant.

- Inaccurate predictions: In applications like engineering design or climate modeling, neglecting uncertainties can result in predictions that are unrealistically precise and fail to account for potential variations or risks. This can have significant safety or economic implications.

- Lack of reproducibility: If uncertainties are not properly reported, it becomes impossible for others to assess the validity of the findings or reproduce the results. This undermines the credibility and reliability of the research.

- Incorrect decision-making: Decisions based on imprecise results can be costly or even dangerous. For example, in medical research, underestimating uncertainties can lead to ineffective treatments or incorrect diagnoses.

In short, proper error propagation analysis is essential for establishing the reliability, validity, and trustworthiness of scientific results. It’s not merely a technical detail but a fundamental requirement for sound scientific practice.
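
A small numerical check illustrates the first point in the list. The sketch below assumes two independent measurements with Gaussian standard uncertainties. The function name and the values are hypothetical. It compares the difference between the measurements with the propagated uncertainty of that difference.

```python
import math

def difference_significance(a, u_a, b, u_b, k=1.96):
    """Compare a - b with its propagated uncertainty (independent inputs)."""
    diff = a - b
    u_diff = math.hypot(u_a, u_b)   # absolute uncertainties add in quadrature
    z = diff / u_diff               # difference in units of its uncertainty
    return diff, u_diff, z, abs(z) > k

# Hypothetical measurements: 10.4 +/- 0.3 and 9.9 +/- 0.3
diff, u_diff, z, significant = difference_significance(10.4, 0.3, 9.9, 0.3)
print(f"difference = {diff:.2f} +/- {u_diff:.2f}, z = {z:.2f}, "
      f"significant at 95%: {significant}")
```

With these values the difference of 0.5 is only about 1.2 standard uncertainties, so it would not be called significant at the 95% level, even though the two central values look clearly different when quoted without uncertainties.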

Q 27. Explain the importance of proper documentation and traceability in error propagation analysis.

Proper documentation and traceability are paramount in error propagation analysis. They ensure that the analysis is transparent and reproducible, and they allow effective scrutiny by others. Key elements include:

- Clear description of the measurement process: Detailed account of how data was collected, including equipment used, procedures followed, and any potential sources of error.

- Quantification of uncertainties: Careful documentation of the individual sources of uncertainty and their associated magnitudes. This typically covers instrument uncertainties and estimates of systematic errors.

- Error propagation method: Precise explanation of the method used to propagate uncertainties (linear approximation, Monte Carlo simulation, etc.). Including code or equations is essential for reproducibility.

- Traceability: Maintaining a complete record of data sources, calculations, and software used to ensure that every step in the process can be followed and verified. Version control for code is crucial here.

- Documentation of assumptions and limitations: Openly acknowledging any assumptions made during the analysis and any limitations of the methods used.

Thorough documentation enhances the credibility and reliability of the findings. It facilitates peer review, enables future scrutiny, and allows for the identification and correction of errors. Without such meticulous record-keeping, the results of error propagation analysis are susceptible to misinterpretation and are unlikely to be accepted by the scientific community.
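
One way to make a propagation step traceable is to store the method, inputs, and random seed together with the result. The following is a minimal sketch using only the Python standard library. It assumes independent Gaussian inputs, and the function name, record fields, seed, and input values are all hypothetical choices for illustration, not a prescribed format.

```python
import json
import math
import platform
import random
import statistics

def monte_carlo_record(func, inputs, n_samples=20000, seed=12345):
    """Propagate independent Gaussian inputs through func and log how it was done."""
    rng = random.Random(seed)   # a fixed, recorded seed makes the run repeatable
    samples = [
        func(**{name: rng.gauss(mu, sigma) for name, (mu, sigma) in inputs.items()})
        for _ in range(n_samples)
    ]
    return {
        "method": "Monte Carlo, independent Gaussian inputs",
        "seed": seed,
        "n_samples": n_samples,
        "inputs": {name: list(pair) for name, pair in inputs.items()},
        "python_version": platform.python_version(),
        "mean": statistics.fmean(samples),
        "std": statistics.stdev(samples),
    }

def density(m, V):
    return m / V

# Hypothetical inputs as (value, standard uncertainty)
inputs = {"m": (27.00, 0.08), "V": (10.00, 0.024)}
record = monte_carlo_record(density, inputs)

# Cross-check against the first-order (linear) approximation
linear_std = (27.00 / 10.00) * math.hypot(0.08 / 27.00, 0.024 / 10.00)
print(json.dumps(record, indent=2))
print(f"linear approximation std: {linear_std:.4f}")
```

Because the record is plain JSON, it can be committed to version control next to the code that produced it, and anyone rerunning the function with the same seed and inputs should obtain the same numbers.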

Key Topics to Learn for Error Propagation Analysis Interview

- Understanding Uncertainty: Grasp the fundamental concepts of uncertainty and its different types (random, systematic), and learn to distinguish between precision and accuracy.

- Propagation of Uncertainty: Master the techniques for calculating the uncertainty in a result from the uncertainties in the input values, including both linear and non-linear propagation methods.

- Statistical Methods: Familiarize yourself with relevant statistical concepts like standard deviation, variance, and confidence intervals, and how they relate to error analysis.

- Practical Applications: Explore real-world applications of error propagation analysis in your field of interest. Examples might include experimental physics, engineering design, or data analysis.

- Error Analysis Techniques: Learn different techniques for analyzing and minimizing errors, such as least squares fitting and Monte Carlo simulations.

- Software and Tools: Gain familiarity with software packages or tools commonly used for error propagation calculations. This demonstrates practical experience.

- Communicating Results: Practice clearly and concisely presenting your error analysis results, both verbally and in written reports. Understanding how to effectively communicate uncertainty is crucial.
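
As a quick self-check on the first topic, the distinction between accuracy and precision can be made concrete with a few repeated readings. This is a minimal sketch with hypothetical readings of a reference standard assumed to have a known value of 100.0. The mean offset from the reference reflects accuracy (a systematic effect), while the sample standard deviation reflects precision (a random effect).

```python
import statistics

def accuracy_and_precision(readings, reference):
    """Return (bias, spread): mean offset from reference and sample std deviation."""
    bias = statistics.fmean(readings) - reference   # accuracy: systematic offset
    spread = statistics.stdev(readings)             # precision: random scatter
    return bias, spread

# Hypothetical repeated readings of a 100.0 reference standard
readings = [102.1, 101.9, 102.0, 102.2, 101.8]
bias, spread = accuracy_and_precision(readings, reference=100.0)
print(f"bias = {bias:+.2f}, spread = {spread:.2f}")
```

These readings are precise (small scatter) but not accurate (consistent offset of about 2), which is the signature of a systematic error such as a calibration fault.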

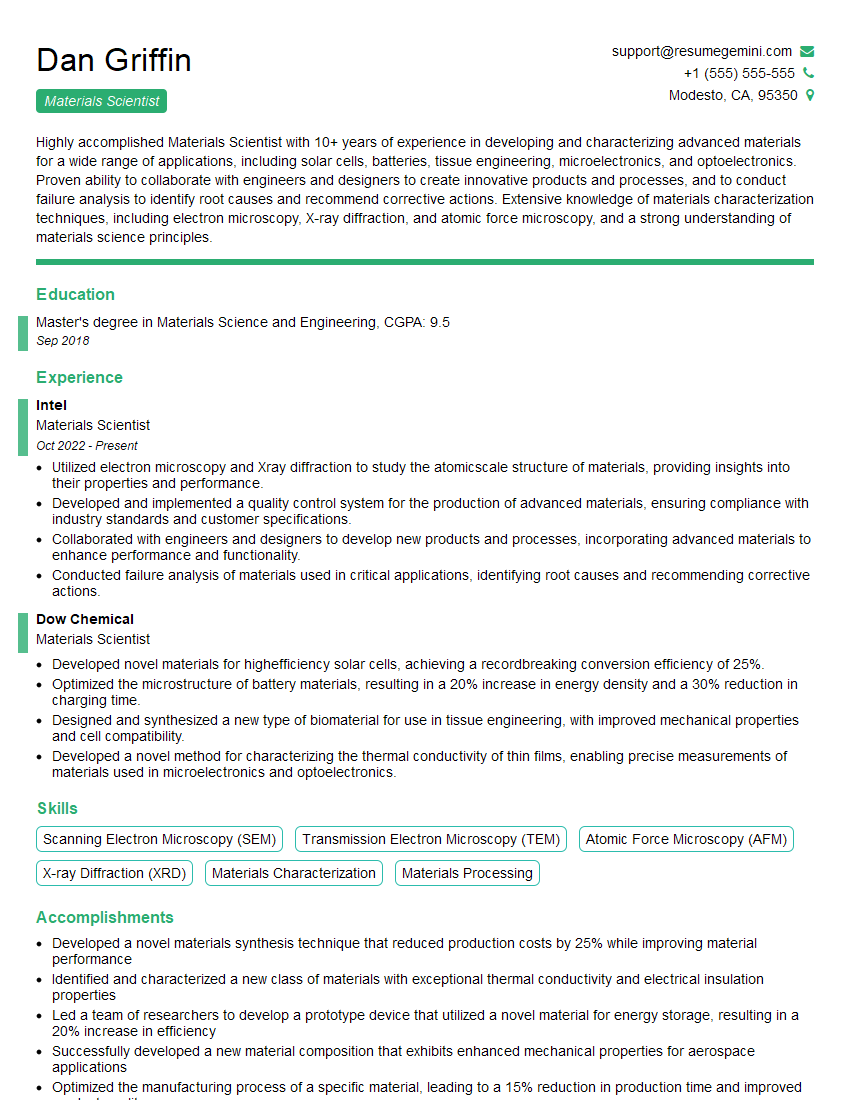

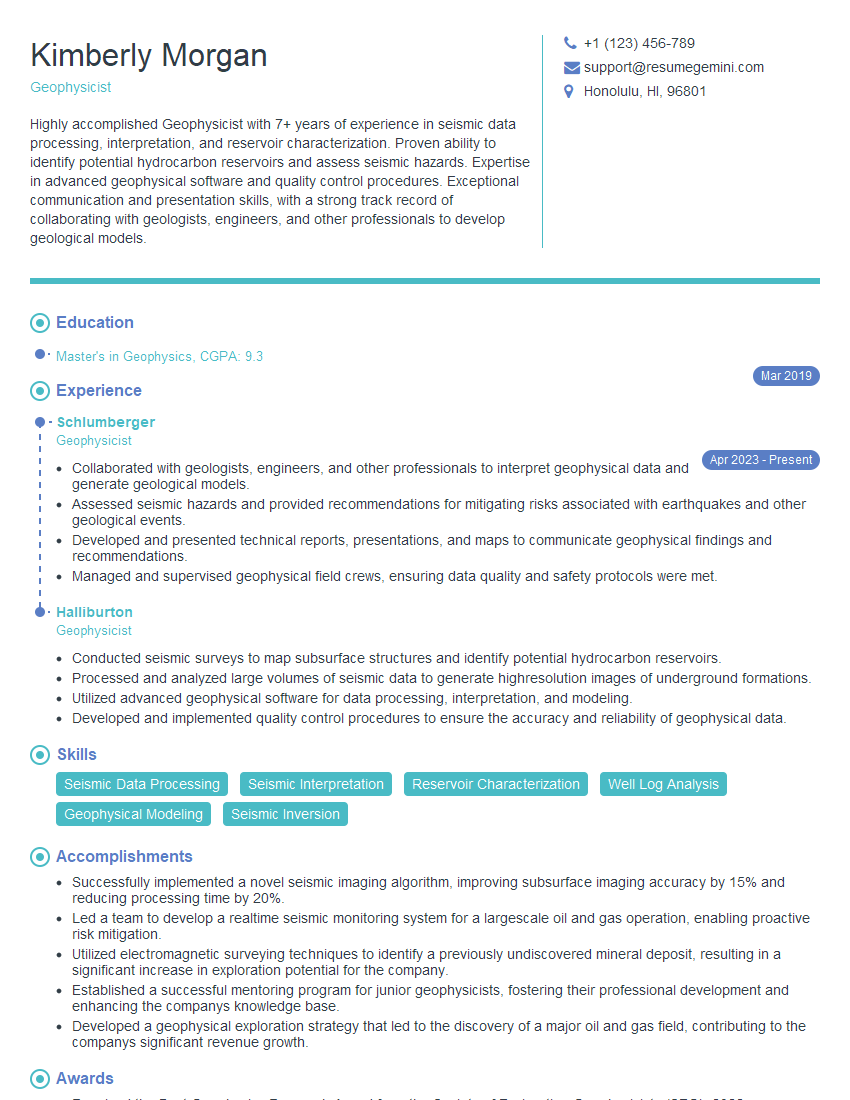

Next Steps

Mastering error propagation analysis significantly enhances your problem-solving skills and demonstrates a strong foundation in scientific rigor – highly valued in many technical roles. To maximize your job prospects, creating an ATS-friendly resume is essential. This ensures your qualifications are effectively highlighted to potential employers. ResumeGemini is a trusted resource to help you build a professional and impactful resume. We offer examples of resumes tailored to highlight expertise in Error Propagation Analysis to give you a head start. Invest the time to craft a compelling resume that showcases your skills and experience – it’s a crucial step in your career journey.
