The right preparation can turn an interview into an opportunity to showcase your expertise. This guide to Land Use and Land Cover Classification interview questions is your ultimate resource, providing key insights and tips to help you ace your responses and stand out as a top candidate.

Questions Asked in Land Use and Land Cover Classification Interview

Q 1. Explain the difference between land use and land cover.

Land use and land cover are often confused, but they represent distinct concepts. Land cover refers to the physical materials present on the Earth’s surface, such as forests, water, urban areas, or bare soil. Think of it as what you see when looking at a satellite image – the surface features themselves. Land use, on the other hand, describes how humans utilize the land. It’s about the purpose or function of the land, such as residential, agricultural, industrial, or recreational. For instance, a land cover might be ‘forest,’ while the land use could be ‘timber production’ or ‘conservation reserve’. The same land cover can support multiple land uses.

Example: A field might be covered in grass (land cover), but it could be used for grazing livestock (land use), growing hay (land use), or simply left fallow (land use).

Q 2. Describe various methods for land cover classification.

Land cover classification employs various methods to categorize and map land cover features. These methods leverage remote sensing data (satellite imagery and aerial photography) and often incorporate Geographic Information Systems (GIS) for analysis and visualization. Common approaches include:

- Supervised Classification: This involves training a classifier using a set of labeled samples (reference data) where the land cover type is known for each sample. Algorithms then use these samples to classify unlabeled pixels in the image. Common algorithms include Maximum Likelihood Classification, Support Vector Machines (SVM), and Random Forest.

- Unsupervised Classification: This method doesn’t require pre-labeled samples. Algorithms identify natural groupings or clusters in the data based on spectral similarity. K-means clustering is a widely used unsupervised technique. The resulting clusters then need interpretation based on spectral characteristics and ancillary data.

- Object-Based Image Analysis (OBIA): OBIA moves beyond pixel-by-pixel classification and considers groups of pixels (objects) with similar characteristics. This allows for the consideration of both spectral and spatial information leading to more accurate classification.

- Decision Tree Classification: This approach uses a tree-like model of decisions and their possible consequences to classify land cover. It’s easily interpretable and can incorporate diverse data.

The choice of method depends on factors like the availability of reference data, the complexity of the landscape, and the desired accuracy.
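To make the unsupervised approach concrete, here is a minimal K-means sketch in plain Python. The pixel values, the two-band layout, and the choice of starting centroids are illustrative assumptions, not values from any real sensor or the behaviour of any particular software package.

```python
import math

def kmeans(pixels, centroids, iterations=10):
    """Cluster pixels (tuples of band values) around the given starting centroids."""
    centroids = [tuple(c) for c in centroids]
    labels = []
    for _ in range(iterations):
        # Assignment step: each pixel joins its nearest centroid.
        labels = [
            min(range(len(centroids)), key=lambda k: math.dist(p, centroids[k]))
            for p in pixels
        ]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for k, old in enumerate(centroids):
            members = [p for p, lab in zip(pixels, labels) if lab == k]
            if members:
                new_centroids.append(tuple(sum(v) / len(members) for v in zip(*members)))
            else:
                new_centroids.append(old)  # keep an empty cluster where it was
        if new_centroids == centroids:
            break  # converged: no centroid moved
        centroids = new_centroids
    return labels, centroids

# Hypothetical (red, NIR) reflectance pairs: three water-like, three vegetation-like.
pixels = [(0.03, 0.02), (0.04, 0.03), (0.05, 0.02),
          (0.05, 0.45), (0.06, 0.50), (0.04, 0.48)]
labels, centroids = kmeans(pixels, centroids=[pixels[0], pixels[3]])
print(labels)  # cluster ids only; an analyst still has to name each cluster
```

The output is a list of cluster ids, not land cover names, which is why unsupervised results need interpretation afterwards.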

Q 3. What are the advantages and disadvantages of supervised vs. unsupervised classification?

Supervised classification typically offers higher accuracy because it leverages prior knowledge through labeled training data. However, it’s more time-consuming and requires expert knowledge to select and label representative training samples, and its accuracy depends heavily on the quality and representativeness of those samples. In contrast, unsupervised classification is faster and requires less initial effort, but it needs post-classification interpretation to assign meaningful labels to the identified clusters and generally results in lower accuracy than supervised methods. Often, a hybrid approach (e.g., using unsupervised classification to generate initial groupings, then refining them with supervised techniques) is employed to balance speed and accuracy.

Example: Imagine classifying agricultural fields. Supervised classification would require identifying and labeling sample areas of corn, wheat, and soybeans in satellite imagery. Unsupervised classification would group pixels based on spectral similarity without prior knowledge of crop types, requiring subsequent interpretation of these groupings.
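The supervised side can be sketched with a minimum-distance-to-means classifier. This is a deliberately simple stand-in for the algorithms named earlier (Maximum Likelihood, SVM, Random Forest), chosen because it fits in a few lines; the training pixels and class names are hypothetical.

```python
import math
from collections import defaultdict

def train_class_means(samples):
    """samples: list of (band_values, label). Returns the mean band vector per label."""
    grouped = defaultdict(list)
    for values, label in samples:
        grouped[label].append(values)
    return {
        label: tuple(sum(band) / len(rows) for band in zip(*rows))
        for label, rows in grouped.items()
    }

def classify(pixel, class_means):
    """Assign the label whose mean spectrum is closest to the pixel."""
    return min(class_means, key=lambda label: math.dist(pixel, class_means[label]))

# Hypothetical labeled training pixels as (red, NIR) reflectance.
training = [
    ((0.04, 0.03), "water"), ((0.05, 0.02), "water"),
    ((0.05, 0.45), "vegetation"), ((0.06, 0.50), "vegetation"),
    ((0.25, 0.30), "bare soil"), ((0.28, 0.33), "bare soil"),
]
means = train_class_means(training)
unlabeled = [(0.04, 0.47), (0.26, 0.31), (0.03, 0.03)]
predicted = [classify(p, means) for p in unlabeled]
print(predicted)
```

Unlike clustering, the output here is already a land cover label, but only because someone supplied labeled samples first.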

Q 4. How does accuracy assessment work in land cover classification?

Accuracy assessment evaluates the reliability of a land cover classification. It compares the classified map to a reference (ground truth) dataset that represents the actual land cover and is collected independently of the training data, typically through field surveys, high-resolution imagery, or other reliable sources. The comparison generates an error matrix (confusion matrix) that shows the number of pixels correctly and incorrectly classified for each land cover type. From this matrix we calculate overall accuracy, producer’s accuracy (the share of reference pixels of a class that the map labels correctly), user’s accuracy (the share of pixels mapped as a class that truly belong to it), and the kappa coefficient (a measure of agreement that accounts for chance agreement).

Example: If 90% of the pixels classified as forest in a map actually correspond to forest areas on the ground, the user’s accuracy for the ‘forest’ class is 90%. If 90% of the forest areas on the ground are mapped as forest, the producer’s accuracy for that class is 90%.

Q 5. What are common sources of error in land cover classification?

Several factors can introduce errors in land cover classification. These include:

- Spatial Resolution of Imagery: Coarse resolution images may mix different land cover types within a single pixel, leading to mixed pixels and classification inaccuracies.

- Spectral Resolution: Limited spectral bands may not adequately capture the unique spectral signatures of different land cover types.

- Temporal Resolution: Single-date imagery may not capture the seasonal changes in land cover.

- Cloud Cover: Clouds obscure the land surface, hindering accurate classification.

- Atmospheric Effects: Atmospheric conditions like haze or aerosols can alter the spectral signatures of features.

- Classification Algorithm Selection: The choice of algorithm and its parameters significantly influence classification accuracy.

- Reference Data Quality: Errors in the reference data used for accuracy assessment will propagate into the accuracy estimations of the land cover map.

Careful consideration of these factors and employing appropriate error mitigation strategies are essential for producing reliable land cover classifications.

Q 6. Explain the concept of spectral signature in remote sensing.

A spectral signature is the unique pattern of reflectance or emitted energy across different wavelengths of the electromagnetic spectrum for a given material or land cover type. Different materials interact with light differently, absorbing and reflecting certain wavelengths more than others. This interaction is captured by remote sensing sensors. The unique spectral signature allows us to differentiate between different land cover classes. For example, healthy vegetation has a high reflectance in the near-infrared (NIR) region and a lower reflectance in the visible red region, forming a distinct spectral signature that differentiates it from water, bare soil, or urban areas.

Example: Water typically exhibits low reflectance across most visible and near-infrared wavelengths, while bright surfaces such as dry sand or concrete reflect strongly across the visible bands. Dark materials such as asphalt, by contrast, reflect little at any of these wavelengths. These differences in spectral signatures are exploited in remote sensing to classify land cover.
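One common way to turn the red and NIR contrast into a single number is the Normalized Difference Vegetation Index, NDVI = (NIR - Red) / (NIR + Red). The sketch below uses made-up reflectance values purely to show how the index separates the classes mentioned above.

```python
def ndvi(red, nir):
    """Normalized Difference Vegetation Index from red and NIR reflectance."""
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total

# Illustrative reflectance values only, not measurements from any sensor.
signatures = {
    "healthy vegetation": {"red": 0.05, "nir": 0.50},
    "bare soil": {"red": 0.25, "nir": 0.30},
    "water": {"red": 0.04, "nir": 0.02},
}
index = {name: ndvi(s["red"], s["nir"]) for name, s in signatures.items()}
for name, value in index.items():
    print(f"{name}: {value:.2f}")
```

With these assumed values, vegetation scores highest, bare soil sits near zero, and water comes out negative.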

Q 7. What are different types of remote sensing data used in land use/cover classification?

A variety of remote sensing data is used in land use/cover classification, each with its own strengths and weaknesses:

- Multispectral Imagery: Data acquired from sensors like Landsat, Sentinel, and ASTER, which record reflectance in multiple spectral bands (e.g., visible, near-infrared, shortwave infrared). These provide information about surface features and are widely used for land cover mapping at various spatial scales.

- Hyperspectral Imagery: Data with hundreds of continuous spectral bands, offering highly detailed spectral information and enabling finer distinctions between land cover types. Examples include AVIRIS and HyMap.

- LiDAR (Light Detection and Ranging): Active remote sensing technology using laser pulses to measure distances to the Earth’s surface. LiDAR data are excellent for generating digital elevation models (DEMs) and characterizing 3D surface features, crucial for classifying features like forests and urban areas.

- SAR (Synthetic Aperture Radar): Active remote sensing technology that uses microwave signals, which pass through clouds and, at longer wavelengths, partially penetrate vegetation canopies. It is sensitive to surface roughness and moisture content, which makes it useful for mapping features like flooded areas or vegetation types.

- Aerial Photography: High-resolution images obtained from aircraft. Useful for detailed analysis of land cover at local scales.

The selection of data type depends on factors such as the spatial and spectral resolution required, the budget, and the specific land cover features of interest.

Q 8. How do you handle cloud cover in satellite imagery for land cover mapping?

Cloud cover is a major challenge in satellite imagery for land cover mapping because it obscures the ground surface, preventing accurate classification. We handle this using several strategies.

- Image Selection: We prioritize images with minimal cloud cover. This often involves reviewing multiple images acquired over time to find the clearest ones. Think of it like choosing the clearest photo from a photoshoot – you want the one that shows the subject best.

- Cloud Masking: We use sophisticated algorithms to identify and mask out cloudy areas in the imagery. This involves analyzing spectral characteristics of clouds, which are distinct from land cover features. The masked areas are then excluded from the classification process. Think of it as digitally ‘erasing’ the clouds from the picture.

- Cloud Filling/Interpolation: For areas with moderate cloud cover, techniques like interpolation or using data from neighbouring clear pixels can estimate the missing land cover information. This is like filling in a small gap in a puzzle using the surrounding pieces as a guide. However, this approach has limitations and should be used cautiously, as it can introduce some degree of uncertainty.

- Multiple Dates: Acquiring images from different dates can increase the chances of obtaining cloud-free coverage of the area of interest. This is akin to taking multiple shots during your photoshoot to ensure you capture a perfect image of your subject.

The choice of method depends on the extent of cloud cover and the desired accuracy of the land cover map.
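The masking and multiple-date strategies can be combined into a simple composite, sketched below. The single-band brightness threshold is a strong simplification and an assumption of this example; operational cloud masks rely on several bands and dedicated algorithms.

```python
from statistics import median

CLOUD_THRESHOLD = 0.4  # hypothetical: brighter pixels are treated as cloud

def composite(images, threshold=CLOUD_THRESHOLD):
    """Per-pixel median of cloud-free observations; None where every date is cloudy."""
    rows, cols = len(images[0]), len(images[0][0])
    result = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            # Cloud masking: drop observations brighter than the threshold.
            clear = [img[r][c] for img in images if img[r][c] < threshold]
            out_row.append(median(clear) if clear else None)
        result.append(out_row)
    return result

# Three hypothetical acquisitions of the same 2x2 area (single-band reflectance).
date_1 = [[0.10, 0.80], [0.12, 0.30]]
date_2 = [[0.11, 0.20], [0.90, 0.31]]
date_3 = [[0.85, 0.22], [0.95, 0.29]]
mosaic = composite([date_1, date_2, date_3])
print(mosaic)
```

Pixels that are cloudy on every date come back as `None`, which keeps the gap explicit instead of silently filling it.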

Q 9. Describe the process of image preprocessing for land cover classification.

Image preprocessing is crucial for achieving accurate land cover classification. It involves a series of steps to enhance image quality and remove irrelevant information. The steps typically include:

- Atmospheric Correction: This removes the effects of atmospheric scattering and absorption, ensuring the spectral reflectance values accurately represent the ground features. Imagine removing haze from a photograph to reveal the true colours of the landscape.

- Geometric Correction: This corrects for geometric distortions caused by sensor perspective and Earth’s curvature, aligning pixels with accurate geographic coordinates. This is like straightening a slightly crooked photograph.

- Radiometric Calibration: This converts the raw digital numbers (DNs) recorded by the sensor into physical units such as radiance or reflectance, so that values are comparable across the image and between acquisitions. This is similar to adjusting brightness and contrast in photo editing software.

- Data Filtering: This removes noise or unwanted patterns in the image that might interfere with classification. Think of it as digitally cleaning up blemishes or scratches in a picture.

- Data Transformation: This includes operations like principal component analysis (PCA) or tasseled cap transformation to enhance the separability of land cover classes. This is like highlighting the key features in a photograph to make them easier to distinguish.

These preprocessing steps improve the accuracy of the classification process by ensuring that the input data is of high quality and free from artifacts.
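Two of these steps can be sketched in a few lines: a linear rescaling of DNs (radiometric calibration) followed by dark object subtraction, a very crude form of atmospheric correction that is swapped in here for brevity. The gain and offset below are hypothetical; real coefficients come from the metadata delivered with each scene.

```python
def dn_to_reflectance(dn, gain, offset):
    """Linear rescaling of a raw digital number to reflectance."""
    return gain * dn + offset

def dark_object_subtraction(band):
    """Subtract the darkest value in the band as a rough estimate of haze."""
    darkest = min(min(row) for row in band)
    return [[value - darkest for value in row] for row in band]

# Hypothetical coefficients; real values come from the scene's metadata.
GAIN, OFFSET = 0.0001, 0.0

raw_band = [[1200, 1500], [300, 4800]]
reflectance = [[dn_to_reflectance(dn, GAIN, OFFSET) for dn in row] for row in raw_band]
corrected = dark_object_subtraction(reflectance)
print(corrected)
```

Dark object subtraction assumes the darkest pixel should have zero reflectance, which only holds approximately, so treat the result as a first-order correction.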

Q 10. What is the role of GIS in land use and land cover analysis?

GIS (Geographic Information Systems) plays a vital role in land use and land cover analysis. It provides the framework for managing, analyzing, and visualizing spatial data. Essentially, GIS is the toolbox for handling geographical information.

- Data Management: GIS software handles storing, organizing, and retrieving vast amounts of geographic data, including satellite imagery, vector data (like boundaries), and attribute tables (containing information about land cover types).

- Spatial Analysis: GIS allows us to perform various spatial analyses, such as calculating areas, distances, and proximity relationships between land cover classes. For example, we can use GIS to identify areas experiencing deforestation or urbanization.

- Visualization: GIS enables the creation of maps and other visualizations to communicate land use/cover change results effectively. A clear map is crucial for presenting complex findings to stakeholders and policymakers.

- Integration: GIS integrates diverse data sources, enabling comprehensive analysis. We can combine satellite imagery data with other datasets like population density or elevation data for a more holistic understanding of land use and land cover patterns.

Without GIS, analyzing large datasets for land use/land cover would be incredibly difficult and time-consuming.

Q 11. Explain different spatial resolutions and their impact on classification accuracy.

Spatial resolution refers to the size of the pixels in a satellite image. A higher spatial resolution means smaller pixels, providing more detailed information about the ground surface. The impact on classification accuracy is significant:

- High Resolution (e.g., < 1 meter): Captures fine details, allowing small land cover features like individual trees or buildings to be mapped. This often improves accuracy for small features, although the extra within-class variability can complicate pixel-based classification, and data volume and processing costs are higher.

- Medium Resolution (e.g., 10-30 meters): Provides a balance between detail and data volume, suitable for mapping larger land cover types like forests or agricultural fields. This is often a practical choice for large-scale projects.

- Low Resolution (e.g., > 100 meters): Offers limited detail, only suitable for mapping broad land cover classes. This might be acceptable for regional-scale assessments where fine detail is less important but is likely to have lower accuracy.

The choice of spatial resolution depends on the scale of the study, the size of the land cover features of interest, and the available budget. A detailed study of urban land cover might need high-resolution imagery, while a national-level assessment might use medium or low resolution.
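The effect of coarser pixels can be illustrated by aggregating a fine-resolution class map and counting how many coarse pixels end up mixed. The grid and class codes are hypothetical, and the sketch assumes the grid dimensions are exact multiples of the aggregation factor.

```python
from collections import Counter

def aggregate(grid, factor):
    """Resample a class grid to coarser pixels by majority vote.

    Returns (coarse_grid, mixed_count), where mixed_count is the number of
    coarse pixels that cover more than one fine-resolution class.
    """
    coarse, mixed = [], 0
    for r in range(0, len(grid), factor):
        row = []
        for c in range(0, len(grid[0]), factor):
            block = [grid[i][j]
                     for i in range(r, r + factor)
                     for j in range(c, c + factor)]
            counts = Counter(block)
            row.append(counts.most_common(1)[0][0])  # dominant class wins
            if len(counts) > 1:
                mixed += 1
        coarse.append(row)
    return coarse, mixed

# Hypothetical 4x4 fine-resolution map: F = forest, U = urban, W = water.
fine = [
    ["F", "F", "U", "U"],
    ["F", "F", "U", "W"],
    ["F", "U", "W", "W"],
    ["F", "F", "W", "W"],
]
coarse, mixed = aggregate(fine, factor=2)
print(coarse, mixed)
```

In this toy grid, half of the coarse pixels are mixed, and the minority classes inside them disappear from the coarse map.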

Q 12. How do you select appropriate classification algorithms?

Selecting the appropriate classification algorithm is crucial for achieving accurate results. The choice depends on several factors:

- Data Characteristics: The type of satellite imagery (e.g., multispectral, hyperspectral), the number of bands, and the presence of noise all influence algorithm selection.

- Land Cover Complexity: If the area has many spectrally similar classes, a more sophisticated algorithm might be needed compared to an area with distinct classes.

- Computational Resources: Some algorithms are more computationally intensive than others. The availability of processing power and memory should be considered.

- Desired Accuracy: The level of accuracy required will impact the algorithm choice. A higher accuracy requirement might necessitate a more complex algorithm.

Common algorithms include:

- Supervised Classification (e.g., Maximum Likelihood, Support Vector Machines): Requires training data to classify pixels. Think of it like teaching a computer to identify different land cover types based on example pixels.

- Unsupervised Classification (e.g., K-means clustering): Groups pixels based on spectral similarity without prior training data. This is like letting the computer find patterns on its own.

- Object-Based Image Analysis (OBIA): This approach groups pixels into meaningful objects before classification, often improving accuracy, particularly with high-resolution data. This is like first grouping similar features before identifying them.

The best algorithm is often determined through experimentation and evaluation.

Q 13. What are the key performance indicators (KPIs) for evaluating a land cover classification?

Key Performance Indicators (KPIs) for evaluating a land cover classification include:

- Overall Accuracy: The percentage of correctly classified pixels across all classes. This provides a general measure of the classification’s performance.

- Producer’s Accuracy: For each class, the proportion of reference (ground) pixels of that class that the map classifies correctly. It is the complement of the omission error and shows how well each real-world class is captured.

- User’s Accuracy: The probability that a pixel classified as a particular class truly represents that class on the ground. This reflects the reliability of the assigned classes.

- Kappa Coefficient: Measures the agreement between the classified map and the reference data, accounting for chance agreement. A higher kappa value signifies better agreement and classification accuracy.

- Error Matrix (Confusion Matrix): Provides a detailed breakdown of classification errors, showing the confusion between different classes.

By analyzing these KPIs, we can assess the strengths and weaknesses of the classification and identify potential areas for improvement.

Q 14. Explain the concept of a confusion matrix.

A confusion matrix, also known as an error matrix, is a table that summarizes the performance of a classification model. It shows the counts of correctly and incorrectly classified pixels for each land cover class. Imagine it like a scorecard for your classification process.

For example, consider a classification with three land cover classes: Forest, Urban, and Agriculture. A confusion matrix might look like this:

| Actual \ Classified As | Forest | Urban | Agriculture |
| --- | --- | --- | --- |
| Forest | 90 | 5 | 5 |
| Urban | 2 | 85 | 3 |
| Agriculture | 3 | 7 | 80 |

Rows are the actual (reference) classes and columns are the classes assigned by the classifier. The diagonal elements (90, 85, 80) represent the number of correctly classified pixels for each class. The off-diagonal elements show the misclassifications. For example, 5 pixels that are actually Forest were classified as Urban, while 2 pixels that are actually Urban were classified as Forest. The confusion matrix allows us to identify which classes are being confused and helps to improve the classification process.
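The metrics from Q 13 follow directly from this matrix. The sketch below reuses the three-class example, reading rows as actual classes and columns as classified classes; the variable names are just for illustration.

```python
# Error matrix from the example: rows are actual classes,
# columns are classified classes (Forest, Urban, Agriculture).
classes = ["Forest", "Urban", "Agriculture"]
matrix = [
    [90, 5, 5],
    [2, 85, 3],
    [3, 7, 80],
]

total = sum(sum(row) for row in matrix)
correct = sum(matrix[i][i] for i in range(len(classes)))
overall_accuracy = correct / total

row_totals = [sum(row) for row in matrix]        # reference pixels per class
col_totals = [sum(col) for col in zip(*matrix)]  # mapped pixels per class

# Producer's accuracy divides by the reference total, user's by the mapped total.
producers = {c: matrix[i][i] / row_totals[i] for i, c in enumerate(classes)}
users = {c: matrix[i][i] / col_totals[i] for i, c in enumerate(classes)}

print(f"overall: {overall_accuracy:.3f}")
for c in classes:
    print(f"{c}: producer's {producers[c]:.3f}, user's {users[c]:.3f}")
```

Note that a class can score well on one measure and worse on the other: here Urban has a higher producer’s accuracy than user’s accuracy, because other classes are sometimes mislabeled as Urban.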

Q 15. How do you interpret a kappa coefficient?

The Kappa coefficient is a statistical measure that quantifies the agreement between two raters (or classifiers) when evaluating categorical data, such as land use/land cover classifications. It’s a crucial metric because it accounts for agreement that could occur by chance alone. A kappa value ranges from -1 to +1. A kappa of 0 indicates agreement equivalent to random chance; a kappa of 1 represents perfect agreement; and values between 0 and 1 represent varying degrees of agreement above chance.

For example, imagine you’re comparing your automated land cover classification results to a manually interpreted reference map. A kappa of 0.8 means that the observed agreement is 80% of the way from the agreement expected by chance to perfect agreement; it is not the same as 80% overall accuracy. A lower kappa, such as 0.5, suggests more discrepancies and a need for improvement in your classification methodology.

Interpreting kappa also depends on context. A kappa of 0.7 might be considered good for a complex classification with many classes, while the same kappa might be inadequate for a simpler task. Always consider the specific application and the variability within the data when evaluating kappa.
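Kappa is computed as (p_o - p_e) / (1 - p_e), where p_o is the observed agreement (overall accuracy) and p_e is the agreement expected by chance from the row and column totals. A minimal sketch, applied to the three-class error matrix from Q 14:

```python
def cohen_kappa(matrix):
    """Cohen's kappa from a square error matrix (rows: reference, columns: map)."""
    total = sum(sum(row) for row in matrix)
    observed = sum(matrix[i][i] for i in range(len(matrix))) / total
    row_totals = [sum(row) for row in matrix]
    col_totals = [sum(col) for col in zip(*matrix)]
    # Chance agreement: product of marginal proportions, summed over classes.
    expected = sum(r * c for r, c in zip(row_totals, col_totals)) / total ** 2
    return (observed - expected) / (1 - expected)

matrix = [
    [90, 5, 5],
    [2, 85, 3],
    [3, 7, 80],
]
kappa = cohen_kappa(matrix)
print(f"{kappa:.3f}")  # about 0.866, lower than the overall accuracy of about 0.911
```

The gap between kappa and overall accuracy is the part of the agreement that could be attributed to chance.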

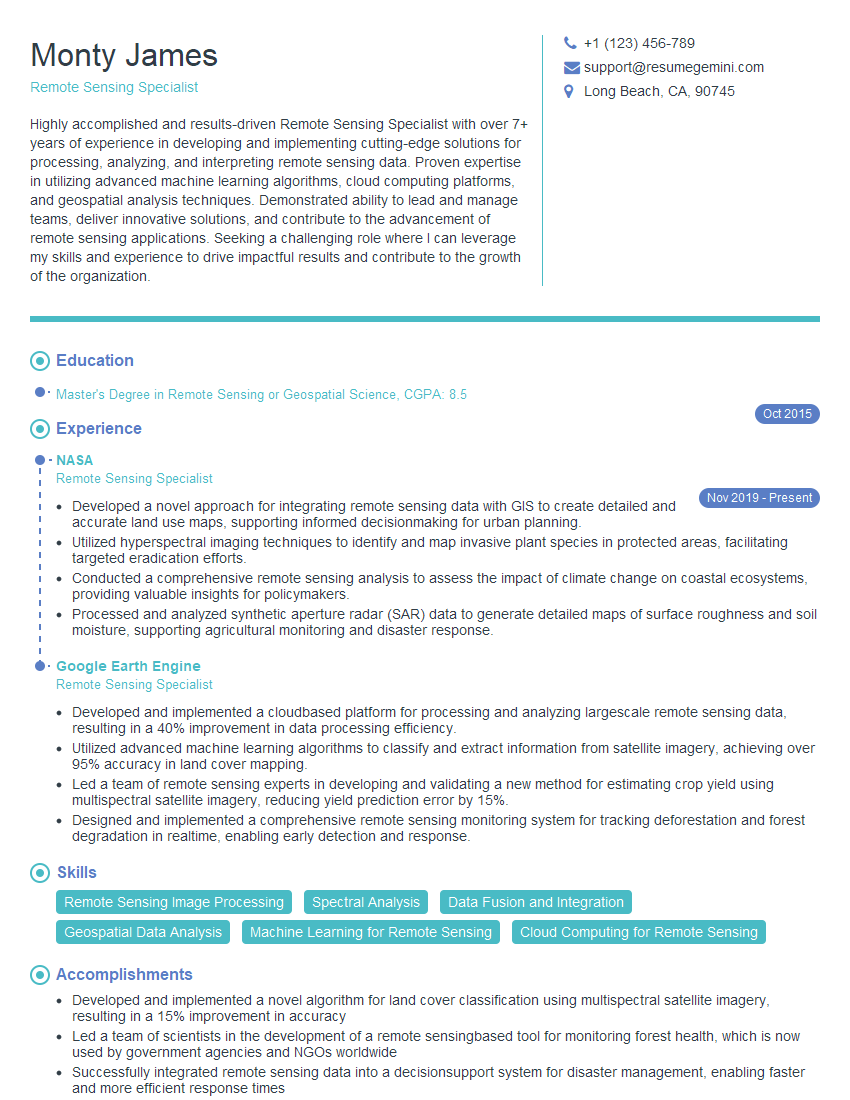

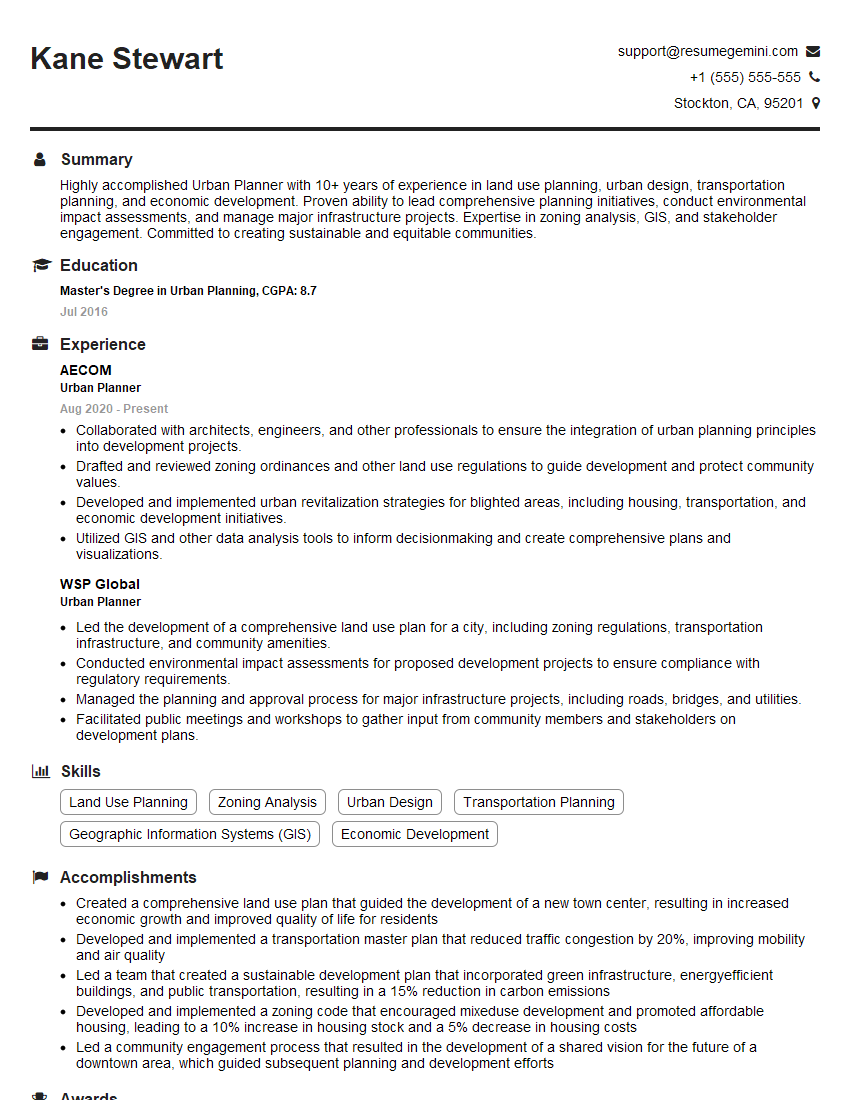

Career Expert Tips:

- Ace those interviews! Prepare effectively by reviewing the Top 50 Most Common Interview Questions on ResumeGemini.

- Navigate your job search with confidence! Explore a wide range of Career Tips on ResumeGemini. Learn about common challenges and recommendations to overcome them.

- Craft the perfect resume! Master the Art of Resume Writing with ResumeGemini’s guide. Showcase your unique qualifications and achievements effectively.

- Don’t miss out on holiday savings! Build your dream resume with ResumeGemini’s ATS optimized templates.

Q 16. Describe your experience with different classification algorithms (e.g., Maximum Likelihood, Support Vector Machines).

I have extensive experience with various classification algorithms, including Maximum Likelihood Classification (MLC), Support Vector Machines (SVMs), and others such as Random Forests and Neural Networks. MLC, a classic technique, assumes that the spectral values for each land cover class follow a normal distribution. It assigns pixels to the class with the highest probability given its spectral signature. It’s computationally efficient but can be less accurate if class distributions are non-normal.

SVMs, on the other hand, are powerful tools for finding optimal hyperplanes to separate different land cover classes. They work well with high-dimensional data and can handle non-linear relationships using kernel functions. While they are robust, they can be more computationally intensive than MLC and require careful parameter tuning.

In my work, I often employ a comparative approach, experimenting with different algorithms and evaluating their performance using metrics like overall accuracy, producer’s accuracy, user’s accuracy, and the kappa coefficient. The choice of the best algorithm depends on factors such as the characteristics of the data (e.g., number of classes, data dimensionality, presence of noise), computational constraints, and the desired level of accuracy.

For instance, in a project involving complex urban land cover mapping with numerous classes and potentially noisy data, I might favor an ensemble method like Random Forest for its resilience to noise and high accuracy, while for a simpler land cover classification with clear class separability, MLC might be sufficient.

Q 17. How do you address the issue of spatial autocorrelation in land cover data?

Spatial autocorrelation, where nearby pixels tend to have similar land cover types, is a common issue in land cover data. Ignoring this can lead to biased estimates and inflated accuracy assessments. Several techniques are used to address it:

- Geographically Weighted Regression (GWR): This method allows for local variations in regression coefficients, acknowledging the spatial non-stationarity of the data.

- Spatial Lag or Spatial Error Models: These models incorporate spatial dependencies into traditional regression frameworks. A spatial lag model includes a spatially lagged dependent variable, while a spatial error model accounts for spatially autocorrelated errors.

- Sampling Strategies: Employing stratified random sampling or spatially balanced sampling can reduce the impact of autocorrelation by ensuring representative samples across the study area.

- Spatial Filtering Techniques: Techniques like moving averages or other spatial filters can smooth the data and reduce the impact of local clustering, but should be used cautiously as they can also obscure real spatial patterns.

For instance, in analyzing forest cover change, ignoring spatial autocorrelation can overstate confidence in the estimated extent of deforestation when deforestation clusters in specific areas, because clustered samples carry less independent information than their number suggests. Incorporating spatial autocorrelation into the analysis would lead to a more accurate assessment.
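Before choosing a remedy, it helps to measure how strong the autocorrelation is. Moran’s I is a standard statistic for this (it is not named above, so treat it as an addition). The sketch below uses rook adjacency with unit weights on a small hypothetical binary grid, and it assumes the grid is not constant, since the statistic is undefined when all values are equal.

```python
def morans_i(grid):
    """Moran's I for a 2D grid using rook (edge-sharing) neighbours, weight 1."""
    rows, cols = len(grid), len(grid[0])
    values = [v for row in grid for v in row]
    n = len(values)
    mean = sum(values) / n
    denominator = sum((v - mean) ** 2 for v in values)

    cross_products, weight_sum = 0.0, 0
    for r in range(rows):
        for c in range(cols):
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < rows and 0 <= cc < cols:
                    cross_products += (grid[r][c] - mean) * (grid[rr][cc] - mean)
                    weight_sum += 1
    return (n / weight_sum) * (cross_products / denominator)

# 1 = forest, 0 = non-forest (hypothetical maps).
clustered = [[1, 1, 0, 0] for _ in range(4)]
checkerboard = [[(r + c) % 2 for c in range(4)] for r in range(4)]
print(morans_i(clustered), morans_i(checkerboard))
```

Values near +1 indicate clustering, values near 0 indicate no spatial pattern, and negative values indicate that neighbours tend to differ.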

Q 18. What are the ethical considerations involved in land use/cover classification?

Ethical considerations in land use/cover classification are crucial. Transparency and data provenance are paramount. We must ensure that the data used is ethically sourced and that any biases in the data or the classification process are acknowledged and addressed. This includes considering the potential impact of our classifications on different stakeholders.

For example, a classification that inaccurately depicts protected areas could lead to inappropriate land management practices and harm biodiversity. Similarly, biased data could perpetuate existing inequalities. Therefore, it’s essential to use appropriate data collection methods, validation strategies, and to communicate the limitations of the classification results clearly and honestly. We must also consider the potential use and misuse of our classifications and their broader social and environmental consequences.

It is crucial to be mindful of data privacy, especially when dealing with remotely sensed imagery that might reveal sensitive information. Involving local communities and stakeholders in the mapping process can ensure that classifications reflect their knowledge and perspectives, fostering transparency and inclusivity.

Q 19. How do you handle change detection in land use/cover analysis?

Change detection in land use/cover analysis involves identifying and quantifying changes that occur over time. This is often done by comparing land cover maps generated from imagery acquired at different time points. Common methods include:

- Post-Classification Comparison: This method involves independently classifying imagery from different time periods and then comparing the resulting maps to identify changes. This approach is straightforward but can be prone to errors if the classification accuracy is low.

- Image Differencing: This involves subtracting pixel values from one image from another. Significant differences indicate areas of change. This approach is computationally efficient but can be sensitive to atmospheric effects and radiometric differences between images.

- Image Regression: This method fits a regression model to pixel values from different time points, and changes are identified based on deviations from the fitted model.

For example, to track deforestation in the Amazon, I might use image differencing to identify areas with significant changes in vegetation indices between two Landsat images acquired several years apart. Further investigation using higher-resolution imagery could then confirm the nature of those changes (e.g., deforestation vs. natural disturbances). The choice of method depends on the characteristics of the data and the level of accuracy required.
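Image differencing and post-classification comparison can both be sketched on a tiny example. The vegetation-index values, the change threshold, the years, and the class maps are all hypothetical.

```python
from collections import Counter

def difference_change(before, after, threshold):
    """Image differencing: flag pixels whose value changed by more than the threshold."""
    return [
        [abs(a - b) > threshold for b, a in zip(row_b, row_a)]
        for row_b, row_a in zip(before, after)
    ]

def from_to_counts(map_before, map_after):
    """Post-classification comparison: count pixels per (from, to) class pair."""
    return Counter(
        (b, a)
        for row_b, row_a in zip(map_before, map_after)
        for b, a in zip(row_b, row_a)
    )

# Hypothetical vegetation-index values for the same 2x2 area on two dates.
ndvi_2015 = [[0.80, 0.75], [0.78, 0.20]]
ndvi_2020 = [[0.79, 0.25], [0.30, 0.22]]
changed = difference_change(ndvi_2015, ndvi_2020, threshold=0.3)

# Hypothetical classified maps for the same two dates.
classes_2015 = [["forest", "forest"], ["forest", "urban"]]
classes_2020 = [["forest", "agriculture"], ["agriculture", "urban"]]
transitions = from_to_counts(classes_2015, classes_2020)

print(changed)
print(transitions)
```

Differencing tells you where something changed; the from-to counts tell you what it changed into, at the cost of inheriting the errors of both classifications.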

Q 20. Explain the use of object-based image analysis (OBIA) in land cover mapping.

Object-based image analysis (OBIA) is a powerful approach that moves beyond pixel-based classification. Instead of classifying individual pixels, OBIA operates on image objects, which are groups of connected pixels with similar spectral and spatial characteristics. These objects are delineated using segmentation algorithms, and then classified based on their features.

This approach is advantageous because it leverages both spectral and spatial information, making it particularly useful for complex landscapes with heterogeneous land cover. OBIA is better at handling mixed pixels (pixels containing multiple land cover types) and can incorporate shape, texture, and contextual information, leading to improved classification accuracy, especially in cases where spectral signatures are not sufficient to distinguish between classes. It is common to use software tools that incorporate OBIA functionalities.

For example, in classifying agricultural fields, OBIA could effectively distinguish between fields of different crops even if their spectral signatures overlap. By considering the shape and size of the objects along with spectral information, the OBIA approach can achieve higher classification accuracy than a traditional pixel-based classification.
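The segment-then-classify idea can be shown with a toy region-growing segmentation followed by a rule on object features. This is a simplified sketch, not how any particular OBIA package works; the image values, tolerance, and classification threshold are assumptions.

```python
from collections import deque

def segment(image, tolerance):
    """Region growing: 4-connected pixels within `tolerance` of the seed join one object."""
    rows, cols = len(image), len(image[0])
    labels = [[None] * cols for _ in range(rows)]
    next_id = 0
    for r in range(rows):
        for c in range(cols):
            if labels[r][c] is not None:
                continue
            seed = image[r][c]
            labels[r][c] = next_id
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and labels[ny][nx] is None
                            and abs(image[ny][nx] - seed) <= tolerance):
                        labels[ny][nx] = next_id
                        queue.append((ny, nx))
            next_id += 1
    return labels

def object_features(image, labels):
    """Mean value and pixel count per object."""
    stats = {}
    for row_v, row_l in zip(image, labels):
        for value, obj in zip(row_v, row_l):
            total, count = stats.get(obj, (0.0, 0))
            stats[obj] = (total + value, count + 1)
    return {obj: {"mean": t / n, "size": n} for obj, (t, n) in stats.items()}

# Hypothetical single-band image (for example, a vegetation index).
image = [
    [0.80, 0.82, 0.20],
    [0.81, 0.79, 0.22],
    [0.78, 0.80, 0.21],
]
labels = segment(image, tolerance=0.1)
features = object_features(image, labels)
# Classify whole objects, not pixels (the 0.5 threshold is an assumption).
classified = {
    obj: "vegetation" if f["mean"] > 0.5 else "non-vegetation"
    for obj, f in features.items()
}
print(labels, classified)
```

Real workflows add shape, texture, and neighbourhood features per object; the size field here is a placeholder for that kind of spatial information.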

Q 21. Describe your experience with specific GIS software (e.g., ArcGIS, QGIS).

I have extensive experience with both ArcGIS and QGIS. ArcGIS is a powerful and comprehensive GIS software package known for its advanced spatial analysis tools and extensive extensions. I’ve used it extensively for tasks ranging from data preprocessing and image classification to change detection and spatial modeling. Its robust capabilities are particularly useful for complex projects involving large datasets and sophisticated analyses.

QGIS, on the other hand, is a free and open-source GIS software that offers a surprisingly comprehensive set of tools. Its open-source nature allows for community development and constant improvement. I have used QGIS for numerous projects requiring rapid prototyping, data visualization, and tasks that don’t necessitate ArcGIS’s advanced features. Its flexibility and accessibility make it a valuable tool, especially for projects with limited budgets.

My proficiency in both these software packages allows me to choose the most appropriate tool based on project requirements, budget, and the complexity of the analysis. I’m also proficient in using programming languages like Python to automate workflows and extend the capabilities of both ArcGIS and QGIS.

Q 22. What are the challenges in classifying urban areas?

Classifying urban areas presents unique challenges due to their high heterogeneity. Unlike relatively uniform landscapes like forests or grasslands, urban areas are mosaics of vastly different land cover types densely packed together. This complexity makes accurate classification difficult for several reasons:

- High spectral variability: Different building materials (concrete, brick, asphalt) reflect light differently, leading to spectral confusion. Even within a single ‘urban’ class, the spectral signature can vary widely.

- Sub-pixel variability: The resolution of satellite imagery often can’t distinguish between small patches of different land cover types within a single pixel. For example, a pixel might contain a mix of buildings, roads, and vegetation, making it difficult to assign a single class.

- Rapid change: Urban areas are dynamic environments, constantly evolving through construction, demolition, and renovation. This rapid change makes it difficult to maintain up-to-date land cover maps.

- Data accessibility and quality: Obtaining high-resolution imagery covering large urban areas can be expensive and time-consuming. Additionally, cloud cover and atmospheric effects can further degrade image quality.

- Defining urban classes: Establishing consistent definitions for different urban land cover classes (e.g., residential, commercial, industrial) can be subjective and vary across studies.

To address these challenges, advanced classification techniques such as object-based image analysis (OBIA), which considers spatial context alongside spectral information, are often employed. Furthermore, incorporating ancillary data (discussed in the next question) and using higher spatial resolution imagery can greatly improve accuracy.

Q 23. How do you integrate ancillary data (e.g., elevation, soil type) into land cover classification?

Integrating ancillary data significantly improves the accuracy and detail of land cover classification. This involves combining remotely sensed data (like satellite imagery) with other information sources. For example, elevation data can help distinguish between floodplain forests and upland forests, while soil type data can help differentiate between different types of grasslands or agricultural fields.

The integration can happen at different stages of the classification process:

- Pre-classification: Ancillary data can be used to improve image preprocessing. For example, elevation data can be used to correct for topographic effects on satellite imagery.

- During classification: Ancillary data can be used as additional features in a classification algorithm. This is often done by creating a composite dataset where the spectral bands from the imagery are combined with variables representing ancillary data (e.g., elevation, slope, soil type).

- Post-classification: Ancillary data can be used to refine or correct the classification results. For example, a classification might be refined using a rule-based approach that considers elevation constraints to improve the accuracy of elevation-dependent classes like wetlands.
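
As a rough illustration of the "during classification" stage, the sketch below stacks two spectral bands with an elevation layer into one feature vector per pixel, then classifies with a simple minimum-distance-to-means rule. All values, class names, and helper names (stack_features, class_means, classify) are made up for illustration, and elevation is assumed to be rescaled to 0-1 so it is comparable with reflectance. Plain Python lists keep the example dependency-free; a real workflow would more likely use array and raster libraries.

```python
import math

def stack_features(red, nir, elevation):
    """Combine per-pixel spectral bands and elevation into feature vectors."""
    return [list(f) for f in zip(red, nir, elevation)]

def class_means(features, labels):
    """Mean feature vector for each labelled class (the training step)."""
    sums, counts = {}, {}
    for vec, label in zip(features, labels):
        acc = sums.setdefault(label, [0.0] * len(vec))
        for i, value in enumerate(vec):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {lab: [s / counts[lab] for s in acc] for lab, acc in sums.items()}

def classify(vec, means):
    """Assign the class whose mean vector is closest in Euclidean distance."""
    return min(means, key=lambda lab: math.dist(vec, means[lab]))

# Hypothetical training pixels: reflectance (0-1) and elevation rescaled to 0-1
train_red = [0.05, 0.06, 0.05, 0.06]
train_nir = [0.45, 0.44, 0.46, 0.45]
train_elev = [0.05, 0.08, 0.80, 0.85]
train_labels = ["floodplain_forest", "floodplain_forest",
                "upland_forest", "upland_forest"]

means = class_means(stack_features(train_red, train_nir, train_elev), train_labels)

# Two spectrally identical pixels that differ only in elevation
low_pixel = [0.055, 0.45, 0.10]
high_pixel = [0.055, 0.45, 0.75]
print(classify(low_pixel, means))
print(classify(high_pixel, means))
```

Because the two test pixels have identical band values, only the elevation feature separates them, which is the point of adding the ancillary layer.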

Techniques used for integration include:

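- Supervised classification with additional bands: Treat ancillary data as additional input bands in a supervised classification method (e.g., maximum likelihood, support vector machines). Note that maximum likelihood assumes normally distributed, continuous features, so categorical layers such as soil type are usually better handled by non-parametric classifiers such as Random Forest.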

- Object-based image analysis (OBIA): Integrate ancillary data as object attributes to refine segmentation and classification.

- Rule-based systems: Use logical rules to combine ancillary data with classification results to make adjustments.

For example, in classifying wetlands, incorporating elevation data as a layer helps identify low-lying areas where water is likely to accumulate, preventing misclassification of areas that appear spectrally similar but are not truly wetlands.
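
A post-classification rule of this kind can be sketched in a few lines. The example below relabels any pixel mapped as wetland that sits above an elevation threshold. The 30 m threshold, the "grassland" fallback class, and the function name refine_wetlands are assumptions chosen for illustration; a real rule set would be derived from local knowledge of the study area.

```python
def refine_wetlands(classified, elevation, max_wetland_elev, fallback="grassland"):
    """Relabel 'wetland' pixels that lie above an elevation threshold.

    classified and elevation are row-major grids of the same shape.
    """
    refined = []
    for class_row, elev_row in zip(classified, elevation):
        refined.append([
            fallback if label == "wetland" and elev > max_wetland_elev else label
            for label, elev in zip(class_row, elev_row)
        ])
    return refined

# Hypothetical 2 x 3 classification result and matching elevations in metres
classified = [
    ["wetland", "wetland", "forest"],
    ["wetland", "grassland", "forest"],
]
elevation = [
    [12.0, 95.0, 110.0],
    [10.0, 15.0, 120.0],
]

refined = refine_wetlands(classified, elevation, max_wetland_elev=30.0)
for row in refined:
    print(row)
```

Only the wetland pixel at 95 m is changed; low-lying wetland pixels and all other classes pass through untouched.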

Q 24. Discuss the role of land use/cover classification in environmental monitoring.

Land use/cover classification plays a crucial role in environmental monitoring by providing a spatial and temporal understanding of changes in the Earth’s surface. This information is essential for:

- Deforestation and forest degradation monitoring: Tracking changes in forest cover helps assess deforestation rates, identify drivers of deforestation (e.g., agriculture, logging), and implement effective conservation strategies.

- Urban sprawl analysis: Monitoring the expansion of urban areas helps understand the impacts on natural habitats, biodiversity, and resource consumption.

- Agricultural land management: Classifying different agricultural land uses helps assess crop production, monitor irrigation practices, and optimize resource allocation for improved yields.

- Habitat fragmentation analysis: Analyzing land cover patterns helps identify fragmented habitats, which can negatively impact biodiversity. This allows for the development of conservation strategies to maintain connectivity among habitats.

- Climate change impact assessment: Land cover change affects carbon sequestration, albedo (reflectivity of the Earth’s surface), and water cycles. Monitoring these changes is critical for understanding and mitigating the impacts of climate change.

- Natural disaster response: Land cover maps are crucial in assessing the damage caused by natural disasters like floods, wildfires, or hurricanes and for planning recovery efforts.

By regularly updating land cover maps over time, we can create time series data that reveal trends and patterns of change, allowing for effective environmental management and policy development. For instance, monitoring changes in wetlands using satellite imagery over several decades can provide valuable insight into the impacts of human activities and climate change on these vital ecosystems.
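
One simple way to turn two dated maps into change information is a "from-to" transition count. The sketch below assumes two co-registered classified grids with identical class names; the maps, years, and helper names (change_matrix, net_change) are hypothetical.

```python
from collections import Counter

def change_matrix(map_t1, map_t2):
    """Count pixels for each (class at time 1, class at time 2) transition."""
    transitions = Counter()
    for row_t1, row_t2 in zip(map_t1, map_t2):
        transitions.update(zip(row_t1, row_t2))
    return transitions

def net_change(transitions, land_class):
    """Pixels gained minus pixels lost for one class between the two dates."""
    gained = sum(n for (a, b), n in transitions.items()
                 if b == land_class and a != land_class)
    lost = sum(n for (a, b), n in transitions.items()
               if a == land_class and b != land_class)
    return gained - lost

# Hypothetical classified maps of the same 2 x 3 area at two dates
map_2000 = [
    ["forest", "forest", "wetland"],
    ["forest", "wetland", "wetland"],
]
map_2020 = [
    ["forest", "cropland", "wetland"],
    ["cropland", "cropland", "urban"],
]

transitions = change_matrix(map_2000, map_2020)
for (before, after), count in sorted(transitions.items()):
    print(f"{before} -> {after}: {count}")
print("Net wetland change (pixels):", net_change(transitions, "wetland"))
```

Multiplying pixel counts by the pixel area converts them to hectares or square kilometres. Keep in mind that classification errors in either map show up as apparent change.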

Q 25. Explain your understanding of national land cover datasets.

National land cover datasets are comprehensive spatial databases that represent the land cover across an entire country. They provide a standardized, consistent representation of land cover, facilitating national-level environmental monitoring, planning, and decision-making. These datasets are typically created using a combination of remotely sensed data (satellite imagery, aerial photography) and ancillary data.

Key characteristics include:

- National extent: They cover the entire geographical area of a country.

- Standardized classification scheme: They typically adhere to a nationally or internationally recognized land cover classification system, ensuring consistency and comparability across different regions.

- Regular updates: Many national datasets are updated periodically (e.g., every 2-5 years) to reflect changes in land cover.

- High spatial resolution: They often aim for high spatial resolution to support detailed land cover mapping, although the achievable resolution depends on data acquisition and processing capabilities.

- Multiple data sources: They are usually created from multiple sources to minimize errors and gaps in data coverage. Examples include Landsat imagery, aerial photography, and LiDAR.

Examples include the National Land Cover Database (NLCD) in the United States and similar initiatives in many other countries. These datasets are crucial for many applications, including environmental impact assessment, resource management, urban planning, and disaster response. The availability of reliable, up-to-date national land cover data is vital for evidence-based policy making and environmental sustainability.

Q 26. How do you validate your land cover classification results?

Validating land cover classification results is crucial to ensure accuracy and reliability. This involves comparing the classified map to a reference dataset that represents the true land cover. There are several methods for validation:

- Accuracy Assessment: This is the most common method, involving a statistical comparison between the classified map and a reference dataset. This typically involves randomly selecting sample points on the map, determining their land cover type in both the classified map and the reference data, and then calculating various accuracy metrics such as:

- Overall Accuracy: The percentage of correctly classified pixels.

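- Producer’s Accuracy: The probability that a pixel that actually belongs to a certain class (according to the reference data) is classified as that class. It is the complement of the omission error.

- User’s Accuracy: The probability that a pixel classified as a certain class actually belongs to that class. It is the complement of the commission error.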

- Kappa Coefficient: A measure of agreement between the classified map and the reference data, accounting for chance agreement.

- Visual Inspection: This involves visually comparing the classified map to aerial photography or high-resolution imagery to identify potential errors or areas of uncertainty.

- Field Verification: Ground truthing, where field observations are made at selected locations to verify the accuracy of the classification. This is especially important for complex or heterogeneous areas.

- Comparison with Existing Datasets: Comparing the classification results with other existing land cover datasets, which can reveal inconsistencies or errors. This is important if you want to use multiple datasets together.

The choice of validation method depends on the resources available, the complexity of the study area, and the level of accuracy required. A robust validation strategy typically involves a combination of these methods to provide a comprehensive assessment of the accuracy and reliability of the land cover classification.
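
The accuracy metrics can be computed directly from an error (confusion) matrix. The sketch below assumes rows hold the mapped class and columns hold the reference class, so user's accuracy is computed along rows and producer's accuracy along columns. The sample counts and the function name accuracy_metrics are made up for illustration, and every class is assumed to have at least one sample in both the map and the reference data.

```python
def accuracy_metrics(matrix, classes):
    """matrix[i][j] = samples mapped as classes[i] whose reference label is classes[j]."""
    n = sum(sum(row) for row in matrix)
    diag = [matrix[i][i] for i in range(len(classes))]
    row_totals = [sum(row) for row in matrix]        # totals per mapped class
    col_totals = [sum(col) for col in zip(*matrix)]  # totals per reference class

    overall = sum(diag) / n
    users = {c: diag[i] / row_totals[i] for i, c in enumerate(classes)}
    producers = {c: diag[i] / col_totals[i] for i, c in enumerate(classes)}

    # Agreement expected by chance, from the row and column marginals
    expected = sum(r * c for r, c in zip(row_totals, col_totals)) / (n * n)
    kappa = (overall - expected) / (1 - expected)
    return overall, users, producers, kappa

classes = ["forest", "water", "urban"]
matrix = [
    [45, 2, 3],   # mapped as forest
    [1, 28, 1],   # mapped as water
    [9, 0, 11],   # mapped as urban
]

overall, users, producers, kappa = accuracy_metrics(matrix, classes)
print(f"Overall accuracy: {overall:.2f}")
print(f"Kappa: {kappa:.3f}")
for c in classes:
    print(f"{c}: user's = {users[c]:.3f}, producer's = {producers[c]:.3f}")
```

In this made-up matrix, urban has a low user's accuracy because 9 of the 20 samples mapped as urban are forest in the reference data, an error that overall accuracy alone would hide.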

Q 27. Describe a time you had to overcome a technical challenge in a land cover classification project.

In one project mapping deforestation in the Amazon rainforest, we faced a significant challenge with cloud cover. A substantial portion of our satellite imagery was obscured by clouds, resulting in data gaps and hindering accurate mapping. Simply discarding cloudy images would have resulted in an incomplete and biased dataset.

To overcome this, we implemented a multi-step strategy:

- Temporal compositing: We combined images from multiple dates to minimize cloud cover. This involved selecting the clearest pixels from different images taken over several months, creating a composite image with minimal cloud contamination.

- Cloud masking: We used advanced cloud masking algorithms to identify and remove cloud pixels. This involved carefully calibrating parameters so that bright, cloud-like surfaces such as white sand were not mistakenly masked as cloud.

- Interpolation: In some areas where cloud cover was persistent, we used spatial interpolation techniques to fill in the missing data. This method estimated the land cover in those areas based on the surrounding pixels. The choice of interpolation technique (e.g., kriging, inverse distance weighting) was determined through experimentation to minimize bias.

This combined approach allowed us to produce a significantly more complete and accurate map of deforestation, despite the initial limitations imposed by cloud cover. It highlighted the importance of proactive planning, adaptive techniques, and a thorough understanding of image processing capabilities in overcoming technical challenges.
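
The first two steps can be combined in a small sketch. The example below uses a per-pixel median of cloud-free observations, which is one common compositing rule and not necessarily the rule used in the project described above. The reflectance values, the cloud masks, and the function name median_composite are hypothetical, and the masks are assumed to come from a separate cloud detection step.

```python
from statistics import median

def median_composite(images, cloud_masks):
    """Per-pixel median of cloud-free observations across dates.

    images and cloud_masks are lists of same-shaped row-major grids;
    a mask value of True marks a cloudy pixel. Pixels that are cloudy
    on every date are returned as None so the gap stays visible.
    """
    rows, cols = len(images[0]), len(images[0][0])
    composite = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            clear = [img[r][c] for img, mask in zip(images, cloud_masks)
                     if not mask[r][c]]
            out_row.append(median(clear) if clear else None)
        composite.append(out_row)
    return composite

# Hypothetical reflectance for one band on three dates (2 x 2 pixels)
images = [
    [[0.30, 0.90], [0.32, 0.95]],
    [[0.28, 0.31], [0.88, 0.92]],
    [[0.85, 0.29], [0.30, 0.97]],
]
cloud_masks = [
    [[False, True], [False, True]],
    [[False, False], [True, True]],
    [[True, False], [False, True]],
]

composite = median_composite(images, cloud_masks)
for row in composite:
    print(row)
```

The bottom-right pixel is cloudy on all three dates, so it stays empty; gaps like this are where the interpolation step, or imagery from additional dates or sensors, would come in.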

Q 28. How do you communicate complex geospatial information to a non-technical audience?

Communicating complex geospatial information to a non-technical audience requires a clear, concise, and visual approach. Avoiding jargon and technical details is paramount.

Effective strategies include:

- Use visual aids: Maps, charts, graphs, and infographics are essential. A well-designed map, for instance, can instantly convey spatial patterns that would be difficult to explain with words alone. Keep maps simple, and focus on highlighting key findings.

- Analogies and metaphors: Relate geospatial concepts to everyday experiences. For example, explain spatial resolution by comparing it to the zoom level on a map application.

- Storytelling: Frame the information within a compelling narrative. Instead of presenting data points, weave them into a story that explains the significance of the findings.

- Focus on the ‘so what?’: Clearly communicate the implications of the geospatial information for the audience. What does this mean for them? How does it impact their lives or communities?

- Interactive elements: Utilize interactive maps or web applications that allow the audience to explore the data themselves. This promotes active engagement and enhances understanding.

- Simple language: Avoid technical jargon and use plain language that everyone can understand. Define any unavoidable technical terms.

For example, instead of saying ‘The Normalized Difference Vegetation Index (NDVI) shows a significant decrease in vegetation cover in the study area,’ one might say, ‘Our maps show a significant decrease in green areas, indicating a possible loss of plant life.’ This simple alteration makes the information accessible and easily understandable to a wider audience.

Key Topics to Learn for Land Use and Land Cover Classification Interview

- Fundamentals of Land Use and Land Cover: Understanding the difference between land use and land cover, their interrelationship, and the various classification systems (e.g., Anderson Level I-III, USGS National Land Cover Database).

- Remote Sensing and GIS Applications: Proficiency in using remote sensing data (e.g., satellite imagery, aerial photography) and GIS software (e.g., ArcGIS, QGIS) for land cover mapping, analysis, and change detection.

- Image Classification Techniques: Knowledge of supervised and unsupervised classification methods, including maximum likelihood classification, support vector machines, and object-based image analysis (OBIA).

- Accuracy Assessment and Validation: Understanding the importance of accuracy assessment, methods for calculating error matrices, and interpreting Kappa statistics to evaluate the quality of land cover classifications.

- Spatial Data Analysis: Experience with spatial analysis techniques relevant to land use/land cover data, such as spatial autocorrelation, landscape metrics, and overlay analysis.

- Land Use Change Modeling and Prediction: Familiarity with techniques used to model and predict future land use and land cover changes, including Markov chain models and cellular automata.

- Applications in Environmental Management and Planning: Understanding how land use and land cover classification is applied to solve real-world problems in areas such as urban planning, natural resource management, and environmental impact assessment.

- Data Handling and Preprocessing: Experience with geospatial data preprocessing, including data cleaning, geometric correction, and atmospheric correction.

Next Steps

Mastering Land Use and Land Cover Classification opens doors to exciting careers in environmental science, urban planning, and geographic information systems. A strong understanding of these concepts is highly sought after by employers. To significantly boost your job prospects, create a compelling and ATS-friendly resume that highlights your skills and experience effectively. ResumeGemini is a trusted resource that can help you build a professional resume that stands out. Take advantage of their tools and resources – they even provide examples of resumes tailored to Land Use and Land Cover Classification to help you get started. Invest the time in crafting a top-notch resume; it’s a crucial step in securing your dream job.
