Cracking a skill-specific interview, like one for Total Survey Error (TSE) Framework, requires understanding the nuances of the role. In this blog, we present the questions you’re most likely to encounter, along with insights into how to answer them effectively. Let’s ensure you’re ready to make a strong impression.

Questions Asked in Total Survey Error (TSE) Framework Interview

Q 1. Define Total Survey Error (TSE) and its components.

Total Survey Error (TSE) encompasses all the sources of error that can affect the results of a survey. It’s not just about the margin of error you see reported in polls; it’s a much broader concept. Think of it like baking a cake – if any ingredient is off, the final product suffers. Similarly, any error in the survey process impacts the accuracy and reliability of your findings. TSE is typically broken down into two main categories: sampling error and non-sampling error.

- Sampling Error: This arises from the fact that you’re studying a sample of the population, not the entire population. It’s the difference between the statistic calculated from the sample and the true population parameter. It’s inherently random.

- Non-sampling Error: This is a catch-all term for errors that aren’t related to the sampling process itself. These errors can be systematic (bias) or random (added variance), and they are often more difficult to detect and correct than sampling error. Examples include coverage error, nonresponse error, measurement error, and processing error.

Q 2. Explain the difference between sampling and non-sampling error.

The key difference lies in the source of the error. Sampling error is inherent to using a sample instead of the entire population; it’s random and unavoidable. We can estimate and quantify it using statistical methods. Imagine trying to determine the average height of all adults in a city. Even with a perfectly random sample, the average height of your sample will likely be slightly different from the true average height of all adults in the city. That difference is sampling error.

Non-sampling error, however, stems from flaws in the survey design, implementation, or data processing. It is often systematic, meaning it biases the results in a particular direction, although it can also add random noise (for example, occasional keying mistakes). For example, if your survey questionnaire is confusing or leading, you’ll introduce non-sampling error regardless of how representative your sample is. It’s harder to measure and correct than sampling error because it can be subtle and difficult to identify.

Q 3. Describe various sources of sampling error.

Several factors contribute to sampling error. The primary source is the inherent variability in the population itself. Even with a perfect sampling method, selecting a subset will always lead to some level of difference from the true population value. This is often referred to as sampling variability.

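- Sampling Frame Error: This occurs when the sampling frame (the list from which you draw your sample) doesn’t accurately represent the population of interest. Strictly speaking, this is coverage error, a non-sampling error, but it is often raised alongside sampling because it determines who can be selected at all. For instance, if your target population is all eligible voters but your frame lists only registered voters, unregistered eligible voters can never be sampled.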

- Sample Size: Smaller samples lead to larger sampling errors, simply due to increased variability.

- Sampling Method: The method used to select the sample affects the error. With probability sampling (simple random sampling, stratified sampling, etc.), sampling error can be estimated from the design. Non-probability methods (convenience sampling, quota sampling, etc.) give no such basis for quantifying sampling error and tend to add selection bias on top of it.
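The sample size point can be sketched with the textbook margin-of-error formula for a proportion. This is a minimal illustration that assumes simple random sampling and a 95% confidence level; the function name and the sample sizes are my own choices.

```python
import math

def margin_of_error(p, n, z=1.96):
    """Approximate margin of error for a proportion p estimated from a
    simple random sample of size n (z=1.96 corresponds to ~95% confidence)."""
    return z * math.sqrt(p * (1 - p) / n)

# Worst case p = 0.5: quadrupling the sample size halves the margin of error.
for n in (100, 400, 1600):
    print(n, margin_of_error(0.5, n))
```

Because the margin shrinks with the square root of n, each additional point of precision costs progressively more interviews.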

Q 4. What are the primary sources of non-sampling error?

Non-sampling error has many sources, making it a complex challenge. Let’s consider some key sources:

- Coverage Error: This arises when the sampling frame doesn’t perfectly match the target population. For example, a phone survey might exclude individuals without landlines or cell phones.

- Nonresponse Error: This happens when some selected individuals don’t participate in the survey. Those who don’t respond might differ systematically from those who do, biasing the results.

- Measurement Error: This involves inaccuracies in the questions, their wording, the way interviewers interact with respondents, and responses themselves. Ambiguous questions or leading questions can create significant biases.

- Processing Error: Mistakes during data entry, coding, or analysis can also introduce error. For example, incorrectly entering data or miscalculating summary statistics.

Q 5. How does coverage error affect survey results?

Coverage error significantly impacts survey results by creating a systematic bias. It leads to a sample that is not truly representative of the target population. For example, imagine a survey on the prevalence of a disease within a community that only surveys those visiting a specific clinic. The results would likely overrepresent those who are already sick, giving a skewed view of the overall situation.

The effect depends on the nature of the bias. If the excluded part of the population differs systematically from the included part regarding the variable of interest, the results will be distorted. This can lead to inaccurate estimates of population parameters and incorrect conclusions.

Q 6. Explain the concept of nonresponse bias and its impact.

Nonresponse bias occurs when the respondents differ systematically from the nonrespondents concerning the characteristics of interest. Those who choose to participate may have different opinions or behaviors than those who don’t. Imagine a survey about customer satisfaction with a product. If unhappy customers are less likely to complete the survey, the results will be overly optimistic, underestimating the true level of dissatisfaction.

The impact can be substantial. It leads to biased estimates, potentially undermining the entire study. The direction and magnitude of the bias depend on the nature of the difference between respondents and nonrespondents.
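For a simple mean, the size of the bias can be written down directly: it is the nonresponse rate multiplied by the gap between respondents and nonrespondents. The sketch below uses that standard deterministic formula; the satisfaction scores and the 40% response rate are hypothetical numbers, and in practice the nonrespondent mean is unknown and has to be approximated.

```python
def nonresponse_bias(response_rate, mean_respondents, mean_nonrespondents):
    """Bias of the respondent mean relative to the full-population mean:
    (1 - response rate) * (respondent mean - nonrespondent mean)."""
    return (1 - response_rate) * (mean_respondents - mean_nonrespondents)

# Hypothetical satisfaction scores on a 0-10 scale.
response_rate = 0.40
resp_mean, nonresp_mean = 8.0, 6.0

bias = nonresponse_bias(response_rate, resp_mean, nonresp_mean)
true_mean = response_rate * resp_mean + (1 - response_rate) * nonresp_mean
print(f"respondent mean={resp_mean}, true mean={true_mean:.1f}, bias={bias:.1f}")
```

The formula also shows why a high response rate alone is not a guarantee: if respondents and nonrespondents are alike, even a low response rate produces little bias.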

Q 7. Discuss different techniques for minimizing nonresponse bias.

Minimizing nonresponse bias requires a multifaceted approach:

- Maximize Response Rates: Employ multiple contact methods (mail, phone, email, online), send reminders, offer incentives, and keep the survey brief and easy to complete.

- Weighting Adjustments: Statistically adjust the data to account for nonresponse. This involves assigning different weights to the responses based on known characteristics of the respondents and nonrespondents. This requires good auxiliary information.

- Imputation Techniques: Estimate the missing data based on observed data from similar respondents. Different methods exist, each with its strengths and weaknesses.

- Nonresponse Analysis: Try to identify factors correlated with nonresponse, helping to understand and mitigate the potential bias. This might involve examining demographic or other characteristics of respondents and nonrespondents to uncover systematic differences.

- Careful Survey Design: A well-designed survey (clear and concise questions, engaging format, user-friendly interface) enhances response rates and reduces potential bias.

Q 8. What is measurement error, and how can it be reduced?

Measurement error is the difference between the value obtained in a survey and the true value. Think of it like trying to measure the length of a table with a slightly warped ruler – you’ll get a value, but it won’t be perfectly accurate. This error can stem from various sources, including the respondent’s understanding of the question (e.g., misinterpreting a scale), the interviewer’s influence (e.g., leading questions), or even the design of the questionnaire itself (e.g., ambiguous wording).

Reducing measurement error involves several strategies:

- Improving question design: Using clear, concise, and unambiguous language; pre-testing the questionnaire to identify and rectify potential problems; and employing appropriate question types for the specific data needed.

- Interviewer training: Providing extensive training to interviewers to ensure consistent administration, minimize bias, and handle respondent queries effectively. This includes role-playing and practice interviews.

- Using reliable measurement instruments: Choosing validated scales and instruments to ensure accuracy and consistency in measuring the constructs of interest. For example, utilizing established scales for measuring attitudes or behaviors.

- Multiple-item measures: Instead of relying on single items, use multiple questions to measure the same construct. This helps to average out random error and obtain a more reliable measure.

- Data validation and cleaning: Thoroughly reviewing and cleaning the data after collection to identify and address inconsistencies or outliers that might indicate measurement error.

For example, if measuring job satisfaction, instead of asking a single question, we could use several items across different facets of job satisfaction (workload, compensation, relationships with colleagues, etc.). This multi-faceted approach provides a more robust and less error-prone measure.

Q 9. Explain the concept of processing error and its potential consequences.

Processing error refers to mistakes made during the handling of survey data after it’s been collected. This includes data entry errors, coding errors, and errors in data cleaning or manipulation. Imagine a large spreadsheet of survey responses; any mistake in entering or managing that data is a processing error.

The consequences of processing errors can be significant, leading to:

- Biased results: Incorrect data entry or coding can systematically skew the results, leading to false conclusions. For example, consistently miscoding a variable could entirely change the relationship observed between variables in analysis.

- Invalid statistical analysis: Errors in data manipulation can lead to incorrect statistical analyses and inaccurate inferences. This can undermine the validity and credibility of the entire research study.

- Wasted resources: Processing errors often require additional time and resources to identify and correct, potentially delaying project timelines and increasing costs.

- Misinformed decision-making: Incorrect results based on processing errors can lead to flawed decisions based on faulty data.

To minimize processing error, employing rigorous data entry procedures (e.g., double data entry), using data validation checks, and documenting all data cleaning steps is crucial. Automated data processing tools with built-in error checks can also greatly improve accuracy.
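As a rough sketch of how double data entry can be checked, the snippet below compares two independent keyings of the same questionnaires and lists every disagreement for manual review. The record layout (dictionaries keyed by a record ID) and the field names are assumptions for illustration.

```python
def compare_entries(first_pass, second_pass):
    """Return (record_id, field, value_1, value_2) for every field where
    two independent keyings of the same record disagree."""
    mismatches = []
    for record_id in sorted(first_pass.keys() & second_pass.keys()):
        a, b = first_pass[record_id], second_pass[record_id]
        for field in sorted(a.keys() | b.keys()):
            if a.get(field) != b.get(field):
                mismatches.append((record_id, field, a.get(field), b.get(field)))
    return mismatches

# Hypothetical keyed data: the second operator transposed two digits.
first = {1: {"age": 34, "income": 52000}, 2: {"age": 51, "income": 61000}}
second = {1: {"age": 34, "income": 25000}, 2: {"age": 51, "income": 61000}}

for mismatch in compare_entries(first, second):
    print(mismatch)
```

Each flagged field would then be resolved against the original questionnaire rather than by picking one keying arbitrarily.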

Q 10. Describe different methods for weighting survey data.

Weighting adjusts the survey data to better reflect the characteristics of the population from which the sample was drawn. It’s used when the sample doesn’t perfectly represent the target population. Think of it as adjusting the ‘weight’ given to different responses to make the overall picture accurate.

Several methods exist for weighting survey data:

- Post-stratification weighting: This involves adjusting the weights of the sample to match the known population proportions on key demographic variables such as age, gender, or race. For example, if our sample under-represents women, we assign higher weights to female responses to correct for this imbalance.

- Raking (iterative proportional fitting): This method adjusts weights iteratively to match marginal distributions of multiple variables simultaneously. It’s more complex than post-stratification, but provides a more refined adjustment.

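- Calibration weighting: This adjusts the design weights as little as possible so that weighted totals of auxiliary variables match known population totals (from census data, for example). Post-stratification and raking are special cases, and when the auxiliary variables are related to the survey outcomes, calibration can reduce both bias and variance.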

- Propensity score weighting: Used to adjust for non-response bias, this assigns weights based on the probability of a respondent participating in the survey, thus giving more weight to responses from groups with lower participation rates.

The choice of weighting method depends on the specific characteristics of the sample, the available auxiliary data, and the goals of the survey.
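To show what raking does mechanically, here is a minimal pure-Python sketch of iterative proportional fitting on two variables. The ten sample records and the 50/50 population targets are invented, and production work would normally rely on a dedicated survey package rather than a hand-rolled loop.

```python
def rake(records, targets, n_iter=50):
    """Iteratively rescale weights so the weighted share of each category
    matches its target share, one variable at a time."""
    weights = [1.0] * len(records)
    for _ in range(n_iter):
        for var, shares in targets.items():
            total = sum(weights)
            current = {}  # current weighted total per category of `var`
            for w, rec in zip(weights, records):
                current[rec[var]] = current.get(rec[var], 0.0) + w
            weights = [w * shares[rec[var]] * total / current[rec[var]]
                       for w, rec in zip(weights, records)]
    return weights

def weighted_share(records, weights, var, category):
    """Weighted proportion of records whose `var` equals `category`."""
    hit = sum(w for w, rec in zip(weights, records) if rec[var] == category)
    return hit / sum(weights)

# Hypothetical sample: men and younger people are over-represented.
records = ([{"sex": "M", "age": "young"}] * 4 + [{"sex": "M", "age": "old"}] * 2 +
           [{"sex": "F", "age": "young"}] * 3 + [{"sex": "F", "age": "old"}] * 1)
targets = {"sex": {"M": 0.5, "F": 0.5}, "age": {"young": 0.5, "old": 0.5}}

weights = rake(records, targets)
print(weighted_share(records, weights, "sex", "F"))
print(weighted_share(records, weights, "age", "old"))
```

Note that raking only needs the marginal distributions, which is why it is useful when the joint distribution (for example, sex by age) is not available for the population.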

Q 11. How do you handle missing data in a survey dataset?

Missing data is a common challenge in surveys. It arises when respondents fail to answer certain questions or when data is lost during the collection or processing stages. Ignoring missing data can lead to biased results.

Several approaches exist for handling missing data:

- Listwise deletion: The simplest method, but often inefficient. It removes all cases with any missing data. This significantly reduces sample size, especially if many participants have missing responses.

- Pairwise deletion: Uses available data for each analysis. This method can lead to different sample sizes across analyses, complicating interpretation.

- Imputation: This involves replacing missing values with estimated values. Several methods exist including mean imputation, regression imputation, and multiple imputation. Multiple imputation offers more statistical rigor by creating several plausible imputed datasets, providing a more comprehensive picture and addressing uncertainty in the estimates.

- Model-based methods: These employ statistical models to predict missing values based on observed data and known relationships between variables.

The best approach depends on the nature and extent of missing data, the mechanism of missingness (missing completely at random, missing at random, missing not at random), and the analytical goals. Multiple imputation is often preferred for its robustness and handling of uncertainty associated with missing data.
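One reason single mean imputation is viewed with caution can be shown in a few lines: filling gaps with the observed mean keeps the mean unchanged but artificially shrinks the spread. The income values below (in thousands) are made up, and the helper name is mine.

```python
from statistics import mean, stdev

def mean_impute(values):
    """Replace missing entries (None) with the mean of the observed entries."""
    observed = [v for v in values if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in values]

# Hypothetical incomes with two item nonresponses.
incomes = [30, 35, None, 50, None, 65, 70]
observed = [v for v in incomes if v is not None]   # what listwise deletion keeps
imputed = mean_impute(incomes)

print("observed sd:", stdev(observed))
print("imputed sd: ", stdev(imputed))  # smaller: imputed values add no variability
```

Multiple imputation addresses this by drawing several plausible values per gap and combining the results, so that the extra uncertainty is carried into the standard errors.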

Q 12. What are the advantages and disadvantages of different sampling methods?

Sampling methods determine how we select respondents for our survey. Different methods have different strengths and weaknesses:

- Simple random sampling: Each member of the population has an equal chance of being selected. It’s straightforward but may not accurately represent subgroups within the population, particularly in heterogeneous populations.

- Stratified random sampling: The population is divided into strata (e.g., age groups), and random samples are drawn from each stratum. This ensures representation of all subgroups, but requires prior knowledge of the population strata.

- Cluster sampling: The population is divided into clusters (e.g., geographical areas), and some clusters are randomly selected. Then, all or a sample of individuals within selected clusters are surveyed. It’s cost-effective, but it usually has higher sampling error than a simple random sample of the same size, especially when individuals within a cluster resemble one another (each cluster is internally homogeneous).

- Convenience sampling: Selecting participants based on their availability or accessibility. This is easy and inexpensive, but results are highly susceptible to bias and cannot be generalized to the wider population.

The optimal sampling method depends on factors such as budget, time constraints, desired level of precision, and knowledge of the population characteristics. A well-chosen sampling method significantly impacts the accuracy and generalizability of survey results.
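As an illustration of stratified random sampling with proportional allocation, the sketch below draws the same fraction from every stratum so that each subgroup appears in the sample in proportion to its size. The population of 100 units, the region labels, and the 10% sampling fraction are hypothetical.

```python
import random

def stratified_sample(population, stratum_of, fraction, seed=0):
    """Draw a simple random sample of the given fraction within each stratum."""
    rng = random.Random(seed)  # fixed seed only to make the example repeatable
    strata = {}
    for unit in population:
        strata.setdefault(stratum_of(unit), []).append(unit)
    sample = []
    for name in sorted(strata):
        members = strata[name]
        k = max(1, round(fraction * len(members)))  # at least one unit per stratum
        sample.extend(rng.sample(members, k))
    return sample

# Hypothetical frame: 80 urban and 20 rural units, identified by (id, region).
population = [(i, "urban") for i in range(80)] + [(i, "rural") for i in range(80, 100)]
sample = stratified_sample(population, stratum_of=lambda unit: unit[1], fraction=0.1)

print(sum(1 for _, region in sample if region == "urban"), "urban units")
print(sum(1 for _, region in sample if region == "rural"), "rural units")
```

A simple random sample of 10 units could by chance contain no rural units at all; stratifying removes that possibility.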

Q 13. Explain the importance of rigorous survey design in minimizing TSE.

Rigorous survey design is paramount in minimizing Total Survey Error (TSE). TSE encompasses all errors that can affect the accuracy and reliability of survey data, from sampling error to measurement error to processing error. A poorly designed survey can amplify these errors.

A well-designed survey minimizes TSE through:

- Clear objectives and research questions: A focused design ensures that the questions directly address the study’s goals, preventing irrelevant or ambiguous questions which lead to measurement error.

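- Appropriate sampling frame and method: A frame that covers the target population and a probability-based selection method limit coverage and selection bias, while an adequate sample size keeps sampling error in check. The choice of sampling method must align with the research objectives and feasibility.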

- Effective questionnaire design: Well-crafted questions (clear, concise, unbiased) minimize measurement error. Pre-testing the questionnaire on a smaller sample helps to identify potential issues.

- Trained interviewers: Proper training minimizes interviewer bias and improves data quality. Standardisation of interview techniques reduces variability.

- Data processing and quality control: Implementing robust data entry and cleaning procedures minimizes processing errors. The use of quality control checks throughout the process helps to identify and correct errors.

A rigorous design reduces the cumulative effect of multiple error sources, increasing the overall validity and reliability of the survey results. Ignoring any step can amplify the errors and lead to inaccurate conclusions.

Q 14. Describe your experience with evaluating the quality of survey data.

In my experience, evaluating survey data quality involves a multi-faceted approach. It’s not merely about assessing the response rate; it’s about understanding the potential sources of error at each stage of the survey process.

My evaluation typically includes:

- Assessing the sampling frame and method: Determining the representativeness of the sample and evaluating any potential sampling bias. This may involve comparing sample characteristics with known population characteristics.

- Analyzing response rates and non-response bias: Investigating whether non-respondents differ systematically from respondents. This can involve comparing respondent characteristics with data from the sampling frame or other sources.

- Reviewing the questionnaire design and its administration: Evaluating the clarity and appropriateness of questions, and assessing the consistency of interview administration. This includes looking for evidence of interviewer bias.

- Examining data quality through data validation and cleaning: Identifying and correcting data entry errors, inconsistencies, and outliers. This might involve using automated checks and manual reviews.

- Assessing measurement reliability and validity: Evaluating the consistency and accuracy of the measurements using appropriate statistical techniques like Cronbach’s alpha for reliability and factor analysis for validity.

- Investigating potential processing errors: Checking the accuracy of data coding, weighting, and any data transformations.

By systematically examining these aspects, I can build a comprehensive understanding of the strengths and limitations of the survey data and appropriately assess its overall quality and reliability. This understanding then informs how the data should be analyzed and interpreted, ensuring that conclusions are valid and meaningful.

Q 15. How do you identify and address outliers in survey data?

Identifying and addressing outliers in survey data is crucial for ensuring the accuracy and reliability of your results. Outliers are data points that significantly deviate from the overall pattern. They can skew analyses and lead to misleading conclusions. Think of it like finding a single skyscraper amidst a collection of bungalows – it’s noticeably different and might distort your understanding of the overall landscape.

My approach involves a multi-step process:

- Visual Inspection: I begin by creating histograms, box plots, and scatter plots to visually identify potential outliers. This provides a quick overview of the data distribution.

- Statistical Methods: I employ statistical methods like the Z-score or the Interquartile Range (IQR) to quantify how far a data point lies from the mean or median. Data points exceeding a predefined threshold (e.g., a Z-score above 3 or below -3) are flagged as potential outliers.

- Investigate the Cause: Once identified, I don’t automatically discard outliers. Instead, I investigate the cause. Was there a data entry error? Did the respondent misunderstand the question? Understanding the cause helps determine the appropriate action.

- Handling Outliers: The best approach depends on the cause and the impact on the analysis. Options include:

- Correction: If an error is identified, correcting the data is ideal.

- Removal: If the outlier is clearly erroneous and cannot be corrected, removal might be justified. However, this should be documented and justified.

- Transformation: Transforming the data (e.g., using a logarithmic transformation) can sometimes reduce the impact of outliers.

- Robust Methods: Employing robust statistical methods (less sensitive to outliers) such as median instead of mean, is beneficial.

For example, in a salary survey, an outlier might be a CEO’s salary significantly higher than others. While it is a valid data point, it might skew the average salary significantly. Depending on the research question, it might be appropriate to report both the overall average and the average excluding this data point, providing clear context.
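The two statistical rules mentioned above can be sketched with Python's standard library. The salary figures (in thousands) are invented, and the thresholds (|z| > 3 and 1.5 times the IQR) are the conventional defaults rather than fixed rules.

```python
import statistics

def zscore_outliers(values, threshold=3.0):
    """Flag values whose distance from the mean exceeds `threshold` standard deviations."""
    mu = statistics.mean(values)
    sd = statistics.stdev(values)
    return [x for x in values if abs(x - mu) / sd > threshold]

def iqr_outliers(values, k=1.5):
    """Flag values lying more than k * IQR below Q1 or above Q3."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [x for x in values if x < low or x > high]

# Hypothetical salaries in thousands, with one CEO-level value.
salaries = [42, 45, 47, 50, 52, 55, 58, 60, 950]

print("z-score rule:", zscore_outliers(salaries))
print("IQR rule:    ", iqr_outliers(salaries))
```

In this small sample only the IQR rule flags the 950 value: the extreme salary inflates the standard deviation so much that its own z-score stays below 3. That masking effect is one reason to pair numeric rules with visual inspection.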

Q 16. What statistical methods are commonly used to analyze survey data and account for TSE?

Analyzing survey data while accounting for Total Survey Error (TSE) requires a multifaceted approach, leveraging both descriptive and inferential statistics. TSE encompasses sampling error, coverage error, nonresponse error, measurement error, and processing error, all of which affect the accuracy and reliability of the results.

Commonly used methods include:

- Descriptive Statistics: Frequency distributions, means, medians, standard deviations, and cross-tabulations provide a preliminary understanding of the data. These help in identifying potential biases or patterns linked to TSE components.

- Weighting: To mitigate nonresponse bias and coverage error, we employ weighting techniques to adjust the sample to better represent the population of interest. Post-stratification weighting, for example, adjusts for known differences between the sample and the population.

- Regression Analysis: To examine the relationship between variables and account for confounding factors, regression analysis is powerful. This can help to tease out the impact of different error sources.

- Imputation: For item-level missing data (item nonresponse), multiple imputation creates several plausible values for each missing entry, reducing the impact on analysis while reflecting the uncertainty of the imputed values.

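- Variance Estimation: It’s critical to calculate variance in a way that accounts for the complexities of the survey design, such as clustering, stratification, and weighting. This doesn’t make the estimates more precise, but it ensures that standard errors and confidence intervals honestly reflect their precision.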

For instance, if analyzing data from a phone survey, we might use weighting to adjust for differences in phone ownership across age groups to reduce coverage error and account for underrepresentation.
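To make the variance estimation point more concrete, here is a rough sketch based on Kish's design effect approximation for cluster samples with equal cluster sizes, deff = 1 + (m - 1) * rho, where m is the cluster size and rho the intraclass correlation. The sample size, cluster size, and correlation below are made-up values, and real analyses would estimate variances with survey software rather than this shortcut.

```python
def design_effect(cluster_size, icc):
    """Kish approximation: variance inflation relative to simple random sampling."""
    return 1 + (cluster_size - 1) * icc

def effective_sample_size(n, cluster_size, icc):
    """Size of the simple random sample that would give the same precision."""
    return n / design_effect(cluster_size, icc)

# Hypothetical design: 1,000 interviews, 20 per cluster, modest within-cluster similarity.
deff = design_effect(cluster_size=20, icc=0.05)
n_eff = effective_sample_size(1000, cluster_size=20, icc=0.05)
print(f"design effect={deff:.2f}, effective sample size={n_eff:.0f}")
```

Treating these 1,000 clustered interviews as if they were a simple random sample would understate the standard errors considerably.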

Q 17. How would you assess the validity and reliability of a survey instrument?

Assessing the validity and reliability of a survey instrument is fundamental to ensuring the quality of your data. Validity refers to whether the survey measures what it intends to measure, while reliability refers to the consistency of the measurements.

My approach encompasses several strategies:

- Content Validity: I assess whether the items in the survey comprehensively cover the construct of interest. Expert review and pilot testing are crucial steps here.

- Criterion Validity: I evaluate the survey’s ability to predict or correlate with external criteria. For example, does a job satisfaction survey correlate with employee turnover rates?

- Construct Validity: This examines how well the survey aligns with theoretical expectations. Factor analysis is often employed to explore underlying dimensions of the data.

- Test-Retest Reliability: Administering the survey to the same group at two different time points allows for assessing the consistency of responses over time. The correlation between the two sets of scores measures the reliability.

- Internal Consistency Reliability: Cronbach’s alpha is a common measure assessing the internal consistency of items within a scale. A high alpha (0.7 or above is a common rule of thumb) indicates that the items are closely related, which is consistent with them measuring the same underlying construct.

For example, before deploying a new customer satisfaction survey, we’d conduct pilot testing and get expert feedback on the questions’ clarity and relevance. We’d also check the internal consistency to ensure the items within a satisfaction scale are reliably measuring the same thing.
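The internal consistency check can be sketched with the standard formula for Cronbach’s alpha, alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores). The three items and five respondents below are invented pilot data.

```python
from statistics import variance

def cronbach_alpha(items):
    """items: one list of scores per item, aligned by respondent."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]      # total score per respondent
    item_variance_sum = sum(variance(scores) for scores in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))

# Hypothetical pilot data: three satisfaction items rated 1-5 by five respondents.
item_1 = [4, 5, 3, 2, 4]
item_2 = [4, 4, 3, 2, 5]
item_3 = [5, 5, 2, 3, 4]

alpha = cronbach_alpha([item_1, item_2, item_3])
print(f"Cronbach's alpha = {alpha:.3f}")
```

With only five respondents this number is purely illustrative; a real pilot would need a much larger sample for alpha to be stable.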

Q 18. Describe your experience with using software for survey data analysis.

I have extensive experience using various software packages for survey data analysis. My proficiency includes:

- R: I utilize R’s powerful statistical capabilities, especially its packages like survey and mice for handling complex survey data and implementing weighting and imputation techniques. R allows for flexible and customized analyses.

- SAS: SAS is a robust platform ideal for handling large datasets and complex survey designs. Its procedures like PROC SURVEYMEANS and PROC REG are invaluable for weighted analyses and regression modeling.

- SPSS: SPSS offers a user-friendly interface, facilitating basic to advanced statistical analyses. Its weighting and reliability analysis tools are frequently used in my work.

- Stata: Stata’s powerful command language and extensive statistical capabilities make it suitable for handling complex survey designs and advanced modeling techniques.

I’m adept at choosing the appropriate software based on the project’s scope, data size, and analytical requirements. I am also comfortable writing custom scripts for automation and data manipulation.

Q 19. How do you interpret confidence intervals in the context of survey results?

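Confidence intervals provide a range of values within which we are confident (typically 95%) that the true population parameter lies. More precisely, if the same survey were repeated many times, about 95% of the intervals constructed this way would contain the true population proportion or mean. Keep in mind that a confidence interval reflects sampling error only; it says nothing about coverage, nonresponse, or measurement error.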

For example, if a survey finds that 60% of respondents support a particular policy, with a 95% confidence interval of 55% to 65%, this means we’re 95% confident that the true proportion of the population supporting the policy lies between 55% and 65%. The wider the interval, the less precise our estimate.

The width of the confidence interval is influenced by several factors: sample size (larger samples yield narrower intervals), variability in the data (higher variability leads to wider intervals), and the chosen confidence level (higher confidence levels lead to wider intervals). Understanding and interpreting confidence intervals is essential for accurately communicating the precision and uncertainty associated with survey results.
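As a quick worked example, the normal-approximation (Wald) interval for a proportion can be computed as follows. It assumes simple random sampling, and the sample size of 369 is a hypothetical value chosen because it roughly reproduces the 55% to 65% interval in the example above.

```python
import math

def proportion_ci(p_hat, n, z=1.96):
    """Normal-approximation confidence interval for a proportion under
    simple random sampling (z=1.96 corresponds to ~95% confidence)."""
    se = math.sqrt(p_hat * (1 - p_hat) / n)
    return p_hat - z * se, p_hat + z * se

low, high = proportion_ci(0.60, 369)
print(f"95% CI: {low:.3f} to {high:.3f}")

# Same estimate, four times the sample: the interval is half as wide.
low_big, high_big = proportion_ci(0.60, 4 * 369)
print(f"95% CI: {low_big:.3f} to {high_big:.3f}")
```

For complex designs with weighting or clustering, the standard error would need to come from design-based variance estimation instead of this simple formula.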

Q 20. Explain the role of quality control measures throughout the survey process.

Quality control measures are woven throughout the entire survey process, from design to analysis. Neglecting them compromises data quality and validity. Think of it like baking a cake – you need the right ingredients and precise steps to achieve a delicious result.

Key quality control steps include:

- Questionnaire Design: Careful consideration of question wording, response options, and question order is paramount. Pilot testing and cognitive interviews help refine the questionnaire.

- Sampling: Selecting a representative sample is vital. The sampling method should be carefully documented and justified.

- Data Collection: Robust data collection protocols, including training interviewers, monitoring data entry, and employing quality checks during data entry are essential to minimize errors.

- Data Cleaning: Identifying and addressing missing data, outliers, and inconsistencies are crucial steps. Data cleaning often includes consistency checks, range checks, and plausibility checks.

- Data Analysis: Applying appropriate statistical methods, accounting for survey design features, and carefully interpreting the results are essential to draw meaningful conclusions.

For instance, during data entry, we might program range checks to flag implausible ages (e.g., ages below 0 or above 120) and employ consistency checks to identify contradictory responses. This proactive approach ensures data quality.
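A minimal sketch of such checks might look like this. The field names ("age", "years_employed") and the specific consistency rule are assumptions for illustration; a real project would derive the rules from the questionnaire's codebook.

```python
def validate(record):
    """Return a list of problems found in one survey record."""
    problems = []
    age = record.get("age")
    years = record.get("years_employed")

    # Range check: flag missing or implausible ages.
    if age is None or not (0 <= age <= 120):
        problems.append("age out of range")

    # Consistency check: nobody can have worked longer than they have lived.
    elif years is not None and years > age:
        problems.append("years_employed exceeds age")

    return problems

# Hypothetical records keyed by respondent ID.
records = {
    101: {"age": 34, "years_employed": 10},
    102: {"age": 150, "years_employed": 5},
    103: {"age": 25, "years_employed": 40},
}
for respondent_id, record in records.items():
    print(respondent_id, validate(record))
```

Flagged records are best reviewed rather than silently altered, so that each correction can be documented.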

Q 21. Discuss the ethical considerations in conducting surveys.

Ethical considerations are paramount in conducting surveys. Maintaining ethical standards ensures the well-being of participants, protects their privacy, and promotes trust in research. My commitment to ethical research includes:

- Informed Consent: Participants should be fully informed about the survey’s purpose, procedures, risks, and benefits before participation. They must have the right to refuse or withdraw at any time.

- Confidentiality and Anonymity: Data must be handled confidentially, ensuring that individual responses cannot be linked to participants. Anonymization techniques are often employed.

- Data Security: Appropriate measures must be in place to protect the data from unauthorized access or disclosure.

- Transparency: The survey’s methodology, including sampling strategy, data collection methods, and analysis techniques, should be clearly documented and reported transparently.

- Avoiding Bias: Carefully designing questions to avoid bias and ensure that the survey does not mislead or coerce participants is essential.

- Avoiding harm: Research should not cause any physical, psychological, or emotional harm to participants.

For example, in a sensitive topic survey, we’d guarantee complete anonymity and inform participants that their responses will be aggregated and reported only at the group level to minimize the risk of identification.

Q 22. How would you approach the problem of low response rates in a survey?

Low response rates are a significant threat to survey validity, raising the risk of nonresponse bias and limiting the generalizability of findings. Addressing this requires a multi-pronged approach focusing on minimizing nonresponse error within the TSE framework.

- Incentives: Offering small gifts or rewards can significantly boost participation. In one study I conducted on consumer preferences, offering a $5 gift card increased response rates by 20%.

- Pre-notification: Contacting potential respondents beforehand, explaining the survey’s purpose and importance, can foster trust and willingness to participate.

- Survey Design: A well-designed survey is crucial. This includes keeping it concise, using clear and simple language, and ensuring the questionnaire’s visual appeal. A cluttered or lengthy survey discourages completion.

- Multiple Modes: Offering various modes (online, phone, mail) caters to diverse preferences and can reach individuals who might be inaccessible through a single mode.

- Follow-up: Gentle reminders and follow-up attempts are vital. A personalized email expressing appreciation and reiterating the importance of their contribution can be very effective.

- Respondent Burden: Minimizing the time commitment is critical. A short survey is more likely to be completed than a lengthy one.

It’s important to analyze non-respondents to understand potential bias. We can use auxiliary data or conduct a small qualitative study to probe reasons for non-participation and adjust future surveys accordingly.

Q 23. Describe your experience with post-stratification techniques.

Post-stratification is a powerful technique to adjust for known imbalances in the achieved sample. It involves weighting the responses based on known population characteristics to reduce bias from coverage and nonresponse and improve the representativeness of the sample.

For example, if our sample under-represents a particular demographic group (say, older adults), we increase the weights of responses from older adults and decrease those of younger respondents so that the final estimates reflect the true population proportions of age groups. This requires having access to reliable population data (census data, for instance) to determine the appropriate weighting factors. I’ve utilized post-stratification in numerous surveys, including a recent study on healthcare access where we corrected for age and geographic imbalances in the sample. The process typically involves calculating weights using statistical software (like R or SAS) and applying these weights to the analysis to obtain adjusted estimates.

A crucial aspect is ensuring the chosen auxiliary variables are truly relevant to the survey outcomes. Incorrectly applied post-stratification could introduce further biases and inaccuracies, thereby negating its advantages.
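The weighting step described above can be sketched in a few lines of Python. This is a minimal illustration, not a production workflow: the function names, the two age groups, the 60/40 population split, and the response values are all hypothetical. Each weight is simply the population share of a stratum divided by its share in the sample.

```python
from collections import Counter

def poststratification_weights(sample_strata, population_shares):
    """Return one weight per respondent so weighted stratum shares match the population."""
    n = len(sample_strata)
    counts = Counter(sample_strata)
    weights = []
    for stratum in sample_strata:
        sample_share = counts[stratum] / n
        # Under-represented strata get weights above 1, over-represented below 1
        weights.append(population_shares[stratum] / sample_share)
    return weights

def weighted_mean(values, weights):
    """Weighted average of the responses."""
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)

# Hypothetical sample: 8 younger and 2 older respondents; assumed population split is 60/40
strata = ["younger"] * 8 + ["older"] * 2
population_shares = {"younger": 0.6, "older": 0.4}
has_access = [1, 1, 1, 1, 1, 1, 0, 0, 0, 0]  # 1 = reports good healthcare access

weights = poststratification_weights(strata, population_shares)
unweighted = sum(has_access) / len(has_access)
adjusted = weighted_mean(has_access, weights)
print(f"unweighted: {unweighted:.2f}, post-stratified: {adjusted:.2f}")
```

In this made-up example the older respondents all report poor access, so up-weighting them pulls the estimate down. Real applications usually also check for very small cells, since a stratum with only a handful of respondents produces large, unstable weights.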

Q 24. How do you communicate complex survey results to a non-technical audience?

Communicating survey results to a non-technical audience means simplifying statistical concepts without compromising accuracy.

Visualizations: Charts, graphs, and infographics are extremely effective. A simple bar chart illustrating key findings is far more impactful than a table of raw data.

Plain Language: Avoid jargon. Use simple, everyday language to explain key concepts and findings. For instance, instead of saying ‘the coefficient of determination was 0.8,’ say, ‘80% of the variation in [dependent variable] can be explained by [independent variable].’

Storytelling: Presenting results as a narrative, focusing on the implications and consequences of the findings, can greatly increase engagement and understanding. This approach contextualizes results for a non-technical audience by focusing on practical applications.

Key Messages: Identify 2-3 key takeaways that are relevant and easily understood, emphasizing the main conclusions.

Interactive Elements: Depending on the setting (presentation or report), incorporating interactive elements or demonstrations can greatly enhance comprehension.

For instance, when presenting results from a survey on public transportation to city council members, I presented key findings using visually appealing charts highlighting areas needing improvement, along with clear policy recommendations. This made the complex data digestible and actionable for the decision-makers.

Q 25. What are some of the challenges you have encountered in working with TSE and how did you overcome them?

One of the biggest challenges in TSE is dealing with measurement error, particularly in questions on sensitive topics. For example, in a survey on illicit drug use, social desirability bias can lead to underreporting. To mitigate this, I’ve used randomized response techniques, which mask each individual’s answer so that respondents can answer honestly without revealing their own status.
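To make the randomized response idea concrete, here is a minimal sketch of one classic variant, Warner’s design, which is an assumption on my part since the answer above does not say which variant was used. Each respondent privately answers the sensitive statement with probability `p` and its negation otherwise, so the interviewer never knows which statement a given "yes" refers to. The function name and the numbers are hypothetical.

```python
import math

def warner_estimate(yes_count, n, p):
    """Estimate the sensitive-trait prevalence under Warner's randomized response design.

    p is the probability that the randomizing device selects the sensitive statement
    ("I have the trait"); with probability 1 - p it selects the negation.
    """
    if p == 0.5:
        raise ValueError("p must differ from 0.5, otherwise prevalence is not identifiable")
    lam = yes_count / n  # observed share of 'yes' answers
    pi_hat = (lam - (1 - p)) / (2 * p - 1)
    # Standard error reflects the extra noise added by the randomization
    se = math.sqrt(lam * (1 - lam) / (n * (2 * p - 1) ** 2))
    return pi_hat, se

# Hypothetical data: 380 'yes' answers out of 1000, device selects the sensitive statement 70% of the time
pi_hat, se = warner_estimate(yes_count=380, n=1000, p=0.7)
print(f"estimated prevalence: {pi_hat:.3f} (SE {se:.3f})")
```

The price of the privacy protection is visible in the standard error: the closer `p` is to 0.5, the more private the answers and the noisier the estimate.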

Another challenge is handling missing data. Simple imputation methods, such as mean substitution, can bias estimates and understate their variance. Therefore, we need to carefully consider the nature of the missingness (missing completely at random, missing at random, or missing not at random) and use appropriate techniques like multiple imputation to minimize bias.
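The final step of multiple imputation, combining the results from several imputed datasets, is usually done with Rubin’s rules. The sketch below assumes the imputation and per-dataset analysis have already been run; the function name and the three estimates are hypothetical.

```python
from statistics import mean, variance

def pool_rubin(estimates, variances):
    """Pool point estimates and their variances from m imputed datasets (Rubin's rules)."""
    m = len(estimates)
    pooled_estimate = mean(estimates)
    within = mean(variances)       # average sampling variance within each dataset
    between = variance(estimates)  # variance across datasets (divisor m - 1)
    total_variance = within + (1 + 1 / m) * between
    return pooled_estimate, total_variance

# Hypothetical results from m = 3 imputed datasets
estimates = [10.0, 12.0, 11.0]
variances = [1.0, 1.0, 1.0]

pooled, total_var = pool_rubin(estimates, variances)
print(f"pooled estimate: {pooled:.2f}, total variance: {total_var:.3f}")
```

The between-imputation term is what single imputation leaves out: it carries the uncertainty about the missing values themselves into the final variance.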

Finally, dealing with non-response bias is an ongoing struggle. We have to carefully compare the characteristics of respondents and non-respondents, using techniques like propensity score weighting to correct for differences where possible. It’s a constant learning process of refining sampling methods, survey design, and data analysis to minimize TSE’s influence.
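As a rough illustration of the nonresponse adjustment mentioned above, the sketch below uses a weighting-class adjustment, a simplified stand-in for propensity score weighting in which the response propensity is estimated by the observed response rate within each cell. A full propensity model would typically use logistic regression on several auxiliary variables. The function name, the urban/rural cells, and the response pattern are hypothetical.

```python
from collections import defaultdict

def nonresponse_adjustments(sampled_cells, responded):
    """Return, per cell, the factor 1 / (response rate) applied to respondents' weights."""
    sampled = defaultdict(int)
    answered = defaultdict(int)
    for cell, did_respond in zip(sampled_cells, responded):
        sampled[cell] += 1
        if did_respond:
            answered[cell] += 1
    adjustments = {}
    for cell, n_sampled in sampled.items():
        if answered[cell] == 0:
            raise ValueError(f"no respondents in cell {cell!r}; collapse it with another cell")
        # Estimated response propensity is answered / sampled; the weight is its inverse
        adjustments[cell] = n_sampled / answered[cell]
    return adjustments

# Hypothetical sample of 20 units with known auxiliary data (urban/rural) for everyone
cells = ["urban"] * 10 + ["rural"] * 10
responded = [True] * 8 + [False] * 2 + [True] * 4 + [False] * 6

adjustments = nonresponse_adjustments(cells, responded)
print(adjustments)
```

Rural respondents, who answered less often, stand in for more nonrespondents and so receive the larger adjustment. This only removes bias to the extent that respondents and nonrespondents within a cell are similar on the survey outcomes.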

Q 26. Describe your experience with different types of survey modes (e.g., online, phone, in-person).

I have extensive experience with various survey modes. Online surveys offer cost-effectiveness and ease of data collection but can suffer from coverage bias because of the digital divide. Phone surveys provide better control and allow for clarification but are expensive and time-consuming. In-person surveys provide the highest response rates and allow for complex questionnaires, but they’re the most resource-intensive.

The choice of mode depends on the target population, budget, time constraints, and the complexity of the questionnaire. For instance, in a study on elderly individuals’ health, in-person interviews might be preferred to ensure accurate data collection and address potential comprehension issues. For large-scale studies targeting a diverse population, a mixed-mode approach combining online and phone surveys can be optimal.

Q 27. What are some key considerations for designing a survey for a specific target population?

Designing a survey for a specific target population requires careful consideration of several factors within the TSE framework:

Language and Culture: The questionnaire must be culturally appropriate and use language that the target population understands. Translating a survey is not enough; cultural adaptation is crucial for accurate interpretation.

Literacy and Cognitive Ability: Question wording and complexity should match the target population’s literacy and cognitive levels. Simple, unambiguous language is essential, avoiding technical terms and complex sentence structures.

Accessibility: The survey should be accessible to all members of the target population, including those with disabilities. This might involve providing audio versions, larger fonts, or other accommodations.

Sampling Frame: It is vital to have an accurate and representative sampling frame for the target population. This determines how the sample is drawn and influences the survey’s generalizability.

Pilot Testing: Before deploying the survey, pilot testing on a smaller subset of the target population helps identify any issues with comprehension, wording, or question flow. This iterative approach ensures a smooth and accurate data collection process.

For instance, when designing a survey for a specific ethnic minority group, I consulted community leaders and representatives to ensure that the survey questions were culturally sensitive and appropriate. Because translating the questionnaire was not enough, we also adapted it culturally, which improved the response rate and the validity of the data.

Key Topics to Learn for Total Survey Error (TSE) Framework Interview

- Sampling Error: Understanding its sources (e.g., sample size, sample design, chance variation between samples) and methods for reducing it (e.g., larger samples, stratified sampling).

- Measurement Error: Exploring different types (e.g., systematic, random), analyzing the impact of question wording, and identifying strategies for improving questionnaire design.

- Processing Error: Identifying potential errors during data entry, coding, and cleaning. Discuss quality control techniques and data validation strategies.

- Non-Sampling Error: Analyzing the influence of factors like coverage gaps in the sampling frame, nonresponse, interviewer effects, and respondent bias on overall survey accuracy. Learn about mitigation strategies.

- Total Survey Error Estimation: Understanding how to combine and quantify the various error components to obtain an overall estimate of survey accuracy.

- Practical Application: Consider real-world examples of how TSE impacts survey results and how to apply the framework in specific survey contexts (e.g., political polling, market research).

- Problem-Solving: Develop your ability to diagnose potential sources of error in survey designs and data, and propose solutions to enhance data quality.

- Advanced Topics (for Senior Roles): Explore advanced statistical methods for analyzing TSE, such as variance component estimation and design effects.
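Two of the quantities in this list can be written down compactly. A common way to summarize total survey error for an estimate is its mean squared error (squared bias plus variance), and a common approximation of the design effect from unequal weighting is Kish’s formula. The sketch below is illustrative only: the function names, the bias and variance figures, and the weights are hypothetical.

```python
def mean_squared_error(bias, variance):
    """Total error of an estimate: systematic part (bias squared) plus random part (variance)."""
    return bias ** 2 + variance

def kish_design_effect(weights):
    """Kish's approximate design effect due to unequal weighting: n * sum(w^2) / (sum(w))^2."""
    n = len(weights)
    return n * sum(w * w for w in weights) / sum(weights) ** 2

def effective_sample_size(weights):
    """Sample size of an equally weighted sample with the same precision."""
    return sum(weights) ** 2 / sum(w * w for w in weights)

# Hypothetical estimate with a bias of 2 percentage points and variance of 0.0004
mse = mean_squared_error(bias=0.02, variance=0.0004)

# Hypothetical weights: 8 respondents weighted 0.75 and 2 weighted 2.0
weights = [0.75] * 8 + [2.0] * 2
deff = kish_design_effect(weights)
n_eff = effective_sample_size(weights)
print(f"MSE: {mse:.4f}, design effect: {deff:.2f}, effective n: {n_eff:.1f}")
```

Here the weighting that corrects bias also costs precision: ten interviews carry the information of about eight equally weighted ones, which is the kind of bias-variance trade-off the TSE framework asks you to weigh.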

Next Steps

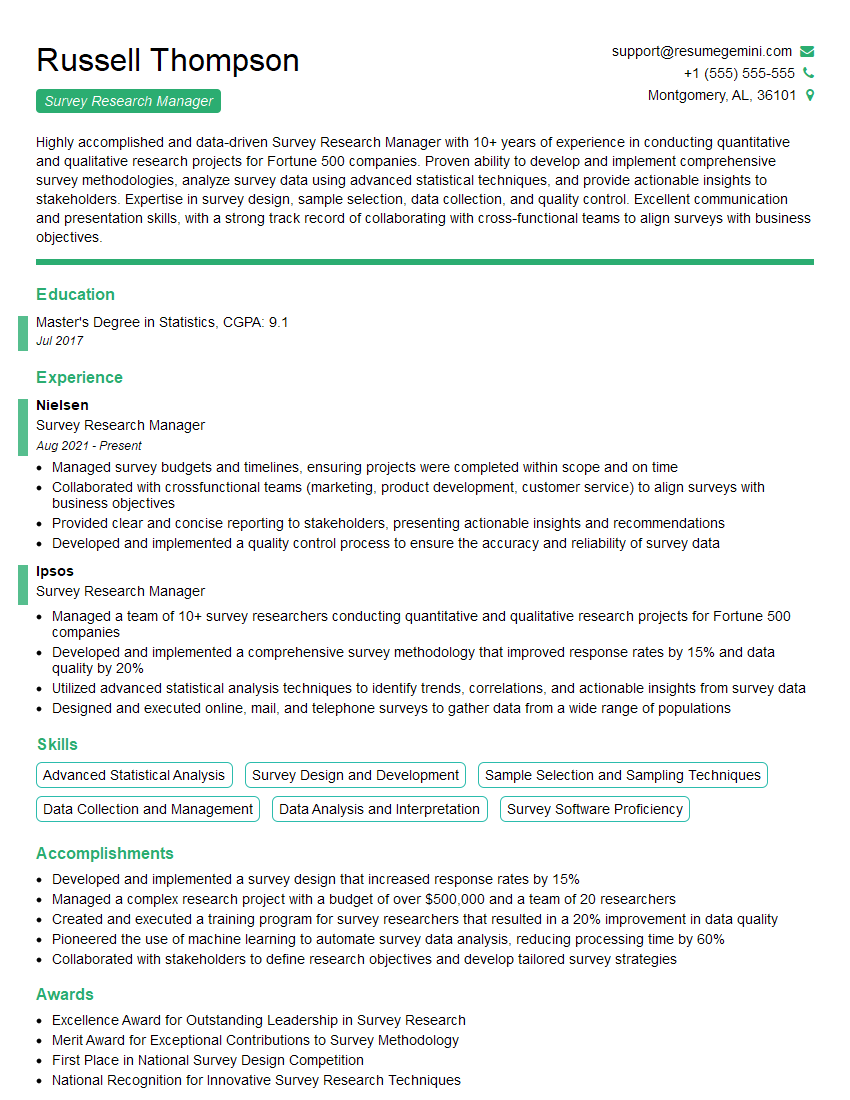

Mastering the Total Survey Error (TSE) Framework is crucial for advancing your career in research, data analysis, and related fields. A strong understanding of TSE demonstrates your commitment to data quality and rigorous methodology, making you a highly valuable asset to any organization. To maximize your job prospects, create an ATS-friendly resume that highlights your TSE expertise. ResumeGemini is a trusted resource that can help you craft a compelling and effective resume. Examples of resumes tailored to showcasing Total Survey Error (TSE) Framework proficiency are available to further assist you in your job search.
