The thought of an interview can be nerve-wracking, but the right preparation can make all the difference. Explore this comprehensive guide to Knowledge of Artificial Intelligence (AI) interview questions and gain the confidence you need to showcase your abilities and secure the role.

Questions Asked in Knowledge of Artificial Intelligence (AI) Interview

Q 1. Explain the difference between supervised, unsupervised, and reinforcement learning.

The core difference between supervised, unsupervised, and reinforcement learning lies in how the algorithms learn from data. Think of it like teaching a dog:

- Supervised Learning: This is like explicitly showing the dog pictures of cats and dogs and telling it which is which. The algorithm learns from labeled data, where each data point is tagged with the correct answer. For example, in image classification, each image is labeled as ‘cat’ or ‘dog’. The model learns to map inputs (images) to outputs (labels). Common algorithms include linear regression, logistic regression, and support vector machines.

- Unsupervised Learning: Here, you just show the dog a bunch of pictures of cats and dogs and let it figure out the patterns on its own. The algorithm learns from unlabeled data, identifying structures and relationships within the data without explicit guidance. Clustering (grouping similar data points together) and dimensionality reduction (reducing the number of variables while retaining important information) are examples. K-means clustering and principal component analysis are common techniques.

- Reinforcement Learning: This is like teaching the dog tricks using rewards and punishments. The algorithm learns through trial and error by interacting with an environment. It receives rewards for good actions and penalties for bad actions, learning to maximize its cumulative reward. Games like chess or Go, and robotics applications, are prime examples. Q-learning and Deep Q-Networks are popular algorithms.

In essence, supervised learning uses labeled data for direct instruction, unsupervised learning explores unlabeled data to discover patterns, and reinforcement learning learns through interaction and feedback.

Q 2. What are the common challenges in implementing AI solutions?

Implementing AI solutions presents several challenges:

- Data Quality and Availability: AI models thrive on high-quality, relevant data. Insufficient, noisy, or biased data can lead to inaccurate or unfair results. Gathering and cleaning large datasets can be time-consuming and expensive.

- Model Interpretability and Explainability: Understanding *why* a model makes a particular prediction is crucial, especially in high-stakes applications like healthcare or finance. Many complex AI models (like deep neural networks) are ‘black boxes,’ making interpretation difficult.

- Computational Resources: Training sophisticated AI models, especially deep learning models, requires significant computational power and energy, which can be a barrier for smaller organizations.

- Ethical Concerns and Bias: AI systems can inherit and amplify biases present in the data they are trained on, leading to discriminatory outcomes. Addressing bias and ensuring fairness is a critical ethical challenge.

- Deployment and Maintenance: Integrating AI models into existing systems and maintaining them over time requires specialized expertise and infrastructure.

Overcoming these challenges requires careful planning, robust data management strategies, ethical considerations throughout the development lifecycle, and ongoing monitoring and evaluation.

Q 3. Describe different types of neural networks and their applications.

Neural networks come in various types, each suited to different tasks:

- Feedforward Neural Networks (FNNs): The simplest type, where information flows in one direction, from input to output, without loops. Used for tasks like classification and regression.

- Convolutional Neural Networks (CNNs): Excellent for processing grid-like data such as images and videos. Their convolutional layers efficiently extract features from the data. Used extensively in image recognition, object detection, and image segmentation.

- Recurrent Neural Networks (RNNs): Designed to handle sequential data like text and time series. They have loops, allowing information to persist across time steps. Used in natural language processing, speech recognition, and machine translation. Long Short-Term Memory (LSTM) and Gated Recurrent Units (GRUs) are advanced RNN architectures that mitigate the vanishing gradient problem.

- Autoencoders: Used for unsupervised learning, particularly dimensionality reduction and feature extraction. They learn a compressed representation of the input data.

- Generative Adversarial Networks (GANs): Consist of two networks, a generator and a discriminator, that compete against each other. The generator creates synthetic data, while the discriminator tries to distinguish between real and synthetic data. Used for generating realistic images, videos, and other data types.

The choice of neural network depends heavily on the specific problem and the nature of the data. For example, a CNN would be a suitable choice for image classification, while an RNN would be more appropriate for natural language processing.

Q 4. How do you handle imbalanced datasets in machine learning?

Imbalanced datasets, where one class has significantly more samples than others, are a common problem in machine learning. This can lead to models that perform poorly on the minority class. Here’s how to handle them:

- Resampling Techniques:

  - Oversampling: Increase the number of samples in the minority class by duplicating existing samples or generating synthetic samples (SMOTE – Synthetic Minority Over-sampling Technique).

  - Undersampling: Decrease the number of samples in the majority class by randomly removing samples.

- Cost-Sensitive Learning: Assign higher misclassification costs to the minority class. This encourages the model to pay more attention to the minority class during training. This can be done by adjusting the class weights in the model’s loss function.

- Ensemble Methods: Use ensemble methods like bagging or boosting, which can improve the model’s performance on imbalanced datasets by combining the predictions of multiple models.

- Anomaly Detection Techniques: If the minority class represents anomalies or outliers, anomaly detection techniques can be more appropriate than traditional classification methods.

The best approach often involves a combination of these techniques, chosen based on the specific dataset and the problem.
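As a rough illustration of two of these ideas, the sketch below implements random oversampling and a simple class-weight heuristic in plain Python. The function names and the toy data are made up for this example, and the weight formula (n_samples / (n_classes * class_count)) is one common "balanced" heuristic rather than the only option.

```python
import random
from collections import Counter


def random_oversample(features, labels, seed=0):
    """Duplicate minority-class rows at random until every class matches the largest one."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())
    out_x, out_y = list(features), list(labels)
    for cls, count in counts.items():
        pool = [x for x, y in zip(features, labels) if y == cls]
        for _ in range(target - count):
            out_x.append(rng.choice(pool))
            out_y.append(cls)
    return out_x, out_y


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * count) for cls, count in counts.items()}


# Toy data (hypothetical): 8 majority samples (class 0) and 2 minority samples (class 1)
X = [[i] for i in range(10)]
y = [0] * 8 + [1] * 2

X_res, y_res = random_oversample(X, y)
weights = balanced_class_weights(y)

print(Counter(y_res))  # both classes now have 8 samples
print(weights)         # {0: 0.625, 1: 2.5}
```

Oversampling should be applied only to the training split; resampling before splitting leaks duplicated rows into the evaluation data.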

Q 5. Explain the bias-variance tradeoff.

The bias-variance tradeoff is a fundamental concept in machine learning. It describes the balance between two sources of prediction error: error from overly simple assumptions that cause a model to miss real patterns (bias), and error from excessive sensitivity to the particular training set (variance).

Bias refers to the error introduced by approximating a real-world problem, which is often complex, by a simplified model. High bias leads to underfitting, where the model is too simple to capture the underlying patterns in the data. It performs poorly on both training and test data.

Variance refers to the model’s sensitivity to fluctuations in the training data. High variance leads to overfitting, where the model learns the training data too well, including its noise, and performs poorly on unseen data. It performs well on training data but poorly on test data.

The goal is to find a sweet spot that minimizes total error, since reducing bias usually increases variance and vice versa. This often involves choosing the right model complexity and using regularization techniques to prevent overfitting. A simple analogy is archery: high bias is consistently missing far from the target (the model is consistently wrong), while high variance is arrows scattered all over the place (the model is inconsistently wrong).
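For squared-error loss, the tradeoff can be written as a decomposition of expected prediction error. The notation here is an assumption introduced for illustration: the data follow y = f(x) + ε with zero-mean noise of variance σ², f̂ is the model fitted on a random training set, and the expectation is taken over training sets and noise.

```latex
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
= \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
+ \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{variance}}
+ \underbrace{\sigma^2}_{\text{irreducible noise}}
```

The first term falls as the model becomes more flexible, the second typically rises, and the third cannot be reduced by any choice of model.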

Q 6. What are some common evaluation metrics for machine learning models?

Common evaluation metrics for machine learning models depend on the type of problem (classification, regression, clustering, etc.):

- Classification: Accuracy, Precision, Recall, F1-score, AUC-ROC (Area Under the Receiver Operating Characteristic curve)

- Regression: Mean Squared Error (MSE), Root Mean Squared Error (RMSE), Mean Absolute Error (MAE), R-squared

- Clustering: Silhouette score, Davies-Bouldin index

Choosing the right metric is crucial. For example, in a medical diagnosis scenario where false negatives are critical, recall would be a more important metric than accuracy. Furthermore, using multiple metrics provides a more comprehensive evaluation.
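To make the regression metrics concrete, here is a minimal sketch that computes them from their definitions in plain Python. The function name and the sample values are invented for illustration.

```python
import math


def regression_metrics(y_true, y_pred):
    """Compute MSE, RMSE, MAE and R-squared from their textbook definitions."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)               # residual sum of squares
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    mse = ss_res / n
    return {
        "mse": mse,
        "rmse": math.sqrt(mse),
        "mae": sum(abs(e) for e in errors) / n,
        "r2": 1 - ss_res / ss_tot,
    }


y_true = [3.0, 5.0, 7.0, 9.0]
y_pred = [2.0, 5.0, 8.0, 9.0]
metrics = regression_metrics(y_true, y_pred)
print(metrics)  # mse 0.5, rmse about 0.707, mae 0.5, r2 0.9
```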

Q 7. Describe your experience with deep learning frameworks like TensorFlow or PyTorch.

I have extensive experience with both TensorFlow and PyTorch, two leading deep learning frameworks. I’ve used TensorFlow for building and deploying complex models, leveraging its production-ready capabilities and large community support. I’ve worked with TensorFlow’s Keras API for its ease of use in building and experimenting with various neural network architectures. I’ve also used TensorFlow Lite for deploying models on mobile and embedded devices.

With PyTorch, I’ve appreciated its dynamic computation graph, which allows for more flexibility and easier debugging than the static graphs of TensorFlow 1.x (TensorFlow 2.x also runs eagerly by default, which has narrowed this gap). I’ve used PyTorch extensively for research projects, particularly for building custom neural network architectures and experimenting with new techniques. PyTorch’s strong support for GPU acceleration has been invaluable in training large models efficiently.

My experience encompasses tasks ranging from building image classifiers and natural language processing models to developing custom architectures for specific applications. I’m proficient in utilizing both frameworks’ functionalities for data loading, model training, validation, and visualization.

Q 8. How do you choose the appropriate algorithm for a given machine learning problem?

Choosing the right machine learning algorithm is crucial for project success. It depends heavily on the nature of your data and the problem you’re trying to solve. There’s no one-size-fits-all answer, but a systematic approach helps.

- Type of problem: Is it classification (predicting categories), regression (predicting continuous values), clustering (grouping similar data points), or something else?

- Data size and characteristics: How much data do you have? Is it high-dimensional? Is it labelled (supervised learning) or unlabelled (unsupervised learning)? Is it linear or non-linear?

- Interpretability vs. accuracy: Do you need a highly accurate model, or one that’s easy to understand and explain? Linear models are often more interpretable than deep neural networks.

- Computational resources: Some algorithms are computationally expensive and require significant resources.

For example, if you have a large dataset with labelled images and want to classify them (e.g., cats vs. dogs), a Convolutional Neural Network (CNN) would be a good choice. If you have a smaller dataset and need a highly interpretable model for predicting house prices, a linear regression model might suffice. If you’re dealing with unlabelled data and want to find clusters of similar customers, k-means clustering would be appropriate.

In practice, I often start with simpler models and then iteratively explore more complex ones if necessary. Experimentation and evaluation using appropriate metrics are key to selecting the best algorithm.

Q 9. Explain the concept of overfitting and how to prevent it.

Overfitting occurs when a machine learning model learns the training data *too* well, including its noise and outliers. This results in a model that performs excellently on the training data but poorly on unseen data (generalization is poor). Imagine a student memorizing the answers to a specific exam without understanding the underlying concepts – they’ll ace that exam, but fail any other test on the same subject.

Several techniques prevent overfitting:

- Cross-validation: This involves splitting your data into multiple subsets (folds), training the model on some folds, and testing it on the remaining folds. This gives a more robust estimate of the model’s performance on unseen data, which makes overfitting easier to detect and guides choices such as model complexity.

- Regularization: This adds a penalty term to the model’s loss function, discouraging overly complex models. L1 and L2 regularization are common techniques.

- Data augmentation: Artificially increasing the size of your training dataset by creating modified versions of existing data points (e.g., rotating images, adding noise to audio). This helps the model generalize better.

- Feature selection/engineering: Removing irrelevant or redundant features from the dataset can reduce complexity and prevent overfitting.

- Dropout (for neural networks): Randomly ignoring neurons during training to prevent over-reliance on any single neuron or group of neurons.

- Early stopping: Monitoring the model’s performance on a validation set during training and stopping when performance starts to degrade. This prevents the model from learning the noise in the training data.

For instance, if you’re training a model to classify handwritten digits and notice high accuracy on the training set but low accuracy on a separate test set, it’s a sign of overfitting. Implementing techniques like cross-validation and regularization can mitigate this.
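Early stopping in particular is easy to sketch. The helper below takes a list of per-epoch validation losses and reports where training would stop for a given patience; the function name, the patience value, and the loss values are all hypothetical.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return (best_epoch, stop_epoch) given validation losses recorded once per epoch."""
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            # New best: remember it and reset the patience counter
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return best_epoch, epoch
    return best_epoch, len(val_losses) - 1


# Validation loss improves until epoch 3, then starts rising (made-up numbers)
val_losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.56, 0.61]
best_epoch, stop_epoch = early_stopping_epoch(val_losses, patience=2)
print(best_epoch, stop_epoch)  # 3 5
```

In a real training loop the weights from the best epoch would be restored after stopping.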

Q 10. How do you handle missing data in a dataset?

Missing data is a common challenge in real-world datasets. Ignoring it can lead to biased and inaccurate results. The best approach depends on the nature of the missing data and the percentage of missing values.

- Deletion: Remove rows or columns with missing values. This is simple but can lead to significant data loss, especially if missingness is not random.

- Imputation: Replace missing values with estimated values. Common methods include:

  - Mean/median/mode imputation: Replacing missing values with the mean, median, or mode of the respective column. Simple but can distort the data distribution.

  - K-Nearest Neighbors (KNN) imputation: Estimating missing values based on the values of similar data points. More sophisticated but computationally expensive.

  - Multiple imputation: Creating multiple imputed datasets and combining the results. This accounts for the uncertainty in the imputed values.

  - Model-based imputation: Using a predictive model to predict missing values. This is a more powerful technique but requires careful consideration of the model used.

For example, if a small percentage of values are missing and missingness is random, mean imputation might be acceptable. However, if a significant portion of data is missing or if missingness is non-random (e.g., systematically missing values for certain demographics), more sophisticated techniques like KNN imputation or multiple imputation are necessary.

Before choosing an imputation method, it’s crucial to understand the reasons behind the missing data. This helps avoid introducing bias into the analysis.
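The simplest of these strategies, mean and median imputation, can be sketched in a few lines. The example assumes missing values are represented as `None`; the column and helper name are invented for illustration.

```python
from statistics import mean, median


def impute(column, strategy="mean"):
    """Fill None entries with the mean or median of the observed values."""
    observed = [v for v in column if v is not None]
    fill = mean(observed) if strategy == "mean" else median(observed)
    return [fill if v is None else v for v in column]


# Hypothetical 'age' column with two missing entries and one outlier (90)
ages = [22, None, 35, 41, None, 90]

mean_filled = impute(ages, "mean")      # gaps filled with 47
median_filled = impute(ages, "median")  # gaps filled with 38.0
print(mean_filled)
print(median_filled)
```

The outlier pulls the mean well above the median, which is one reason median imputation is often preferred for skewed columns.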

Q 11. What is regularization and why is it important?

Regularization is a technique used to prevent overfitting in machine learning models by adding a penalty term to the model’s loss function. This penalty discourages the model from learning overly complex relationships in the data that might not generalize well to unseen data.

There are two main types of regularization:

- L1 regularization (LASSO): Adds a penalty proportional to the absolute value of the model’s coefficients. This encourages sparsity, meaning that some coefficients are shrunk to zero, effectively performing feature selection.

- L2 regularization (Ridge): Adds a penalty proportional to the square of the model’s coefficients. This shrinks the coefficients towards zero but doesn’t force them to be exactly zero.

The strength of the penalty is controlled by a hyperparameter (lambda or alpha). A larger value of the hyperparameter leads to stronger regularization and a simpler model, reducing overfitting but potentially increasing bias. Finding the optimal value often involves techniques like cross-validation.

Regularization is important because it helps to improve the generalization ability of the model, leading to better performance on unseen data. It’s particularly useful when dealing with high-dimensional data or when the number of features is large compared to the number of data points.

For example, in a linear regression model, L2 regularization (Ridge regression) can help prevent overfitting by shrinking the coefficients towards zero, making the model less sensitive to small changes in the input data.
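The shrinkage effect is easy to see in a deliberately tiny case. The sketch below assumes a one-feature linear model with no intercept, for which minimizing the sum of squared errors plus lambda times w squared gives the closed form w = sum(x*y) / (sum(x*x) + lambda). The data and function name are made up.

```python
def ridge_slope(xs, ys, lam):
    """Closed-form ridge solution for y ~ w * x (single feature, no intercept)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


# Toy data lying exactly on y = 2x
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]

slopes = {lam: ridge_slope(xs, ys, lam) for lam in (0.0, 1.0, 14.0)}
for lam, w in slopes.items():
    print(f"lambda={lam:>4}: w={w:.3f}")
# lambda=0 recovers w=2.000; larger lambda shrinks w toward zero (w=1.000 at lambda=14)
```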

Q 12. Explain the concept of backpropagation in neural networks.

Backpropagation is an algorithm used to train artificial neural networks. It’s the cornerstone of how neural networks learn from data. In essence, it’s a method for calculating the gradient of the loss function with respect to the network’s weights and biases.

Here’s a simplified explanation:

- Forward Pass: The input data is fed forward through the network, and the output is calculated.

- Loss Calculation: The difference between the network’s output and the true target value is calculated using a loss function (e.g., mean squared error for regression, cross-entropy for classification).

- Backward Pass: The gradient of the loss function with respect to each weight and bias in the network is calculated using the chain rule of calculus. This gradient indicates the direction and magnitude of change needed to reduce the loss.

- Weight Update: The weights and biases are updated using an optimization algorithm (e.g., gradient descent) to move in the direction that reduces the loss. The learning rate determines the size of these updates.

- Repeat: The four steps above are repeated for multiple iterations (epochs) until the network’s performance on a validation set reaches a satisfactory level.

Imagine a hiker trying to descend a mountain (minimize loss). The gradient tells them the steepest downhill direction. Backpropagation is like the hiker constantly checking the steepness and adjusting their path accordingly.

Backpropagation is a fundamental algorithm in deep learning, enabling the training of complex neural networks for various tasks, including image recognition, natural language processing, and speech recognition.
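The forward pass, loss calculation, backward pass, and weight update can be sketched for a deliberately tiny network: one input, one tanh hidden unit, and one linear output. The architecture, initial weights, learning rate, and data are all assumptions chosen to keep the chain rule visible; real networks use vectorized libraries rather than scalar Python.

```python
import math


def forward(params, x):
    """Forward pass: h = tanh(w1*x + b1), y_hat = w2*h + b2."""
    w1, b1, w2, b2 = params
    h = math.tanh(w1 * x + b1)
    return h, w2 * h + b2


def loss_and_grads(params, x, y):
    """Squared-error loss and its gradients, derived with the chain rule."""
    w1, b1, w2, b2 = params
    h, y_hat = forward(params, x)
    loss = 0.5 * (y_hat - y) ** 2
    d_yhat = y_hat - y                  # dL/dy_hat
    dw2, db2 = d_yhat * h, d_yhat       # output layer
    dz = d_yhat * w2 * (1 - h * h)      # back through tanh: d tanh(z)/dz = 1 - h^2
    dw1, db1 = dz * x, dz               # hidden layer
    return loss, [dw1, db1, dw2, db2]


def train(data, params, lr=0.1, epochs=200):
    """Plain stochastic gradient descent; returns final params and mean loss per epoch."""
    history = []
    for _ in range(epochs):
        total = 0.0
        for x, y in data:
            loss, grads = loss_and_grads(params, x, y)
            params = [p - lr * g for p, g in zip(params, grads)]  # weight update
            total += loss
        history.append(total / len(data))
    return params, history


data = [(-1.0, -0.5), (0.0, 0.0), (1.0, 0.5)]  # made-up training pairs
params, history = train(data, [0.5, 0.0, 0.5, 0.0])
print(f"first epoch loss {history[0]:.5f}, last epoch loss {history[-1]:.5f}")
```

A useful sanity check for hand-written gradients is to compare them with finite differences of the loss.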

Q 13. What are some common techniques for feature engineering?

Feature engineering is the process of transforming raw data into features that better represent the underlying problem to the predictive model, improving its accuracy and performance. It’s a creative and often crucial step in machine learning.

Common techniques include:

- Scaling and normalization: Transforming features to a common scale (e.g., Min-Max scaling, standardization). This prevents features with larger values from dominating the model.

- One-hot encoding: Converting a categorical feature into one binary column per category (e.g., a ‘color’ feature with values red, green, and blue becomes three columns, so ‘red’ is encoded as red=1, green=0, blue=0).

- Binning: Grouping continuous features into discrete bins (e.g., dividing age into ranges like 0-18, 19-35, 36-55, etc.).

- Interaction features: Creating new features by combining existing features (e.g., multiplying age and income to create a ‘wealth’ feature).

- Polynomial features: Adding polynomial terms of existing features (e.g., adding x² and x³ if x is a feature). This can capture non-linear relationships.

- Feature extraction: Applying techniques like Principal Component Analysis (PCA) to reduce dimensionality while retaining most of the important information.

- Domain knowledge: Incorporating domain expertise to create features that are relevant to the problem (e.g., engineering features based on physical laws or specific industry metrics).

For example, if you’re predicting house prices, you might engineer features like ‘living area per bedroom,’ ‘distance to nearest school,’ or ‘number of bedrooms’ from raw data about the property. Well-chosen engineered features are often more predictive than the raw data alone.
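Three of the techniques listed above (min-max scaling, one-hot encoding, and binning) are small enough to sketch in plain Python. The helper names are invented, and the final "56+" bin is an assumption added to close the age ranges given earlier.

```python
def min_max_scale(values):
    """Rescale values linearly to the range [0, 1]."""
    lo, hi = min(values), max(values)
    span = (hi - lo) or 1.0  # avoid division by zero for constant columns
    return [(v - lo) / span for v in values]


def one_hot(values):
    """Return (categories, rows), with one binary column per category in sorted order."""
    categories = sorted(set(values))
    rows = [[1 if v == c else 0 for c in categories] for v in values]
    return categories, rows


def bin_age(age):
    """Map an age to a coarse range label."""
    if age <= 18:
        return "0-18"
    if age <= 35:
        return "19-35"
    if age <= 55:
        return "36-55"
    return "56+"  # assumed final bin


scaled = min_max_scale([20, 30, 60])
categories, encoded = one_hot(["red", "green", "blue", "red"])
bins = [bin_age(a) for a in (12, 27, 40, 70)]

print(scaled)      # [0.0, 0.25, 1.0]
print(categories)  # ['blue', 'green', 'red']
print(encoded)     # [[0, 0, 1], [0, 1, 0], [1, 0, 0], [0, 0, 1]]
print(bins)        # ['0-18', '19-35', '36-55', '56+']
```

Scaling parameters such as the minimum and maximum should be computed on the training data only and then reused on validation and test data.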

Q 14. Describe your experience with data preprocessing techniques.

Data preprocessing is a critical step that significantly impacts the performance of any machine learning model. My experience encompasses a wide range of techniques, crucial for transforming raw data into a suitable format for model training.

I’ve extensively used techniques like:

- Handling missing values: Employing strategies such as imputation (mean, median, mode, KNN), deletion (if appropriate), and handling missingness as a separate feature based on the nature and extent of missing data. I always carefully analyze the reasons behind missing data to choose the most suitable technique, avoiding bias.

- Data cleaning: Identifying and addressing inconsistencies, errors, and outliers in the dataset. This often involves outlier detection algorithms, manual inspection, and data validation.

- Data transformation: Applying transformations like logarithmic, square root, or Box-Cox transformations to address skewness and improve model performance. Standardization (z-score normalization) and min-max scaling are also regularly used to bring features to comparable scales.

- Feature encoding: Converting categorical variables into numerical representations using methods like one-hot encoding, label encoding, or target encoding. The choice depends on the nature of the categorical variable and the algorithm used.

- Feature scaling: Scaling numerical features to prevent features with larger ranges from dominating the model. This often involves standardization (z-score normalization) or min-max scaling.

- Dimensionality reduction: Applying techniques like Principal Component Analysis (PCA) or t-SNE to reduce the number of features while preserving essential information. This is particularly useful for high-dimensional datasets.

In a recent project involving customer churn prediction, I handled a substantial number of missing values using a combination of KNN imputation and multiple imputation. This ensured that we retained as much information as possible while mitigating the impact of missing data on the model’s performance. Through careful preprocessing, we achieved a significant improvement in predictive accuracy compared to models trained on the raw data.

Q 15. Explain the difference between precision and recall.

Precision and recall are two crucial metrics used to evaluate the performance of a classification model, particularly in situations with imbalanced datasets. They both assess the accuracy of positive predictions but from different perspectives.

Precision answers the question: “Of all the instances predicted as positive, what proportion was actually positive?” It focuses on the accuracy of the positive predictions. A high precision indicates that when the model predicts a positive outcome, it’s usually correct. Think of it as minimizing false positives.

Recall, on the other hand, asks: “Of all the actual positive instances, what proportion did the model correctly identify?” It focuses on the model’s ability to find all the positive instances. High recall means the model successfully identifies most of the positive cases, minimizing false negatives.

Example: Imagine a spam filter. High precision means the filter rarely flags legitimate emails as spam (few false positives). High recall means the filter catches most spam emails (few false negatives). The optimal balance between precision and recall depends on the specific application. A medical diagnosis system might prioritize recall (catching all diseases, even with some false positives), while a spam filter might prioritize precision (avoiding false positives that annoy users).
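Both definitions translate directly into code. The sketch below counts true positives, false positives, and false negatives for a made-up spam-filter result (1 = spam, 0 = legitimate); the function name and labels are illustrative.

```python
def precision_recall(y_true, y_pred, positive=1):
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# 4 spam emails and 6 legitimate ones; the filter flags 3 emails in total
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]

precision, recall = precision_recall(y_true, y_pred)
print(f"precision={precision:.3f} recall={recall:.3f}")  # precision=0.667 recall=0.500
```

Here two of the three flagged emails really are spam (precision 2/3), but only two of the four spam emails were caught (recall 1/2).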

Q 16. What is the difference between accuracy and F1-score?

Accuracy and the F1-score are both metrics used to evaluate a classification model’s performance, but they address different aspects and are particularly useful in different contexts.

Accuracy is the simplest metric, representing the overall correctness of the model’s predictions. It’s calculated as the ratio of correctly classified instances to the total number of instances. While easy to understand, accuracy can be misleading when dealing with imbalanced datasets (where one class significantly outnumbers others). A model could achieve high accuracy by simply predicting the majority class most of the time, even if it performs poorly on the minority class.

The F1-score is the harmonic mean of precision and recall. It provides a balanced measure of a model’s performance, considering both false positives and false negatives. It’s particularly useful when dealing with imbalanced datasets because it considers both precision and recall, offering a more comprehensive picture than accuracy alone. A higher F1-score indicates a better balance between precision and recall.

Example: Suppose you’re building a model to detect fraudulent transactions. Accuracy might be high if the model mostly predicts ‘not fraudulent’ (since most transactions are legitimate), but the F1-score would better capture the model’s ability to correctly identify fraudulent transactions (which are the minority class and most important to detect).
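The gap between the two metrics shows up clearly with made-up numbers: 1,000 transactions of which 10 are fraudulent. The sketch uses the identity F1 = 2TP / (2TP + FP + FN), which is equivalent to the harmonic mean of precision and recall; the function name and data are illustrative.

```python
def accuracy_and_f1(y_true, y_pred, positive=1):
    """Return (accuracy, F1) for a binary classification result."""
    pairs = list(zip(y_true, y_pred))
    correct = sum(1 for t, p in pairs if t == p)
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    denom = 2 * tp + fp + fn
    f1 = 2 * tp / denom if denom else 0.0
    return correct / len(pairs), f1


# 10 fraudulent (1) and 990 legitimate (0) transactions
y_true = [1] * 10 + [0] * 990

# Model A never predicts fraud
always_negative = [0] * 1000
acc_a, f1_a = accuracy_and_f1(y_true, always_negative)

# Model B catches 6 of the 10 frauds and raises 4 false alarms
some_detection = [1] * 6 + [0] * 4 + [1] * 4 + [0] * 986
acc_b, f1_b = accuracy_and_f1(y_true, some_detection)

print(acc_a, f1_a)  # 0.99 0.0
print(acc_b, f1_b)  # 0.992 0.6
```

The two models are almost indistinguishable by accuracy, while the F1-score separates the useless one from the useful one.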

Q 17. Explain the concept of a confusion matrix.

A confusion matrix is a table used to evaluate the performance of a classification model. It presents a summary of the model’s predictions, showing the counts of true positives, true negatives, false positives, and false negatives.

The matrix is structured as a table with predicted classes along the top and actual classes along the side. Each cell represents the number of instances that belong to a certain actual class and were predicted as belonging to a certain predicted class.

| | Predicted Positive | Predicted Negative |
| --- | --- | --- |
| Actual Positive | TP | FN |
| Actual Negative | FP | TN |

TP (True Positive): Correctly predicted positive instances.

TN (True Negative): Correctly predicted negative instances.

FP (False Positive): Negative instances incorrectly predicted as positive (Type I error).

FN (False Negative): Positive instances incorrectly predicted as negative (Type II error).

Example: In a medical diagnosis scenario, a confusion matrix would show the number of patients correctly diagnosed with a disease (TP), the number of healthy patients correctly identified (TN), the number of healthy patients incorrectly diagnosed (FP), and the number of sick patients missed by the diagnosis (FN). The matrix allows for a detailed analysis of the model’s performance across different classes, helping to identify areas for improvement.
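The four cells can be tallied with a single pass over the labels. This is a minimal sketch for the binary case; the function name and the diagnosis data (1 = sick, 0 = healthy) are hypothetical.

```python
from collections import Counter


def confusion_counts(y_true, y_pred, positive=1):
    """Count TP, FN, FP and TN for a binary classifier."""
    counts = Counter({"TP": 0, "FN": 0, "FP": 0, "TN": 0})
    for t, p in zip(y_true, y_pred):
        if t == positive:
            counts["TP" if p == positive else "FN"] += 1
        else:
            counts["FP" if p == positive else "TN"] += 1
    return counts


# 3 sick patients and 5 healthy ones
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0, 0, 0]

cm = confusion_counts(y_true, y_pred)
print("                 Pred +  Pred -")
print(f"Actual positive  {cm['TP']:>6}  {cm['FN']:>6}")
print(f"Actual negative  {cm['FP']:>6}  {cm['TN']:>6}")
```

For this data the counts are TP=2, FN=1, FP=1, TN=4, and metrics such as precision and recall follow directly from them.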

Q 18. What are some common techniques for model selection?

Model selection is a crucial step in the machine learning process, involving choosing the best model from a set of candidate models. Several techniques help in this process:

- Cross-validation: A resampling technique that divides the data into multiple folds, trains the model on some folds, and validates on the remaining folds. This helps to estimate the model’s performance on unseen data and to detect overfitting.

- Hyperparameter tuning: Systematically searching for the optimal hyperparameters (parameters that control the learning process, not learned from the data) for a given model. Techniques like grid search, random search, and Bayesian optimization can be used.

- Holdout method: Splitting the data into training and testing sets. The model is trained on the training set and evaluated on the testing set. A simple but effective approach.

- Nested cross-validation: Combines cross-validation with hyperparameter tuning, providing a robust way to select the best model and hyperparameters.

- Performance metrics: Using appropriate metrics (like accuracy, precision, recall, F1-score, AUC) to compare the performance of different models. The choice of metric depends on the specific problem and business goals.

The best technique often involves a combination of these methods. For instance, you might use nested cross-validation to tune hyperparameters and then compare the final performance of different models using appropriate metrics.
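The mechanics of k-fold cross-validation come down to index bookkeeping, sketched below. The generator name is invented, and the folds are contiguous for readability; in practice the indices are usually shuffled (and often stratified by class) before splitting.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, validation_indices) for k contiguous folds."""
    # Spread any remainder over the first folds so sizes differ by at most one
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, val
        start += size


folds = list(k_fold_indices(10, 3))
for train, val in folds:
    print(f"train={train} val={val}")
# Validation folds: [0, 1, 2, 3], [4, 5, 6], [7, 8, 9]
```

Each sample appears in exactly one validation fold, so averaging the per-fold scores uses every data point for evaluation once.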

Q 19. How do you deploy a machine learning model into production?

Deploying a machine learning model to production involves several key steps, ensuring the model is reliable, scalable, and maintainable. This process typically includes:

- Model serialization: Saving the trained model to a file format that can be easily loaded and used in a production environment.

- Containerization (e.g., Docker): Packaging the model and its dependencies into a container to ensure consistency across different environments.

- Infrastructure setup: Setting up the necessary hardware and software infrastructure (e.g., servers, databases) to host the model.

- API development: Creating an API (Application Programming Interface) to allow other applications or systems to interact with the model and make predictions.

- Monitoring and logging: Implementing monitoring tools to track model performance, identify issues, and ensure the model continues to perform as expected. Logging helps with debugging and troubleshooting.

- Version control: Managing different versions of the model and keeping track of changes.

- Scalability and robustness: Designing the deployment architecture to handle increased traffic and potential failures.

The specific steps and technologies used will vary depending on the complexity of the model and the specific requirements of the application. Cloud platforms offer managed services that simplify many of these steps.
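The serialization step can be illustrated with a toy example. The sketch assumes a hypothetical linear model whose parameters fit in a dictionary and stores them as JSON together with a version string; real frameworks provide their own formats, and the file name and field names here are made up.

```python
import json
import os
import tempfile


def save_model(params, path):
    """Write model parameters (plus metadata such as a version) to disk."""
    with open(path, "w") as f:
        json.dump(params, f)


def load_model(path):
    """Read model parameters back, as a serving process would at startup."""
    with open(path) as f:
        return json.load(f)


def predict(params, features):
    """Linear prediction: bias + sum of weight * feature."""
    return params["bias"] + sum(w * x for w, x in zip(params["weights"], features))


# Hypothetical trained parameters
params = {"version": "1.0.0", "weights": [0.5, -0.25], "bias": 1.0}

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "model.json")
    save_model(params, path)
    restored = load_model(path)

prediction = predict(restored, [2.0, 4.0])
print(restored["version"], prediction)  # 1.0.0 1.0
```

In a deployed service, `load_model` would run once at startup and `predict` would sit behind the API endpoint, with the version string included in logs for traceability.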

Q 20. Explain your experience with cloud platforms like AWS, Azure, or GCP for AI/ML.

I have extensive experience utilizing cloud platforms like AWS, Azure, and GCP for AI/ML projects. My experience includes:

- AWS: I’ve leveraged services like SageMaker for model training, hosting, and deployment; EC2 for custom infrastructure; S3 for data storage; and Lambda for serverless functions. I’m comfortable with building and managing pipelines using AWS services.

- Azure: I have experience with Azure Machine Learning for model lifecycle management, Azure Kubernetes Service (AKS) for container orchestration, and Azure Blob Storage for data storage. I’ve used Azure Cognitive Services for pre-trained models and APIs.

- GCP: I’ve worked with Google Cloud AI Platform for model training and deployment, Google Kubernetes Engine (GKE) for container management, and Cloud Storage for data storage. I’m familiar with using BigQuery for large-scale data processing.

My experience extends beyond simply using these services. I understand the trade-offs involved in choosing between platforms and services based on project requirements, cost considerations, and scalability needs. I can design and implement robust and scalable AI/ML solutions using these platforms.

Q 21. What is transfer learning and how can it be beneficial?

Transfer learning is a powerful technique in machine learning where a pre-trained model, typically trained on a large dataset for a general task, is adapted for a new task with a smaller dataset. Instead of training a model from scratch, you leverage the knowledge learned by the pre-trained model and fine-tune it for your specific needs.

Benefits:

- Reduced training time and data requirements: Pre-trained models already have learned features, significantly reducing the training time and the amount of data needed for the new task.

- Improved performance: Leveraging the knowledge from a larger dataset often leads to better performance, especially when dealing with limited data.

- Cost savings: Less training time translates to lower computational costs.

Example: A model trained on a massive dataset of images (like ImageNet) for image classification can be used as a starting point for a new task, such as identifying specific types of plants or medical conditions. The pre-trained model’s lower layers have already learned generic image features (edges, textures, shapes), which transfer to the new task. Often only the upper layers need to be fine-tuned to learn the features specific to the new task, which dramatically reduces the amount of labeled plant or medical image data required.
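The freeze-and-fine-tune idea can be sketched without any deep learning framework. In this toy version, `FROZEN_WEIGHTS` is a hypothetical stand-in for the pre-trained lower layers (it is not taken from a real model), the four-point dataset is made up, and only the small logistic-regression head is trained. A real project would typically load actual pre-trained weights through a framework instead.

```python
import math

# Hypothetical stand-in for pre-trained lower layers: these weights are fixed
# and are never updated during fine-tuning.
FROZEN_WEIGHTS = [[1.0, -1.0], [0.5, 0.5]]

def frozen_features(x):
    """Frozen layer: a linear map followed by ReLU."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in FROZEN_WEIGHTS]

def train_head(samples, labels, epochs=300, lr=0.2):
    """Train only the new logistic-regression head with plain SGD."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            feats = frozen_features(x)
            z = sum(w * f for w, f in zip(weights, feats)) + bias
            error = 1.0 / (1.0 + math.exp(-z)) - y  # gradient of log loss w.r.t. z
            weights = [w - lr * error * f for w, f in zip(weights, feats)]
            bias -= lr * error
    return weights, bias

def predict(x, weights, bias):
    """Classify x using the frozen features plus the trained head."""
    feats = frozen_features(x)
    return int(sum(w * f for w, f in zip(weights, feats)) + bias > 0)

# Tiny made-up dataset for the new task.
samples = [(2.0, 0.5), (3.0, 1.0), (0.5, 2.0), (1.0, 3.0)]
labels = [1, 1, 0, 0]
head_weights, head_bias = train_head(samples, labels)
print([predict(x, head_weights, head_bias) for x in samples])
```

Because the frozen layer is never touched, the only parameters learned from the small dataset are the two head weights and the bias, which is the reason transfer learning needs less data and compute.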

Q 22. Explain the concept of dimensionality reduction.

Dimensionality reduction is a technique used to reduce the number of variables (features) in a dataset while preserving as much important information as possible. Imagine you’re trying to describe an elephant. You could list hundreds of features: ear size, trunk length, weight, color of each hair, etc. Dimensionality reduction helps you find the most important features – maybe just size and shape – that still allow you to effectively distinguish an elephant from a mouse. This is crucial because high-dimensional data can lead to computational inefficiency, the ‘curse of dimensionality’, and overfitting in machine learning models.

Common techniques include:

- Principal Component Analysis (PCA): This linear transformation finds the principal components – new uncorrelated variables that capture the maximum variance in the data. It’s like rotating your data to find the axes of greatest spread.

- t-distributed Stochastic Neighbor Embedding (t-SNE): A nonlinear technique excellent for visualizing high-dimensional data in lower dimensions (often 2D or 3D). It focuses on preserving local neighborhood structures.

- Linear Discriminant Analysis (LDA): Specifically designed for supervised learning, it aims to find the linear combinations of features that best separate different classes.

For example, in image processing, dimensionality reduction can significantly reduce the size of images while retaining essential visual information, leading to faster processing and storage.
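To make PCA concrete, here is a minimal sketch for two-dimensional data that finds the first principal component and projects each point onto it, reducing two features to one. It uses the closed-form angle for a 2x2 covariance matrix, so it is an illustration only; the function names and sample points are made up, and a general implementation would use an eigendecomposition or SVD from a numerical library.

```python
import math

def pca_first_component(points):
    """Return (mean, unit direction) of the first principal component of 2-D points."""
    n = len(points)
    mean_x = sum(p[0] for p in points) / n
    mean_y = sum(p[1] for p in points) / n
    centered = [(x - mean_x, y - mean_y) for x, y in points]
    # Entries of the 2x2 sample covariance matrix.
    sxx = sum(x * x for x, _ in centered) / (n - 1)
    syy = sum(y * y for _, y in centered) / (n - 1)
    sxy = sum(x * y for x, y in centered) / (n - 1)
    # Closed-form angle of the leading eigenvector of a symmetric 2x2 matrix.
    theta = 0.5 * math.atan2(2 * sxy, sxx - syy)
    return (mean_x, mean_y), (math.cos(theta), math.sin(theta))

def project(points, mean, direction):
    """Reduce each 2-D point to a single score along the principal direction."""
    return [(x - mean[0]) * direction[0] + (y - mean[1]) * direction[1]
            for x, y in points]

# Made-up points lying on the line y = 2x: one dimension carries all the variance.
points = [(0.0, 0.0), (1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
mean, direction = pca_first_component(points)
scores = project(points, mean, direction)
print(direction, scores)
```

Since these points are perfectly collinear, the one-dimensional scores lose no information; on real data the discarded components hold the remaining variance.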

Q 23. What are some common techniques for anomaly detection?

Anomaly detection is the process of identifying data points that significantly deviate from the norm. Think of it like spotting a counterfeit bill amongst genuine ones. These anomalies can represent errors, fraudulent activities, or simply unusual events.

Several common techniques exist:

- Statistical methods: These methods assume a probability distribution for the data and flag data points falling outside a certain threshold. For example, using Z-scores to identify outliers.

- Clustering-based methods: These group similar data points together. Anomalies are usually data points that don’t belong to any cluster or form their own tiny clusters.

- Machine learning methods: These techniques learn patterns from the data and flag instances that deviate from these learned patterns. Examples include One-class SVM, Isolation Forest, and Autoencoders.

In fraud detection, anomaly detection can identify unusual credit card transactions that might indicate fraudulent activity. In manufacturing, it can help identify defective products based on sensor data.
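As a small illustration of the statistical approach, the sketch below flags values whose Z-score exceeds a threshold. The transaction amounts and the 2.5 threshold are assumptions chosen for the example; note that in small samples a single extreme value inflates the standard deviation, so a threshold of 3 may never be reached.

```python
from statistics import mean, stdev

def zscore_outliers(values, threshold=2.5):
    """Return the values whose absolute Z-score exceeds the threshold."""
    mu = mean(values)
    sigma = stdev(values)
    if sigma == 0:
        return []  # all values identical, nothing can be an outlier
    return [v for v in values if abs(v - mu) / sigma > threshold]

# Hypothetical card transaction amounts with one suspicious charge.
amounts = [20, 22, 19, 21, 23, 20, 18, 22, 21, 500]
print(zscore_outliers(amounts))
```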

Q 24. Describe your experience with time series analysis.

I have extensive experience with time series analysis, which involves analyzing data points collected over time. This often involves identifying patterns, trends, seasonality, and forecasting future values. I’ve worked with various techniques including:

- ARIMA models: Autoregressive Integrated Moving Average models capture the autocorrelations within a series. The ‘integrated’ step differences the data first, so they can also handle trending series that become stationary after differencing.

- Prophet (from Meta): A robust model specifically designed for business time series with seasonality and trend components.

- LSTM networks (Long Short-Term Memory): Recurrent neural networks excellent at capturing long-range dependencies in time series data, useful for complex patterns and irregular seasonality.

For example, I once used ARIMA models to forecast energy consumption for a large building, allowing for better resource allocation and cost optimization. In another project, LSTMs were crucial in predicting stock prices based on historical market data, though I always emphasize the inherent uncertainties in financial forecasting.
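The core ARIMA idea of differencing a trending series and then modeling the differences can be sketched by hand. This is a simplified ARIMA(1,1,0)-style illustration with no moving-average term, fitted by ordinary least squares; the readings are made-up numbers, not data from the projects described above, and a production forecast would rely on a dedicated time series library.

```python
def difference(series):
    """First-order differencing: removes a linear trend from the series."""
    return [b - a for a, b in zip(series, series[1:])]

def fit_ar1(series):
    """Least-squares estimate of c and phi in x[t] = c + phi * x[t-1]."""
    prev, nxt = series[:-1], series[1:]
    n = len(prev)
    mean_prev = sum(prev) / n
    mean_next = sum(nxt) / n
    cov = sum((p - mean_prev) * (q - mean_next) for p, q in zip(prev, nxt))
    var = sum((p - mean_prev) ** 2 for p in prev)
    phi = cov / var
    return mean_next - phi * mean_prev, phi

def forecast_next(series):
    """Model the differences with AR(1), then undo the differencing."""
    diffs = difference(series)
    c, phi = fit_ar1(diffs)
    return series[-1] + c + phi * diffs[-1]

# Hypothetical readings whose increments shrink over time.
readings = [10.0, 14.0, 17.0, 19.5, 21.75, 23.875]
print(forecast_next(readings))
```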

Q 25. Explain the concept of reinforcement learning and its applications.

Reinforcement learning (RL) is a type of machine learning where an agent learns to interact with an environment by taking actions and receiving rewards or penalties. It’s like training a dog: you give it treats (rewards) when it performs desired behaviors and correct it when it doesn’t (penalties). The goal is to learn a policy – a strategy – that maximizes the cumulative reward over time.

Key components:

- Agent: The learner and decision-maker.

- Environment: The world the agent interacts with.

- State: The current situation the agent finds itself in.

- Action: The choices the agent can make.

- Reward: Feedback from the environment.

Applications are vast:

- Game playing: AlphaGo’s 2016 victory over Go champion Lee Sedol is a prime example.

- Robotics: Training robots to perform complex tasks in real-world environments.

- Resource management: Optimizing resource allocation in various settings.

- Personalized recommendations: Suggesting products or content tailored to individual preferences.
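The agent, environment, state, action, and reward loop described above can be sketched with tabular Q-learning on a toy problem. The five-state corridor, the reward of 1.0 at the goal, and the hyperparameters below are all assumptions picked for illustration; real applications use far larger state spaces and often neural networks in place of the table.

```python
import random

N_STATES = 5        # states 0..4 on a line; state 4 is the goal
ACTIONS = (-1, +1)  # action index 0 moves left, index 1 moves right

def step(state, action_index):
    """Environment: move along the line; reward 1.0 on reaching the goal."""
    next_state = min(max(state + ACTIONS[action_index], 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning with an epsilon-greedy agent."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap on episode length
            left, right = q[state]
            # Explore with probability epsilon, and break ties randomly.
            if rng.random() < epsilon or left == right:
                action = rng.randrange(2)
            else:
                action = 0 if left > right else 1
            next_state, reward, done = step(state, action)
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q

q_table = train()
policy = ["left" if left > right else "right" for left, right in q_table[:-1]]
print(policy)
```

The learned policy is read off the table by picking the higher-valued action in each non-goal state, and the values decay by the discount factor with distance from the goal.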

Q 26. What are some ethical considerations in AI development?

Ethical considerations in AI development are paramount. We must be mindful of potential biases, fairness, privacy, accountability, and transparency.

- Bias: AI systems trained on biased data can perpetuate and amplify existing societal biases, leading to unfair or discriminatory outcomes. For example, a facial recognition system trained primarily on images of white faces might perform poorly on people with darker skin tones.

- Fairness: Ensuring that AI systems treat all individuals equitably, regardless of their background or characteristics.

- Privacy: Protecting sensitive personal information used to train and operate AI systems. Data anonymization and differential privacy techniques are crucial.

- Accountability: Establishing clear lines of responsibility when AI systems make decisions that impact individuals. Who is held responsible when an autonomous vehicle causes an accident?

- Transparency: Making the workings of AI systems understandable and explainable. This helps build trust and allows for identifying potential problems.

Addressing these concerns requires careful data curation, algorithm design, and ongoing monitoring and evaluation of AI systems.
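One simple form of ongoing monitoring is to compare a model’s behavior across groups. The sketch below computes per-group accuracy and selection rate (the share of positive predictions); the labels, predictions, and group names are invented for illustration, and these two numbers are only a starting point rather than a complete fairness audit.

```python
from collections import defaultdict

def rates_by_group(y_true, y_pred, groups):
    """Return accuracy and positive-prediction rate for each group."""
    stats = defaultdict(lambda: {"n": 0, "correct": 0, "positive": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        entry = stats[group]
        entry["n"] += 1
        entry["correct"] += int(truth == pred)
        entry["positive"] += int(pred == 1)
    return {
        group: {
            "accuracy": entry["correct"] / entry["n"],
            "selection_rate": entry["positive"] / entry["n"],
        }
        for group, entry in stats.items()
    }

# Hypothetical labels, predictions, and group membership.
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 0, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
report = rates_by_group(y_true, y_pred, groups)
print(report)
```

A large gap between groups in either number is a signal to revisit the training data or the model before deployment.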

Q 27. How do you stay up-to-date with the latest advancements in AI?

Staying current in the rapidly evolving field of AI requires a multi-pronged approach:

- Reading research papers: Staying abreast of the latest breakthroughs through preprints on arXiv, journals like JMLR, and conference proceedings such as NeurIPS.

- Following leading researchers and organizations: Engaging with experts on social media platforms and attending conferences and workshops.

- Taking online courses and attending workshops: Platforms like Coursera, edX, and Fast.ai offer excellent resources for continuous learning.

- Participating in online communities and forums: Actively engaging in discussions and knowledge sharing within the AI community.

- Experimenting with new tools and techniques: Hands-on experience with the latest AI tools and frameworks is crucial for practical understanding.

This continuous learning ensures that my knowledge base remains up-to-date and relevant, enabling me to apply the most effective and ethical AI solutions.

Q 28. Describe a challenging AI project you worked on and how you overcame the challenges.

One challenging project involved developing an AI system for automated medical diagnosis based on medical images. The primary challenge was the scarcity of high-quality labeled data – a common issue in medical AI. The limited dataset meant that the models were prone to overfitting and poor generalization to unseen data.

To overcome this, we employed several strategies:

- Data augmentation: We artificially increased the size of our dataset by applying various transformations to the existing images (rotation, flipping, etc.), which helped to improve model robustness.

- Transfer learning: We leveraged pre-trained models trained on large image datasets (like ImageNet), fine-tuning them on our smaller medical image dataset. This allowed us to utilize the knowledge learned from the larger dataset and achieve better performance despite the limited data.

- Ensemble methods: Combining predictions from multiple models helped to reduce the variance and improve the overall accuracy and reliability of the system.

- Careful model selection: We experimented with various deep learning architectures and selected the ones that provided the best balance between accuracy and computational efficiency.

This project highlighted the importance of creative problem-solving when faced with limited resources, demonstrating the critical role of data augmentation, transfer learning, and ensemble techniques in addressing real-world AI challenges.
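Two of these strategies are easy to illustrate in a few lines. The sketch below is not the project’s code: it treats an image as a plain nested list of pixel values, shows flip and rotation augmentations, and combines class predictions from several hypothetical models by majority vote.

```python
from collections import Counter

def flip_horizontal(image):
    """Mirror each row of the image left to right."""
    return [row[::-1] for row in image]

def rotate_90_clockwise(image):
    """Rotate the image a quarter turn clockwise."""
    return [list(row) for row in zip(*image[::-1])]

def augment(image):
    """Return the original image plus two transformed copies."""
    return [image, flip_horizontal(image), rotate_90_clockwise(image)]

def majority_vote(predictions):
    """Ensemble step: return the most common label among the predictions."""
    return Counter(predictions).most_common(1)[0][0]

# A 2x2 toy "image" and made-up labels from three hypothetical models.
image = [[1, 2], [3, 4]]
print(augment(image))
print(majority_vote(["benign", "malignant", "benign"]))
```

In practice, augmentations must preserve the label; for some medical images a flip or rotation can change the clinical meaning, so the set of transformations should be chosen with domain experts.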

Key Topics to Learn for Your Artificial Intelligence (AI) Interview

Ace your AI interview by mastering these core concepts. Remember, understanding the “why” behind the technology is just as important as knowing the “how.”

- Fundamental AI Concepts: Machine Learning (Supervised, Unsupervised, Reinforcement Learning), Deep Learning, Neural Networks (CNNs, RNNs, Transformers), Natural Language Processing (NLP).

- Practical Applications: Explore real-world examples like image recognition, chatbot development, recommendation systems, fraud detection, and predictive analytics. Consider projects you’ve worked on and how these AI concepts were applied.

- Algorithms & Models: Gain a strong understanding of common algorithms like linear regression, logistic regression, decision trees, and support vector machines. Understand their strengths, weaknesses, and when to apply them.

- Data Preprocessing & Feature Engineering: Master techniques for cleaning, transforming, and selecting relevant data features to improve model performance. This is often overlooked, but crucial.

- Model Evaluation & Selection: Learn how to evaluate model performance using metrics like accuracy, precision, recall, F1-score, and AUC. Understand techniques for model selection and hyperparameter tuning.

- Ethical Considerations in AI: Familiarize yourself with the ethical implications of AI, including bias, fairness, and transparency. This demonstrates responsible and thoughtful engagement with the field.
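As a quick refresher on the evaluation metrics listed above, the following sketch computes accuracy, precision, recall, and F1-score for a binary classifier from scratch. The label lists are made up for the example, and AUC is left out because it requires predicted scores rather than hard labels.

```python
def classification_metrics(y_true, y_pred):
    """Compute accuracy, precision, recall, and F1 for binary labels (0 or 1)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

# Hypothetical ground truth and predictions.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 0, 1, 0, 1, 0, 0, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

Here accuracy looks acceptable while recall is only 0.5, which is a useful talking point in an interview: the right metric depends on whether false negatives or false positives are more costly.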

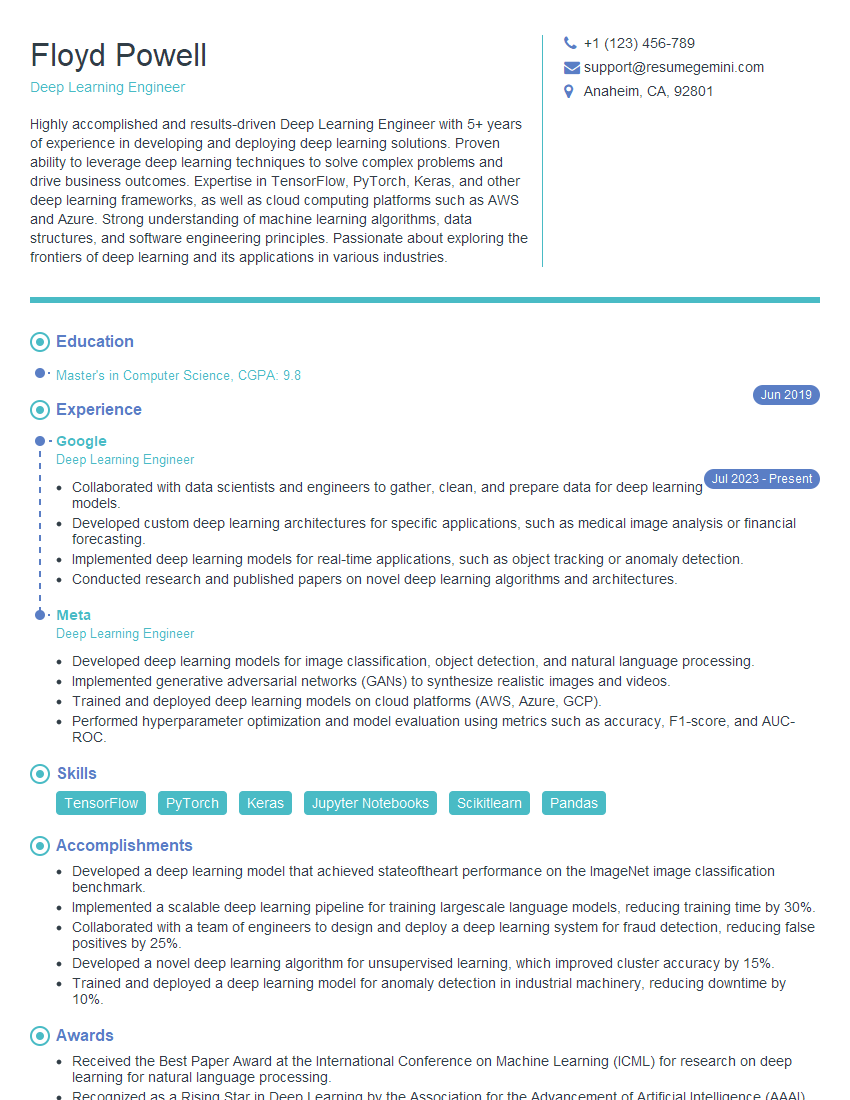

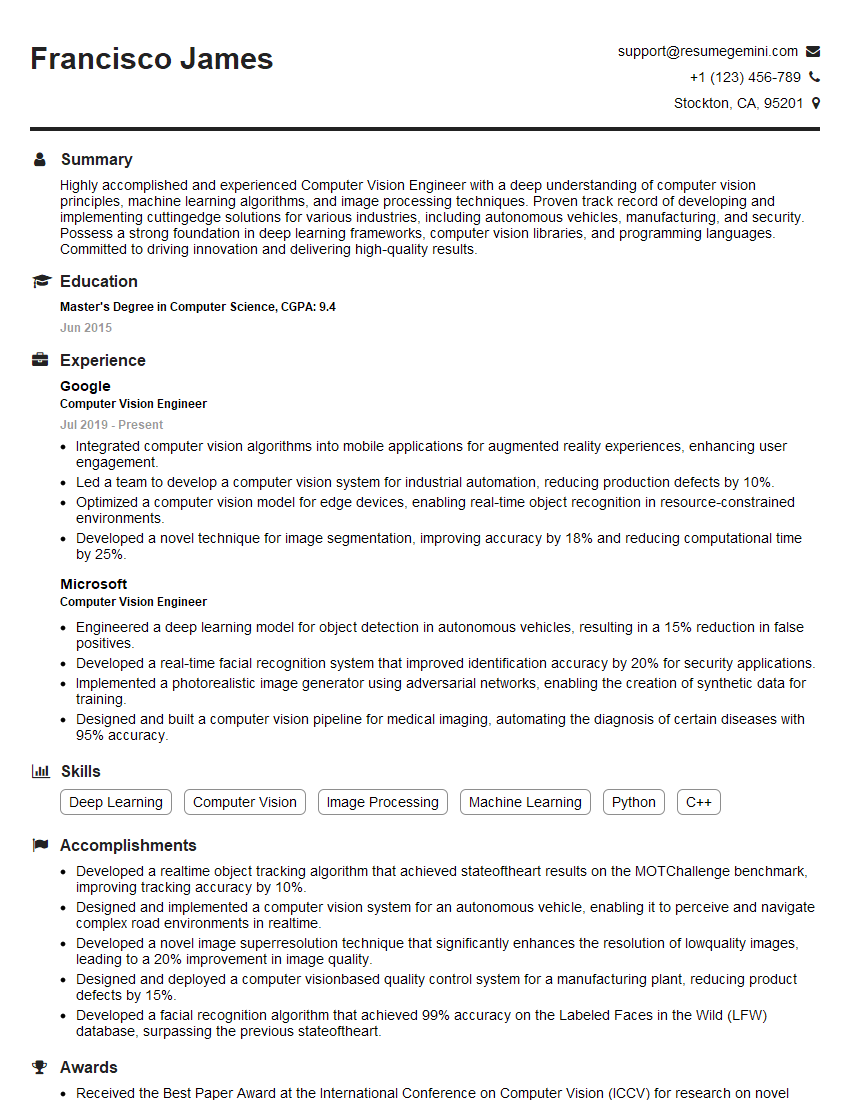

Next Steps: Level Up Your AI Career

Mastering AI opens doors to exciting career opportunities with high growth potential. To maximize your chances, a strong resume is essential. Create an ATS-friendly resume that showcases your skills and experience effectively. ResumeGemini is a trusted resource that can help you build a professional and impactful resume, tailored to the specific demands of AI roles. Examples of AI-focused resumes are available to guide you.
