Unlock your full potential by mastering the most common Lidar Data Processing and Classification interview questions. This blog offers a deep dive into the critical topics, ensuring you’re not only prepared to answer but to excel. With these insights, you’ll approach your interview with clarity and confidence.

Questions Asked in Lidar Data Processing and Classification Interview

Q 1. Explain the difference between LiDAR and other remote sensing technologies.

LiDAR (Light Detection and Ranging) stands out from other remote sensing technologies like aerial photography or satellite imagery because it measures distances directly. Passive optical methods infer elevation indirectly, for example through stereo image analysis, whereas LiDAR actively emits laser pulses and measures the time it takes for each pulse to reflect back, producing a three-dimensional point cloud. This allows terrain, objects, and features to be measured with accuracy that is typically at the centimeter to decimeter level, depending on the sensor and platform. Think of it like this: traditional aerial photography gives you a picture, but LiDAR gives you a detailed 3D model.

For example, imagine needing to map a forest canopy. Aerial photography might show the treetops, but LiDAR pulses can pass through gaps in the canopy, so a single pulse may produce returns from the treetops, intermediate branches, and the ground beneath. This makes it possible to map both tree heights and the underlying terrain. Similarly, in urban environments, LiDAR’s direct height measurements make it easier to separate buildings from roads and trees, whereas passive imagery can struggle with shadows and limited resolution.
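The time-of-flight principle described above reduces to a single formula: range equals the speed of light times the round-trip time, divided by two. The sketch below is only an illustration of that arithmetic; the function name is hypothetical, and it ignores atmospheric refraction and instrument delays that a real sensor corrects for.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum, metres per second


def range_from_time_of_flight(round_trip_seconds: float) -> float:
    """Return the one-way distance in metres for a measured round-trip time."""
    # The pulse travels to the target and back, so halve the total path.
    return SPEED_OF_LIGHT_M_S * round_trip_seconds / 2.0


# A pulse that returns after about 6.67 microseconds travelled roughly 1 km each way.
print(round(range_from_time_of_flight(6.67e-6), 1))
```

Because light covers about 30 cm per nanosecond, centimeter-level ranging requires timing electronics that resolve well below a nanosecond.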

Q 2. Describe the process of point cloud registration.

Point cloud registration is the process of aligning multiple point clouds acquired from different positions or times into a single, unified coordinate system. It’s crucial for creating a complete and accurate 3D model of a larger area. This involves identifying corresponding points (features) between different scans and then using these correspondences to transform the point clouds. The process usually involves several steps:

- Data Preprocessing: This might include removing noise and outliers, filtering the point clouds, and sub-sampling to reduce computational load.

- Feature Extraction: Identifying key features like planar surfaces, edges, or points of interest within the point clouds that can serve as matching points for registration.

- Initial Alignment (Coarse Registration): An approximate alignment is found by matching the extracted features between scans, for example with feature descriptors and a RANSAC-style search, or by using surveyed targets or GNSS/IMU positions. The goal is only to bring the scans close enough for local refinement to converge.

- Fine Registration (Refine Alignment): After coarse registration, the Iterative Closest Point (ICP) algorithm or one of its variants is commonly used. It iteratively pairs points in one scan with the closest points in another and refines the transformation matrix until the alignment error stops improving. When many overlapping scans are involved, a global adjustment (similar in spirit to bundle adjustment) distributes the remaining error across the whole set.

- Quality Assessment: Finally, the registered point cloud is evaluated to assess the accuracy of the registration process, often visually or using statistical metrics.

Think of it like assembling a jigsaw puzzle: each scan is a piece, and registration is the process of finding the right place for each piece to build the complete picture. Inaccurate registration can lead to significant errors in subsequent analyses.
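The closest-point iteration mentioned above can be sketched in a few lines. This is a deliberately simplified 2D, pure-Python illustration with brute-force neighbour search, not a production implementation; real pipelines work in 3D with spatial indexes and outlier rejection. All function names are hypothetical, and the loop only converges when the two scans already start roughly aligned.

```python
import math


def nearest(p, cloud):
    """Brute-force nearest neighbour of point p in cloud."""
    return min(cloud, key=lambda q: (q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2)


def fit_rigid_2d(src, dst):
    """Least-squares rotation angle and translation mapping paired src points onto dst."""
    n = len(src)
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(q[0] for q in dst) / n
    dy = sum(q[1] for q in dst) / n
    # Dot and cross sums of the centred coordinates give the optimal angle.
    dot = sum((p[0] - sx) * (q[0] - dx) + (p[1] - sy) * (q[1] - dy) for p, q in zip(src, dst))
    cross = sum((p[0] - sx) * (q[1] - dy) - (p[1] - sy) * (q[0] - dx) for p, q in zip(src, dst))
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation moves the rotated source centroid onto the target centroid.
    return theta, dx - (c * sx - s * sy), dy - (s * sx + c * sy)


def apply_transform(points, theta, tx, ty):
    """Rotate points by theta (radians) and then translate by (tx, ty)."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]


def icp_2d(source, target, iterations=20):
    """Align source to target by repeating closest-point matching and rigid fitting."""
    current = list(source)
    for _ in range(iterations):
        matches = [nearest(p, target) for p in current]
        theta, tx, ty = fit_rigid_2d(current, matches)
        current = apply_transform(current, theta, tx, ty)
    return current


# Toy example: an L-shaped target and a slightly rotated, shifted copy of it.
target = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0), (0.0, 1.0), (0.0, 2.0)]
source = apply_transform(target, 0.05, 0.1, -0.05)
aligned = icp_2d(source, target)
print(max(math.hypot(a[0] - t[0], a[1] - t[1]) for a, t in zip(aligned, target)))
```

If the initial misalignment is larger than the typical point spacing, the nearest-neighbour pairs become wrong and the loop can settle on a poor local minimum, which is why a coarse alignment step normally comes first.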

Q 3. What are the common file formats used for LiDAR data?

LiDAR data is commonly stored in several formats, each with its own strengths and weaknesses. Some of the most popular ones include:

- LAS (LASer) format: This open binary format, maintained by the ASPRS, is the industry standard for storing LiDAR point cloud data. It’s efficient and supports the metadata needed to manage the data. Each point record includes the point’s location, intensity, classification, and return number.

- LAZ (LASzip): This is a compressed version of the LAS format. It achieves significant file size reduction without compromising data quality, making it ideal for storage and transmission of large datasets.

- XYZ format: This simple ASCII-based format represents each point by its X, Y, and Z coordinates. While less feature-rich than LAS, its simplicity makes it suitable for certain applications and compatibility with various software.

- Shapefiles: While not native to LiDAR, they can be used to represent LiDAR data in GIS software by storing the XYZ coordinates as attributes of point features.

The choice of format depends on the specific needs of the project, considering factors like data size, processing capabilities, and integration with other GIS software.
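To illustrate how simple the XYZ format is compared with LAS, here is a minimal reader written with only the standard library. It is a sketch under assumptions: the function names are hypothetical, and it assumes one point per line, whitespace or comma separators, and comment lines starting with `#`. Real XYZ files vary (extra columns, header rows), so treat it as a starting point.

```python
import io


def read_xyz(stream):
    """Parse lines of 'x y z' (space or comma separated) into float tuples."""
    points = []
    for line in stream:
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comment lines
        fields = line.replace(",", " ").split()
        x, y, z = (float(value) for value in fields[:3])  # ignore extra columns
        points.append((x, y, z))
    return points


def bounding_box(points):
    """Return ((min_x, min_y, min_z), (max_x, max_y, max_z))."""
    xs, ys, zs = zip(*points)
    return (min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))


sample = io.StringIO("# x y z\n500000.0 4100000.0 12.5\n500001.5,4100002.0,13.1\n")
pts = read_xyz(sample)
print(len(pts), bounding_box(pts))
```

Note what is missing: there is no intensity, classification, or coordinate system information unless extra columns or a side file provide it, which is the main reason LAS/LAZ is preferred for production work.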

Q 4. Explain different LiDAR point classification methods (e.g., ground, vegetation, building).

LiDAR point classification is the process of assigning meaningful labels to individual points in a point cloud, grouping them based on their characteristics and features. This greatly enhances the usefulness of the data and allows for targeted analysis. Common classifications include:

- Ground Points: Points representing the bare earth surface. Crucial for creating Digital Terrain Models (DTMs).

- Vegetation Points: Points representing trees, shrubs, and other vegetation. Used for forestry applications, biodiversity assessments, and urban green space analysis.

- Building Points: Points representing buildings and structures. Essential for building modeling, urban planning, and 3D city modeling.

- Water Points: Points reflecting water bodies like rivers, lakes, and oceans. Used for hydrological modeling and water resource management.

- Low Vegetation Points: Points representing short vegetation like grass or low shrubs.

- High Vegetation Points: Points representing tall vegetation like trees.

Classification can be performed manually, using automated algorithms (e.g., based on height, intensity, or neighborhood properties), or a combination of both. Machine learning techniques are increasingly used for automated classification, offering higher accuracy and efficiency, especially for complex scenes.

For instance, ground points can be identified using algorithms that detect planar surfaces while vegetation points may be classified based on their height relative to the ground and density within the point cloud.
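A rule-based version of the height-relative-to-ground idea can be sketched as follows. The class codes are the standard ASPRS LAS values (2 = ground, 3 = low vegetation, 4 = medium vegetation, 5 = high vegetation), but the height thresholds and the function name are illustrative assumptions, not values taken from any standard.

```python
# ASPRS LAS standard classification codes
GROUND, LOW_VEGETATION, MEDIUM_VEGETATION, HIGH_VEGETATION = 2, 3, 4, 5


def classify_by_height(height_above_ground, ground_tol=0.15, low_max=0.5, medium_max=2.0):
    """Assign a class code from height above ground in metres.

    The thresholds are hypothetical defaults; tune them for the sensor and scene.
    """
    if height_above_ground <= ground_tol:
        return GROUND
    if height_above_ground <= low_max:
        return LOW_VEGETATION
    if height_above_ground <= medium_max:
        return MEDIUM_VEGETATION
    return HIGH_VEGETATION


heights = [0.05, 0.3, 1.0, 10.0]
print([classify_by_height(h) for h in heights])
```

Height alone cannot separate buildings from tall vegetation, so practical workflows add further cues such as planarity, return number, or intensity before assigning a building class.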

Q 5. How do you handle noise and outliers in LiDAR point clouds?

Noise and outliers significantly impact the accuracy and reliability of LiDAR data. These anomalies, resulting from various factors like sensor errors or reflections from unexpected sources, need careful handling. Several techniques are used:

- Statistical Filtering: Techniques like median filtering or standard deviation filtering remove points that deviate significantly from their neighbors. This is useful for smoothing out minor inconsistencies.

- Spatial Filtering: Methods like morphological filtering or neighborhood analysis remove isolated points or clusters that are spatially inconsistent with surrounding points. This is effective for removing outliers.

- Segmentation-Based Filtering: Identifying distinct regions or segments within the point cloud, and filtering out points that don’t belong to any meaningful segment. This is especially helpful in complex environments.

- Adaptive Filtering: Algorithms that adjust their filtering parameters based on the local density and characteristics of the point cloud. This improves the robustness of filtering in areas with varying point density.

The choice of filtering technique depends on the nature and extent of the noise and outliers, as well as the specific application. It’s often necessary to experiment with different approaches to find the optimal balance between noise reduction and data preservation.
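As one concrete instance of statistical filtering, the sketch below removes points whose mean distance to their nearest neighbours is unusually large compared with the rest of the cloud. It uses a brute-force O(n²) search for clarity, so it only suits small examples; the function name and the default parameters `k` and `std_ratio` are assumptions chosen for illustration.

```python
import math
import statistics


def statistical_outlier_removal(points, k=4, std_ratio=2.0):
    """Drop points whose mean distance to their k nearest neighbours is too large."""
    mean_dists = []
    for i, p in enumerate(points):
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        mean_dists.append(sum(dists[:k]) / k)
    # Points beyond mean + std_ratio * standard deviation are treated as outliers.
    threshold = statistics.mean(mean_dists) + std_ratio * statistics.pstdev(mean_dists)
    return [p for p, d in zip(points, mean_dists) if d <= threshold]


# A flat 5 x 5 grid of points plus one spurious return 50 m above it.
grid = [(float(x), float(y), 0.0) for x in range(5) for y in range(5)]
cleaned = statistical_outlier_removal(grid + [(2.0, 2.0, 50.0)])
print(len(grid) + 1, "->", len(cleaned))
```

A lower `std_ratio` removes more points, which helps with heavy noise but risks deleting thin real features such as power lines.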

Q 6. What are the challenges in classifying LiDAR data in complex urban environments?

Classifying LiDAR data in complex urban environments presents significant challenges due to the high density of features, the diversity of objects, and the presence of many similar features. Some specific challenges include:

- High Point Density: The sheer number of points in urban areas leads to computational challenges and requires efficient algorithms for processing.

- Mixed Classes: Distinguishing between closely spaced objects with similar heights, such as buildings and trees, or even building features like balconies and walls, poses difficulty.

- Occlusion and Shadowing: Buildings and other structures can obscure the view of ground points and lead to data gaps or misclassification.

- Noise and Outliers: The complexity of urban environments tends to lead to a higher incidence of noise and outliers, making filtering more challenging.

Addressing these challenges requires advanced classification techniques such as machine learning models trained on large datasets of urban LiDAR data, incorporating contextual information, and employing multi-sensor data fusion (e.g., combining LiDAR with imagery).

Q 7. Describe different filtering techniques used in LiDAR data processing.

Several filtering techniques are employed in LiDAR data processing to remove noise, outliers, and unwanted features. These enhance data quality and improve the efficiency of subsequent processing steps.

- Statistical Filters: These methods use statistical properties of the point cloud to identify and remove outliers. Examples include median filtering, which replaces each point with the median value of its neighbors, and standard deviation filtering, which removes points that fall outside a specified number of standard deviations from the mean.

- Spatial Filters: These filters operate on the spatial distribution of points. Examples include morphological filters, which use mathematical morphology operations to smooth or thin the point cloud, and nearest neighbor filters, which remove points based on their distance to neighboring points.

- Height-based Filters: These are particularly useful for separating ground points from vegetation. Examples include progressive TIN densification, which iteratively builds a TIN (Triangulated Irregular Network) to identify ground points, and threshold-based filtering, which removes points that exceed a certain height above a reference surface.

- Intensity-based Filters: These filters use the intensity values of the laser returns, often correlated to the reflectivity of the surface. Points with unusually high or low intensity values can be considered noise and removed.

The selection of appropriate filtering techniques depends on the specific characteristics of the LiDAR data, the types of noise present, and the goals of the processing. Often, a combination of different filters is employed to achieve optimal results.
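The threshold-based idea from the height-based filters can be sketched with a very crude reference surface: the lowest point in each grid cell. This is a simplified stand-in, not progressive TIN densification, and it assumes every cell contains at least one true ground return and that the terrain is nearly flat within a cell. The function name, cell size, and height threshold are illustrative assumptions.

```python
from collections import defaultdict


def filter_by_height_above_cell_min(points, cell_size=5.0, max_height=0.3):
    """Keep points within max_height (metres) of the lowest point in their grid cell."""

    def cell_of(p):
        return (int(p[0] // cell_size), int(p[1] // cell_size))

    # First pass: lowest elevation per cell serves as the reference surface.
    lowest = defaultdict(lambda: float("inf"))
    for p in points:
        key = cell_of(p)
        lowest[key] = min(lowest[key], p[2])

    # Second pass: keep only points close to that surface.
    return [p for p in points if p[2] - lowest[cell_of(p)] <= max_height]


points = [
    (1.0, 1.0, 100.0), (2.0, 2.0, 100.1), (3.0, 3.0, 112.0),  # cell (0, 0)
    (7.0, 1.0, 101.0), (8.0, 2.0, 101.2), (8.0, 3.0, 109.0),  # cell (1, 0)
]
print(filter_by_height_above_cell_min(points))
```

On sloped terrain a large cell makes the lowest point a poor reference, so uphill ground points get rejected; that weakness is what slope-adaptive and TIN-based methods address.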

Q 8. How do you assess the accuracy of LiDAR data?

Assessing LiDAR data accuracy involves a multi-faceted approach, focusing on both the raw data quality and the accuracy of derived products. We look at several key metrics.

Point Cloud Density: This refers to the number of points per unit area. Higher density generally leads to better accuracy, allowing for a more detailed representation of the terrain. Think of it like the resolution of an image – higher resolution means more detail.

Root Mean Square Error (RMSE): This is a common metric to quantify the difference between the LiDAR-derived elevations and reference elevations, often obtained through ground surveys or high-accuracy GPS. A lower RMSE indicates higher accuracy.

Accuracy Assessment with Check Points: We survey independent check points, which are points with precisely known coordinates that were not used as ground control points (GCPs) to adjust the data, and compare the LiDAR-derived coordinates to them. This allows for a direct assessment of positional accuracy.

Data Completeness: We examine the point cloud for gaps or areas with significantly lower point density. These gaps can affect the accuracy of derived products, like digital elevation models (DEMs). Data completeness is vital for reliable analysis.

For example, in a forestry application, we might compare tree heights derived from the LiDAR data with manually measured tree heights at several locations to assess the vertical accuracy. In a road survey project, we might use GCPs placed along the road to evaluate the horizontal accuracy.
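The RMSE metric described above is the square root of the mean squared difference between LiDAR and reference elevations. The sketch below computes it for made-up sample values (the elevations are illustrative, not real survey data) and also reports the NSSDA-style vertical accuracy at the 95% confidence level, 1.96 × RMSE, which assumes the errors are normally distributed.

```python
import math


def rmse(measured, reference):
    """Root mean square error between paired measurements."""
    if not measured or len(measured) != len(reference):
        raise ValueError("inputs must be non-empty and the same length")
    squared = [(m - r) ** 2 for m, r in zip(measured, reference)]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical elevations in metres at four check points.
lidar_z = [101.02, 98.47, 105.10, 99.95]
survey_z = [101.00, 98.50, 105.00, 100.00]

rmse_z = rmse(lidar_z, survey_z)
accuracy_95 = 1.96 * rmse_z  # valid only if errors are roughly normal
print(f"RMSEz = {rmse_z:.3f} m, 95% vertical accuracy = {accuracy_95:.3f} m")
```

Four points are far too few for a real assessment; the example only shows the arithmetic.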

Q 9. What are the different types of LiDAR systems (e.g., airborne, terrestrial, mobile)?

LiDAR systems are categorized based on their platform and application. Each type offers unique advantages and limitations.

Airborne LiDAR: Mounted on aircraft, these systems cover large areas efficiently. They are ideal for creating large-scale DEMs, mapping forests, and monitoring coastal changes. Think of it as taking a high-resolution aerial photograph but with 3D point data.

Terrestrial LiDAR (TLS): Ground-based systems offer high accuracy and detail over smaller areas. They are commonly used for precise surveying of buildings, bridges, and other infrastructure, providing incredibly detailed scans.

Mobile LiDAR: These systems are integrated into vehicles, capturing data while moving. They’re efficient for mapping roads, urban areas, and linear features. Imagine a street view car, but instead of pictures, it’s collecting millions of 3D points.

The choice of system depends on the project’s scale, required accuracy, and budget. Large-scale mapping projects benefit from airborne systems, while detailed site surveys often require terrestrial or mobile systems.

Q 10. Explain the concept of ground truthing in LiDAR data analysis.

Ground truthing is the process of validating LiDAR data by comparing it to independently collected field measurements. It’s essentially a reality check.

This involves collecting data on the ground at specific locations within the LiDAR survey area. These measurements might include GPS coordinates, elevation measurements with a total station, or even manual measurements of tree heights or building dimensions. These ground measurements then serve as reference points to assess the accuracy and reliability of the LiDAR-derived information. For example, we might compare the LiDAR-derived elevation of a specific point to the elevation measured with a highly accurate GPS device.

The accuracy of ground truthing depends on the methods and equipment used. High-precision GPS and total stations provide reliable reference data. The number of ground truth points needed depends on the project’s requirements and the variability of the terrain or features being mapped.

Q 11. How do you handle data gaps or missing data in LiDAR point clouds?

Data gaps or missing data in LiDAR point clouds are a common challenge. Several techniques can be employed to handle these issues.

Interpolation: This involves estimating the missing data based on the values of neighboring points. Several interpolation methods exist, such as kriging and inverse distance weighting. The choice depends on the characteristics of the data and the desired level of smoothing.

Inpainting: More sophisticated techniques, often employing machine learning, can be used to ‘fill in’ missing data more intelligently. This is particularly useful in complex terrains.

Data Fusion: Combining the LiDAR data with other data sources, like aerial imagery or other datasets, can help to fill gaps. This approach leverages complementary information.

Accepting Limitations: Sometimes, the missing data is simply too extensive to be effectively filled, and a realistic approach is to acknowledge its presence and adjust analysis accordingly.

The best approach depends on the extent and nature of the data gaps. Minor gaps can often be handled effectively with interpolation, while significant gaps may require more advanced techniques or accepting limitations in analysis.
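Inverse distance weighting, one of the interpolation methods mentioned above, estimates a missing elevation as a weighted average of nearby samples, with weights proportional to 1 / distance^power. A minimal sketch, assuming a small list of (x, y, z) samples and a hypothetical function name:

```python
import math


def idw(x, y, samples, power=2.0):
    """Inverse distance weighted estimate of z at (x, y) from (x, y, z) samples."""
    numerator = 0.0
    denominator = 0.0
    for sx, sy, sz in samples:
        d = math.hypot(x - sx, y - sy)
        if d == 0.0:
            return sz  # query coincides with a sample, so return it directly
        weight = 1.0 / d ** power
        numerator += weight * sz
        denominator += weight
    return numerator / denominator


samples = [(0.0, 0.0, 10.0), (2.0, 0.0, 20.0)]
print(idw(1.0, 0.0, samples))  # midway between two samples
```

In practice the samples would be limited to a search radius or the k nearest neighbours, since distant points add cost without improving the estimate.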

Q 12. Describe your experience with various LiDAR processing software (e.g., ArcGIS, Global Mapper, LAStools).

I have extensive experience with several LiDAR processing software packages. My expertise includes:

ArcGIS: I’m proficient in using ArcGIS Pro for LiDAR data visualization, analysis, and classification. I’ve used tools such as the Spatial Analyst extension for creating DEMs and slope maps. I’ve also worked with the 3D Analyst extension for visualization and analysis of the point cloud data.

Global Mapper: I’ve utilized Global Mapper for its efficient processing of large LiDAR datasets, performing tasks like data filtering, noise removal, and point cloud classification. Its user-friendly interface streamlines many common tasks.

LAStools: For more advanced processing and customized solutions, I utilize LAStools. Its command-line interface provides greater control over the processing workflow and allows for development of tailored scripts to address specific challenges. For instance, I’ve written scripts to automate the classification of points based on specific criteria.

My experience encompasses all stages of LiDAR processing, from initial data import and preprocessing to the generation of various derived products and reporting.

Q 13. What are the applications of LiDAR data in various fields (e.g., forestry, urban planning, infrastructure)?

LiDAR data finds applications in a vast array of fields. Here are some prominent examples:

Forestry: LiDAR is invaluable for estimating forest biomass, measuring tree heights and diameters, and mapping forest canopy structure. This information is crucial for sustainable forest management and carbon accounting.

Urban Planning: LiDAR data aids in creating highly accurate 3D models of cities, supporting urban planning and infrastructure development. It allows for the assessment of building heights, road networks, and land use patterns.

Infrastructure: LiDAR is crucial for inspecting bridges, roads, and other infrastructure, identifying damage or deterioration. It enables the creation of accurate as-built models and supports maintenance planning.

Geology and Mining: LiDAR helps in creating detailed terrain models and identifying geological features. This is vital for mining operations and environmental impact assessments.

Archaeology: LiDAR can penetrate dense vegetation, revealing hidden archaeological features such as ancient settlements or earthworks.

The versatility of LiDAR stems from its ability to provide highly accurate 3D spatial data, enabling precise measurements and detailed analysis across diverse applications.

Q 14. Explain your understanding of different coordinate systems used in LiDAR data.

Understanding coordinate systems is fundamental to working with LiDAR data. Inaccurate or inconsistent coordinate systems can lead to significant errors in analysis and modeling.

LiDAR positions are derived from GNSS/IMU measurements referenced to a geodetic datum such as WGS 84, and can be expressed in a geographic coordinate system (GCS) as latitude and longitude. This is a global reference system based on an ellipsoidal model of the Earth. However, for local-scale applications, it’s often more practical to use a projected coordinate system (PCS), which maps the ellipsoid onto a flat surface using a specific map projection (e.g., UTM, State Plane) so that coordinates are in linear units such as meters. The choice of projection depends on the area being mapped and the acceptable level of distortion.

Furthermore, LiDAR elevations may be referenced to different vertical datums. Orthometric heights tied to a datum such as the North American Vertical Datum of 1988 (NAVD88) and ellipsoidal heights measured above the reference ellipsoid are both common. The vertical datum defines the reference surface for elevations, and converting between ellipsoidal and orthometric heights requires a geoid model. Mismatches in vertical datums can lead to significant elevation errors.

It’s essential to carefully consider and document the coordinate system and vertical datum used throughout the entire LiDAR data processing workflow. Transformations between different coordinate systems and datums are often necessary to ensure data consistency and accurate analysis.
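When choosing a projected coordinate system, a common first step is to find the UTM zone for the project area. The sketch below computes the standard 6-degree zone number from longitude and the matching EPSG code for WGS 84 / UTM (326xx in the northern hemisphere, 327xx in the southern). The function names are hypothetical, and the special zone exceptions around Norway and Svalbard are deliberately ignored.

```python
import math


def utm_zone(lon_deg):
    """UTM zone number (1-60) for a longitude in degrees, ignoring special cases."""
    return int(math.floor((lon_deg + 180.0) / 6.0)) % 60 + 1


def utm_epsg_wgs84(lat_deg, lon_deg):
    """EPSG code of the WGS 84 / UTM zone containing the given position."""
    base = 32600 if lat_deg >= 0 else 32700  # north vs south hemisphere
    return base + utm_zone(lon_deg)


print(utm_epsg_wgs84(37.77, -122.42))   # San Francisco area
print(utm_epsg_wgs84(-33.87, 151.21))   # Sydney area
```

The resulting code identifies the target system only; the actual coordinate transformation should be done with a dedicated library such as PROJ.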

Q 15. How do you visualize and analyze LiDAR point clouds?

Visualizing and analyzing LiDAR point clouds involves several key steps and techniques. Think of a LiDAR point cloud as a massive, 3D scatter plot representing the surface of the earth. We need tools to make sense of this data.

Firstly, we use specialized software like CloudCompare, LAStools, or ArcGIS Pro. These platforms allow us to load the point cloud and apply various visualization methods. We can adjust point size, color based on intensity or classification, and create different viewpoints to explore the data.

For analysis, we often employ techniques like:

- Filtering: Removing noise points (e.g., from vegetation or outliers) using statistical filters or algorithms that identify points outside a certain range.

- Segmentation: Grouping points into meaningful segments based on characteristics like elevation, intensity, or proximity. This helps separate ground points from buildings, trees, etc.

- Classification: Assigning labels to points (ground, building, vegetation, etc.) using automated or manual methods. This is crucial for extracting information and creating derivative products.

- Cross-sectional analysis: Generating profiles or sections through the point cloud to reveal elevation changes and features.

For example, in a recent project mapping a historical site, we used color-coding based on intensity to highlight subtle variations in the ground surface, revealing potential buried features that were later confirmed through excavation.
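Cross-sectional analysis can be sketched as selecting the points that lie within a narrow corridor around a line and reporting each one as (distance along the line, elevation). The function name and the corridor half-width are illustrative assumptions; desktop tools do the same thing interactively.

```python
import math


def profile_along_line(points, start, end, half_width=1.0):
    """Return sorted (distance_along_line, z) for points near the segment start-end."""
    sx, sy = start
    ex, ey = end
    length = math.hypot(ex - sx, ey - sy)
    ux, uy = (ex - sx) / length, (ey - sy) / length  # unit vector along the line
    profile = []
    for x, y, z in points:
        dx, dy = x - sx, y - sy
        along = dx * ux + dy * uy          # projection onto the line
        across = abs(dx * uy - dy * ux)    # perpendicular offset from the line
        if 0.0 <= along <= length and across <= half_width:
            profile.append((along, z))
    return sorted(profile)


points = [(5.0, 0.5, 10.0), (2.0, -0.2, 8.0), (5.0, 3.0, 99.0), (12.0, 0.0, 7.0)]
print(profile_along_line(points, (0.0, 0.0), (10.0, 0.0)))
```

Plotting the returned pairs as a scatter of distance against elevation gives the familiar side-on profile view.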

Q 16. Describe your experience with automated LiDAR classification techniques.

My experience with automated LiDAR classification techniques is extensive. I’ve worked with various algorithms, including:

- Ground classification algorithms: Such as progressive morphological filtering (PMF) and cloth simulation filtering (CSF), which efficiently separate ground points from non-ground points. PMF is known for its speed, while CSF is excellent at handling complex terrain.

- Object-based classification: Methods that group points into segments based on characteristics like height, intensity and then classify those segments. This is particularly useful for urban environments where features are distinct.

- Machine learning approaches: Algorithms like Random Forest, Support Vector Machines (SVMs), and deep learning networks have shown remarkable results. These require training datasets with labeled point clouds and can provide high accuracy when properly trained.

In a project involving urban tree inventory, we used a Random Forest classifier trained on manually classified data. The classifier achieved over 90% accuracy in identifying individual trees, significantly reducing the time and effort required for manual inspection. We leveraged the intensity of the returned signal to differentiate tree canopies from buildings.

    # Example of a simple classification workflow (pseudo-code):
    # Load LiDAR data
    # Apply ground classification algorithm (e.g., PMF)
    # Segment non-ground points
    # Train a machine learning classifier (e.g., Random Forest)
    # Classify remaining points

Q 17. How do you handle large LiDAR datasets efficiently?

Handling large LiDAR datasets efficiently is paramount. The sheer volume of data can overwhelm even powerful computers. My strategies include:

- Data partitioning: Breaking down the large dataset into smaller, manageable tiles or blocks. This allows parallel processing and reduces memory requirements.

- Progressive processing: Processing only the necessary parts of the dataset, as opposed to loading the entire dataset into memory. This is particularly important for tasks that don’t require the whole dataset at once (e.g., creating a DTM of a specific area).

- Data compression: Storing data in the compressed LAZ format reduces storage space and can improve I/O throughput when disk or network bandwidth is the bottleneck. LAZ compression is lossless, so data integrity is maintained.

- Cloud computing: Leveraging cloud platforms (like AWS or Google Cloud) with distributed computing capabilities allows for efficient processing of massive datasets.

- Optimized algorithms: Employing algorithms designed for efficiency, such as those using spatial indexing structures (e.g., k-d trees).

For instance, in a national-scale project, we utilized a cloud-based processing pipeline, partitioning the data into smaller regions and processing them in parallel. This dramatically reduced processing time, from several weeks to a few days.
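The data partitioning strategy can be sketched as binning points into square tiles keyed by their grid index. The function name and tile size are assumptions; in a real pipeline the input would be streamed from disk rather than held in a list, and each tile would typically be written to its own file with a small buffer of overlap.

```python
from collections import defaultdict


def tile_points(points, tile_size=500.0):
    """Group (x, y, z) points into square tiles keyed by (column, row) index."""
    tiles = defaultdict(list)
    for p in points:
        key = (int(p[0] // tile_size), int(p[1] // tile_size))
        tiles[key].append(p)
    return dict(tiles)


points = [(10.0, 10.0, 1.0), (499.0, 499.0, 2.0), (500.0, 0.0, 3.0), (-1.0, 0.0, 4.0)]
tiles = tile_points(points)
for key in sorted(tiles):
    print(key, len(tiles[key]))
```

Because tiles are independent, each one can be handed to a separate worker process or cloud job, which is what makes the parallel speed-ups described above possible.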

Q 18. What are the advantages and disadvantages of different classification algorithms?

Different LiDAR classification algorithms offer various advantages and disadvantages. The choice depends on the specific application and data characteristics.

| Algorithm | Advantages | Disadvantages |
|---|---|---|
| PMF | Fast, efficient for relatively flat terrain | Can struggle with complex terrain and dense vegetation |
| CSF | Handles complex terrain well | Computationally more expensive than PMF |
| Random Forest | High accuracy, handles diverse data types | Requires training data, can be computationally intensive |
| SVM | Effective for high-dimensional data | Can be sensitive to parameter tuning |

For example, PMF is a great choice for quickly classifying ground points in open areas, while CSF is better suited for mountainous regions with steep slopes. Machine learning approaches offer higher accuracy but demand more computational resources and require labeled training data. The selection is a balance between accuracy, processing speed, and available resources.

Q 19. Explain your experience with creating Digital Terrain Models (DTMs) and Digital Surface Models (DSMs).

Creating Digital Terrain Models (DTMs) and Digital Surface Models (DSMs) is a core part of LiDAR data processing. A DTM represents the bare earth surface, excluding buildings and vegetation, while a DSM represents the entire surface, including all features.

The process typically involves:

- Ground point classification: Identifying and isolating ground points from the point cloud using algorithms like PMF or CSF.

- Interpolation: Creating a continuous surface from the classified ground points. Common interpolation methods include TIN (Triangulated Irregular Network) and grid-based methods.

- DSM generation: Creating a surface from all points in the point cloud, without removing any features.

- Noise removal: Applying filtering techniques to remove outliers and artifacts.

In one project, we generated a highly accurate DTM for flood modeling. A detailed DTM is essential to simulate water flow and estimate flood risk accurately. We used TIN interpolation for its ability to accurately represent complex terrain features.
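The relationship between the two models can be illustrated with a very simple gridding scheme: the DSM takes the highest point in each cell, the DTM takes the lowest ground-classified point, and their difference (often called a normalized DSM) gives object height above ground. This binning approach is a simplification that stands in for TIN or other interpolation, and the function names are hypothetical. Class code 2 is the ASPRS ground class.

```python
GROUND = 2  # ASPRS LAS class code for ground


def rasterize(points, cell_size, reducer):
    """Reduce z values per grid cell with reducer (for example max or min)."""
    cells = {}
    for x, y, z in points:
        key = (int(x // cell_size), int(y // cell_size))
        cells[key] = reducer(cells[key], z) if key in cells else z
    return cells


def dsm_dtm_ndsm(classified_points, cell_size=1.0):
    """Build sparse DSM, DTM and height-above-ground grids from (x, y, z, class) tuples."""
    all_xyz = [(x, y, z) for x, y, z, _ in classified_points]
    ground_xyz = [(x, y, z) for x, y, z, c in classified_points if c == GROUND]
    dsm = rasterize(all_xyz, cell_size, max)     # top surface, all returns
    dtm = rasterize(ground_xyz, cell_size, min)  # bare earth, ground returns only
    ndsm = {k: dsm[k] - dtm[k] for k in dsm if k in dtm}
    return dsm, dtm, ndsm


pts = [(0.2, 0.2, 100.0, 2), (0.6, 0.4, 112.5, 5), (1.5, 0.5, 101.0, 2)]
dsm, dtm, ndsm = dsm_dtm_ndsm(pts)
print(ndsm)
```

Cells with no ground return are simply missing from the DTM here; filling them is where the interpolation step described above comes in.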

Q 20. What are the factors affecting the accuracy of LiDAR data acquisition?

Several factors influence the accuracy of LiDAR data acquisition. These can be broadly categorized into:

- Scanner parameters: Factors like pulse density, scan angle, and the scanner’s inherent accuracy directly affect the quality of the collected data. Higher pulse density typically leads to greater detail and accuracy, but increases data volume.

- Environmental conditions: Weather conditions (rain, fog, snow) can significantly impact data quality by attenuating or scattering laser pulses. Similarly, atmospheric conditions affect signal propagation.

- Platform stability: The stability of the platform (e.g., aircraft or UAV) carrying the LiDAR sensor influences the accuracy of positional data. Motion compensation techniques are used to minimize the effects of platform movement.

- Ground features: Highly reflective or absorptive surfaces can affect the return signal strength, potentially leading to data gaps or inaccuracies. Dense vegetation can also obscure the ground surface.

For example, low pulse density can lead to an incomplete representation of the terrain, especially in areas with dense vegetation. Accurate motion compensation is crucial to ensure precise geolocation of LiDAR points.
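How acquisition parameters drive pulse density can be estimated with a first-order calculation: the swath width is 2 × altitude × tan(field of view / 2), and the average density is the pulse rate divided by the area swept per second (ground speed × swath width). The sketch assumes flat terrain, a single pass with no overlap, and a scan centred on nadir; the function names and sample values are illustrative only.

```python
import math


def swath_width(altitude_m, fov_deg):
    """Ground swath width in metres for a nadir-centred scan over flat terrain."""
    return 2.0 * altitude_m * math.tan(math.radians(fov_deg) / 2.0)


def pulse_density(pulse_rate_hz, speed_m_s, altitude_m, fov_deg):
    """Approximate average pulses per square metre for a single flight line."""
    return pulse_rate_hz / (speed_m_s * swath_width(altitude_m, fov_deg))


# Hypothetical mission: 200 kHz pulse rate, 60 m/s, 1000 m above ground, 40 degree FOV.
density = pulse_density(200_000, 60.0, 1000.0, 40.0)
print(f"{swath_width(1000.0, 40.0):.0f} m swath, {density:.1f} pulses per square metre")
```

The formula makes the trade-offs explicit: flying lower or slower, or narrowing the field of view, raises density at the cost of covering less ground per hour.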

Q 21. Describe your experience with quality control and quality assurance procedures for LiDAR data.

Quality control (QC) and quality assurance (QA) are crucial for ensuring the reliability and usability of LiDAR data. My experience includes:

- Data validation: Checking for data completeness, consistency, and accuracy using statistical analysis and visual inspection.

- Accuracy assessment: Comparing LiDAR-derived products (e.g., DTMs) with reference data (e.g., ground control points) to quantify accuracy.

- Error detection and correction: Identifying and correcting errors such as outliers, spikes, and gaps in the data. This might involve employing manual editing, filtering techniques, or interpolation methods.

- Metadata review: Checking the metadata associated with the LiDAR data to ensure it’s complete and accurate. This includes information about the sensor, acquisition parameters, and processing steps.

- Documentation: Maintaining detailed records of all QC/QA procedures, including results and any corrective actions.

In a recent project, we used a combination of statistical analysis and visual inspection to identify and correct several areas of data loss caused by dense tree cover. We produced a detailed report documenting all QC/QA procedures, including error statistics and accuracy assessments.

Q 22. How do you address the challenges of dealing with varying LiDAR point densities?

Varying LiDAR point densities present a significant challenge in data processing. High-density data offers greater detail but requires more processing power and storage, while low-density data lacks detail but is easier to manage. Addressing this involves a multi-pronged approach.

- Data Resampling: Dense areas can be thinned (for example, by keeping one point per grid or voxel cell), or the data can be interpolated onto a regular grid using nearest-neighbor interpolation, bilinear interpolation, or more sophisticated methods like kriging. Either approach yields a more uniform density. The choice of method depends on the specific application and acceptable level of interpolation error. For example, kriging accounts for spatial autocorrelation but is computationally more expensive.

- Adaptive Filtering: Instead of uniform resampling, adaptive filtering methods can be employed. These methods analyze local point density and adjust processing parameters accordingly. This allows for preserving detail in high-density areas while mitigating noise in low-density areas.

- Region-Specific Processing: Different processing workflows can be applied to different regions based on point density. High-density regions might undergo more detailed analysis (e.g., classification into fine-grained classes), while lower-density regions might be processed with simpler methods.

- Data Compression and Indexing: Lossless compression, or lossy thinning where some loss of detail is acceptable, reduces the storage size of high-density datasets. Separately, octrees and other spatial indexing structures can significantly improve processing speed and efficiency even with large datasets.

For example, in a project mapping a complex urban area with interspersed areas of sparse vegetation, I would use adaptive filtering to preserve fine details of buildings in the high-density urban areas, while applying a simpler classification method in the sparser vegetation areas. This approach optimizes processing time and resources while maintaining overall accuracy.
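One simple way to even out point density is grid thinning: keep a single point per 3D cell, so dense areas are reduced while sparse areas are left untouched. The sketch below keeps the first point encountered in each cell; the function name and cell size are assumptions, and other policies (lowest point, point nearest the cell centre) are equally common.

```python
def thin_to_grid(points, cell_size=1.0):
    """Keep the first point seen in each cubic cell of side cell_size."""
    kept = {}
    for p in points:
        key = tuple(int(c // cell_size) for c in p[:3])
        kept.setdefault(key, p)  # later points in an occupied cell are dropped
    return list(kept.values())


points = [(0.1, 0.1, 0.1), (0.2, 0.3, 0.4), (0.9, 0.9, 0.9), (5.5, 5.5, 0.2)]
print(thin_to_grid(points))
```

Thinning can only remove points, so it evens out density by lowering the dense areas to the chosen spacing; it cannot add detail where the survey was sparse.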

Q 23. How do you integrate LiDAR data with other geospatial datasets (e.g., imagery, CAD data)?

Integrating LiDAR data with other geospatial datasets is crucial for creating comprehensive and accurate geospatial models. This typically involves georeferencing and coordinate system transformations to ensure alignment. Several methods facilitate this integration:

- Georeferencing: Both LiDAR and other datasets (imagery, CAD) must share a common coordinate system (e.g., UTM, WGS84). This ensures that features align spatially. Georeferencing software and tools are used for this purpose.

- Data Transformation: If datasets use different coordinate systems or projections, appropriate transformations (e.g., using PROJ) are necessary to bring them into alignment.

- Spatial Join: Spatial join operations in GIS software allow merging attributes from different datasets based on spatial relationships (e.g., overlaying LiDAR-derived elevation with land cover classification from imagery).

- 3D Modeling Software: Software packages like ArcGIS Pro, QGIS, or specialized LiDAR processing software (e.g., TerraScan, LAStools) offer tools for visualizing and integrating multiple datasets in 3D. This enables the creation of integrated 3D models combining elevation, imagery, and CAD data.

For instance, in a transportation project, I integrated LiDAR-derived elevation data with aerial imagery and CAD drawings of road networks to create a 3D model accurately representing the terrain and infrastructure. This model allowed for detailed analysis of potential flooding risks and improved road design.
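
As a rough illustration of the spatial join step, the sketch below attaches a raster value (such as a land cover class derived from imagery) to each LiDAR point. It assumes both datasets have already been brought into the same projected coordinate system and that the raster is north-up with square cells. `sample_raster` and its parameters are hypothetical names chosen for this example.

```python
def sample_raster(points, raster, origin_x, origin_y, cell_size, nodata=None):
    """Attach the value of a north-up raster to each (x, y, z) point.

    origin_x, origin_y is the top-left corner of the raster, and rows run
    southward from it. Points outside the raster receive `nodata`.
    """
    n_rows, n_cols = len(raster), len(raster[0])
    joined = []
    for x, y, z in points:
        col = int((x - origin_x) // cell_size)
        row = int((origin_y - y) // cell_size)
        inside = 0 <= row < n_rows and 0 <= col < n_cols
        value = raster[row][col] if inside else nodata
        joined.append((x, y, z, value))
    return joined


# A 2 x 2 land cover raster with 10 m cells whose top-left corner is (100, 200).
land_cover = [["building", "road"],
              ["grass", "water"]]
lidar_points = [(105.0, 195.0, 12.0), (115.0, 185.0, 3.0), (95.0, 195.0, 0.0)]
labelled = sample_raster(lidar_points, land_cover, 100.0, 200.0, 10.0)
```

In practice a GIS package or a raster library handles the georeferencing details, but the underlying operation is this index lookup from world coordinates to a row and column.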

Q 24. Explain your knowledge of different error sources in LiDAR data.

LiDAR data is susceptible to several kinds of error, and understanding them is crucial for accurate analysis. These errors can be categorized as:

- Systematic Errors: These are consistent, repeatable errors that bias the dataset and can usually be reduced through calibration. Examples include:

  - Sensor misalignment: Incorrect alignment of internal components of the sensor, or between the scanner and the IMU (boresight error), leads to consistent offsets in point positions.

  - Platform instability: Uncompensated movement of the aircraft or ground vehicle during data acquisition, which shows up as errors in the GNSS/IMU trajectory, causes errors in point location.

  - Atmospheric effects: Refraction and scattering of the laser beam by atmospheric conditions (temperature, humidity, pressure) can affect the accuracy of range measurements.

- Random Errors: These are unpredictable variations affecting individual points. Examples include:

  - Noise: Random fluctuations in the signal caused by electronic noise or background reflections.

  - Pulse return misinterpretation: The sensor might misinterpret multiple returns from a single pulse, leading to incorrect point locations.

  - Occlusion: Obstructions like dense vegetation can prevent the laser pulse from reaching the ground. Strictly speaking this produces gaps in the data rather than measurement error, but it has to be handled alongside the error sources above.

Addressing these errors involves careful sensor calibration, data filtering techniques (e.g., outlier removal), and error modeling. For example, in a forestry application, we might use a combination of filtering algorithms to remove noise and handle occlusion caused by dense canopy while accounting for atmospheric effects using atmospheric correction models.
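
One common form of the outlier removal mentioned above is a statistical filter based on nearest-neighbour distances. The sketch below is a brute-force version written for clarity: `statistical_outlier_filter`, `k`, and `std_ratio` are illustrative names and defaults, and a real pipeline would use a k-d tree instead of comparing every pair of points.

```python
from math import dist
from statistics import mean, pstdev


def statistical_outlier_filter(points, k=3, std_ratio=1.0):
    """Drop points whose mean distance to their k nearest neighbours is unusually large.

    A point is kept when its mean k-nearest-neighbour distance is at most
    (global mean + std_ratio * global standard deviation) of that statistic.
    """
    mean_knn = []
    for i, p in enumerate(points):
        distances = sorted(dist(p, q) for j, q in enumerate(points) if j != i)
        mean_knn.append(mean(distances[:k]))
    threshold = mean(mean_knn) + std_ratio * pstdev(mean_knn)
    return [p for p, m in zip(points, mean_knn) if m <= threshold]


# A flat 3 x 3 patch of ground points plus one spurious return 50 m above it.
ground = [(float(x), float(y), 0.0) for x in range(3) for y in range(3)]
spike = (1.0, 1.0, 50.0)
cleaned = statistical_outlier_filter(ground + [spike])
```

This kind of filter targets isolated random noise (for example, returns from birds or atmospheric particles). It does not correct systematic errors such as boresight misalignment, which need calibration or strip adjustment instead.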

Q 25. What are your preferred methods for generating elevation models from LiDAR data?

Generating elevation models from LiDAR data is a fundamental task. Several methods exist, each with its strengths and weaknesses:

- Triangulated Irregular Networks (TINs): TINs connect measured points to create a surface consisting of triangles. They are good at preserving sharp features but can be computationally expensive for large datasets.

- Gridded Digital Elevation Models (DEMs): DEMs represent elevation as a regular grid of values. Interpolation methods like inverse distance weighting (IDW), kriging, or spline interpolation are used to assign elevation values to grid cells. Grid DEMs are easier to handle and visualize but may smooth out finer details.

- Multi-resolution DEMs: Creating multiple DEMs with varying resolutions allows for efficient management and analysis. High-resolution DEMs can be used for detailed analysis while lower-resolution DEMs are suitable for regional-scale studies.

The choice of method depends on the application. For a high-precision survey, TINs might be preferred, while for broader applications like hydrological modeling, a gridded DEM may be more suitable. I often use a combination of techniques. For example, I might generate a high-resolution TIN for detailed analysis of a specific area and then create a lower-resolution DEM for regional analysis.
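
To show what the gridded approach involves, here is a minimal sketch of inverse distance weighting (IDW) from ground points to a regular grid. The function `idw_dem` and its parameters are hypothetical. It assumes the points have already been classified as ground, and it evaluates the surface at cell centres with row 0 at the southern edge of the grid.

```python
def idw_dem(ground_points, x_min, y_min, n_cols, n_rows, cell_size,
            power=2.0, radius=None):
    """Interpolate (x, y, z) ground points onto a grid with inverse distance weighting.

    Returns a list of rows; row 0 starts at y_min. Cells with no point within
    `radius` (when a radius is given) are set to None.
    """
    dem = []
    for r in range(n_rows):
        cy = y_min + (r + 0.5) * cell_size
        row = []
        for c in range(n_cols):
            cx = x_min + (c + 0.5) * cell_size
            weighted_sum = weight_total = 0.0
            exact = None
            for x, y, z in ground_points:
                d2 = (x - cx) ** 2 + (y - cy) ** 2
                if d2 == 0.0:
                    exact = z  # a point sits exactly on the cell centre
                    break
                if radius is not None and d2 > radius * radius:
                    continue
                w = 1.0 / d2 ** (power / 2.0)  # equals 1 / distance**power
                weighted_sum += w * z
                weight_total += w
            if exact is not None:
                row.append(exact)
            elif weight_total > 0.0:
                row.append(weighted_sum / weight_total)
            else:
                row.append(None)
        dem.append(row)
    return dem


# Two ground points 1 m apart, gridded into a single cell centred between them.
points = [(0.5, 0.5, 10.0), (1.5, 0.5, 20.0)]
dem = idw_dem(points, x_min=0.5, y_min=0.0, n_cols=1, n_rows=1, cell_size=1.0)
```

A higher `power` makes the surface follow nearby points more closely, while a search `radius` prevents distant points from filling data gaps with misleading values.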

Q 26. Describe your experience with using LiDAR data for change detection analysis.

LiDAR data is exceptionally well-suited for change detection analysis, as it provides highly accurate 3D information. The process typically involves:

- Data Acquisition: Obtaining LiDAR datasets from two different time periods covering the same area.

- Pre-processing: Georeferencing, alignment, and cleaning both datasets to ensure consistency.

- Point Cloud Comparison: Various techniques exist to compare the point clouds from different time periods. These include:

  - Difference Analysis: Computing the difference in elevation or other attributes between the two datasets. Because individual points rarely coincide between surveys, this is usually done by differencing DEMs derived from each epoch or by measuring cloud-to-cloud distances.

  - Classification-Based Change Detection: Classifying changes using features extracted from point clouds (e.g., identifying areas of vegetation loss or building construction).

- Visualization and Analysis: Visualizing the changes using GIS software and extracting quantitative information about the extent and nature of changes.

In a coastal erosion study, I used LiDAR data from two time points to precisely map changes in shoreline position. The difference in elevation values identified areas of significant erosion, providing crucial data for coastal management. Combining LiDAR data with other datasets, such as aerial imagery and historical maps, enhances the accuracy and context of the change detection analysis.
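
The elevation differencing used in a study like this can be sketched as a DEM of Difference. The example below assumes two co-registered grids of identical shape and cell size, and applies a minimum level of detection so that differences smaller than the expected vertical uncertainty are not reported as change. `dem_of_difference` and `level_of_detection` are illustrative names, not part of any specific package.

```python
def dem_of_difference(dem_t1, dem_t2, cell_size, level_of_detection=0.0):
    """Subtract two co-registered DEM grids and summarise change above a threshold.

    Returns (difference grid, eroded volume, deposited volume). Cells that are
    None in either epoch stay None; differences below the level of detection
    are set to 0.0.
    """
    cell_area = cell_size * cell_size
    erosion = deposition = 0.0
    diff = []
    for row1, row2 in zip(dem_t1, dem_t2):
        out = []
        for z1, z2 in zip(row1, row2):
            if z1 is None or z2 is None:
                out.append(None)
                continue
            dz = z2 - z1
            if abs(dz) < level_of_detection:
                dz = 0.0
            if dz < 0.0:
                erosion += -dz * cell_area
            else:
                deposition += dz * cell_area
            out.append(dz)
        diff.append(out)
    return diff, erosion, deposition


# Two epochs on a 2 x 2 grid with 2 m cells and a 0.1 m level of detection.
before = [[10.0, 10.0], [10.0, None]]
after = [[9.0, 10.05], [10.5, 10.0]]
change, eroded_m3, deposited_m3 = dem_of_difference(before, after, 2.0, 0.1)
```

Choosing the level of detection is a judgment call that depends on the vertical accuracy of both surveys; setting it too low reports noise as change, and setting it too high hides real but subtle change.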

Q 27. How familiar are you with the concept of intensity values in LiDAR data and their applications?

Intensity values in LiDAR data represent the strength of the laser return signal. They are not directly related to elevation but carry valuable information about the surface properties of the target. Because raw intensity also depends on range, incidence angle, and sensor settings, it is usually normalized before being compared across flight lines. Applications include:

- Material Classification: Different materials reflect laser pulses with different intensities. Analyzing intensity patterns can help differentiate between various surface types (e.g., vegetation, bare earth, buildings). In discrete-return data, hard continuous surfaces such as roofs often show higher per-return intensity than vegetation, although the exact relationship depends on the laser wavelength and the reflectance of the material.

- Vegetation Analysis: Intensity values can be used to estimate canopy cover, vegetation density, and biomass. For instance, in a dense canopy each return tends to carry lower intensity because the pulse energy is divided among several partial returns.

- Feature Extraction: Intensity data can enhance feature extraction from LiDAR point clouds. For example, identifying road networks or buildings by analyzing intensity variations.

In a project mapping urban areas, I used intensity values to differentiate between buildings and vegetation. Buildings generally exhibit higher intensity returns, facilitating accurate building detection and delineation. This improved the accuracy of urban land cover classification significantly.
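
Before intensity is used this way, it helps to remove the effect of range so that points from different flight lines are comparable. The sketch below applies a simple range-squared correction to a reference range. This is a simplification that assumes extended targets and ignores incidence angle and atmospheric attenuation; the function name and the 1000 m reference range are arbitrary choices for illustration.

```python
def normalize_intensity(intensity, range_m, reference_range_m=1000.0):
    """Scale raw intensity to what it would be at a common reference range.

    Uses a range-squared model: received power from an extended target falls
    off with the square of the sensor-to-target distance.
    """
    return intensity * (range_m / reference_range_m) ** 2


# The same raw value recorded from 2000 m and from 500 m.
far_return = normalize_intensity(100.0, 2000.0)
near_return = normalize_intensity(100.0, 500.0)
```

After normalization, a return recorded from farther away is scaled up and one recorded from closer is scaled down, so a threshold or classifier trained on one flight line is more likely to transfer to another.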

Q 28. Explain your understanding of the impact of different pulse waveforms on LiDAR data quality.

How a LiDAR system records the returned pulse has a direct impact on data quality. Full-waveform systems, unlike discrete-return systems, capture the entire return signal, allowing more information to be extracted.

- Single-return systems: These record only one return per pulse (typically the first or the strongest), so information about underlying surfaces, such as the ground beneath vegetation, is lost. They are generally faster and produce less data to process but describe complex, vegetated terrain less completely.

- Multi-return systems: These record multiple return pulses, providing data about the vertical structure of the target. This is particularly valuable in vegetation studies, where multiple returns from different layers of the canopy provide more information about vegetation structure.

- Waveform LiDAR: These systems record the entire waveform of the return signal. This enables extracting detailed information about the shape and structure of objects, including precise measurements of vegetation height and density. It’s highly accurate but computationally intensive.

The choice of pulse waveform depends on the application. For a simple topographic survey, a single-return system might suffice. However, for detailed vegetation analysis, waveform LiDAR is ideal despite its higher cost and computational demands. I’ve used waveform LiDAR in forestry projects to derive accurate canopy height models and assess biomass with higher precision than with traditional multi-return systems.
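
To illustrate the relationship between a recorded waveform and discrete returns, here is a minimal sketch that extracts returns as local maxima above a noise threshold. It assumes the waveform is a list of amplitude samples taken at a fixed interval, with sample 0 aligned to the moment the pulse was emitted. `detect_returns` is a hypothetical helper; real systems typically fit Gaussian or similar models to the waveform rather than picking raw peaks.

```python
SPEED_OF_LIGHT_M_PER_NS = 0.299792458


def detect_returns(waveform, sample_interval_ns, noise_threshold):
    """Find local maxima above a noise threshold and convert them to ranges.

    Returns a list of (range in metres, amplitude) tuples ordered from the
    nearest return to the farthest.
    """
    returns = []
    for i in range(1, len(waveform) - 1):
        amplitude = waveform[i]
        is_peak = amplitude > waveform[i - 1] and amplitude >= waveform[i + 1]
        if amplitude >= noise_threshold and is_peak:
            two_way_time_ns = i * sample_interval_ns
            # Halve the travel time because the pulse goes out and comes back.
            range_m = 0.5 * SPEED_OF_LIGHT_M_PER_NS * two_way_time_ns
            returns.append((range_m, amplitude))
    return returns


# A toy waveform with a weak canopy return followed by a stronger ground return.
samples = [0, 1, 5, 2, 1, 3, 8, 3, 0, 1, 0]
echoes = detect_returns(samples, sample_interval_ns=1.0, noise_threshold=2)
first_return, last_return = echoes[0], echoes[-1]
```

A single-return system would keep only one of these echoes, a multi-return system would keep each detected peak, and a full-waveform system stores the whole `samples` list so that peak shape and width can be analyzed later.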

Key Topics to Learn for Lidar Data Processing and Classification Interview

- Data Preprocessing: Understanding noise reduction techniques (e.g., filtering, outlier removal), data registration, and georeferencing. Practical application: Preparing lidar point clouds for accurate analysis and model generation.

- Point Cloud Classification: Mastering various classification algorithms (e.g., supervised, unsupervised, deep learning-based methods). Practical application: Accurately classifying points into ground, vegetation, buildings, etc., for applications like 3D city modeling or terrain analysis.

- Feature Extraction: Learning how to extract meaningful features from point clouds (e.g., intensity, elevation, normal vectors). Practical application: Improving the accuracy of classification algorithms and enabling more advanced analysis.

- Data Structures and Algorithms: Familiarity with efficient data structures (e.g., k-d trees, octrees) and algorithms for point cloud processing. Practical application: Optimizing the speed and efficiency of lidar data processing pipelines.

- Software and Tools: Proficiency in commonly used software packages (e.g., PDAL, LAStools, CloudCompare) and programming languages (e.g., Python with libraries like NumPy, SciPy, and laspy). Practical application: Demonstrating practical experience in handling and manipulating large lidar datasets.

- Accuracy Assessment and Validation: Understanding methods for evaluating the accuracy of lidar data processing and classification results. Practical application: Ensuring the reliability and quality of your work for critical applications.

- Applications of Lidar Data Processing: Understanding diverse applications across fields like surveying, mapping, autonomous driving, and precision agriculture. Practical application: Demonstrating breadth of knowledge and ability to contextualize your skills.

Next Steps

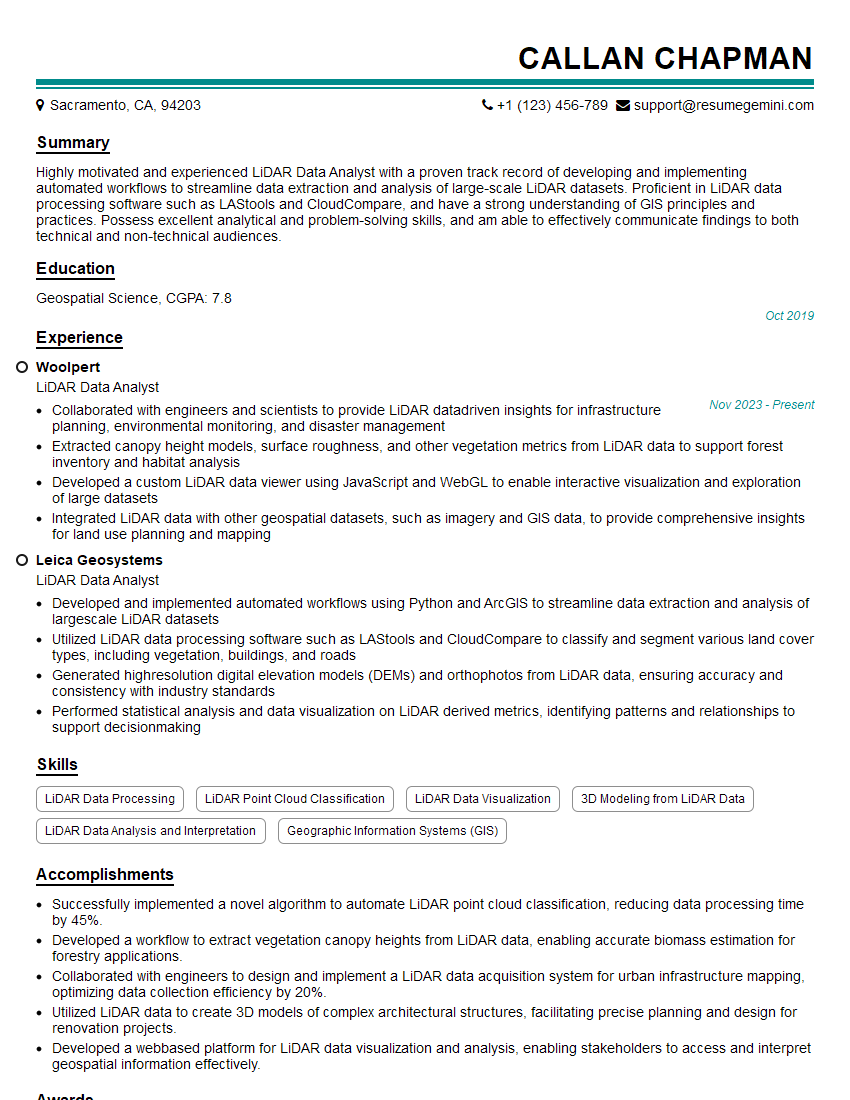

Mastering Lidar Data Processing and Classification opens doors to exciting and high-demand roles in various industries. To maximize your job prospects, a strong and ATS-friendly resume is crucial. ResumeGemini is a trusted resource to help you create a professional and impactful resume that showcases your skills effectively. Examples of resumes tailored to Lidar Data Processing and Classification are available to guide you. Invest the time to craft a compelling resume – it’s your first impression with potential employers and a key step in advancing your career.
