Cracking a skill-specific interview, like one for Quality Assurance and Traceability, requires understanding the nuances of the role. In this blog, we present the questions you’re most likely to encounter, along with insights into how to answer them effectively. Let’s ensure you’re ready to make a strong impression.

Questions Asked in Quality Assurance and Traceability Interview

Q 1. Explain the difference between Quality Assurance and Quality Control.

Quality Assurance (QA) and Quality Control (QC) are often confused, but they represent distinct yet complementary aspects of ensuring product quality. Think of QA as preventative and QC as reactive.

QA focuses on establishing and maintaining a robust system to prevent defects from occurring in the first place. This involves processes, procedures, and a proactive approach to identify potential risks and weaknesses before they lead to problems. It’s about building quality into the software development lifecycle (SDLC) from the start.

QC, on the other hand, is focused on detecting defects after they’ve been introduced. This is done through various testing activities, such as unit testing, integration testing, and system testing. QC aims to identify and rectify defects to ensure the final product meets quality standards.

Example: In a software project, QA might involve reviewing requirements documents for completeness and clarity, establishing coding standards, and implementing automated testing frameworks. QC, meanwhile, would involve executing test cases, logging defects, and verifying that fixes are implemented correctly.

Q 2. Describe your experience with various testing methodologies (e.g., Agile, Waterfall).

My experience spans both Agile and Waterfall methodologies. In Waterfall projects, QA activities are typically performed in a sequential manner, with testing occurring after the development phase is complete. This requires meticulous planning and comprehensive test documentation upfront. I’ve successfully managed this in projects involving extensive system integration, where thorough testing at each stage was crucial.

In Agile projects, QA is integrated throughout the development process. I’ve utilized iterative testing approaches, like sprint testing and continuous integration/continuous delivery (CI/CD), to ensure frequent feedback and early defect detection. This fast-paced environment demands adaptability and strong collaboration with developers. I’ve leveraged tools like Jira and test automation tools like Selenium to support rapid iteration and feedback loops. My experience includes working in Scrum teams where daily stand-ups and sprint reviews facilitated continuous quality improvement.

Q 3. How do you ensure traceability throughout the software development lifecycle?

Ensuring traceability throughout the SDLC is paramount for effective quality management. It allows you to track where a requirement originated, how it’s implemented in the code, and which test cases verify its functionality. A traceability matrix records these links and provides a complete audit trail.

I achieve this through several methods:

- Requirement Traceability: Linking requirements to design documents, code modules, and test cases. This is often done using a requirements management tool.

- Test Case Traceability: Ensuring every test case is linked to a specific requirement or use case. This verifies that all requirements are tested.

- Defect Traceability: Linking defects to the corresponding test cases, code modules, and requirements. This allows for root cause analysis and prevents recurrence.

- Version Control: Using version control systems (like Git) to track changes in code and documentation, providing a clear history of modifications.

Example: If a defect is found in a specific function, the traceability matrix should lead back to the requirement that function was designed to meet, the code that implements it, and the test case that revealed the error. This allows for efficient debugging and remediation.
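To make the chain concrete, here is a minimal Java sketch that walks from a defect back through its test case and requirement to the implementing module. It assumes the links are kept in simple in-memory maps; the IDs (DEF-7, TC-12, REQ-3), the module path, and the DefectTrace class are hypothetical, and in practice these links would come from a requirements or test-management tool.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical in-memory trace links; a real project would read these from its ALM tool.
final class DefectTrace {
    // defect ID -> test case that revealed it
    static final Map<String, String> DEFECT_TO_TEST = Map.of("DEF-7", "TC-12");
    // test case ID -> requirement it verifies
    static final Map<String, String> TEST_TO_REQ = Map.of("TC-12", "REQ-3");
    // requirement ID -> code module that implements it
    static final Map<String, String> REQ_TO_MODULE = Map.of("REQ-3", "checkout/PriceCalculator");

    // Walks defect -> test case -> requirement -> module, stopping at the first missing link.
    static List<String> traceBack(String defectId) {
        List<String> chain = new ArrayList<>();
        chain.add(defectId);
        String test = DEFECT_TO_TEST.get(defectId);
        if (test == null) return chain;
        chain.add(test);
        String req = TEST_TO_REQ.get(test);
        if (req == null) return chain;
        chain.add(req);
        String module = REQ_TO_MODULE.get(req);
        if (module != null) chain.add(module);
        return chain;
    }

    public static void main(String[] args) {
        System.out.println(String.join(" -> ", traceBack("DEF-7")));
    }
}
```

If a link is missing, the walk stops early, which is itself a useful signal: it shows exactly where the traceability chain is broken.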

Q 4. What are your preferred tools for defect tracking and management?

My preferred tools for defect tracking and management depend on project context, but I have extensive experience with several popular options. Jira is a robust platform offering customizable workflows and powerful reporting features. It is particularly effective for Agile environments, facilitating collaboration and tracking progress across sprints. Bugzilla is another option, known for its flexibility and open-source nature, suitable for larger projects requiring detailed defect analysis. For simpler projects, even a spreadsheet can suffice for initial defect tracking; however, more sophisticated solutions are generally preferred for larger or more complex projects.

Q 5. Explain your understanding of ISO 9001 standards.

ISO 9001 is an internationally recognized standard that specifies requirements for a quality management system (QMS). It provides a framework for organizations to consistently meet customer and regulatory requirements, enhance customer satisfaction, and improve overall performance. My understanding encompasses the seven quality management principles on which the standard is based:

- Customer Focus: Understanding and meeting customer expectations.

- Leadership: Establishing a leadership commitment to quality.

- Engagement of People: Empowering employees to contribute to quality improvement.

- Process Approach: Managing processes effectively to achieve objectives.

- Improvement: Continuously seeking improvement in the QMS.

- Evidence-based Decision Making: Making decisions based on data and analysis.

- Relationship Management: Managing relationships with suppliers and other stakeholders.

In practice, this means implementing documented processes, conducting regular audits, and maintaining records to demonstrate compliance. I’ve been involved in projects that implemented ISO 9001 standards, ensuring compliance through thorough documentation and continuous monitoring of our processes.

Q 6. How do you handle conflicting priorities in a QA project?

Conflicting priorities in QA are common. My approach involves prioritizing based on risk and impact. I use a risk assessment matrix to evaluate each task based on the likelihood of failure and its potential consequences. Critical functionalities with high impact are prioritized over less essential features. This prioritization is often done in collaboration with the project manager and development team. Transparency is key; all stakeholders must understand the prioritization rationale. Sometimes, this involves negotiation and compromise, but always keeping the overall project goals in mind.

Example: If a deadline is looming, but a critical security vulnerability is discovered, fixing the security issue takes precedence regardless of the deadline. This is a risk-based approach, ensuring the most damaging defects are addressed first.

Q 7. Describe a time you identified a critical bug and how you resolved it.

In a previous project, we were developing a critical e-commerce platform. During system testing, I discovered a memory leak in the shopping cart functionality. Under heavy load, the application would eventually crash, resulting in lost sales and a significant impact on the business.

My approach was systematic:

- Reproduction: I documented the exact steps to reproduce the error, including system configuration and load parameters.

- Root Cause Analysis: I worked with the development team using debugging tools to pinpoint the location of the memory leak in the code.

- Solution Proposal: We brainstormed potential solutions, analyzing the trade-offs between performance and complexity.

- Implementation & Verification: The developers implemented a fix, and I retested the functionality thoroughly, using both manual and automated tests to ensure the problem was resolved. This included load testing to validate the fix under realistic conditions.

- Documentation: I meticulously documented the bug, its resolution, and lessons learned to prevent similar issues in the future.

This situation highlighted the importance of thorough testing, particularly under stress conditions. The timely identification and resolution of this critical bug prevented significant financial and reputational damage.

Q 8. How do you prioritize testing tasks in a sprint?

Prioritizing testing tasks within a sprint requires a strategic approach that balances risk, value, and time constraints. I typically use a combination of methods to ensure the most critical functionalities are tested first. This often involves a risk-based prioritization, where we identify features with the highest potential impact on the user experience or the stability of the system. For example, a critical login functionality would take precedence over a minor UI cosmetic change.

We also prioritize based on the value delivered to the user. Features that directly affect user engagement or core business objectives get priority. Additionally, we consider dependencies between tasks: tests for a dependent module are scheduled after the tests for the modules it depends on have been completed. I use tools like Jira for task assignment, status tracking, and priority management, which makes sprint planning and execution more efficient.

- Risk-Based Prioritization: Identify high-risk areas prone to bugs that could significantly affect the user experience or product functionality.

- Value-Based Prioritization: Focus on testing features that add the most value to the user or business.

- Dependency Prioritization: Sequence testing based on interdependencies between modules or components.

- Test Coverage: Ensure adequate test coverage across all prioritized functionalities.
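Dependency prioritization is essentially a topological sort. The sketch below uses Kahn's algorithm to order hypothetical modules (login, cart, payment, checkout) so that each module's tests are scheduled only after the tests of the modules it depends on; the module names and the TestOrder class are illustrative, not taken from any particular tool.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.HashMap;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Orders test suites so that each module is tested only after the modules it depends on.
final class TestOrder {
    // deps maps a module to the modules it depends on; every module must appear as a key.
    static List<String> order(Map<String, List<String>> deps) {
        Map<String, Integer> pending = new HashMap<>();         // unmet dependency count per module
        Map<String, List<String>> dependents = new HashMap<>(); // reverse edges: module -> modules needing it
        for (Map.Entry<String, List<String>> e : deps.entrySet()) {
            pending.put(e.getKey(), e.getValue().size());
            for (String d : e.getValue()) {
                dependents.computeIfAbsent(d, k -> new ArrayList<>()).add(e.getKey());
            }
        }
        // Start with modules that have no dependencies.
        Deque<String> ready = new ArrayDeque<>();
        for (String m : deps.keySet()) if (pending.get(m) == 0) ready.add(m);

        List<String> result = new ArrayList<>();
        while (!ready.isEmpty()) {
            String m = ready.poll();
            result.add(m);
            // Once m is tested, its dependents have one fewer unmet dependency.
            for (String next : dependents.getOrDefault(m, List.of())) {
                if (pending.merge(next, -1, Integer::sum) == 0) ready.add(next);
            }
        }
        if (result.size() != deps.size()) {
            throw new IllegalStateException("Cyclic (or undeclared) dependency between modules");
        }
        return result;
    }

    public static void main(String[] args) {
        Map<String, List<String>> deps = new LinkedHashMap<>();
        deps.put("checkout", List.of("cart", "payment"));
        deps.put("cart", List.of("login"));
        deps.put("payment", List.of("login"));
        deps.put("login", List.of());
        System.out.println(order(deps));
    }
}
```

Here main prints [login, cart, payment, checkout]. A cycle between modules raises an exception, which usually means the dependencies need to be discussed with the team rather than silently ordered.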

Q 9. Explain your experience with test automation frameworks (e.g., Selenium, Appium).

I have extensive experience with Selenium and Appium for test automation. Selenium is my go-to for web application testing. I’ve used it to create robust and maintainable automated tests covering various functionalities, including login flows, data entry forms, and complex user interactions. I’m proficient in using different locators (ID, XPath, CSS selectors) to identify elements on the page reliably. My tests use the Page Object Model (POM) design pattern for better maintainability and code reuse. For example, I’ve automated regression tests for a large e-commerce website to ensure the core shopping cart functionality remained intact after new releases.

Appium, on the other hand, is my preferred tool for mobile application testing. I’ve used it to automate tests on both iOS and Android platforms, covering aspects like touch gestures, device orientation changes, and native app interactions. I’m comfortable with integrating Appium with cloud-based testing services for parallel execution, significantly reducing testing times. For example, I automated UI tests for a mobile banking app to verify transaction workflows and security features across different mobile devices and operating systems.

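```java
// Example Selenium code snippet (Java):
import org.openqa.selenium.By;
import org.openqa.selenium.WebDriver;
import org.openqa.selenium.WebElement;
import org.openqa.selenium.chrome.ChromeDriver;

public class ClickExample {
    public static void main(String[] args) {
        WebDriver driver = new ChromeDriver(); // starts a local Chrome session
        try {
            driver.get("https://www.example.com");
            WebElement element = driver.findElement(By.id("myElement"));
            element.click();
        } finally {
            driver.quit(); // close the browser even if a step fails
        }
    }
}
```

Q 10. How do you create effective test cases?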

Creating effective test cases requires careful planning and attention to detail. I follow a structured approach that ensures comprehensive test coverage. First, I start by reviewing requirements and design documents to fully understand the system’s functionality and intended behavior. Then, I identify the key functionalities, defining various scenarios, both positive (expected behavior) and negative (unexpected inputs or edge cases).

Each test case should be clear, concise, and independent. I use a consistent template that includes a unique ID, test objective, preconditions, steps to execute, expected results, and postconditions. The test steps should be detailed and unambiguous, enabling anyone to replicate the test. I also utilize boundary value analysis and equivalence partitioning to minimize the number of test cases while ensuring comprehensive coverage. For example, if a field accepts numbers between 1 and 100, I would focus on testing the values 0, 1, 2, 99, 100, and 101 instead of testing every value between 1 and 100 individually.

- Clear Objective: Define the specific functionality being tested.

- Preconditions: Specify the required setup or conditions before running the test.

- Test Steps: Provide detailed steps to execute the test.

- Expected Results: Describe the anticipated outcomes.

- Postconditions: Specify actions to take after the test is complete.
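The boundary value example above can be generated mechanically. This minimal sketch assumes an inclusive integer range and returns the values just below, on, and just above each boundary; the BoundaryValues class and forRange method are hypothetical names.

```java
import java.util.ArrayList;
import java.util.LinkedHashSet;
import java.util.List;

// Boundary value analysis for an inclusive integer range [min, max].
final class BoundaryValues {
    static List<Integer> forRange(int min, int max) {
        if (min > max) throw new IllegalArgumentException("min must not exceed max");
        // Just below, on, and just above each edge; LinkedHashSet drops duplicates
        // for very narrow ranges while preserving the order.
        return new ArrayList<>(new LinkedHashSet<>(
                List.of(min - 1, min, min + 1, max - 1, max, max + 1)));
    }

    public static void main(String[] args) {
        System.out.println(forRange(1, 100));
    }
}
```

For the 1 to 100 field, forRange(1, 100) returns [0, 1, 2, 99, 100, 101]. Equivalence partitioning would add one representative from the valid partition (say, 50) and treat 0 and 101 as representatives of the two invalid partitions.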

Q 11. What is a traceability matrix, and why is it important?

A traceability matrix is a document that maps requirements to test cases, ensuring that every requirement has corresponding test cases to validate its functionality. It’s crucial for demonstrating that all requirements have been adequately tested and for ensuring that changes to requirements are reflected in the testing process. This bidirectional mapping helps track the testing process and identify gaps in testing coverage.
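The bidirectional mapping can be sketched as two linked maps. This is a minimal in-memory illustration, assuming requirement and test case IDs are plain strings; the TraceabilityMatrix class and its methods are hypothetical rather than the API of any test-management tool. The forward check finds requirements with no tests, and the backward check finds test cases that trace to no requirement.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// A minimal requirements-to-test-case matrix with gap checks in both directions.
final class TraceabilityMatrix {
    private final Map<String, List<String>> reqToTests = new LinkedHashMap<>();
    private final Map<String, List<String>> testToReqs = new LinkedHashMap<>();

    void addRequirement(String reqId) { reqToTests.putIfAbsent(reqId, new ArrayList<>()); }
    void addTestCase(String testId) { testToReqs.putIfAbsent(testId, new ArrayList<>()); }

    // Records the link in both directions so either side can be queried.
    void link(String reqId, String testId) {
        addRequirement(reqId);
        addTestCase(testId);
        reqToTests.get(reqId).add(testId);
        testToReqs.get(testId).add(reqId);
    }

    // Forward gap: requirements that no test case verifies.
    List<String> untestedRequirements() { return keysWithNoLinks(reqToTests); }

    // Backward gap: test cases not tied to any requirement.
    List<String> orphanTestCases() { return keysWithNoLinks(testToReqs); }

    private static List<String> keysWithNoLinks(Map<String, List<String>> m) {
        List<String> out = new ArrayList<>();
        for (Map.Entry<String, List<String>> e : m.entrySet()) {
            if (e.getValue().isEmpty()) out.add(e.getKey());
        }
        return out;
    }

    public static void main(String[] args) {
        TraceabilityMatrix rtm = new TraceabilityMatrix();
        rtm.link("REQ-1", "TC-1");
        rtm.link("REQ-1", "TC-2");
        rtm.addRequirement("REQ-2"); // no test written yet
        rtm.addTestCase("TC-9");     // not linked to any requirement
        System.out.println("Untested requirements: " + rtm.untestedRequirements());
        System.out.println("Orphan test cases: " + rtm.orphanTestCases());
    }
}
```

Running it reports REQ-2 as untested and TC-9 as an orphan test case, which are exactly the coverage gaps a traceability matrix is meant to expose.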

The importance of a traceability matrix is threefold. First, it helps improve the quality of the software by providing evidence that all requirements have been addressed. Second, it enhances communication and collaboration between developers and testers by providing a clear link between requirements and test results. Finally, it helps manage change by showing how changes to requirements affect testing efforts. In short, it’s an invaluable tool for managing complex projects and improving overall quality assurance.

For instance, in a recent project, we used a traceability matrix to ensure that all user stories (requirements) were linked to specific test cases, facilitating quick identification of uncovered functionalities and ensuring comprehensive testing before release.

Q 12. Describe your experience with different types of testing (e.g., unit, integration, system, acceptance).

My experience spans various types of software testing. Unit testing verifies individual components or modules of the software. I’ve worked extensively with unit testing frameworks like JUnit and pytest, focusing on isolating individual pieces of code and verifying their correctness. I often advocate for developers to write unit tests for their own code, ensuring a high level of code quality from the outset.

Integration testing focuses on verifying the interaction between different modules and components. I typically use integration tests to check the proper flow of data and communication between different parts of the system. System testing involves testing the entire system as a whole, ensuring it meets the specified requirements. I use system tests to identify integration issues and verify that the system operates as a cohesive unit.

Acceptance testing validates the system against user requirements and business needs. I employ various acceptance testing strategies, including User Acceptance Testing (UAT), which involves actual end-users, and beta testing, which releases the software to a limited set of users for feedback.

Q 13. How do you ensure data integrity in your QA process?

Ensuring data integrity is paramount in the QA process. This involves several key strategies. First, I use data masking techniques to protect sensitive data during testing, while maintaining the structural integrity for testing purposes. This ensures compliance with data privacy regulations.

Second, I implement rigorous data validation checks at various stages of the testing process. This includes input validation to ensure data conforms to expected formats and ranges, and output validation to verify data accuracy and consistency after processing. Furthermore, I use database testing techniques to verify data consistency and integrity within the database itself. We perform regular database audits and comparisons to identify any discrepancies or anomalies.
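The database comparisons mentioned above often amount to reconciling two keyed data sets. The following sketch assumes each record can be reduced to a key (for example, an account ID) and a comparable value; the Reconciliation class, the sample IDs, and the balances are hypothetical.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Map;
import java.util.Objects;

// Compares two keyed data sets (e.g. source system vs. test database)
// and reports rows that are missing, unexpected, or different.
final class Reconciliation {
    static List<String> compare(Map<String, String> source, Map<String, String> target) {
        List<String> issues = new ArrayList<>();
        for (Map.Entry<String, String> e : source.entrySet()) {
            String key = e.getKey();
            if (!target.containsKey(key)) {
                issues.add("missing in target: " + key);
            } else if (!Objects.equals(e.getValue(), target.get(key))) {
                issues.add("value mismatch: " + key);
            }
        }
        for (String key : target.keySet()) {
            if (!source.containsKey(key)) issues.add("unexpected in target: " + key);
        }
        Collections.sort(issues); // stable report regardless of map iteration order
        return issues;
    }

    public static void main(String[] args) {
        Map<String, String> source = Map.of("A1", "100.00", "A2", "50.00", "A3", "75.00");
        Map<String, String> target = Map.of("A1", "100.00", "A2", "55.00", "A4", "10.00");
        compare(source, target).forEach(System.out::println);
    }
}
```

In main, the comparison reports A3 as missing, A4 as unexpected, and A2 as a value mismatch, while the matching A1 row produces no output.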

Finally, I employ data-driven testing, where test data is extracted from external sources (like spreadsheets or databases), enabling more efficient and comprehensive testing, and facilitating easy maintenance of test data. This method is especially useful when dealing with a large volume of test data.

Q 14. How do you handle situations where there is a conflict between developers and QA?

Conflicts between developers and QA are sometimes inevitable. My approach focuses on fostering collaboration and clear communication. I believe that developers and QA teams are partners with a shared goal of delivering high-quality software. When conflicts arise, I start by understanding the root cause. This often involves carefully reviewing the requirements, test cases, and code to identify any misunderstandings or discrepancies.

Open and respectful communication is vital. I facilitate discussions involving both teams and encourage constructive feedback. I emphasize that QA is not about finding fault but about improving the product’s quality. A collaborative approach, where we identify the issue, discuss the potential solutions and agree on a path forward, is key. If the conflict persists, I escalate the issue to the project manager or a senior stakeholder to provide mediation and facilitate a resolution.

Documentation is also crucial. Maintaining detailed records of reported bugs, proposed fixes, and the resolution process can prevent similar conflicts in the future. Finally, I promote a culture of trust and mutual respect between developers and QA teams through regular communication and collaborative problem solving.

Q 15. Describe your experience with performance testing.

Performance testing is crucial for ensuring an application meets its expected speed, stability, and scalability under various load conditions. My experience encompasses a range of techniques, including load testing, stress testing, and endurance testing. In a recent project, we used JMeter to simulate thousands of concurrent users accessing a new e-commerce platform. This allowed us to identify bottlenecks in the database and server infrastructure before the launch, preventing a potential performance disaster. We also employed spike testing to see how the system reacted to sudden surges in traffic, which helped us fine-tune our auto-scaling configuration. My approach always begins with a thorough understanding of the application’s performance goals and key performance indicators (KPIs) – metrics like response times, throughput, and resource utilization. Then, we create realistic test scenarios that mirror real-world usage patterns. The results are then analyzed to pinpoint areas for optimization and improvement.

For example, in another project involving a high-frequency trading application, we used a specialized tool called k6 to simulate market conditions and measure latency. Achieving sub-millisecond response times was critical, and our performance testing played a significant role in verifying the system’s ability to handle the required speed and reliability.
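Response-time KPIs are usually reported as percentiles rather than averages, because a handful of slow requests can hide behind a good mean. Tools such as JMeter and k6 compute these for you; the sketch below only illustrates what a 95th-percentile figure means, using the nearest-rank definition (other tools may interpolate between samples and report slightly different numbers). The LatencyStats class and the sample response times are made up for illustration.

```java
import java.util.Arrays;

// Latency percentile using the nearest-rank method.
final class LatencyStats {
    static double percentile(double[] samplesMs, double p) {
        if (samplesMs.length == 0) throw new IllegalArgumentException("no samples");
        if (p <= 0 || p > 100) throw new IllegalArgumentException("p must be in (0, 100]");
        double[] sorted = samplesMs.clone();
        Arrays.sort(sorted);
        // 1-based nearest rank: the smallest value with at least p% of samples at or below it.
        int rank = (int) Math.ceil(p * sorted.length / 100.0);
        return sorted[rank - 1];
    }

    public static void main(String[] args) {
        double[] responseTimesMs = {120, 95, 310, 101, 99, 150, 480, 110, 105, 130};
        System.out.println("p50 = " + percentile(responseTimesMs, 50) + " ms");
        System.out.println("p95 = " + percentile(responseTimesMs, 95) + " ms");
    }
}
```

With only ten samples, p95 is simply the slowest request; real load tests collect thousands of samples so that the tail percentiles are meaningful.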

Q 16. How do you measure the effectiveness of your QA process?

Measuring the effectiveness of a QA process is not simply about the number of bugs found; it’s about demonstrating its value in delivering high-quality software. I use a multi-faceted approach. First, we track key metrics such as defect density (number of defects per thousand lines of code, or KLOC), defect leakage (defects found after release, often expressed as a percentage of all defects found), and code coverage (percentage of code executed during testing). Lower defect density and leakage indicate a more effective process, and high coverage gives confidence that a significant portion of the application has been exercised by tests.
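As a rough illustration, the two defect metrics can be computed as follows. This sketch assumes defect density is expressed per thousand lines of code (KLOC) and defect leakage as the percentage of all defects that were found after release; organizations define both metrics in slightly different ways, and the QaMetrics class and the numbers in main are hypothetical.

```java
// Two common QA metrics; definitions vary between organizations, so this is one convention.
final class QaMetrics {
    // Defects per thousand lines of code (KLOC).
    static double defectDensity(int defects, int linesOfCode) {
        if (linesOfCode <= 0) throw new IllegalArgumentException("linesOfCode must be positive");
        return defects / (linesOfCode / 1000.0);
    }

    // Share of all defects that escaped to production, as a percentage.
    static double defectLeakagePercent(int foundBeforeRelease, int foundAfterRelease) {
        int total = foundBeforeRelease + foundAfterRelease;
        if (total == 0) return 0.0; // no defects at all: nothing leaked
        return 100.0 * foundAfterRelease / total;
    }

    public static void main(String[] args) {
        System.out.println("Defect density (per KLOC): " + defectDensity(45, 30_000));
        System.out.println("Defect leakage (%): " + defectLeakagePercent(90, 10));
    }
}
```

With 45 defects in 30,000 lines of code, the density is 1.5 defects per KLOC; with 90 defects found before release and 10 after, leakage is 10%.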

Secondly, we regularly assess the efficiency of the testing process. This includes evaluating the time it takes to complete testing cycles, the resources consumed, and the cost-effectiveness of different testing strategies. We continually look for opportunities to improve efficiency through automation and process improvements. Finally, and perhaps most importantly, we gather feedback from stakeholders – developers, product owners, and end-users – to understand their perception of the software quality and the effectiveness of the QA process in preventing issues.

For example, customer satisfaction surveys and feedback forms can provide valuable insights. A reduction in post-release support requests is a powerful indicator of the QA process’s success in preventing production issues.

Q 17. Explain your understanding of risk-based testing.

Risk-based testing prioritizes testing efforts based on the potential impact and likelihood of defects. Instead of testing everything equally, we focus on areas of the application that pose the greatest risk of failure. This requires a thorough risk assessment, which involves identifying potential risks, assessing their likelihood and impact, and prioritizing them accordingly. This often involves collaboration with the development team, product managers and business stakeholders to gain a holistic view of potential risks.

For instance, a critical business function might warrant more extensive testing than a less-critical feature. Likewise, areas of the codebase with a history of frequent bugs might require more rigorous testing. We might use a risk matrix to visually represent the risks, allowing for easy prioritization. The risk matrix usually depicts likelihood (e.g., low, medium, high) on one axis and impact (e.g., low, medium, high) on the other. High-likelihood, high-impact risks are prioritized first.
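A common way to turn the matrix into a ranking is to score each level from 1 to 3 and multiply likelihood by impact. This sketch assumes that 3-by-3 scale; the feature areas and the RiskMatrix class are hypothetical, and teams often use finer scales or weighted scores instead.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

final class RiskMatrix {
    // Scored 1 (LOW) to 3 (HIGH) via ordinal() + 1.
    enum Level { LOW, MEDIUM, HIGH }

    static final class Risk {
        final String area;
        final Level likelihood;
        final Level impact;

        Risk(String area, Level likelihood, Level impact) {
            this.area = area;
            this.likelihood = likelihood;
            this.impact = impact;
        }

        // Likelihood x impact on a 1-9 scale.
        int score() { return (likelihood.ordinal() + 1) * (impact.ordinal() + 1); }
    }

    // Highest score first; List.sort is stable, so ties keep their input order.
    static List<Risk> prioritize(List<Risk> risks) {
        List<Risk> sorted = new ArrayList<>(risks);
        sorted.sort(Comparator.comparingInt(Risk::score).reversed());
        return sorted;
    }

    public static void main(String[] args) {
        List<Risk> risks = List.of(
                new Risk("Payment processing", Level.HIGH, Level.HIGH),
                new Risk("Profile page layout", Level.LOW, Level.LOW),
                new Risk("Search filters", Level.MEDIUM, Level.HIGH));
        for (Risk r : prioritize(risks)) System.out.println(r.score() + "  " + r.area);
    }
}
```

For the sample risks, payment processing (score 9) comes first, search filters (6) second, and the profile page layout (1) last.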

Risk-based testing saves time and resources by focusing on the most critical aspects of the application and helps mitigate the risk of serious production failures.

Q 18. How do you use traceability to demonstrate regulatory compliance?

Traceability is the ability to track the life cycle of a software requirement from its inception to its implementation and verification. In demonstrating regulatory compliance, traceability provides an audit trail that shows how requirements are met. For instance, if a regulation requires specific security measures, traceability links the regulation, the requirements that address the regulation, the test cases that verify those requirements, and ultimately, the test results. This chain of evidence allows auditors to see the complete path from requirement to verification, demonstrating compliance.

We use a requirements traceability matrix (RTM) to manage this. An RTM is a table that maps requirements to test cases and test results. This matrix facilitates clear documentation and allows us to quickly identify any gaps in testing or inconsistencies between requirements and implementation. Tools like Jira or dedicated test management software are often utilized to manage requirements and link them to test artifacts, creating an auditable record.

For example, in a medical device development project, rigorous traceability is paramount due to stringent regulations. We would meticulously document the linkage between each regulatory requirement, the corresponding software requirements, the designed tests and ultimately, the results, to demonstrate full compliance during audits.
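The chain an auditor follows can also be checked automatically. This minimal sketch assumes the RTM has been exported into three maps (regulation to requirements, requirement to test cases, test case to pass/fail result); the ComplianceCheck class and all IDs, including REG-SEC-01, are hypothetical. It reports the first gap or failure it finds, or VERIFIED when every link is present and every linked test passed.

```java
import java.util.List;
import java.util.Map;

// Walks regulation -> requirements -> test cases -> results and reports the first problem.
final class ComplianceCheck {
    static String status(String regulation,
                         Map<String, List<String>> regToReqs,
                         Map<String, List<String>> reqToTests,
                         Map<String, Boolean> testPassed) {
        List<String> reqs = regToReqs.getOrDefault(regulation, List.of());
        if (reqs.isEmpty()) return "GAP: no requirement addresses " + regulation;
        for (String req : reqs) {
            List<String> tests = reqToTests.getOrDefault(req, List.of());
            if (tests.isEmpty()) return "GAP: no test verifies " + req;
            for (String t : tests) {
                Boolean passed = testPassed.get(t);
                if (passed == null) return "GAP: no recorded result for " + t;
                if (!passed) return "FAIL: " + t + " failed";
            }
        }
        return "VERIFIED";
    }

    public static void main(String[] args) {
        Map<String, List<String>> regToReqs = Map.of("REG-SEC-01", List.of("REQ-10", "REQ-11"));
        Map<String, List<String>> reqToTests = Map.of(
                "REQ-10", List.of("TC-100"),
                "REQ-11", List.of("TC-110", "TC-111"));
        Map<String, Boolean> results = Map.of("TC-100", true, "TC-110", true, "TC-111", false);
        System.out.println(status("REG-SEC-01", regToReqs, reqToTests, results));
    }
}
```

With TC-111 failing, main prints "FAIL: TC-111 failed"; once that test passes, the same call returns VERIFIED, which is the evidence an auditor would expect to see.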

Q 19. What are some common challenges in establishing and maintaining traceability?

Establishing and maintaining traceability can be challenging. One common issue is the lack of a well-defined requirements management process. Without a clear and consistent approach to defining and documenting requirements, tracing them through the development lifecycle becomes difficult. Another challenge is the use of informal communication and undocumented changes. If requirements are changed without proper documentation, the traceability links break, leading to inaccurate information and a compromised audit trail.

Keeping traceability up to date as the project evolves can also be a struggle. Software changes frequently during development, and maintaining the integrity of the traceability links takes significant effort. Furthermore, integrating traceability across multiple tools and systems can be a logistical hurdle. Different teams may use different tools to manage requirements, test cases, and code, making it hard to establish a unified view of the traceability information. Finally, a lack of tool support or reliance on manual processes makes traceability cumbersome and prone to human error, so choosing a tool that supports bidirectional traceability is important.

Q 20. How do you communicate test results effectively to stakeholders?

Effective communication of test results is critical for ensuring that the right information reaches the right people at the right time. My approach focuses on clarity, conciseness, and visual representation. I tailor my communication to the audience. For technical stakeholders like developers, I provide detailed reports with technical specifics and logs. For management, I focus on high-level summaries of key findings, risks, and overall project health. I use a combination of methods: dashboards, graphs, and charts, alongside concise written reports.

I often start with a summary of the key findings, highlighting major issues and their impact. I then provide more detailed information, including severity levels, priority rankings, and suggested resolutions. Visual aids like graphs showing defect trends over time, or heat maps showing areas of high defect concentration, help to communicate complex information quickly and easily. Regular meetings, either formally scheduled or ad-hoc, provide opportunities to discuss results and answer questions. I strive to make the information easily accessible, utilizing collaborative platforms and version control systems for sharing test results and reports.

For example, using a dashboard to track the number of open defects, their severity, and their resolution status provides a clear, up-to-date view of the project’s quality and helps stakeholders quickly understand the status of testing efforts.

Q 21. Explain your experience with version control systems (e.g., Git).

I have extensive experience with Git and other version control systems (VCS). Git is integral to my workflow, enabling efficient collaboration and tracking of changes in test scripts, test data, and even test results. I use Git to manage test automation frameworks, which lets multiple team members work concurrently on the same project without overwriting each other’s changes; this is essential for large, complex automation suites. I’m proficient with branching strategies such as Gitflow, in which new features are developed and tested in isolation on feature branches before they are merged. I also rely on Git-based code review through pull or merge requests, which is crucial for ensuring the quality and maintainability of automated test scripts.

Beyond basic commands like git clone, git add, git commit, git push, and git pull, I frequently use branching, merging, rebasing, and merge-conflict resolution. I understand the importance of writing clear, concise commit messages that accurately reflect the changes made. Git tags are another important practice: they mark specific points in the history (such as releases), making it easy to check out or return to a previous version when needed.

In a recent project, our use of Git’s branching strategy prevented a critical bug from impacting the production release. A developer accidentally introduced a regression in a feature branch. Git’s branching structure allowed us to isolate and fix the bug in the feature branch without affecting the main branch, which was already prepared for deployment.

Q 22. How do you ensure test data integrity and security?

Ensuring test data integrity and security is paramount to reliable testing. It involves a multi-faceted approach encompassing data masking, anonymization, and secure storage. Think of it like protecting a valuable treasure – you wouldn’t leave it unguarded!

Data Masking: This technique replaces sensitive information with realistic but fake data. For example, instead of using real credit card numbers, we might use a pattern that looks like a real credit card number but is completely fictitious. This preserves data structure and volume without compromising privacy.

Data Anonymization: This goes a step further by removing or altering personally identifiable information (PII) like names, addresses, and social security numbers. This is crucial for complying with data privacy regulations like GDPR and CCPA.

Secure Storage: Test data should be stored in secure environments, often using encryption both in transit and at rest. Access should be tightly controlled, using role-based access control (RBAC) to limit who can view, modify, or delete the data. We might use a dedicated test database separate from the production database, with stringent access controls.

Example: In a recent project involving a banking application, we used a data masking tool to replace real account numbers with synthetically generated numbers that maintained the same data type and length, ensuring accurate testing without exposing real customer data. We also employed encryption for data at rest and in transit.
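A minimal sketch of format-preserving masking for a card number is shown below. It assumes the last four digits may stay visible (a common convention in test and display data) and replaces every other digit with a random one while keeping separators and length; the CardMasker class is hypothetical. Passing a seeded Random makes the masked data repeatable between test runs.

```java
import java.util.Random;

// Format-preserving masking: randomize all but the last four digits,
// keep separators and length so the value still looks like a card number.
final class CardMasker {
    static String mask(String cardNumber, Random rng) {
        int digitsTotal = 0;
        for (char c : cardNumber.toCharArray()) if (c >= '0' && c <= '9') digitsTotal++;

        StringBuilder out = new StringBuilder(cardNumber.length());
        int seen = 0;
        for (char c : cardNumber.toCharArray()) {
            if (c >= '0' && c <= '9') {
                seen++;
                // Keep the last four digits, replace the rest with random digits.
                out.append(seen > digitsTotal - 4 ? c : (char) ('0' + rng.nextInt(10)));
            } else {
                out.append(c); // keep spaces and dashes as-is
            }
        }
        return out.toString();
    }

    public static void main(String[] args) {
        System.out.println(mask("4111-1111-1111-1234", new Random(42)));
    }
}
```

Note that the masked number will usually fail a Luhn checksum. If the application under test validates card numbers, a dedicated masking tool that regenerates a valid check digit is a better fit.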

Q 23. Describe your experience with requirements gathering and analysis.

Requirements gathering and analysis are the bedrock of successful software development. It’s about understanding the ‘what’ and ‘why’ behind a project. I approach this using a combination of techniques. Think of it as becoming a detective, uncovering the clues that will lead to a successful project.

Techniques Used:

- Joint Application Development (JAD) sessions: Facilitated workshops with stakeholders to collaboratively define requirements.

- Interviews: One-on-one conversations with key individuals to gain in-depth understanding.

- Document Review: Analyzing existing documentation, including user manuals, business process flows, and previous specifications.

- Prototyping: Creating working models to validate requirements and gather feedback.

Analysis: Once gathered, I analyze requirements for completeness, consistency, feasibility, and testability. I look for conflicts or ambiguities, creating a clear and concise requirements document that serves as a foundation for the entire development lifecycle. This involves creating traceability matrices to link requirements to test cases, ensuring all requirements are adequately covered.

Example: In a previous project, we used JAD sessions to define the requirements for a new e-commerce platform. This collaborative approach allowed us to address potential conflicts early on, resulting in a smoother development process and reduced rework later.

Q 24. What are your strategies for managing a large number of defects?

Managing a large number of defects effectively requires a systematic approach. It’s not just about fixing bugs; it’s about prioritizing them, understanding their root causes, and preventing future occurrences. Think of it like a hospital emergency room: you triage the patients (defects) based on severity and need.

Strategies:

- Defect Tracking System: Utilize a robust defect tracking system (e.g., Jira, Bugzilla) to log, categorize, prioritize, and track defects.

- Prioritization: Employ techniques like the MoSCoW method (Must have, Should have, Could have, Won’t have) to prioritize defects based on severity and impact.

- Root Cause Analysis (RCA): Investigate the root cause of defects to prevent recurrence. Techniques such as the 5 Whys are helpful here.

- Defect Triaging: Regular meetings with the development team to review and assign defects.

- Regression Testing: Ensure that fixing one defect doesn’t introduce new ones.
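The prioritization and triage steps can be sketched as a simple ordering rule. This is an illustrative Python example, not the behavior of Jira or Bugzilla: the severity scale, the MoSCoW-style priority labels, and the `users_affected` field are all assumptions. Defects marked Won’t fix are removed from the working queue, and the rest are sorted by priority, then severity, then number of affected users.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Lower value = more severe; this four-level scale is an assumption.
    CRITICAL = 1
    MAJOR = 2
    MINOR = 3
    TRIVIAL = 4


class Priority(IntEnum):
    # Hypothetical MoSCoW-style buckets adapted to defects.
    MUST_FIX = 1
    SHOULD_FIX = 2
    COULD_FIX = 3
    WONT_FIX = 4


@dataclass
class Defect:
    key: str
    severity: Severity
    priority: Priority
    users_affected: int


def triage(defects):
    """Drop WONT_FIX items, then order by priority, severity, and user impact (most users first)."""
    active = [d for d in defects if d.priority != Priority.WONT_FIX]
    return sorted(active, key=lambda d: (d.priority, d.severity, -d.users_affected))


backlog = [
    Defect("BUG-7", Severity.MINOR, Priority.COULD_FIX, 40),
    Defect("BUG-3", Severity.CRITICAL, Priority.MUST_FIX, 500),
    Defect("BUG-9", Severity.MAJOR, Priority.MUST_FIX, 1200),
    Defect("BUG-5", Severity.TRIVIAL, Priority.WONT_FIX, 2),
]

for d in triage(backlog):
    print(d.key, d.priority.name, d.severity.name)
```

With this backlog the order is BUG-3, BUG-9, BUG-7, and BUG-5 is excluded. Putting priority ahead of severity is a design choice; some teams sort by severity first, so the rule is worth agreeing on in the triage meeting.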

Example: In a recent project, we were facing a large number of defects. We implemented a robust defect tracking system and used the MoSCoW method to prioritize them. By focusing on high-severity defects first, we were able to deliver a stable product quickly.

Q 25. How do you collaborate effectively with cross-functional teams?

Effective cross-functional collaboration is essential for success. It requires clear communication, mutual respect, and a shared understanding of goals. Think of it as a well-orchestrated symphony—each instrument (team) plays its part, but the conductor (project manager) ensures harmony.

Strategies:

- Regular Communication: Daily stand-up meetings, weekly progress reports, and established communication channels (e.g., Slack, email).

- Shared Tools and Platforms: Using collaborative tools for documentation, defect tracking, and communication.

- Empathy and Understanding: Recognizing that each team has its own perspective and constraints.

- Conflict Resolution: Having mechanisms in place to address and resolve conflicts constructively.

Example: In a recent project involving developers, designers, and product owners, we used a shared online project management tool to track progress, manage tasks, and communicate effectively. Regular stand-up meetings ensured everyone was aligned on the day’s goals and any roadblocks were addressed promptly.

Q 26. Explain your understanding of different testing environments.

Understanding different testing environments is crucial for effective testing. Each environment mimics a different stage of the software lifecycle, allowing us to test under various conditions. Think of it like preparing for a marathon; you wouldn’t just train on one type of terrain.

Common Testing Environments:

- Development Environment: Where developers write and test code. It is usually a local environment on the developer’s machine.

- Testing Environment: A dedicated environment for QA teams to perform testing. It mirrors the production environment as closely as possible.

- Staging Environment: A near-production environment used for final testing before deployment. Because its configuration, data, and infrastructure match production more closely, it provides more realistic testing than the testing environment.

- Production Environment: The live environment where the software is used by end-users.
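One common way to keep these environments separate is to give each its own configuration and select it at startup. The sketch below assumes a hypothetical `APP_ENV` environment variable and placeholder `example.com` URLs; real settings would normally come from the deployment pipeline or a secrets store.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class EnvConfig:
    base_url: str
    use_real_payments: bool  # only production should touch real money
    seed_test_data: bool     # dev and test environments load synthetic data


# Hypothetical settings for illustration; values are placeholders.
ENVIRONMENTS = {
    "development": EnvConfig("http://localhost:8000", False, True),
    "testing": EnvConfig("https://test.example.com", False, True),
    "staging": EnvConfig("https://staging.example.com", False, False),
    "production": EnvConfig("https://www.example.com", True, False),
}


def load_config(env_name=None):
    """Return the config named by env_name, else by APP_ENV, defaulting to development."""
    name = env_name or os.environ.get("APP_ENV", "development")
    try:
        return ENVIRONMENTS[name]
    except KeyError:
        # Fail loudly instead of silently testing against the wrong target.
        raise ValueError(f"Unknown environment: {name!r}") from None


cfg = load_config("staging")
print(cfg.base_url, cfg.use_real_payments)
```

Here staging has its own URL but, like production, skips seeded test data, while only production enables real payments. Raising an error on an unknown environment name prevents a test run from quietly falling back to the wrong environment.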

Example: In a recent project, we had separate development, testing, and staging environments. This allowed developers to work concurrently without interfering with testing activities. The staging environment, closely mirroring production, enabled us to catch any last-minute issues before release.

Q 27. How do you contribute to continuous improvement of the QA process?

Continuous improvement of the QA process is an ongoing effort. It’s about constantly evaluating our processes, identifying areas for improvement, and implementing changes to enhance efficiency and effectiveness. Think of it as a gardener tending to their garden – always pruning, fertilizing, and ensuring healthy growth.

Contribution to Continuous Improvement:

- Regular Process Reviews: Conducting regular reviews of the QA process to identify bottlenecks and areas for optimization.

- Automation: Automating repetitive testing tasks to reduce manual effort and improve efficiency.

- Defect Analysis: Analyzing defect data to identify trends and patterns, leading to proactive measures to prevent similar defects in the future.

- Tooling and Technology: Exploring and implementing new tools and technologies to improve testing effectiveness.

- Knowledge Sharing: Sharing best practices and lessons learned within the QA team and across other teams.
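Defect analysis of this kind can start as simple counting. The following Python sketch uses a hypothetical defect export (ID, module, release) and an arbitrary 40% threshold to flag modules that attract a disproportionate share of defects, plus a per-release trend for one module.

```python
from collections import Counter

# Hypothetical defect export: (defect ID, module, release).
defects = [
    ("BUG-1", "checkout", "1.0"),
    ("BUG-2", "search", "1.0"),
    ("BUG-3", "checkout", "1.1"),
    ("BUG-4", "checkout", "1.1"),
    ("BUG-5", "profile", "1.1"),
    ("BUG-6", "search", "1.1"),
]


def hotspots(defects, threshold=0.4):
    """Return (module, count) pairs whose share of all defects meets the threshold."""
    counts = Counter(module for _, module, _ in defects)
    total = sum(counts.values())
    if total == 0:
        return []
    return [(m, n) for m, n in counts.most_common() if n / total >= threshold]


def trend(defects, module):
    """Return defect counts per release for one module, ordered by release label."""
    per_release = Counter(rel for _, m, rel in defects if m == module)
    return dict(sorted(per_release.items()))


print(hotspots(defects))
print(trend(defects, "checkout"))
```

On this sample, `checkout` holds 3 of 6 defects and is flagged, and its count rises from 1 in release 1.0 to 2 in 1.1, the kind of pattern that justifies more rigorous testing for that module.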

Example: In a recent project, we analyzed defect data and identified a pattern of defects related to a specific module. This led us to implement more rigorous testing for that module, resulting in a significant reduction in defects in subsequent releases.

Q 28. Describe your experience with any specific traceability tools or systems.

I have extensive experience with various traceability tools and systems, recognizing their critical role in ensuring quality and compliance. These tools are like a detailed map, guiding us through the development process and ensuring all requirements are addressed.

Tools and Systems:

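- Jira: A widely used tool for managing projects and tracking defects. Its issue links, often extended with test-management add-ons such as Xray or Zephyr, let teams connect requirements to test cases and defects, and its configurable workflows keep the status of each linked item visible.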

- TestRail: A test management tool that provides features for test case management, execution, and reporting. It allows for effective linking of test cases to requirements, ensuring complete test coverage.

- HP ALM (Application Lifecycle Management): A comprehensive suite for managing the entire software development lifecycle, including requirements management, test case management, and defect tracking, providing a highly effective traceability solution.

Example: In a recent project, we used Jira to manage requirements, create test cases, and track defects. We established clear traceability links between requirements, test cases, and defects, providing a clear audit trail and facilitating efficient issue resolution. This allowed us to demonstrate comprehensive test coverage and compliance with regulations.
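An audit trail like this depends on links that can be followed in both directions. This Python sketch uses hypothetical IDs and plain dictionaries in place of a tracker’s link data; it does not use the Jira API. It traces backward from a defect to the requirements at risk, and forward from a requirement to the defects raised against it.

```python
# Hypothetical link data, as it might be exported from a tracker.
test_to_requirements = {
    "TC-201": ["REQ-10"],
    "TC-202": ["REQ-10", "REQ-11"],
    "TC-203": ["REQ-12"],
}
defect_to_tests = {
    "BUG-41": ["TC-202"],
    "BUG-42": ["TC-203"],
}


def requirements_affected_by(defect_id):
    """Backward trace: defect -> failing test cases -> requirements at risk."""
    reqs = set()
    for tc in defect_to_tests.get(defect_id, []):
        reqs.update(test_to_requirements.get(tc, []))
    return sorted(reqs)


def defects_against(requirement_id):
    """Forward trace: requirement -> its test cases -> defects raised by those tests."""
    tests = {tc for tc, reqs in test_to_requirements.items() if requirement_id in reqs}
    return sorted(bug for bug, tcs in defect_to_tests.items() if tests.intersection(tcs))


print(requirements_affected_by("BUG-41"))
print(defects_against("REQ-10"))
```

For example, `BUG-41` traces to `REQ-10` and `REQ-11` through `TC-202`, so both requirements are candidates for retesting once the fix is delivered.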

Key Topics to Learn for Quality Assurance and Traceability Interview

- Quality Assurance Fundamentals: Understanding how QA fits into development methodologies (Agile, Waterfall), the main testing types (functional, non-functional, regression), and the software development lifecycle (SDLC).

- Traceability Matrix & Documentation: Creating and interpreting traceability matrices, documenting test cases, and managing test execution results. Practical application: Explain how a traceability matrix ensures requirements are met throughout development.

- Defect Tracking and Management: Utilizing defect tracking systems (e.g., Jira, Bugzilla), effectively reporting and prioritizing bugs, and understanding the defect lifecycle.

- Risk Assessment and Mitigation in QA: Identifying potential risks during software development, proposing mitigation strategies, and communicating risks effectively to stakeholders.

- Test Automation Frameworks: Familiarity with common automation frameworks (e.g., Selenium, Appium) and their application in achieving efficient testing.

- Data Integrity and Validation: Understanding techniques for ensuring data accuracy, consistency, and validity throughout the system. Practical application: Describe methods for validating data in a database or API.

- Software Testing Standards and Best Practices: Knowledge of relevant standards (e.g., ISO 9001 for quality management systems, ISO/IEC/IEEE 29119 for software testing) and best practices for designing robust and effective testing strategies.

- Performance and Security Testing Concepts: Understanding the basics of performance and security testing, and how these contribute to overall product quality and traceability.

- Communication and Collaboration: Effectively communicating testing results and collaborating with developers, project managers, and other stakeholders.
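For the data integrity topic, one practical answer is to show record-level checks for completeness, validity, format, and consistency, plus a uniqueness check across records. The order schema and rules in this Python sketch are hypothetical; the same checks apply whether records come from a database query or an API response.

```python
import math
from collections import Counter
from datetime import date


def validate_order(record, today=None):
    """Return validation errors for one order record; an empty list means valid.
    Field names and rules are hypothetical examples."""
    today = today or date.today()
    required = ("order_id", "quantity", "unit_price", "order_date")
    missing = [f for f in required if record.get(f) is None]
    if missing:
        # Completeness: later checks assume these fields exist.
        return [f"missing {f}" for f in missing]

    errors = []
    qty, price = record["quantity"], record["unit_price"]
    # Validity: type and range checks (bool is excluded because it subclasses int).
    if not isinstance(qty, int) or isinstance(qty, bool) or qty <= 0:
        errors.append("quantity must be a positive integer")
    if not isinstance(price, (int, float)) or isinstance(price, bool) or price < 0:
        errors.append("unit_price must be a non-negative number")
    # Format and plausibility: ISO date that is not in the future.
    try:
        if date.fromisoformat(record["order_date"]) > today:
            errors.append("order_date is in the future")
    except (TypeError, ValueError):
        errors.append("order_date must be an ISO date (YYYY-MM-DD)")
    # Consistency: a stored total must equal quantity * unit_price (to the cent).
    if not errors and "total" in record:
        total = record["total"]
        if not isinstance(total, (int, float)) or not math.isclose(total, qty * price, abs_tol=0.005):
            errors.append("total does not equal quantity * unit_price")
    return errors


def find_duplicate_ids(records):
    """Uniqueness: return order IDs that appear more than once."""
    counts = Counter(r.get("order_id") for r in records)
    return sorted(oid for oid, n in counts.items() if n > 1 and oid is not None)


good = {"order_id": "A1", "quantity": 2, "unit_price": 9.99,
        "order_date": "2024-03-01", "total": 19.98}
bad = {"order_id": "A2", "quantity": 0, "unit_price": -1, "order_date": "03/01/2024"}
print(validate_order(good, today=date(2024, 6, 1)))
print(validate_order(bad, today=date(2024, 6, 1)))
```

Passing `today` explicitly keeps the date check deterministic in automated tests.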

Next Steps

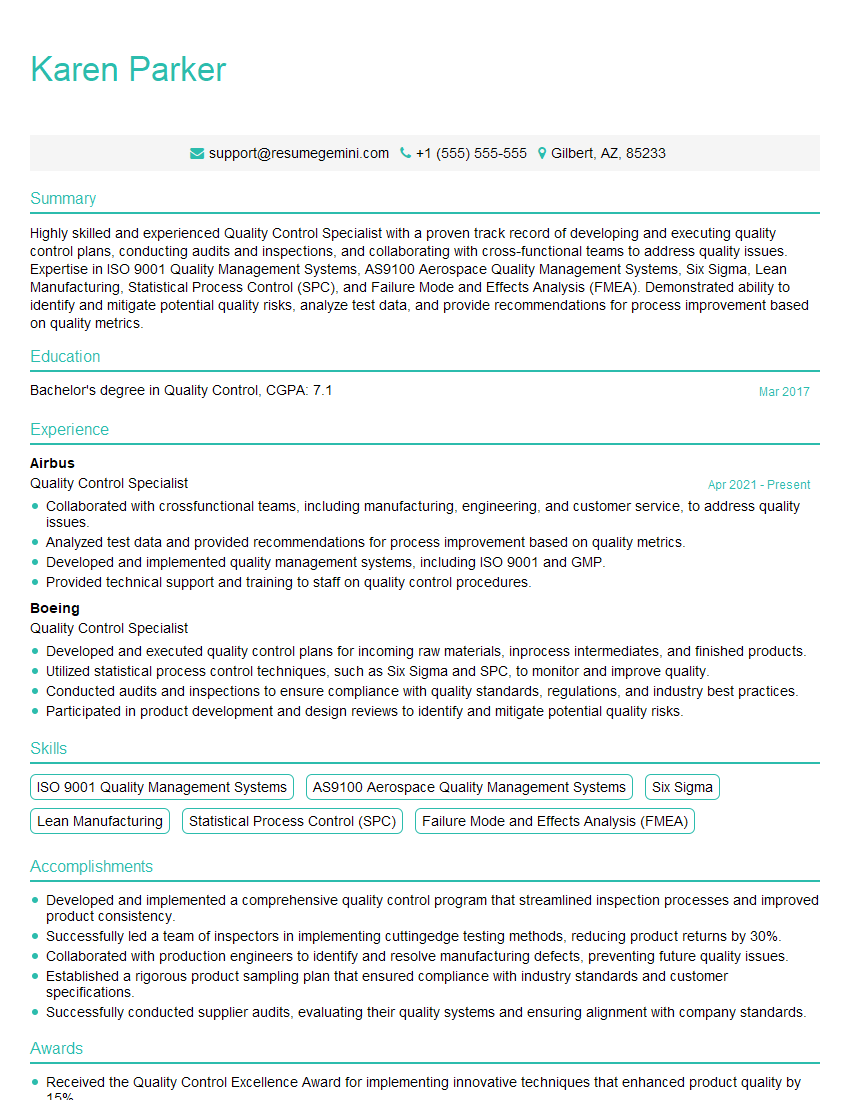

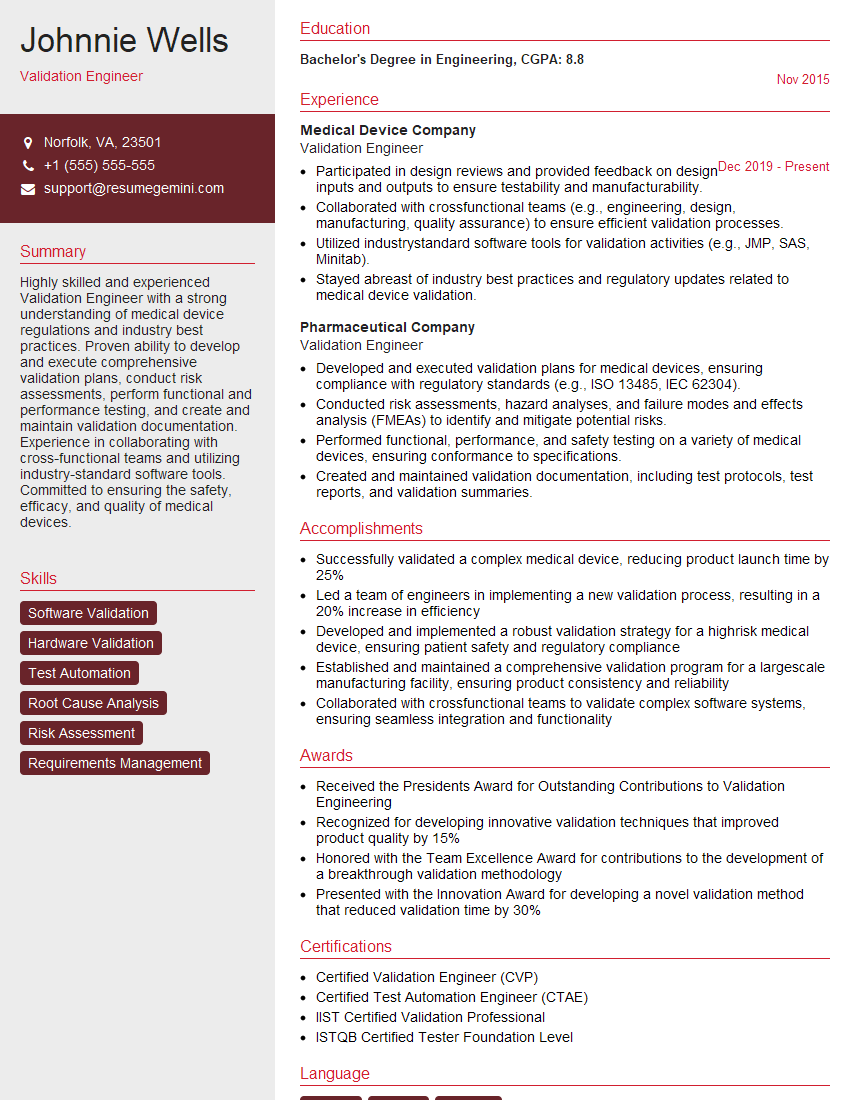

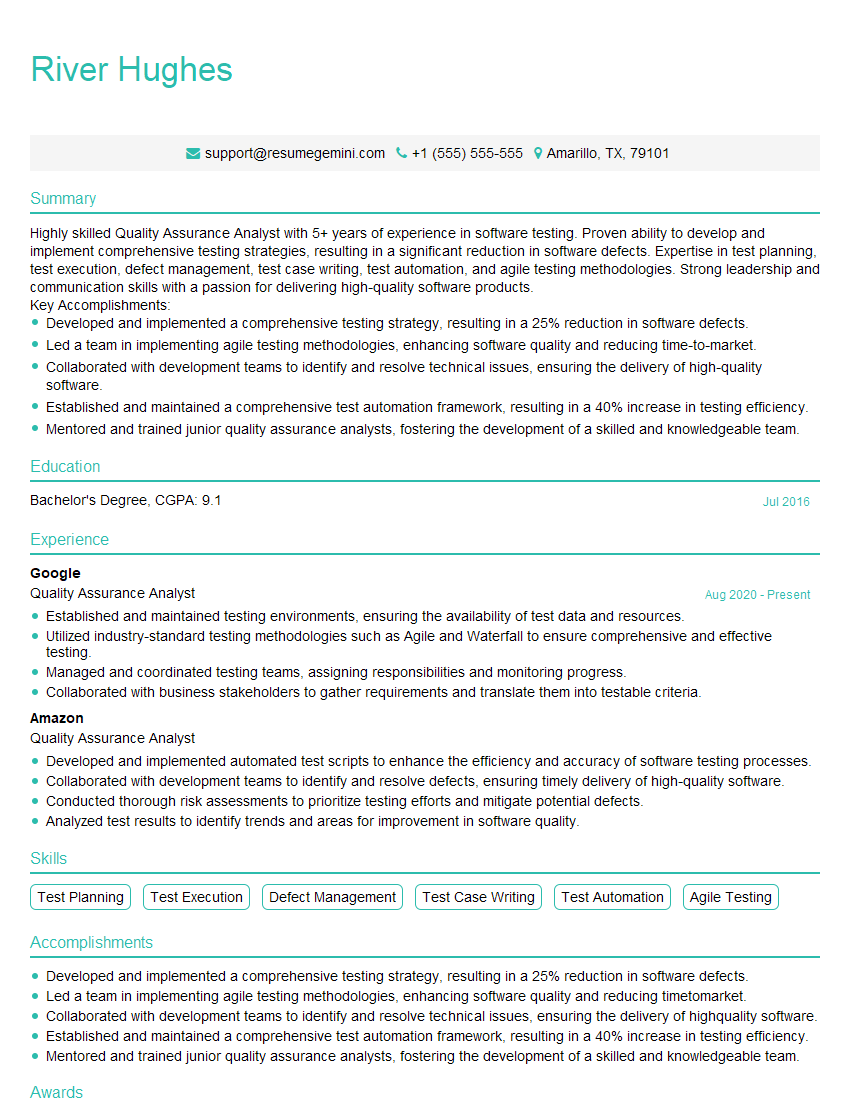

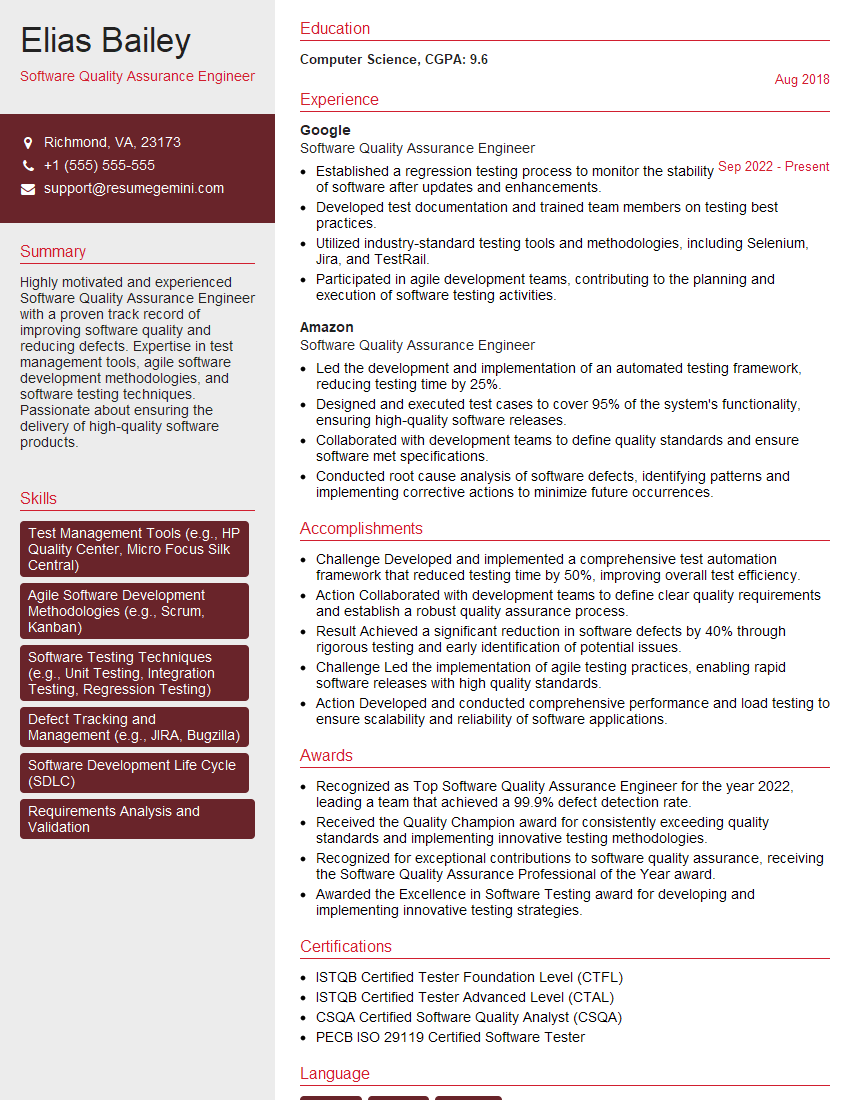

Mastering Quality Assurance and Traceability is crucial for a successful career in the tech industry. These skills are highly sought after and demonstrate your commitment to delivering high-quality products. To significantly enhance your job prospects, create an ATS-friendly resume that highlights your relevant skills and experience. ResumeGemini is a trusted resource to help you build a professional and impactful resume. We provide examples of resumes tailored to Quality Assurance and Traceability roles to guide you in creating your own compelling application materials.
